Lead Ranking: How to Prioritize Leads in 2026

Your scoring model says the hottest lead in the pipeline is a grad student who downloaded four whitepapers. Meanwhile, the VP of Engineering who requested a demo last Tuesday sits at a 42. That's not a lead ranking problem - it's a model problem. Let's fix it.

Lead Ranking vs Scoring vs Grading

These three terms get used interchangeably, and it causes real confusion.

Lead ranking is the prioritized output - your ordered list of leads sorted by conversion likelihood. Lead scoring is the point-based method that produces that ranking, typically on a 1-100 scale. Lead grading is an A-F fit assessment used alongside scoring to show how well a lead matches your ICP, regardless of what they've done on your site. Business.com's lead scoring guide defines scoring as a process that "ranks potential customers," which tells you how blurred these lines are in practice.

The mental model matters: grading tells you who they are, scoring tells you what they've done, and ranking is the final sorted list you hand to sales.
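To make the three terms concrete, here is a minimal sketch of how a fit grade and a behavior score could combine into one ranked list. The `Lead` class, the `GRADES` order, and the sort rule (best grade first, then highest score) are illustrative assumptions, as are the grades given to the two example leads.

```python
from dataclasses import dataclass

# Assumed fit grades, best to worst.
GRADES = "ABCDEF"

@dataclass
class Lead:
    name: str
    grade: str  # grading: who they are
    score: int  # scoring: what they've done

def rank_leads(leads):
    """Ranking: sort best-first by fit grade, then by behavior score."""
    return sorted(leads, key=lambda lead: (GRADES.index(lead.grade), -lead.score))

pipeline = [
    Lead("Grad student", grade="D", score=85),
    Lead("VP of Engineering", grade="A", score=42),
]
ranked = rank_leads(pipeline)
print([lead.name for lead in ranked])
```

Sorting on grade before score is only one possible design. It keeps a high-activity, poor-fit lead from outranking a well-matched buyer.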

What You Need (Quick Version)

- Fit vs. behavior. Job title and company size live in a different bucket than pricing page visits.

- 5 rules, not 50. Complexity kills adoption.

- 70-point MQL threshold. Most leads fall within 41-60; fewer than 10% score 81-100.

- Clean data first. Scoring on empty CRM fields is scoring on fiction.

- Quarterly sales feedback. If reps ignore your MQLs, the model is wrong - not the reps.

Why Most Models Fail

Here's the scenario we've seen play out a dozen times. Marketing builds a scoring model weighted heavily toward content engagement - email opens, whitepaper downloads, blog visits. A researcher racks up 85 points in two weeks. A real buyer who skips the content funnel and goes straight to requesting a demo sits at 35 because they only triggered one rule. The model rewards curiosity, not purchase intent.

The consensus on r/hubspot is blunt: scoring models overweight vanity engagement and underweight actual buying signals. SDRs learn to ignore the scores within a month, and the whole system becomes shelfware. Another common failure is the undocumented model: one person builds it, documents nothing, and leaves the company. Eighteen months later, nobody knows why "visited careers page" is worth 15 points.

So what do you do instead? Pull your last 50 closed-won deals and your last 50 closed-lost. What did the winners do that the losers didn't? That's your model. Not guesswork - actual patterns from your CRM.
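The won-versus-lost comparison can be sketched in a few lines. This is a rough illustration that assumes each deal has been exported from the CRM as a set of signal names. The `signal_rates` function and the sample data are hypothetical.

```python
def signal_rates(won, lost):
    """For each signal, return (share of won deals with it, share of lost deals with it)."""
    signals = set().union(*won, *lost)
    return {
        s: (
            sum(s in deal for deal in won) / len(won),
            sum(s in deal for deal in lost) / len(lost),
        )
        for s in signals
    }

# Tiny made-up sample; in practice use your last 50 wins and 50 losses.
won = [
    {"demo_request", "pricing_page"},
    {"demo_request"},
    {"pricing_page", "whitepaper"},
    {"demo_request"},
]
lost = [
    {"whitepaper"},
    {"whitepaper", "blog"},
    {"demo_request", "whitepaper"},
    {"blog"},
]

rates = signal_rates(won, lost)
for name, (won_share, lost_share) in sorted(rates.items()):
    print(f"{name}: won {won_share:.0%}, lost {lost_share:.0%}")
```

Signals that show up far more often in wins than in losses are candidates for positive points. Signals that skew toward losses are candidates for zero or negative points.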

The Two-Axis Framework

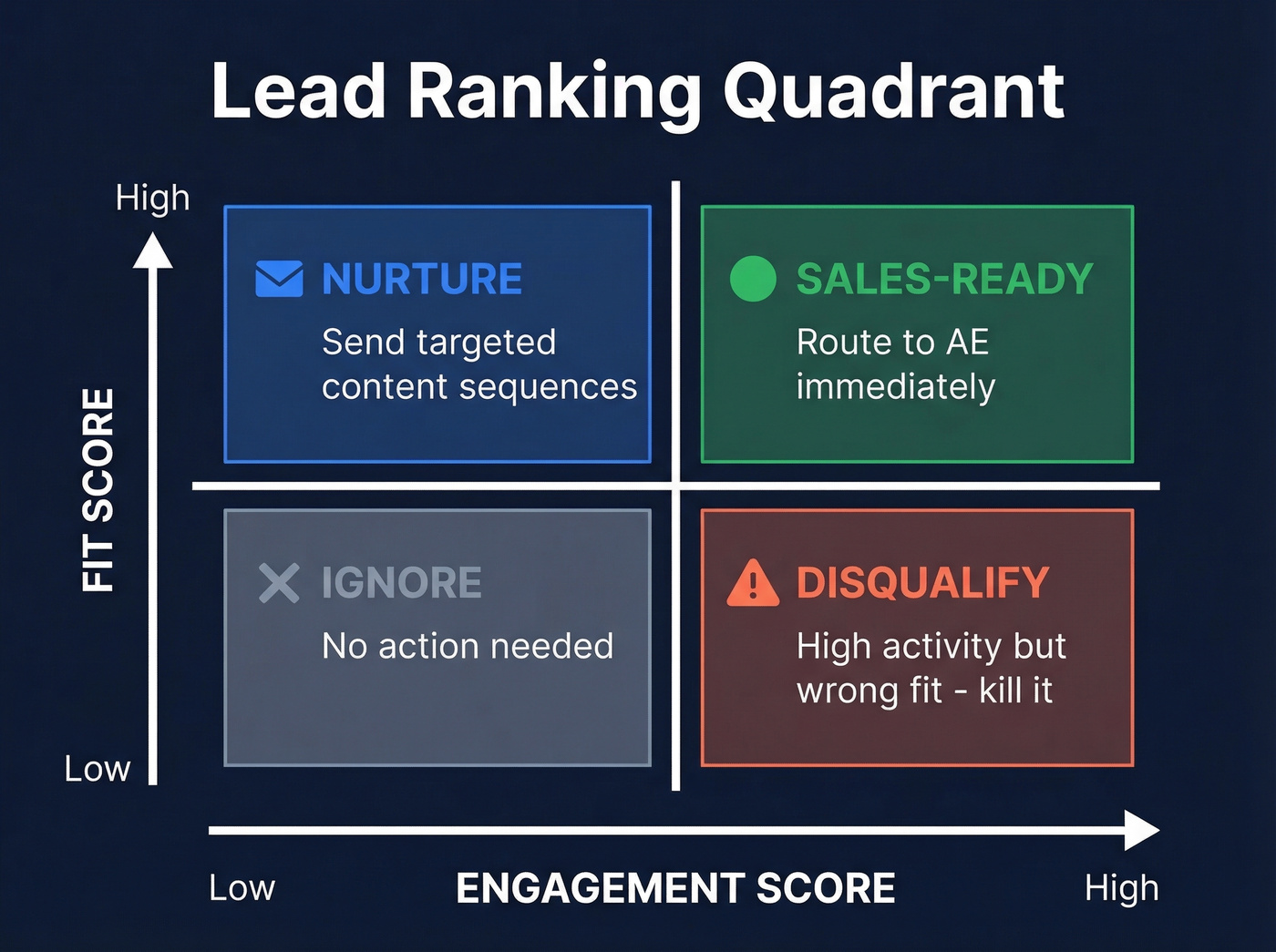

Every lead falls into one of four quadrants. This routing matrix turns a raw prioritized list into action.

| | High Engagement | Low Engagement |
|---|---|---|
| High Fit | Sales-ready - route to AE | Nurture - targeted content |
| Low Fit | Disqualify - don't waste reps | Ignore - no action |

Fit criteria map to classic BANT (budget, authority, need, timeline) and include job title, company size, industry, and tech stack. Engagement criteria follow ZoomInfo's intent tiers: low intent covers blog reads and social follows, medium intent includes email opens and webinar attendance, and high intent means pricing page visits, demo requests, and trial signups. Layer in third-party intent signals - funding rounds, leadership changes, hiring surges, technology purchases - to catch leads actively researching solutions before they ever hit your site.

The quadrant that trips teams up is high-engagement, low-fit. That wrong-fit, high-activity lead needs to die before it ever reaches a rep's queue.
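The four quadrants reduce to a small routing function. This sketch assumes you have already decided what counts as "high" on each axis. The function name and the returned action labels are placeholders.

```python
def route(high_fit: bool, high_engagement: bool) -> str:
    """Map a lead's quadrant to the action from the routing matrix."""
    if high_fit and high_engagement:
        return "route_to_ae"   # sales-ready
    if high_fit:
        return "nurture"       # right buyer, not active yet
    if high_engagement:
        return "disqualify"    # active but wrong fit: keep out of rep queues
    return "ignore"

print(route(high_fit=False, high_engagement=True))
```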


Build a Scoring Model in 15 Minutes

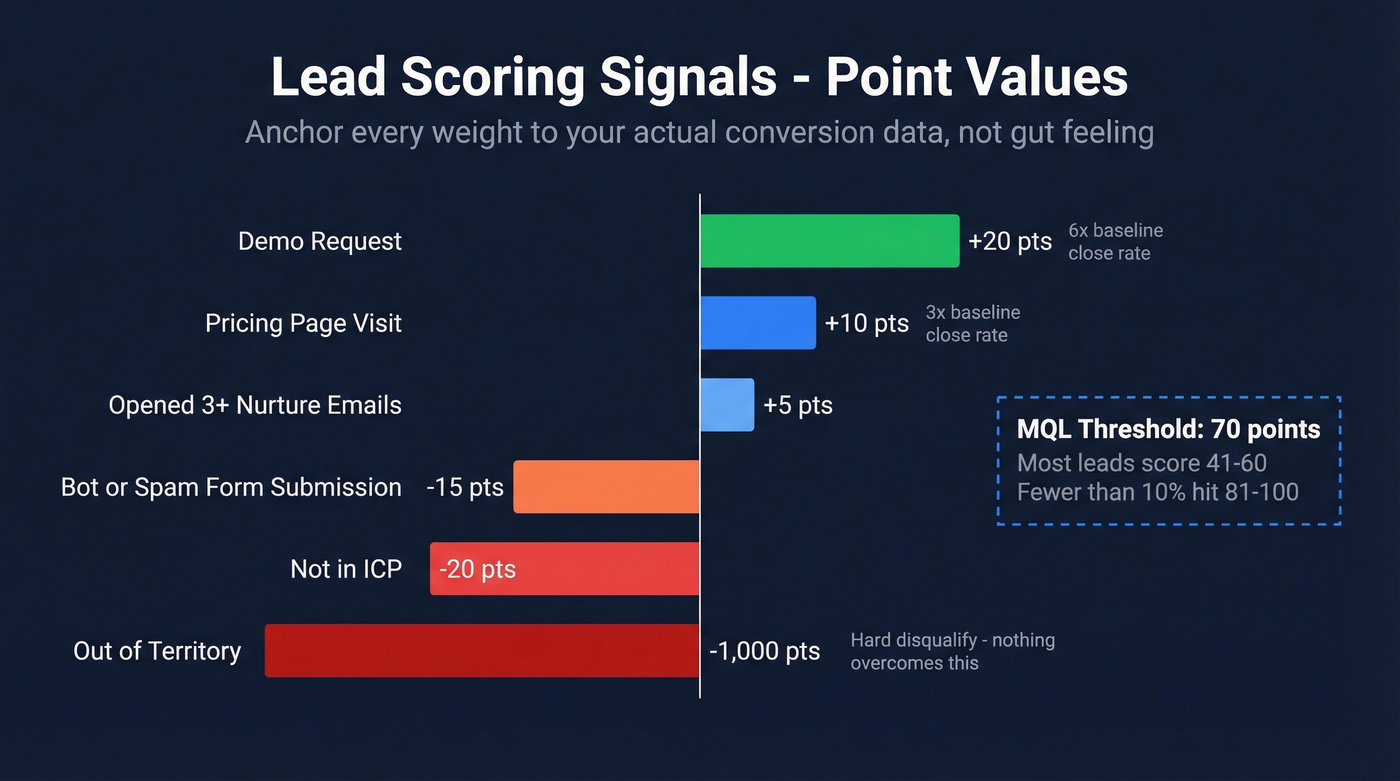

Don't overthink this. Here's a starter model you can deploy today:

| Signal | Points |
|---|---|
| Demo request | +20 |
| Pricing page visit | +10 |
| Opened 3+ nurture emails | +5 |
| Bot/spam form submission | -15 |
| Not in ICP | -20 |
| Out of territory | -1,000 |

The -1,000 is intentional - a hard disqualify that no amount of positive signals can overcome.
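As a rough sketch, the starter table translates directly into a lookup plus a sum. The signal keys below are made-up identifiers for the rows in the table, not field names from any particular CRM.

```python
# Point values copied from the starter table; key names are illustrative.
POINTS = {
    "demo_request": 20,
    "pricing_page_visit": 10,
    "opened_3_plus_nurture_emails": 5,
    "bot_or_spam_submission": -15,
    "not_in_icp": -20,
    "out_of_territory": -1000,
}

def score(signals):
    """Sum the points for every signal a lead has triggered.

    Unknown signal names raise KeyError so typos surface early.
    """
    return sum(POINTS[s] for s in signals)

print(score(["demo_request", "pricing_page_visit"]))
print(score(["demo_request", "pricing_page_visit",
             "opened_3_plus_nurture_emails", "out_of_territory"]))
```

The second lead triggers every positive rule and still lands far below zero, which is the point of the hard disqualifier.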

How to derive point values: If 30% of demo requesters close vs your 5% baseline, that 6x lift earns the highest point value. If pricing page visitors close at 15% (3x lift), they get half the points. Anchor every weight to your actual conversion data, not gut feeling.
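The lift arithmetic above can be written out as follows. Scaling points linearly with lift is an assumption consistent with the example (3x lift earns half the points of 6x lift). The function names and the 20-point ceiling are illustrative.

```python
def lift(close_rate_pct, baseline_pct):
    """How many times more often this signal's leads close than the baseline."""
    return close_rate_pct / baseline_pct

def points_from_lift(signal_lift, top_lift, top_points=20):
    """Scale points linearly so the strongest signal gets top_points."""
    return round(top_points * signal_lift / top_lift)

demo_lift = lift(30, 5)      # 30% close rate vs 5% baseline
pricing_lift = lift(15, 5)   # 15% close rate vs 5% baseline

print(points_from_lift(demo_lift, top_lift=demo_lift))
print(points_from_lift(pricing_lift, top_lift=demo_lift))
```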

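Start with a binary gate before points even matter: Is this lead in our ICP? Yes or no. Only then do the engagement points count. Set your MQL threshold at 70 points on a 100-point scale - per LeadsBridge benchmarks, most leads fall within 41-60, and fewer than 10% score 81-100. The starter table above tops out at 35 positive points, so scale its weights or add rules before applying that threshold.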
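One way to express the gate-then-threshold logic is sketched below. Treating a score of exactly 70 as qualified is an assumption, and `is_mql` is a hypothetical helper name.

```python
MQL_THRESHOLD = 70  # starting point on a 100-point scale

def is_mql(in_icp: bool, engagement_points: int,
           threshold: int = MQL_THRESHOLD) -> bool:
    """ICP gate first; engagement points only count for leads that pass it."""
    if not in_icp:
        return False
    return engagement_points >= threshold

print(is_mql(in_icp=False, engagement_points=95))
print(is_mql(in_icp=True, engagement_points=72))
```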

Before you assign a single point, though, your CRM data needs to be real. Prospeo's enrichment API returns 50+ data points per contact with a 92% match rate, so your fit scores are based on actual firmographics - not the empty fields that make half of most scoring models useless.

Score Decay and Data Freshness

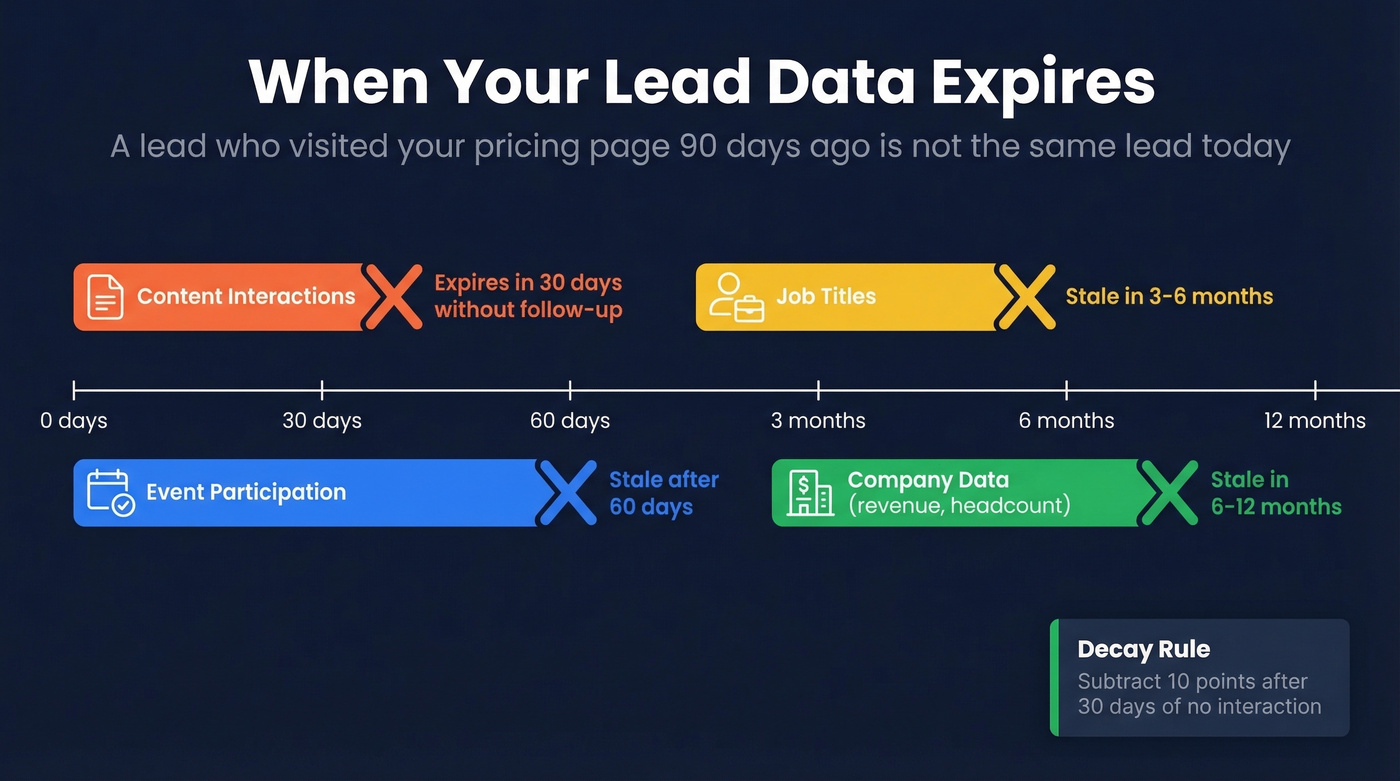

A lead who visited your pricing page 90 days ago isn't the same lead today. Scores need to decay, and the timelines are more aggressive than most teams realize.

| Data Type | Shelf Life |
|---|---|
| Content interaction signals | 30 days without follow-up |
| Event participation | 60 days |
| Job titles | 3-6 months |
| Company data (revenue, headcount) | 6-12 months |

A practical decay rule: subtract 10 points if there's been no interaction within 30 days. And here's the 18-month trap - that model someone built and never documented? Its decay rules are probably nonexistent, which means leads scored two years ago still sit in your "hot" queue with stale titles and dead emails.
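Here is a minimal sketch of that decay rule. It assumes the 10-point deduction repeats for every full 30-day window of inactivity and that scores never drop below zero. The rule as stated only specifies the first deduction, so treat the repetition and the floor as design choices.

```python
from datetime import date

def decayed_score(score, last_interaction, today, step=10, window_days=30):
    """Subtract `step` points per full idle window, never going below 0."""
    idle_days = max((today - last_interaction).days, 0)
    idle_windows = idle_days // window_days
    return max(score - step * idle_windows, 0)

# 19 idle days: no decay yet.
print(decayed_score(80, date(2026, 1, 1), date(2026, 1, 20)))
# 90 idle days: three windows, so 30 points come off.
print(decayed_score(80, date(2026, 1, 1), date(2026, 4, 1)))
```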

Your lead ranking is only as good as the data feeding it. Prospeo refreshes B2B contact data every 7 days - vs the 6-week industry average - and verifies emails at 98% accuracy. When a lead crosses your MQL threshold, the email actually works and the job title is current.

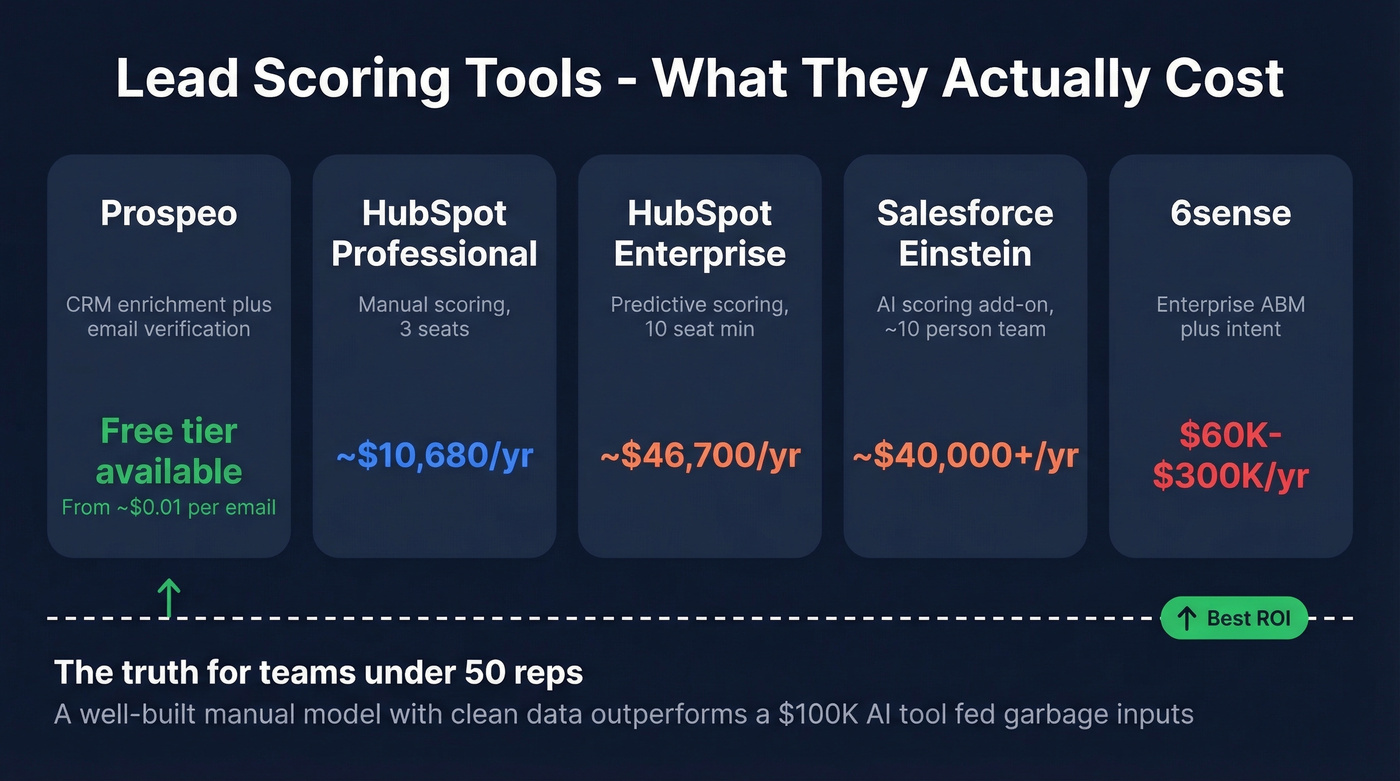

What Scoring Tools Actually Cost

Here's the thing nobody tells you upfront: scoring tools range from "basically free" to six figures annually.

| Tool | What You Get | Cost |
|---|---|---|
| Prospeo | CRM enrichment + email verification | Free tier (75 verified emails/month); paid from ~$0.01/email |
| HubSpot Professional | Marketing or Sales Hub - manual scoring | ~$10,680/yr for 3 seats |
| HubSpot Enterprise | Predictive scoring | ~$46,700 first year (10-seat min + onboarding) |
| Salesforce Einstein | AI scoring add-on | ~$40,000+/yr for a ~10-person team |
| 6sense | Enterprise ABM + intent | $60K-$300K/yr |

For most teams under 50 reps, a well-built manual model with clean data outperforms a $100K AI tool fed garbage inputs. We've watched teams spend six figures on predictive scoring only to discover their CRM had a 40% stale-data rate. The AI faithfully scored garbage and produced garbage rankings. If your average deal size doesn't justify enterprise tooling, you almost certainly don't need enterprise-grade scoring - you need enterprise-grade data.


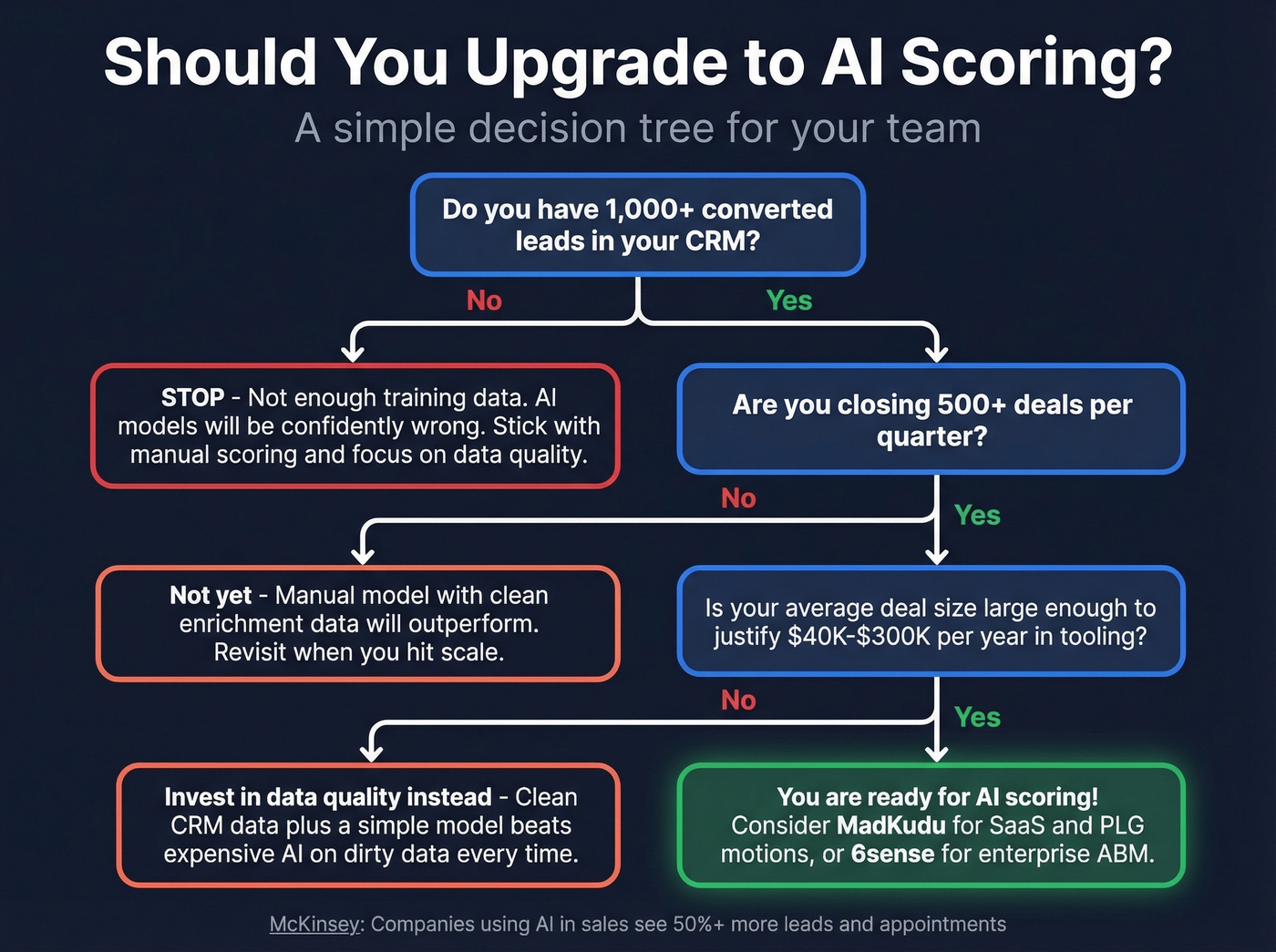

When to Upgrade to AI Scoring

AI scoring becomes worth it at scale. McKinsey research shows companies using AI in sales increase leads and appointments by more than 50%. A 2026 peer-reviewed study tested 15 ML algorithms on B2B lead data and found Gradient Boosting Classifier performed best, with lead source and lead status as the top predictive features.

Don't upgrade to predictive until you have 1,000+ converted leads in your CRM. The algorithms need training data, and thin datasets produce models that are confidently wrong. At that point, opportunity scoring shifts from a nice-to-have to a genuine advantage - the model surfaces deal-level signals that manual rules miss entirely. When you're ready, MadKudu fits SaaS and PLG motions well, and 6sense is the enterprise ABM play. Skip both if you're under 500 deals per quarter - you won't have enough signal to justify the spend.

Lead Ranking FAQ

What's the difference between lead ranking and lead scoring?

Lead ranking is the prioritized output - your ordered list sorted by conversion likelihood. Lead scoring assigns points to produce that ranking. Lead grading adds an A-F fit assessment. Most practitioners use the terms interchangeably, but the distinction matters when you're designing your system because it separates the methodology from the deliverable.

What's a good MQL threshold?

Start at 70 points on a 100-point scale. Adjust based on whether sales gets enough volume at acceptable quality - if reps are starving, lower it to 60; if they're drowning in junk, raise it to 80.
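The adjustment rule can be sketched as a small helper. The feedback labels `"starving"` and `"junk"` are made up for illustration, and the 10-point step simply mirrors the 60 and 80 figures above.

```python
def adjust_threshold(current, sales_feedback, step=10):
    """Nudge the MQL threshold based on what sales reports."""
    if sales_feedback == "starving":   # not enough volume
        return max(current - step, 0)
    if sales_feedback == "junk":       # too many low-quality MQLs
        return min(current + step, 100)
    return current                     # volume and quality are acceptable

print(adjust_threshold(70, "starving"))
print(adjust_threshold(70, "junk"))
```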

How often should I recalibrate my scoring model?

Quarterly at minimum. Compare your top-scored leads against actual closed-won deals. If the correlation is weak, your weights are wrong - pull recent wins and losses and look for the signals that actually differentiated them.
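A quick way to run that comparison is to check win rate by score band. This sketch assumes you can export `(score, closed_won)` pairs from your CRM. The 41-60 and 81-100 bands come from the benchmarks cited earlier, and the other two bands are assumed to fill out the scale.

```python
BANDS = ((81, 100), (61, 80), (41, 60), (0, 40))

def win_rate_by_band(leads, bands=BANDS):
    """leads: iterable of (score, closed_won). Returns {band: win rate or None}."""
    leads = list(leads)
    rates = {}
    for low, high in bands:
        outcomes = [won for score, won in leads if low <= score <= high]
        rates[(low, high)] = sum(outcomes) / len(outcomes) if outcomes else None
    return rates

# Made-up sample data for illustration only.
history = [
    (90, True), (85, True), (70, True), (65, False),
    (50, False), (45, True), (30, False), (20, False),
]
for band, rate in win_rate_by_band(history).items():
    print(band, rate)
```

If win rates don't climb as the bands go up, the weights are rewarding the wrong signals.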

How does prospect scoring differ from opportunity scoring?

Prospect scoring evaluates individual contacts based on fit and engagement before a deal exists. Opportunity scoring kicks in once a deal is created in your CRM, weighting pipeline-stage signals like stakeholder involvement, competitive mentions, and timeline urgency. Both feed into your overall prioritized list, but they operate at different stages of the funnel.

What tools help keep lead data fresh for scoring?

Job titles go stale in 3-6 months and content signals expire in 30 days. Without fresh data, your scoring model ranks ghosts. Look for enrichment tools with weekly refresh cycles and high verification accuracy - then pair that with a CRM enrichment workflow so your rankings stay current automatically.