Account-Based Marketing Attribution: Why Your Model Fails and How to Fix It

Three dashboards. Three different numbers. Marketing says the webinar series influenced 40 accounts that closed. Sales says they'd been working those accounts for months. Finance looks at the spreadsheet and sees a pipeline number that doesn't match either story.

That's account-based marketing attribution for most B2B teams right now. With 82% of teams running ABM and 21% of total marketing budgets allocated to it, the stakes are real - yet under 25% of marketers rate their own measurement practices as even fair. You're not bad at this. The problem is structural.

The Quick Version

- Start with cohort analysis - compare influenced vs. non-influenced accounts. Don't jump to multi-touch models you can't maintain.

- Fix your CRM data quality first. If your contact records are stale, you're building attribution on phantom engagement.

- Set attribution windows of 90-365 days and define account lifecycle stages. Standard 7-30 day windows miss the vast majority of ABM touchpoints.

What ABM Attribution Actually Is

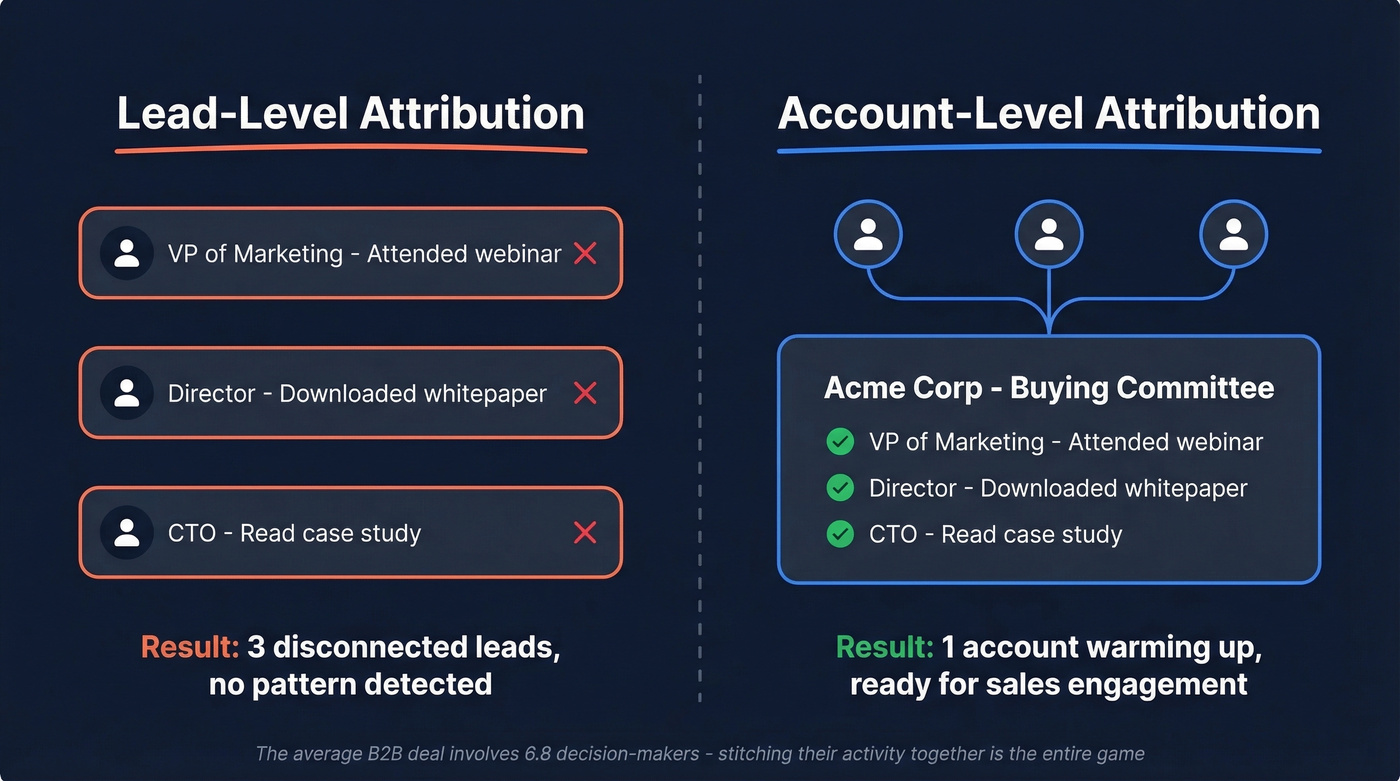

Standard marketing attribution tracks a lead through a funnel. Account-level attribution tracks an account - rolling up activity from every person in the buying committee into a single, coherent picture.

This distinction matters because the average B2B deal involves 6.8 decision-makers. Your VP of Marketing attends a webinar. A director downloads a whitepaper. The CTO reads a case study their colleague forwarded in Slack. Lead-level attribution sees three disconnected events. Account-level attribution sees one buying group warming up. And 86% of B2B marketers struggle to connect multiple stakeholders to that single account view - which is exactly why so many programs stall before they produce useful data. That rollup, stitching individual engagement into account-level signal, is the entire game.
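To make the rollup concrete, here is a minimal Python sketch that groups contact-level events into one record per account. It assumes the email domain identifies the account, which is a simplification. The event data and the `roll_up` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical contact-level engagement events: (contact_email, activity)
events = [
    ("vp.marketing@acme.com", "webinar"),
    ("director@acme.com", "whitepaper"),
    ("cto@acme.com", "case_study"),
    ("analyst@globex.io", "webinar"),
]

def account_key(email: str) -> str:
    """Use the email domain as a simple stand-in for the account."""
    return email.split("@", 1)[1].lower()

def roll_up(events):
    """Group contact-level events into one engagement summary per account."""
    accounts = defaultdict(lambda: {"contacts": set(), "activities": []})
    for email, activity in events:
        acct = accounts[account_key(email)]
        acct["contacts"].add(email.lower())
        acct["activities"].append(activity)
    return dict(accounts)

summary = roll_up(events)
for domain, acct in summary.items():
    print(domain, len(acct["contacts"]), acct["activities"])
```

Three separate events from Acme become one account showing three engaged contacts, which is the signal lead-level attribution misses.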

Why ABM Attribution Breaks

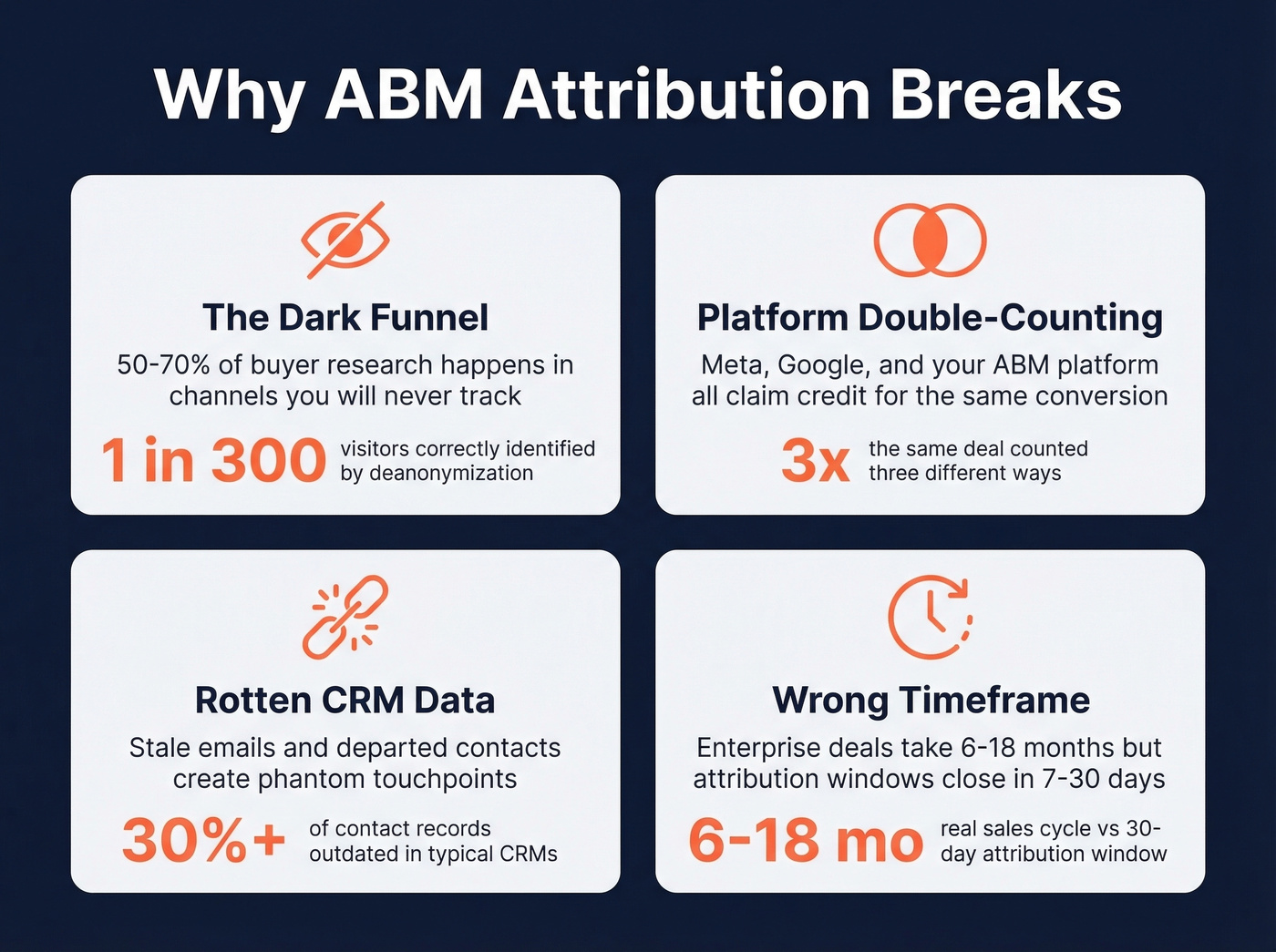

Four things consistently kill ABM attribution programs. Let's be honest: most teams are fighting at least two of these simultaneously.

The dark funnel swallows your data

50-70% of buyer research happens in channels you'll never track - Slack communities, private group chats, AI-generated summaries, internal email forwards. Your attribution model only sees the surface. One practitioner on r/LinkedinAds ran a campaign and found that website deanonymization correctly identified just 1 out of 300 landing page visitors. If you're trusting visitor identification at face value, you're building on sand.

Platforms double-count shamelessly

Meta's default attribution setting is a 7-day click / 1-day view window. Google has its own. Your ABM platform has another. Every channel claims credit for the same conversion, and the numbers never reconcile. This isn't a bug - it's how ad platforms are designed to justify spend.
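A quick way to see the double-counting is to compare the sum of what each platform claims against the number of distinct opportunities in your CRM. This sketch assumes you can map each platform's claimed conversion back to a CRM opportunity ID, which many platforms don't make easy. All data here is made up.

```python
# Hypothetical: the CRM opportunity IDs each platform claims credit for.
platform_claims = {
    "meta": {"opp-101", "opp-102"},
    "google": {"opp-101", "opp-103"},
    "abm_platform": {"opp-101", "opp-102", "opp-103"},
}

# Adding up platform reports counts opp-101 three times.
reported_total = sum(len(opps) for opps in platform_claims.values())
# The CRM only has one record per opportunity.
unique_opps = set().union(*platform_claims.values())

print(f"Sum of platform-reported conversions: {reported_total}")
print(f"Unique opportunities in CRM: {len(unique_opps)}")
```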

Your CRM data is rotten

Stale emails, disconnected phone numbers, contacts who left the company two quarters ago - these create phantom touchpoints. Your attribution model thinks marketing "touched" an account because it emailed someone who hasn't worked there since January. We've audited CRMs where 30%+ of contact records were outdated, and the attribution output was essentially fiction.
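One defensive step is to drop touchpoints logged after a contact left the account. The sketch below assumes your CRM stores a departure date per contact (a hypothetical `left_company_on` field). It also treats touches on unknown contacts as phantom, which is a judgment call.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Contact:
    email: str
    left_company_on: Optional[date]  # hypothetical field; None if still employed

@dataclass
class Touchpoint:
    email: str
    channel: str
    touched_on: date

def is_phantom(tp: Touchpoint, contacts: dict[str, Contact]) -> bool:
    """A touch is phantom if the contact is unknown or had already left."""
    contact = contacts.get(tp.email)
    if contact is None:
        return True
    return contact.left_company_on is not None and tp.touched_on >= contact.left_company_on

contacts = {
    "jane@acme.com": Contact("jane@acme.com", None),
    "raj@acme.com": Contact("raj@acme.com", date(2024, 1, 15)),
}
touchpoints = [
    Touchpoint("jane@acme.com", "email", date(2024, 3, 1)),
    Touchpoint("raj@acme.com", "email", date(2024, 3, 1)),     # sent after Raj left
    Touchpoint("raj@acme.com", "webinar", date(2023, 11, 2)),  # before he left: valid
]

valid = [tp for tp in touchpoints if not is_phantom(tp, contacts)]
print(len(valid), "of", len(touchpoints), "touchpoints kept")
```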

You're measuring the wrong timeframe

Standard attribution windows of 7-30 days work for e-commerce. Enterprise ABM cycles run 6-18 months. If your window closes before the deal does, you're systematically undercounting marketing's contribution.

Attribution Approaches Compared

| Approach | How It Works | Data Needed | B2B Fit | Best For |
|---|---|---|---|---|
| Cohort Analysis | Compare influenced vs. non-influenced accounts | CRM + campaign exposure | High | Most teams (start here) |
| Multi-Touch (MTA) | Weight credit across touchpoints | Full touchpoint tracking | Medium-High | Mature ops, clean data |
| Incrementality | A/B holdout to prove causality | Large sample + isolation | Low-Medium | Validating specific channels |
| Marketing Mix Modeling | Aggregate spend to outcome correlation | Years of clean spend data | Low | High-volume orgs with long, consistent spend history |

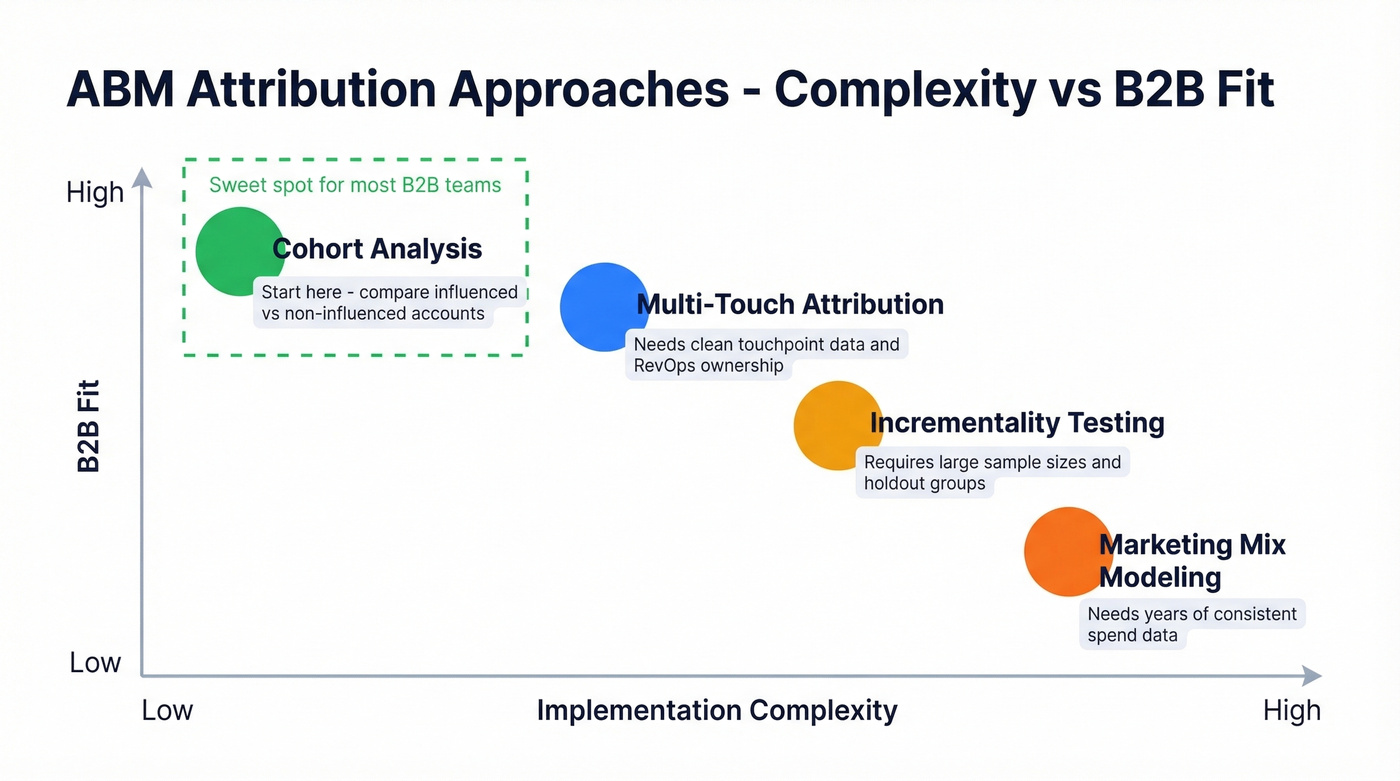

Cohort analysis is the right starting point for most B2B teams. Take your target accounts, split them into "exposed to ABM programs" and "not exposed," and measure the delta in win rate, deal size, and cycle length. It's not fancy. It works.
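The cohort comparison fits in a few lines of Python. This is a minimal sketch over hypothetical closed accounts: win rate is computed across all closed accounts in each cohort, while deal size and cycle length use wins only. The `ClosedAccount` fields are assumptions about what you'd export from your CRM.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class ClosedAccount:
    name: str
    exposed: bool      # engaged by an ABM program before the opportunity closed
    won: bool          # Closed Won vs. Closed Lost
    deal_size: float   # contract value; only meaningful when won
    cycle_days: int    # days from opportunity creation to close

def cohort_metrics(accounts: list[ClosedAccount]) -> dict[str, float]:
    """Win rate over all closed accounts; deal size and cycle over wins only."""
    won = [a for a in accounts if a.won]
    return {
        "win_rate": len(won) / len(accounts) if accounts else 0.0,
        "avg_deal_size": mean(a.deal_size for a in won) if won else 0.0,
        "avg_cycle_days": mean(a.cycle_days for a in won) if won else 0.0,
    }

accounts = [
    ClosedAccount("Acme", True, True, 120_000, 150),
    ClosedAccount("Globex", True, True, 80_000, 210),
    ClosedAccount("Initech", True, False, 0, 200),
    ClosedAccount("Umbrella", False, True, 90_000, 240),
    ClosedAccount("Hooli", False, False, 0, 180),
    ClosedAccount("Vandelay", False, False, 0, 300),
]

exposed = cohort_metrics([a for a in accounts if a.exposed])
control = cohort_metrics([a for a in accounts if not a.exposed])
for metric in exposed:
    print(f"{metric}: exposed={exposed[metric]:.2f} "
          f"not_exposed={control[metric]:.2f} "
          f"delta={exposed[metric] - control[metric]:.2f}")
```

With six accounts the deltas are noise; the point is the shape of the comparison. It also shows correlation rather than causality. Proving causality is what the incrementality (holdout) approach in the comparison table is for.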

Multi-touch attribution makes sense once you've got clean touchpoint data and a RevOps person who can maintain it. Marketing mix modeling needs years of consistent spend data and conversion volumes that most B2B orgs simply don't have. CaliberMind's framework breaks this down well for the technically curious.

Here's the thing: a spreadsheet with cohort analysis will outperform a lot of expensive attribution suites. The tool isn't the bottleneck - the data and the process are.

You just read that outdated contact records, 30%+ of the CRM in some audits, create phantom touchpoints that poison your attribution model. Prospeo's 7-day data refresh cycle and 5-step verification keep your account records current, so every touchpoint maps to a real person who actually works there.

Stop attributing pipeline to contacts who left two quarters ago.

How to Build ABM Attribution That Works

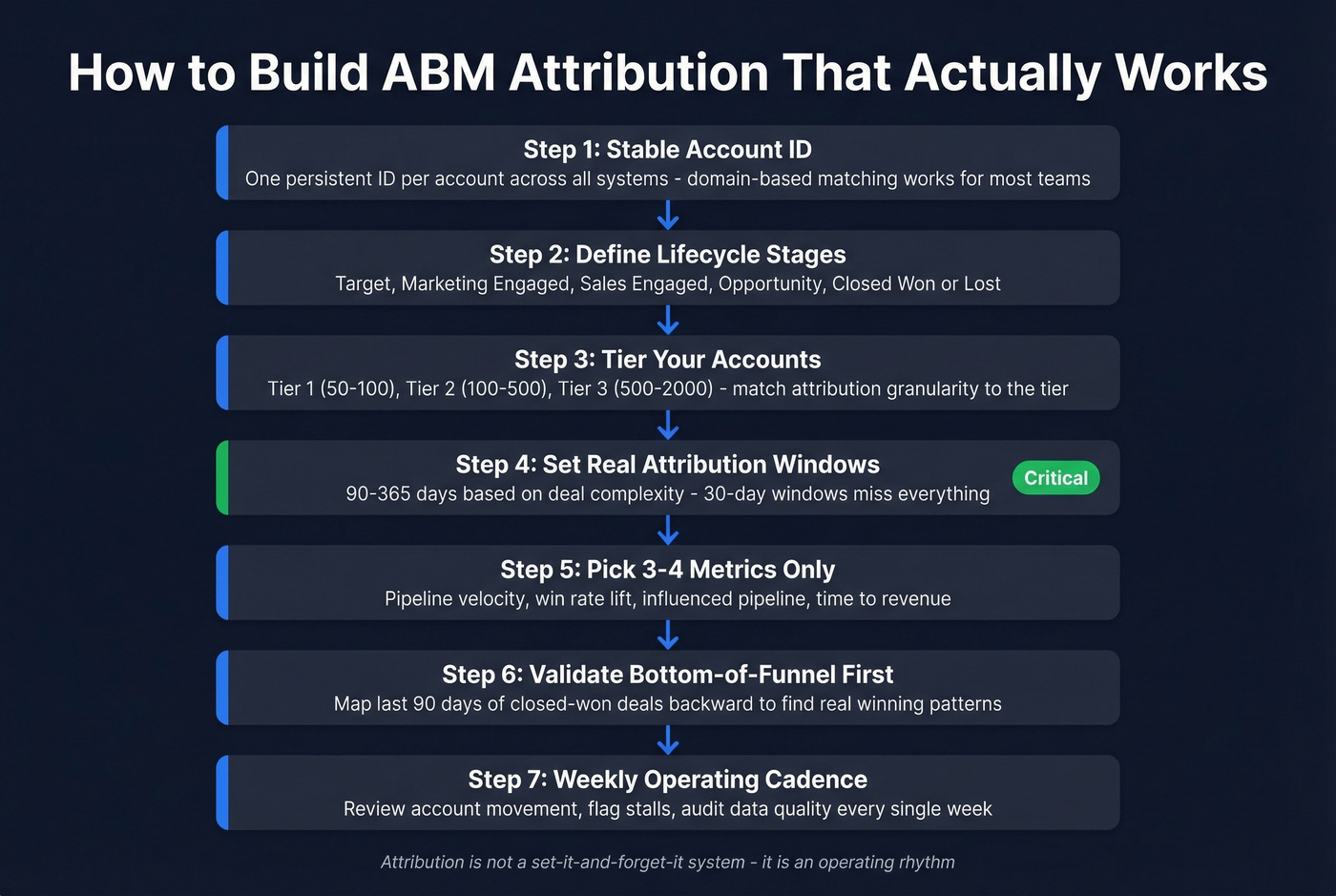

Step 1: Establish a stable account identifier. Before anything else, every account in your CRM needs a single, persistent ID that all systems reference. Without this, you can't stitch touchpoints across platforms. Domain-based matching works for most teams; enterprise orgs with complex subsidiary structures may need a dedicated identity resolution layer.
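A minimal sketch of domain-based matching, assuming your CRM already holds a persistent account ID per primary domain. The `DOMAIN_TO_ACCOUNT` mapping and the IDs are hypothetical. The code normalizes URLs, email addresses, and bare domains to the same key before looking up the ID.

```python
from typing import Optional
from urllib.parse import urlparse

# Hypothetical mapping from a normalized primary domain to the CRM's persistent account ID.
DOMAIN_TO_ACCOUNT = {"acme.com": "ACC-0001", "globex.io": "ACC-0002"}

def normalize_domain(raw: str) -> str:
    """Reduce a URL, email address, or bare domain to a lowercase host without 'www.'."""
    value = raw.strip().lower()
    if "@" in value:
        value = value.split("@", 1)[1]  # keep only the email domain
    if "://" not in value:
        value = "//" + value            # let urlparse read it as a network location
    host = urlparse(value).hostname or ""
    return host.removeprefix("www.")

def resolve_account(raw: str) -> Optional[str]:
    """Map any identifier to the persistent account ID, or None if unknown."""
    return DOMAIN_TO_ACCOUNT.get(normalize_domain(raw))

for value in ["https://www.Acme.com/pricing", "jane@acme.com", "ACME.COM", "bob@globex.io"]:
    print(value, "->", resolve_account(value))
```

Subdomains such as `eu.acme.com` won't match `acme.com` here. Handling those, along with subsidiaries that run their own domains, is the kind of case that pushes enterprise teams toward a dedicated identity resolution layer.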

Step 2: Define account lifecycle stages. Use something like Target, Marketing Engaged, Sales Engaged, Opportunity, Closed Won/Lost. Every account sits in exactly one stage at any given time. This becomes your measurement backbone.
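One way to enforce the "exactly one stage" rule is to store a single current stage per account and log every move with a date. That log also gives you the history a weekly review needs. A minimal sketch; the class and method names are illustrative, not from any particular CRM.

```python
from datetime import date
from enum import Enum

class Stage(Enum):
    TARGET = 1
    MARKETING_ENGAGED = 2
    SALES_ENGAGED = 3
    OPPORTUNITY = 4
    CLOSED_WON = 5
    CLOSED_LOST = 6

class AccountLifecycle:
    """Holds exactly one current stage per account plus a dated history of moves."""

    def __init__(self) -> None:
        self.current: dict[str, Stage] = {}
        self.history: list[tuple[str, Stage, date]] = []

    def move(self, account_id: str, stage: Stage, on: date) -> None:
        self.current[account_id] = stage  # overwrite: one stage at a time
        self.history.append((account_id, stage, on))

    def counts(self) -> dict[Stage, int]:
        """Number of accounts currently in each stage."""
        result = {s: 0 for s in Stage}
        for stage in self.current.values():
            result[stage] += 1
        return result

lc = AccountLifecycle()
lc.move("ACC-0001", Stage.TARGET, date(2024, 1, 8))
lc.move("ACC-0001", Stage.MARKETING_ENGAGED, date(2024, 2, 12))
lc.move("ACC-0002", Stage.TARGET, date(2024, 1, 8))
print({s.name: n for s, n in lc.counts().items() if n})
```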

Step 3: Tier your target accounts. Tier 1 gets 50-100 named accounts with high-touch plays. Tier 2 runs 100-500 with semi-personalized campaigns. Tier 3 covers 500-2,000 with programmatic ABM. Attribution granularity should match the tier - don't try to track every touchpoint for 2,000 accounts unless you've got the infrastructure for it.
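A sketch of tier assignment, assuming you already rank accounts by some fit or intent score (not shown). The tier sizes take the top of each range above. The granularity labels are one possible reading of "match the tier", not a standard.

```python
# Hypothetical tier sizes, taken from the upper end of the ranges above.
TIER_SIZES = [(1, 100), (2, 500), (3, 2000)]

# Assumed mapping from tier to how granular attribution tracking should be.
GRANULARITY = {1: "every touchpoint", 2: "campaign-level", 3: "cohort-level only"}

def assign_tiers(accounts_by_score: list[str]) -> dict[str, int]:
    """Assign tiers in order of a precomputed score (highest-ranked first)."""
    tiers: dict[str, int] = {}
    start = 0
    for tier, size in TIER_SIZES:
        for account in accounts_by_score[start:start + size]:
            tiers[account] = tier
        start += size
    return tiers  # accounts beyond the last tier stay untiered

ranked = [f"ACC-{i:04d}" for i in range(1, 3001)]
tiers = assign_tiers(ranked)
print(tiers["ACC-0001"], tiers["ACC-0101"], tiers["ACC-0601"], "ACC-2601" in tiers)
print(GRANULARITY[tiers["ACC-0601"]])
```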

Step 4: Set attribution windows that match your sales cycle. If your average deal takes 9 months, a 30-day attribution window is useless. Set windows at 90-365 days depending on deal complexity. Enterprise deals need 180+ days minimum.
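A minimal sketch of both pieces: picking a window from your average cycle, and filtering an account's touches to that window. The picker clamps the window to the 90-365 day range and applies a 180-day floor for enterprise deals. That clamping rule is our reading of the guidance above; the dates are hypothetical.

```python
from datetime import date, timedelta

def choose_window_days(avg_cycle_days: int, enterprise: bool) -> int:
    """Clamp the window to 90-365 days, with a 180-day floor for enterprise deals."""
    floor = 180 if enterprise else 90
    return max(floor, min(365, avg_cycle_days))

def touches_in_window(touch_dates: list[date], close_date: date, window_days: int) -> list[date]:
    """Keep touches that happened within `window_days` before (or on) the close date."""
    start = close_date - timedelta(days=window_days)
    return [d for d in touch_dates if start <= d <= close_date]

close = date(2024, 9, 30)
touches = [date(2023, 12, 5), date(2024, 3, 18), date(2024, 9, 10)]
window = choose_window_days(avg_cycle_days=270, enterprise=True)  # roughly a 9-month cycle

print(window,
      len(touches_in_window(touches, close, 30)),
      len(touches_in_window(touches, close, window)))
```

For this deal, a 30-day window keeps only the September touch, while the 270-day window also captures the March touch.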

Step 5: Pick 3-4 metrics and ignore everything else. Pipeline velocity, win rate lift, influenced pipeline, and time to revenue. We've seen teams build 15-metric dashboards that nobody looks at after the first week. Start narrow, expand once the model proves itself.
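Each of these four metrics can be expressed as a small function. The sketch uses the common pipeline velocity formula (opportunities times average deal size times win rate, divided by cycle length in days). It expresses win rate lift relative to the non-exposed cohort and treats influenced pipeline as opportunity value at accounts with at least one marketing touch. Time to revenue is measured from first marketing touch to Closed Won. These are common conventions rather than the only definitions, and the input numbers are made up.

```python
from datetime import date

def pipeline_velocity(num_opps: int, avg_deal_size: float, win_rate: float,
                      avg_cycle_days: float) -> float:
    """Expected revenue per day: opps x average deal size x win rate / cycle days."""
    return num_opps * avg_deal_size * win_rate / avg_cycle_days

def win_rate_lift(exposed_win_rate: float, control_win_rate: float) -> float:
    """Relative lift of ABM-exposed accounts over the non-exposed cohort."""
    return (exposed_win_rate - control_win_rate) / control_win_rate

def influenced_pipeline(opps: list[dict]) -> float:
    """Total opportunity value at accounts with at least one marketing touch."""
    return sum(o["amount"] for o in opps if o["marketing_touches"] > 0)

def time_to_revenue_days(first_touch: date, closed_won: date) -> int:
    """Days from an account's first marketing touch to Closed Won."""
    return (closed_won - first_touch).days

opps = [
    {"amount": 100_000, "marketing_touches": 3},
    {"amount": 60_000, "marketing_touches": 0},
]
print(pipeline_velocity(40, 50_000, 0.25, 200))  # 40 * 50,000 * 0.25 / 200 = 2500.0
print(f"{win_rate_lift(0.30, 0.20):.0%}")
print(influenced_pipeline(opps))
print(time_to_revenue_days(date(2024, 1, 10), date(2024, 7, 8)))
```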

Step 6: Validate bottom-of-funnel first. Pull your last 90 days of closed-won deals and map every touchpoint backward. This reverse-engineering exercise reveals which channels actually show up in winning patterns - and which ones your attribution model is missing entirely. In our experience, this single exercise changes more minds about attribution than any dashboard ever built.
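The backward-mapping exercise can be sketched in three steps. Take deals closed won in the last 90 days, collect each account's touchpoints up to the close date, and count how many wins each channel appears in. Comparing that list against the channels your model tracks shows the gaps. The exports, channel names, and `MODEL_CHANNELS` set are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta

# Hypothetical exports: closed-won deals and account-level touchpoints.
deals = [
    {"account": "ACC-0001", "closed_on": date(2024, 6, 20)},
    {"account": "ACC-0002", "closed_on": date(2024, 5, 2)},
    {"account": "ACC-0003", "closed_on": date(2023, 12, 1)},  # outside the 90 days
]
touchpoints = [
    {"account": "ACC-0001", "channel": "webinar", "on": date(2024, 2, 1)},
    {"account": "ACC-0001", "channel": "linkedin_ad", "on": date(2024, 4, 9)},
    {"account": "ACC-0002", "channel": "webinar", "on": date(2024, 1, 15)},
    {"account": "ACC-0002", "channel": "webinar", "on": date(2024, 3, 3)},
    {"account": "ACC-0003", "channel": "direct_mail", "on": date(2023, 10, 1)},
]

def channels_in_recent_wins(deals, touchpoints, today: date, lookback_days: int = 90) -> Counter:
    """Count how many recent wins each channel appears in (once per deal)."""
    cutoff = today - timedelta(days=lookback_days)
    counts = Counter()
    for deal in deals:
        if not (cutoff <= deal["closed_on"] <= today):
            continue
        channels = {tp["channel"] for tp in touchpoints
                    if tp["account"] == deal["account"] and tp["on"] <= deal["closed_on"]}
        counts.update(channels)
    return counts

wins = channels_in_recent_wins(deals, touchpoints, today=date(2024, 6, 30))
MODEL_CHANNELS = {"webinar", "email"}  # hypothetical: channels your model currently tracks
print(wins.most_common())
print("Missing from model:", sorted(set(wins) - MODEL_CHANNELS))
```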

Step 7: Run a weekly operating cadence. Review account movement between stages, flag stalled accounts, and audit data quality. Attribution isn't a set-it-and-forget-it system. It's an operating rhythm, and consistent review at this cadence is what separates teams that prove ROI from those that guess at it.
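For the stalled-account check, here is a minimal sketch that assumes you record when each account entered its current stage. The per-stage thresholds are placeholders; set them from your own stage-duration data.

```python
from datetime import date

# Hypothetical per-stage stall thresholds in days; tune these to your own cycle data.
STALL_THRESHOLD_DAYS = {"Marketing Engaged": 30, "Sales Engaged": 45, "Opportunity": 90}

def stalled_accounts(current: dict[str, tuple[str, date]], today: date) -> list[str]:
    """Return accounts that have sat in their current stage longer than its threshold."""
    flagged = []
    for account, (stage, entered_on) in current.items():
        limit = STALL_THRESHOLD_DAYS.get(stage)
        if limit is not None and (today - entered_on).days > limit:
            flagged.append(account)
    return sorted(flagged)

current = {
    "ACC-0001": ("Marketing Engaged", date(2024, 5, 1)),
    "ACC-0002": ("Opportunity", date(2024, 4, 15)),
    "ACC-0003": ("Sales Engaged", date(2024, 3, 1)),
}
print(stalled_accounts(current, today=date(2024, 6, 3)))
```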

Mistakes That Kill ABM Measurement

Framing it as "credit." The moment you position attribution as "who gets credit for the deal," you've lost sales. CaliberMind nails this: frame it as investment optimization, not credit allocation. You're trying to figure out where to spend the next dollar, not who deserves a trophy.

Underestimating the maintenance burden. Keeping attribution clean takes close to a full-time data hygiene owner. Fields decay, integrations break, new campaigns launch without proper tagging. We've watched attribution programs that lack a dedicated data owner degrade within 90 days. Every single time.

Trusting deanonymization numbers. That 1-in-300 identification rate from Reddit isn't an outlier - it's closer to the norm than most vendors will tell you. Use deanonymization as a directional signal, not a source of truth.

Ignoring the data layer entirely. If your contact records are full of stale emails and disconnected numbers, your touchpoint tracking is incomplete and your attribution output is noise. A tool like Prospeo - with 98% email accuracy and a 7-day refresh cycle - prevents the data rot that kills attribution before it starts. Most teams should fix their contact data foundation before investing in a $1,400/mo attribution platform.

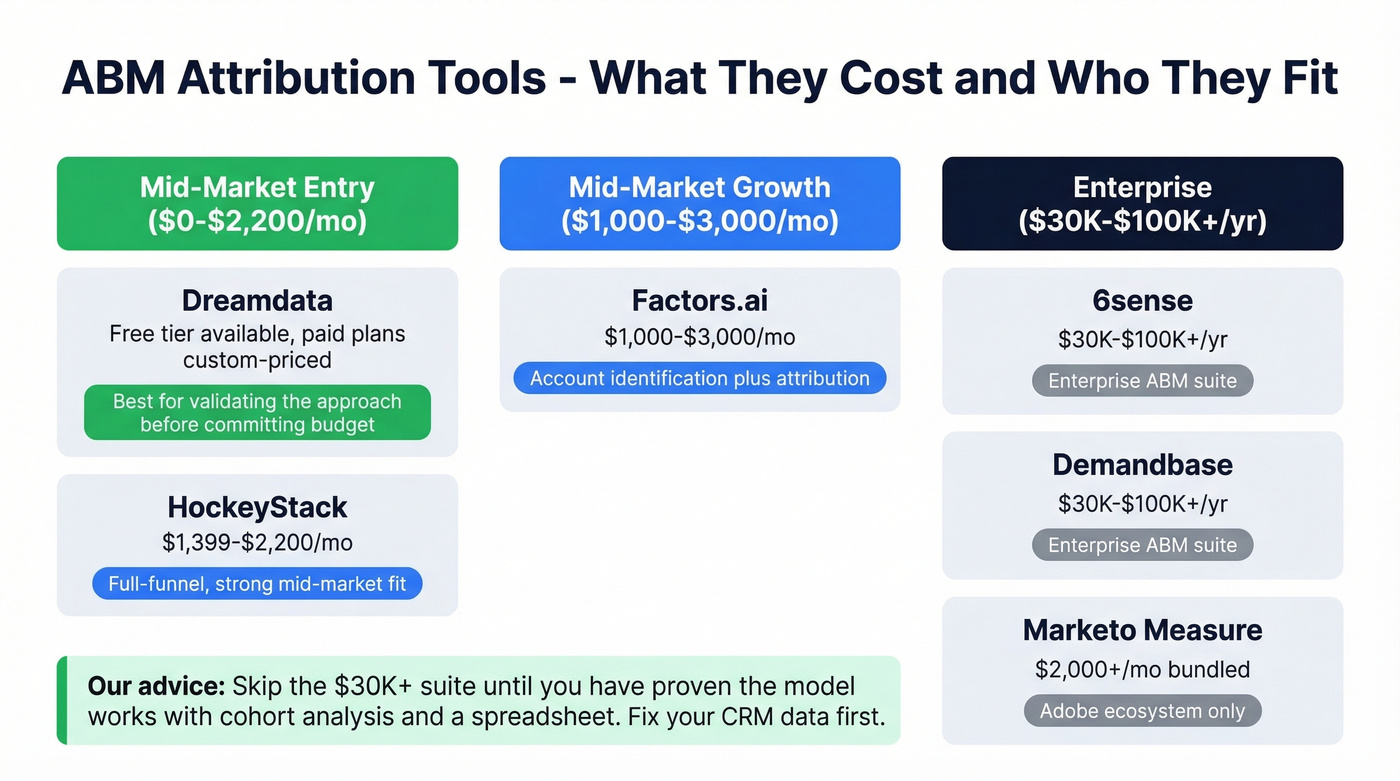

ABM Attribution Tools and Pricing

| Tool | Starting Price | Best For |
|---|---|---|
| HockeyStack | ~$1,399-2,200/mo | Mid-market, full-funnel |
| Dreamdata | Free tier; paid plans custom-priced | Mid-market, revenue attribution |
| Factors.ai | ~$1,000-3,000/mo | Account ID + attribution |
| 6sense | $30K-100K+/yr | Enterprise ABM suites |
| Demandbase | $30K-100K+/yr | Enterprise ABM suites |
| Marketo Measure | ~$2,000+/mo (bundled) | Adobe ecosystem users |

For mid-market teams, HockeyStack and Dreamdata are strong options. Dreamdata's free tier lets you validate the approach before committing budget. 6sense and Demandbase make sense at enterprise scale where you're already running their ABM orchestration - bolting on their attribution is a natural extension.

Skip the $30K+ attribution suite until you've proven the model works with cohort analysis and a spreadsheet. Seriously. We've talked to teams that bought enterprise attribution platforms before they had clean CRM data, and they spent six months configuring a tool that produced garbage because the inputs were garbage.

Fix the Inputs First

Every attribution model is only as reliable as the contact data feeding it. If your CRM is full of outdated records, your touchpoint tracking is incomplete and your account-based marketing attribution output is noise. Clean the data layer first, then invest in the attribution tooling. The order matters more than the budget.

ABM attribution requires stitching engagement across every buyer in the committee. That starts with having verified contact data for the whole buying group (6.8 decision-makers on average), not just the one who filled out a form. Prospeo's 300M+ profiles and 30+ filters let you map full buying groups by role, seniority, and department - at $0.01 per email.

Build complete buying committees so your attribution actually reflects reality.

FAQ

What's the difference between sourced and influenced attribution in ABM?

Sourced means marketing created the opportunity from scratch. Influenced means marketing touched an account that entered the pipeline through another channel - like a sales-sourced deal that later engaged with a webinar. 57% of marketers track both, and you should too. They answer fundamentally different budget questions.
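A minimal sketch of splitting pipeline by these two definitions, assuming each opportunity carries a creation source and a count of marketing touches at the account. Both field names are hypothetical.

```python
from collections import defaultdict

def classify(opp: dict) -> str:
    """Sourced: marketing created the opportunity. Influenced: another channel
    created it, but the account engaged with marketing at some point."""
    if opp["source"] == "marketing":
        return "sourced"
    if opp["marketing_touches"] > 0:
        return "influenced"
    return "no marketing touch"

opps = [
    {"id": "opp-1", "source": "marketing", "marketing_touches": 4, "amount": 50_000},
    {"id": "opp-2", "source": "sales", "marketing_touches": 2, "amount": 120_000},
    {"id": "opp-3", "source": "partner", "marketing_touches": 0, "amount": 30_000},
]

pipeline_by_type = defaultdict(float)
for opp in opps:
    pipeline_by_type[classify(opp)] += opp["amount"]
print(dict(pipeline_by_type))
```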

How long should an ABM attribution window be?

Set windows at 90-365 days depending on your average deal cycle. Enterprise deals with 6-18 month sales cycles need 180+ days minimum. Standard 7-30 day windows systematically undercount marketing's contribution and make ABM programs look ineffective.

Do I need a dedicated platform to measure ABM?

Not initially. Start with CRM data, clean contact records, and spreadsheet-based cohort analysis. Buy a dedicated attribution platform once you're running 500+ target accounts across multiple tiers and have outgrown manual tracking.