How to Evaluate Sales Performance (Without the Usual BS)

You're sitting in a quarterly review and a rep pushes back: "I closed 7 deals from cold outbound while the team average was 5, but I got half the inbound demos. How is my rating lower?" You don't have a good answer. That's because most sales performance evaluations are broken - 95% of HR leaders are unhappy with traditional reviews, and 59% of employees say they have no impact at all.

Knowing how to evaluate sales performance properly is the difference between coaching that works and reviews that demoralize your team. The payoff for getting it right is massive: structured coaching tied to proper evaluation drives a 19% increase in win rates and 353% ROI. The problem isn't that leaders don't care - it's that they're tracking the wrong things, ignoring context, and running evaluations on data they can't trust.

Here's the framework that actually works.

What You Need (60 Seconds)

- Track 5-7 metrics max. Three to four leading indicators, two to three lagging. That's it.

- Normalize for territory, ramp time, and inbound volume before you judge anyone.

- Use real benchmarks (below) instead of gut feel.

- Evaluate monthly, not annually. Weekly activity pulses feed into monthly pipeline reviews.

- Incorporate sales call scoring so reps get feedback on how they sell, not just what they close.

- Verify your pipeline data is accurate before trusting any of these numbers - garbage in, garbage out.

Stop building dashboards with 30 metrics. Nobody looks at them. Pick the ones that change behavior.

The Metrics That Actually Matter

Quantitative KPIs (With Benchmarks)

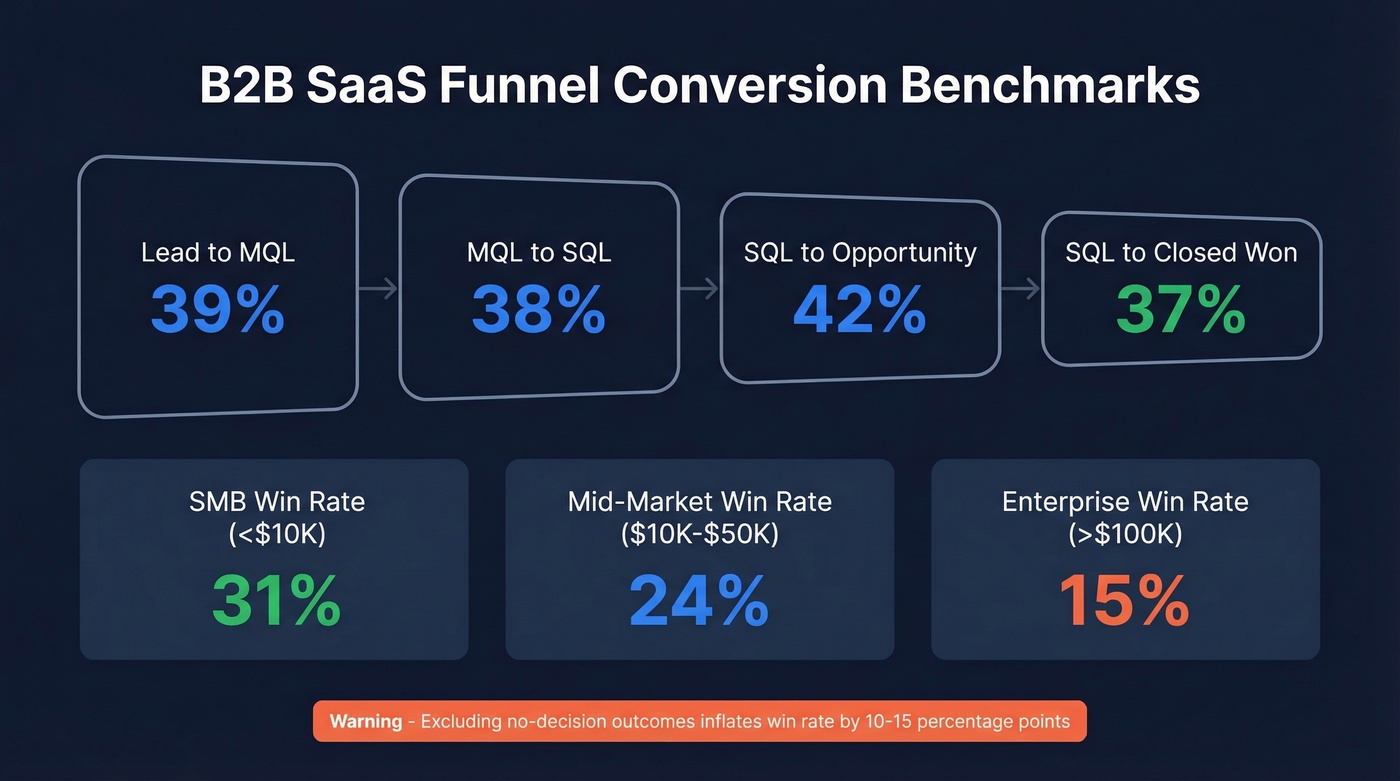

Most articles on sales performance evaluation list metrics without giving you a single number to compare against. Here are benchmarks pulled from B2B SaaS win-rate research and funnel conversion studies covering hundreds of companies.

| Metric | Benchmark | Watch Out For |
|---|---|---|
| Win rate (SMB <$10K) | ~31% | Inflated if "no decision" deals are excluded |
| Win rate (Mid-market $10K-$50K) | ~24% | Drops in $50K-$100K deals (~18%) |
| Win rate (Enterprise >$100K) | ~15% | "No decision" = 40-60% of pipe |
| Pipeline coverage | 3:1 to 4:1 | Below 3:1 = quota risk |
| Lead to MQL | ~39% | Varies wildly by channel |
| MQL to SQL | ~38% | Marketing-sales alignment |
| SQL to Opportunity | ~42% | Qualification quality check |
| SQL to Closed Won | ~37% | Your real conversion engine |

Excluding "no decision" outcomes inflates win rate by 10-15 percentage points. If your team reports a 35% win rate in mid-market SaaS, someone's not counting the deals that went dark.
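To make the "no decision" effect concrete, here is a minimal Python sketch. The deal counts are made-up illustrative numbers, not benchmarks, and `win_rate` is a hypothetical helper name.

```python
def win_rate(won: int, lost: int, no_decision: int = 0) -> float:
    """Share of closed-out opportunities that were won."""
    total = won + lost + no_decision
    if total == 0:
        raise ValueError("no closed opportunities to evaluate")
    return won / total


# Illustrative counts only (assumed for this example).
won, lost, no_decision = 24, 46, 30

reported = win_rate(won, lost)              # deals that went dark are left out
honest = win_rate(won, lost, no_decision)   # deals that went dark are counted

print(f"reported: {reported:.1%}, honest: {honest:.1%}")
# prints "reported: 34.3%, honest: 24.0%"
```

With these assumed counts, leaving out the 30 stalled deals moves the number by about 10 points, which is the kind of gap described above.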

Healthy pipeline coverage sits at 3:1 to 4:1. Below that, your team heads into the quarter without enough pipeline to hit quota. Far above 4:1, you've usually got a pipeline hygiene problem - bloated stages, zombie deals, and contacts that bounced months ago.
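A coverage check is simple enough to sketch in a few lines of Python. The function names and dollar figures are assumptions for illustration. The text does not say where "far above 4:1" begins, so this sketch just flags anything over 4:1 for a closer look.

```python
def pipeline_coverage(open_pipeline: float, remaining_quota: float) -> float:
    """Open pipeline value divided by the quota still to be closed."""
    if remaining_quota <= 0:
        raise ValueError("remaining quota must be positive")
    return open_pipeline / remaining_quota


def coverage_flag(ratio: float, low: float = 3.0, high: float = 4.0) -> str:
    """Classify a coverage ratio against the 3:1 to 4:1 band."""
    if ratio < low:
        return "quota risk"
    if ratio > high:
        return "above range: audit for stale deals"
    return "healthy"


# Illustrative figures (assumed): $1.2M open pipeline against $500K remaining quota.
ratio = pipeline_coverage(1_200_000, 500_000)
print(f"{ratio:.1f}:1 -> {coverage_flag(ratio)}")
# prints "2.4:1 -> quota risk"
```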

Qualitative Signals

Numbers don't tell you everything. A rep hitting 110% quota while burning every customer relationship isn't a top performer - they're a liability.

Track customer feedback scores, call quality (discovery vs. just demoing), CRM hygiene, and cross-functional collaboration. Sales call scorecards are one of the most effective tools here. They give managers a repeatable way to assess discovery depth, objection handling, and next-step commitment on every recorded call.

Normalize qualitative inputs to a 0-100 score using a lightweight 360-degree approach: pull ratings from managers, peers, and customers. Anonymity increases honesty, especially when peers need to flag issues they won't raise in a team meeting.
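One way to do that normalization, sketched in Python. The 1-5 input scale, the group weights, and the function names are all assumptions for illustration; the approach itself only requires that every rater group ends up on the same 0-100 scale.

```python
from statistics import mean


def to_0_100(rating: float, lo: float = 1.0, hi: float = 5.0) -> float:
    """Rescale a rating from the lo-hi scale (assumed 1-5) to 0-100."""
    return (rating - lo) / (hi - lo) * 100


def qualitative_score(ratings: dict[str, list[float]],
                      weights: dict[str, float]) -> float:
    """Weighted 0-100 score across rater groups; empty groups are skipped."""
    scored = {group: to_0_100(mean(values))
              for group, values in ratings.items() if values}
    if not scored:
        raise ValueError("no ratings supplied")
    total_weight = sum(weights[group] for group in scored)
    return sum(weights[group] * score for group, score in scored.items()) / total_weight


# Illustrative ratings and weights (assumed).
ratings = {"manager": [4.0], "peers": [3.0, 4.0, 5.0], "customers": [5.0, 4.0]}
weights = {"manager": 0.4, "peers": 0.3, "customers": 0.3}

score = qualitative_score(ratings, weights)
print(f"qualitative score: {score:.2f}")
# prints "qualitative score: 78.75"
```

Averaging within each group before weighting keeps one large peer group from drowning out a single manager rating, and it means individual responses never need to be shown, which supports anonymity.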

Setting the Right Review Cadence

A leaderboard isn't an evaluation system. You need a rhythm.

| Cadence | What to Track | Why |
|---|---|---|
| Weekly | Activity quality, lead response time (<5 min), appointments set | Early warning system |
| Monthly | Pipeline coverage, win rate, conversion by stage, quota pacing | Course correction window |
| Quarterly | Territory health, coaching ROI, comp alignment, SaaS magic number | Big-picture calibration |

For capacity planning, keep it simple. BDR capacity = max daily leads x business days x number of BDRs. AE capacity follows the same logic with deals instead of leads.
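The capacity formula translates directly into code. In this Python sketch the function name and the input numbers are assumptions for illustration; for AEs, the per-day unit is deals instead of leads.

```python
def team_capacity(max_daily_units: int, business_days: int, headcount: int) -> int:
    """Capacity = max daily leads (or deals) x business days x number of reps."""
    return max_daily_units * business_days * headcount


# Illustrative inputs (assumed): 4 BDRs, 15 leads per day each, 21 business days.
monthly_bdr_capacity = team_capacity(max_daily_units=15, business_days=21, headcount=4)
print(monthly_bdr_capacity)
# prints 1260
```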

Normalize for Territory and Context

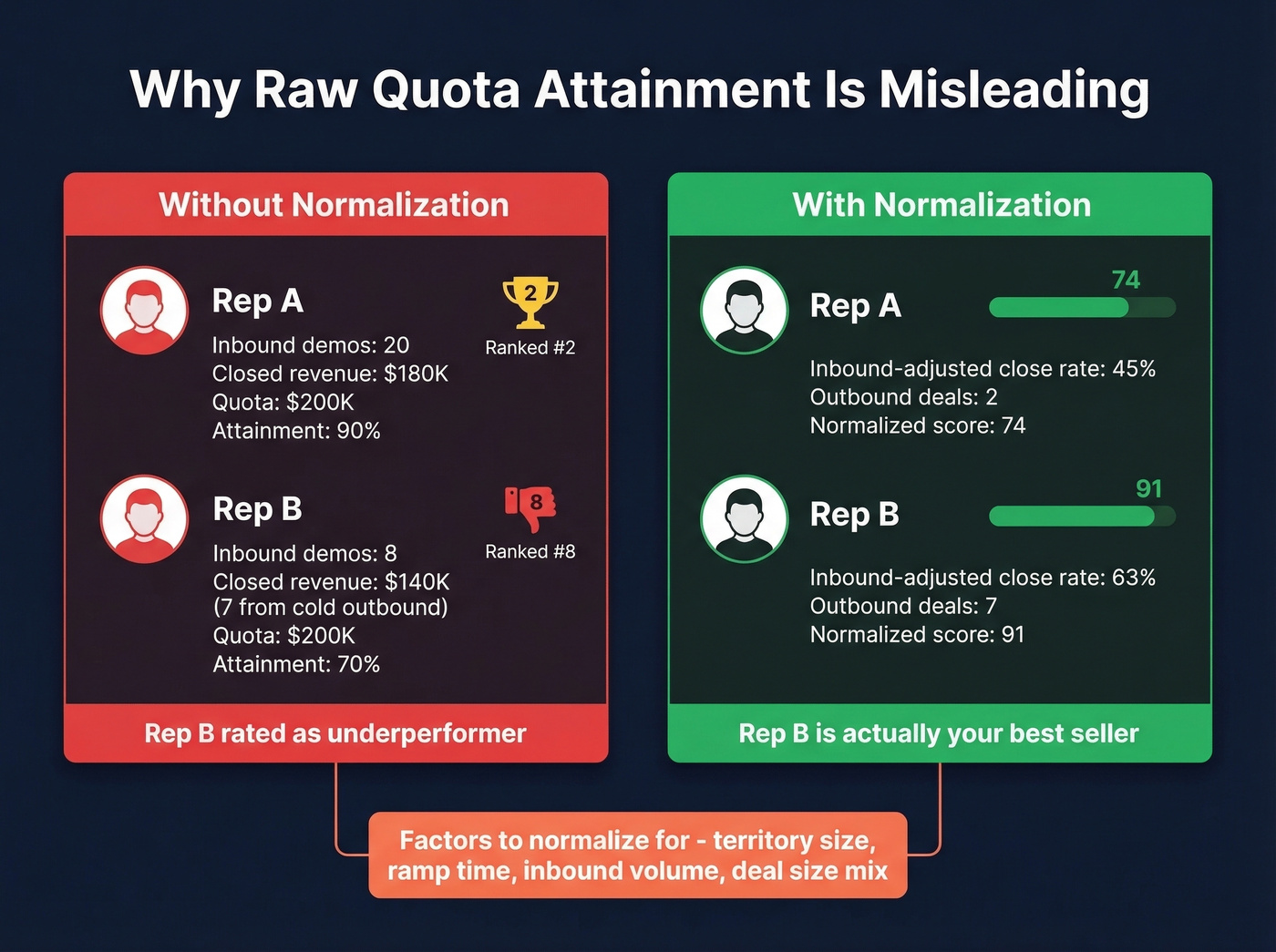

Here's the thing: territory assignment is the single biggest variable in sales performance, and most companies treat it as an afterthought. Raw quota attainment without context is meaningless.

Sales reps on r/sales regularly post about getting rated "partially met expectations" after being set up with impossible territory conditions. We've seen this firsthand - a rep gets assigned a $750K quota but doesn't start selling until three months into the year. They close $219K in direct sales, another $150K gets sold into their territory by others, and they get rated as underperforming. That's not an evaluation. That's a setup.
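Running the numbers from that example shows how much the denominator matters. This Python sketch assumes linear proration of the annual quota over nine selling months. Whether revenue sold into the territory by others should be credited to the rep is a policy decision, so it is shown as a separate figure rather than assumed.

```python
def prorated_quota(annual_quota: float, selling_months: int,
                   months_in_year: int = 12) -> float:
    """Scale an annual quota to the months the rep could actually sell (assumed linear)."""
    return annual_quota * selling_months / months_in_year


annual_quota = 750_000
direct_sales = 219_000
sold_by_others = 150_000

raw_attainment = direct_sales / annual_quota                 # judged against the full year
quota_9_months = prorated_quota(annual_quota, selling_months=9)
adjusted_attainment = direct_sales / quota_9_months          # judged against time in seat
with_territory_credit = (direct_sales + sold_by_others) / quota_9_months

print(f"raw: {raw_attainment:.1%}")
print(f"prorated: {adjusted_attainment:.1%}")
print(f"prorated + territory credit: {with_territory_credit:.1%}")
# prints "raw: 29.2%", "prorated: 38.9%", "prorated + territory credit: 65.6%"
```

None of these figures says the rep hit plan. They show that the same year of selling reads anywhere from 29% to 66% depending on adjustments the review never made explicit.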

Inbound inequality is just as corrosive. When one rep gets 20 demo requests per quarter and another gets 8, comparing their closed revenue without adjusting for opportunity volume is lazy management. The rep with 8 inbound demos who closed 7 cold outbound deals might be your best seller - but the leaderboard won't show it.
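A minimal Python sketch of that adjustment splits wins by source and judges inbound on a per-demo basis. Only the demo counts (20 and 8) and the 7 outbound deals come from the scenario above; the remaining win counts, the rep labels, and the `RepQuarter` class are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class RepQuarter:
    name: str
    inbound_demos: int
    inbound_wins: int
    outbound_wins: int

    @property
    def total_wins(self) -> int:
        """What a raw leaderboard would show."""
        return self.inbound_wins + self.outbound_wins

    @property
    def inbound_close_rate(self) -> float:
        """Inbound wins per demo received, so volume differences cancel out."""
        return self.inbound_wins / self.inbound_demos if self.inbound_demos else 0.0


# Inbound win counts and Rep A's outbound wins are assumed for illustration.
reps = [
    RepQuarter("Rep A", inbound_demos=20, inbound_wins=8, outbound_wins=3),
    RepQuarter("Rep B", inbound_demos=8, inbound_wins=3, outbound_wins=7),
]

for rep in reps:
    print(f"{rep.name}: total={rep.total_wins}, "
          f"inbound close rate={rep.inbound_close_rate:.1%}, "
          f"self-sourced wins={rep.outbound_wins}")
```

With these assumed numbers, Rep A leads the raw leaderboard by one deal, the two reps convert inbound demos at nearly the same rate, and Rep B sources more than twice as many deals without help.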

Design territories based on workload and value, factoring in call frequency, meeting duration, travel time, and prep time. If you don't normalize for these, your performance evaluation is measuring territory luck, not sales skill.

Common Evaluation Mistakes

Measure outcomes with context - not activity in a vacuum. One company scored AEs using this formula: ((2.5 x emails) + (1 x calls) + talk-time minutes) / available minutes. A rep who sent 50 emails, made 100 calls, and talked for 2 hours in an 8-hour day scored 72% "productive" - below the 80% target. That's not evaluation. That's surveillance.
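For reference, the arithmetic behind that 72% can be reproduced in a few lines of Python. This is only a check of the numbers in the example, not a recommended metric; the function name is hypothetical.

```python
def activity_score(emails: int, calls: int, talk_minutes: float,
                   available_minutes: float) -> float:
    """The weighted-activity ratio from the example: weighted touches plus talk time over available time."""
    return (2.5 * emails + 1 * calls + talk_minutes) / available_minutes


# 50 emails, 100 calls, 2 hours of talk time, 8-hour day.
score = activity_score(emails=50, calls=100, talk_minutes=120, available_minutes=480)
print(f"{score:.0%}")
# prints "72%"
```

Nothing in the formula knows whether any of those calls moved a deal forward, which is the point of the criticism.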

Adjust for ramp. A rep three months in shouldn't be judged against someone with two years of territory knowledge. Calibrate rating scales so every manager defines "exceeds expectations" the same way - manager-to-manager variance destroys trust faster than anything else.

Document continuously. Without it, recency bias means the deal that closed last week matters more than the one from month one. In our experience, the biggest evaluation mistake isn't picking the wrong metrics - it's not normalizing for context before applying them.

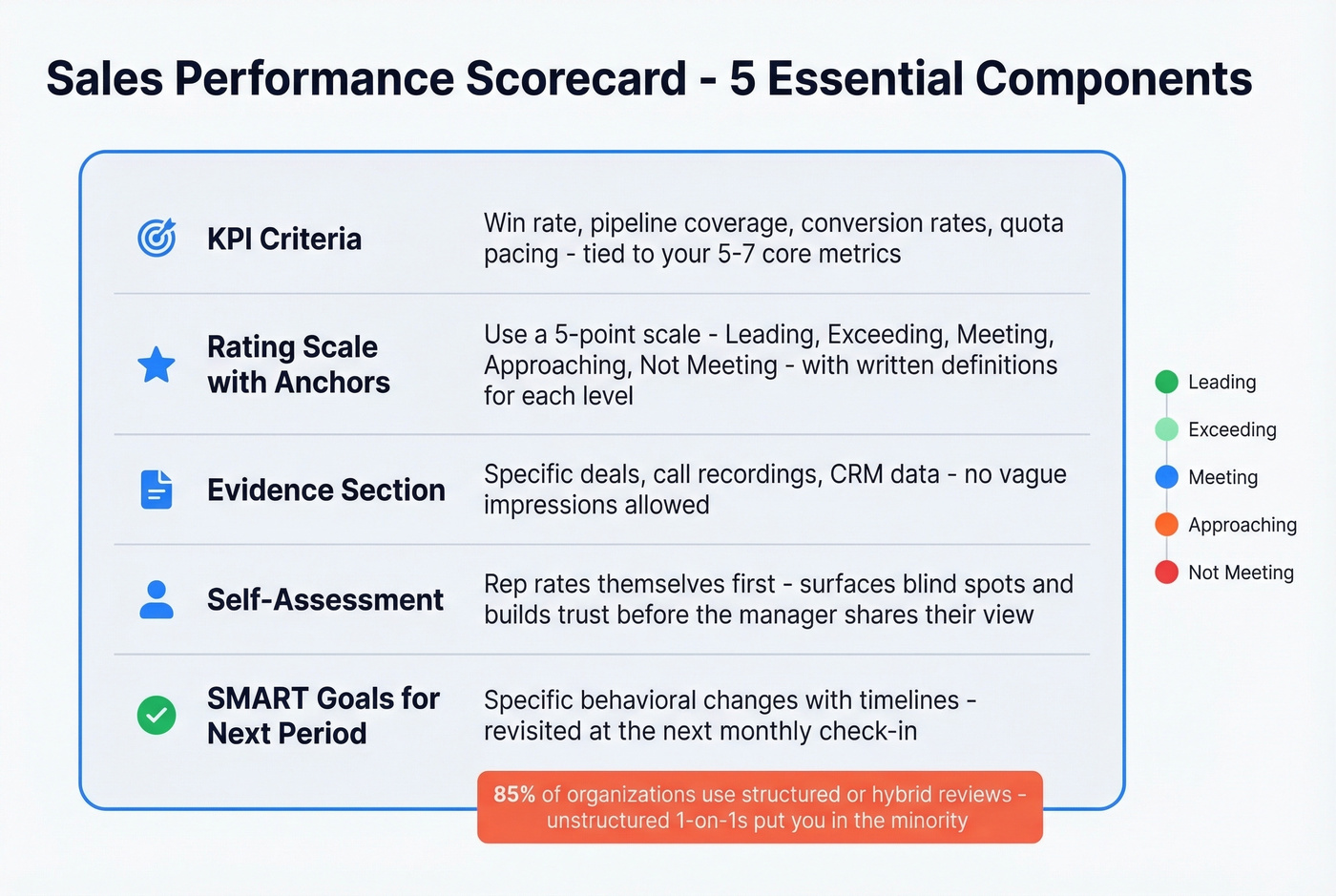

Build a Sales Performance Scorecard

85% of organizations use a structured or hybrid review process. If you're still winging it with unstructured 1:1s, you're in the minority - and not the good kind.

Pick a rating scale and anchor it with definitions. Harvard uses a 5-point system from Leading to Not Meeting Expectations. UC Berkeley's version triggers a performance improvement plan at Level 2. The specific labels matter less than consistency - every manager needs to use the same scale the same way.

Your scorecard needs five things: criteria tied to the KPIs above, a rating scale with written anchors, space for evidence, a self-assessment section, and SMART goals for the next period.

Running the Review Conversation

The scorecard is only half the equation. How you run the conversation determines whether anything changes.

A sales performance review meeting should follow a consistent structure: open with the rep's self-assessment, walk through the scorecard together, discuss two to three specific deals or calls as evidence, and close with agreed-upon next steps. Managers who let reps speak first surface blind spots faster and build more trust than those who lead with their own ratings.

Let's be honest - most review meetings devolve into a data dump. Don't let that happen. The goal isn't to recite every metric. It's to identify one or two behavioral changes that'll move the needle. Tie each action item to a timeline and revisit progress in the next monthly check-in. Reviews that end without clear, written commitments are just conversations that evaporate by Monday.

Data Quality Makes or Breaks Evaluation

Every metric in this article assumes your pipeline data is real. If your contact data bounces at 35%, your pipeline is fiction - and your evaluation is built on it.

Snyk's 50-person AE team was running bounce rates of 35-40% before switching to Prospeo's verified data. Bounces dropped under 5%, AE-sourced pipeline jumped 180%, and they were generating 200+ new opportunities per month. The evaluation framework didn't change. The data underneath it did. If your reps are building pipeline on stale, unverified contacts, every conversion metric you track is inflated. Fix the data first, then evaluate.

FAQ

How many sales metrics should I actually track?

Five to seven, max. Pick three to four leading indicators - pipeline created, activity quality, lead response time - and two to three lagging indicators like win rate, quota attainment, and revenue. Monitoring 30 metrics leads to dashboard paralysis where nobody acts on anything.

How often should I run performance reviews?

Weekly activity pulses, monthly pipeline and win-rate reviews, and quarterly strategic deep-dives. Annual-only reviews are dead - 59% of employees say they have no impact. Monthly cadence is where real course correction happens.

What's a good win rate for B2B sales?

The average B2B win rate is ~21% across all opportunities, ~29% for qualified-only. By deal size: SMB ~31%, mid-market ~24%, enterprise ~15%. Count "no decision" outcomes as losses, or your number is inflated by 10-15 points.

How do I make sure my pipeline data is accurate enough for evaluation?

Use a verified data source with regular refresh cycles. If your bounce rate exceeds 5%, your pipeline metrics are unreliable and every downstream evaluation is suspect. Look for providers that refresh contact data weekly rather than on the 6-week cycle that's standard across the industry.

How should I use call scorecards in reviews?

Build a scorecard with five to eight criteria - opening, discovery quality, objection handling, value articulation, next-step commitment - and score each on a 1-5 scale. Review two to three recorded calls per rep before each meeting so you're discussing specific moments, not vague impressions.
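A call scorecard along these lines can be sketched in Python. The five criteria come from the answer above; the scores, function names, and the idea of surfacing the weakest criterion across calls are assumptions for illustration.

```python
from statistics import mean

CRITERIA = (
    "opening",
    "discovery quality",
    "objection handling",
    "value articulation",
    "next-step commitment",
)


def call_average(scores: dict[str, int]) -> float:
    """Average of one call's 1-5 scores; every criterion must be scored."""
    for criterion in CRITERIA:
        if criterion not in scores:
            raise ValueError(f"missing score for {criterion!r}")
        if not 1 <= scores[criterion] <= 5:
            raise ValueError(f"{criterion!r} must be scored 1-5")
    return mean(scores[c] for c in CRITERIA)


def weakest_criterion(calls: list[dict[str, int]]) -> str:
    """Criterion with the lowest average across the reviewed calls."""
    per_criterion = {c: mean(call[c] for call in calls) for c in CRITERIA}
    return min(per_criterion, key=per_criterion.get)


# Three recorded calls for one rep (illustrative scores, in CRITERIA order).
calls = [
    dict(zip(CRITERIA, (4, 2, 3, 4, 3))),
    dict(zip(CRITERIA, (5, 3, 3, 4, 2))),
    dict(zip(CRITERIA, (4, 2, 4, 3, 3))),
]

print([call_average(call) for call in calls])
print(weakest_criterion(calls))
# prints "[3.2, 3.4, 3.2]" then "discovery quality"
```

The per-call averages look almost identical here. The per-criterion view is what gives the review meeting a specific topic.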