How Lead Nurturing Scoring Actually Works (and the Model You Can Steal)

You've got 3,000 MQLs in the CRM. Twelve became opportunities. Marketing says the leads are great. Sales says they're garbage. The real problem? Nobody agreed on what "qualified" means, and there's no lead nurturing scoring model to settle the argument.

Only 44% of organizations actually use lead scoring. The ones that do see 138% ROI on lead generation versus 78% without it. That gap is the difference between a pipeline that converts and one that just looks full.

Here's the thing: most teams don't need a fancier tool. They need a scoring model they'll actually maintain and a data layer that doesn't rot. Everything below is built around that principle.

The Four Pieces, Quickly

A scoring model with specific point values across fit, behavior, intent, and interaction. Decay rules with exact time windows. A threshold methodology tied to sales capacity, not gut feel. And a mistake checklist covering the three failures that kill most programs. Companies that nail this generate 50% more sales-ready leads at 33% lower cost.

How Scoring Powers Nurturing

Scoring prioritizes. Nurturing converts. Two halves of the same system - scoring tells you who needs attention, nurturing determines what kind. When you combine these into a single workflow, every touchpoint becomes intentional rather than arbitrary.

Map content to journey stages: TOFU guides for cold leads, MOFU case studies and webinars for warm ones, BOFU demos and pricing pages for leads approaching handoff. Trigger-based workflows built around score changes produce 8x higher open rates versus broadcast campaigns. And target the buying committee, not just the contact - B2B decisions are made by groups, not individuals, so account-level scoring matters as much as contact-level scoring.

The Scoring Model You Can Steal

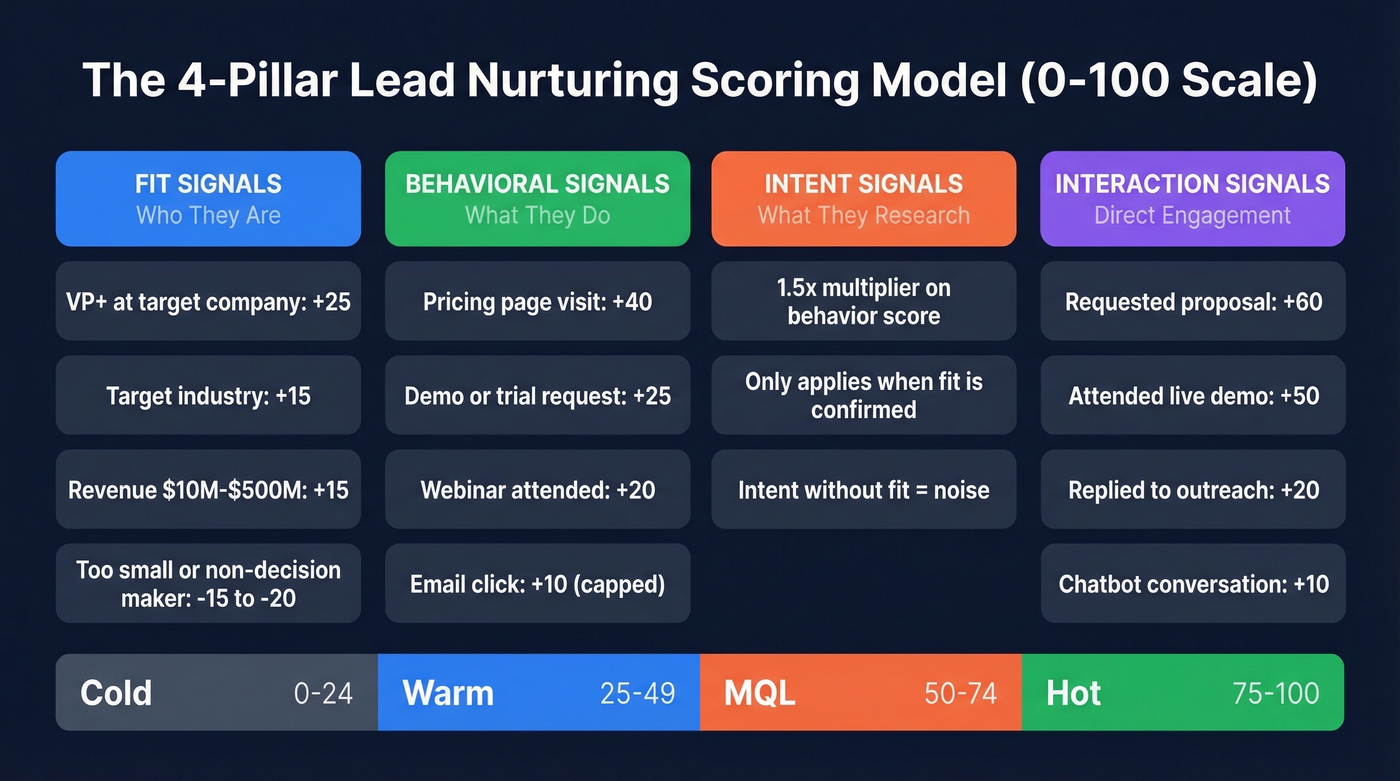

This framework uses four pillars scored on a 0-100 scale. We've seen variations of this work across dozens of implementations - the point values below reflect actual buying-signal hierarchy, not arbitrary numbers.

Score Bands and SLAs

| Band | Score | Action | SLA |
|---|---|---|---|
| Cold | 0-24 | Automated nurture only | None |
| Warm | 25-49 | Nurture + monitor | Weekly review |
| MQL | 50-74 | Route to SDR | Call within 48h |
| Hot | 75-100 | Priority handoff | Call within 24h |
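If you want to wire the bands into a routing script, a minimal sketch might look like the one below. The `Band` type and `band_for` function are hypothetical names for illustration; the floors, actions, and SLAs come straight from the table. Clamping out-of-range scores to 0-100 is an assumption.

```python
from typing import NamedTuple


class Band(NamedTuple):
    name: str
    floor: int   # lowest score in the band (inclusive)
    action: str
    sla: str


# Bands from the table above, ordered from highest floor to lowest.
BANDS = [
    Band("Hot", 75, "Priority handoff", "Call within 24h"),
    Band("MQL", 50, "Route to SDR", "Call within 48h"),
    Band("Warm", 25, "Nurture + monitor", "Weekly review"),
    Band("Cold", 0, "Automated nurture only", "None"),
]


def band_for(score: float) -> Band:
    """Clamp the score to 0-100, then return the first band whose floor it meets."""
    clamped = max(0, min(100, score))
    for band in BANDS:
        if clamped >= band.floor:
            return band
    return BANDS[-1]  # unreachable after clamping; kept as a safe default
```

Clamping means a heavily penalized lead still resolves to Cold instead of raising an error.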

Point Values by Pillar

Fit signals - who they are:

- VP+ at target company: +25

- Target industry: +15

- Revenue $10M-$500M: +15

- Company <10 employees: -20

- Non-decision-maker, intern, or student: -15

Behavioral signals - what they do:

- Demo or trial request: +40

- Pricing page visit: +25

- Webinar attended: +20

- Case study download: +15

- Email click: +10

- Email open: +3, capped at +15 total

- Blog read over 1 min: +3

Look - a demo request is someone raising their hand. A pricing page visit is someone window-shopping with intent. The point values should reflect that hierarchy. If your model scores passive browsing higher than active requests, you're routing the wrong leads to sales.

Intent signals - what they're researching:

Treat intent as a multiplier, not a standalone score. If a lead is actively researching your category via intent data, multiply their behavioral score by 1.5. But a lead researching your category from outside your ICP isn't an MQL - they're a content consumer. Intent without fit is noise.
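Here is one way to sketch the multiplier rule. The function name and the two boolean flags are assumptions for illustration; how you decide that a lead "has intent" or "fits the ICP" depends on your intent provider and your fit rules.

```python
def apply_intent(behavior_score: float, has_intent: bool, fits_icp: bool,
                 multiplier: float = 1.5) -> float:
    """Boost the behavioral score only when intent and fit are both present."""
    if has_intent and fits_icp:
        return behavior_score * multiplier
    # Intent without fit is noise, so the score passes through unchanged.
    return behavior_score
```

Gating the boost on fit keeps an out-of-ICP researcher from being multiplied into the MQL band.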

Interaction signals - direct engagement with your team:

- Requested proposal: +60

- Attended live demo: +50

- Replied to outreach: +20

- Chatbot conversation: +10

Cap repeated behaviors. Someone who opens 30 emails isn't 30x more qualified - they're one engaged contact. Set ceilings per action type and expire engagement points after six months.
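A per-action ceiling is easy to express in code. In this sketch only the email-open values (+3 each, capped at +15) come from the model above; the other ceilings and the `RULES` table itself are illustrative assumptions you would replace with your own.

```python
# action -> (points per event, ceiling for that action type)
# Only the email_open cap is taken from the model; the rest are assumed.
RULES = {
    "email_open": (3, 15),
    "email_click": (10, 30),
    "blog_read": (3, 15),
    "webinar_attended": (20, 40),
}


def capped_behavior_score(event_counts: dict[str, int]) -> int:
    """Sum behavioral points, limiting each action type to its ceiling."""
    total = 0
    for action, count in event_counts.items():
        if action not in RULES:
            continue  # unknown actions score nothing
        points, ceiling = RULES[action]
        total += min(points * count, ceiling)
    return total
```

With these rules, 30 email opens score the same 15 points as five.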

Negative Scoring

| Signal | Points |
|---|---|
| Competitor domain | -1,000 (instant disqualification) |
| Careers page visit | -30 |
| Unsubscribe | -25 |
| Consumer email (Gmail, Yahoo) | -15 |
| Company size outside ICP | -15 |
| Student or personal email | -10 |

The competitor domain rule is the most important one here. We've watched teams implement competitor-domain disqualification and see SDR productivity jump within the first week - nothing wastes rep time faster than routing a competitor's employee into a sales sequence.
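As a rough sketch, the competitor check can run first and short-circuit everything else. The domain sets below are placeholders (`rival.example` is hypothetical), and only three of the other penalties from the table are shown to keep the example short.

```python
COMPETITOR_DOMAINS = {"rival.example"}          # hypothetical placeholder list
CONSUMER_DOMAINS = {"gmail.com", "yahoo.com"}


def negative_points(email: str, visited_careers: bool = False,
                    unsubscribed: bool = False) -> int:
    """Return the total penalty for a contact, using values from the table."""
    domain = email.rsplit("@", 1)[-1].lower()
    if domain in COMPETITOR_DOMAINS:
        return -1000  # instant disqualification; nothing else matters
    points = 0
    if domain in CONSUMER_DOMAINS:
        points -= 15
    if visited_careers:
        points -= 30
    if unsubscribed:
        points -= 25
    return points
```

Returning early on a competitor domain means no amount of positive engagement can pull that contact back into a sales sequence.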

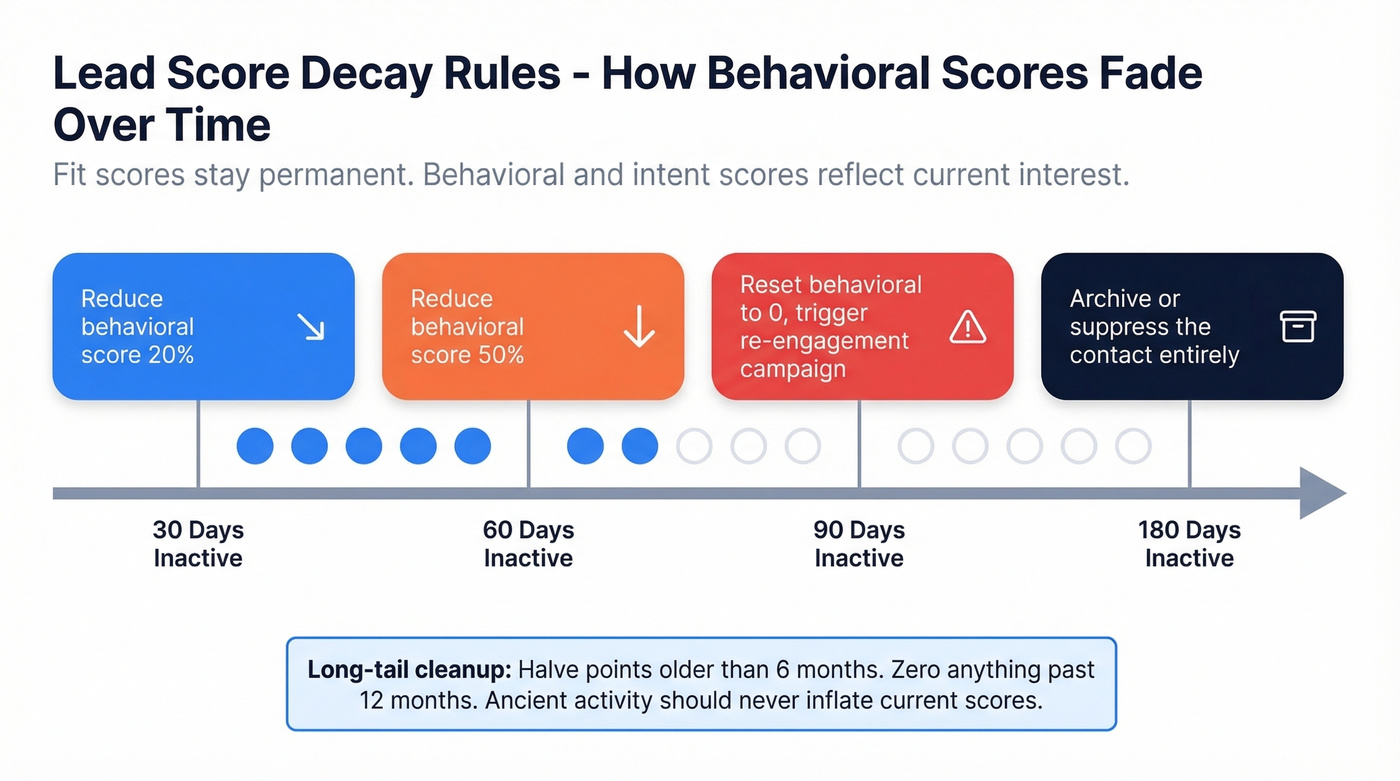

Score Decay Rules

Fit scores stay permanent - a VP at a target account is still a VP next quarter. But behavioral and intent scores reflect current interest, and current interest fades.

Use explicit inactivity windows to keep your behavioral score honest:

| Inactivity Window | Action |
|---|---|
| 30 days | Reduce behavioral score 20% |
| 60 days | Reduce behavioral score 50% |
| 90 days | Reset behavioral to 0, trigger re-engagement |
| 180 days | Archive or suppress |

Then add a long-tail cleanup rule: halve behavioral points older than six months, and zero anything past twelve months. Ancient activity shouldn't linger in edge cases and inflate scores that no longer mean anything.
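The inactivity windows from the table can be sketched as a single function. This version assumes each reduction is taken from the original behavioral score rather than compounded on the previous step (the table doesn't say which), and it leaves the 180-day archive step and the long-tail cleanup to whatever job manages your list.

```python
def decayed_behavior_score(score: float, days_inactive: int) -> float:
    """Apply the 30/60/90-day inactivity windows to a behavioral score.

    Assumption: reductions are relative to the original score, not cumulative.
    """
    if days_inactive >= 90:
        return 0.0          # reset; the caller should also trigger re-engagement
    if days_inactive >= 60:
        return score * 0.5  # 50% reduction
    if days_inactive >= 30:
        return score * 0.8  # 20% reduction
    return float(score)
```

Fit points never pass through this function, which is what keeps them permanent.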

Scoring models break when contact data decays. Prospeo refreshes every 7 days - not the 6-week industry average - so your fit and behavioral scores reflect reality, not stale records. With 98% email accuracy and 83% enrichment match rates, your MQL threshold actually means something.


Setting Your MQL Threshold

Your MQL threshold is a capacity calculation, not a magic number.

If your SDR team can handle 50 leads per week and you're sending 200, the threshold is too low. If pipeline is thin and SDRs are idle, lower it - but tighten demographic filters to maintain quality. Most B2B teams land between 60 and 100 points. One team we worked with lowered their activity threshold while tightening title and seniority filters, which increased their MQL-to-meeting rate by 13%. The lesson: threshold tuning isn't about the number - it's about which signals carry the weight.
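To make the capacity calculation concrete, here is a minimal sketch that picks the lowest threshold your SDR team can absorb. It assumes you can export one typical week of lead scores; the function name and inputs are hypothetical.

```python
def capacity_threshold(weekly_scores: list[float], sdr_capacity: int) -> float:
    """Return the lowest score that keeps weekly MQL volume within capacity.

    weekly_scores: scores of all leads seen in a typical week.
    sdr_capacity: how many leads the SDR team can work per week.
    Ties at the threshold can push volume slightly over capacity.
    """
    if sdr_capacity <= 0:
        raise ValueError("sdr_capacity must be positive")
    ranked = sorted(weekly_scores, reverse=True)
    if len(ranked) <= sdr_capacity:
        return min(ranked, default=0.0)  # the team can work every lead
    return ranked[sdr_capacity - 1]      # score of the last lead that fits
```

Rerun it whenever headcount or lead volume changes; the number it returns is a starting point for the fit-filter tuning described above, not a replacement for it.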

Define MQL as "should I call them?" not "is this an opportunity?" That framing alone prevents half the arguments between marketing and sales.

Three Mistakes That Kill Nurturing

Premature handoff. MQL-to-SQL conversion below 20%? SDRs are overwhelmed and ignoring leads. The fix isn't more SDRs - it's raising the threshold and keeping leads in nurture longer.

Generic content scoring. Scoring all downloads the same is lazy. A buyer's guide signals consideration. A trends report signals curiosity. Score specific assets by funnel stage, because a pricing comparison PDF and a "state of the industry" report carry wildly different buying intent, and your model should reflect that gap with at least a 10-15 point spread between them.

No fit-versus-behavior matrix. Rigid demographic gates miss engaged prospects with incomplete enrichment data. Use a 2D model:

| | High Behavior | Low Behavior |
|---|---|---|
| High Fit | MQL | Nurture |
| Low Fit | Monitor | Suppress |
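The matrix translates directly into a routing function. The cutoffs that separate "high" from "low" are assumptions in this sketch (25 on each axis); set them from your own conversion data.

```python
def route(fit_score: float, behavior_score: float,
          fit_cutoff: float = 25, behavior_cutoff: float = 25) -> str:
    """Map a lead onto the fit-versus-behavior matrix (cutoffs are assumed)."""
    high_fit = fit_score >= fit_cutoff
    high_behavior = behavior_score >= behavior_cutoff
    if high_fit and high_behavior:
        return "MQL"
    if high_fit:
        return "Nurture"    # right profile, not yet engaged
    if high_behavior:
        return "Monitor"    # engaged, but fit is weak or enrichment is missing
    return "Suppress"
```

Keeping fit and behavior as separate inputs is what lets an engaged lead with missing enrichment land in Monitor instead of being dropped.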

Let's be honest about the part most guides skip: you need a feedback loop. Have SDRs flag leads that scored high but didn't convert. Feed that data back into the model monthly. Without this loop, your scoring model calcifies around assumptions that were wrong from day one. The consensus on r/sales is that most scoring models fail not because of bad logic, but because nobody maintains them past launch.
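One lightweight way to run that monthly loop is to count which signals show up most often on the leads SDRs flagged. This is a sketch under the assumption that you can export, for each flagged lead, the list of signals that contributed to its score; the signal names are hypothetical.

```python
from collections import Counter


def overweighted_signals(flagged_leads: list[list[str]],
                         top_n: int = 3) -> list[tuple[str, int]]:
    """Count how often each signal appears on high-scoring leads that didn't convert."""
    counts = Counter(
        signal
        for signals in flagged_leads
        for signal in set(signals)  # count each signal once per lead
    )
    return counts.most_common(top_n)
```

Signals that dominate this list are candidates for lower point values or tighter caps at the next review.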

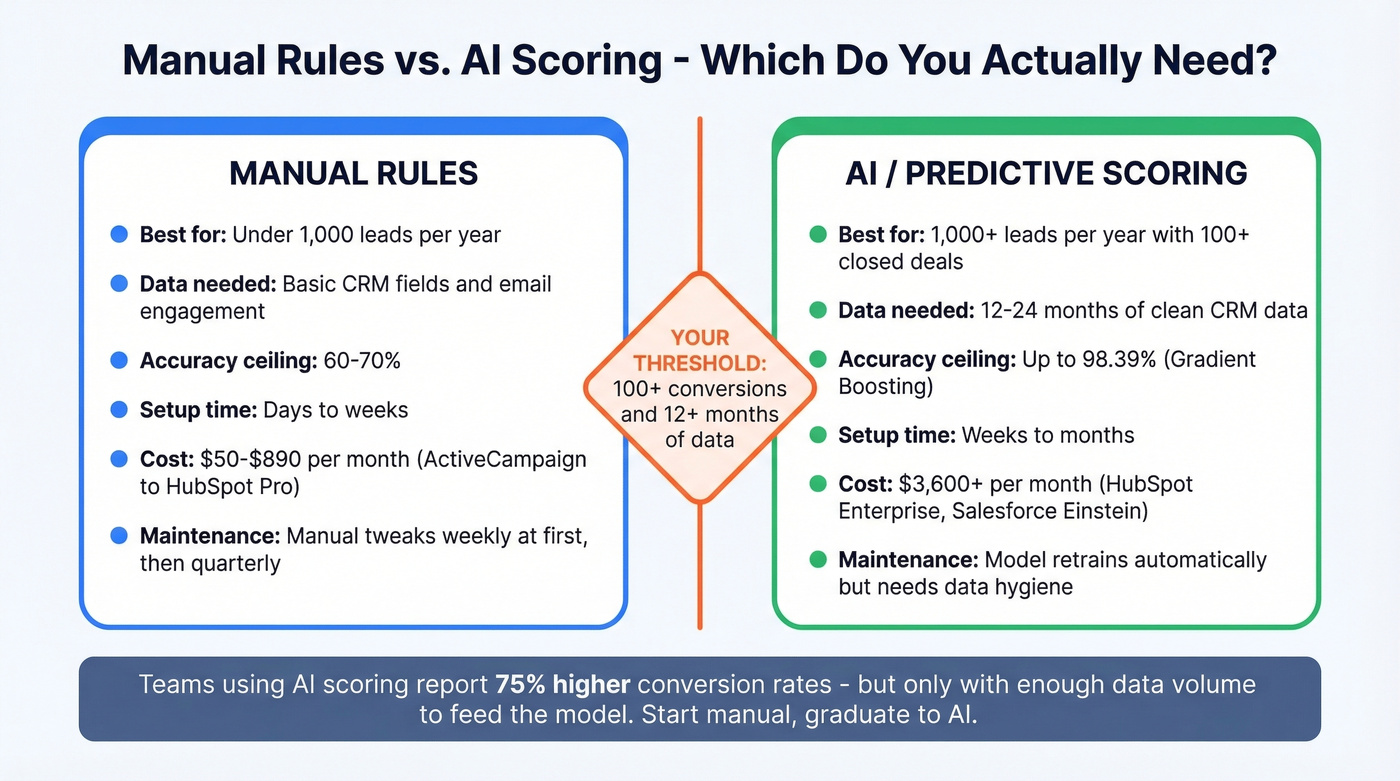

Do You Need AI Scoring?

Skip AI scoring if you're running fewer than 1,000 leads per year or have fewer than 100 closed deals to train on. Manual rules will outperform any predictive model at that volume - you simply don't have enough data.

AI becomes worth it with 12-24 months of clean CRM data and 100+ conversions. A Frontiers in Artificial Intelligence study testing 15 algorithms on B2B lead data found Gradient Boosting hit 98.39% accuracy, versus the 60-70% ceiling of rule-based scoring. Teams using machine learning report 75% higher conversion rates. The gap is real - but only if you have the data volume to feed it.

Save the $3,600/month HubSpot Enterprise bill until you do.

Tools and What They Cost

| Tool | Scoring Type | Price |
|---|---|---|
| HubSpot Pro | Manual rules | $890/mo (3 seats) |
| HubSpot Enterprise | Predictive | $3,600/mo (10-seat min) |
| HubSpot Breeze Intelligence | AI add-on | $45/mo for 100 credits |
| Salesforce Einstein | AI-powered | $165/user/mo + $50/user/mo add-on |
| Marketo | Behavior + Demographic | ~$1,000-$3,000/mo |
| ActiveCampaign | Rules-based | ~$50-$150/mo |

HubSpot Enterprise unlocks predictive scoring but requires $3,500 onboarding on top of the monthly cost. Salesforce Einstein needs Sales Cloud Enterprise plus the Einstein add-on, and implementation costs can balloon past $500K for complex orgs. Marketo takes a different approach with separate Behavior Score and Demographic Score fields that combine for routing. ActiveCampaign is the budget-friendly option for smaller teams that just need basic rules.

None of these tools matter if the data feeding them is bad. If 20% of your emails bounce, you're scoring contacts you can never reach. You route a lead to sales with a score of 85, the rep's first email bounces, and trust in the entire system erodes. Before you activate any scoring platform, run your contact list through Prospeo's verification - it pulls from 143M+ verified emails on a 7-day refresh cycle, so the data your scores are built on stays current instead of weeks stale.

Your fit-versus-behavior matrix needs complete data to work. Prospeo enriches contacts with 50+ data points - title, seniority, company size, revenue, tech stack - so you can score fit signals with confidence instead of guessing. At $0.01 per email, enriching your entire nurture database costs less than one misrouted MQL.


FAQ

What's the difference between lead scoring and lead grading?

Scoring assigns points based on behavior and engagement - what a lead does. Grading evaluates demographic and firmographic fit - who a lead is. Best practice is using both together in a fit-versus-behavior matrix so you don't route engaged-but-poor-fit leads to sales.

How often should I recalibrate my model?

Tweak weekly during the first one to two months after launch, then shift to quarterly reviews. Monitor MQL-to-SQL conversion rates - if they drop below 20%, your model needs recalibration. Running scoring in the background before announcing it to sales lets you validate without creating noise.

What's a good MQL threshold?

Most B2B teams land between 60 and 100 points, but the right number depends on your sales team's capacity. If SDRs are overwhelmed, raise the threshold. If pipeline is thin, lower it while tightening demographic filters so you're not sacrificing lead quality for volume.

How does data quality affect scoring accuracy?

Stale emails and wrong job titles mean fit scores are inaccurate and behavioral signals go to unreachable contacts. A 7-day data refresh cycle prevents scoring models from degrading - compared to the industry-standard six-week refresh at most providers. Data verification should be step zero before you configure any platform.