B2B Lead Scoring: Build a Model Sales Won't Ignore

Your marketing team sent 200 MQLs to sales last month. Sales booked 11 meetings. The VP of Sales is calling the leads "garbage," and marketing is pointing at open rates as proof the leads are "engaged." Both teams are right, and both are wrong - the lead scoring model between them is broken. Only 27% of leads sent to sales are actually qualified, which means the other 73% are wasting everyone's time.

Scoring leads in B2B isn't complicated. It's just poorly implemented at most companies. Before assigning any points, document your buyer persona and map the buyer journey. The persona tells you who to score highly (fit). The journey tells you which actions signal buying progress (behavior + intent). Without both, you're guessing at weights.

This playbook gives you exact point values, decay rules, SLA tiers, and operational guardrails to build a model your sales team will actually trust.

The System in Five Rules

Here's the entire framework compressed:

- Keep criteria to ~4 pillars. High-conversion companies average four scoring criteria. More complexity doesn't mean more accuracy - it means more maintenance and more drift.

- Weight intent over activity. A pricing page visit is worth more than ten email opens. Visiting a competitor comparison page signals buying mode. An email open signals a pulse.

- Enforce decay rules. Points from six months ago aren't worth what they were. Halve them. Points from twelve months ago? Zero them out.

- Tie scores to SLAs. A score without a response-time SLA is just a number. If a lead hits 80+ and nobody calls for three days, the model isn't the problem.

- Start with clean data. Stale firmographics and bounced emails make every score fiction. Fix the data layer first.

If your sales team ignores MQLs, the model is broken - not the sales team. Let's fix the model.

Why Scoring Leads Matters for Revenue

The ROI gap is stark: benchmarks show 138% ROI with lead scoring versus 78% without. Yet only 44% of organizations use it. That's a massive competitive edge sitting on the table.

The cost of not scoring isn't just inefficiency - it's lost revenue. 70% of leads are lost to poor sales follow-up. When every lead looks like the same priority, reps either cherry-pick based on gut feel or work the list top-to-bottom. Neither approach optimizes for revenue. Meanwhile, 68% of efficient marketers cite lead scoring as a primary driver of revenue contribution. Teams that score leads close more deals, waste fewer hours, and fight less about lead quality.

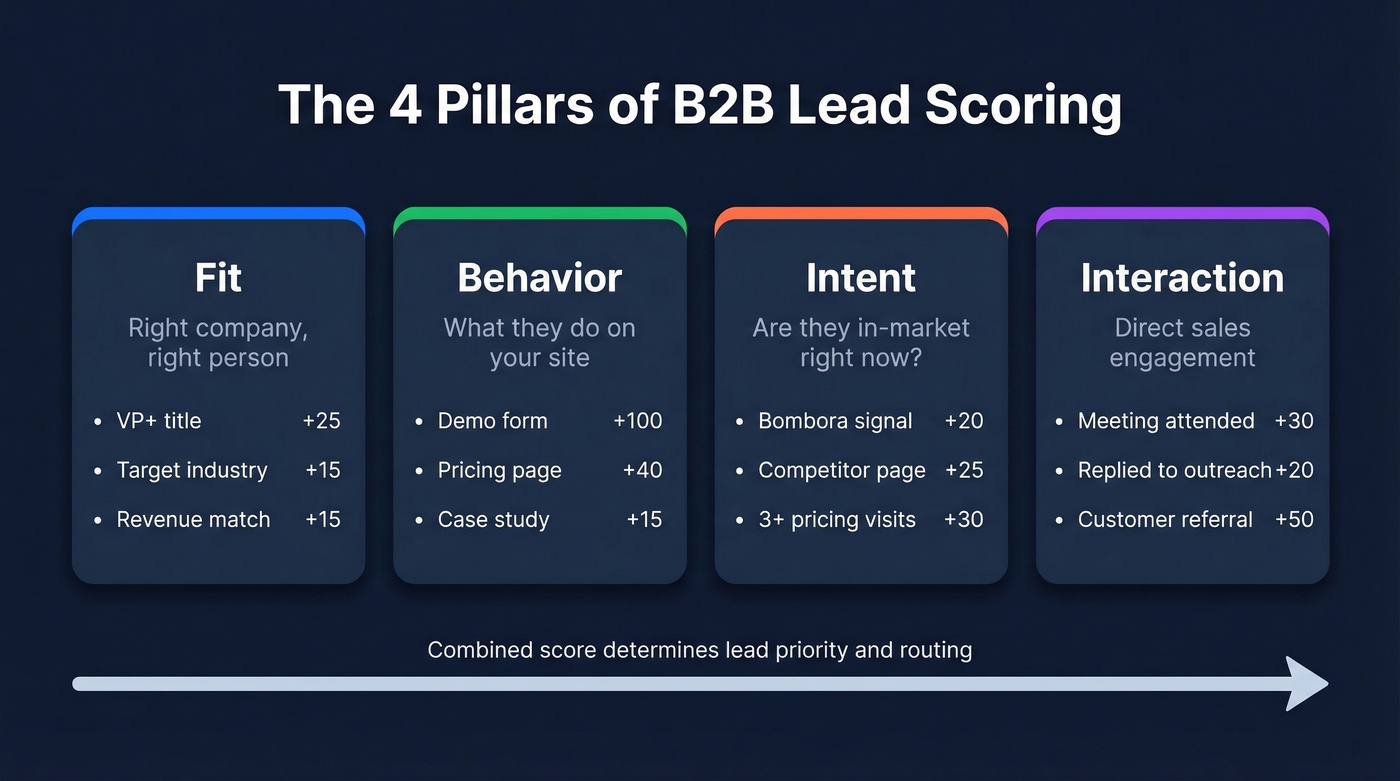

Four Pillars of an Effective Model

Every scoring model worth using rests on four pillars: fit, behavior, intent, and interaction. Most guides lump these together or skip two entirely. Here's each one with specific point values you can steal today.

Fit Scoring (Right Company, Right Person)

Fit scoring answers the most basic question: is this person at a company you can actually sell to, in a role that can actually buy? A perfect behavioral score from someone at a 5-person company when you sell enterprise software is still a bad lead.

| Signal | Points |
|---|---|
| VP+ at target company | +25 |
| Target industry | +15 |
| Revenue $10M-$500M | +15 |
| Within serviceable area | +10 |
| Outside serviceable area | -100 |
| Company <10 employees | -20 |
| Intern or student title | -15 |

If you sell a service with regional constraints, a prospect in your serviceable area gets +10. Outside it entirely? That's -100. Don't let geographic misfits inflate your pipeline.
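As a rough illustration, the fit table can be expressed as a small rules function. This is a minimal sketch, not a CRM implementation: the `Lead` dataclass, its field names, and the `title_level` labels are hypothetical, and only the point values come from the table above.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    # Hypothetical field names; map them to whatever your CRM exposes.
    title_level: str          # e.g. "vp_plus", "manager", "intern_or_student"
    in_target_industry: bool
    revenue_usd: float
    employees: int
    in_serviceable_area: bool


def fit_score(lead: Lead) -> int:
    """Apply the fit table: who the person is and where they work."""
    score = 0
    if lead.title_level == "vp_plus":
        score += 25
    elif lead.title_level == "intern_or_student":
        score -= 15
    if lead.in_target_industry:
        score += 15
    if 10_000_000 <= lead.revenue_usd <= 500_000_000:
        score += 15
    # Geography is either a small bonus or a near-disqualifier.
    score += 10 if lead.in_serviceable_area else -100
    if lead.employees < 10:
        score -= 20
    return score


strong = Lead("vp_plus", True, 50_000_000, 300, True)
weak = Lead("intern_or_student", False, 1_000_000, 5, False)
print(fit_score(strong), fit_score(weak))
```

Keeping fit as its own function makes it easy to report the fit score separately from engagement when sales asks why a lead was routed.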

Behavioral Scoring (What They're Doing)

Behavioral scoring tracks what prospects do on your site and in your campaigns. The trap here is score inflation - email opens are easy to accumulate and nearly meaningless as buying signals.

| Action | Points | Cap |
|---|---|---|
| Demo form submitted | +100 | None |
| Pricing page visit | +40 | None |
| Webinar registration | +30 | None |
| Webinar attendance | +20 | None |
| Case study download | +15 | None |
| Email click | +10 | None |
| Email open | +3 | +15 total |

That cap on email opens is critical. Without it, a newsletter subscriber who opens every Tuesday email for three months racks up 36+ points from opens alone (12 or more opens at 3 points each) - and they've never once looked at your product. Cap repeatable low-signal actions aggressively.
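Here is one way the capped behavioral table might look in code. It is a sketch under assumptions: the event names in `BEHAVIOR_RULES` are made up for illustration, while the points and the 15-point cap on opens mirror the table.

```python
from collections import Counter

# event name -> (points per occurrence, cap on total points or None)
# Event names are hypothetical; the values follow the behavioral table.
BEHAVIOR_RULES = {
    "demo_form": (100, None),
    "pricing_page_visit": (40, None),
    "webinar_registration": (30, None),
    "webinar_attendance": (20, None),
    "case_study_download": (15, None),
    "email_click": (10, None),
    "email_open": (3, 15),
}


def behavior_score(events: list[str]) -> int:
    """Sum points per event type, applying the cap where one is defined."""
    total = 0
    for event, count in Counter(events).items():
        if event not in BEHAVIOR_RULES:
            continue  # unknown events earn nothing
        points, cap = BEHAVIOR_RULES[event]
        subtotal = points * count
        if cap is not None:
            subtotal = min(subtotal, cap)
        total += subtotal
    return total


# Twelve weekly opens would be 36 points uncapped; the cap holds them at 15.
print(behavior_score(["email_open"] * 12))
```

Storing the cap next to the point value keeps the rule for each action in one place, which helps during quarterly audits.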

Intent Scoring (Are They In-Market?)

Intent data separates "interested in the category" from "actively evaluating solutions." Third-party intent signals from providers like Bombora, G2, and review sites reveal when a company is researching your category - often before anyone fills out a form.

| Signal | Points |
|---|---|
| Bombora intent signal for your category | +20 |
| Competitor comparison page visit | +25 |
| 3+ pricing page visits in 7 days | +30 |
| G2/review site activity | +15 |
| Return visit within 48 hours | +10 |

First-party intent is equally powerful: clusters of pricing page visits, repeated views of competitor comparison pages, and return visits within a short window all signal buying mode. Gartner highlights "willingness to advocate" as a heavily weighted criterion among high-conversion companies - prospects who engage with review sites and share content are further along than passive browsers. Layer third-party intent with first-party behavioral data for the strongest signal.
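The "3+ pricing page visits in 7 days" signal is a sliding-window check over visit timestamps. The sketch below assumes you can export those timestamps per lead; the function names are hypothetical, and only the 3-visit, 7-day, +30 values come from the intent table.

```python
from datetime import datetime, timedelta


def has_visit_cluster(
    visits: list[datetime],
    min_visits: int = 3,
    window: timedelta = timedelta(days=7),
) -> bool:
    """True if any min_visits consecutive visits fall inside the window."""
    ordered = sorted(visits)
    for i in range(len(ordered) - min_visits + 1):
        if ordered[i + min_visits - 1] - ordered[i] <= window:
            return True
    return False


def pricing_cluster_points(visits: list[datetime]) -> int:
    # +30 from the intent table when the cluster is present, else nothing.
    return 30 if has_visit_cluster(visits) else 0


clustered = [datetime(2025, 1, 1), datetime(2025, 1, 3), datetime(2025, 1, 6)]
spread_out = [datetime(2025, 1, 1), datetime(2025, 1, 10), datetime(2025, 1, 20)]
print(pricing_cluster_points(clustered), pricing_cluster_points(spread_out))
```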

Interaction Scoring (Sales Touchpoints)

Once a prospect engages with your sales team directly, those interactions carry serious weight. A meeting attended, a reply to outreach, or a referral from an existing customer all indicate genuine interest that no amount of website tracking can replicate.

| Interaction | Points |
|---|---|
| Meeting attended | +30 |
| Replied to outreach | +20 |
| Referred by customer | +50 |

One override rule matters more than any point value: if someone requests a demo, a trial, or asks to talk to sales, route them immediately regardless of their score. Don't let a formula delay a hand-raiser.

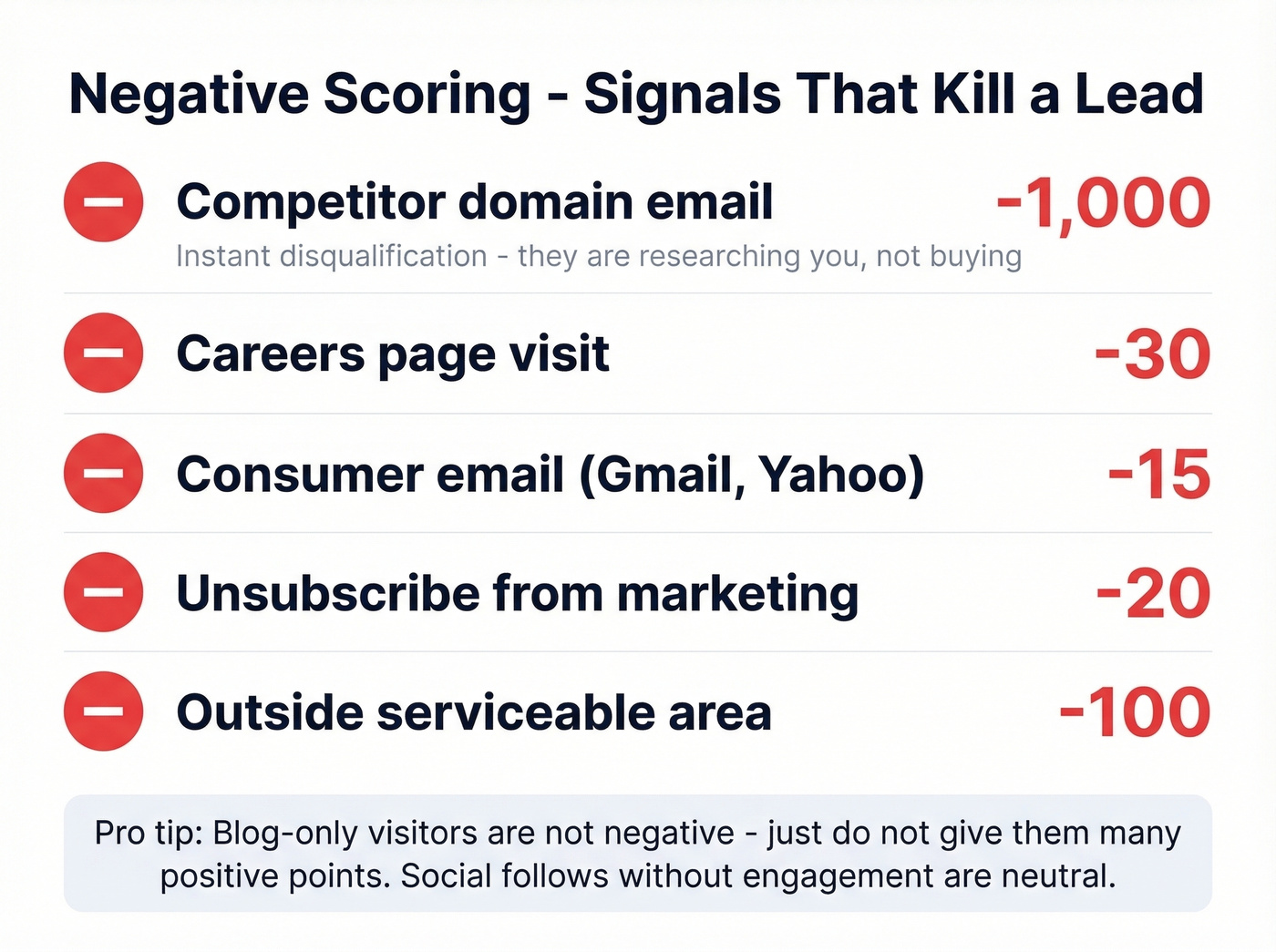

Negative Scoring and Disqualifiers

Penalize these aggressively:

- Competitor domain email: -1,000. They're researching you, not buying from you - instant disqualification.

- Careers page visit: -30. They want a job, not your product.

- Consumer email (Gmail, Yahoo): -15. Not always disqualifying, but a weak signal in B2B.

- Unsubscribe from marketing: -20.

Don't penalize blog-only visitors - they might be early-stage, so just don't give them many positive points. Social media follows without other engagement are neutral, not negative.

The competitor domain rule deserves emphasis. A -1,000 score effectively removes them from any routing logic. We've seen teams waste hours nurturing leads from competitor domains because nobody thought to build this filter. It takes five minutes to implement and saves hundreds of wasted touches.
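The penalties above could be sketched as a single function keyed on the email domain. The competitor domain shown is a placeholder and the parameter names are assumptions; the point values are the ones listed in this section.

```python
# Placeholder list: replace with your real competitors' email domains.
COMPETITOR_DOMAINS = {"rivalcorp.example"}
CONSUMER_DOMAINS = {"gmail.com", "yahoo.com"}


def negative_score(
    email: str,
    visited_careers: bool = False,
    unsubscribed: bool = False,
) -> int:
    """Return the total penalty (zero or negative) for a lead."""
    domain = email.rsplit("@", 1)[-1].lower()
    score = 0
    if domain in COMPETITOR_DOMAINS:
        score -= 1000  # large enough to drop out of any routing logic
    if domain in CONSUMER_DOMAINS:
        score -= 15
    if visited_careers:
        score -= 30
    if unsubscribed:
        score -= 20
    return score


print(negative_score("analyst@rivalcorp.example"))
print(negative_score("Jo@Gmail.com", visited_careers=True))
```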

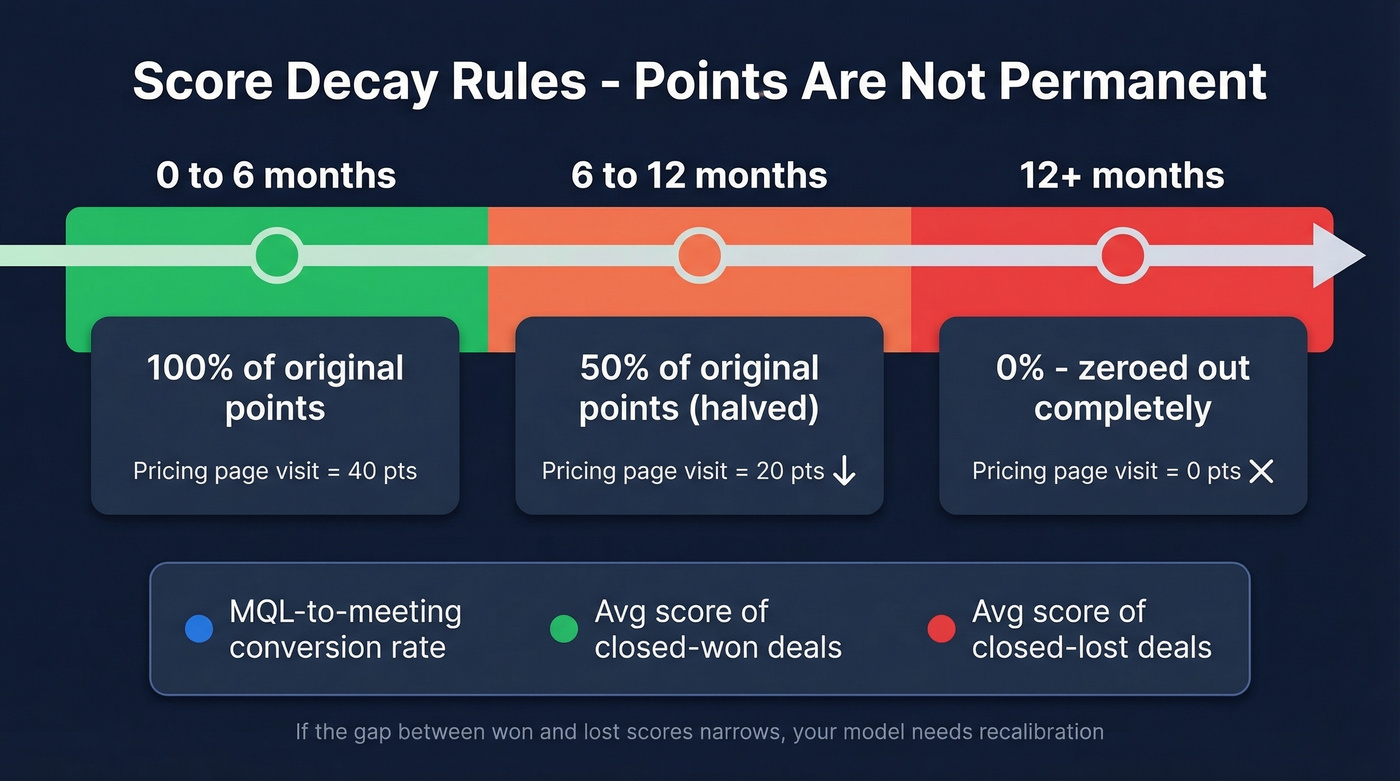

Decay Rules and Score Hygiene

Scores aren't permanent. A prospect who was highly engaged six months ago but has gone silent isn't the same lead they were. Without decay rules, your MQL list fills up with ghosts.

Halve all points for actions older than six months. Zero out anything past twelve months. Run this decay calculation automatically in your CRM or marketing automation workflows.
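A decay rule like this is a few lines of date arithmetic. In this sketch, six months is approximated as 182 days and twelve months as 365 days, which is an assumption you may want to adjust; `decayed_points` is a hypothetical helper, not a CRM feature.

```python
from datetime import date

SIX_MONTHS_DAYS = 182     # assumption: approximate six months
TWELVE_MONTHS_DAYS = 365  # assumption: approximate twelve months


def decayed_points(points: int, action_date: date, today: date) -> float:
    """Full value up to six months, half value up to twelve, then zero."""
    age_days = (today - action_date).days
    if age_days > TWELVE_MONTHS_DAYS:
        return 0.0
    if age_days > SIX_MONTHS_DAYS:
        return points / 2
    return float(points)


today = date(2025, 7, 1)
print(decayed_points(40, date(2025, 6, 1), today))   # recent pricing visit
print(decayed_points(40, date(2024, 12, 1), today))  # about seven months old
print(decayed_points(40, date(2024, 1, 1), today))   # older than a year
```

Applying decay per action, rather than to the lead's total, means a fresh demo request is never discounted because of older activity.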

Beyond decay, enforce behavioral caps and audit your model quarterly. Start with 5-7 signals spread across the four pillars and iterate based on closed-won data. If a signal doesn't correlate with revenue after two quarters, drop it. One team we studied increased their MQL-to-meeting rate by 13% simply by lowering the activity threshold and tightening title/seniority filters - proof that small calibration changes compound.

Track three metrics every quarter:

- MQL-to-meeting conversion rate

- Average score of closed-won deals

- Average score of closed-lost deals

If the gap between won and lost scores narrows, your model is losing discriminatory power and needs recalibration.
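The three quarterly metrics reduce to simple arithmetic. The helper names and the sample deal scores below are hypothetical; the 200 MQLs and 11 meetings reuse the figures from the opening scenario.

```python
from statistics import mean


def mql_to_meeting_rate(mqls: int, meetings: int) -> float:
    """Share of MQLs that turned into booked meetings."""
    return meetings / mqls if mqls else 0.0


def won_lost_gap(won_scores: list[int], lost_scores: list[int]) -> float:
    """Average closed-won score minus average closed-lost score."""
    return mean(won_scores) - mean(lost_scores)


# Illustrative deal scores only, not real benchmark data.
last_quarter = won_lost_gap([120, 100], [60, 40])
this_quarter = won_lost_gap([105, 95], [80, 70])

print(f"MQL-to-meeting: {mql_to_meeting_rate(200, 11):.1%}")
if this_quarter < last_quarter:
    print("Gap narrowed: recalibrate weights")
```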

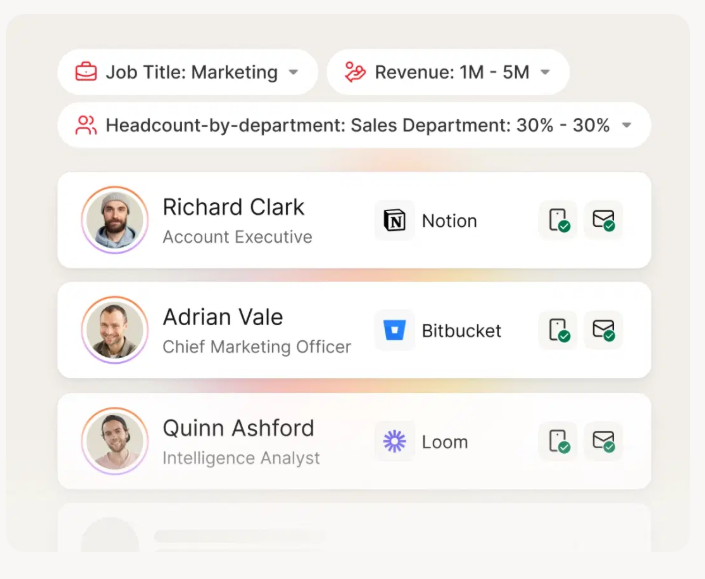

Fit scoring falls apart when your firmographic data is stale. Prospeo refreshes 300M+ profiles every 7 days - not the 6-week industry average - so your company size, revenue, and title fields stay accurate. 98% email accuracy means your scores reflect reality, not outdated CRM records.

Clean data in, accurate scores out. Start free with 75 verified emails.

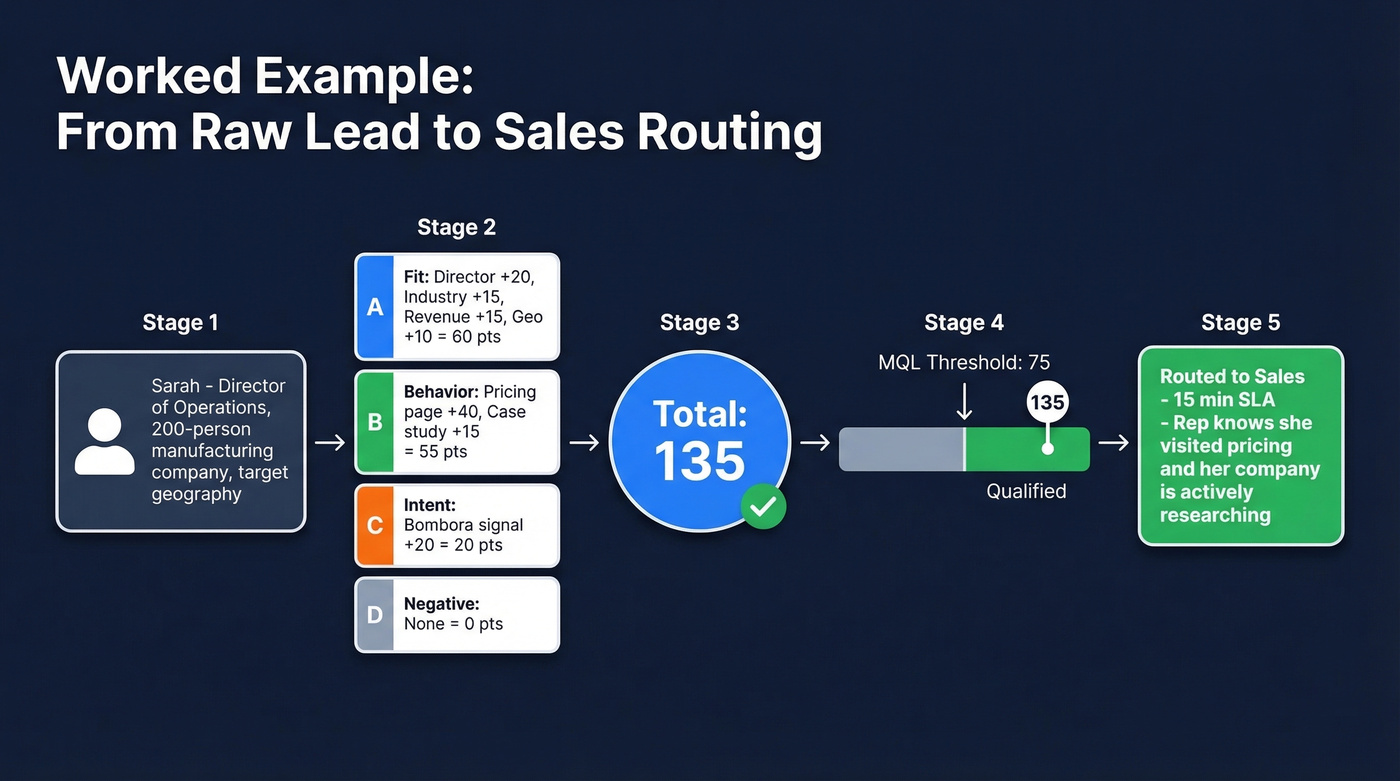

Worked Example: Raw Lead to Routing

Let's walk through a real scenario. Sarah is a Director of Operations at a 200-person manufacturing company in your target geography.

Fit: Director title (+20, a tier this example adds just below the +25 VP+ row) + target industry (+15) + revenue in range (+15) + within serviceable area (+10) = 60 points

Behavior: Visited pricing page (+40) + downloaded a case study (+15) = 55 points

Intent: Her company is showing Bombora intent signals for your category (+20) = 20 points

Interaction: None yet.

Negative: No penalties.

Total: 135 points. With an MQL threshold of 75, Sarah crossed it comfortably. She's routed to sales with a 15-minute SLA. The behavioral and intent data give the rep context for the conversation - she's been on the pricing page and her company is actively researching.

That's the model working as designed: the score tells reps who to call, and the underlying data tells them what to say.
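To check the arithmetic, Sarah's score can be laid out pillar by pillar. The dictionary keys are illustrative labels; the point values are the ones used in the walkthrough above.

```python
# Sarah's signals grouped by pillar, using the walkthrough's point values.
sarah = {
    "fit": {
        "director_title": 20,
        "target_industry": 15,
        "revenue_in_range": 15,
        "target_geography": 10,
    },
    "behavior": {"pricing_page_visit": 40, "case_study_download": 15},
    "intent": {"bombora_category_signal": 20},
    "interaction": {},
    "negative": {},
}

pillar_totals = {pillar: sum(signals.values()) for pillar, signals in sarah.items()}
total = sum(pillar_totals.values())

MQL_THRESHOLD = 75
print(pillar_totals)
print(total, total >= MQL_THRESHOLD)
```

Keeping the per-signal breakdown alongside the total is what lets the rep see why the lead scored the way it did.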

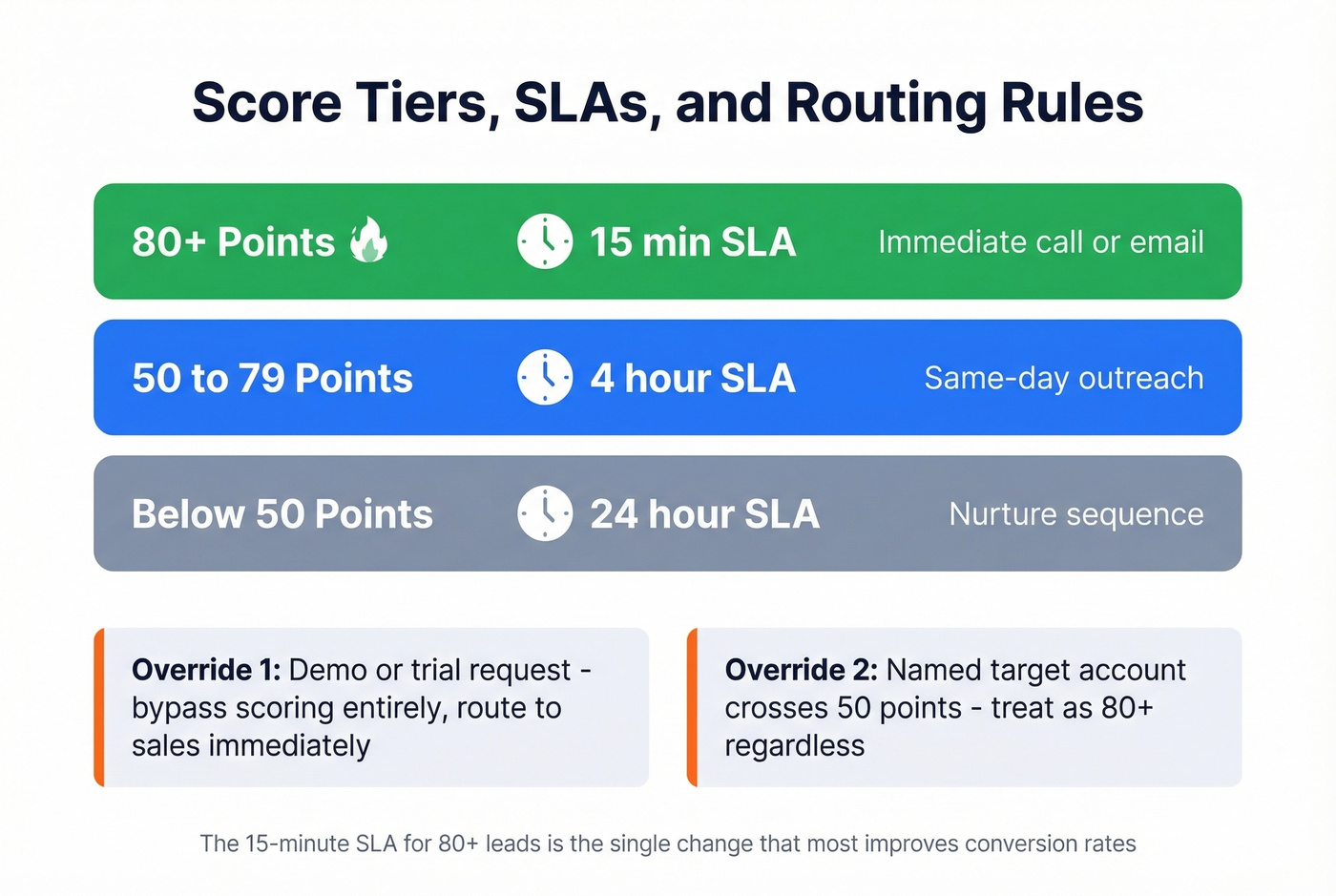

Thresholds, SLAs, and Routing

The MQL threshold isn't a magic number - it's driven by sales capacity. If your reps can handle 30 leads per week, set the threshold so roughly 30 leads cross it weekly. 75 points is a common starting point, but calibrate based on your volume.
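One simple way to back into a capacity-driven threshold is to rank a typical week's scores and read off the score of the Nth lead. This is a sketch of that idea, assuming you have a representative week of scores; `capacity_threshold` is a hypothetical helper, and ties at the cutoff can let a few extra leads through.

```python
def capacity_threshold(weekly_scores: list[int], weekly_capacity: int) -> int:
    """Score of the Nth-ranked lead, where N is what sales can work per week."""
    if weekly_capacity <= 0:
        raise ValueError("weekly_capacity must be positive")
    if not weekly_scores:
        raise ValueError("need at least one score")
    ranked = sorted(weekly_scores, reverse=True)
    # If there are fewer leads than capacity, every lead qualifies.
    cutoff_index = min(weekly_capacity, len(ranked)) - 1
    return ranked[cutoff_index]


# Illustrative week: six scored leads, reps can handle three.
scores = [95, 90, 80, 75, 60, 40]
threshold = capacity_threshold(scores, weekly_capacity=3)
print(threshold, sum(s >= threshold for s in scores))
```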

| Score Range | SLA | Action |
|---|---|---|
| 80+ | 15 minutes | Immediate call/email |
| 50-79 | 4 hours | Same-day outreach |
| Below 50 | 24 hours | Nurture sequence |

Two override rules: demo and trial requests bypass scoring entirely - route to sales immediately. And if a lead from a named target account crosses 50 points, treat them as 80+ regardless. Account context changes the math.
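The SLA tiers and both overrides fit in one routing function. This is a minimal sketch: the function and parameter names are assumptions, and "crosses 50 points" is read as a score of 50 or more.

```python
def route(
    score: int,
    requested_demo_or_trial: bool = False,
    named_target_account: bool = False,
) -> tuple[str, str]:
    """Return (SLA, action) for a lead, checking overrides before tiers."""
    # Override 1: hand-raisers skip scoring entirely.
    if requested_demo_or_trial:
        return ("immediate", "Route to sales immediately")
    # Override 2: named target accounts at 50+ are treated as 80+.
    if score >= 80 or (named_target_account and score >= 50):
        return ("15 minutes", "Immediate call/email")
    if score >= 50:
        return ("4 hours", "Same-day outreach")
    return ("24 hours", "Nurture sequence")


print(route(135))
print(route(60, named_target_account=True))
print(route(10, requested_demo_or_trial=True))
```

Checking the overrides first guarantees a low score can never delay a demo request.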

Here's the thing: in our experience, the 15-minute SLA for 80+ leads is the single change that most improves conversion rates. The SLA is where most scoring models die. Marketing builds the model, sets the thresholds, and hands leads over. Sales ignores them because there's no accountability. Tie SLAs to dashboards. Track response times. Make it visible. A score without enforcement is just a number in a database.

Account-Level Scoring

Lead scoring works well for single-threaded sales motions. But modern B2B deals involve 6-10 stakeholders, and individual lead scores can't capture buying committee dynamics. A single champion downloading every piece of content looks "hot" at the lead level, but if nobody else at the account is engaged, the deal isn't real.

Account-level scoring aggregates signals across the entire buying committee. Multiple decision-makers attending a demo, quiet research from several senior stakeholders, and cross-functional engagement all boost the account score in ways individual lead scores miss.
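The article doesn't prescribe an aggregation formula, so the sketch below is one assumed approach: sum positive lead scores per account and count how many contacts clear an engagement bar. The `engaged_at` cutoff of 50 and the account names are hypothetical.

```python
from collections import defaultdict


def summarize_accounts(
    leads: list[tuple[str, int]],
    engaged_at: int = 50,
) -> dict[str, dict[str, int]]:
    """Roll (account, lead_score) pairs up into per-account totals."""
    summary: dict[str, dict[str, int]] = defaultdict(
        lambda: {"total_score": 0, "engaged_contacts": 0}
    )
    for account, score in leads:
        summary[account]["total_score"] += max(score, 0)  # ignore penalties here
        if score >= engaged_at:
            summary[account]["engaged_contacts"] += 1
    return dict(summary)


leads = [
    ("acme", 150), ("acme", 5),                        # one lone champion
    ("globex", 60), ("globex", 55), ("globex", 70),    # broad committee
]
print(summarize_accounts(leads))
```

Tracking engaged contacts alongside the total is what separates a single enthusiastic champion from a genuinely engaged buying committee.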

For ABM, tier your accounts: Tier 1 (50-100 accounts) gets 1:1 treatment. Tier 2 (a few hundred) gets 1:few campaigns. Tier 3 gets 1:many programmatic coverage. Build lead-level scoring first if you're selling lower-ACV products or running PLG motions. Build account scoring first if you're selling six-figure deals into enterprise buying committees. Most teams eventually need both.

Manual vs. Predictive Scoring

Manual scoring - the rules-based model we've been building - is where every team should start. It's transparent, easy to debug, and doesn't require historical data. But once you've accumulated 6-12 months of clean conversion data, predictive scoring starts to pay off.

Predictive models trained on closed-won and closed-lost data typically improve conversion rates by 20-40%. Product-qualified leads scored based on actual product usage convert at 20-30%, roughly 2-3x the rate of traditional MQLs. If you're running a PLG motion, PQL scoring is the single highest-leverage investment you can make.

Predictive models are only as good as their training data. If your CRM records are stale, the model learns from noise - which is why a data layer with a weekly refresh cycle matters more for predictive scoring than for manual rules. Retrain quarterly. Data drifts, markets shift, and a model trained on last year's buyers won't perfectly predict next year's.

Skip scoring entirely if you're getting fewer than 50 inbound leads per month. A human can review 50 leads in 15 minutes. Scoring adds overhead without payoff at that volume. Start when volume forces prioritization - usually around 100+ leads per month.

Data Quality: The Foundation

Lead scoring without data quality is astrology. You're assigning points based on firmographic data that's six months old, email addresses that bounce, and job titles that changed two quarters ago. The model looks sophisticated. The outputs are fiction.

The industry average data refresh cycle is six weeks, so at most teams a meaningful share of scored leads rest on stale records. When 20% of your emails bounce and company revenue data is from last fiscal year, your "high-scoring" leads are a coin flip.

Your scoring model is only as good as the data underneath it. Fix the data layer, and the scores start meaning something.

You built decay rules and SLAs, but your reps still can't reach high-scoring leads because the emails bounce and the phone numbers are dead. Prospeo delivers 98% verified emails and 125M+ mobile numbers with a 30% pickup rate - so when a lead hits your MQL threshold, sales actually connects.

Stop wasting 80+ scores on contacts your reps can't reach.

Tools for Scoring and Enrichment

The tool you need depends on where scoring lives in your stack. CRM-native tools handle the scoring logic. Data platforms ensure the inputs are accurate. Intent and predictive platforms add advanced signal layers.

| Tool | Type | Best For | Starting Price |
|---|---|---|---|
| Prospeo | Data + intent | Clean scoring inputs | Free; ~$0.01/verified email |
| HubSpot | CRM + scoring | Rules + predictive | Free CRM; ~$800+/mo for scoring |
| Salesforce Einstein | CRM + AI | Enterprise teams | ~$25+/user/mo plus add-ons |
| Clay | Enrichment | Multi-source waterfall | Free; paid from $185/mo |
| Apollo | Sales intelligence | SMB outbound | Free; paid from $59/user/mo |
| 6sense | Intent + ABM | Enterprise accounts | ~$30k-$100k+/year |
| MadKudu | Predictive | PLG companies | ~$10k-$30k+/year |
| Pipedrive | CRM + add-on | Small team CRM | From ~$14/user/mo |

HubSpot is the default for most mid-market teams. Rules-based scoring is available on lower tiers, and predictive scoring unlocks at Professional/Enterprise. Everything lives in one system - scoring, routing, nurture sequences, and reporting. The tradeoff: HubSpot's AI scoring is a black box that's hard to debug when results look wrong.

Salesforce Einstein makes sense for enterprise teams already deep in the Salesforce ecosystem. It trains on your historical lead and opportunity data and surfaces scores directly on lead records. You'll need an Einstein license on top of your Salesforce subscription, and the model needs substantial closed-won data to produce meaningful predictions.

Clay excels at waterfall enrichment - pulling data from multiple providers to fill gaps that any single source misses. At $185/mo for paid plans, it's a strong complement to your CRM-native scoring tool when data completeness is the bottleneck. Apollo is the obvious starting point for SMB teams running outbound; the free tier gets you in the door, and paid plans from $59/user/mo include basic scoring alongside the prospecting database. It won't replace a dedicated model, but for teams under 50 leads/month who need something simple, it's enough.

6sense is enterprise intent and ABM scoring at $30k-$100k+/year - a serious platform for account-level scoring across large buying committees, but overkill for most teams under 500 employees. MadKudu targets PLG companies specifically, scoring based on product usage data. Pipedrive offers basic CRM scoring starting around $14/user/mo, suitable for small teams who want something simple without the HubSpot price tag.

FAQ

How many criteria should a scoring model use?

High-conversion companies average four criteria. Start with 5-7 signals across fit, behavior, intent, and interaction, then iterate quarterly based on closed-won data. More criteria means more maintenance and more opportunities for the model to drift without anyone noticing.

What's a good MQL threshold score?

Set it based on sales capacity - if reps can handle 30 leads per week, calibrate the threshold so roughly 30 leads cross it weekly. 75 points is a common starting point, but the right number is whatever keeps weekly MQL volume in line with your team's bandwidth.

How often should I recalibrate?

Quarterly. Backtest scores against closed-won and closed-lost deals every quarter. If high-scoring leads aren't converting at meaningfully higher rates than low-scoring leads, your weights are wrong. Adjust, re-deploy, and measure again.

Does scoring work for small teams?

If you get fewer than 50 inbound leads per month, a human can review them all in 15 minutes. Scoring adds process overhead without meaningful payoff at that volume. Start building a model when lead volume forces prioritization - usually around 100+ leads per month.