Competitive Intel: The Practitioner's Guide to CI That Actually Works

A RevOps lead we know ran a post-mortem on seven lost deals last quarter. Every single one had the same root cause: reps were pitching against a competitor's old pricing model - the competitor had restructured six weeks earlier, and nobody updated the battlecard. That's $340k in ARR, gone. Not because the product was worse. Because the competitive intel didn't reach the people who needed it.

That gap - between collecting intelligence and actually getting it into seller workflows - is where most CI programs die. Let's fix that.

What Is Competitive Intelligence?

The definition is straightforward: it's the systematic, legal process of gathering, analyzing, and distributing information about competitors, market dynamics, and buyer behavior to make better business decisions. Every major CI professional organization, including SCIP (Strategic and Competitive Intelligence Professionals), publishes an ethics code drawing a hard line between open-source intelligence and anything involving theft or deception. This isn't theoretical - Oracle's lawsuit over SAP's unauthorized access to Oracle materials produced a $1.3B jury verdict in 2010 before ultimately settling for a far smaller sum, and HP faced major legal fallout for pretexting in a boardroom leak investigation. The line matters.

A few distinctions worth nailing down. Market intelligence studies your total addressable market and buyer trends. Competitor intelligence focuses narrowly on what specific rivals are doing - their pricing, positioning, hiring patterns, and product moves. Business intelligence is internal: your own dashboards and revenue metrics. CI sits across all of these, but its core job is answering one question: why are we winning or losing deals, and what's the other side doing about it?

The best framing comes from the competitive enablement model: gathering, insight creation, distribution, action. If your CI program stops at "gathering," you don't have a program. You have a research hobby.

What You Need (Quick Version)

Most CI guides hand you a 6-step process and 15 tools. That's a reading list, not a CI program. Here's what actually matters:

- Weekly collection cadence. Not quarterly. Not "when someone asks." Weekly. Automate what you can, manually review what you can't.

- Single distribution channel reps actually check. Slack, CRM sidebar, whatever - pick one. If intel lives in a Google Doc, it's dead.

- Accurate data layer. Your battlecard is useless if the email address attached to the target account bounces.

The budget stack to start today: Feedly ($6/mo) for monitoring, Google Alerts (free) for news, SpyFu ($39/mo) for SEO intelligence, and Prospeo's free tier for verified contact data when you need to reach the accounts your CI identifies. Total: under $50/month.

Why Competitive Intel Matters in 2026

Competition has intensified. Nearly 7 in 10 deals are now head-to-head against a competitor per Crayon's State of CI report, and 55% of companies report seeing more competitive opportunities year over year. Sales teams rate their own competitive preparedness at 3.8 out of 10.

That gap has a dollar value. For a 50-person sales org, reps spend 8-12 hours per month each on ad hoc competitor research - that's 400-600 hours per month of duplicated effort, north of $400k/year in direct labor costs. The output is inconsistent, unverified, and rarely shared.
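The arithmetic behind those figures is easy to check. The sketch below is illustrative only: the function names are made up here, and backing an hourly cost out of the $400k figure is an assumption about how that number was derived, not something the cited data states.

```python
from typing import Tuple


def research_hours_per_month(reps: int, hours_low: float, hours_high: float) -> Tuple[float, float]:
    """Total ad hoc research hours per month across the team (low, high)."""
    return reps * hours_low, reps * hours_high


def implied_hourly_cost(annual_cost: float, monthly_hours: float) -> float:
    """Loaded hourly cost implied by an annual labor figure (assumed derivation)."""
    return annual_cost / (monthly_hours * 12)


low, high = research_hours_per_month(reps=50, hours_low=8, hours_high=12)
print(low, high)  # 400 600

# Hourly cost implied by $400k/year at each end of the range.
print(round(implied_hourly_cost(400_000, high), 2))  # 55.56
print(round(implied_hourly_cost(400_000, low), 2))   # 83.33
```

In other words, the $400k/year floor holds if a rep's loaded cost is roughly $56 to $83 per hour. Swap in your own headcount and cost to size the problem for your team.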

But the real cost isn't the labor. It's the reactive lag. A competitor ships a feature, and your reps find out from the prospect. That's exactly what happened in the $340k ARR loss - reps used outdated positioning for six weeks and lost seven deals, five of which the post-mortem judged winnable. Proactive intelligence programs compress that lag to days.

The CI tools market is projected to reach $1.46B by 2030 according to Mordor Intelligence, and CI team sizes have grown 24% year over year. This isn't a niche function anymore. It's infrastructure.

Here's the thing: if your average deal size is under five figures, you probably don't need a $30k CI platform. But you absolutely need a CI process. The difference between a $50/month stack with discipline and a $30k platform with no distribution is that the cheap one actually works.

Types of Competitive Intel

Not all CI serves the same purpose:

| Type | What It Covers | Example Use Case |
|---|---|---|
| Competitor intel | Rival strategies, pricing, positioning | Updating battlecards after a competitor rebrand |
| Product intel | Feature releases, roadmap signals, patents | Prioritizing your own roadmap based on gaps |
| Market intel | Market sizing, trends, buyer shifts | Entering a new vertical |
| Customer intel | Win/loss patterns, churn reasons, NPS | Refining ICP and messaging |
| Technological intel | Tech stack adoption, R&D signals | Identifying disruption risk |

Strategic CI answers "where is the market going?" - quarterly board-level analysis, scenario planning, and wargaming exercises. Tactical CI answers "how do we win this deal?" - battlecards, talk tracks, and objection handling. Most teams need both, but tactical CI delivers ROI faster because it touches active revenue. Start there.

The CI Process Step by Step

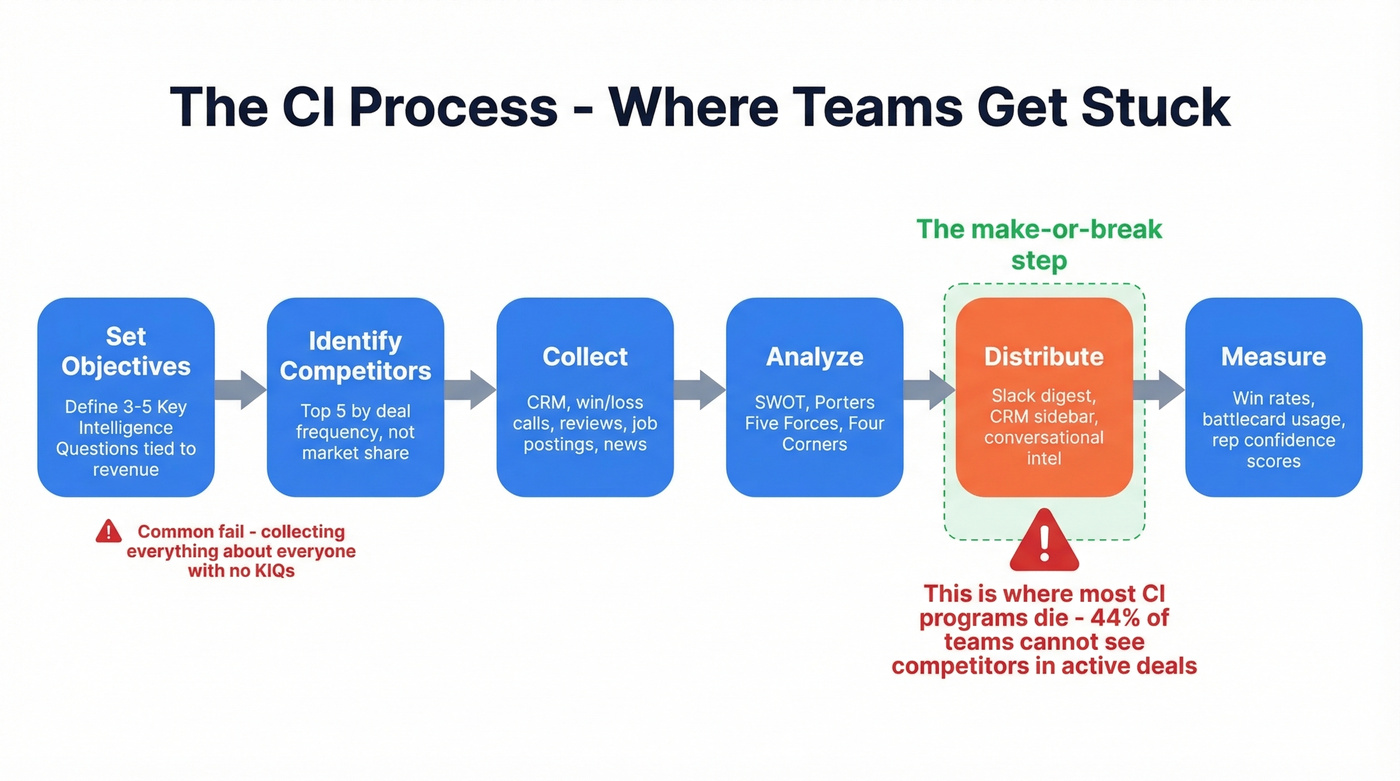

The standard framework has six steps: set objectives, identify competitors, collect, analyze, distribute, and measure. Here's where teams actually get stuck.

Set Objectives and Identify Competitors

The most common CI mistake is collecting everything about everyone. Programs without strategic direction - no Key Intelligence Questions, no alignment to business goals - produce mountains of data that nobody uses.

Start with three to five KIQs tied to revenue. "Why are we losing to Competitor X in mid-market?" is a KIQ. "What's Competitor X doing?" is not - it's a research project with no finish line. Identify your top five competitors by deal frequency, not by market share. The competitor you lose to most often matters more than the biggest name in the space.
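Ranking competitors by deal frequency is a simple count over closed opportunities. Here is a minimal sketch, assuming a hypothetical CRM export where each record has `competitor` and `outcome` fields (your field names will differ):

```python
from collections import Counter

# Hypothetical CRM export: one record per closed opportunity.
# Field names ("competitor", "outcome") are assumptions for illustration.
closed_deals = [
    {"competitor": "Acme", "outcome": "lost"},
    {"competitor": "Acme", "outcome": "won"},
    {"competitor": "Acme", "outcome": "lost"},
    {"competitor": "Globex", "outcome": "lost"},
    {"competitor": "Globex", "outcome": "won"},
    {"competitor": "Initech", "outcome": "lost"},
    {"competitor": None, "outcome": "won"},  # no competitor logged
]


def top_competitors(deals, n=5):
    """Rank competitors by how often they appear in deals, skipping blanks."""
    counts = Counter(d["competitor"] for d in deals if d.get("competitor"))
    return counts.most_common(n)


def most_losses(deals, n=5):
    """Rank competitors by the number of deals lost to them."""
    losses = Counter(
        d["competitor"]
        for d in deals
        if d.get("competitor") and d["outcome"] == "lost"
    )
    return losses.most_common(n)


print(top_competitors(closed_deals))  # [('Acme', 3), ('Globex', 2), ('Initech', 1)]
print(most_losses(closed_deals))
```

Running both rankings side by side shows whether the competitor you see most often is also the one you lose to most often. When they differ, the loss ranking is usually the better source of KIQs.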

Collect and Analyze

Internal sources are underrated. Your CRM, win/loss interviews, and recorded sales calls contain more actionable intelligence than most external monitoring tools. The problem is that CRM data is often dirty - wrong competitor fields, missing close reasons, duplicate accounts - which undermines every analysis you build on top of it.
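Before trusting any win/loss analysis, it helps to count how many records have the problems just listed. The following is a rough sketch, not a full data-quality tool: the field names, the `KNOWN_COMPETITORS` allow-list, and the name-matching rule for duplicates are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical allow-list of competitor names from the CRM picklist.
KNOWN_COMPETITORS = {"Acme", "Globex", "Initech"}


def crm_hygiene_report(opportunities):
    """Count the three data problems named above in a list of opportunity dicts."""
    closed = [o for o in opportunities if o["stage"].startswith("closed")]
    missing_close_reason = sum(1 for o in closed if not o.get("close_reason"))

    # A competitor value outside the allow-list is treated as a wrong entry.
    bad_competitor = sum(
        1 for o in opportunities
        if o.get("competitor") and o["competitor"] not in KNOWN_COMPETITORS
    )

    # Different account IDs whose names match after normalizing are likely duplicates.
    ids_by_name = defaultdict(set)
    for o in opportunities:
        ids_by_name[o["account_name"].strip().lower()].add(o["account_id"])
    duplicate_accounts = sorted(n for n, ids in ids_by_name.items() if len(ids) > 1)

    return {
        "closed_missing_close_reason": missing_close_reason,
        "unrecognized_competitor": bad_competitor,
        "likely_duplicate_accounts": duplicate_accounts,
    }


sample = [
    {"account_id": "A1", "account_name": "Northwind", "stage": "closed_lost",
     "competitor": "Acme", "close_reason": ""},
    {"account_id": "A2", "account_name": "northwind ", "stage": "open",
     "competitor": "acme corp", "close_reason": None},
    {"account_id": "A3", "account_name": "Contoso", "stage": "closed_won",
     "competitor": "Globex", "close_reason": "price"},
]
print(crm_hygiene_report(sample))
```

Tracking these three counts week over week gives you a simple trend line for whether CRM discipline is improving.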

External sources fill the gaps: competitor websites, G2 reviews, SEC filings, job postings (a hiring surge in a specific function signals strategic investment), news, and social channels. Don't overlook dark data either - support tickets, Slack conversations, and email threads contain competitive signals that never make it into formal reports.

For frameworks, match the tool to the question. SWOT works for quick competitive snapshots before a deal. Porter's Five Forces helps when evaluating market entry or positioning shifts. Four Corners analysis - a competitor's drivers, management assumptions, strategy, and capabilities - is the best framework for predicting what a rival will do next.

Who Owns CI?

Product marketing owns CI in 78.6% of surveyed companies. Usually it's a single person doing CI as 20% of their job alongside positioning, messaging, and launch work.

If that's you, don't try to boil the ocean. Focus on the top three competitors your reps actually face in deals, automate monitoring, and spend your limited hours on analysis and distribution rather than collection.

Why CI Fails: The Distribution Problem

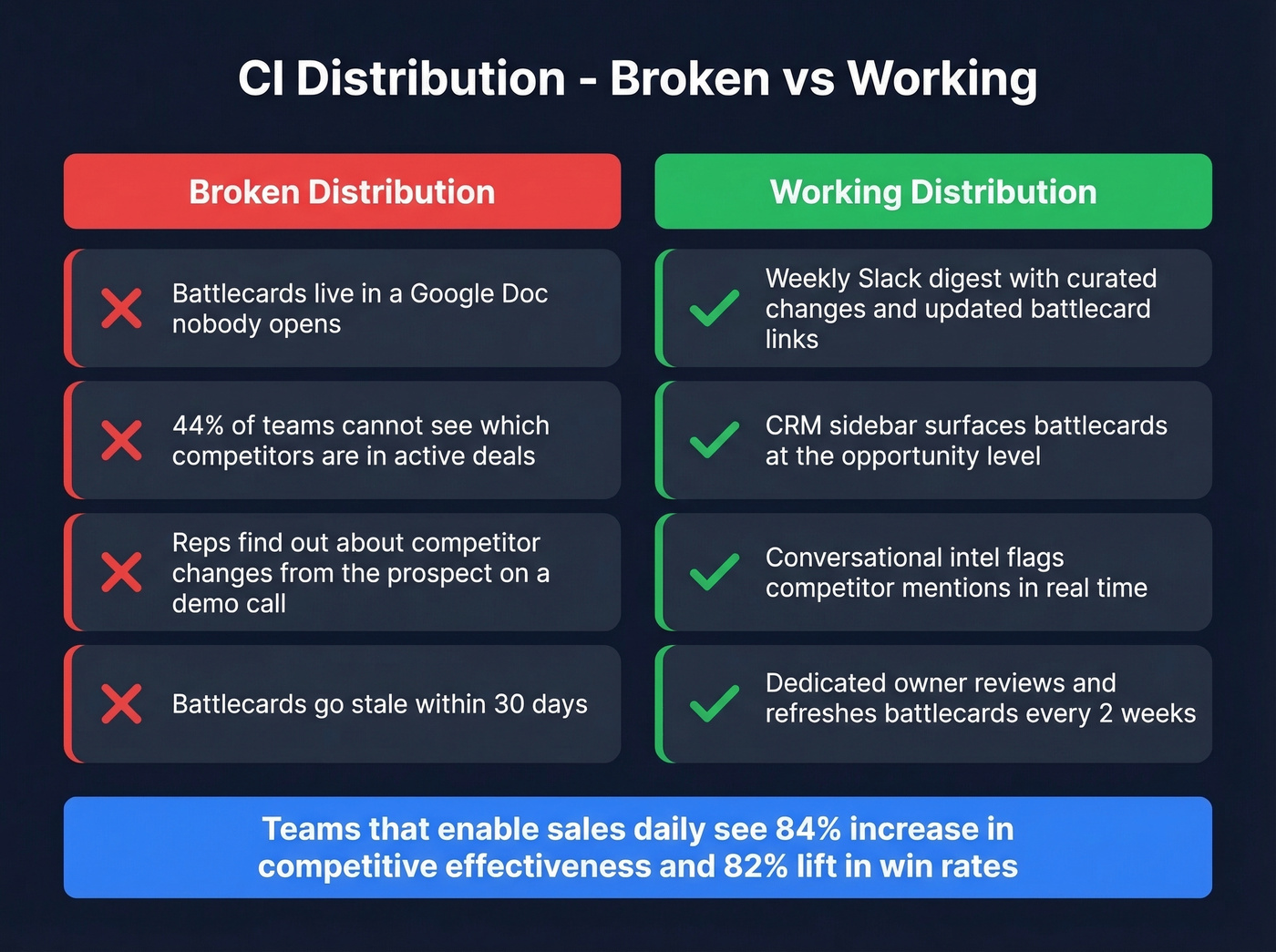

Here's the central thesis of this entire guide: CI doesn't fail at collection. It fails at distribution.

The data is damning. 44% of teams can't see which competitors are in active deals. Only 48% of compete programs have an executive sponsor from Sales. Battlecards go stale within 30 days. And when reps can't find current intel in the tool they're already using, they wing it - or worse, they pitch against a competitor's old positioning and lose.

The flip side is encouraging. Teams that enable sales daily see an 84% increase in competitive effectiveness. Using conversation intelligence to track competitor mentions in calls lifts win rates by 82%. And 71% of companies using battlecards report improved win rates - but only when those battlecards are accessible, current, and embedded in the workflow.

Practical fixes that work:

- Slack channel with a weekly digest. Not a firehose - a curated summary of what changed this week, with links to updated battlecards.

- CRM integration. Surface battlecards in Salesforce or HubSpot at the opportunity level, triggered by the competitor field.

- Conversation intelligence. Tools like Gong flag competitor mentions in calls, giving CI teams real-time signal on which competitors are showing up and what reps are saying about them.
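The weekly digest from the first fix can be assembled from a simple change log. This is a sketch under assumptions: the change-log fields and URLs are hypothetical, and the function only builds a payload with a `text` field (the shape Slack incoming webhooks accept). Actually posting it is left out.

```python
import json
from datetime import date, timedelta


def build_weekly_digest(changes, today, max_items=5):
    """Return a {"text": ...} payload summarizing changes from the last 7 days."""
    cutoff = today - timedelta(days=7)
    recent = sorted(
        (c for c in changes if cutoff < c["date"] <= today),
        key=lambda c: c["date"],
        reverse=True,
    )
    lines = [f"Competitive digest, week ending {today.isoformat()}"]
    # Cap the list so the digest stays a curated summary, not a firehose.
    for c in recent[:max_items]:
        lines.append(f"- {c['competitor']}: {c['summary']} ({c['battlecard_url']})")
    if not recent:
        lines.append("- No tracked changes this week.")
    return {"text": "\n".join(lines)}


# Hypothetical change log; the URLs are placeholders.
changes = [
    {"date": date(2026, 1, 12), "competitor": "Acme",
     "summary": "moved to usage-based pricing",
     "battlecard_url": "https://example.com/battlecards/acme"},
    {"date": date(2025, 12, 1), "competitor": "Globex",
     "summary": "launched a new tier",
     "battlecard_url": "https://example.com/battlecards/globex"},
]
payload = build_weekly_digest(changes, today=date(2026, 1, 16))
print(json.dumps(payload, indent=2))
```

The `max_items` cap is the design choice that matters: a digest that lists everything trains reps to ignore it.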

The scenario you're trying to prevent: your competitor ships a feature, and your reps find out from the prospect on a demo call. If that's happening, your distribution is broken.

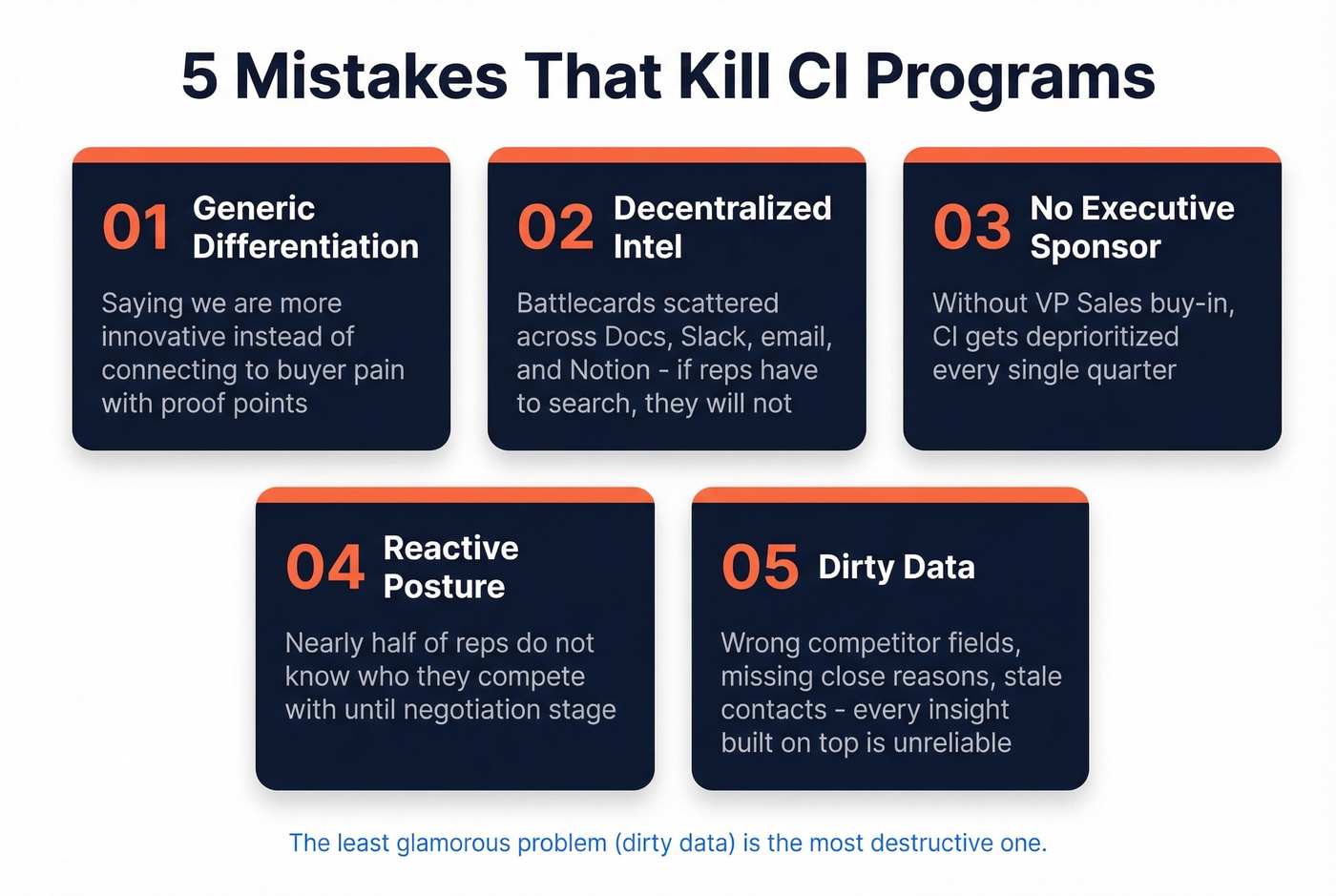

Mistakes That Kill CI Programs

We've seen the same five failure modes kill programs repeatedly, regardless of company size or tool budget.

Generic differentiation

Battlecards that say "we're more innovative" or list 47 feature comparisons without connecting to buyer pain. Start from value propositions and proof points, not feature matrices.

Decentralized intel

Battlecards scattered across Google Docs, Slack threads, email chains, and someone's Notion. If reps have to search for intel, they won't. One URL, one Slack channel, one CRM sidebar widget - pick a single source of truth.

No executive sponsor

Without VP Sales buy-in, CI is a side project that gets deprioritized every quarter. The programs that work have a sales leader who actively champions battlecard usage and holds reps accountable for logging competitor data in the CRM.

Reactive posture

In a survey of 300+ revenue leaders, nearly half said reps don't know who they're competing with until the negotiation stage, and another 13% said reps don't know even after the deal closes. If CI only activates when a rep panics mid-deal, you've already lost the positioning battle.

Dirty data

Your CRM is the foundation of every CI analysis - win/loss rates by competitor, deal velocity, pricing pressure. If competitor fields are wrong, close reasons are missing, and contact data is stale, every insight you build on top is unreliable. This is the least glamorous CI problem and the most destructive one.

Real CI Results

These are vendor-reported outcomes - marketing testimonials, not independent audits. But the pattern is consistent enough to be directional, and the specificity of the numbers makes them useful benchmarks.

Win rate improvements tell the clearest story. Affinity moved from a 16% competitive win rate to 45% after implementing structured CI. Allego doubled its overall win rate in six months and tripled its win rate against its top competitor, to 95%. Salsify saw a 22% increase in competitive win rate.

On the revenue side, Cognism attributed $6M in influenced revenue in under one year to its market intelligence function. Alteryx drove a 40% increase in battlecard adoption by integrating CI directly into seller workflows rather than publishing static documents - a sign that distribution, not content quality, was the bottleneck.

A cybersecurity company documented in a GlobeNewsWire case study ran a full CI engagement - competitor mapping, pricing analysis, expert interviews - and used the output to refine positioning and identify whitespace. The engagement structure is a solid template for teams building their first formal CI program.

The common thread: CI worked when it was distributed into workflows, not when it sat in a report.

CI Tools and What They Cost

All-in-One CI Platforms

| Tool | Starting Price | G2 Rating | Best For |
|---|---|---|---|
| Klue | ~$20k-$40k/yr | 4.7/5 | Enterprise battlecard management |
| Crayon | ~$20k-$40k/yr | 4.6/5 | Real-time competitive monitoring |
| Contify | ~$15k-$30k/yr | 4.5/5 | News and media intelligence |
| Kompyte | From $300/yr | - | Budget-conscious teams |

SEO, Traffic & Research

| Tool | Starting Price | Best For |
|---|---|---|
| Semrush | From $139/mo | Full SEO competitive analysis |
| SpyFu | From $39/mo | Paid search and keyword intel |
| SimilarWeb | From $125/mo | Traffic and engagement benchmarks |
| Feedly | From $6/mo | RSS monitoring and alerts |
| AlphaSense | ~$24k/user/yr | Financial and earnings intel |

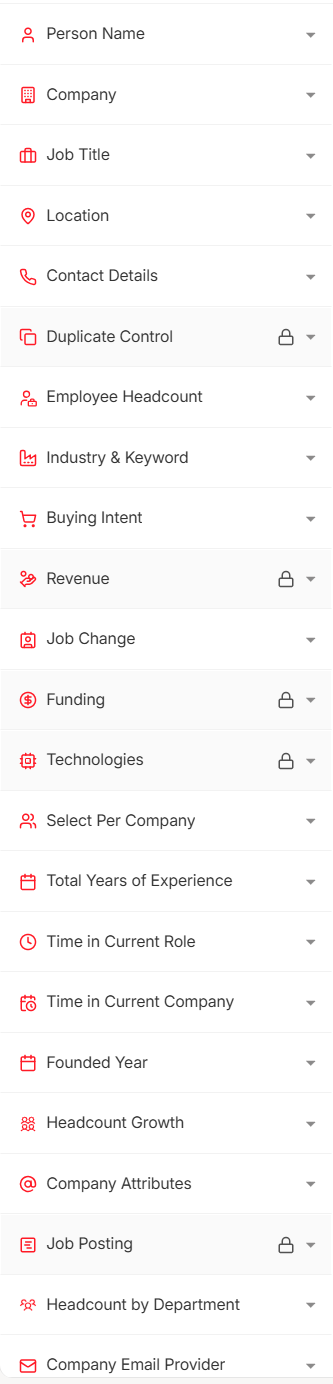

B2B Data & Contact Intelligence

| Tool | Starting Price | Best For |
|---|---|---|
| Prospeo | Free; ~$0.01/email | Verified contact data, 98% email accuracy |
| ZoomInfo | From ~$15k/yr | All-in-one sales intelligence |
| 6sense | ~$55k/yr median | Intent-based ABM |
| Gong | ~$1,600/user/yr + platform | Conversation intelligence |

Enterprise CI platforms like Klue and Crayon are excellent - real-time monitoring, battlecard management, Salesforce integration, the works. But at $20k-$40k/year, they're built for companies with dedicated CI headcount and enterprise procurement budgets. Skip them if you're a one-person CI operation inside product marketing.

For teams just getting started, the budget stack covers 80% of what you need: Feedly for competitor monitoring, Google Alerts for news triggers, SpyFu for SEO and paid search intelligence, and a solid data layer for contact verification. When your CI identifies a target account, you need verified contact data to actually reach the decision-makers - stale emails and wrong phone numbers kill outreach before it starts. The consensus on r/sales is pretty clear: data quality is the single biggest variable in outbound success, and it's the one most teams underinvest in relative to their tool spend on everything else.

The fact that most CI platforms won't publish pricing tells you they're built for enterprise procurement cycles, not for the product marketer doing CI as 20% of their job. Start with the budget stack and upgrade when you've proven the ROI.

AI and the Future of CI

AI adoption in competitive intelligence is up 76% year over year, with 60% of CI teams now using AI tools daily. The practical impact is real: teams report 85-95% reduction in manual research time for collection and monitoring tasks, and CI automation is driving 30-40% improvements in competitive win rates by shifting from quarterly battlecard updates to continuous accuracy.

The biggest untapped opportunity is dark data. Roughly 90% of organizational data is unstructured and underused - call recordings, support tickets, Slack conversations, email threads. AI is starting to mine these for competitive signals that never made it into formal CI reports. Gong's conversation intelligence is the most mature example, automatically flagging competitor mentions across hundreds of sales calls and surfacing patterns no human could track manually.

Look - AI makes the collection step nearly free. Which means the distribution and data quality problems we've been discussing become even more important. You can monitor 50 competitors in real time, but if you still can't get updated battlecards into your CRM sidebar, you've automated the wrong part of the process. The human work - analysis, strategic interpretation, distribution design - is where CI programs win or lose. AI handles the monitoring. You handle the "so what."

Measuring CI Impact

If you can't measure it, you can't defend the budget.

Win rate by competitor is the north star metric. Break it down by segment and track it over time, not just in aggregate. Battlecard adoption rate tells you whether distribution is working - if fewer than half your reps view battlecards before competitive deals, fix that before building new content. Revenue influenced by CI connects the program to pipeline: tag deals where CI assets were used and measure closed-won revenue.
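All three metrics fall out of the same closed-deal records. A minimal sketch, assuming hypothetical fields (`competitor`, `won`, `battlecard_viewed`, `amount`) and measuring adoption per deal rather than per rep, which is only one of several reasonable definitions:

```python
from collections import defaultdict


def win_rate_by_competitor(deals):
    """Map each competitor to won / total over closed competitive deals."""
    tally = defaultdict(lambda: [0, 0])  # competitor -> [won, total]
    for d in deals:
        if not d.get("competitor"):
            continue  # skip deals with no competitor logged
        tally[d["competitor"]][1] += 1
        if d["won"]:
            tally[d["competitor"]][0] += 1
    return {c: won / total for c, (won, total) in tally.items()}


def battlecard_adoption_rate(deals):
    """Share of competitive deals where the battlecard was viewed."""
    competitive = [d for d in deals if d.get("competitor")]
    if not competitive:
        return 0.0
    viewed = sum(1 for d in competitive if d["battlecard_viewed"])
    return viewed / len(competitive)


def ci_influenced_revenue(deals):
    """Closed-won revenue from deals tagged as having used a CI asset."""
    return sum(d["amount"] for d in deals if d["won"] and d["battlecard_viewed"])


# Hypothetical closed deals for illustration.
deals = [
    {"competitor": "Acme", "won": True, "battlecard_viewed": True, "amount": 40_000},
    {"competitor": "Acme", "won": False, "battlecard_viewed": False, "amount": 25_000},
    {"competitor": "Globex", "won": False, "battlecard_viewed": True, "amount": 30_000},
    {"competitor": "Globex", "won": True, "battlecard_viewed": True, "amount": 20_000},
    {"competitor": None, "won": True, "battlecard_viewed": False, "amount": 15_000},
]
print(win_rate_by_competitor(deals))    # {'Acme': 0.5, 'Globex': 0.5}
print(battlecard_adoption_rate(deals))  # 0.75
print(ci_influenced_revenue(deals))     # 60000
```

Note that deals with no competitor logged drop out of the first two metrics entirely, which is why competitor-field hygiene has to come before measurement.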

Beyond those three, track time saved (hours of manual research eliminated per month - this justifies tool spend), rep preparedness scores (survey quarterly; if you're above 3.8 out of 10, you're already beating the median), and deal-level competitor visibility. 44% of teams can't see which competitors are in which deals. Fix that first.

The measurement itself creates accountability. Once sales leadership sees win rates by competitor on a dashboard, they start asking why certain competitors are winning - and that's when CI gets executive sponsorship.

FAQ

Is competitive intelligence legal?

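Yes, as long as it sticks to information you're entitled to use: publicly available sources such as websites, regulatory filings, reviews, news, job postings, and published research, plus your own internal data like CRM records and win/loss interviews. SCIP's ethics code governs acceptable practices. The consequences for crossing the line are severe: Oracle's suit against SAP, which produced a $1.3B jury verdict before settling for far less, is the most cited example.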

What's the difference between CI and market research?

Market research studies your customers and total addressable market. Competitive intelligence studies your competitors' strategies, positioning, pricing, and tactical moves. They overlap in informing go-to-market decisions, but CI focuses specifically on competitive dynamics - who you're losing deals to, why, and what they'll do next.

How often should battlecards be updated?

At minimum monthly, though the best programs update continuously as changes are detected. Battlecards go stale within 30 days. Automated monitoring tools catch competitor changes in real time, and the most effective teams push updates via Slack or CRM integrations the same day.
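A staleness check against the 30-day threshold takes only a few lines. This sketch assumes you can export each battlecard's last-updated date; the card names and dates are hypothetical.

```python
from datetime import date

STALE_AFTER_DAYS = 30  # threshold taken from the 30-day figure above


def stale_battlecards(last_updated, today):
    """Return names of battlecards not updated within STALE_AFTER_DAYS."""
    return sorted(
        name
        for name, updated in last_updated.items()
        if (today - updated).days > STALE_AFTER_DAYS
    )


# Hypothetical last-updated dates per battlecard.
cards = {
    "Acme": date(2026, 1, 2),
    "Globex": date(2025, 11, 20),
}
print(stale_battlecards(cards, today=date(2026, 1, 16)))  # ['Globex']
```

Run it on the same weekly cadence as the digest so stale cards get flagged before a rep opens one.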

What's the cheapest way to start a CI program?

Feedly ($6/mo) for monitoring, Google Alerts (free) for news, SpyFu ($39/mo) for SEO intelligence, and a free-tier data tool for contact verification on target accounts. Total: under $50/month. Add a weekly Slack digest summarizing what changed, and you've got a functional program covering collection, monitoring, and distribution.

What does a CI team look like?

Product marketing owns CI in 78.6% of companies, typically as one person handling it alongside other PMM responsibilities. Dedicated CI teams are growing 24% year over year, but most programs are still one PMM with a tool stack, a Slack channel, and a weekly cadence. Cross-functional input from sales, CS, and product makes that one person dramatically more effective.

That $340k in lost ARR we opened with? It wasn't a data problem. It wasn't a tools problem. It was a six-week gap between a competitor's move and updated intel reaching the people who needed it. Every decision in your competitive intel program - what to collect, where to store it, how to distribute it - should be measured against one question: does this compress the lag? If it does, invest. If it doesn't, cut it.