Ethical Data Collection: Principles, Billion-Dollar Fines, and What to Actually Do

"I'd love comprehensive personal tracking, but I don't trust any third party to handle it responsibly." That's a real post from r/PrivacyGuides, and it captures the tension around ethical data collection perfectly. People want data-driven products. They just don't believe companies will handle their information well. When Meta gets hit with a €1.2 billion GDPR fine for moving EU data to the US without safeguards, it's hard to blame them.

The gap between what companies collect and what they should collect is a billion-dollar problem - literally. 81% of consumers say the risks of company data collection outweigh the benefits, and 37% have terminated relationships with companies over data concerns. Those aren't hypothetical losses. That's revenue walking out the door.

Quick Version

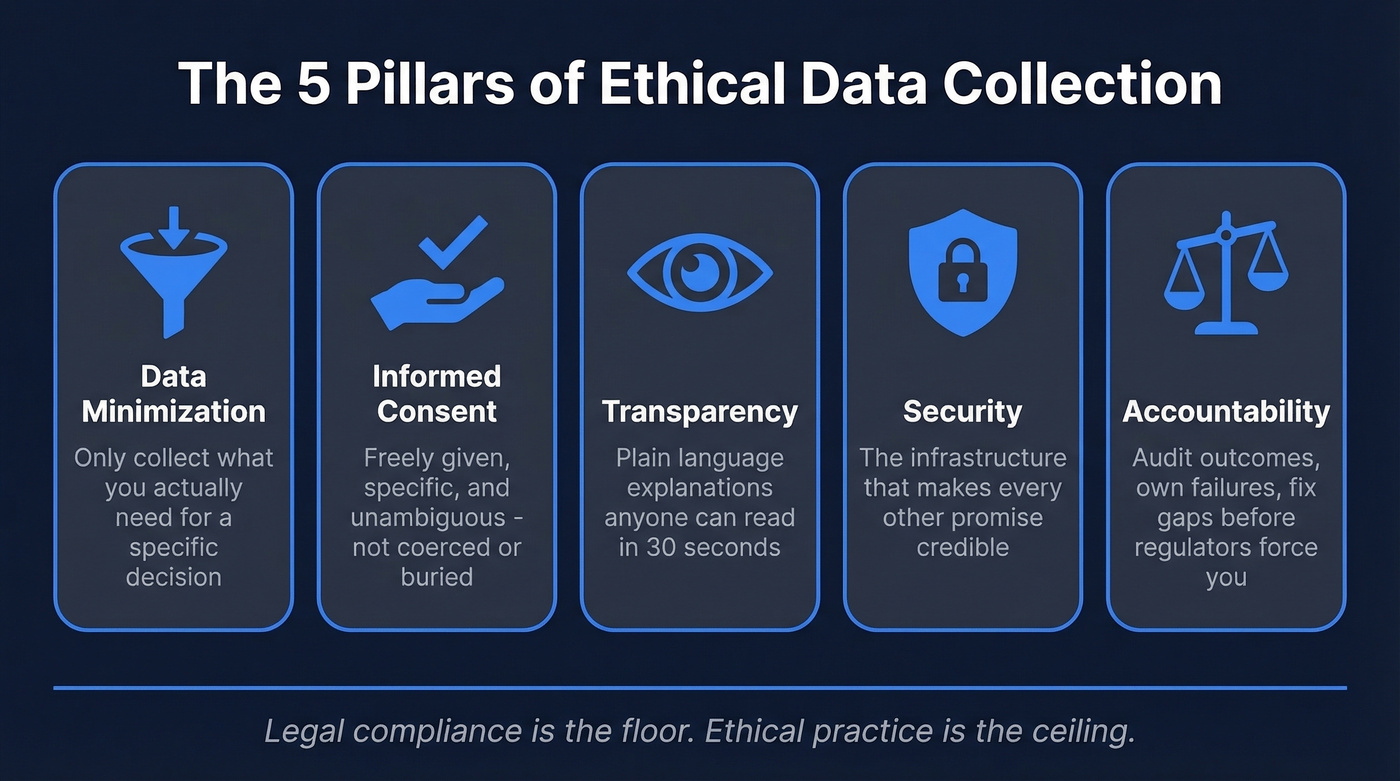

Responsible data handling comes down to five commitments:

- Collect the minimum. If you don't need it, don't ask for it.

- Get real consent. Not a pre-ticked box. Not a dark pattern. Actual informed agreement.

- Stay transparent. Tell people what you're collecting, why, and for how long.

- Protect what you store. Security isn't optional - it's the price of admission.

- Accept accountability when you fail. Own breaches, fix gaps, don't hide behind legalese.

What It Actually Means

Legal compliance and ethical practice aren't the same thing. GDPR, CCPA, and their global cousins set a floor - the minimum standard you can't fall below without getting fined. Ethical data collection is the ceiling: the choices you make when the law gives you room to maneuver but your users' trust is on the line.

GDPR Article 5 lays out the baseline principles - lawfulness, fairness and transparency, purpose limitation, data minimization, accuracy, storage limitation, integrity and confidentiality, and accountability. Most companies can recite these. Far fewer operationalize them beyond a checkbox in their compliance tracker.

Here's the thing: most companies that claim to be "GDPR compliant" are really just "GDPR-fine-avoidant." They meet the letter of the law while violating its spirit daily. The companies that earn trust - and keep customers - treat these principles as design constraints, not legal obligations to be minimized.

Core Principles With Real Examples

Data Minimization

Collect only what you need. ING Bank Slaski was fined €4.4 million for scanning identity documents without sufficient justification. Poland's Poczta Polska got hit with ~$7.4 million for processing voter PII it had no business holding. Both violations boil down to the same mistake: collecting data because you can, not because you need it.

Before adding a field to any form, ask: what decision does this data point enable? If you can't answer clearly, don't collect it. A healthcare provider doesn't need your browsing history. A recruitment AI doesn't need your ethnicity. "Minimum viable collection" should govern every form, workflow, and API call.
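One way to operationalize that question is to make every field carry its answer. The sketch below assumes a hypothetical `FieldSpec` schema in which each form field declares the decision it enables, and `audit_form` flags any field whose purpose is blank. It's an illustration, not a compliance tool: a filled-in purpose still needs a human to judge whether it's a real one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """A form field plus the decision it enables (hypothetical schema)."""
    name: str
    purpose: str  # empty or whitespace-only means no stated reason


def audit_form(fields: list[FieldSpec]) -> list[str]:
    """Return the names of fields with no stated purpose: candidates to cut."""
    return [f.name for f in fields if not f.purpose.strip()]


signup_form = [
    FieldSpec("email", "send the account confirmation link"),
    FieldSpec("company", "route the lead to the right sales region"),
    FieldSpec("date_of_birth", ""),  # collected "just in case"
    FieldSpec("ethnicity", "   "),   # whitespace doesn't count as a reason
]

print(audit_form(signup_form))  # ['date_of_birth', 'ethnicity']
```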

Informed Consent

Under GDPR, pre-ticked boxes and inactivity don't count as valid consent. But the consent problem runs deeper than checkbox formatting. When consent becomes a condition of service - agree to everything or you can't use the product - it's coerced consent. The value of that "yes" is close to zero.

Cookie banners that take seven clicks to reject but one click to accept are dark patterns. Regulators know it. Valid consent must be freely given, specific, informed, and unambiguous. Most consent UX fails at least two of those criteria.
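Those criteria map fairly directly onto how a consent record can be stored. A minimal sketch, assuming a hypothetical `ConsentRecord` model: consent is tracked per purpose (specific), only an explicit user action sets it (no pre-ticked defaults, no consent from inactivity), withdrawing uses the same single call as granting, and each choice is logged against the notice version the user saw. "Freely given" is a product decision that code alone can't enforce.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    """Per-purpose consent for one user (hypothetical model)."""
    user_id: str
    notice_version: str  # which notice the user was shown when choosing
    granted: dict[str, bool] = field(default_factory=dict)
    history: list[tuple[str, str, bool, datetime]] = field(default_factory=list)

    def record_choice(self, purpose: str, value: bool) -> None:
        """Store an explicit user action for one purpose and keep an audit trail."""
        self.granted[purpose] = value
        self.history.append(
            (self.notice_version, purpose, value, datetime.now(timezone.utc))
        )

    def allows(self, purpose: str) -> bool:
        """No recorded choice means no consent: silence is never a 'yes'."""
        return self.granted.get(purpose, False)


consent = ConsentRecord(user_id="u-123", notice_version="v3")
consent.record_choice("analytics", True)
print(consent.allows("analytics"))     # True
print(consent.allows("ad_targeting"))  # False: never asked, never granted
consent.record_choice("analytics", False)  # withdrawal takes one call too
print(consent.allows("analytics"))     # False
```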

Transparency

You don't need a 50-page privacy policy. You need a one-paragraph plain-language explanation of what you collect, why, and what happens to it. The legal team will want the 50 pages anyway - fine. But the user-facing explanation should be readable in 30 seconds.

If your privacy notice requires a law degree to parse, you've failed the transparency test.
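One way to keep the short explanation honest is to generate it from the same inventory that drives collection, so the notice can't quietly drift from practice. A sketch, assuming a hypothetical `DataItem` inventory with what / why / how-long fields; the wording template is illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataItem:
    """One entry in a hypothetical data inventory."""
    what: str
    why: str
    retention_days: int


def plain_notice(items: list[DataItem]) -> str:
    """Render a short what / why / how-long summary, one line per data item."""
    lines = ["We collect:"]
    for item in items:
        lines.append(f"- {item.what}, to {item.why}, kept for {item.retention_days} days.")
    return "\n".join(lines)


inventory = [
    DataItem("your email address", "send receipts and account notices", 730),
    DataItem("your order history", "handle returns and refunds", 365),
]
print(plain_notice(inventory))
```

Because the notice is rendered from the inventory, adding a new data point without a stated purpose and retention period becomes visible immediately.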

Security as Foundation

Capita's £14 million ICO fine tells the whole story. A ransomware attack exposed data belonging to roughly 7 million people, and the cause wasn't a sophisticated zero-day exploit but inadequate protections. Vodafone Germany was fined €45 million in total: €15 million for insufficient oversight of its partner agencies and €30 million for authentication gaps in its MyVodafone portal that allowed unauthorized eSIM access.

Security isn't a feature you bolt on after launch. It's the infrastructure that makes every other ethical commitment credible. Without it, consent and transparency are just promises you can't keep.
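The Vodafone case turned on authentication around eSIM access. One common mitigation is step-up authentication: routine actions need only a login, while high-impact ones such as an eSIM swap also require a recently verified second factor. The sketch below assumes a hypothetical `Session` model, hypothetical action names, and a five-minute window; it is not a description of Vodafone's systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical policy values: tune both to your own risk assessment.
SENSITIVE_ACTIONS = {"esim_swap", "change_email", "export_data"}
STEP_UP_WINDOW = timedelta(minutes=5)


@dataclass
class Session:
    user_id: str
    password_ok: bool
    mfa_verified_at: Optional[datetime] = None  # when a second factor last succeeded


def may_perform(session: Session, action: str, now: datetime) -> bool:
    """Routine actions need a login; sensitive ones also need a recent second factor."""
    if not session.password_ok:
        return False
    if action not in SENSITIVE_ACTIONS:
        return True
    if session.mfa_verified_at is None:
        return False
    return now - session.mfa_verified_at <= STEP_UP_WINDOW


now = datetime(2025, 6, 1, 12, 0, tzinfo=timezone.utc)
session = Session("u-123", password_ok=True)
print(may_perform(session, "view_bill", now))  # True
print(may_perform(session, "esim_swap", now))  # False: no second factor yet
session.mfa_verified_at = now - timedelta(minutes=2)
print(may_perform(session, "esim_swap", now))  # True
```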

Fairness and Accountability

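Latanya Sweeney's research found that, on the more heavily trafficked of the two sites she studied, searches for names more often given to Black babies were 25% more likely to trigger an ad suggesting an arrest record ("Arrested?"). The algorithm wasn't explicitly racist. The most likely culprits were the data it learned from and the feedback loops of who clicked on what.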

Accountability means auditing outcomes, not just inputs. It means asking who's harmed when your model gets it wrong, and building correction mechanisms before regulators force you to.
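Auditing outcomes can start as simply as comparing how often each group receives the adverse result. The sketch below computes per-group adverse rates and flags any group whose rate exceeds the lowest group's by more than a chosen ratio. The 1.25 threshold and the group labels are assumptions for illustration; choosing a threshold, and deciding whether you may hold group data at all, are policy calls.

```python
from collections import defaultdict


def adverse_rates(outcomes: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of records in each group that received the adverse outcome."""
    totals: dict[str, int] = defaultdict(int)
    adverse: dict[str, int] = defaultdict(int)
    for group, is_adverse in outcomes:
        totals[group] += 1
        if is_adverse:
            adverse[group] += 1
    return {g: adverse[g] / totals[g] for g in totals}


def disparity_flags(rates: dict[str, float], max_ratio: float = 1.25) -> dict[str, float]:
    """Groups whose adverse rate is more than max_ratio times the lowest group's rate."""
    baseline = min(rates.values())
    flags: dict[str, float] = {}
    for group, rate in rates.items():
        if baseline > 0:
            ratio = rate / baseline
        else:
            ratio = float("inf") if rate > 0 else 1.0
        if ratio > max_ratio:
            flags[group] = ratio
    return flags


# Hypothetical audit log: (group label, did the model produce the adverse result?)
log = [("A", i < 3) for i in range(10)] + [("B", i < 6) for i in range(10)]
rates = adverse_rates(log)
print(rates)                   # {'A': 0.3, 'B': 0.6}
print(disparity_flags(rates))  # {'B': 2.0}
```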

The Real Cost of Getting It Wrong

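$6 billion+ in GDPR fines since 2018, and that number keeps climbing. Not every penalty below is a GDPR fine, though: Didi's was imposed by China's cybersecurity regulator under Chinese law.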

| Company | Fine | Violation | Year |
|---|---|---|---|
| Meta | €1.2bn | EU-US data transfers | 2023 |
| Didi Global | ~$1.19bn | Data/network security | 2022 |
| Amazon | €746m | Consent/advertising | 2021 |
| TikTok | €530m | EU-China data transfers | 2025 |
| Vodafone DE | €45m combined | Auth gaps + oversight | 2025 |
| Capita | £14m | Security failings | 2025 |
| Replika/Luka | €5m | No consent, no age check | 2025 |
| ING Bank | €4.4m | Data minimization | 2024 |

The financial penalties are just the visible cost. 75% of consumers won't purchase from organizations they don't trust with personal data. 52% of U.S. adults have declined a product or service over data collection worries. The customers who quietly leave dwarf the fines.

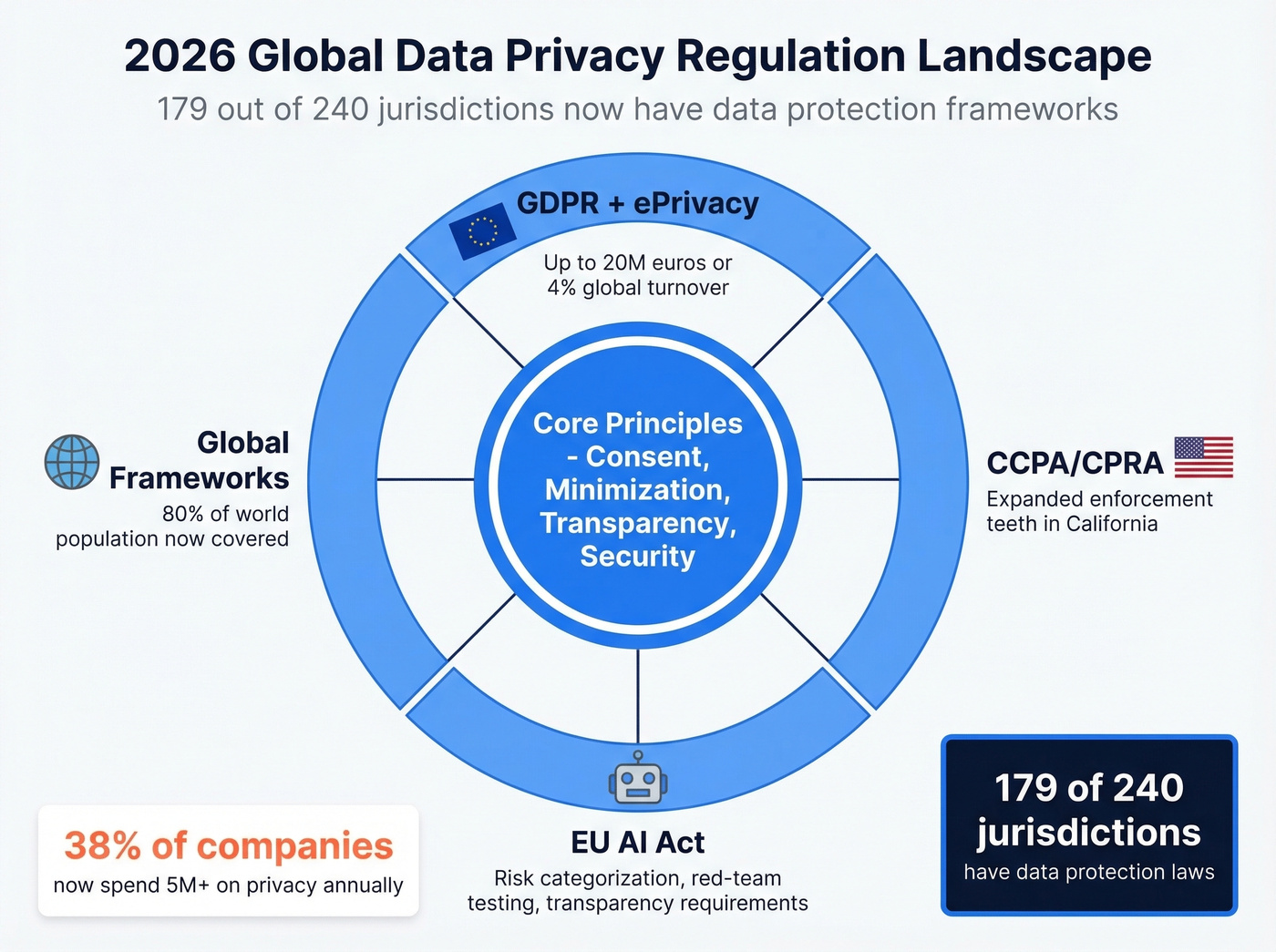

The 2026 Regulatory Picture

GDPR's penalty tiers remain the global benchmark: up to €20 million or 4% of annual global turnover, whichever is higher, for the most serious infringements. The CPRA, which amended the CCPA, added enforcement teeth in California by creating a dedicated privacy regulator, while the ePrivacy Directive continues to govern cookie consent across the EU.
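GDPR's caps apply whichever amount is higher, which is easy to misread as "whichever is lower." The arithmetic fits in a small function covering both tiers (the upper one quoted above, and the lower tier of up to €10 million or 2%). It computes only the statutory ceiling: actual fines are set case by case using the factors in Article 83(2), and "turnover" means total worldwide annual turnover for the preceding financial year.

```python
def gdpr_max_fine_eur(annual_turnover_eur: int, upper_tier: bool = True) -> int:
    """Statutory ceiling under GDPR Art. 83: the higher of a fixed cap or a share of turnover.

    upper_tier=True  -> Art. 83(5)/(6): EUR 20M or 4% of worldwide annual turnover
    upper_tier=False -> Art. 83(4):     EUR 10M or 2% of worldwide annual turnover
    """
    fixed_cap, percent = (20_000_000, 4) if upper_tier else (10_000_000, 2)
    return max(fixed_cap, annual_turnover_eur * percent // 100)


print(gdpr_max_fine_eur(100_000_000))     # 20000000: the fixed cap dominates
print(gdpr_max_fine_eur(50_000_000_000))  # 2000000000: 4% of turnover dominates
print(gdpr_max_fine_eur(50_000_000_000, upper_tier=False))  # 1000000000
```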

The bigger story is global convergence. 179 out of 240 jurisdictions now have data protection frameworks, covering roughly 80% of the world's population. The idea that data privacy is a "European thing" hasn't been true for years.

The EU AI Act entered into force in August 2024, and its obligations started applying in stages in 2025: bans on prohibited practices from February, rules for general-purpose AI models from August. It pushes organizations to categorize AI systems by risk level, strengthen oversight, run red-team testing, and publish transparency information. This changes how training data gets collected and processed - and it's already rewriting what "good" looks like for AI governance.

You just read about billion-dollar fines for collecting data without proper consent. Prospeo is built differently: GDPR compliant, opt-out enforced globally, DPAs available, and a Zero-Trust data partner policy that only uses vetted sources. 300M+ profiles verified through a 5-step process with spam-trap and honeypot removal - so your outreach stays clean and your domain stays safe.

AI and the New Frontier

AI has rewritten the rules on privacy. 90% of organizations say their privacy programs expanded in scope because of AI, per Cisco's 2026 Data Privacy Benchmark Study. 38% of companies now spend $5 million or more on privacy annually - up from just 14% in early 2025. That's not incremental growth. That's a sea change.

The fear is specific: 34% of organizations cite generative AI data leaks as their top security concern in 2026, up from 22% the year before. Italy's Garante fined Replika's parent company Luka €5 million for collecting personal and behavioral data without proper consent and with no age verification - an early signal that AI-specific enforcement is real and escalating.

If your training data pipeline doesn't have ethical checkpoints at every stage, you're building on ground that regulators are actively scrutinizing. Embedding protections at the model level - not patching them on after deployment - is the only approach that keeps pace with AI regulation.
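What a checkpoint looks like depends on the pipeline, but the shape is usually a gate every record passes before it reaches training. The sketch below is hypothetical throughout: the `Record` fields, the adult-age threshold of 18 (age rules vary by jurisdiction and use case), and the email regex, which is a crude stand-in for real PII detection. It also counts why records were dropped, which gives auditors something concrete to check.

```python
import re
from dataclasses import dataclass, replace
from typing import Optional

MIN_AGE = 18  # assumed threshold; check the rules that apply to you
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")  # crude, illustrative PII pattern


@dataclass(frozen=True)
class Record:
    user_id: str
    text: str
    training_consent: bool
    age: Optional[int]  # None means age was never verified


def training_gate(records: list[Record]) -> tuple[list[Record], dict[str, int]]:
    """Keep consented, age-verified adults; redact emails; count every drop reason."""
    kept: list[Record] = []
    dropped = {"no_consent": 0, "age_unverified_or_minor": 0}
    for r in records:
        if not r.training_consent:
            dropped["no_consent"] += 1
        elif r.age is None or r.age < MIN_AGE:
            dropped["age_unverified_or_minor"] += 1
        else:
            kept.append(replace(r, text=EMAIL_RE.sub("[EMAIL]", r.text)))
    return kept, dropped


batch = [
    Record("u1", "Reach me at ann@example.com", True, 34),
    Record("u2", "hello", False, 40),
    Record("u3", "hi there", True, None),
]
kept, dropped = training_gate(batch)
print([r.text for r in kept])  # ['Reach me at [EMAIL]']
print(dropped)                 # {'no_consent': 1, 'age_unverified_or_minor': 1}
```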

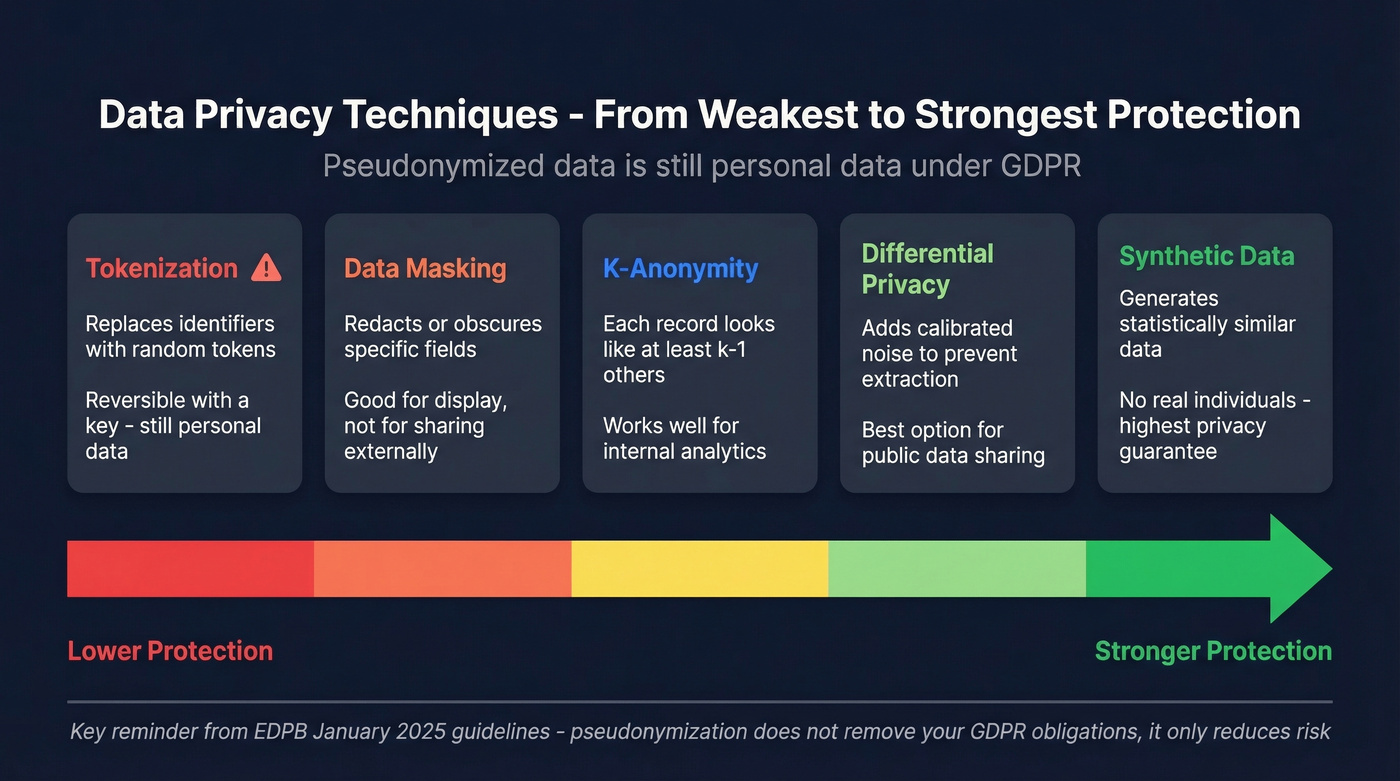

Anonymization vs. Pseudonymization

These terms get used interchangeably, and they shouldn't. The EDPB's January 2025 guidelines clarified a critical point: pseudonymized data remains personal data under GDPR. Replacing a name with a token doesn't remove your compliance obligations - it just reduces risk.
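That's easy to see in code. In the sketch below, a hypothetical in-memory `TokenVault` swaps names for random tokens, but anyone with access to the vault can map them back, which is exactly why the tokenized row is pseudonymized rather than anonymized. A real vault would live in a separately secured store with its own access controls.

```python
import secrets


class TokenVault:
    """Hypothetical in-memory token vault mapping values to random tokens and back."""

    def __init__(self) -> None:
        self._token_for: dict[str, str] = {}
        self._value_for: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Same input always gets the same token, so joins and counts still work.
        if value not in self._token_for:
            token = "tok_" + secrets.token_hex(8)
            while token in self._value_for:  # guard against a (very unlikely) collision
                token = "tok_" + secrets.token_hex(8)
            self._token_for[value] = token
            self._value_for[token] = value
        return self._token_for[value]

    def detokenize(self, token: str) -> str:
        # This reverse lookup is what keeps tokenized data "personal data".
        return self._value_for[token]


vault = TokenVault()
row = {"name": "Ada Lovelace", "plan": "pro"}
safe_row = {**row, "name": vault.tokenize(row["name"])}
print(safe_row["name"].startswith("tok_"))  # True
print(vault.detokenize(safe_row["name"]))   # Ada Lovelace
```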

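Here's how the techniques break down, roughly ordered from weakest to strongest:

- Tokenization replaces identifiers with random tokens. Whoever holds the token vault or key can reverse it, so the result is still pseudonymized personal data.

- Data masking redacts or obscures specific fields.

- K-anonymity generalizes or suppresses quasi-identifiers (such as ZIP code or age) until each record is indistinguishable from at least k-1 others on those attributes.

- Differential privacy adds calibrated noise to query results or released statistics so that no single person's presence in the data can be reliably inferred.

- Synthetic data generates statistically similar datasets with no real individuals. A generator trained on real records can still leak details about them, though, unless it's trained with a formal guarantee such as differential privacy.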
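Checking k is straightforward once you decide which columns are quasi-identifiers (attributes like a ZIP prefix or age band that could be linked to outside data). The sketch below, with hypothetical column names, groups rows by those columns and reports the smallest group size. It doesn't capture everything: if every row in a group shares the same sensitive value, k-anonymity alone still reveals it.

```python
from collections import Counter


def k_anonymity(rows: list[dict[str, str]], quasi_identifiers: list[str]) -> int:
    """Size of the smallest group of rows sharing the same quasi-identifier values."""
    if not rows:
        raise ValueError("k is undefined for an empty dataset")
    groups = Counter(tuple(row[col] for col in quasi_identifiers) for row in rows)
    return min(groups.values())


rows = [
    {"zip3": "941", "age_band": "30-39", "diagnosis": "flu"},
    {"zip3": "941", "age_band": "30-39", "diagnosis": "asthma"},
    {"zip3": "941", "age_band": "30-39", "diagnosis": "flu"},
    {"zip3": "100", "age_band": "40-49", "diagnosis": "diabetes"},
    {"zip3": "100", "age_band": "40-49", "diagnosis": "flu"},
]
print(k_anonymity(rows, ["zip3", "age_band"]))  # 2: the dataset is 2-anonymous
```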

For public data sharing, differential privacy is one of the strongest options. For internal analytics, k-anonymity works well when re-identification risk is lower. The ODI's Data Ethics Canvas is a solid free framework for deciding which approach fits your use case.
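For intuition on how the noise is calibrated, here is the classic Laplace mechanism for a counting query. Adding or removing one person changes a count by at most 1, so noise drawn from a Laplace distribution with scale 1/epsilon gives epsilon-differential privacy; a smaller epsilon means more noise and stronger privacy. This is a teaching sketch using Python's `random` module, which is not cryptographically secure and ignores known floating-point pitfalls, so production releases should rely on a vetted differential privacy library.

```python
import random
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponentials with mean `scale`
    # follows a Laplace(0, scale) distribution.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def dp_count(records: Sequence[T], predicate: Callable[[T], bool],
             epsilon: float, rng: random.Random) -> float:
    """Noisy count of matching records; sensitivity is 1, so scale = 1 / epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon, rng)


ages = [23, 35, 41, 52, 29, 61, 44, 38]
rng = random.Random(7)  # seeded only so the demo is repeatable
print(dp_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng))  # true count is 4, plus noise
```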

Ethical Data Sourcing in B2B

B2B data ethics doesn't get the same attention as consumer privacy, but the principles are identical. The hiQ v. LinkedIn dispute is often cited for the idea that scraping truly public pages can be lawful, while crossing login walls creates real legal exposure. The line between "public" and "gated" is where most B2B data providers get sloppy.

Data freshness is an ethical obligation, not just a quality metric. Contacting someone who left a company two years ago isn't just ineffective - it's a failure of data stewardship. We've seen sales teams burn through thousands of contacts from a "verified" list, only to discover half the records were outdated. That's not a pipeline problem. It's an ethics problem. Responsible sourcing means verifying accuracy at the point of collection and continuously after that, not treating a one-time scrape as a permanent asset.

Skip any provider that can't tell you exactly when their records were last verified or how they handle opt-outs. In our experience, the vendors who dodge those questions are the ones shipping stale, non-compliant data. Prospeo's approach - 5-step verification with catch-all handling, spam-trap removal, and honeypot filtering, records refreshed every 7 days, opt-outs enforced globally - is what data minimization applied to quality looks like.
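Both checks, freshness and opt-outs, can be enforced mechanically right before outreach instead of being taken on trust. A minimal sketch with a hypothetical `Contact` type: it drops anyone on the suppression list and anyone not re-verified within a freshness window, set here to 7 days to match the refresh cycle described above.

```python
from dataclasses import dataclass
from datetime import date, timedelta

MAX_AGE = timedelta(days=7)  # assumed freshness window


@dataclass(frozen=True)
class Contact:
    email: str
    last_verified: date


def sendable(contacts: list[Contact], suppression: set[str], today: date) -> list[Contact]:
    """Drop opted-out contacts and any record not re-verified within MAX_AGE."""
    opted_out = {e.strip().lower() for e in suppression}
    return [
        c for c in contacts
        if c.email.strip().lower() not in opted_out
        and today - c.last_verified <= MAX_AGE
    ]


today = date(2025, 6, 10)
contacts = [
    Contact("ana@example.com", date(2025, 6, 8)),   # fresh
    Contact("ben@example.com", date(2025, 5, 1)),   # stale
    Contact("Cara@Example.com", date(2025, 6, 9)),  # fresh but opted out
]
print([c.email for c in sendable(contacts, {"cara@example.com"}, today)])
# ['ana@example.com']
```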

Data minimization isn't just a regulation - it's good business. Prospeo's 98% email accuracy means you're not hoarding bad data or blasting unverified contacts. Every record refreshes every 7 days, catch-all domains are handled, and you pay only for verified results at $0.01 per email. Ethical outreach that actually converts.

Implementation Checklist

- Audit every data collection point. Map every form, pixel, API call, and third-party integration. If you can't explain why you're collecting a data point, stop collecting it. If you're buying or enriching records, sanity-check your sources against a compliance guide.

- Fix your consent UX. Rejecting cookies should take exactly as many clicks as accepting them. Test it yourself - if you get frustrated clicking through your own banner, your users already have.

- Apply minimum viable collection. Cut every field that doesn't directly serve a stated purpose.

- Run a Data Protection Impact Assessment. Required under GDPR for high-risk processing, valuable for any data-intensive operation.

- Set retention schedules and enforce them. Data you no longer need is pure liability: all of the breach risk, none of the value.

- Audit your data providers. Ask when records were last verified, whether opt-outs are enforced, and whether DPAs are available. If you're evaluating vendors, start with a shortlist of B2B data providers and compare against data quality benchmarks.

- Train every team that touches data. Sales, marketing, product, engineering - anyone who collects, processes, or acts on personal data needs to understand the principles. Make it ongoing, not a one-time onboarding slide deck. Ethical handling becomes culture when it's woven into weekly workflows, not annual compliance reviews.
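Of those items, retention is among the easiest to automate. A minimal sketch, assuming a hypothetical schedule keyed by record category: it lists records past their retention period and also flags records whose category has no schedule at all, on the logic that data nobody can justify keeping shouldn't be kept. The actual deletion step, and any legal holds, would depend on your storage systems.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention schedule: record category -> maximum age.
RETENTION = {
    "support_ticket": timedelta(days=365),
    "web_analytics": timedelta(days=90),
}


@dataclass(frozen=True)
class StoredRecord:
    record_id: str
    category: str
    created_at: datetime


def due_for_deletion(records: list[StoredRecord], now: datetime) -> list[str]:
    """IDs of records past their retention period, plus any with no schedule at all."""
    due: list[str] = []
    for r in records:
        limit = RETENTION.get(r.category)
        if limit is None or now - r.created_at > limit:
            due.append(r.record_id)
    return due


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
records = [
    StoredRecord("t1", "support_ticket", datetime(2025, 1, 1, tzinfo=timezone.utc)),
    StoredRecord("a1", "web_analytics", datetime(2025, 1, 1, tzinfo=timezone.utc)),
    StoredRecord("x1", "misc_export", datetime(2025, 5, 30, tzinfo=timezone.utc)),
]
print(due_for_deletion(records, now))  # ['a1', 'x1']
```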

Ethical Data Collection FAQ

What's the difference between ethical and legal data collection?

Legal compliance meets regulatory minimums - avoid fines, check boxes. Ethical collection goes further by minimizing data, ensuring genuine consent, and maintaining accuracy even when the law doesn't require it. The gap between the two is where customer trust lives or dies.

What's the biggest GDPR fine ever issued?

Meta received €1.2 billion in 2023 for transferring EU user data to the US without adequate safeguards. It remains the largest GDPR penalty to date and set the precedent that cross-border transfers carry serious financial risk.

Does the EU AI Act affect data collection?

Yes. The Act entered into force in August 2024, and its obligations have been phasing in since 2025. Organizations must categorize AI systems by risk level, strengthen oversight, run red-team tests, and publish transparency information. High-risk AI systems face strict requirements on training data provenance, bias auditing, and documentation.

How often should B2B contact data be refreshed?

The industry average is roughly every 6 weeks, but best practice demands weekly updates. Stale data wastes outreach and erodes sender reputation - a 7-day refresh cycle with 98% email accuracy is the standard we hold ourselves to.

Is scraping publicly available data ethical?

The hiQ v. LinkedIn dispute suggests scraping public pages can be lawful, but legality and ethics diverge. Always check terms of service and consider whether the people behind the data would reasonably expect their information to be collected and used this way.