Send Time Optimization: What Works in 2026

A Klaviyo user posted on Reddit, confused. Their Smart Send Time feature was recommending sends between 10pm and 2am for a US audience of men aged 35-75. "This can't possibly be the best time," they wrote. But the open rates were higher overnight. The algorithm wasn't broken - it was exploiting low-competition windows. That's the core tension with send time optimization: the math often disagrees with your gut, and neither is always right.

The Short Version

- STO delivers a real but modest lift. Expect 5-15% improvement in open rates. Worthwhile, not miraculous.

- If your tool optimizes for opens, Apple Mail Privacy Protection is poisoning the data. Switch to click-based optimization now.

- Set quiet hours, run a manual A/B test first, and don't trust overnight recommendations blindly. Two weeks of manual testing beats a month of trusting a cold-start model.

- Before optimizing when you send, verify who you're sending to. Stale data makes timing optimization pointless.

What Is Email Send Time Optimization?

Send time optimization (STO) is the practice of delivering emails, push notifications, or SMS at the predicted best moment for each individual recipient, based on that recipient's engagement history. It isn't timezone sending, which just delivers at the same local time for everyone.

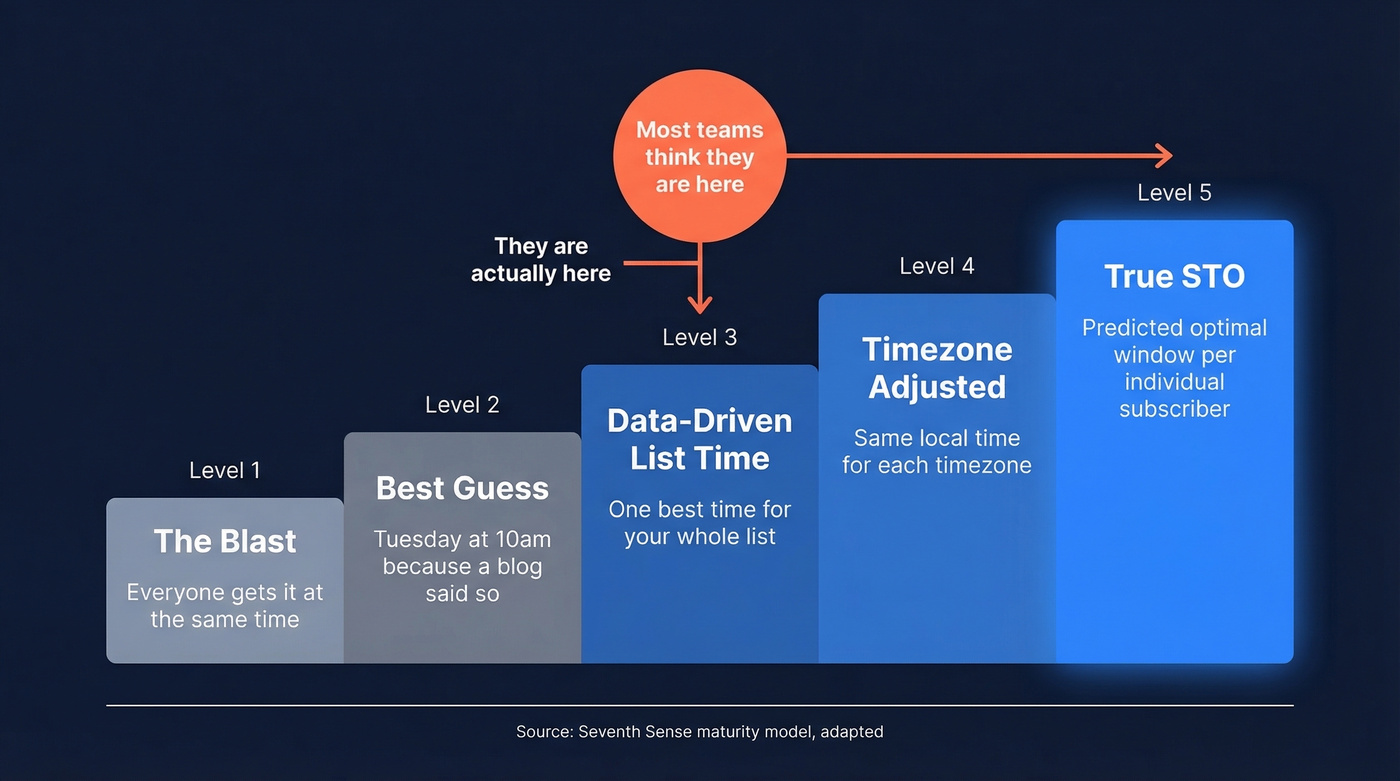

Seventh Sense published a useful five-level maturity model that frames this well. Level 1 is the "whenever" blast - everyone gets it simultaneously. Level 2 is the "best guess" blast, following generic advice like "Tuesday at 10am." Level 3 uses data to find the single best time for your entire list. Level 4 adjusts by timezone. Level 5 - true STO - predicts the optimal window for each subscriber individually.

Most teams think they're at Level 5 when they flip an STO toggle. They're usually at Level 3 with a timezone wrapper. The gap matters because per-person optimization requires fundamentally different data collection than list-level optimization.

How STO Actually Works

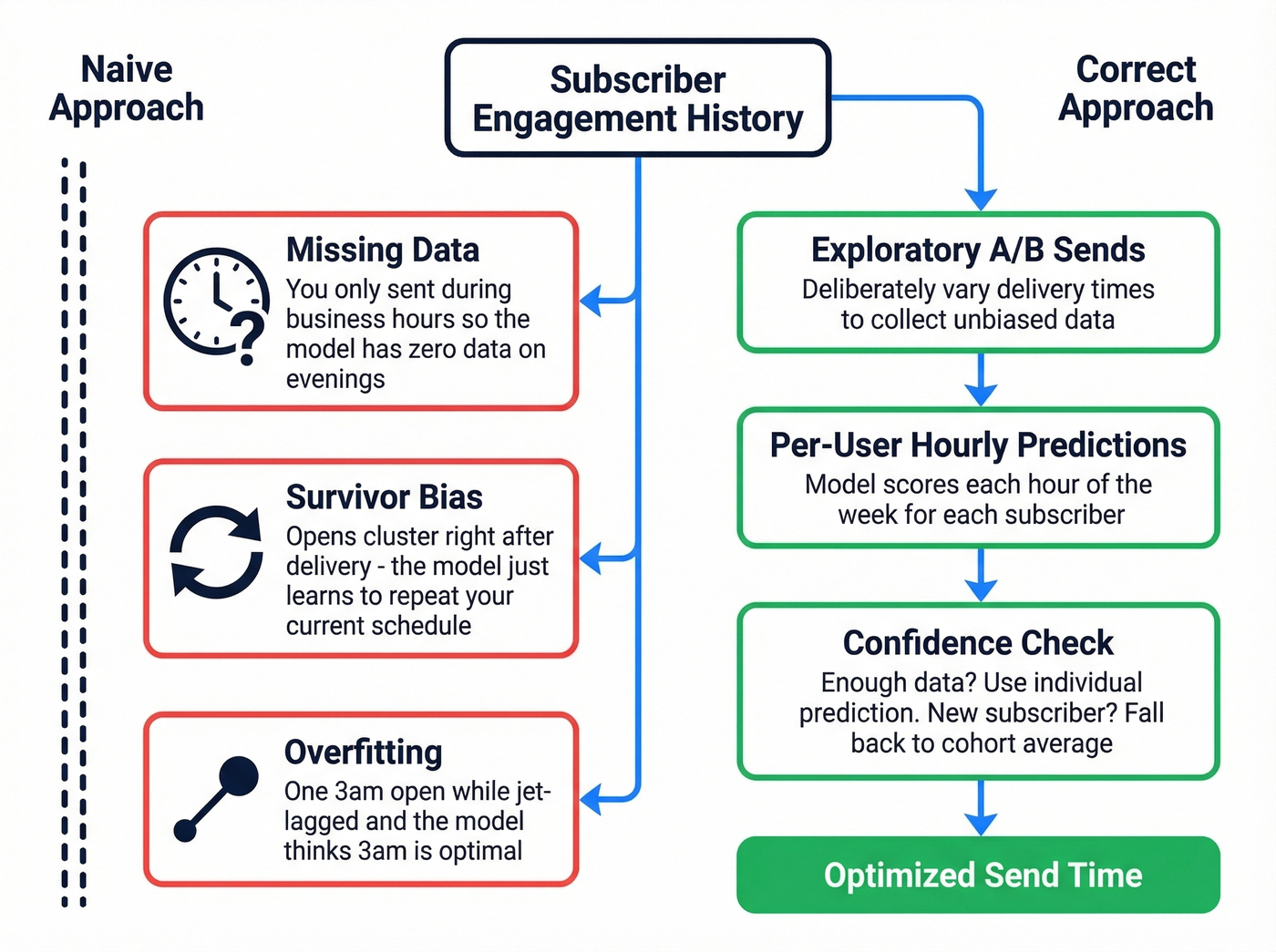

The intuitive approach seems simple: look at when each subscriber opened past emails, then send future emails at those times. Klaviyo's engineering team documented why this doesn't work, and the problems are instructive.

Missing data comes first. Most brands send campaigns during business hours. If you've never sent at 7pm, you have zero data about whether 7pm works. The model can't recommend a time it's never tested.

Survivorship bias distorts everything. Opens cluster in the minutes after delivery - not because that's the best time, but because that's when the email is newest in the inbox. A model trained on this data just learns "send at whatever time you already send," which is circular and useless.

Overfitting kicks in with small datasets. If a subscriber opened one email at 3am while jet-lagged, a naive model concludes 3am is their optimal window. That's noise.
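One common guard against this kind of noise is to shrink each subscriber's observed rate toward a list-level average until enough sends accumulate. The sketch below is a generic illustration, not any vendor's method; the function name, the 2% prior rate, and the `prior_strength` of 20 pseudo-sends are all assumptions chosen for the example.

```python
def shrunk_click_rate(clicks: int, sends: int, prior_rate: float,
                      prior_strength: float = 20.0) -> float:
    """Blend a subscriber's observed click rate with a list-level prior.

    prior_strength is a hypothetical tuning knob: the number of
    "pseudo-sends" the list-level rate counts for. With few real sends
    the prior dominates; with many, the subscriber's own data does.
    """
    return (clicks + prior_rate * prior_strength) / (sends + prior_strength)


# One jet-lagged click out of one 3am send vs. six clicks out of forty 10am sends.
rate_3am = shrunk_click_rate(clicks=1, sends=1, prior_rate=0.02)
rate_10am = shrunk_click_rate(clicks=6, sends=40, prior_rate=0.02)
```

The raw rates would be 100% for 3am and 15% for 10am. After shrinkage, 3am drops to about 7% and 10am lands at about 11%, so the single lucky click no longer wins.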

The solution is automated A/B testing - an "exploratory send" system that deliberately varies delivery times to collect unbiased data. Adobe Journey Optimizer's Send-Time Optimization is built around this approach, making predictions for each hour of the week for each user and letting you cap how long the system can delay a message.
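To make the idea concrete, here is a minimal sketch of an exploratory send using a simple epsilon-greedy rule over 168 hour-of-week slots, with a cap on how long a message may be held. This is a swapped-in textbook technique, not Klaviyo's or Adobe's actual algorithm; `choose_send_hour`, `explore_rate`, and the score list are hypothetical names for illustration.

```python
import random

HOURS_PER_WEEK = 168


def choose_send_hour(scores, now_hour, max_delay_hours, explore_rate=0.1, rng=random):
    """Pick an hour-of-week slot within the allowed delay window.

    scores: 168 predicted engagement scores, indexed by hour of week.
    now_hour: current hour of week (0-167).
    max_delay_hours: cap on how long the message may be delayed.
    explore_rate: assumed share of sends used for exploration.
    """
    # Candidate slots run from now to now + max_delay_hours, wrapping at week end.
    candidates = [(now_hour + d) % HOURS_PER_WEEK for d in range(max_delay_hours + 1)]
    if rng.random() < explore_rate:
        # Exploratory send: a random slot, so untested hours still collect data.
        return rng.choice(candidates)
    # Otherwise send at the best predicted slot inside the window.
    return max(candidates, key=lambda hour: scores[hour])
```

The exploration branch is what addresses the missing-data problem: a small, random share of sends lands on hours the brand would never have picked, which gives the model something to learn from.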

Then there's the cold-start problem. When a new subscriber joins, the model has nothing to work with. Good STO tools fall back to cohort-level predictions, grouping new subscribers with similar users. Bad ones default to the list average, which defeats the purpose entirely.
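The fallback chain described above can be sketched as follows. It is an illustration under stated assumptions: the `min_events` threshold of 5, the "most common click hour" rule, and both function names are hypothetical, and real tools use richer models.

```python
from collections import Counter
from typing import Optional, Sequence


def best_hour(click_hours: Sequence[int]) -> Optional[int]:
    """Most common click hour (0-23), or None when there is no history."""
    if not click_hours:
        return None
    return Counter(click_hours).most_common(1)[0][0]


def pick_send_hour(own_clicks: Sequence[int], cohort_clicks: Sequence[int],
                   list_default_hour: int, min_events: int = 5) -> int:
    """Fall back from personal history to cohort to list-level default."""
    # Enough personal history: trust the subscriber's own behavior.
    if len(own_clicks) >= min_events:
        return best_hour(own_clicks)
    # Cold start: borrow the pattern of similar subscribers.
    cohort_hour = best_hour(cohort_clicks)
    if cohort_hour is not None:
        return cohort_hour
    # Last resort: the list-wide default.
    return list_default_hour
```

For a brand-new subscriber, `pick_send_hour([], [19, 19, 8], 10)` returns 19, the cohort's most common click hour, rather than the list default of 10.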

Why Apple MPP Breaks STO

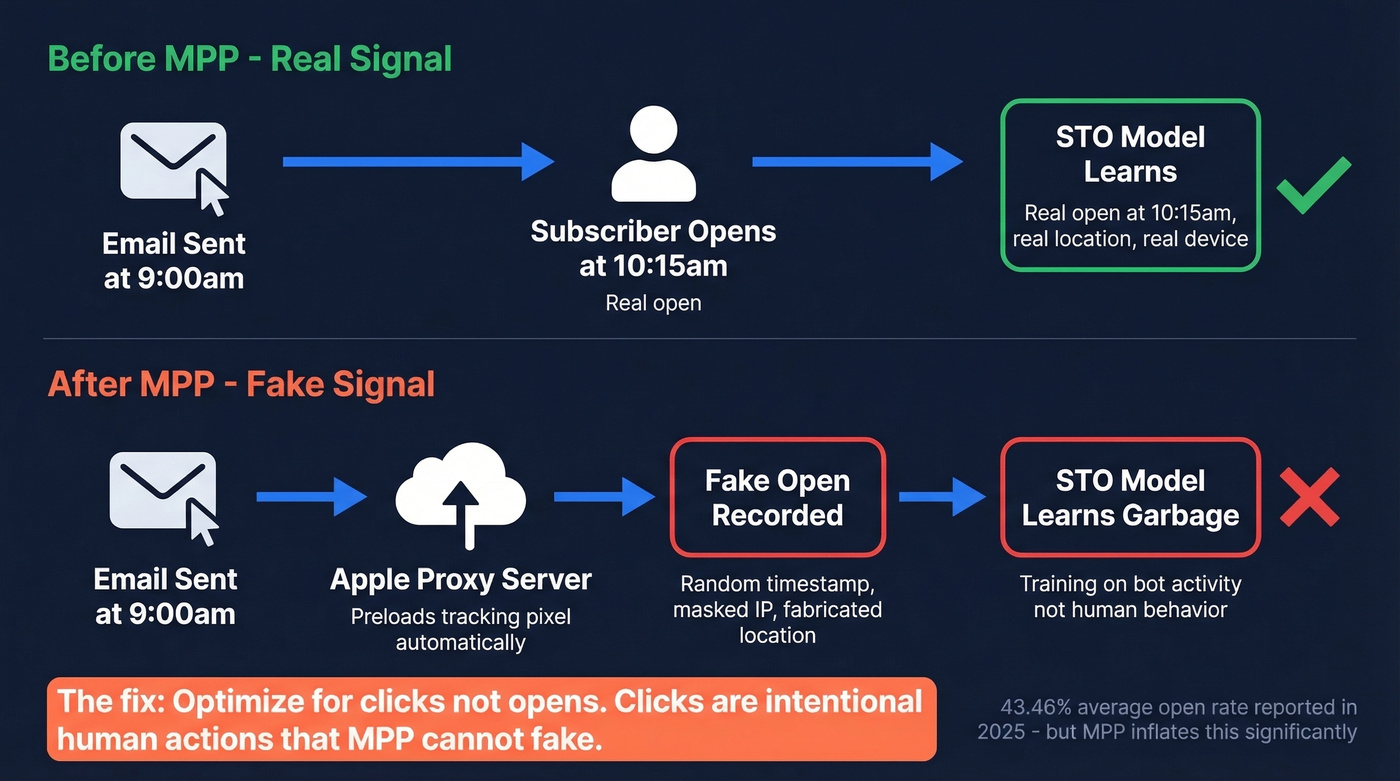

Here's the thing: many tools still default to open-rate optimization in 2026 - nearly five years after Apple Mail Privacy Protection made opens unreliable.

MPP, rolled out in 2021, preloads tracking pixels via Apple's proxy servers. When a subscriber uses Apple Mail with MPP enabled, regardless of their email provider, the pixel fires automatically. The email registers as "opened" even if the person never looked at it. The open timestamp reflects when Apple's proxy fetched the pixel, not when a person read the message, and the IP address and location are masked.

For timing optimization, this is devastating. The model is trying to learn when someone engages, but MPP feeds it fake engagement signals at random times. MailerLite's 2025 benchmark study across 3.6M campaigns reports an overall open rate of 43.46% - but explicitly caveats that Apple MPP inflates these numbers. Real human open rates are meaningfully lower.

It gets worse. With iOS 17, Apple introduced Link Tracking Protection, which can strip tracking parameters from links in Mail and Safari Private Browsing, complicating attribution even for click-based measurement. Even so, if your STO tool optimizes for opens, switch to click optimization today. A click remains an intentional human action that MPP can't fake. Many major platforms offer this option - most just don't make it the default.
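If you export raw engagement events and want to see what a click-only signal looks like, a minimal sketch is below. The event shape (a dict with `type` and an ISO 8601 `timestamp`) is an assumption for illustration; your platform's export format will differ.

```python
from collections import Counter
from datetime import datetime


def click_hour_histogram(events):
    """Count clicks per hour of day, ignoring opens entirely.

    events: iterable of dicts with a "type" key ("open" or "click") and an
    ISO 8601 "timestamp" string. This schema is hypothetical.
    """
    hours = Counter()
    for event in events:
        if event["type"] != "click":
            continue  # opens may be MPP proxy fetches, so they are skipped
        hours[datetime.fromisoformat(event["timestamp"]).hour] += 1
    return hours


events = [
    {"type": "open", "timestamp": "2026-01-06T03:12:00"},   # likely a proxy prefetch
    {"type": "click", "timestamp": "2026-01-06T19:42:00"},
    {"type": "click", "timestamp": "2026-01-13T19:05:00"},
]
histogram = click_hour_histogram(events)
```

Here the 3am open is discarded and both clicks land in the 7pm bucket, which is the signal a timing model should be learning from.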

Does STO Actually Work?

Let's be honest about the evidence and who's presenting it.

Braze's case studies show the strongest numbers. OneRoof, a property platform, saw +23% click-to-open rate, +57% unique clicks, and +218% total clicks after implementing Intelligent Timing alongside personalization and in-app messaging. Foodora reported +9% email CTR and a 26% reduction in unsubscribes.

Impressive - but notice the bundling. OneRoof combined STO with personalization and in-app messaging. Isolating STO's contribution from the broader optimization package is nearly impossible from vendor case studies.

A hotel industry analysis offers more granular data. Across 1.68M sends for a luxury California resort, Wednesday performed best for leisure offers with a 22.83% open rate and 16.75% CTOR, while newsletters peaked on Sundays. Booking recovery emails hit 37.8% opens and an 8.1% conversion rate. The key insight: the optimal send window varies dramatically by campaign intent and audience, not by some universal "best day."

An early beta across 15 brands showed an average +10% lift in open rate between prior practice and optimized send times. That aligns with the broader industry consensus: STO delivers a real but modest 5-15% improvement. It won't save a bad campaign, but it's free lift on a good one.

STO is simultaneously the most overhyped and the most underpracticed tactic in email marketing. Everyone talks about it, almost nobody has the data quality to make it work, and the teams that do get it right treat it as the cherry on top - never the cake.

When to Override STO

Override it when:

- You're sending time-sensitive campaigns - flash sales, event reminders, breaking news. STO could delay delivery past the relevance window.

- Your list is new or small. With less than roughly 2-4 weeks of engagement history per subscriber, the model is guessing. A well-chosen manual send time beats a cold-start prediction.

- The recommendation is clearly absurd. A Mailchimp user reported STO recommending 11pm for a B2B list of business professionals. Another saw the optimizer suddenly shift to 5am after months of normal recommendations.

Trust it when:

- You have sufficient engagement data and the model has stabilized over several campaigns.

- You've set quiet hours that prevent overnight sends, unless your data genuinely supports them.

- The "weird" recommendation has a logical explanation. That Klaviyo user seeing 10pm-2am recommendations? Less inbox competition overnight means higher open rates per send. The algorithm isn't wrong - it's optimizing for a metric that might not align with your goals.

Don't trust it blindly. Use it as a starting point, not an autopilot.

How to Get STO Right

Here's the playbook we've seen work across dozens of outbound teams:

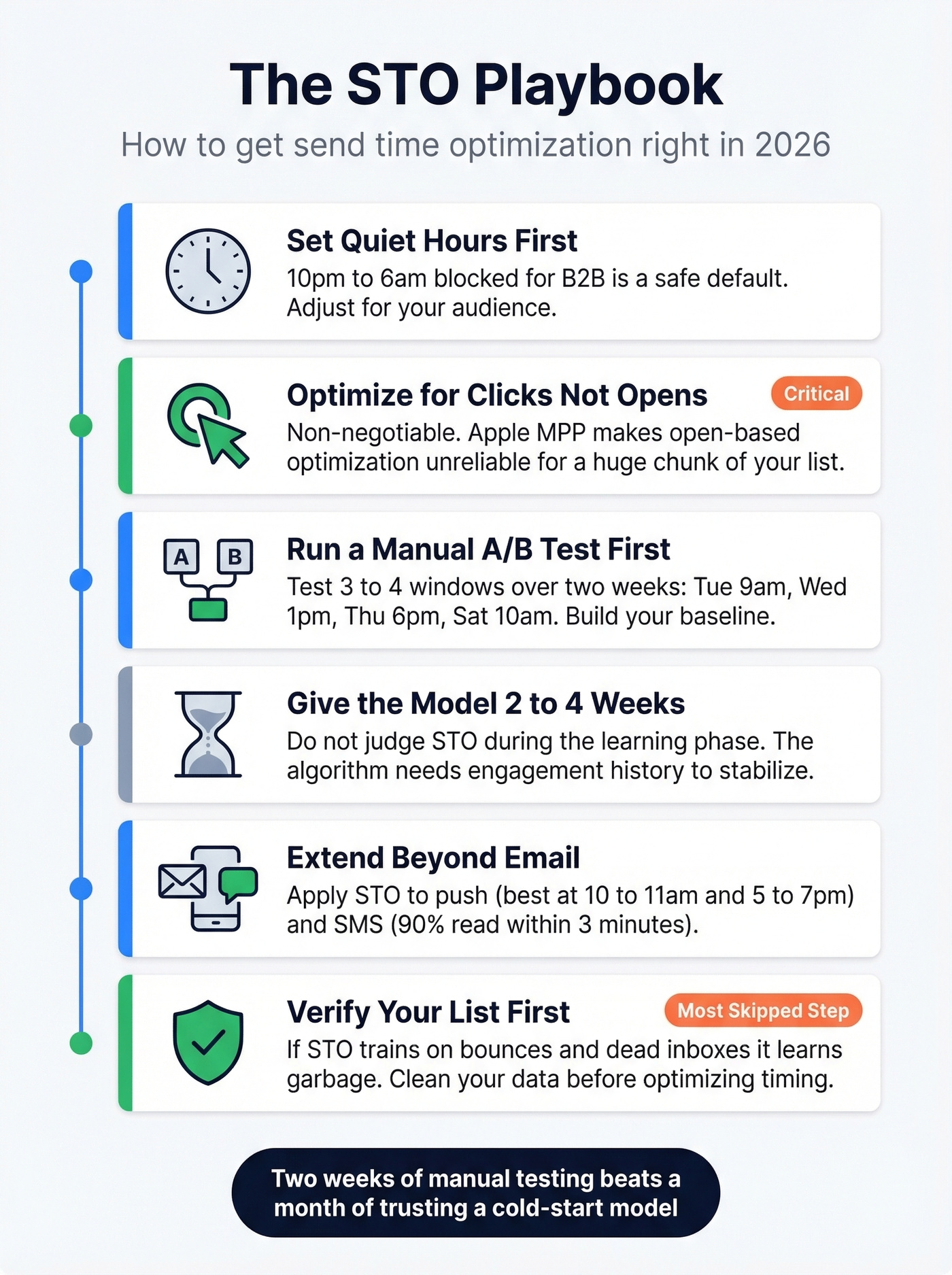

Set quiet hours first. Most STO tools let you define windows where sends are blocked. 10pm-6am in the recipient's local time is a reasonable default for B2B. Adjust for your audience.
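If you schedule sends yourself, or want to sanity-check what a tool's quiet-hours setting should do, the logic can be sketched like this. It assumes `send_at` is already in the recipient's local time and that a blocked send is released when the quiet window ends; the function name and defaults are illustrative, not any platform's API.

```python
from datetime import datetime, time, timedelta


def apply_quiet_hours(send_at: datetime,
                      quiet_start: time = time(22, 0),
                      quiet_end: time = time(6, 0)) -> datetime:
    """Move a proposed send to the end of the quiet window if it falls inside it."""
    t = send_at.time()
    if quiet_start <= quiet_end:
        # Window within a single day, e.g. 01:00-05:00.
        in_quiet = quiet_start <= t < quiet_end
    else:
        # Window crossing midnight, e.g. 22:00-06:00.
        in_quiet = t >= quiet_start or t < quiet_end
    if not in_quiet:
        return send_at
    release = send_at.replace(hour=quiet_end.hour, minute=quiet_end.minute,
                              second=0, microsecond=0)
    # A late-evening send is released the next morning, not earlier the same day.
    if release <= send_at:
        release += timedelta(days=1)
    return release
```

With the defaults, a model recommendation of 11:30pm on January 6 becomes 6:00am on January 7, while a 2pm recommendation passes through unchanged.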

Optimize for clicks, not opens. Non-negotiable in 2026. Open-based optimization is compromised by Apple MPP for a significant chunk of your list.

Run a manual A/B test before trusting the model. Pick 3-4 send windows, for example Tuesday 9am, Wednesday 1pm, Thursday 6pm, and Saturday 10am. Split your list randomly across them and test each over two weeks. This gives you a baseline and helps you evaluate whether STO's recommendations actually beat your manual best.
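When comparing two windows, it helps to check whether the difference in click rate is larger than chance would produce. A standard two-proportion z-test is one way to do that; the sketch below uses only the Python standard library, and the sample numbers are made up for illustration.

```python
from math import erf, sqrt


def two_proportion_z_test(clicks_a: int, sends_a: int,
                          clicks_b: int, sends_b: int):
    """Return (z, two-sided p-value) for the difference in click rates.

    Assumes both groups were split randomly and that at least one click
    and one non-click occurred overall (otherwise the standard error is zero).
    """
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical results: Tuesday 9am vs. Thursday 6pm, 5,000 sends each.
z, p_value = two_proportion_z_test(150, 5000, 110, 5000)
```

In this made-up example the gap between a 3.0% and a 2.2% click rate clears the usual 0.05 threshold. With four windows you are making several comparisons, so treat a borderline result as a reason to keep testing rather than a verdict.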

Give the model enough data. Most platforms stabilize after around 2-4 weeks of engagement history per subscriber. Don't judge STO performance during the learning phase.

Extend STO beyond email. Modern platforms apply timing optimization to push notifications and SMS too. Push often performs best in the 10-11am and 5-7pm windows, and SMS demands even more precision - around 90% of texts are read within 3 minutes, so a poorly timed message is a wasted message.

Verify your list before optimizing timing. This is the step most teams skip, and it's the one that matters most. If your STO model is learning from bounces and dead inboxes, it's learning garbage. Prospeo's 5-step verification catches invalid addresses, spam traps, and catch-all domains before they corrupt your engagement signals.

STO Across Platforms

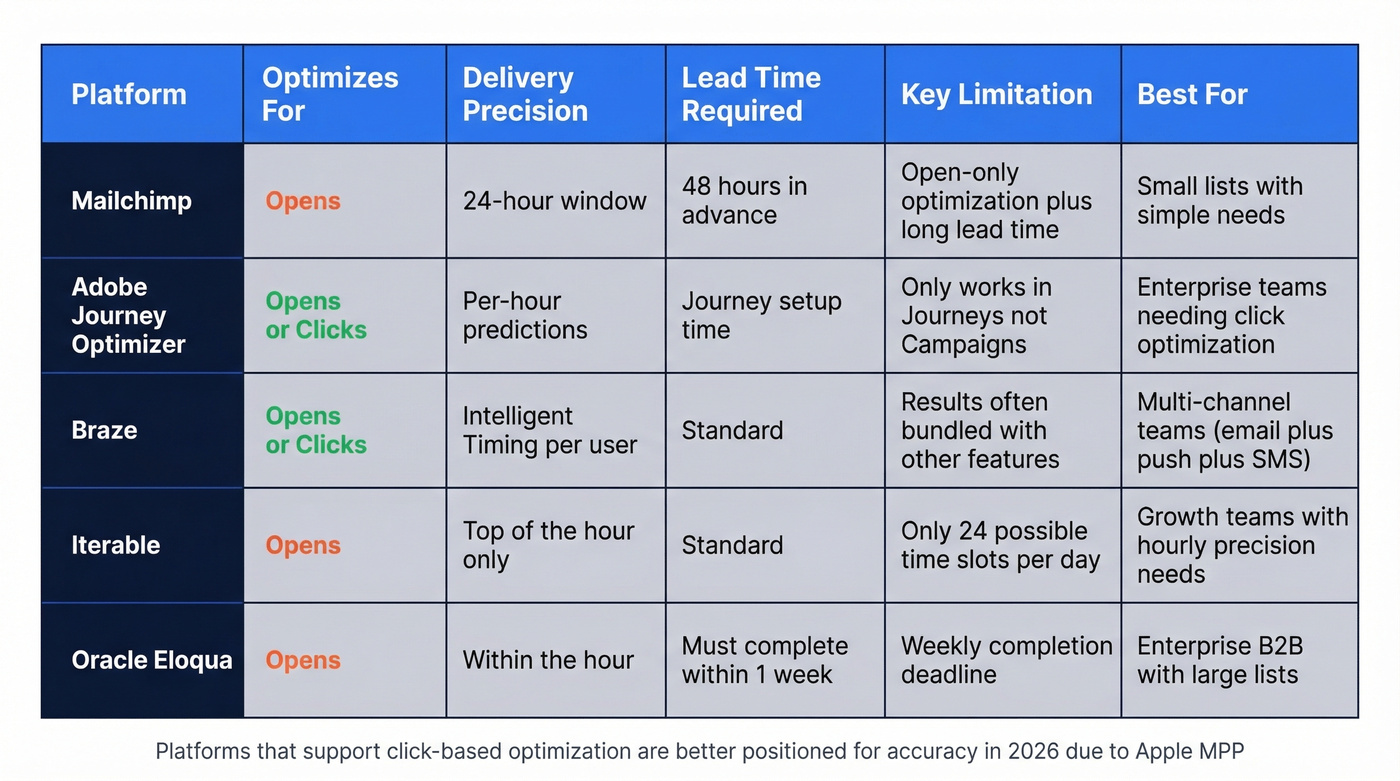

Not every email platform handles timing optimization the same way, and the differences matter more than you'd think.

Mailchimp optimizes for opens within a 24-hour window of the date you select, and requires scheduling at least 48 hours in advance. That lead time catches people off guard.

Adobe Journey Optimizer offers STO for built-in Email and Push actions inside Journeys, not Campaigns. Email can optimize for opens or clicks; push is optimized for opens. For teams that need click-based optimization, this is one of the stronger options alongside Braze.

Iterable sends at the top of the hour only - 24 possible slots per day, not infinite precision.

Oracle Eloqua sends within the hour around a contact's optimal time, and all STO-enabled emails must complete within the week of initiation.

Higher Logic makes STO available in all accounts but requires support to enable it, using each contact's historical open behavior to bias sends.

The biggest differentiator isn't price. It's whether the tool can optimize for clicks. If it only uses opens, you're building on a foundation tainted by MPP.

Fix Your Data Before Optimizing Timing

Here's a scenario we see constantly: a team enables STO, watches open rates for a month, sees marginal improvement, and concludes it doesn't work. But their list has a 12% bounce rate. The algorithm is dutifully optimizing delivery timing to inboxes that don't exist.

The hierarchy goes: data quality, then segmentation, then content, then deliverability, then send timing. Skip any layer and the ones above it underperform. Snyk's team saw bounce rates drop from 35-40% to under 5% after cleaning their contact data - that's the kind of foundation send time optimization needs to actually learn from real engagement patterns instead of noise.

FAQ

Is there a universal best time to send email?

No - that's exactly why STO exists. The answer differs for every recipient based on device habits, timezone, and inbox volume. Generic advice like "Tuesday at 10am" just concentrates sends into the same competitive window, which is why per-person optimization outperforms static scheduling.

Does STO work for small lists?

It can, but results are less reliable below a few thousand subscribers. Most STO models stabilize after 2-4 weeks of engagement data per person. For small lists, cohort-level fallbacks - grouping similar users together - matter more than per-person predictions.

How does Apple MPP affect STO?

MPP preloads tracking pixels and fires fake opens at random times, corrupting the timing data STO models depend on. Choose a tool that optimizes for clicks, not opens, and your model will learn from real human behavior instead of Apple's proxy servers.

Should I clean my list before enabling STO?

Yes. STO optimizes delivery timing, but if 10%+ of your list bounces, the model learns from bad data. Verify your list first so the algorithm has clean signals to work with. The consensus on r/Emailmarketing is that list hygiene is the single highest-ROI activity before any optimization - timing included.