How to Measure Lead Quality: Metrics, Scoring Models, and Benchmarks

It's Monday morning. Marketing's dashboard shows 400 MQLs from last month's campaign. Sales worked 12 into opportunities. The VP of Marketing says the leads are fine. The sales director says they're garbage. Nobody has a shared definition of "quality," so the argument goes nowhere - again.

If that sounds familiar, you don't need more opinions. You need shared metrics. And you need them built on clean data, because 97% of leads aren't ready to buy in the first place.

The Quick Version

Stop tracking CPL and MQL volume as quality indicators. Track MQL-to-SQL conversion rate, cost per qualified lead, and speed-to-lead instead. Build a lead scoring model that Sales helped design, with negative scoring for inactivity. And fix your data quality first - a scoring model built on decayed contacts is just sophisticated garbage sorting.

Why Most Teams Get It Wrong

Two campaigns can have the exact same CPL - say, $45 - and produce wildly different pipeline. One converts 3% to opportunity. The other converts 30%. CPL told you nothing useful.
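To make the gap concrete, here is a minimal sketch that turns CPL into cost per opportunity. The $45 CPL and the 3% and 30% conversion rates come from the example above; the function name is hypothetical.

```python
def cost_per_opportunity(cpl: float, lead_to_opp_rate: float) -> float:
    """Spend needed to produce one opportunity at a given CPL and conversion rate."""
    if lead_to_opp_rate <= 0:
        raise ValueError("lead_to_opp_rate must be greater than zero")
    return cpl / lead_to_opp_rate


# Same CPL, very different cost once conversion is taken into account.
campaign_a = cost_per_opportunity(cpl=45.0, lead_to_opp_rate=0.03)
campaign_b = cost_per_opportunity(cpl=45.0, lead_to_opp_rate=0.30)

print(f"Campaign A: ${campaign_a:,.0f} per opportunity")
print(f"Campaign B: ${campaign_b:,.0f} per opportunity")
```

At identical CPL, the first campaign pays roughly $1,500 per opportunity and the second roughly $150.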

The diagnostic signal most teams miss is SDR behavior. When reps start cherry-picking inbound leads and ignoring the rest, that's not laziness. It's a quality problem surfacing through human filtering. The consensus on r/b2bmarketing is blunt: "more leads, lower CPL" looks great on paper until sales focuses only on what closes fastest, regardless of fit.

Here's the dollar cost nobody calculates: if your SDR costs $35/hour and spends 15 minutes qualifying each bad lead, 200 junk leads per month burns $1,750 - before you count the opportunity cost of good leads they didn't call. Most teams don't even have a structured qualification framework like BANT or MEDDIC to catch this waste early, which tanks the overall qualification rate before deals ever reach pipeline.
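The arithmetic behind that figure can be sketched as follows, so you can plug in your own numbers. The function and parameter names are hypothetical; the inputs are the ones quoted above.

```python
def wasted_sdr_cost(hourly_rate: float, minutes_per_lead: float, junk_leads: int) -> float:
    """Monthly SDR cost spent qualifying leads that go nowhere."""
    wasted_hours = junk_leads * minutes_per_lead / 60
    return wasted_hours * hourly_rate


# $35/hour, 15 minutes per bad lead, 200 junk leads per month.
monthly_waste = wasted_sdr_cost(hourly_rate=35.0, minutes_per_lead=15, junk_leads=200)
print(f"Wasted SDR cost: ${monthly_waste:,.0f} per month")
```

This covers direct labor only. The opportunity cost of good leads that went uncalled is not modeled here.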

Lead Quality Metrics That Actually Matter

Forget the 15-metric dashboard. You need four numbers and one north star.

| Metric | What It Tells You | How to Calculate |
|---|---|---|
| MQL-to-SQL rate | Lead definition accuracy | SQLs / MQLs × 100 |
| CPQL | True cost of quality | Total spend / qualified leads |
| Speed-to-lead | Follow-up discipline | Time from MQL to first touch |
| Contact/bounce rate | Data quality health | Bounced / total contacted |
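The four calculations in the table can be sketched in a few lines. This is an illustration, not a reference implementation: the function names and the sample month of data are hypothetical, and bounce rate is returned as a percentage for consistency with the MQL-to-SQL rate.

```python
from datetime import datetime, timedelta


def _require_positive(value: float, name: str) -> None:
    if value <= 0:
        raise ValueError(f"{name} must be greater than zero")


def mql_to_sql_rate(sqls: int, mqls: int) -> float:
    """SQLs / MQLs x 100."""
    _require_positive(mqls, "mqls")
    return 100 * sqls / mqls


def cost_per_qualified_lead(total_spend: float, qualified_leads: int) -> float:
    """Total spend / qualified leads."""
    _require_positive(qualified_leads, "qualified_leads")
    return total_spend / qualified_leads


def speed_to_lead(mql_at: datetime, first_touch_at: datetime) -> timedelta:
    """Time from MQL to first touch."""
    return first_touch_at - mql_at


def bounce_rate(bounced: int, total_contacted: int) -> float:
    """Bounced / total contacted, expressed as a percentage."""
    _require_positive(total_contacted, "total_contacted")
    return 100 * bounced / total_contacted


# Hypothetical month of data, for illustration only.
rate = mql_to_sql_rate(sqls=90, mqls=400)
cpql = cost_per_qualified_lead(total_spend=18_000, qualified_leads=90)
lag = speed_to_lead(datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 42))
bounces = bounce_rate(bounced=24, total_contacted=800)

print(f"MQL-to-SQL: {rate:.1f}% | CPQL: ${cpql:,.0f} | Speed-to-lead: {lag} | Bounce: {bounces:.1f}%")
```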

The north star is revenue per lead. It's the only metric that connects marketing spend to closed deals without letting either team hide behind vanity numbers. Once you know the dollar value per lead for each channel, budget allocation stops being a debate and starts being arithmetic.
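As a rough sketch, revenue per lead by channel is total closed-won revenue divided by every lead the channel produced, including the ones that never closed. The channel names and amounts below are invented for illustration.

```python
from collections import defaultdict


def revenue_per_lead(leads):
    """leads: iterable of (channel, closed_won_revenue) pairs, one per lead.

    Leads that never closed carry a revenue of 0 and still count in the denominator.
    """
    revenue = defaultdict(float)
    count = defaultdict(int)
    for channel, closed_won_revenue in leads:
        revenue[channel] += closed_won_revenue
        count[channel] += 1
    return {channel: revenue[channel] / count[channel] for channel in count}


# Hypothetical leads: three from paid social, two from a webinar.
sample_leads = [
    ("paid_social", 0),
    ("paid_social", 0),
    ("paid_social", 12_000),
    ("webinar", 20_000),
    ("webinar", 0),
]

per_channel = revenue_per_lead(sample_leads)
for channel, value in sorted(per_channel.items()):
    print(f"{channel}: ${value:,.0f} per lead")
```

Counting unclosed leads in the denominator is the point: a channel cannot look good by producing volume that never turns into revenue.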

One tactic we've seen top teams adopt: syncing CRM stage data back to ad platforms via Meta's Conversions API (CAPI) or Google Ads offline conversion imports. This closes the loop between "lead generated" and "deal closed," so your ad spend optimizes for revenue, not form fills.

Top-performing B2B teams convert 25-35% of MQLs to SQLs. The industry average sits around 18-22%. Below 15%, your lead definitions or your data need work.
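A small helper can turn those bands into a label for a dashboard. The quoted ranges leave gaps (15-18% and 22-25%), so how this sketch fills them is an assumption, as are the label names.

```python
def benchmark_tier(mql_to_sql_pct: float) -> str:
    """Map an MQL-to-SQL rate (in percent) onto the benchmark bands quoted above.

    Assumptions: 15-18% is labeled "below average" and 22-25% is folded into
    "around average", because the quoted ranges do not cover those values.
    """
    if mql_to_sql_pct < 15:
        return "needs work"
    if mql_to_sql_pct < 18:
        return "below average"
    if mql_to_sql_pct < 25:
        return "around average"
    return "top-performing"


for pct in (12, 16, 20, 30):
    print(f"{pct}% -> {benchmark_tier(pct)}")
```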

70.8% of contact data changes within 12 months. If your scoring model runs on stale records, every metric above is meaningless. Prospeo refreshes 300M+ profiles every 7 days - not 6 weeks - delivering 98% email accuracy and an 83% enrichment match rate. No contracts. No sales calls.

Stop scoring leads against data that expired last quarter.

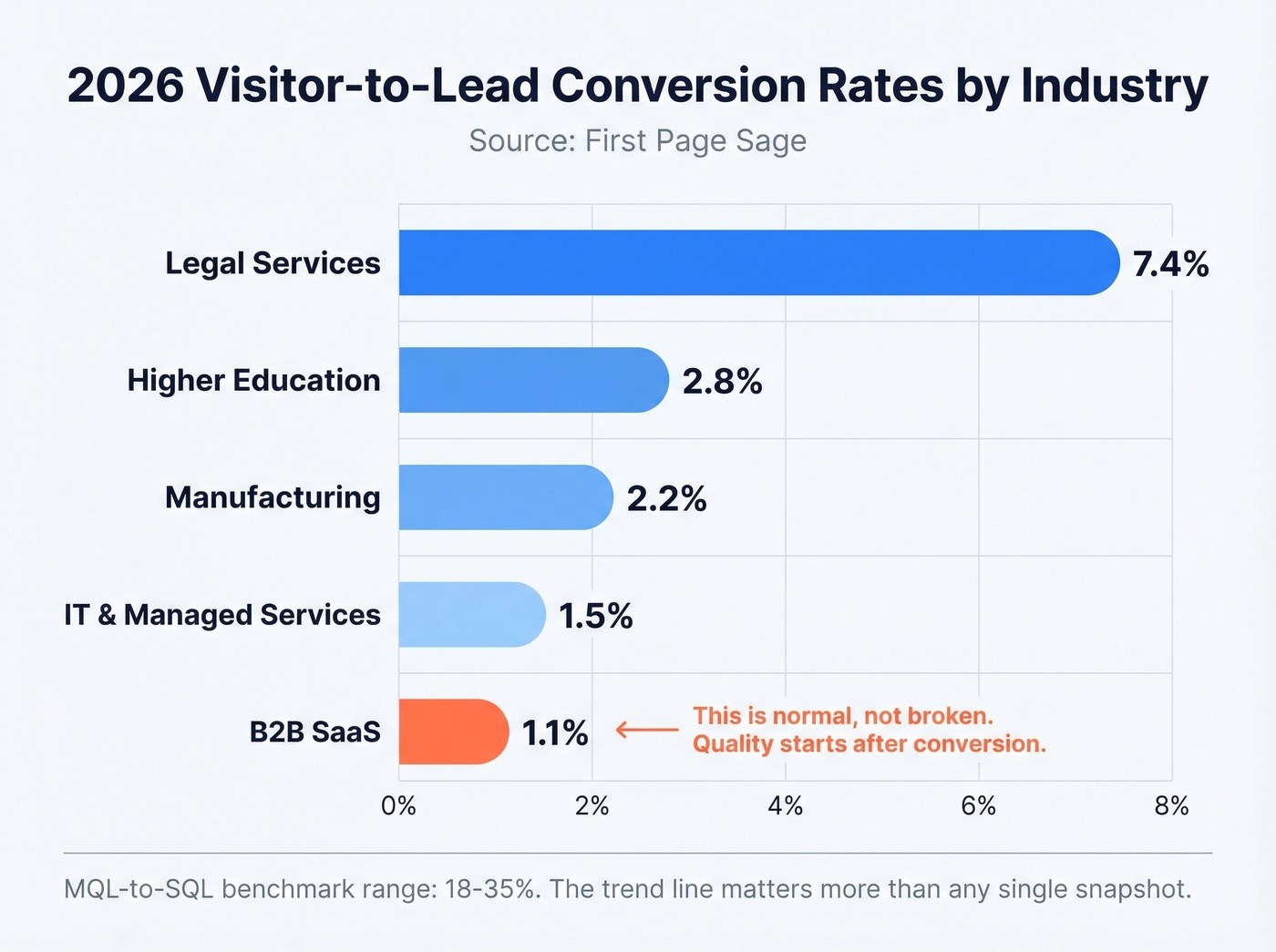

Benchmarks for 2026

Benchmarks are directional, not gospel. Here are 2026 visitor-to-lead conversion rates by industry:

| Industry | Visitor-to-Lead Rate |
|---|---|
| Legal Services | 7.4% |
| Higher Education | 2.8% |
| Manufacturing | 2.2% |
| IT & Managed Services | 1.5% |
| B2B SaaS | 1.1% |

If you're a B2B SaaS company converting visitors at 1.1%, that's normal - not broken. The quality question starts after conversion: what percentage of those leads become pipeline? For MQL-to-SQL specifically, 18-35% is the range you're calibrating against. Track qualified leads per month at each funnel stage. The trend line matters more than any single snapshot.

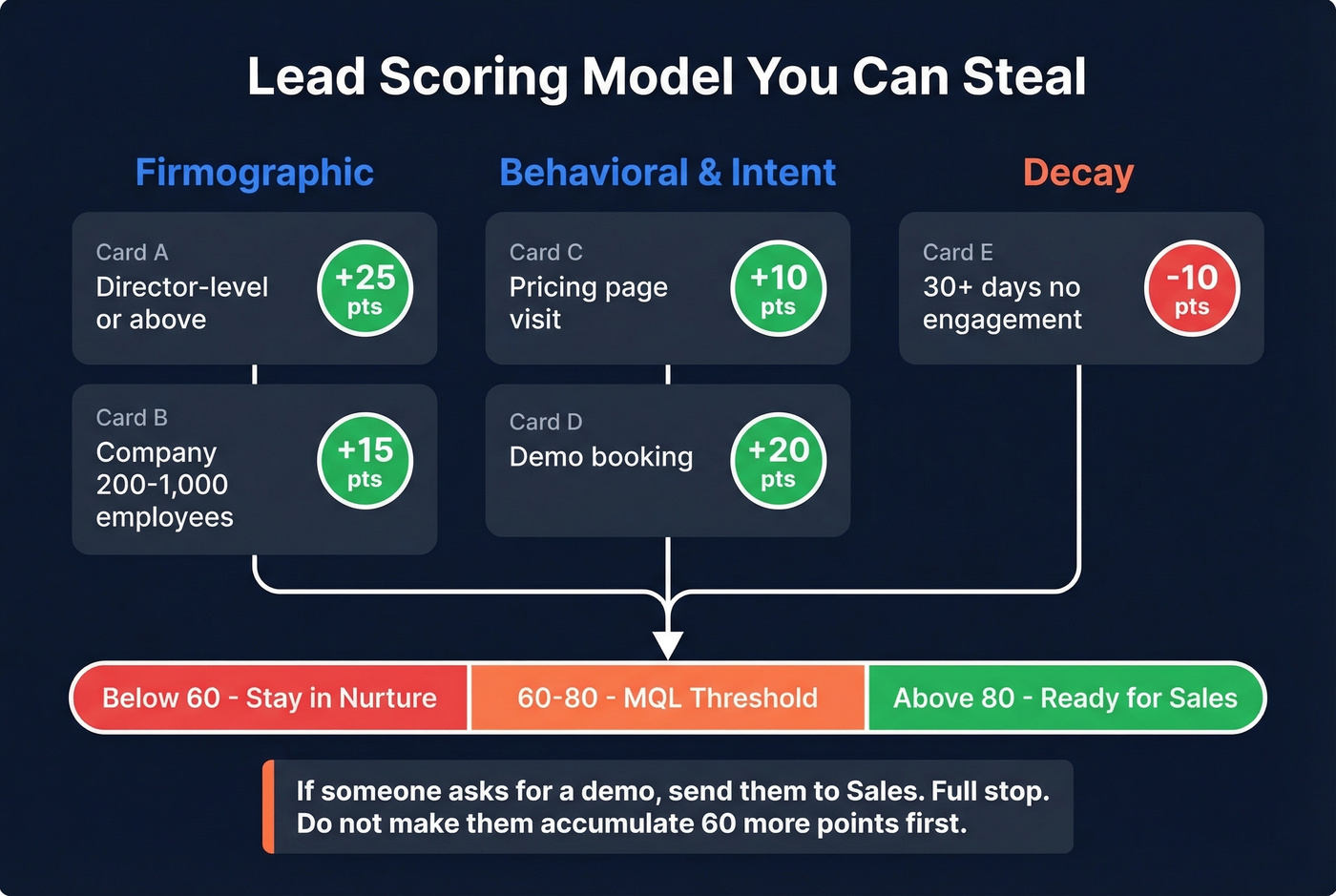

A Scoring Model You Can Steal

Most scoring models fail because marketing builds them in a vacuum. Here's one we've pressure-tested across multiple B2B teams:

| Signal | Points | Type |
|---|---|---|
| Director-level or above | +25 | Firmographic |
| Company 200-1,000 employees | +15 | Firmographic |
| Pricing page visit | +10 | Behavioral |
| Demo booking | +20 | Intent |
| 30+ days without engagement | -10 | Decay |

MQL threshold: 60-80 points. Below 60, the lead stays in nurture. Between 60 and 80, it qualifies as an MQL. Above 80, it's ready for a direct sales conversation.
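Here is one way the table and thresholds might translate into code. Treat it as a sketch: the `Lead` fields and stage names are hypothetical, and reading 60-80 as the MQL band is an interpretation of the thresholds above.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    is_director_or_above: bool
    employees: int
    visited_pricing_page: bool
    booked_demo: bool
    days_since_engagement: int


def score(lead: Lead) -> int:
    """Sum the point values from the scoring table."""
    points = 0
    if lead.is_director_or_above:
        points += 25  # firmographic
    if 200 <= lead.employees <= 1000:
        points += 15  # firmographic
    if lead.visited_pricing_page:
        points += 10  # behavioral
    if lead.booked_demo:
        points += 20  # intent
    if lead.days_since_engagement >= 30:
        points -= 10  # decay
    return points


def stage(points: int) -> str:
    """Assumed reading: below 60 nurture, 60-80 MQL, above 80 sales-ready."""
    if points < 60:
        return "nurture"
    if points > 80:
        return "sales_ready"
    return "mql"


engaged = Lead(True, 500, True, True, days_since_engagement=5)
gone_quiet = Lead(True, 500, True, True, days_since_engagement=45)
poor_fit = Lead(False, 50, True, True, days_since_engagement=5)

for lead in (engaged, gone_quiet, poor_fit):
    print(score(lead), stage(score(lead)))
```

Note that the five signals listed here top out at 70 points, so a production model would need additional signals before any lead could clear 80.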

The rules that keep a scoring model from failing:

- Don't score email opens. It's noise, not signal.

- Involve Sales in building the model. If they didn't help design it, they won't trust it.

- Handle inactivity aggressively. A contact with 365 days of zero engagement probably doesn't belong in your database at all.

- If someone asks for a demo, send them to Sales. Full stop. Don't make them accumulate 60 more points first.
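Two of those rules, the demo fast-track and aggressive inactivity handling, are easy to encode as routing logic that runs ahead of the score. The sketch below assumes hypothetical outcome names and treats 365 days of silence as a trigger for a removal review rather than automatic deletion.

```python
def route(points: int, requested_demo: bool, days_inactive: int) -> str:
    """Decide where a lead goes next; explicit rules take priority over the score."""
    if requested_demo:
        return "sales"  # demo requests skip scoring entirely
    if days_inactive >= 365:
        return "removal_review"  # a year of silence: question whether to keep the record
    if points > 80:
        return "sales"
    if points >= 60:
        return "mql"
    return "nurture"


examples = [
    route(points=10, requested_demo=True, days_inactive=0),
    route(points=70, requested_demo=False, days_inactive=400),
    route(points=65, requested_demo=False, days_inactive=10),
    route(points=40, requested_demo=False, days_inactive=10),
]
print(examples)
```

Putting the demo check first means a low score can never block someone who has explicitly asked to talk to Sales.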

Let's be honest about the ultimate test here: does the model increase the percentage of qualified leads that convert to closed-won? If your conversion rate doesn't improve within two quarters of deploying a new model, the scores aren't reflecting real buying intent. Scrap it and rebuild.

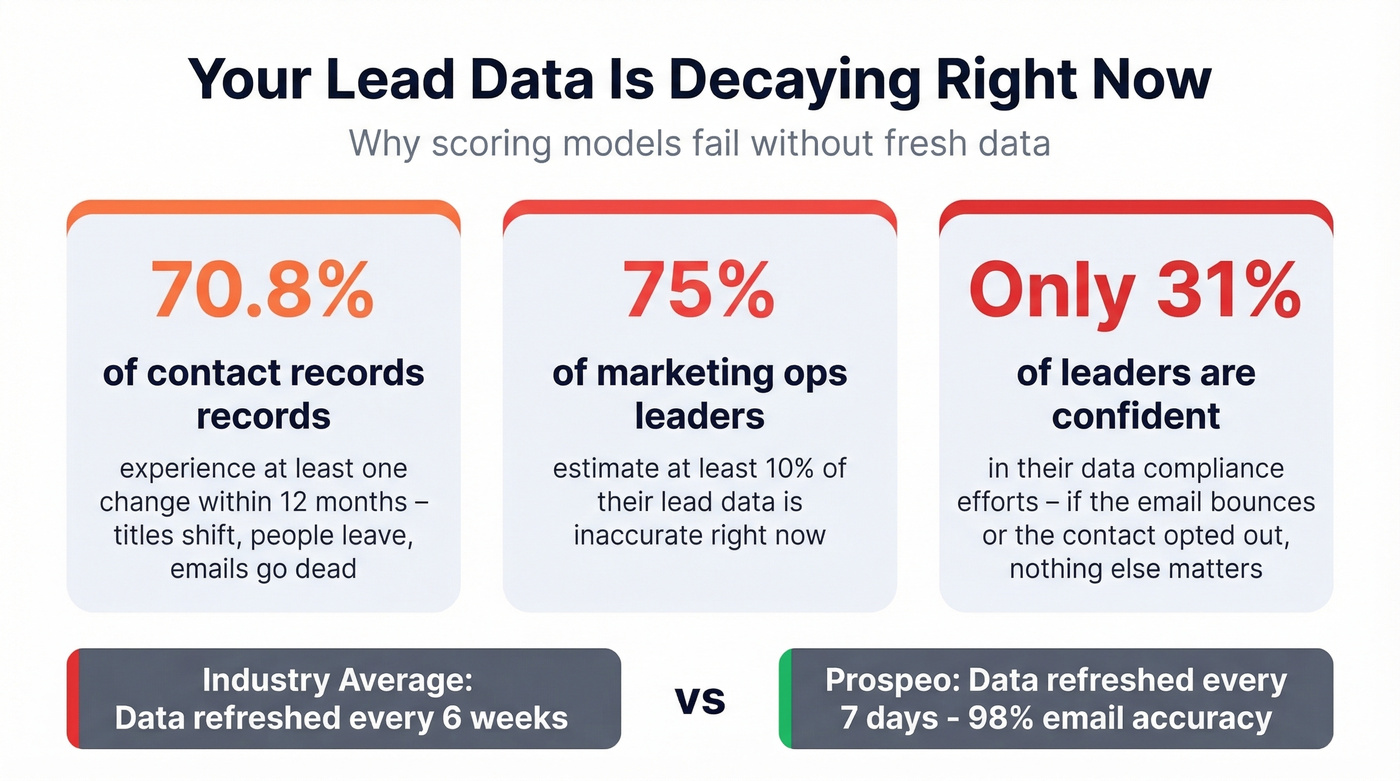

Data Quality: The Foundation Nobody Wants to Talk About

Look - a scoring model is only as good as the data it scores. 70.8% of contact data experiences at least one change within 12 months. Titles shift, people change companies, emails go dead. A 2026 survey by Integrate and Demand Metric found that 75% of marketing ops leaders estimate at least 10% of their lead data is inaccurate, and 34% have experienced reputational or financial harm from data governance lapses.

The compliance dimension matters more than most teams realize - only 31% of leaders are fully confident in their data compliance efforts. If the email bounces or the contact opted out, nothing else in your scoring model matters.

This is where data freshness becomes a real differentiator. Prospeo refreshes its database every 7 days compared to the 6-week industry average, which means 98% email accuracy and an 83% enrichment match rate. Your scoring model works with current data, not records that decayed three months ago. You can verify a list or enrich your CRM without a contract or a sales call.

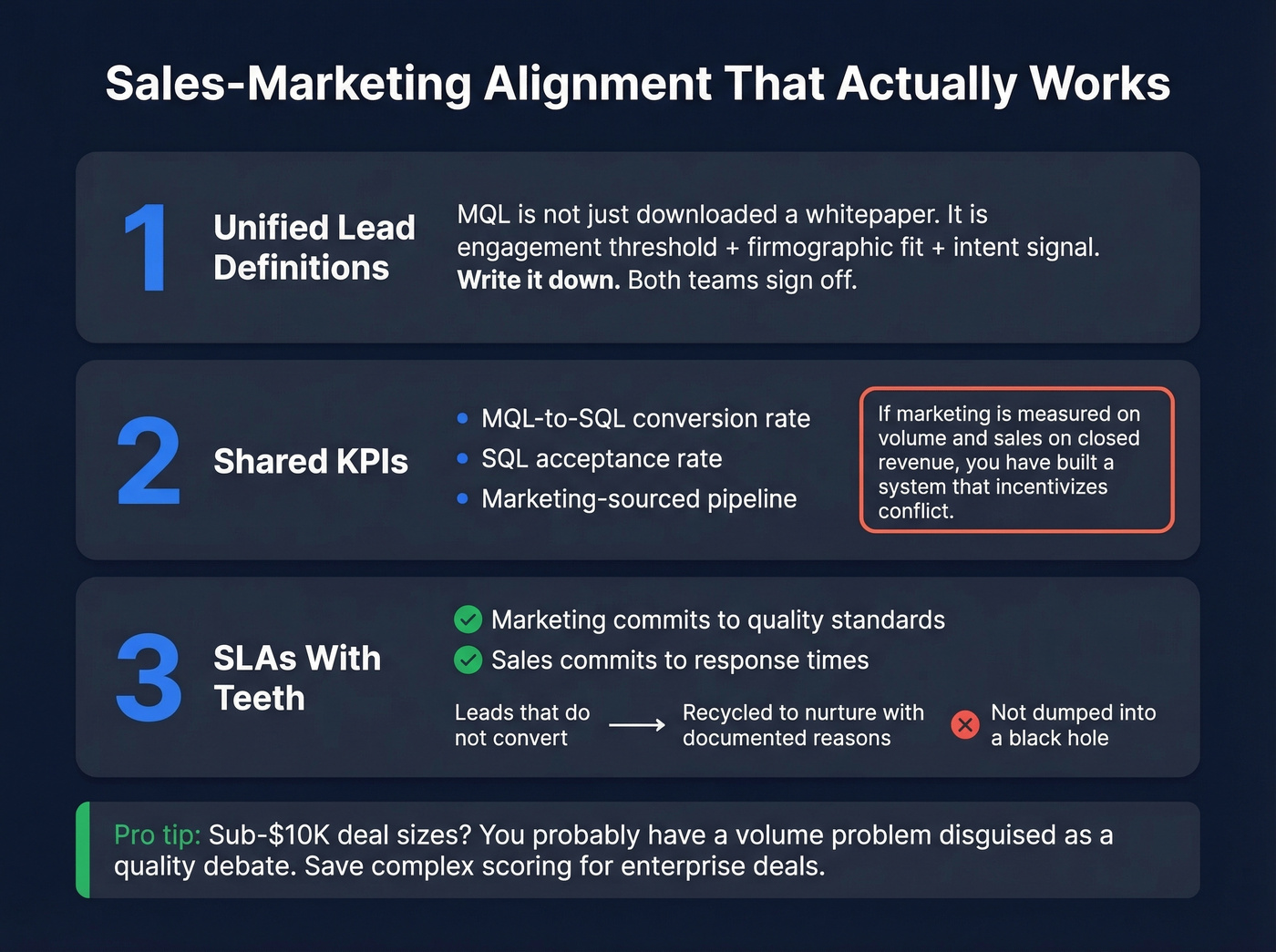

Sales-Marketing Alignment

Measurement only works when both teams agree on what they're measuring.

Unified lead definitions. An MQL isn't "downloaded a whitepaper." It's engagement threshold + firmographic fit + intent signal. Write it down. Get both teams to sign off. (If you need a starting point, use an Ideal Customer Profile rubric.)

Shared KPIs. MQL-to-SQL conversion rate, SQL acceptance rate, and marketing-sourced pipeline. If marketing is measured on volume and sales on closed revenue, you've built a system that incentivizes conflict. Calculating the average lead value by source helps both teams prioritize the channels that actually drive pipeline.

SLAs with teeth. Marketing commits to quality standards. Sales commits to response times. Leads that don't convert get recycled back to nurture with documented reasons - not dumped into a black hole.

In our experience, most teams with sub-$10K deal sizes don't actually have a lead quality problem. They have a lead volume problem disguised as a quality debate. When your average deal is small, the math favors speed and coverage over elaborate scoring. Save the 15-variable models for enterprise deals where a single bad handoff costs you a quarter.

Measurement without clean data is theater. Fix the foundation, align the teams, and the metrics follow. That's the real answer to how to measure lead quality - not more dashboards, but better data and shared accountability.

In the earlier example, 200 junk leads a month burned $1,750 of SDR time. Cut that waste at the source. Prospeo's 5-step email verification eliminates bounces, spam traps, and dead contacts before they ever hit your CRM - at $0.01 per email. Your MQL-to-SQL rate climbs when every contact is real.

Verified data turns your scoring model from theory into pipeline.

FAQ

What's the difference between MQL and SQL?

An MQL shows engagement and ICP fit - content downloads, repeat visits, firmographic match. An SQL shows buying intent: demo requests, pricing page visits, direct outreach. The gap between them is where most lead quality problems hide, and it's the first place to audit when conversion rates drop.

What's a good MQL-to-SQL conversion rate?

Top B2B teams convert 25-35% of MQLs to SQLs. The industry average is 18-22%. Consistently below 15%? Revisit your lead definitions and data quality before blaming sales follow-up - bad contact data is the silent killer most teams overlook.

How often should you refresh lead data?

Refresh contact data at least monthly - weekly is ideal. With 70.8% of records changing within 12 months, stale data drives up your bounce rate and erodes scoring accuracy.

What's the best free way to audit lead quality?

Pull your last 90 days of MQLs and calculate the MQL-to-SQL conversion rate by source. Any channel below 10% needs immediate investigation - either the targeting is off or the contact data has decayed. Prospeo's free tier (75 emails/month) lets you spot-check email validity without a commitment.
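If your CRM can export MQLs with a source and an SQL flag, the audit takes only a few lines. This sketch assumes the export is already filtered to the last 90 days; the record shape, source names, and counts are hypothetical.

```python
from collections import Counter


def mql_to_sql_by_source(mqls):
    """mqls: iterable of (source, became_sql) pairs, one per MQL."""
    total = Counter()
    converted = Counter()
    for source, became_sql in mqls:
        total[source] += 1
        if became_sql:
            converted[source] += 1
    return {source: 100 * converted[source] / total[source] for source in total}


def flag_sources(rates, floor_pct=10.0):
    """Sources converting below the floor, which need investigation."""
    return sorted(source for source, pct in rates.items() if pct < floor_pct)


# Hypothetical 90-day export: 20 webinar MQLs (5 became SQLs), 40 paid social MQLs (2 became SQLs).
export = (
    [("webinar", True)] * 5
    + [("webinar", False)] * 15
    + [("paid_social", True)] * 2
    + [("paid_social", False)] * 38
)

rates = mql_to_sql_by_source(export)
flagged = flag_sources(rates)
print(rates, flagged)
```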