How to Measure Sales Coaching Effectiveness in 2026

It's the end of the quarter. The VP asks for proof the coaching program moved the needle, and all you've got is a slide showing 200 training hours delivered and a 4.2/5 satisfaction score. That's not measurement - that's attendance tracking.

Only 16% of reps hit quota in 2024, down from 53% in 2012. And yet only 37% of organizations evaluate coaching outcomes beyond completion and satisfaction. The deeper problem: most dashboards stop at performance reporting and never feed insights forward into capacity planning or headcount decisions. That gap is costing teams real money.

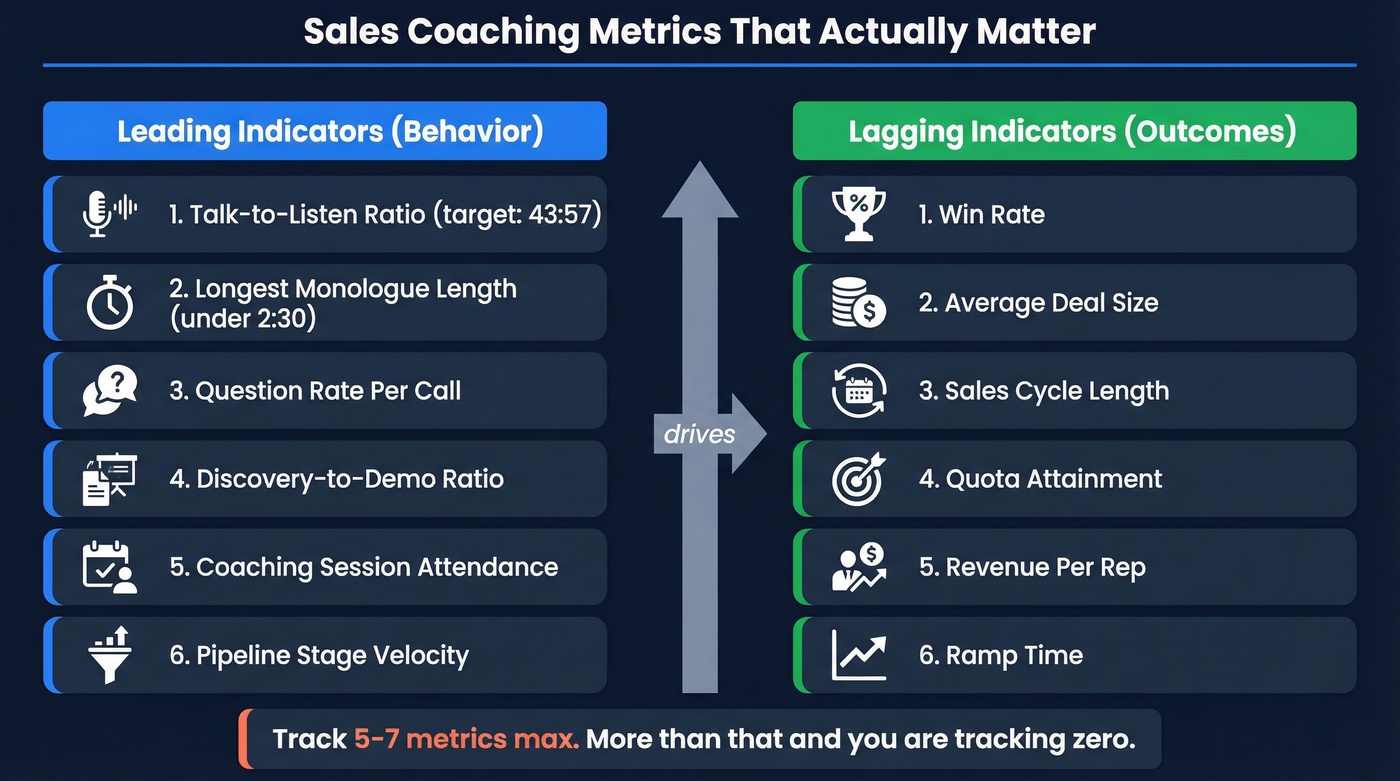

Quick version: Track 5-7 metrics max, split between leading indicators (behavior change) and lagging indicators (revenue impact). Measure the coaches, not just the reps. Build a coaching scorecard and run coached-vs-control cohorts for at least 3-6 weeks before drawing conclusions.

Metrics That Actually Matter

If you're only measuring lagging indicators, you're measuring luck, not coaching. The whole point is connecting behavior change to business outcomes - and that means tracking both sides.

| Leading Indicators (Behavior) | Lagging Indicators (Outcomes) |
|---|---|
| Talk-to-listen ratio | Win rate |
| Longest monologue length | Average deal size |
| Question rate per call | Sales cycle length |
| Discovery-to-demo ratio | Quota attainment |
| Coaching session attendance | Revenue per rep |
| Pipeline stage velocity | Ramp time |

The leading column tells you whether coaching is changing how reps sell. The lagging column tells you whether those changes produce revenue. You need both.

Gong's analysis of B2B sales calls found the highest-converting talk-to-listen ratio sits around 43:57 - reps talking 43% of the time. Longest monologue? Keep it under 2:30. If your reps are running five-minute product monologues, that's a concrete coaching target you can measure before and after intervention.
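If your conversation intelligence tool doesn't surface these two numbers directly, they're easy to derive from a speaker-labeled transcript. The sketch below is illustrative only: the `Segment` shape, the `"rep"`/`"prospect"` labels, and the rule that a monologue is an unbroken run of rep segments are all assumptions, not any vendor's export format or definition.

```python
from dataclasses import dataclass

@dataclass
class Segment:
    speaker: str    # hypothetical labels: "rep" or "prospect"
    start_s: float  # segment start, seconds from call start
    end_s: float    # segment end, seconds from call start

def talk_ratio(segments, speaker="rep"):
    """Share of total speaking time taken by `speaker` (0.0 to 1.0)."""
    total = sum(s.end_s - s.start_s for s in segments)
    spoken = sum(s.end_s - s.start_s for s in segments if s.speaker == speaker)
    return spoken / total if total else 0.0

def longest_monologue_s(segments, speaker="rep"):
    """Longest unbroken run of `speaker` talk time, in seconds."""
    longest = current = 0.0
    for s in segments:
        if s.speaker == speaker:
            current += s.end_s - s.start_s
            longest = max(longest, current)
        else:
            current = 0.0  # the other party spoke, so the run ends
    return longest

# Made-up call: the rep talks 240 of 400 seconds.
call = [
    Segment("rep", 0, 60),
    Segment("prospect", 60, 150),
    Segment("rep", 150, 240),
    Segment("rep", 240, 330),
    Segment("prospect", 330, 400),
]

ratio = talk_ratio(call)               # 0.6, above the ~0.43 benchmark
monologue = longest_monologue_s(call)  # 180.0 seconds, over the 2:30 mark
needs_coaching = ratio > 0.43 or monologue > 150
```

Run it on the same rep's calls before and after a coaching intervention and you have a before/after comparison for both metrics.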

The pattern we've seen repeatedly - and that practitioners on r/SaaSSales describe in detail - is that reps learn MEDDIC in training, then revert to endless discovery calls and feature dumps within weeks. That reversion is exactly what your leading indicators should catch. Map each metric to a specific skill area so you know which coaching intervention drove which change.

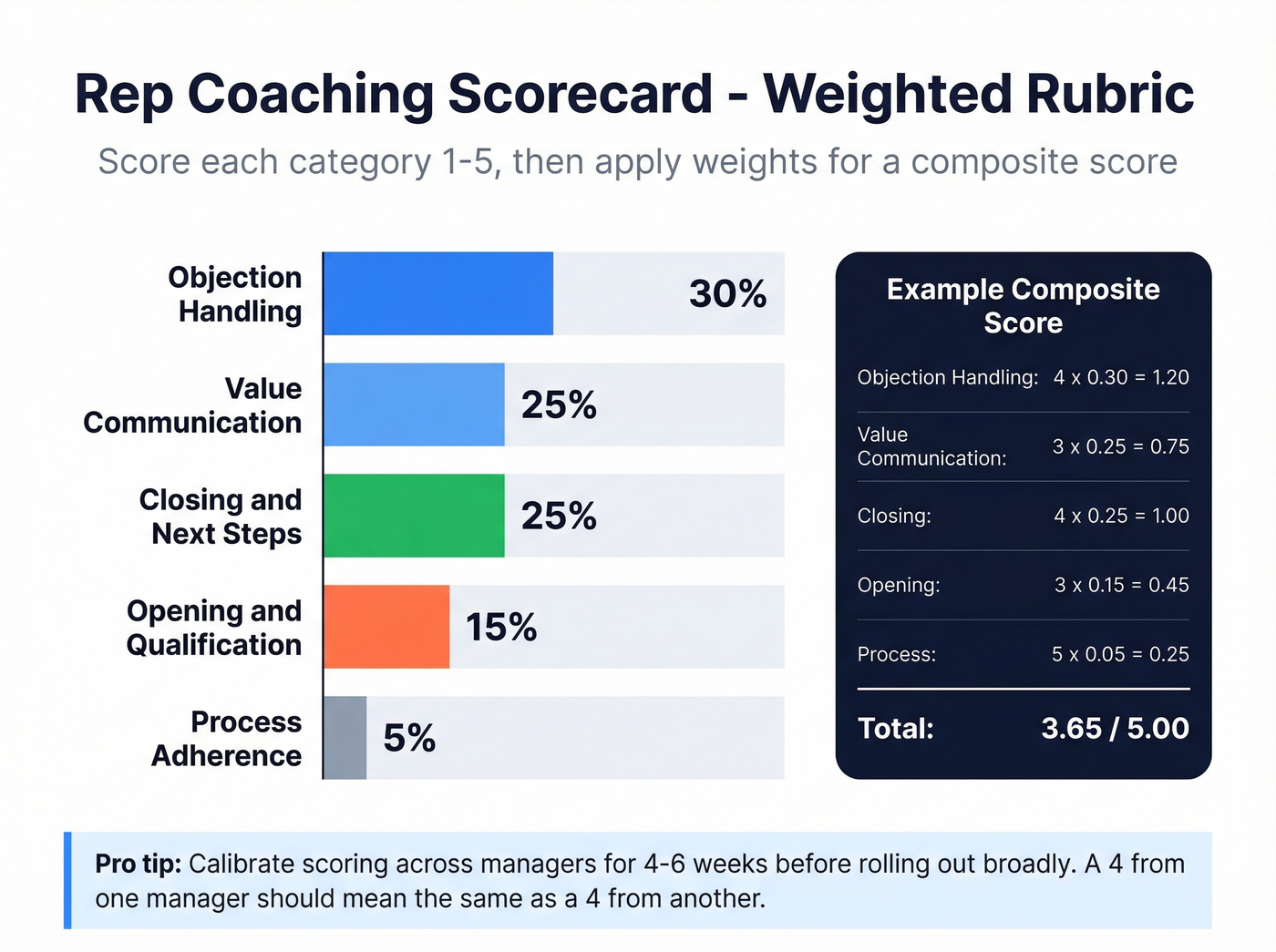

Build a Rep Coaching Scorecard

A scorecard turns subjective "that call felt good" into structured, repeatable evaluation. Here's a weighted rubric framework that works:

- Opening & Qualification - 15% weight

- Value Communication - 25% weight

- Objection Handling - 30% weight

- Closing & Next Steps - 25% weight

- Process Adherence - 5% weight

Each category gets scored on a 1-5 scale, weighted, and rolled into a single composite score. But the numbers alone aren't enough. Every scorecard entry needs qualitative notes on what went well, what to improve, and what the rep actually learned - because that narrative context is what makes the next coaching session productive. Five to seven metrics, max. Tracking 20 KPIs means you're tracking zero.
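To make the roll-up concrete, here's a minimal sketch of the composite calculation using the weights above. The dictionary keys and the `composite_score` function name are made up for illustration; the only things taken from the rubric are the five categories, their weights, and the 1-5 scale.

```python
# Weights from the rubric above; they must sum to 1.0.
WEIGHTS = {
    "opening_qualification": 0.15,
    "value_communication": 0.25,
    "objection_handling": 0.30,
    "closing_next_steps": 0.25,
    "process_adherence": 0.05,
}

def composite_score(scores):
    """Weighted average of 1-5 category scores, rounded to 2 decimals."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the rubric categories")
    for category, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{category} must be scored 1-5, got {value}")
    return round(sum(WEIGHTS[c] * scores[c] for c in WEIGHTS), 2)

# Made-up call review: strong close, weak objection handling.
example = {
    "opening_qualification": 4,
    "value_communication": 3,
    "objection_handling": 2,
    "closing_next_steps": 4,
    "process_adherence": 5,
}
score = composite_score(example)  # 0.6 + 0.75 + 0.6 + 1.0 + 0.25 = 3.2
```

Because objection handling carries the heaviest weight, a 2 there drags the composite down more than a 2 anywhere else would, which is the point of weighting.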

Pilot the scorecard with 2-3 managers for 4-6 weeks before rolling it out broadly. Calibrate scoring criteria so a "4" from one manager means the same thing as a "4" from another. We've seen teams skip calibration and end up with scores that are useless for comparison - one manager scores generously, another scores like a harsh professor, and the data tells you nothing about actual rep performance.
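One simple way to check calibration during the pilot is to have every manager score the same handful of calls and see who consistently lands above or below the group. The sketch below assumes that setup; the nested-dict input shape, the manager names, and the `manager_bias` helper are hypothetical.

```python
from statistics import mean

def manager_bias(ratings):
    """Average deviation of each manager's score from the per-call mean.

    ratings: {call_id: {manager: composite_score}}, with every manager
    scoring the same calls. Positive = generous, negative = harsh.
    """
    deviations = {}
    for scores in ratings.values():
        call_mean = mean(scores.values())
        for manager, score in scores.items():
            deviations.setdefault(manager, []).append(score - call_mean)
    return {manager: mean(d) for manager, d in deviations.items()}

# Made-up pilot data: three managers score the same two calls.
ratings = {
    "call_1": {"ana": 4, "ben": 2, "cy": 3},
    "call_2": {"ana": 5, "ben": 3, "cy": 4},
}
bias = manager_bias(ratings)  # ana: +1, ben: -1, cy: 0
```

A manager who sits a full point above or below the group on the same calls is a signal to revisit the scoring criteria together before the rollout.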

Measure the Coaches Too

Here's the thing: evaluating coaching effectiveness without evaluating the coaches is like grading students without assessing teachers. Less than 20% of a sales leader's time goes to actual coaching. Only 30% of managers coach within 24 hours of a call - and coaching within that window makes reps 2.5x more likely to improve.

Gong's coaching metrics data makes this concrete. It tracks TRUE/FALSE fields for whether a manager attended a call, listened to the recording, commented, scored it, or marked feedback as given. That's not subjective - it's binary. Either the manager coached or they didn't.

We've reviewed dozens of coaching programs where manager activity was never tracked. Every single one struggled to show ROI. Track these manager-side metrics monthly: coaching sessions per rep, time spent coaching, debrief frequency within 24 hours. If a manager runs one coaching session per month per rep, don't be surprised when behavior doesn't change.
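The 24-hour debrief metric is straightforward to compute if you can pull two timestamps per call: when it ended and when feedback was logged. This is a rough sketch under that assumption; the tuple format and the `debrief_rate` name are illustrative and not tied to any tool's export.

```python
from datetime import datetime, timedelta

def debrief_rate(calls, window=timedelta(hours=24)):
    """Share of calls that received feedback within `window` of ending.

    calls: list of (call_end, feedback_at) tuples; feedback_at is None
    when the manager never logged feedback.
    """
    if not calls:
        return 0.0
    on_time = sum(
        1
        for call_end, feedback_at in calls
        if feedback_at is not None
        and timedelta(0) <= feedback_at - call_end <= window
    )
    return on_time / len(calls)

# Made-up month for one manager: four calls ending at the same time of day.
t0 = datetime(2026, 1, 12, 15, 0)
calls = [
    (t0, t0 + timedelta(hours=3)),   # same-day debrief
    (t0, t0 + timedelta(hours=30)),  # too late
    (t0, None),                      # never coached
    (t0, t0 + timedelta(hours=24)),  # just inside the window
]
rate = debrief_rate(calls)  # 2 of 4 calls -> 0.5
```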

Your coached reps can nail every discovery call - but if they're dialing wrong numbers and bouncing emails, the lagging indicators will never move. Prospeo delivers 98% email accuracy and 125M+ verified mobiles with a 30% pickup rate, so your coaching investment actually converts to pipeline.

Fix the data before you blame the coaching.

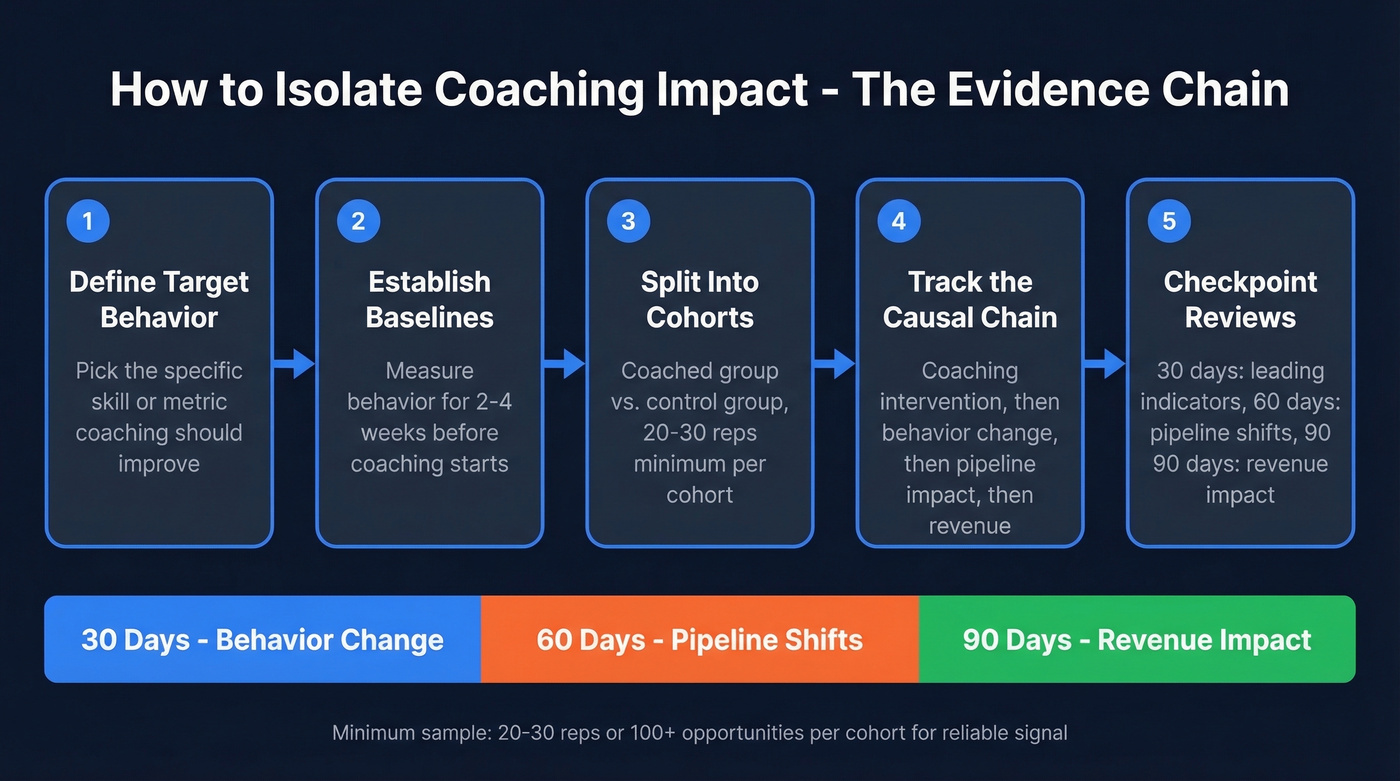

How to Isolate Coaching Impact

The number-one practitioner frustration: "I can't prove coaching caused the improvement." Fair. Attribution is hard. But you can build a preponderance-of-evidence case that holds up in a QBR.

- Define the target behavior - what specific skill or metric is coaching supposed to improve?

- Establish baselines - measure the behavior for 2-4 weeks before coaching starts. Without baselines, you have nothing to compare against.

- Split into cohorts - coached group vs. control group, divided by team or segment.

- Track the causal chain - coaching intervention, then behavior change, then pipeline impact, then revenue outcome.

- Set checkpoint reviews at 30, 60, and 90 days - leading indicators at 30, pipeline shifts at 60, revenue impact at 90.

Use 20-30 reps or 100+ opportunities per cohort as a minimum bar for a reliable signal.
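One common way to turn the baseline and cohort steps into a single number is a difference-in-differences comparison: how much the coached group changed, minus how much the control group changed over the same period. The article's steps don't prescribe this specific calculation, so treat it as one reasonable option. The per-rep win rates below are invented, and the cohorts are far smaller than the 20-30 rep minimum, purely to keep the example readable.

```python
from statistics import mean

def diff_in_diff(coached_before, coached_after, control_before, control_after):
    """Change in the coached cohort minus change in the control cohort."""
    coached_change = mean(coached_after) - mean(coached_before)
    control_change = mean(control_after) - mean(control_before)
    return coached_change - control_change

# Invented per-rep win rates: baseline period vs. post-coaching period.
coached_before = [0.20, 0.22, 0.18]
coached_after = [0.26, 0.28, 0.24]
control_before = [0.21, 0.19, 0.20]
control_after = [0.22, 0.20, 0.21]

lift = diff_in_diff(coached_before, coached_after, control_before, control_after)
# Coached rose ~6 points, control ~1 point, so roughly 5 points of lift
# that seasonality or market shifts alone don't explain.
```

Subtracting the control group's change is what strips out the factors that hit both cohorts equally, such as a pricing change or a strong quarter.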

Let's be honest: stop trying to calculate coaching ROI to the penny. You'll drive yourself crazy isolating every variable, and the spreadsheet precision is false precision anyway. Build the evidence chain instead: if leading indicators move first and lagging indicators follow in the coached cohort but not in the control group, coaching is working. That's the argument that wins budget. Teams that skip the baseline measurement phase always regret it at QBR time - we've watched it happen over and over.

What NOT to Measure

If your QBR slide shows training hours completed and average assessment scores, you're measuring inputs, not impact. Nobody got promoted for delivering 200 hours of training that didn't change win rates.

One sales trainer on r/Training summed it up perfectly - their QBR metrics were training hours, topics covered, and average assessment grades. Leadership's response: "but did it work?"

Kill these from your dashboard: training hours delivered, course completion rates, satisfaction/NPS scores, assessment grades. They measure activity, not outcomes. If you're already past the "hours delivered" stage, the ROI math is the next step.

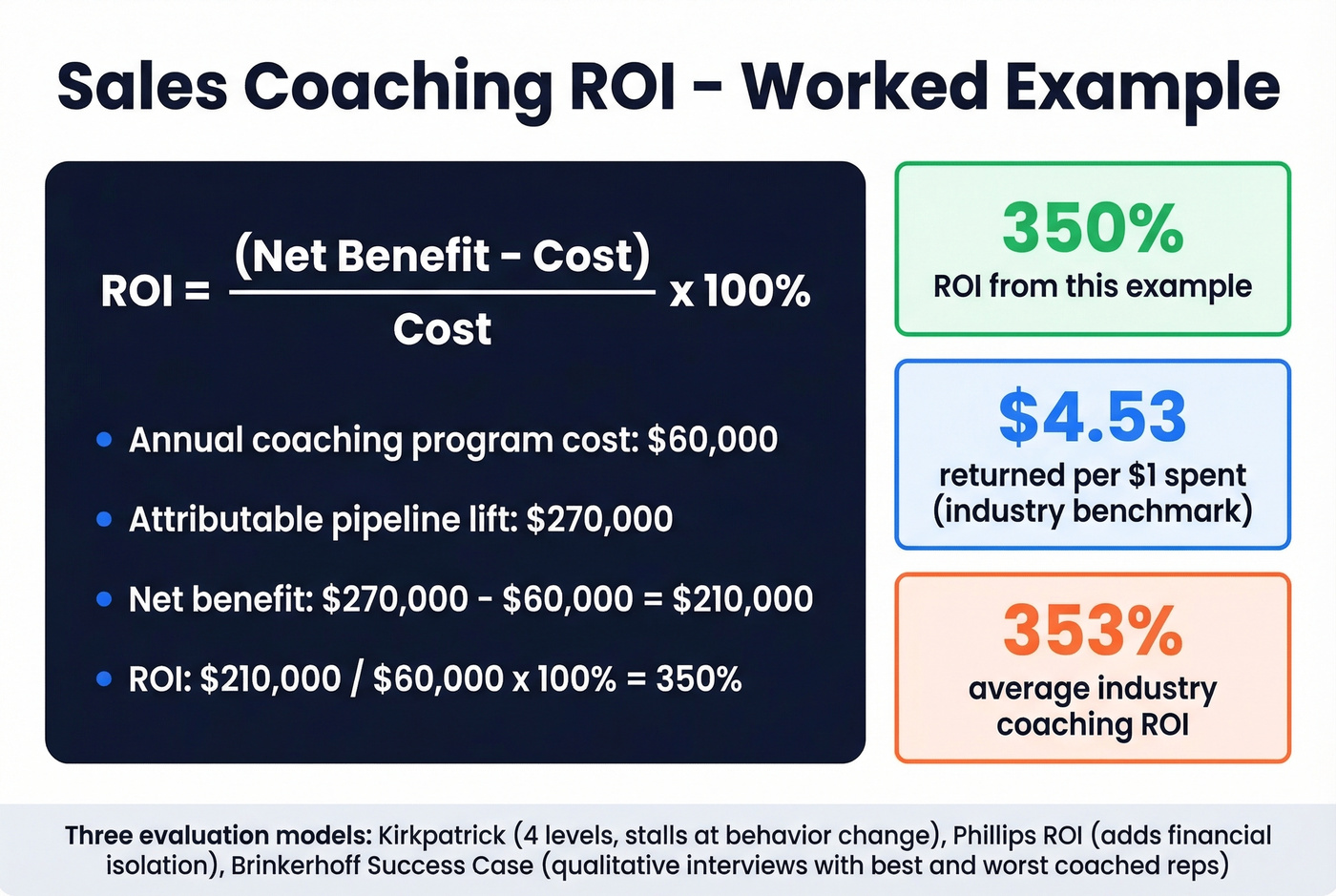

The ROI Formula

ROI = (Benefit - Cost) / Cost x 100%

Worked example: a $60K annual coaching program produces $270K in attributable pipeline lift measured via your coached-vs-control cohorts. That's ($270K - $60K) / $60K = 3.5, or a 350% ROI. Industry benchmarks land around 353% ROI - roughly $4.53 returned per $1 spent.
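The same arithmetic as a small helper, treating the $270K as the gross benefit before the program cost is subtracted (which is what the 350% figure implies). The `roi_percent` function name is made up for illustration.

```python
def roi_percent(benefit, cost):
    """ROI as a percentage: benefit net of cost, divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (benefit - cost) / cost * 100

program_cost = 60_000
attributable_lift = 270_000

roi = roi_percent(attributable_lift, program_cost)      # 350.0
returned_per_dollar = attributable_lift / program_cost  # 4.5
```

Note the difference between the two outputs: a 350% ROI means $3.50 of net gain per dollar spent, or $4.50 returned in total once you count the original dollar.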

Most teams default to Kirkpatrick's four-level model but stall at Level 3 (behavior change) because Level 4 (business results) is hard to isolate. The Phillips ROI Model adds a fifth level focused specifically on financial isolation - worth exploring if leadership demands hard dollar figures. For a lighter-weight alternative, the Brinkerhoff Success Case Method skips the spreadsheets entirely: interview your best and worst coached reps to understand what worked and what didn't. It surfaces outlier wins that averages bury, and sometimes qualitative evidence is more persuasive than a formula.

Your Coaching Measurement Stack

Start with three things: a CRM with clean data, a conversation intelligence tool, and a coaching scorecard.

Gong is the market leader for conversation intelligence. It gives you the behavioral benchmarks - talk ratio, monologue length, question rate - plus coaching activity data that tracks whether managers actually coached. Expect roughly $100-200/user/month for mid-market contracts.

HubSpot (free CRM tier; Sales Hub from ~$20/seat/month) or Salesforce (from ~$25/user/month) handles pipeline reporting: stage progression velocity, win rates by cohort, cycle length trends. This is where your lagging indicators live.

Your coaching metrics are only as good as the data underneath them. If 15% of your emails bounce and half your phone numbers are disconnected, you can't tell whether a rep's low connect rate is a skill problem or a data problem. Prospeo keeps CRM data clean with 98% email accuracy and a 7-day refresh cycle, with native Salesforce and HubSpot integrations.

If you're seeing high bounce rates, fix deliverability first (and fast): work through an email deliverability guide and get a clear view of your email bounce rate.

You're tracking talk-to-listen ratios, win rates, and ramp time - but none of those metrics improve if reps waste 4-6 hours a week chasing bad contact data. Teams using Prospeo book 26% more meetings than ZoomInfo users, giving coached reps more at-bats to apply what they've learned.

Give your coached reps the data to actually prove the ROI.

FAQ

How long before coaching metrics show results?

Allow 3-6 weeks minimum for leading indicators like talk-to-listen ratio and question rate to show a reliable signal. Lagging indicators - win rate, deal size, cycle length - need 60-90 days. Don't draw conclusions from cohorts smaller than 20-30 reps or 100 opportunities.

What's the single most important coaching metric?

There isn't one, and chasing a single number is the trap. Track the specific behavior your coaching targets (e.g., talk-to-listen ratio for discovery coaching) as the leading indicator, paired with one lagging indicator like win rate to confirm business impact. The pair matters more than either metric alone.

Do I need conversation intelligence software to measure coaching?

Conversation intelligence makes measurement dramatically easier but isn't strictly required. At minimum, you need a CRM with clean, verified contact data plus a structured coaching scorecard for consistent evaluation. The scorecard alone gets you 80% of the way there.

How do I set coaching benchmarks for my team?

Baseline your current performance across key metrics - win rate, cycle length, talk-to-listen ratio, quota attainment - before any coaching intervention begins. Those internal baselines become your benchmarks. Compare against industry data where available (e.g., Gong's 43:57 talk ratio), but your own historical trends are more actionable than generic external numbers.