Lead Scoring for Cold Email: A Model That Actually Works

Cold email reply rates have been sliding for years - from 8.5% in 2019 down to roughly 4% by 2025, based on a Mailshake analysis of 12 million emails. Blasting 10,000 messages and hoping for 50 replies stopped working a long time ago. The teams still hitting 10%+ reply rates aren't sending more emails. They're sending fewer, better-targeted ones.

And here's the kicker: outbound-sourced deals tend to be about 50% larger than inbound-sourced ones. That means every hour you spend on lead scoring for cold email pays back at a higher rate than almost anything else in your pipeline.

Better targeting starts with scoring.

The Short Version

Score cold email leads on fit first - ICP match should represent 60-70% of the total score. Layer intent signals and engagement after that, but never score on opens. Start with a simple rule-based model using 5-10 criteria before you touch anything AI-powered. And none of it works if your data is garbage. Verify your list before you score it.

Below: the model, a scoring table, and why most scoring advice falls apart for outbound.

Why Inbound Scoring Guides Fall Apart for Outbound

Here's the thing: almost every lead scoring guide you'll find is written for inbound. They tell you to score webinar attendance, pricing page visits, and content downloads. Great - except your prospects have never visited your website. They don't know you exist yet.

Cold outreach scoring requires a fundamentally different signal set. Instead of tracking what prospects do on your site, you're evaluating who they are, whether they're likely in-market, and whether you can actually reach them. The RevBlack playbook draws a useful distinction between a lead score (intent and behavior) and a lead grade (fit). Their "A95" framing - where A is a perfect fit grade and 95 is a high intent score - maps cleanly to outbound. A C25 lead? Poor-fit prospect with minimal signals. Nurture pile, not call queue.

For cold email, the grade matters far more than the score, because you simply don't have much behavioral data yet. You're working with firmographics, intent signals, and deliverability risk - not page views.

Why Opens Are a Garbage Signal

If your scoring model adds points for email opens, you're building on sand.

Apple's Mail Privacy Protection, launched September 2021, preloads tracking pixels through proxy servers - generating "opens" even when nobody reads the email. Twilio's data showed that within the first week of MPP's rollout, unique open events jumped 6.5%. That's not engagement. That's noise.

It gets worse. MPP doesn't just inflate open rates - it also masks IP addresses and makes open timestamps, geolocation, and device type unreliable. Apple's Link Tracking Protection can strip tracking parameters from links, which can break your attribution alongside your open tracking. Twilio's guidance is blunt: prioritize clicks over opens for engagement scoring, A/B tests, and dashboards.

For cold outreach, the signals that actually matter are replies, link clicks, and conversions. A "tell me more" reply from a VP is worth infinitely more than a phantom open from an Apple Mail proxy.

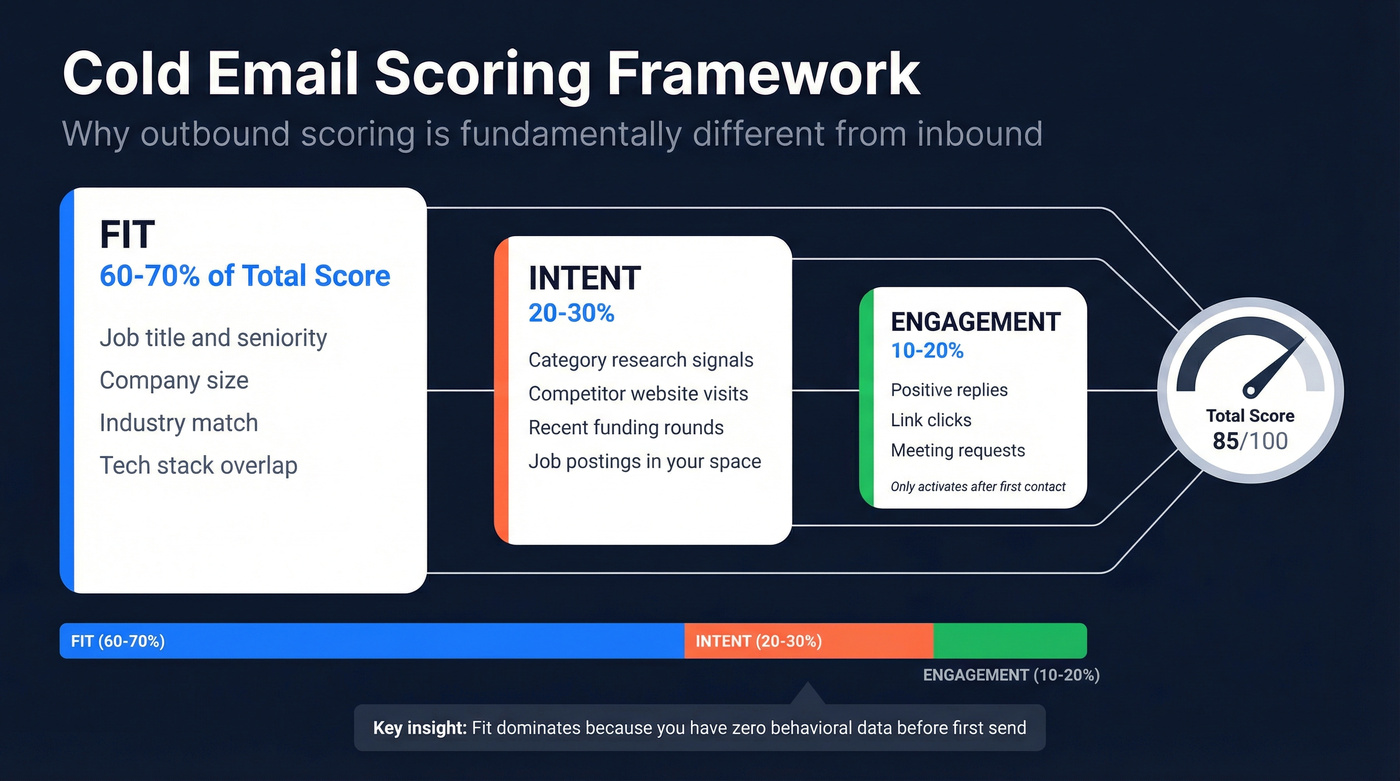

The Cold Email Scoring Framework

The Salespanel model breaks scoring into four pillars: Fit, Behavior, Intent, and Interaction. For cold email, we need to adapt these because two of the four pillars - behavior and interaction - are nearly empty when you're reaching out cold.

Here's how the weights shift for outbound.

Fit (60-70% of total score) is your ICP match: title, seniority, company size, industry, tech stack. It's the foundation because it's the one thing you can evaluate before sending a single email. A VP of Sales at a 200-person SaaS company in your target vertical starts with a massive head start over a marketing coordinator at a 15-person agency. In our experience, teams that weight fit below 50% end up chasing replies from people who'll never buy.

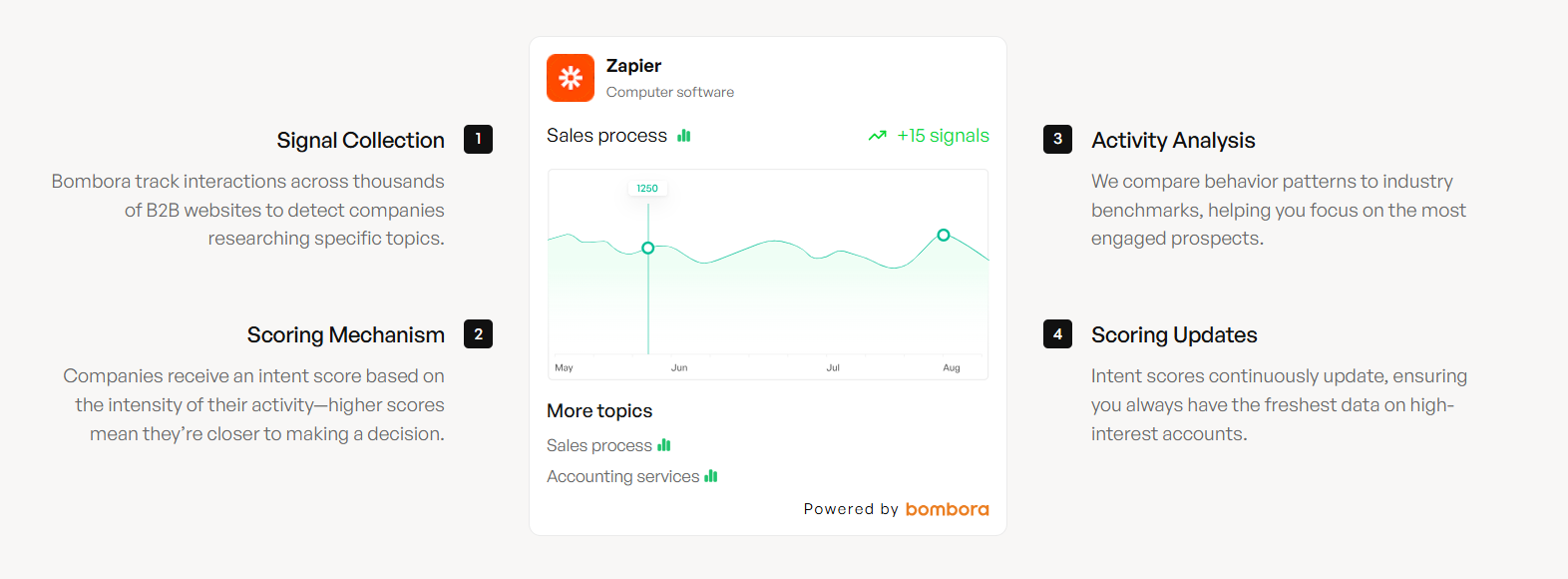

Intent (20-30%) captures whether this person or company is actively researching your category. Third-party intent data - topic-level research signals, competitor visits, relevant job postings, recent funding rounds - represents the highest-leverage input most teams ignore. Intent-driven leads convert at 20-25% versus 5-10% for traditional leads, and sales cycles close roughly 40% faster.

Engagement (10-20%) only activates after you've made contact. Positive replies, link clicks, meeting requests - these escalate a lead from "sequence continues" to "call immediately." Negative replies and unsubscribes pull the score down.

Let's make this concrete. Your SDR sent 500 emails yesterday. 18 people replied. Three of those are VP-level buyers at ICP-fit companies showing intent signals for your category. Those three get called within the hour. The other 15 replies get triaged by score. Without lead prioritization, your SDR treats all 18 the same - or worse, calls them in the order they hit the inbox.
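As a rough sketch of that triage, assuming every reply already carries a score, the snippet below ranks replies by score instead of inbox order. The `Reply` class, its field names, and the sample scores are made up for illustration; the 80-point cutoff is borrowed from the thresholds table later in this article.

```python
from dataclasses import dataclass


@dataclass
class Reply:
    name: str
    score: int
    received_order: int  # position in the inbox, 1 = earliest


def triage(replies, call_threshold=80):
    """Return (call_now, queue), both ordered by score, highest first."""
    # Ties on score fall back to inbox order so nobody waits arbitrarily.
    ranked = sorted(replies, key=lambda r: (-r.score, r.received_order))
    call_now = [r for r in ranked if r.score >= call_threshold]
    queue = [r for r in ranked if r.score < call_threshold]
    return call_now, queue


# Hypothetical sample of yesterday's replies.
replies = [
    Reply("ops manager", 55, 1),
    Reply("VP Sales", 90, 2),
    Reply("intern", -30, 3),
    Reply("Director RevOps", 85, 4),
]

call_now, queue = triage(replies)
print([r.name for r in call_now])  # ['VP Sales', 'Director RevOps']
print([r.name for r in queue])     # ['ops manager', 'intern']
```

In inbox order, the ops manager would have been called first and the VP second. Sorting by score flips that.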

Your scoring model collapses the moment bad data enters the pipeline. Prospeo's 5-step email verification delivers 98% accuracy, and every record refreshes every 7 days - so the fit scores you assign today are still valid next week. Layer in Bombora intent data across 15,000 topics to fill that 20-30% intent pillar without guessing.

Stop scoring leads on stale data. Start with contacts you can actually reach.

Build Your Model: The Scoring Table

Real talk: most teams overthink this. A lead hitting all positive firmographic signals and showing intent would score around 85-100 - that's an immediate call. A mid-level manager at a wrong-industry company with a catch-all email lands around -10 to +5. Disqualify and move on.

Pre-Send Signals (Fit + Deliverability)

These are the signals you evaluate before a single email goes out. They determine whether a lead is worth contacting at all.

| Signal | Points |
|---|---|
| CEO / VP title | +30 |
| Director title | +25 |
| Manager title | +15 |
| Individual contributor | +5 |
| ICP company size match | +25 |
| Wrong industry | -20 |
| Student / intern title | -50 |
| Verified email | +10 |
| Catch-all domain | -5 |
| Role-based email (info@, sales@) | -10 |
| Previous bounce | -25 |
| Personal email domain | -15 |
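If you'd rather keep these rules in code than in a spreadsheet, here is a minimal sketch. The point values come straight from the table; the signal keys (`exec_title`, `icp_company_size`, and so on) are names invented for this example, so rename them to match whatever your enrichment data returns.

```python
# Point values copied from the pre-send table; the keys are illustrative names.
PRE_SEND_POINTS = {
    "exec_title": 30,              # CEO / VP title
    "director_title": 25,
    "manager_title": 15,
    "individual_contributor": 5,
    "icp_company_size": 25,
    "wrong_industry": -20,
    "student_or_intern": -50,
    "verified_email": 10,
    "catch_all_domain": -5,
    "role_based_email": -10,       # info@, sales@
    "previous_bounce": -25,
    "personal_email_domain": -15,
}


def pre_send_score(signals):
    """Sum the points for each distinct signal present on a lead."""
    present = set(signals)  # a signal counts once, even if listed twice
    unknown = present - set(PRE_SEND_POINTS)
    if unknown:
        raise KeyError(f"unknown signals: {sorted(unknown)}")
    return sum(PRE_SEND_POINTS[s] for s in present)


print(pre_send_score(["director_title", "icp_company_size", "verified_email"]))  # 60
print(pre_send_score(["manager_title", "wrong_industry", "catch_all_domain"]))   # -10
```

Raising on unknown signals is a deliberate choice here: a typo in a signal name should fail loudly rather than quietly score zero.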

Post-Send Signals (Engagement + Intent)

These activate once you've started outreach or when third-party data reveals buying behavior.

| Signal | Points |
|---|---|
| Positive reply | +20 |
| "Tell me more" reply | +15 |
| "Not interested" reply | -10 |
| Link click | +10 |
| Unsubscribe | -25 |
| Researching your category | +20 |
| Competitor website visit | +15 |
| Recent funding round | +10 |
| Job posting in your space | +10 |

Decay rule: Subtract 10 points per week after 3 touches with no reply.
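Here is one way the post-send points and the decay rule could fit together. Treat it as a sketch under a stated assumption: the decay clock starts once the third touch has gone unanswered, and any reply (positive or negative) stops it. The signal keys and function names are invented for this example.

```python
# Point values copied from the post-send table; the keys are illustrative names.
POST_SEND_POINTS = {
    "positive_reply": 20,
    "tell_me_more_reply": 15,
    "not_interested_reply": -10,
    "link_click": 10,
    "unsubscribe": -25,
    "researching_category": 20,
    "competitor_site_visit": 15,
    "recent_funding_round": 10,
    "job_posting_in_space": 10,
}

REPLY_SIGNALS = {"positive_reply", "tell_me_more_reply", "not_interested_reply"}


def decay_penalty(touches_sent, silent_weeks, replied):
    """Points to subtract: 10 per silent week after 3 unanswered touches.

    Assumption: silent_weeks counts full weeks since the third touch.
    """
    if replied or touches_sent < 3:
        return 0
    return 10 * max(silent_weeks, 0)


def current_score(pre_send, signals, touches_sent=0, silent_weeks=0):
    """Pre-send score plus post-send points, minus any decay."""
    present = set(signals)
    replied = bool(present & REPLY_SIGNALS)
    gained = sum(POST_SEND_POINTS[s] for s in present)
    return pre_send + gained - decay_penalty(touches_sent, silent_weeks, replied)


intent = ["recent_funding_round", "researching_category"]
print(current_score(60, intent))                                   # 90
print(current_score(60, intent, touches_sent=3, silent_weeks=2))   # 70
print(current_score(60, ["positive_reply"], touches_sent=3, silent_weeks=2))  # 80
```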

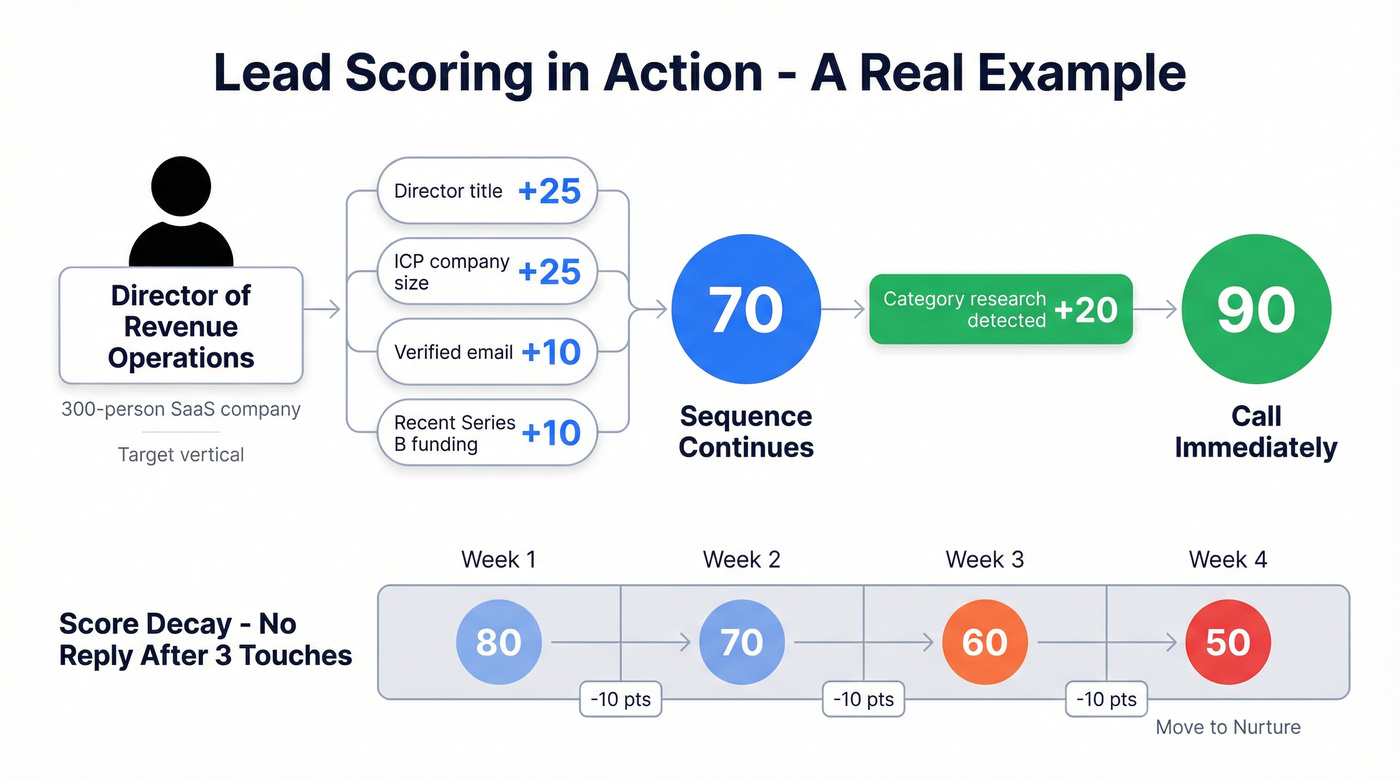

A Worked Example

Say you find a Director of Revenue Operations at a 300-person SaaS company in your target vertical. She has a verified email and her company just raised a Series B.

Starting score: Director title (+25) + ICP company size (+25) + verified email (+10) + recent funding (+10) = 70. That's solid "sequence continues" territory.

Her company is also researching your category on third-party review sites (+20). New score: 90. Call immediately.

Now imagine she doesn't reply after three touches. Week one: 80. Week two: 70. Week three: 60. By week four she's at 50, the floor of the "sequence continues" band. One more silent week drops her to 40 - your signal to move her to nurture and stop burning sequence steps.
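The arithmetic in this example is easy to check by hand, but a few lines of code make it repeatable for other leads. The variable names are ours; the point values are the ones from the scoring tables.

```python
# Points taken from the scoring tables above.
fit_and_deliverability = 25 + 25 + 10  # Director title, ICP company size, verified email
funding = 10                           # recent funding round
category_research = 20                 # researching your category

starting_score = fit_and_deliverability + funding
with_intent = starting_score + category_research

# Decay rule: 10 points per silent week after three unanswered touches.
weekly_scores = [with_intent - 10 * week for week in range(1, 5)]

print(starting_score)  # 70
print(with_intent)     # 90
print(weekly_scores)   # [80, 70, 60, 50]
```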

You don't need AI for this. A spreadsheet with these rules will outperform a poorly trained predictive model every time, especially when your dataset is small. We've watched teams burn six months building predictive models on 200 data points. Start with rules.

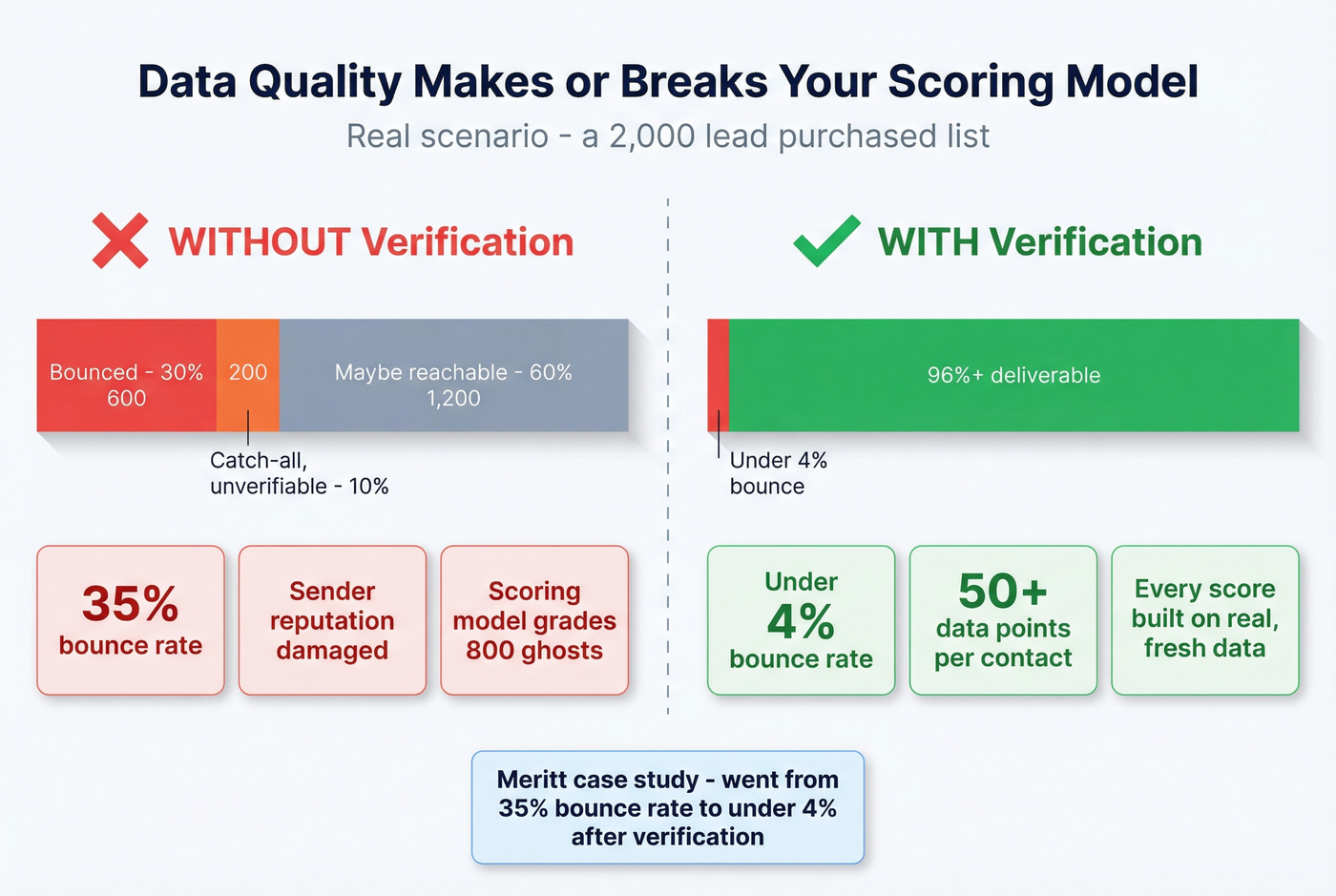

Data Quality Is Step Zero

Let's run a scenario. You buy a list of 2,000 decision-makers. 600 bounce. 200 are catch-all domains you can't verify. Your scoring model just assigned points to 800 ghosts. Every downstream metric - reply rate, conversion rate, score-to-meeting correlation - is now corrupted by bad data.

This is why email verification isn't step five in your scoring workflow. It's step zero.
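To put "step zero" in code terms, the sketch below gates a list on deliverability before any scoring happens. It assumes each record carries an `email_status` field set by a verification pass run before sending; that field name and its values (`valid`, `bounced`, `catch_all`) are hypothetical, so map them to whatever your verification tool actually returns. The counts mirror the scenario above.

```python
def split_by_deliverability(leads):
    """Separate leads that are safe to score from those to hold back."""
    scoreable = [lead for lead in leads if lead["email_status"] == "valid"]
    held_back = [lead for lead in leads if lead["email_status"] != "valid"]
    return scoreable, held_back


# The 2,000-contact list from the scenario, reduced to the one field we need.
purchased_list = (
    [{"email_status": "valid"}] * 1200
    + [{"email_status": "bounced"}] * 600
    + [{"email_status": "catch_all"}] * 200
)

scoreable, held_back = split_by_deliverability(purchased_list)
print(len(scoreable), len(held_back))  # 1200 800
print(f"{len(held_back) / len(purchased_list):.0%} of the list should not be scored yet")
# 40% of the list should not be scored yet
```

Holding back catch-all addresses instead of deleting them is a judgment call: some will turn out to be real, so you may prefer to score them later with the catch-all penalty from the table.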

Prospeo runs a 5-step verification process with catch-all handling, spam-trap removal, and honeypot filtering - 98% email accuracy on a 7-day data refresh cycle, so you're not scoring against stale records from people who changed jobs two months ago. The enrichment returns 50+ data points per contact, which directly powers your Fit Grade: title, seniority, company size, industry, tech stack, funding status. One customer, Meritt, went from a 35% bounce rate to under 4% after running their list through verification - that's the difference between a scoring model that works and one that's grading phantoms.

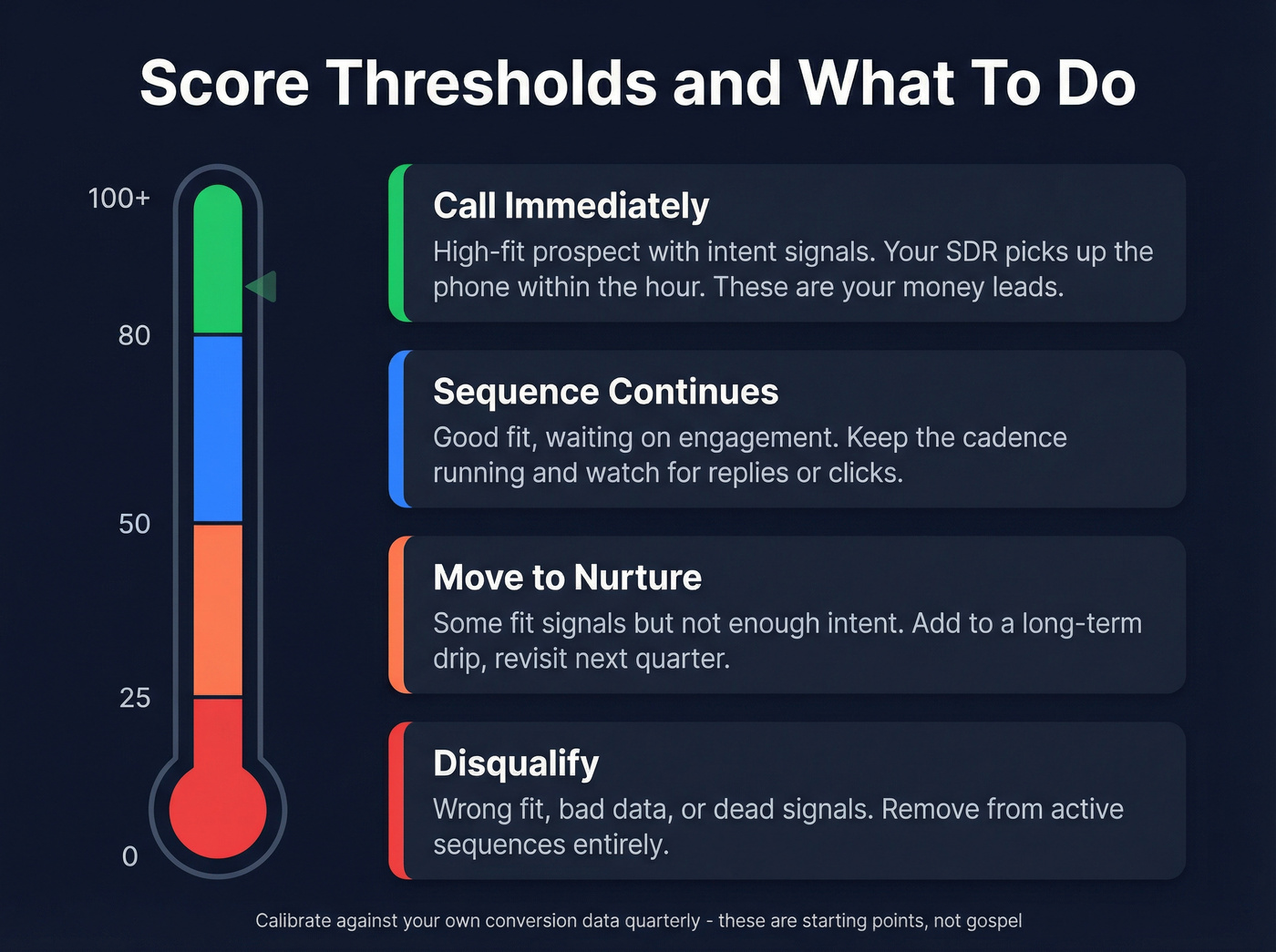

Thresholds and Handoff Rules

A score without a threshold is just a number.

| Score Range | Action |
|---|---|
| 80+ | Call immediately |
| 50-79 | Sequence continues |
| 25-49 | Move to nurture |
| Below 25 | Disqualify |

These thresholds aren't gospel - calibrate them against your actual conversion data. The RevBlack playbook benchmarks typical MQL-to-SQL conversion at 25-35%, with high-alignment organizations hitting 40-50%. If your top-scored leads aren't converting meaningfully better than mid-tier ones, your weights are wrong.
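The threshold table translates directly into a small lookup. This is a minimal sketch using the default cutoffs from the table; the function name `action_for` is ours, and you'd swap in your own cutoffs once you've calibrated.

```python
def action_for(score):
    """Map a lead score to the handoff action from the threshold table."""
    if score >= 80:
        return "Call immediately"
    if score >= 50:
        return "Sequence continues"
    if score >= 25:
        return "Move to nurture"
    return "Disqualify"


for score in (90, 70, 50, 40, 10):
    print(score, action_for(score))
# 90 Call immediately
# 70 Sequence continues
# 50 Sequence continues
# 40 Move to nurture
# 10 Disqualify
```

Note the boundary behavior: a lead sitting at exactly 50 still belongs to the sequence, and it takes a score of 49 or lower to land in nurture.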

Skip the software if you're small. For teams sending fewer than 1,000 emails per month, a simple A/B/C tier in a spreadsheet is all you need. The scoring table above, a few conditional formatting rules in Google Sheets, and a weekly 15-minute review will outperform any tool you're not actually using consistently.

Review quarterly. Pull a report comparing score tiers against reply rates, meetings booked, and closed deals. Adjust weights based on what you find. The model isn't something you build once - it's a living system that gets sharper with every quarter of data.
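A quarterly review can be as simple as grouping outcomes by score tier. The sketch below assumes you can export one `(score, booked_meeting)` pair per lead from your CRM or sending tool; the sample data is invented purely to show the shape of the report.

```python
from collections import defaultdict


def tier(score):
    """Bucket a score into the bands from the threshold table."""
    if score >= 80:
        return "80+"
    if score >= 50:
        return "50-79"
    if score >= 25:
        return "25-49"
    return "below 25"


def meeting_rate_by_tier(outcomes):
    """outcomes: iterable of (score, booked_meeting) pairs."""
    contacted = defaultdict(int)
    booked = defaultdict(int)
    for score, booked_meeting in outcomes:
        band = tier(score)
        contacted[band] += 1
        booked[band] += int(booked_meeting)
    return {band: booked[band] / contacted[band] for band in contacted}


# Invented sample data, not real benchmarks.
outcomes = [
    (90, True), (85, True), (82, False), (86, False),
    (70, False), (60, True), (55, False), (52, False),
    (30, False),
]

for band, rate in meeting_rate_by_tier(outcomes).items():
    print(f"{band}: {rate:.0%}")
# 80+: 50%
# 50-79: 25%
# 25-49: 0%
```

The same grouping works for reply rates or closed deals: swap the boolean for whichever outcome you're reviewing.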

Tools That Support Outbound Scoring

You don't need a single platform to do everything. Here's how the tooling breaks down by function.

Data quality and enrichment: Prospeo handles verification, enrichment, and Bombora intent data across 15,000 topics at ~$0.01/email with 98% accuracy. That means you can layer buyer intent signals directly into your Fit + Intent scoring without a separate vendor.

CRM scoring: HubSpot's lead scoring tool supports rule-based and predictive scoring in Professional and Enterprise tiers. Salesforce offers similar capabilities with AI-assisted prioritization, though pricing varies by edition.

Sending platforms with reply tracking: Apollo starts at $49/user/month and includes basic lead scoring. Instantly starts at $37/month with solid deliverability infrastructure. Lemlist starts at $32/user/month with strong personalization features. Smartlead from $39/month works well for agencies running multiple client inboxes. All four track replies, which feed your Engagement score.

Intent data: Bombora as a standalone typically runs $25K+/year - skip it unless you're running 50K+ emails per month, because the ROI math doesn't work below that volume. For smaller teams, bundled intent data through your enrichment platform is the smarter play.

Companies using lead scoring report up to 77% higher ROI and 50% revenue growth compared to those who don't score at all. The tooling doesn't need to be expensive - it needs to be consistent.

FAQ

How many scoring criteria should I start with?

Five to ten rules. Add complexity after six months of conversion data shows which signals actually predict deals. A spreadsheet with clear rules beats a broken AI model every time.

Should I use AI or predictive scoring?

Not until you have 500+ closed-loop outcomes to train on. Rule-based models outperform AI when your dataset is small - most teams under 5,000 emails/month should stick with manual weights.

What does bad calibration look like?

Your 80+ leads convert at 12% and your 50-79 leads convert at 10%. That two-point gap means your model isn't differentiating - your weights need surgery, not a tweak. Aim for at least a 3x conversion difference between your top and mid tiers.
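That check is a one-line ratio. A quick sketch, with function names of our own choosing and the 3x target from the answer above as the default:

```python
def tier_lift(top_rate, mid_rate):
    """How many times better the top tier converts than the mid tier."""
    if mid_rate <= 0:
        raise ValueError("mid-tier conversion rate must be positive")
    return top_rate / mid_rate


def is_well_calibrated(top_rate, mid_rate, min_lift=3.0):
    """True when the top tier clears the target lift over the mid tier."""
    return tier_lift(top_rate, mid_rate) >= min_lift


print(f"{tier_lift(0.12, 0.10):.1f}x")  # 1.2x
print(is_well_calibrated(0.12, 0.10))   # False
print(is_well_calibrated(0.36, 0.10))   # True
```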

What's a good free tool for cold email lead scoring?

Google Sheets with conditional formatting handles rule-based scoring for teams sending under 2,000 emails per month. Prospeo's free tier gives you 75 email verifications plus 100 Chrome extension credits monthly - enough to validate a small list and test your scoring model before investing in paid tooling.