Lead Scoring Methods That Actually Predict Revenue

Only 27% of leads sent to sales are actually qualified. The other 73% eat your reps' time, tank morale, and inflate your cost per opportunity. Most teams try to fix this with lead scoring - then pick the wrong method, assign arbitrary points to random actions, watch sales ignore the scores entirely, and conclude the whole exercise was a waste.

The problem isn't lead scoring. It's how most teams build it.

The Short Version

Start with a hybrid of explicit (fit) and implicit (behavioral) scoring on a 100-point scale. Set your MQL threshold between 60 and 80 points. Add score decay rules from day one - not "eventually." Graduate to predictive scoring only after you have 1,000+ historical conversions to train a model on. And the part nobody emphasizes enough: the single biggest variable isn't your model. It's whether the data feeding it is accurate.

Why Your Scoring Methodology Matters

The numbers aren't subtle. Companies using lead scoring see 138% ROI versus 78% without it. B2B teams report a 77% lift in lead generation ROI when scoring is in place. Machine-learning models push conversion rates 75% higher than manual prioritization.

Despite this, only 44% of organizations actually use lead scoring. If you're in the other 56%, you're prioritizing by gut feel, recency, or whoever happens to be at the top of the CRM view. That's pipeline left on the table.

When scoring isn't worth it: if you're getting fewer than 100 leads per month, have a sub-two-week sales cycle, or already convert at 30%+, a spreadsheet and common sense will outperform any scoring model. Scoring solves a prioritization problem - if you don't have one, skip it.

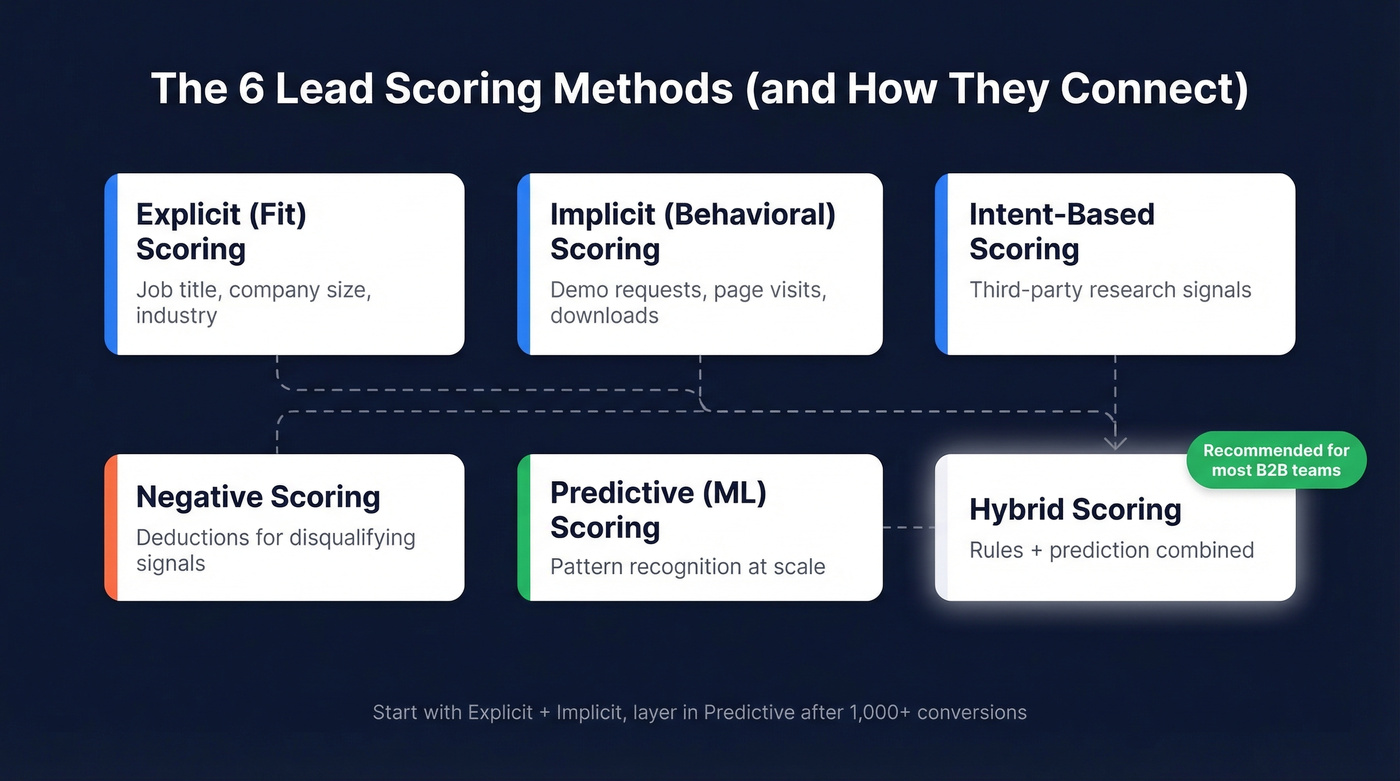

Types of Lead Scoring Models

Traditional Scoring (Explicit Fit)

Traditional scoring measures how closely a lead matches your ideal customer profile - the static attributes that don't change based on behavior: job title, company size, industry, annual revenue, geography, tech stack. A VP of Sales at a 500-person SaaS company gets more points than an intern at a nonprofit because the former is far more likely to buy.

Think of explicit scoring as your first filter. It answers "could this person ever be a customer?" before you look at what they've done on your site. This rule-based approach has been the foundation of lead qualification for decades, and it's still the right starting point for most teams today. It's simple, transparent, and you can build it in a spreadsheet over lunch.

Implicit (Behavioral) Scoring

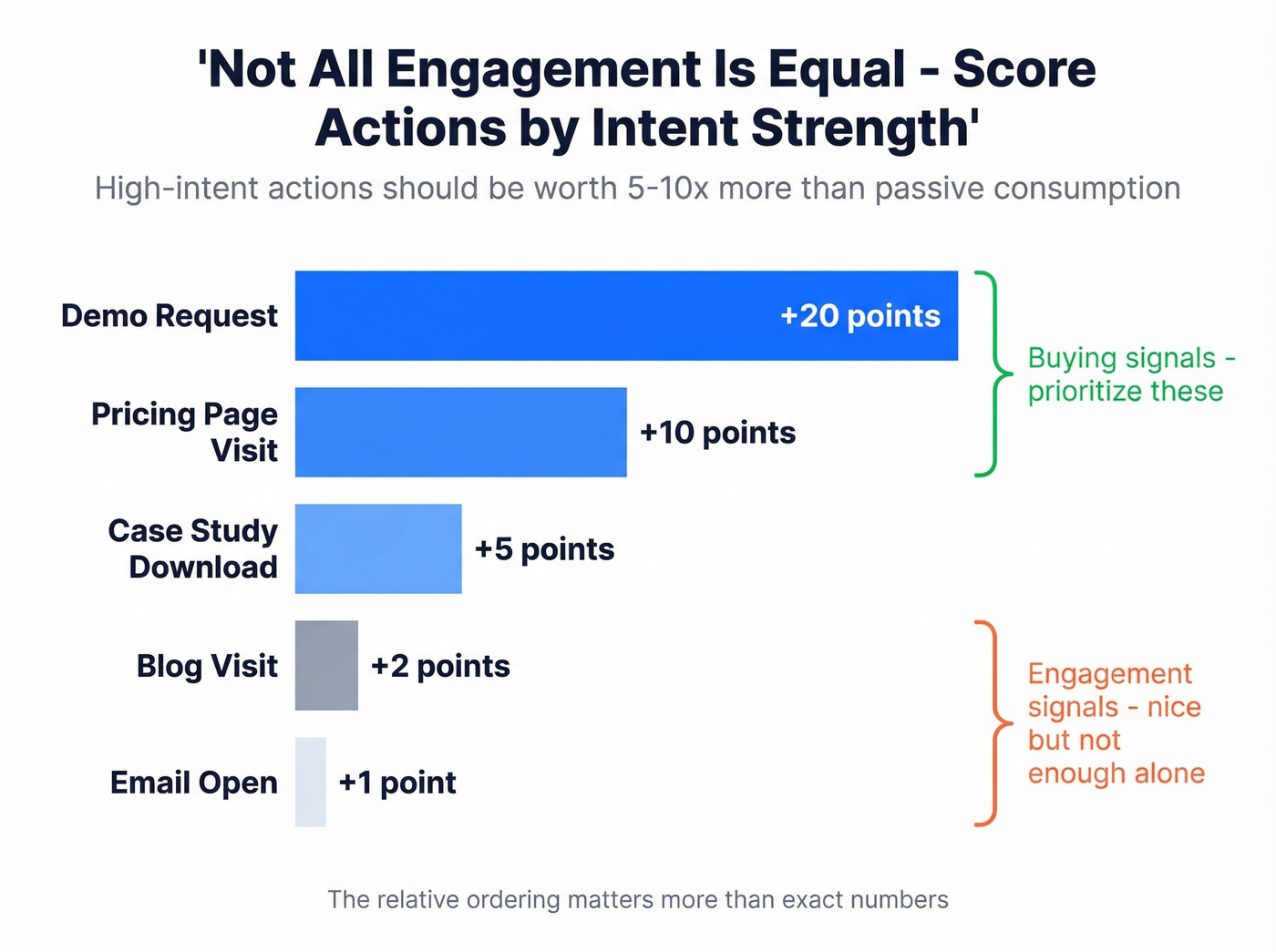

Implicit scoring tracks what a lead actually does - and not all actions are equal. A demo request is worth far more than a blog visit. A pricing page view signals buying intent. An email click shows engagement but not necessarily urgency.

Weight actions by intent strength. A reasonable starting framework:

| Action | Points |
|---|---|
| Demo request | +20 |
| Pricing page visit | +10 |
| Case study download | +5 |
| Blog visit | +2 |
| Email open | +1 |

The exact numbers matter less than the relative ordering - high-intent actions should be worth 5-10x more than passive consumption. Most failed scoring models treat all engagement equally. That's the fastest way to flood sales with leads who read one blog post and never think about you again.
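A minimal sketch of implicit scoring in Python, assuming your analytics tool can export each lead's activity as a list of event names. The event keys are hypothetical; the weights are the starting framework from the table above.

```python
from collections import Counter

# Hypothetical event names mapped to the starting point values above.
ACTION_POINTS = {
    "demo_request": 20,
    "pricing_page_visit": 10,
    "case_study_download": 5,
    "blog_visit": 2,
    "email_open": 1,
}


def behavioral_score(events: list[str]) -> int:
    """Sum intent-weighted points for a lead's events; untracked events score 0."""
    counts = Counter(events)
    return sum(ACTION_POINTS.get(event, 0) * n for event, n in counts.items())


# Seven low-intent touches still score well below two high-intent ones.
casual_reader = ["blog_visit"] * 4 + ["email_open"] * 3
evaluator = ["pricing_page_visit", "demo_request"]
print(behavioral_score(casual_reader))  # 11
print(behavioral_score(evaluator))      # 30
```

Repeated actions accumulate without a cap here. You may want to cap points per action type so that a burst of blog visits can't outscore a demo request.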

Intent-Based Scoring

Third-party intent data adds a dimension most teams overlook: what prospects are researching outside your website. Surge signals - sudden spikes in topic consumption across the web - indicate a company is actively evaluating solutions in your category.

This is especially powerful for outbound, where you're reaching people who haven't visited your site yet. We've found that layering intent signals onto fit and behavioral data catches buying committees weeks before they'd ever fill out a form. Prospeo tracks 15,000 intent topics and maps those signals onto verified contact data, so you can score leads on whether their company is actively researching your category right now - not just whether they clicked an email last Tuesday.
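Vendors use their own methods to compute surge, so the following is only an illustration of the idea, not how Bombora or Prospeo calculate it: flag a topic as surging when the latest week's research activity is a multiple of its recent baseline. The weekly counts and the 2x multiplier are assumptions.

```python
from statistics import mean


def is_surging(weekly_counts: list[int], multiplier: float = 2.0) -> bool:
    """Return True when the latest week's topic activity is at least
    `multiplier` times the average of the preceding weeks."""
    if len(weekly_counts) < 2:
        raise ValueError("need at least one baseline week plus the latest week")
    *baseline, latest = weekly_counts
    base = mean(baseline)
    if base == 0:
        return latest > 0  # any activity after a silent baseline counts as a spike
    return latest >= multiplier * base


# Hypothetical weekly content reads on one topic for one account.
print(is_surging([4, 5, 3, 4, 12]))  # True: 12 vs. a baseline of 4
print(is_surging([4, 5, 3, 4, 6]))   # False
```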

Negative Scoring

Deductions are just as important as additions. Without them, stale leads accumulate points indefinitely and clog your pipeline.

Common deduction triggers:

- Unsubscribed from emails: -15 to -20
- Competitor domains like @competitor.com: -50 or auto-disqualify
- Bounced email address: -10
- No engagement in 30+ days: -10
- Job title mismatch (student, intern, retired): -20 to -30
- Free email domain for B2B: -5

Skip negative scoring and you'll wonder why your "hottest" leads include people who unsubscribed three months ago.
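Deductions can be layered on after positive points are added. A minimal sketch, assuming each lead is a dict with the hypothetical keys shown; it uses the smaller deduction from each range above and auto-disqualifies competitor domains instead of subtracting 50.

```python
from typing import Optional

# Hypothetical domain and title lists; replace with your own.
COMPETITOR_DOMAINS = {"competitor.com"}
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com"}
MISMATCHED_TITLE_WORDS = {"student", "intern", "retired"}


def apply_deductions(score: int, lead: dict) -> Optional[int]:
    """Apply negative scoring; return None when the lead is auto-disqualified."""
    domain = lead["email"].rsplit("@", 1)[-1].lower()
    if domain in COMPETITOR_DOMAINS:
        return None  # auto-disqualify rather than subtracting 50
    if lead.get("unsubscribed"):
        score -= 15
    if lead.get("bounced"):
        score -= 10
    if lead.get("days_since_engagement", 0) >= 30:
        score -= 10
    # Match whole words so "International Sales" is not mistaken for "intern".
    if MISMATCHED_TITLE_WORDS & set(lead.get("title", "").lower().split()):
        score -= 20
    if domain in FREE_EMAIL_DOMAINS:
        score -= 5
    return score


lead = {"email": "sam@gmail.com", "title": "Marketing Intern",
        "unsubscribed": True, "days_since_engagement": 45}
print(apply_deductions(50, lead))                           # 0
print(apply_deductions(50, {"email": "x@Competitor.com"}))  # None
```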

Predictive Scoring With ML

Modern predictive scoring uses machine learning to find patterns humans miss. The dominant architecture in production is gradient-boosted decision trees - XGBoost, LightGBM, CatBoost - which typically achieve F1 scores in the 0.72-0.85 range. Teams using predictive models report roughly 30% higher sales productivity and 50% less time spent on unqualified leads.
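For readers less familiar with the metric: F1 is the harmonic mean of precision (of the leads the model flags, how many convert) and recall (of the leads that convert, how many the model flags). A small sketch with hypothetical hold-out counts:

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Precision, recall, and F1 for a binary 'will convert' prediction."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical hold-out set: 80 converters flagged, 20 false alarms, 30 converters missed.
p, r, f1 = precision_recall_f1(tp=80, fp=20, fn=30)
print(f"precision={p:.2f} recall={r:.2f} f1={f1:.2f}")  # precision=0.80 recall=0.73 f1=0.76
```

F1 is more informative than raw accuracy when converters are a small share of leads: a model that predicts "won't convert" for everyone can look accurate while catching no buyers at all.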

The catch: you need roughly 1,000+ historical conversions to train a useful model. Below that threshold, the algorithm doesn't have enough signal to learn from. We've seen teams buy expensive predictive platforms with 200 conversions in their CRM and wonder why the scores feel random. If that's you, don't waste the money yet.

Predictive scoring works best as decision support - prioritization and planning - not as an autonomous decision engine. Evaluate your model against downstream outcomes like sales acceptance rate and pipeline conversion, not just score distribution. Keep human guardrails. Review quarterly.

Hybrid Scoring (Recommended)

Here's the thing: you don't have to pick one method. The best-performing scoring systems we've seen use rule-based scoring for initial qualification - does this lead fit our ICP? - and predictive scoring for prioritization within the qualified pool, deciding which qualified leads reps should call first.
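The split can be sketched in a few lines, assuming each lead already carries a rule-based fit score and a model-predicted conversion probability. The `Lead` class and the 50-point fit gate are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    name: str
    fit_score: int         # rule-based ICP fit, 0-100
    predicted_prob: float  # model output: estimated probability of converting


FIT_GATE = 50  # hypothetical qualification cutoff for the rule-based stage


def call_queue(leads: list[Lead]) -> list[str]:
    """Rules decide who qualifies; the model only decides the order."""
    qualified = [lead for lead in leads if lead.fit_score >= FIT_GATE]
    qualified.sort(key=lambda lead: lead.predicted_prob, reverse=True)
    return [lead.name for lead in qualified]


leads = [
    Lead("Acme", fit_score=70, predicted_prob=0.12),
    Lead("Globex", fit_score=30, predicted_prob=0.90),  # high model score, poor fit
    Lead("Initech", fit_score=55, predicted_prob=0.40),
]
print(call_queue(leads))  # ['Initech', 'Acme']
```

Because the model never overrides the fit gate, reps can always explain why a lead is in the queue; the model only changes who gets called first.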

Hybrid beats either approach alone. Rules give you transparency and control. Prediction gives you pattern recognition at scale. For enterprise deals with buying committees, aggregate individual scores to an account level: a single champion scoring 80 matters less than five stakeholders averaging 50. Start with rules, layer in prediction once you have the data to support it, and you'll follow the same path most successful B2B teams take.
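One simple way to aggregate is to sum the scores of an account's most engaged contacts, so breadth across the buying committee counts. This is an assumption, not a standard formula; the cap of five contacts is arbitrary and keeps very large accounts from dominating.

```python
def account_score(contact_scores: list[int], top_n: int = 5) -> int:
    """Sum the top_n contact scores for an account (top_n is a tunable assumption)."""
    return sum(sorted(contact_scores, reverse=True)[:top_n])


lone_champion = [80]
committee = [50, 45, 55, 50, 50]
print(account_score(lone_champion))  # 80
print(account_score(committee))      # 250
```

Account totals live on a different scale than the 100-point contact score, so they need their own threshold.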

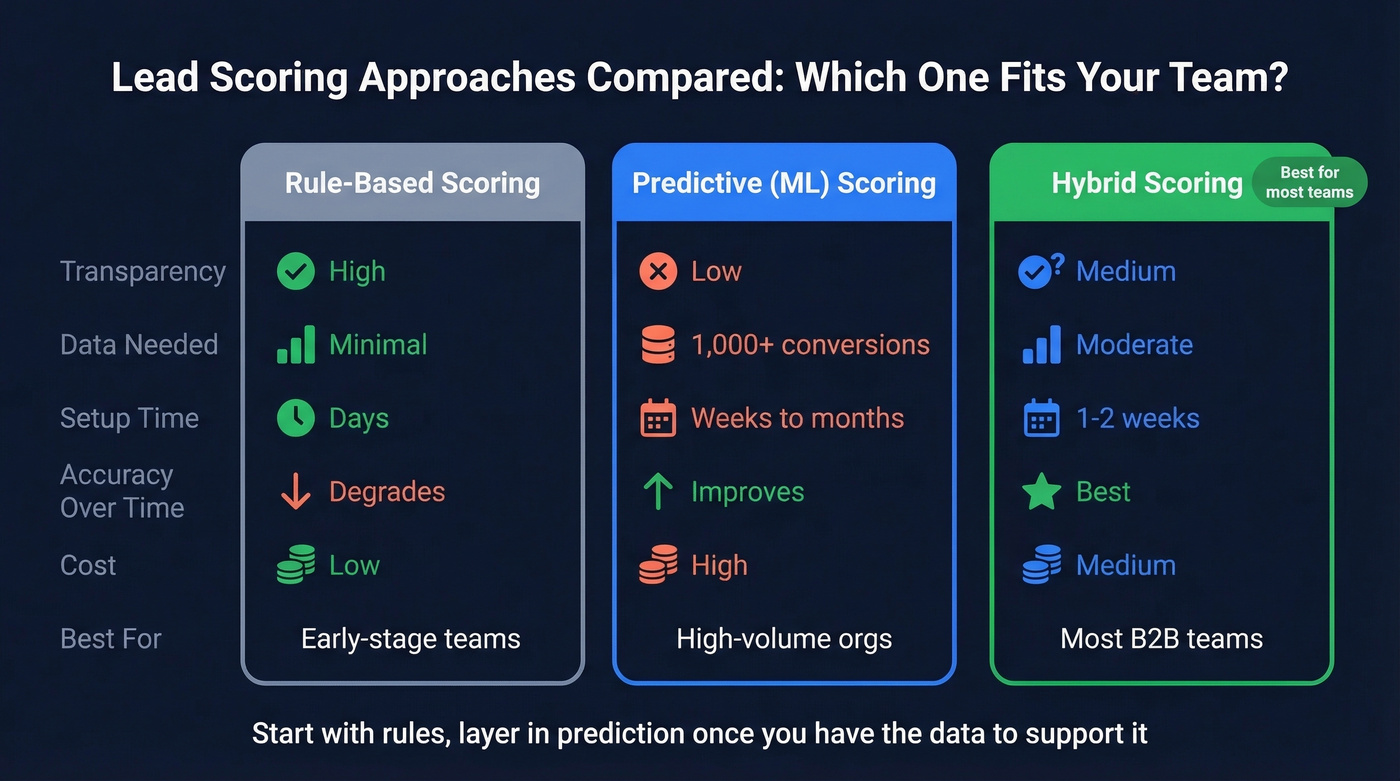

Comparing the Approaches

| Dimension | Rule-Based | Predictive (ML) | Hybrid |
|---|---|---|---|
| Transparency | High | Low | Medium |
| Data needed | Minimal | 1,000+ conversions | Moderate |
| Setup time | Days | Weeks-months | 1-2 weeks |
| Accuracy over time | Degrades | Improves | Best |
| Cost | Low | High | Medium |
| Best for | Early-stage teams | High-volume orgs | Most B2B teams |

For most teams, hybrid is the answer.

Worked Scoring Examples

If you're getting 500+ leads per month with a small team, even a simple 100-point model helps you find the top 50 worth personal outreach. Here are two copy-paste matrices.

B2B SaaS Example (100-Point Scale)

| Signal | Type | Points |
|---|---|---|
| Director+ title | Fit | +25 |
| 200-1,000 employees | Fit | +15 |
| Target industry | Fit | +10 |
| Pricing page visit | Behavior | +10 |
| Demo booked | Behavior | +20 |
| Case study download | Behavior | +5 |
| 30+ days no engagement | Decay | -10 |
| MQL threshold | | 60 |
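Applied in code, the matrix is just a lookup and a sum. A minimal sketch; the signal keys are hypothetical names for the rows above, and how each signal gets detected (CRM fields, web analytics) is left out.

```python
# Point values from the B2B SaaS matrix; signal keys are hypothetical.
SAAS_MATRIX = {
    "director_plus_title": 25,
    "employees_200_to_1000": 15,
    "target_industry": 10,
    "pricing_page_visit": 10,
    "demo_booked": 20,
    "case_study_download": 5,
    "no_engagement_30_days": -10,
}
MQL_THRESHOLD = 60


def score_lead(signals: set[str]) -> tuple[int, bool]:
    """Return the lead's total and whether it clears the MQL threshold."""
    total = sum(SAAS_MATRIX[signal] for signal in signals)
    return total, total >= MQL_THRESHOLD


engaged_director = {"director_plus_title", "employees_200_to_1000",
                    "pricing_page_visit", "demo_booked"}
fit_but_quiet = {"director_plus_title", "target_industry", "no_engagement_30_days"}
print(score_lead(engaged_director))  # (70, True)
print(score_lead(fit_but_quiet))     # (25, False)
```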

Professional Services Example

| Signal | Type | Points |
|---|---|---|
| Relevant decision-maker role | Fit | +20 |
| Proposal/SOW downloaded | Behavior | +15 |
| Repeat site visits (3+) | Behavior | +10 |
| Referral source | Behavior | +15 |
| Email bounced | Negative | -10 |
| MQL threshold | | 70 |
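Before going live, it's worth confirming a matrix can reach its own threshold at all. A minimal sketch that sums each matrix's positive point values (the best case: every positive signal fires, no deductions apply) and compares the result with the threshold; signal keys are hypothetical.

```python
def max_reachable(matrix: dict[str, int]) -> int:
    """Best-case score: every positive signal fires and no deduction applies."""
    return sum(points for points in matrix.values() if points > 0)


# Point values from the two example matrices; signal keys are hypothetical.
saas = {"director_plus": 25, "employees_200_to_1000": 15, "target_industry": 10,
        "pricing_visit": 10, "demo_booked": 20, "case_study": 5,
        "no_engagement_30_days": -10}
services = {"decision_maker": 20, "sow_download": 15, "repeat_visits": 10,
            "referral": 15, "email_bounced": -10}

for name, matrix, threshold in [("saas", saas, 60), ("services", services, 70)]:
    best = max_reachable(matrix)
    print(name, best, "reachable" if best >= threshold else "unreachable")
# saas 85 reachable
# services 60 unreachable
```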

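MQL thresholds typically fall between 60 and 80 points. Start at 60, then adjust based on sales feedback. If reps say the leads are too raw, raise the threshold. If pipeline is thin, lower it. The threshold isn't sacred - it's a dial you tune quarterly. Just make sure each matrix can actually reach its threshold: the professional services signals above add up to only 60 points, so extend that matrix with fit signals such as company size or industry before applying a 70-point cutoff.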
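Tuning the dial is easier with numbers in front of you. A minimal sketch, assuming you can export past leads as (score, converted) pairs; the history below is made up for illustration.

```python
def threshold_report(history, thresholds=(60, 70, 80)):
    """For each candidate threshold, count MQLs and their conversion rate."""
    report = []
    for t in thresholds:
        outcomes = [converted for score, converted in history if score >= t]
        rate = sum(outcomes) / len(outcomes) if outcomes else 0.0
        report.append((t, len(outcomes), rate))
    return report


# Made-up history: (lead score, became an opportunity?)
history = [(85, True), (78, True), (72, False), (66, True), (64, False),
           (61, False), (58, False), (55, True), (40, False), (35, False)]
for t, volume, rate in threshold_report(history):
    print(f"threshold {t}: {volume} MQLs, {rate:.0%} converted")
# threshold 60: 6 MQLs, 50% converted
# threshold 70: 3 MQLs, 67% converted
# threshold 80: 1 MQLs, 100% converted
```

Raising the threshold trades volume for conversion rate; the right setting depends on how many leads your reps can actually work.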

Every scoring model in this article depends on one thing: accurate data. Bad emails, stale job titles, and outdated company info corrupt your scores from the inside out. Prospeo's 5-step verification and 7-day data refresh cycle keep your scoring inputs clean - 98% email accuracy, 50+ enrichment data points per contact, and 92% match rates on CRM enrichment.


How Score Decay Works

Your SDR just spent 45 minutes researching a lead that scored 85 - only to discover the person left that company six months ago. That's not a scoring failure. It's a decay failure.

Data doesn't age gracefully:

| Data Type | Stale After |
|---|---|
| Job title | 3-6 months |
| Content interaction | 30 days |
| Event attendance | 60 days |
| Company info | 6-12 months |
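A minimal sketch of a staleness check, assuming you store the date each field was last verified. The windows use the lower bound of each range in the table (roughly 90 days for 3 months, 180 for 6), which is a conservative assumption; field names are hypothetical.

```python
from datetime import date

# Lower bound of each "stale after" range above, in days (assumption).
STALE_AFTER_DAYS = {
    "job_title": 90,
    "content_interaction": 30,
    "event_attendance": 60,
    "company_info": 180,
}


def stale_fields(last_verified: dict[str, date], today: date) -> list[str]:
    """Return the fields whose last verification is older than their window."""
    return [
        field for field, seen in last_verified.items()
        if (today - seen).days > STALE_AFTER_DAYS[field]
    ]


record = {
    "job_title": date(2024, 1, 10),
    "content_interaction": date(2024, 5, 20),
    "company_info": date(2024, 2, 1),
}
print(stale_fields(record, today=date(2024, 6, 1)))  # ['job_title']
```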

Build decay into your model from day one. A simple rule: subtract 10 points if a lead has zero interactions within 30 days, another 10 at 60 days. By 90 days of silence, that lead should be back in nurture - not sitting in your MQL queue pretending to be hot.
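A minimal sketch of that rule, assuming you keep each lead's undecayed score and a count of days since its last interaction. Recomputing decay from the silence window, rather than subtracting points on a schedule, means running the job twice never double-penalizes a lead.

```python
def apply_decay(base_score: int, days_silent: int) -> tuple[int, str]:
    """-10 after 30 silent days, another -10 after 60; route to nurture at 90."""
    penalty = 0
    if days_silent >= 30:
        penalty += 10
    if days_silent >= 60:
        penalty += 10
    status = "nurture" if days_silent >= 90 else "scoring"
    return base_score - penalty, status


for days in (10, 45, 75, 95):
    print(days, apply_decay(85, days))
# 10 (85, 'scoring')
# 45 (75, 'scoring')
# 75 (65, 'scoring')
# 95 (65, 'nurture')
```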

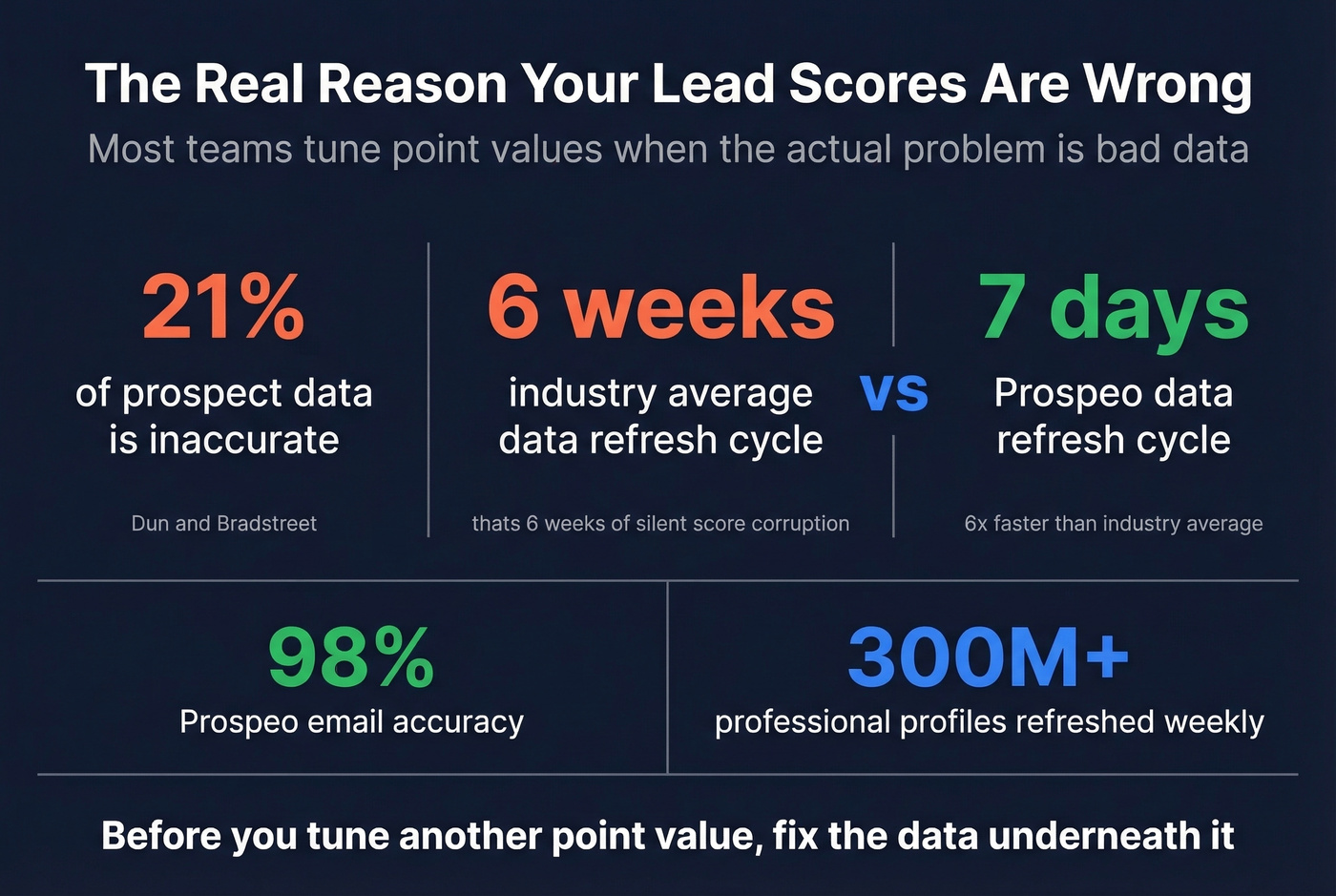

Data Quality: The Variable Nobody Wants to Talk About

Look, most teams spend weeks tuning point values when the actual problem is that as many as one in five data points is wrong: up to 21% of prospect data is inaccurate, according to Dun & Bradstreet. If your scoring model runs on bad data, your scores are fiction - no matter how elegant the math.

In our experience, this is the number one reason scoring projects fail. It's not the model. It's not the threshold. It's that the email bounces, the job title changed two quarters ago, and the company got acquired. Reddit threads on r/sales echo this constantly: teams don't trust their scores because the underlying data is stale, the scoring logic isn't documented, and nobody notices the drift until reps start complaining.

The industry average data refresh cycle is six weeks. That's six weeks of job changes, company moves, and email bounces silently corrupting your scores. Prospeo refreshes 300M+ professional profiles every 7 days at 98% email accuracy - six times faster than that average. Before you spend another sprint tuning point values, fix the data underneath them.

Intent-based scoring only works when you can act on the signal. Prospeo tracks 15,000 intent topics via Bombora and maps them directly to 300M+ verified contacts - so you can score, prioritize, and reach in-market buyers before they ever fill out a form. Layer intent signals onto fit data starting at $0.01 per email.


What These Methods Cost

The tooling ranges from free to six figures:

| Tool | What You Get | Price |
|---|---|---|
| HubSpot Professional | Rule-based scoring | $890/mo (3 seats) |
| HubSpot Enterprise | Predictive scoring | $3,600/mo (10-seat min) |
| Prospeo | Data + enrichment layer | Free tier; ~$0.01/email |
| Salesforce Einstein | ML scoring add-on | ~$215/user/mo total |
| 6sense | Intent + predictive | $60k-$300k/yr |
| MadKudu | Predictive scoring | $20k-$60k/yr |
| Marketo | Rule + behavioral | $1,000-$3,000+/mo |
| Clay | Enrichment + workflows | Free tier; from $185/mo |

If you're paying $3,600/month for HubSpot Enterprise mainly to unlock predictive scoring, you're overpaying. A HubSpot Professional plan with manual scoring plus a solid data enrichment layer will outperform an Enterprise plan running on stale data - while keeping your stack simpler and your costs way down.

The most expensive part of scoring isn't the platform. It's the opportunity cost of scoring on bad data and sending reps after leads that don't exist anymore.

Common Mistakes to Avoid

Overcomplicating the first model. Start with 5-10 attributes, not 50. You can always add complexity later. Teams that launch with 40+ signals spend more time debugging the model than using it.

Never recalibrating. A model built in Q1 is stale by Q3. Compare top-scored leads against actual conversion rates quarterly. If your highest-scored leads aren't converting at 2-3x the rate of average leads, something's broken.
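The quarterly check can be automated. A minimal sketch, assuming "highest-scored" means the top 10% by score (an assumption; pick the cut that matches your MQL volume) and "average" means the overall conversion rate across all scored leads.

```python
def top_decile_lift(leads: list[tuple[int, bool]]) -> float:
    """Conversion rate of the top 10% by score divided by the overall rate."""
    if not leads:
        raise ValueError("need at least one scored lead")
    ranked = sorted(leads, key=lambda lead: lead[0], reverse=True)
    top = ranked[: max(1, len(ranked) // 10)]
    top_rate = sum(converted for _, converted in top) / len(top)
    overall_rate = sum(converted for _, converted in ranked) / len(ranked)
    return top_rate / overall_rate if overall_rate else 0.0


# Made-up quarter: 20 scored leads, 4 of which converted.
quarter = [(95, True), (90, True)] + [(50, i < 2) for i in range(18)]
lift = top_decile_lift(quarter)
print(f"lift {lift:.1f}x:", "healthy" if lift >= 2 else "recalibrate")
```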

Ignoring score decay. Without decay, every lead trends toward MQL given enough time. That's inflation, not qualification.

Building without sales input. If sales didn't help define "qualified," they won't trust the scores. Full stop.

Ignoring data quality. If 21% of your data is wrong, your model makes confident predictions on a broken foundation. This is the mistake that kills more scoring projects than all the others combined.

FAQ

How many attributes should a scoring model have?

Start with 5-10 covering both fit and engagement. Add new signals only when they demonstrably correlate with closed-won conversion.

How often should you recalibrate scores?

Quarterly at minimum. Monthly reviews are better for high-volume teams processing 5,000+ leads.

Can small teams benefit from lead scoring?

Yes - if you're processing 500+ inbound leads per month, even a simple 100-point spreadsheet model helps you isolate the top 50 worth calling. Below that volume, manual triage usually works fine.

What's the difference between traditional and predictive scoring?

Traditional scoring relies on manually assigned point values and static rules - job title, company size, basic engagement thresholds. Predictive scoring uses machine learning to surface patterns rule-based systems miss. Most B2B teams get the best results starting with traditional lead scoring methods and layering in predictive capabilities once they have enough conversion data to train on.