Product Qualified Leads: The Practitioner's Playbook for 2026

Zendesk ran an experiment that should make every marketing team uncomfortable. They took 400 leads scored as "ready for sales" and 400 completely random, non-qualified leads, then tracked both groups for a full quarter. The result? No statistical difference in the ability to connect, re-engage, or close between the two groups. Traditional lead scoring - the kind built on whitepaper downloads and webinar attendance - performed no better than random chance.

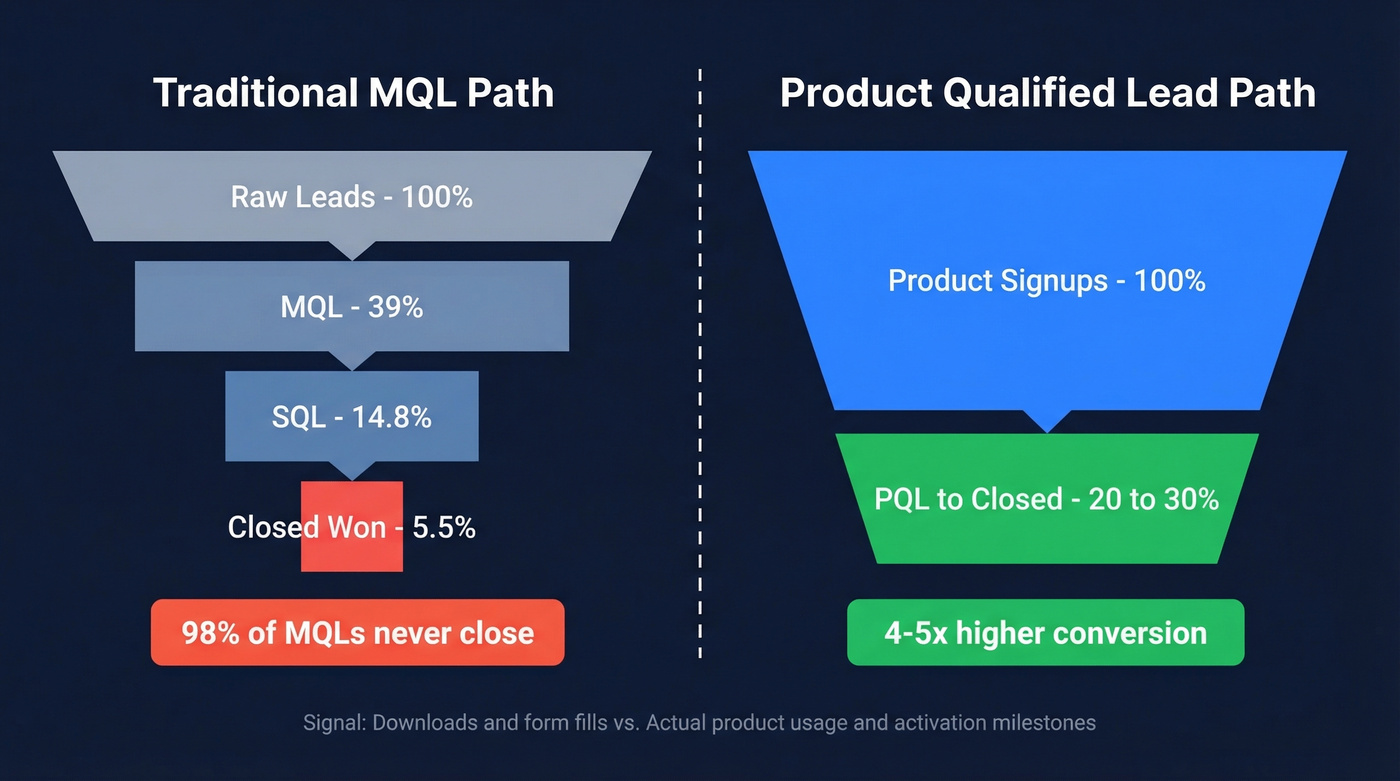

That experiment lines up with a broader finding from SiriusDecisions (now Forrester): [98% of MQLs never result in closed business](https://ortto.com/learn/product-led-approach-building-a-lead-scoring-framework-for-saas-marketing/). The model is broken. The product qualified lead is the fix.

Here's the short version. PQLs are trial or freemium users who've experienced real product value - not just downloaded content. They convert at 20-30% vs. roughly 5.5% when you compound a typical Lead-to-MQL-to-SQL-to-Closed path. And you need three things to operationalize them: product analytics, a scoring model, and verified contact data.

What Is a Product Qualified Lead?

A product qualified lead is a user who matches your ICP and has experienced meaningful value inside your product - through a free trial, freemium plan, or interactive demo. The key distinction from an MQL is simple: interest isn't intent.

A founder downloads your whitepaper? That's an MQL. A founder signs up for your free plan, invites three teammates, and activates your core workflow? That's a PQL. The difference is the gap between consuming content about your product and actually using it.

The canonical example is Slack. They identified that teams hitting 2,000 messages had crossed a value threshold - those teams understood the product, depended on it, and were far more likely to convert to paid. That's the PQL model in its purest form: find the usage milestone that correlates with willingness to pay, then build your sales motion around it.

PQLs don't replace MQLs everywhere. But for any company with a self-serve trial or freemium tier, they're the highest-signal lead type you can generate.

PQL vs. MQL vs. SQL

The traditional funnel produces dismal results. FirstPageSage's benchmark data across B2B SaaS companies tells a sobering story when you compound the conversion rates:

| Metric | MQL Path | PQL Path |
|---|---|---|
| Lead to MQL | 39% | Skipped - product usage does the qualifying |
| MQL to SQL | 38% | Often reduced or skipped entirely |
| SQL to Opportunity | 42% | Often reduced |
| SQL to Closed | 37% | 20-30% convert directly |
| Overall close rate | ~5.5% compounded | 20-30% |
| Signal type | Downloads, form fills | Usage, activation milestones |
| Sales trust level | Low (98% never close) | High (action-backed intent) |

The MQL path compounds down brutally. Even if you hit the benchmark at every stage - 39% lead-to-MQL, 38% MQL-to-SQL, 37% SQL-to-closed - you're still looking at roughly 5.5% of raw leads turning into revenue. Product-qualified users cut through the early qualification stages because the product already did the qualifying.
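To see where the ~5.5% comes from, here's the compounding arithmetic as a quick sketch. The three rates are the ones quoted above; the variable names are ours.

```python
# Stage conversion rates quoted in the table above, as fractions.
lead_to_mql = 0.39
mql_to_sql = 0.38
sql_to_closed = 0.37

# Each stage keeps only a fraction of the previous stage's survivors,
# so the overall rate is the product of the stage rates.
overall = lead_to_mql * mql_to_sql * sql_to_closed

print(f"Compounded MQL-path close rate: {overall:.1%}")
print(f"Closed deals per 1,000 raw leads: {overall * 1000:.0f}")
```

Swap in your own stage rates to get your funnel's compounded number.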

Gartner research shows 83% of the B2B buying journey happens before a prospect talks to sales. Meanwhile, paid acquisition costs are up 4% year-over-year while impressions are down 15%. The economics increasingly favor letting the product do the selling and the qualifying.

The conversation on r/b2bmarketing reflects this shift. Fewer teams are celebrating MQL volume. The discussion has moved to pipeline velocity, SQL conversion, and revenue impact. MQLs remain baked into dashboards, but practitioners know they're a vanity metric in most PLG contexts.

How to Define Your PQL Criteria

Your PQL criteria should map to the specific actions that correlate with conversion in your product. There's no universal threshold, but here are common patterns we've seen across trial SaaS products:

- Log in 5-10 times per week

- Run 3+ core workflows (reports, projects, campaigns - whatever your "aha" action is)

- Invite collaborators or team members

- Click on premium feature education or upgrade prompts

- Visit the pricing page

For interactive demos, the signals shift to completion rate (80-100% watched), sharing behavior with colleagues, engagement with interactive elements, and click-throughs to documentation or signup pages. These are weaker signals than trial usage, but they still beat form fills.

Don't forget negative qualifiers. Competitor domains, student email addresses (.edu), and known tire-kicker patterns should disqualify leads regardless of usage. A competitor's product manager exploring your free tier isn't a sales opportunity - they're doing competitive research.
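A negative-qualifier check can be as simple as a domain filter that runs before any scoring. The sketch below is a minimal version under our own assumptions: the competitor domains are placeholders, and "tire-kicker patterns" are left out because they depend entirely on your product.

```python
# Hypothetical disqualifier lists; replace with your own.
COMPETITOR_DOMAINS = {"rival.example", "othervendor.example"}
STUDENT_SUFFIXES = (".edu",)


def is_disqualified(email: str) -> bool:
    """Return True if this signup should never be routed to sales."""
    domain = email.rsplit("@", 1)[-1].strip().lower()
    if domain in COMPETITOR_DOMAINS:
        return True
    # str.endswith accepts a tuple, so more suffixes can be added later.
    return domain.endswith(STUDENT_SUFFIXES)


for address in ["pm@rival.example", "a@cs.university.edu", "founder@startup.example"]:
    print(address, is_disqualified(address))
```

Running the filter first means a disqualified user never reaches the threshold, no matter how heavily they use the product.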

The best approach: pull a cohort of users who converted to paid in the last 6 months and look for the common behavioral thread. That's your PQL signal.
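One way to find that thread is to compare how often users who took an action converted against users who didn't. Here's a rough sketch; the `User` shape, the action names, and the six sample users are all made up for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class User:
    actions: set[str]   # hypothetical event names seen during the trial
    converted: bool     # True if the user became a paying customer


def conversion_lift(users: list[User], action: str) -> float | None:
    """Conversion rate of users who did `action` divided by the rate of those who didn't."""
    did = [u for u in users if action in u.actions]
    did_not = [u for u in users if action not in u.actions]
    if not did or not did_not:
        return None  # cannot compare without both groups
    rate_did = sum(u.converted for u in did) / len(did)
    rate_not = sum(u.converted for u in did_not) / len(did_not)
    if rate_not == 0:
        return float("inf") if rate_did > 0 else None
    return rate_did / rate_not


# Illustrative cohort only; use your real last-6-months signups.
users = [
    User({"invited_teammate", "created_project"}, True),
    User({"invited_teammate"}, True),
    User({"created_project"}, False),
    User({"created_project"}, True),
    User(set(), False),
    User(set(), False),
]

for action in ["invited_teammate", "created_project"]:
    print(action, conversion_lift(users, action))
```

The action with the highest lift is a candidate PQL signal, not a proven one. With a real cohort you'd also want enough users in each group for the comparison to mean something.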

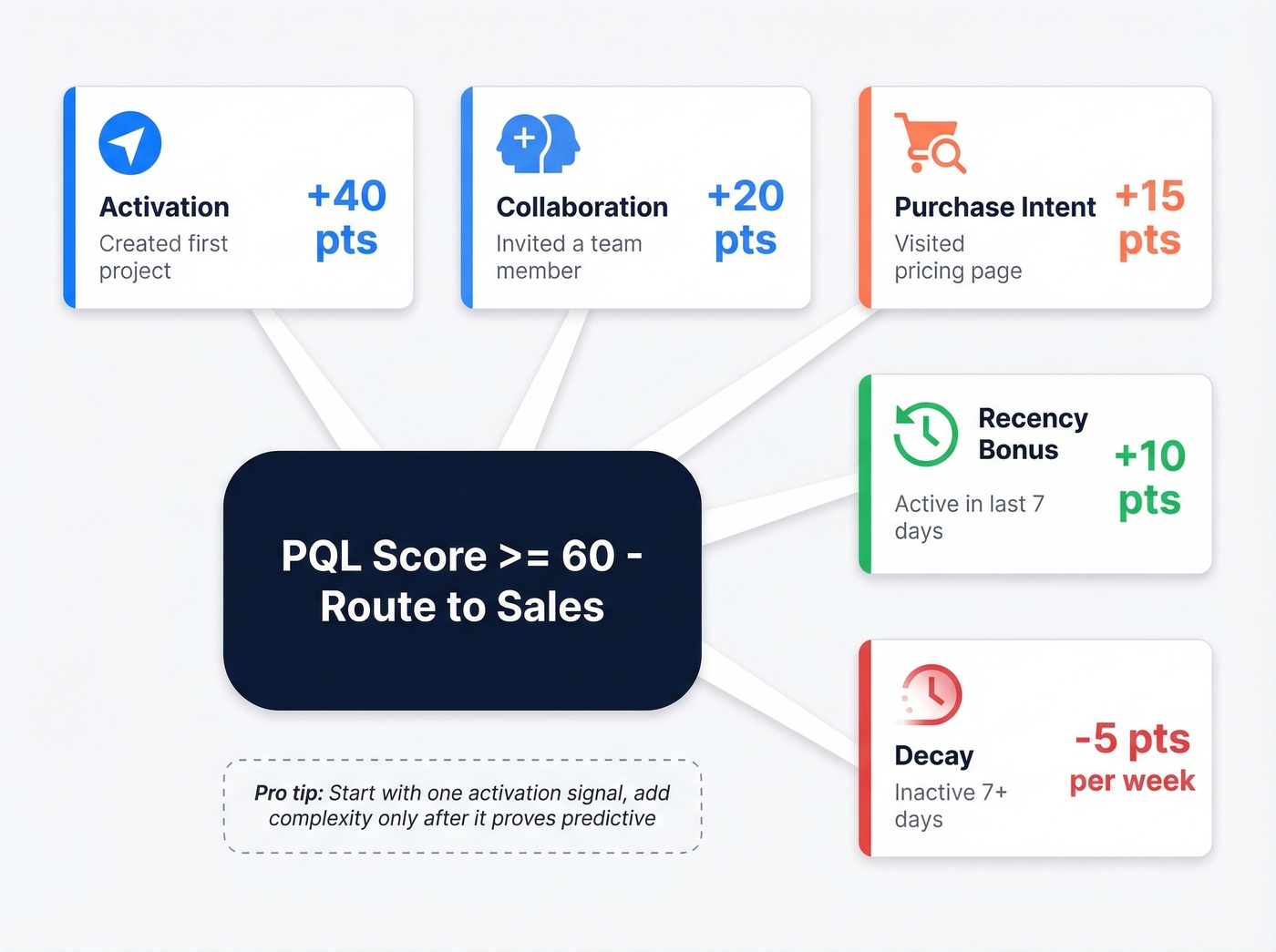

Build Your PQL Scoring Model

This is where most teams overcomplicate things. Let's break it down. The HelloGrowthCRM scoring template splits PQL scoring into five components: firmographic signals, behavioral signals, recency signals, threshold routing, and score decay. You can set this up in 1-2 hours.

Here's a concrete scoring model you can adapt:

| Signal Category | Example Event | Points | Notes |
|---|---|---|---|
| Activation | Created first project | +40 | Core "aha moment" |
| Collaboration | Invited team member | +20 | Multi-user = stickier |
| Purchase intent | Visited pricing page | +15 | High-intent signal |
| Recency | Active in last 7 days | +10 | Bonus for freshness |
| Decay | Inactive 7+ days | -5/week | Prevents stale leads |
| Threshold | Total score | >=60 | Route to sales |

The activation event carries the most weight because it's the strongest signal that a user has experienced value. A team invite at +20 points matters because multi-user adoption dramatically increases stickiness and deal size. The pricing page visit is a classic intent signal - someone checking what it costs is thinking about buying.

Recency and decay are what most scoring models miss. Without them, you end up with a backlog of "qualified" leads who were active three months ago and have long since moved on. The -5 points per inactive week ensures stale leads drop below threshold automatically.
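Here's how the table might translate into code. Treat it as a sketch: the point values come from the table, but the event names, counting each event once, applying decay per full inactive week, and flooring the score at zero are all our assumptions.

```python
from __future__ import annotations

from datetime import date

# Point values copied from the table above; event names are hypothetical.
EVENT_POINTS = {
    "created_first_project": 40,
    "invited_team_member": 20,
    "visited_pricing_page": 15,
}
RECENCY_BONUS = 10     # active in the last 7 days
DECAY_PER_WEEK = 5     # subtracted per full inactive week
SALES_THRESHOLD = 60


def pql_score(events: set[str], last_active: date, today: date) -> int:
    """Score a user once per distinct event, then apply recency or decay."""
    score = sum(EVENT_POINTS.get(event, 0) for event in events)
    days_inactive = (today - last_active).days
    if days_inactive < 7:
        score += RECENCY_BONUS
    else:
        score -= DECAY_PER_WEEK * (days_inactive // 7)
    return max(score, 0)  # assumption: scores never go negative


def route_to_sales(score: int) -> bool:
    return score >= SALES_THRESHOLD


today = date(2026, 1, 31)
events = {"created_first_project", "invited_team_member"}
fresh = pql_score(events, last_active=date(2026, 1, 30), today=today)
stale = pql_score(events, last_active=date(2026, 1, 1), today=today)
print(fresh, route_to_sales(fresh))
print(stale, route_to_sales(stale))
```

The same two events land on opposite sides of the threshold depending on when the user was last active, which is the whole point of recency and decay.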

Start with a minimum viable PQL. Before building a complex model, validate one signal. Find the single activation event most correlated with conversion - like Slack did with message count - and route those leads to sales. Add the other components only after that base signal proves predictive. We've seen teams spend weeks building elaborate scoring systems that perform no better than a single well-chosen trigger. Your minimum viable PQL is that one trigger, and it's enough to start generating revenue from product usage data today.

Metrics That Matter

Once your model is live, track three numbers: raw PQL count (how many users cross your threshold per week), PQL rate (PQLs divided by total signups), and PQL-to-paid conversion rate.

That last one is the most important - and a high number isn't automatically good news. If your PQL-to-paid rate is above 50%, your threshold is probably too strict: users just below it would likely convert too, so you're leaving money on the table. If it's suspiciously high, say 80%+, your product might be underpriced. An extremely high conversion rate means nearly everyone who uses the product enough is willing to pay, which signals you could charge more.
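The three numbers are simple ratios. A minimal sketch, with made-up weekly counts and a function name of our own:

```python
from __future__ import annotations


def pql_metrics(signups: int, pqls: int, pqls_converted: int) -> dict[str, float]:
    """Return raw PQL count, PQL rate, and PQL-to-paid conversion rate."""
    return {
        "pql_count": float(pqls),
        "pql_rate": pqls / signups if signups else 0.0,
        "pql_to_paid": pqls_converted / pqls if pqls else 0.0,
    }


# Illustrative numbers only.
metrics = pql_metrics(signups=1000, pqls=120, pqls_converted=30)
print(f"PQL rate: {metrics['pql_rate']:.0%}")
print(f"PQL-to-paid: {metrics['pql_to_paid']:.0%}")
```

Compute these over the same time window each week so the trend, not just the level, is visible.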

Different teams should own different slices. Marketing owns PQL rate - are we attracting the right signups? Sales owns PQL-to-paid conversion. Product owns the activation events that feed the model. When everyone watches the same dashboard but owns their piece, the whole system improves faster.

PQLs convert at 20-30% - but only if sales can actually reach them. When a user crosses your scoring threshold, stale or invalid contact data kills momentum. Prospeo's 143M+ verified emails refresh every 7 days, so your reps connect with PQLs while they're still active in your product.

Don't let a 60-point PQL bounce because the email was wrong.

The PQL Maturity Model

Not every company needs the same level of PQL sophistication. Decibel VC's framework breaks this into three tiers:

PQL 101 - Free-to-paid conversion signals. This is where most teams start. You're tracking which free users hit activation milestones and routing them to sales or automated upgrade flows.

PQL 201 - Paid-to-higher-tier signals. Once you've nailed free-to-paid, you start identifying expansion opportunities: users hitting plan limits, requesting features only available on higher tiers, or adding seats beyond their current plan's threshold. Slack does this brilliantly with message history limits and integration caps.

PQL 301 - Cross-sell experimentation and advanced segmentation. You're running multiple scoring models for different product lines, testing monetization triggers, and optimizing by segment.

Skip PQL 201 and 301 until you've proven PQL 101 works. Seriously. We've watched teams jump straight to multi-model segmentation before they even validated a single activation trigger, and the results were predictably messy.

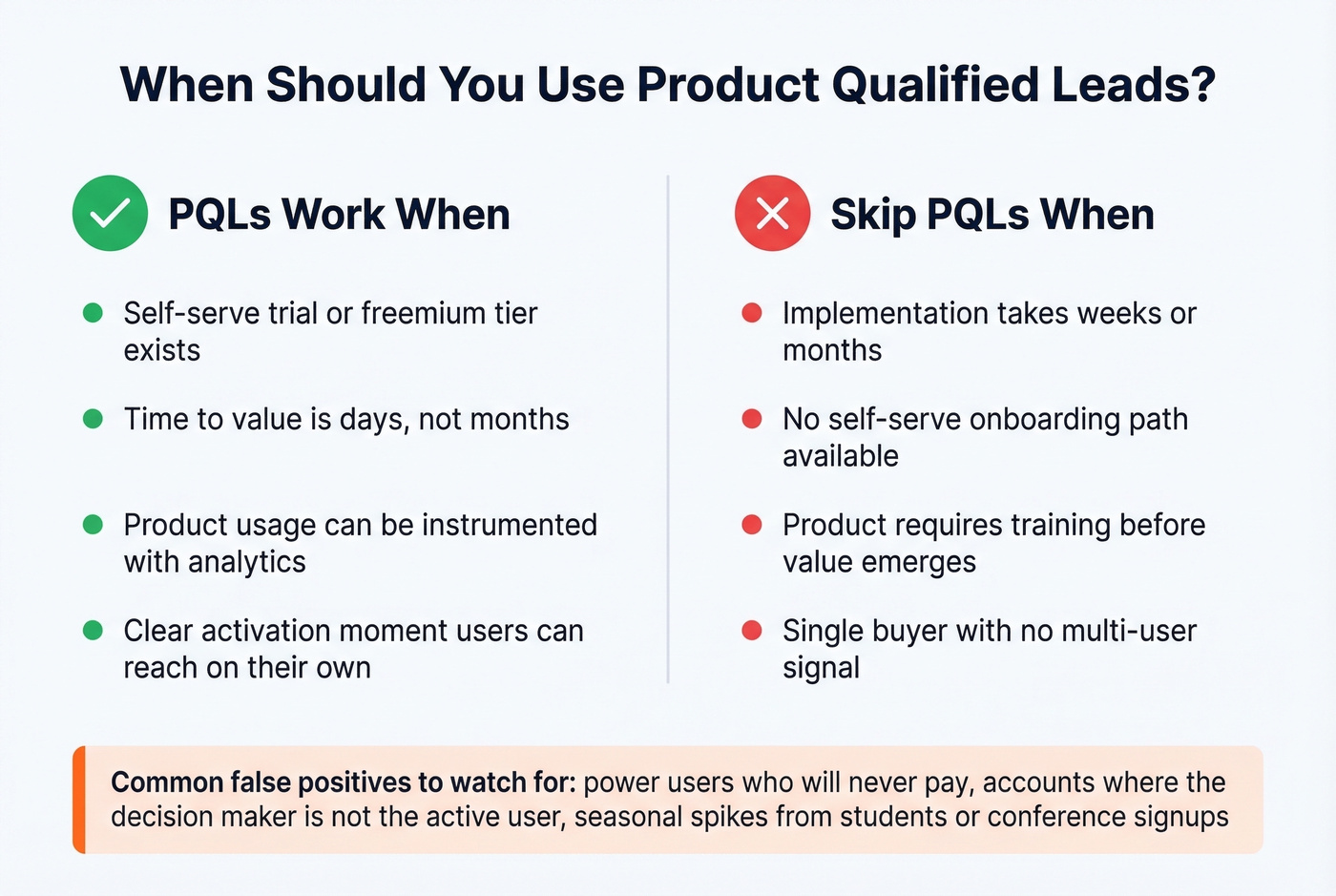

When PQLs Fail

PQLs work when you have a self-serve trial or freemium tier, time-to-value is short (days, not months), you can instrument product usage with analytics, and your product has a clear activation moment.

Skip them when implementation takes weeks, there's no self-serve onboarding path, your product requires training before value emerges, or you're selling to a single buyer with no multi-user signal.

Product-qualified signals also break down when time-to-value is long. If your product requires months of implementation, integrations, and professional services before a user experiences value - think enterprise CRMs or complex data platforms - there's no "aha moment" in a 12-week implementation.

Even in ideal conditions, false positives will erode your sales team's trust. Power users on free plans who'll never pay. Multi-user accounts where the decision-maker isn't the active user. Seasonal distortion from students or conference-driven signups. The useful framing: "interest doesn't equal intent" applies even within product usage. Someone exploring your tool for a blog post isn't the same as someone building a workflow they depend on.

Here's the thing: most teams that fail with PQLs don't have a scoring problem - they have a data quality problem. Your model identifies 47 hot leads this week. Half the emails bounce. The trial expires before a rep makes contact. The scoring model works perfectly. The contact data underneath it doesn't. That gap between a fired PQL alert and a reachable human is where deals go to die.

The PQL Tech Stack

The average GTM organization runs 23 tools in their stack. For PQL operationalization, you need these layers working together:

| Layer | Purpose | Tools |
|---|---|---|
| Data warehouse | System of record | Snowflake, BigQuery, Redshift |
| Product analytics | Track usage signals | Amplitude, Mixpanel, Heap |
| CDP | Unify user data | Segment, RudderStack |
| Reverse ETL | Sync to CRM | Hightouch, Census |
| CRM | Sales workflow | Salesforce, HubSpot |
| Enrichment / Intent | Account intelligence | Clearbit, 6sense |
| Alerting / Orchestration | Route PQLs to reps | Pocus, Correlated |
| Data verification | Ensure contact accuracy | Prospeo |

The data warehouse becomes the source of truth in a PLG stack - not the CRM. Product analytics tools feed usage events into the warehouse, the CDP unifies user identity across touchpoints, and reverse ETL pushes scored leads back into the CRM for sales action.

The layer most teams neglect is verification. Your PQL scoring model is only as good as the contact data it routes to sales. Prospeo handles this with 98% email accuracy and a 7-day data refresh cycle, so when a PQL alert fires, the rep has a verified email and direct dial - not a stale record from six weeks ago. At roughly $0.01 per email, it's the cheapest layer in the stack and arguably the one with the highest ROI.

Account-Level PQLs for Enterprise

Individual-level PQL scoring breaks down in enterprise deals. Buying groups average 5-11 stakeholders across functions - a single user hitting activation milestones doesn't represent the account's buying intent.

The fix is account-level aggregation: roll up product usage across all users at a company, then score the account as a whole. Three people from the same company active in your trial is a stronger signal than one power user, even if the power user's individual score is higher. Before a rep touches an account-level PQL alert, every contact in the buying group should be enriched and verified. Stale data on even one stakeholder can stall the entire deal.
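A rollup like that could look something like the sketch below. Everything here is an assumption for illustration: grouping by email domain, the per-user cap, the breadth bonus, and the list of personal email domains to skip. The cap and bonus exist so that several moderately active users outrank one power user, as described above.

```python
from __future__ import annotations

from collections import defaultdict

USER_CAP = 50          # hypothetical: max points one user can contribute
BREADTH_BONUS = 15     # hypothetical: bonus per additional active user
PERSONAL_DOMAINS = {"gmail.com", "outlook.com", "yahoo.com"}


def account_scores(user_scores: dict[str, int]) -> dict[str, int]:
    """Roll per-user PQL scores up to one score per company email domain."""
    by_domain: dict[str, list[int]] = defaultdict(list)
    for email, score in user_scores.items():
        domain = email.rsplit("@", 1)[-1].lower()
        if domain not in PERSONAL_DOMAINS:  # cannot tie these to an account
            by_domain[domain].append(score)
    return {
        domain: sum(min(s, USER_CAP) for s in scores)
        + BREADTH_BONUS * (len(scores) - 1)
        for domain, scores in by_domain.items()
    }


# Illustrative per-user scores only.
scores = account_scores({
    "a@team.example": 30,
    "b@team.example": 30,
    "c@team.example": 25,
    "power@solo.example": 85,
    "x@gmail.com": 90,
})
print(scores)
```

In this example the three-person account outscores the single power user even though no individual on it would cross a 60-point threshold alone.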

Your scoring model routes product-qualified leads to sales - but what about the ones who signed up with personal emails? Prospeo's enrichment API returns 50+ data points per contact at a 92% match rate, giving your team verified work emails and direct dials to turn anonymous signups into closeable pipeline.

Turn anonymous free-tier users into fully enriched, sales-ready contacts.

FAQ

What's the difference between a product qualified lead and an MQL?

An MQL engaged with marketing content - downloaded an ebook, attended a webinar, filled out a form. A product qualified lead used your product and hit activation milestones that correlate with conversion. PQLs convert at 20-30% vs. roughly 5.5% for a typical compounded MQL path, which is why PLG companies increasingly treat them as the primary qualified lead type.

How many PQL signals should I track?

Start with one. Find the single activation event most correlated with conversion - Slack started with message count alone. Validate it with a cohort analysis comparing converted vs. churned users. Add complexity like team invites, pricing page visits, and recency decay only after the base signal proves predictive.

What happens when a PQL alert fires but the contact data is wrong?

The lead goes cold. Trial users have a narrow engagement window - if a rep can't reach them within days, conversion drops sharply. Bad data turns a great PQL model into an expensive notification system. This is why the verification layer matters more than most teams realize.

Can PQLs work for enterprise sales cycles?

Yes, but you need account-level aggregation instead of individual scoring. Roll up usage across all users at a company and score the account as a whole. Three active users from one company outweigh a single power user. Enterprise PQLs also require enriching the full buying group - 5-11 stakeholders on average - not just the trial user.