How to Build a Sales Call Scorecard That Actually Predicts Revenue

Three managers score the same discovery call. One gives it a 4 out of 5. Another gives it a 2. The third skips the scorecard entirely and writes "good energy" in the notes.

This is how most sales orgs run call quality - on vibes, not data. 83% of businesses use sales scorecards, and 96% of those say they're effective. The gap isn't adoption. It's rigor. A scoring rubric that moves revenue needs 5-7 weighted criteria tied to deal outcomes, behavioral anchors for each score level, stage-specific benchmarks, and a monthly calibration process. Below you'll find a copy-paste rubric, talk/listen benchmarks from 350+ analyzed calls, and a tools table with real pricing.

What a Call Scorecard Actually Is

A sales call scorecard is a structured rubric that evaluates rep performance on specific, observable behaviors during a call. It's not a call-center QA form - those focus on compliance and script adherence - and it's not a sales dashboard, which tracks outcomes rather than inputs.

| | Scorecard | Dashboard |
|---|---|---|
| Focus | Rep-level inputs & behaviors | Org-level outcomes & KPIs |
| Purpose | Coaching tool | Operational reporting |

Companies with a defined sales process are 33% more likely to be high performers, and those with a formal process see 18% more revenue growth than those without one. The scorecard is how you enforce that process at the conversation level.

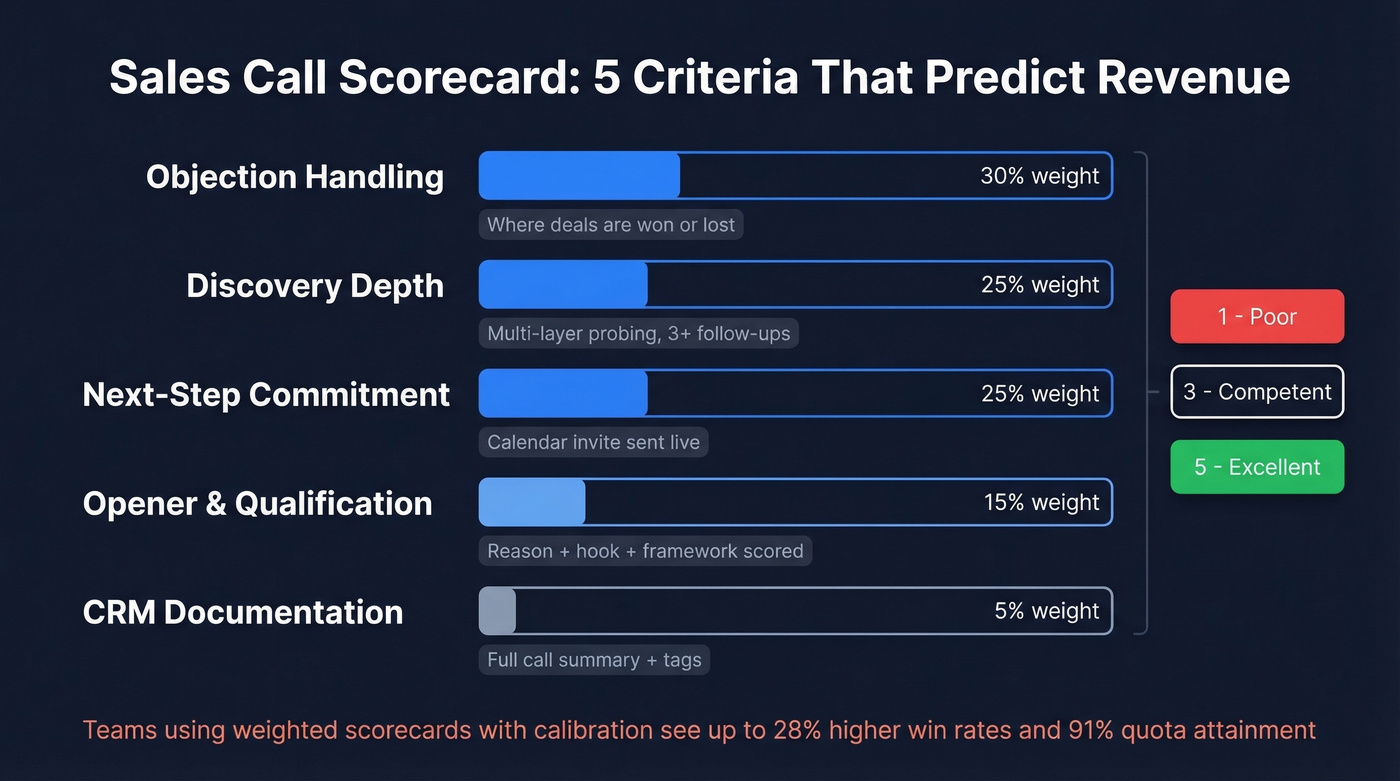

5 Criteria That Predict Revenue

Most scorecards fail because they measure the wrong things. "Was the rep polite?" doesn't predict pipeline. Here are five criteria that do, with weights aligned to deal impact and behavioral anchors for a 1-5 scale.

| Criterion | Weight | 1 (Poor) | 3 (Competent) | 5 (Excellent) |
|---|---|---|---|---|
| Opener & qualification | 15% | No reason for call; no framework | States reason; partial framework | Reason + hook; full framework scored |
| Discovery depth | 25% | Surface questions only | Uncovers 1-2 pains | Multi-layer probing, 3+ follow-ups |
| Objection handling | 30% | Ignores or deflects | Acknowledges objection | Reframes with proof point |
| Next-step commitment | 25% | Vague follow-up | Tentative next step | Calendar invite sent live |
| CRM documentation | 5% | No notes logged | Basic notes | Full call summary + tags |

Objection handling gets the heaviest weight because it's where deals are won or lost. Openers matter, but they're table stakes - handling resistance and locking down next steps is where revenue lives. Teams that implement weighted conversation scorecards with calibration can see win rates climb up to 28% and quota attainment reach 91%.
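To make the weighting concrete, here is a minimal Python sketch of how a single call's overall score could be computed from the rubric. The weights come from the table above; the criterion keys, the `weighted_score` helper, and the example scores are illustrative assumptions, not part of any particular tool.

```python
# Weights copied from the rubric table above (they sum to 100%).
# The dictionary keys are hypothetical names chosen for this sketch.
WEIGHTS = {
    "opener_qualification": 0.15,
    "discovery_depth": 0.25,
    "objection_handling": 0.30,
    "next_step_commitment": 0.25,
    "crm_documentation": 0.05,
}


def weighted_score(scores: dict) -> float:
    """Return the weighted 1-5 score for one call."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("scores must cover exactly the rubric criteria")
    for name, value in scores.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be scored 1-5, got {value}")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return round(total, 2)


# Hypothetical call: strong discovery, weak objection handling.
example_call = {
    "opener_qualification": 4,
    "discovery_depth": 5,
    "objection_handling": 2,
    "next_step_commitment": 3,
    "crm_documentation": 5,
}

print(weighted_score(example_call))  # 3.45
```

Even with a 5 on discovery, the 2 on objection handling pulls this call down to 3.45 because that criterion carries the heaviest weight.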

Example evaluation questions to score against:

- Discovery depth: "Did the rep ask at least two follow-up questions after the initial pain point surfaced?"

- Objection handling: "When the prospect raised a concern, did the rep acknowledge it before responding - or did they bulldoze past it?"

- Next-step commitment: "Did the call end with a specific date, time, and agenda for the next conversation?"

These questions give managers a concrete checklist when reviewing recorded conversations. Copy the table above into Google Sheets, add your methodology criteria from the framework section below, and you've got a working scorecard in 10 minutes. That's not a shortcut - it's genuinely all you need to start.

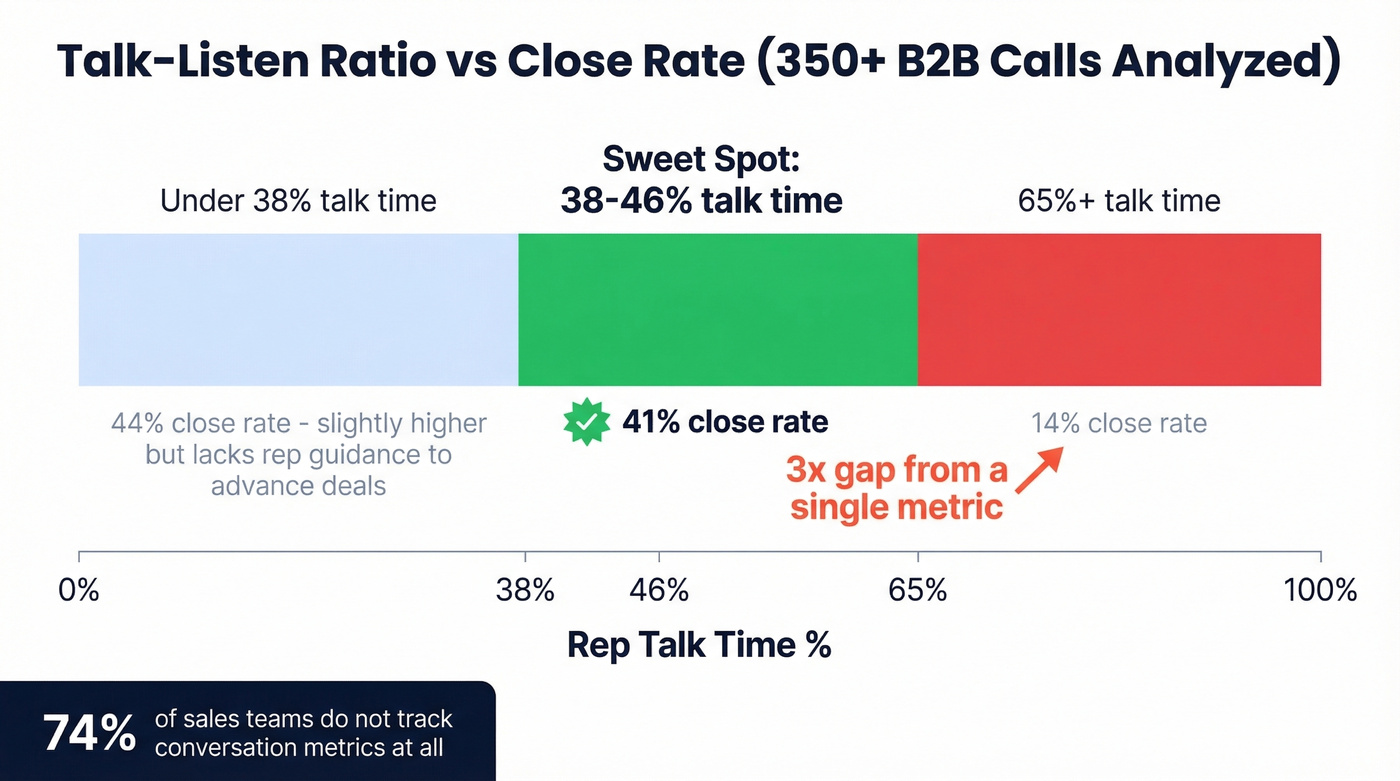

What the Data Shows

A study of 350+ B2B calls across 47 teams found that reps who talked 38-46% of the time closed at a 41% rate. Reps who talked 65% or more? 14%. That's roughly a 3x gap from a single metric. Interestingly, reps who talked less than 38% closed at 44% - slightly higher - but those calls often lacked enough rep guidance to advance the deal. The sweet spot is 38-46%: enough listening to understand, enough talking to lead.
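If your recording tool exports speaker-labeled segments, the talk ratio is simple to compute yourself. The sketch below assumes a hypothetical list of `(speaker, seconds)` pairs and maps the result onto the bands quoted above. The study does not report a close rate for the 46-65% band, so that entry is left empty rather than guessed.

```python
# Close rates as quoted in the study above; None marks a band with no reported figure.
REPORTED_CLOSE_RATE = {
    "under 38%": 0.44,
    "38-46%": 0.41,
    "46-65%": None,
    "65% or more": 0.14,
}


def rep_talk_ratio(segments):
    """segments: list of (speaker, seconds) pairs. Returns the rep's share of talk time."""
    total = sum(seconds for _, seconds in segments)
    if total <= 0:
        raise ValueError("segments contain no talk time")
    rep = sum(seconds for speaker, seconds in segments if speaker == "rep")
    return rep / total


def talk_band(ratio):
    """Map a talk ratio (0-1) to the bands used in the study."""
    if ratio < 0.38:
        return "under 38%"
    if ratio <= 0.46:
        return "38-46%"
    if ratio < 0.65:
        return "46-65%"
    return "65% or more"


# Hypothetical call: the rep speaks for 210 of 500 seconds.
segments = [("rep", 120), ("prospect", 200), ("rep", 90), ("prospect", 90)]
ratio = rep_talk_ratio(segments)
print(f"{ratio:.0%} -> {talk_band(ratio)}")  # 42% -> 38-46%
```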

For openers, Gong's analysis of 90,380 cold calls found that stating the reason for your call increases success rate 2.1x. "Did I catch you at a bad time?" produced a 0.9% success rate - worse than saying nothing at all.

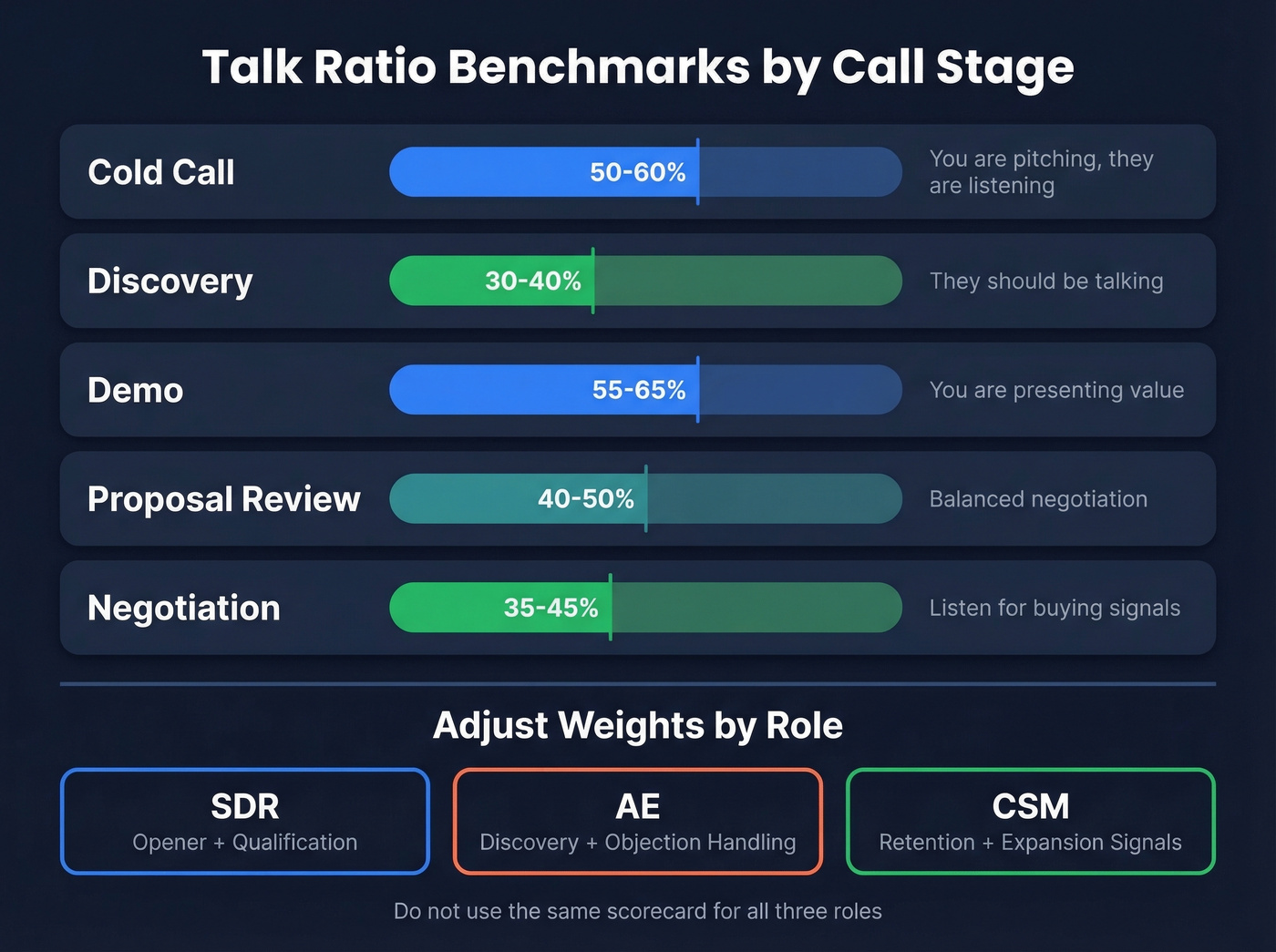

Stage-Specific Benchmarks

One talk-ratio target doesn't fit every call type.

| Call Stage | Rep Talk Target | Why |
|---|---|---|
| Cold call | 50-60% | You're pitching; they're listening |
| Discovery | 30-40% | They should be talking |
| Demo | 55-65% | You're presenting value |
| Proposal review | 40-50% | Balanced negotiation |
| Negotiation | 35-45% | Listen for buying signals |

Only 26% of sales teams track conversation metrics at all. If you're scoring these, you're already ahead of three-quarters of the market.
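The stage targets in the table above can be encoded as a lookup so that each call is judged against the right range. This is a rough sketch: the stage keys and the `check_talk_ratio` helper are hypothetical names, and the ranges are the ones from the table.

```python
# Rep talk-time targets per call stage, taken from the table above.
STAGE_TARGETS = {
    "cold_call": (0.50, 0.60),
    "discovery": (0.30, 0.40),
    "demo": (0.55, 0.65),
    "proposal_review": (0.40, 0.50),
    "negotiation": (0.35, 0.45),
}


def check_talk_ratio(stage, ratio):
    """Compare a rep talk ratio (0-1) with the target range for the stage."""
    low, high = STAGE_TARGETS[stage]
    if ratio < low:
        return "below target"
    if ratio > high:
        return "above target"
    return "on target"


print(check_talk_ratio("discovery", 0.42))  # above target
print(check_talk_ratio("demo", 0.60))       # on target
```

The same 42% talk ratio that looks healthy in aggregate is above target on a discovery call, which is the reason to score by stage.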

How criteria shift by role: SDRs should be scored primarily on opener quality and qualification adherence - their job is to open doors, not close deals. AEs carry the weight on discovery depth and objection handling. CSMs should be evaluated on retention signals: did they surface expansion opportunities, and did they confirm the customer's ongoing success metrics? Don't use the same scorecard for all three roles. Adjust the weights or you'll coach SDRs on skills they don't need yet.
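One way to adjust weights by role without rebuilding the rubric is to scale the base weights and renormalize so they still sum to 100%. The multipliers below are purely illustrative assumptions (the article does not prescribe specific role weights), and CSMs are left out because their retention criteria differ from the base rubric.

```python
# Base weights from the rubric table earlier in the article.
BASE_WEIGHTS = {
    "opener_qualification": 0.15,
    "discovery_depth": 0.25,
    "objection_handling": 0.30,
    "next_step_commitment": 0.25,
    "crm_documentation": 0.05,
}

# Hypothetical emphasis multipliers per role; tune these to your own team.
ROLE_EMPHASIS = {
    "sdr": {"opener_qualification": 3.0},
    "ae": {"discovery_depth": 1.5, "objection_handling": 1.5},
}


def role_weights(role):
    """Scale the base weights by the role's emphasis, then renormalize to sum to 1."""
    emphasis = ROLE_EMPHASIS[role]
    raw = {name: w * emphasis.get(name, 1.0) for name, w in BASE_WEIGHTS.items()}
    total = sum(raw.values())
    return {name: value / total for name, value in raw.items()}


for role in ROLE_EMPHASIS:
    weights = role_weights(role)
    print(role, {name: round(w, 3) for name, w in weights.items()})
```

With these example multipliers, the opener becomes the heaviest criterion for SDRs, while objection handling stays on top for AEs.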

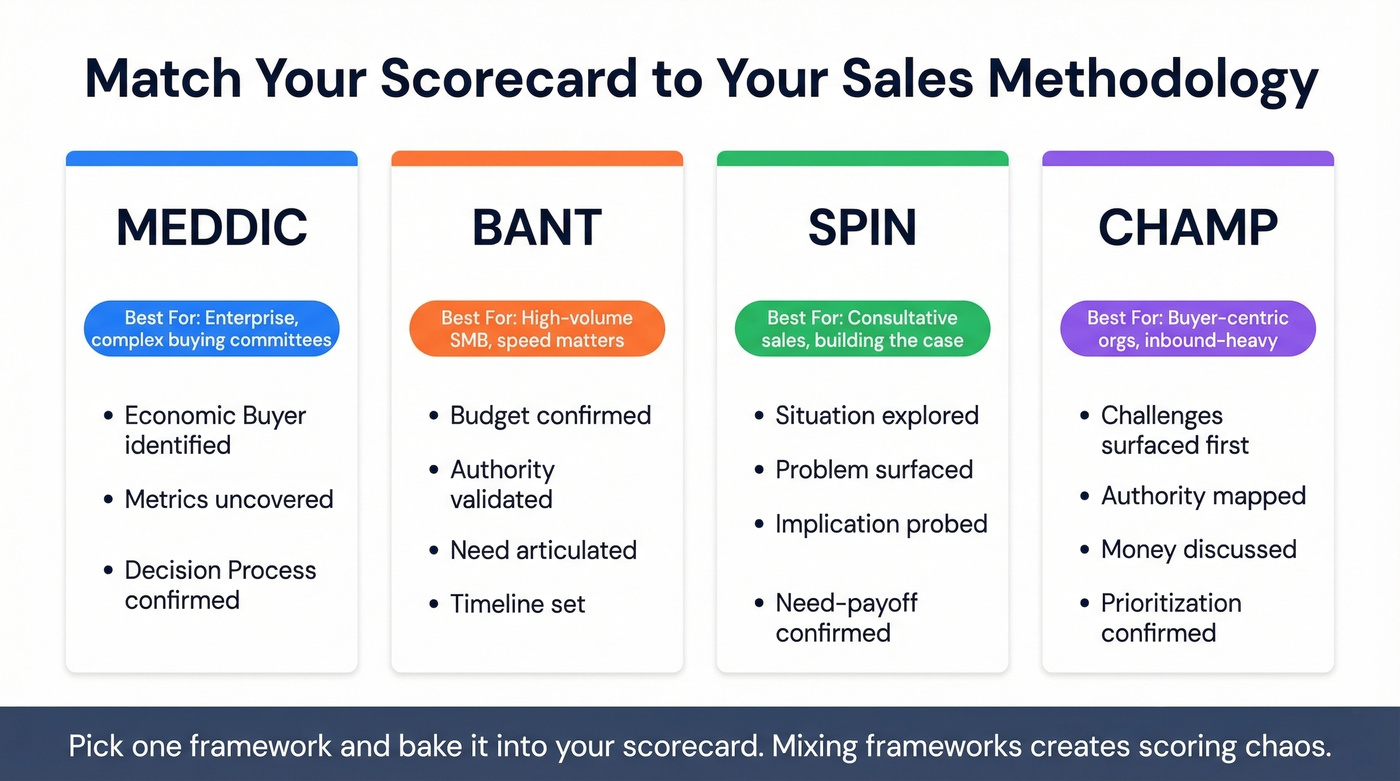

Align Criteria to Your Methodology

Your scorecard should reflect how your team actually sells.

| Framework | Score These Behaviors | Best Fit |
|---|---|---|
| MEDDIC | Economic Buyer ID'd, Metrics uncovered, Decision Process confirmed | Enterprise, complex buying committees |
| BANT | Budget confirmed, Authority validated, Need articulated, Timeline set | High-volume SMB, speed matters |
| SPIN | Situation explored, Problem surfaced, Implication probed, Need-payoff confirmed | Consultative sales, building the case |
| CHAMP | Challenges surfaced first, Authority mapped, Money discussed, Prioritization confirmed | Buyer-centric orgs, inbound-heavy |

CHAMP is a buyer-centric variant of BANT that leads with challenges instead of budget - it works well for teams where the prospect doesn't know they have a problem yet. Pick one framework and bake it into your scorecard. Mixing frameworks across the same team creates scoring chaos.

A perfect scorecard won't save a call made to the wrong person. Prospeo's 300M+ profiles with 30+ filters - including buyer intent, job changes, and department headcount - ensure your reps are calling verified decision-makers, not gatekeepers. 98% email accuracy. 125M+ verified mobiles with a 30% pickup rate.

Score higher on every call by starting with better prospects.

Calibration, Sampling, and Rollout

Here's the thing - a perfect scorecard template is worthless without calibration.

- Calibration sessions: Have multiple managers score the same recorded call independently, then compare. Target >85% inter-rater reliability (for example, the share of criteria on which managers' scores match) before going live.

- Frequency: Weekly calibration during launch, monthly once scores converge across evaluators.

- Sampling: Score 5-10 calls per rep per month. Manual QA typically covers only 2-5% of calls - that's not enough to spot patterns.

- Pilot first: Run the scorecard with one team for 4-6 weeks before full rollout. Fix the rubric before you scale it.

- Share criteria upfront: Reps should see the scorecard before they're ever scored on it. Transparency kills resistance.
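A calibration session can be checked with a few lines of Python. The sketch below uses simple pairwise percent agreement as a stand-in for inter-rater reliability. That choice is an assumption, since the 85% target above does not name a specific statistic, and the manager names and scores are made up for illustration.

```python
from itertools import combinations


def pairwise_agreement(ratings, tolerance=0):
    """ratings: {manager: {criterion: score}} for the SAME recorded call.

    Returns the share of (manager pair, criterion) comparisons whose scores
    differ by no more than `tolerance` points.
    """
    managers = list(ratings)
    if len(managers) < 2:
        raise ValueError("need at least two managers to compare")
    criteria = list(ratings[managers[0]])
    agree = total = 0
    for a, b in combinations(managers, 2):
        for criterion in criteria:
            total += 1
            if abs(ratings[a][criterion] - ratings[b][criterion]) <= tolerance:
                agree += 1
    return agree / total


# Hypothetical calibration session: three managers score one call.
session = {
    "manager_a": {"opener": 4, "discovery": 3, "objections": 2, "next_step": 3, "crm": 5},
    "manager_b": {"opener": 4, "discovery": 3, "objections": 3, "next_step": 3, "crm": 5},
    "manager_c": {"opener": 4, "discovery": 4, "objections": 2, "next_step": 3, "crm": 5},
}

print(f"exact match: {pairwise_agreement(session):.0%}")                  # exact match: 73%
print(f"within 1 point: {pairwise_agreement(session, tolerance=1):.0%}")  # within 1 point: 100%
```

In this example the managers agree exactly on 11 of 15 comparisons, so the team would keep calibrating weekly. Whether you require exact matches or allow a one-point tolerance is a policy decision worth settling before the pilot.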

Let's be honest: you don't need a $30K platform. A Google Sheet with the right criteria, weighted correctly, and calibrated monthly will outperform an expensive tool nobody uses. We've seen teams spend six figures on conversation intelligence and still have managers scoring vibes because nobody built the rubric. The tool doesn't matter. The rubric does.

Using Your Scorecard for Coaching

The real value of a sales call scorecard isn't the score itself - it's the coaching conversation that follows. Each criterion on your rubric doubles as a coaching template: pull up the recorded call, walk through the specific moments where the rep scored a 2 or 3, and role-play the alternative approach together.

Managers who tie coaching sessions directly to scorecard criteria see faster skill development because feedback is anchored to observable behavior, not abstract advice like "be more consultative." In our experience, the reps who improve fastest aren't the ones getting the highest scores - they're the ones whose managers actually review the tape with them every week.
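To turn a scored call into a coaching agenda, you can flag the criteria that landed at 3 or below and review the heaviest-weighted ones first. This is a sketch under assumptions: the ordering rule and the `coaching_focus` helper are illustrative, not a prescribed method, and the weights are the ones from the rubric earlier in the article.

```python
# Weights from the rubric table; keys are hypothetical names for this sketch.
WEIGHTS = {
    "opener_qualification": 0.15,
    "discovery_depth": 0.25,
    "objection_handling": 0.30,
    "next_step_commitment": 0.25,
    "crm_documentation": 0.05,
}


def coaching_focus(scores, threshold=3):
    """Return criteria scored at or below `threshold`, heaviest weight first."""
    flagged = [name for name, score in scores.items() if score <= threshold]
    return sorted(flagged, key=lambda name: WEIGHTS[name], reverse=True)


# Hypothetical scored call.
call_scores = {
    "opener_qualification": 4,
    "discovery_depth": 5,
    "objection_handling": 2,
    "next_step_commitment": 3,
    "crm_documentation": 5,
}

print(coaching_focus(call_scores))
# ['objection_handling', 'next_step_commitment']
```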

Scoring Tools and Pricing

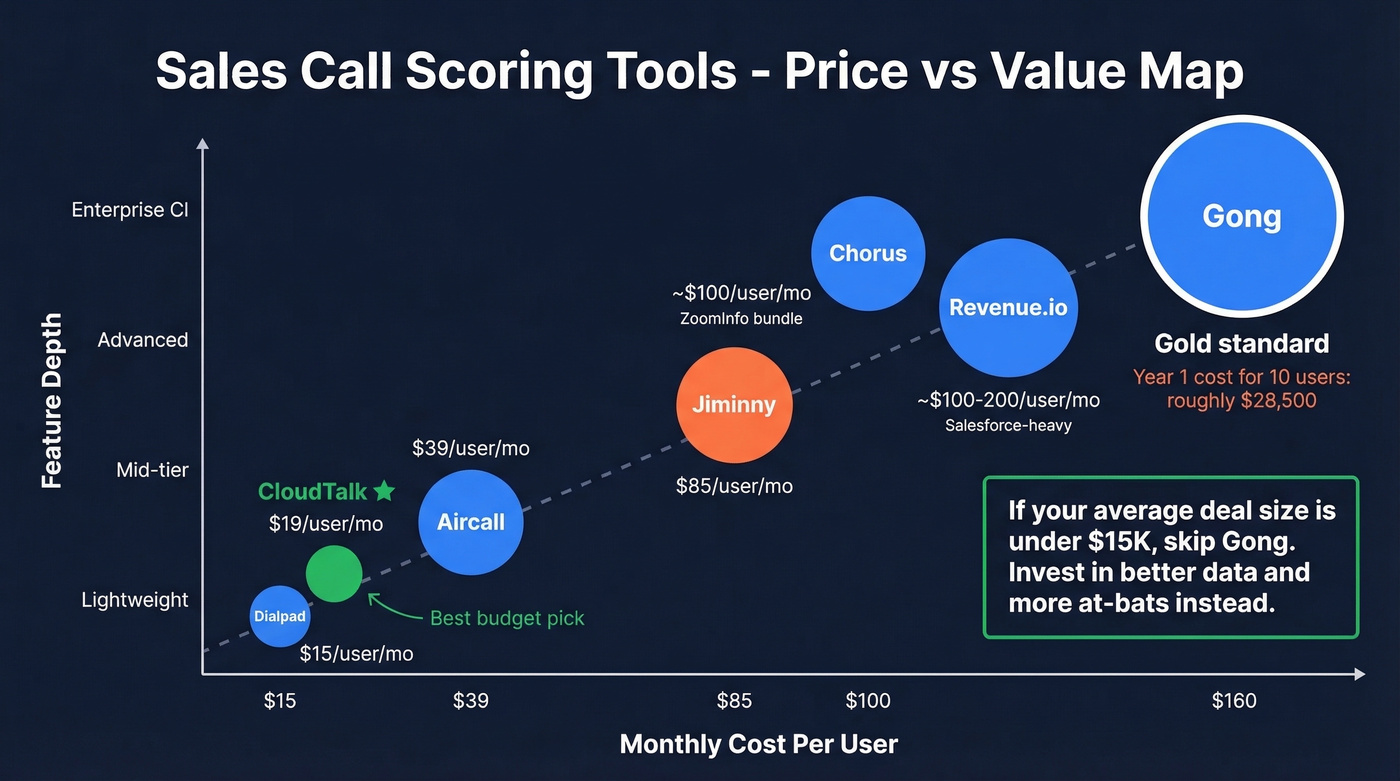

If you do want software, here's what the market looks like:

| Tool | Starting Price | Best For |
|---|---|---|
| Gong | ~$160/user/mo + platform fee | Enterprise CI + coaching |
| Chorus | ~$100/user/mo (ZoomInfo bundle) | ZoomInfo customers |
| Revenue.io | ~$100-200/user/mo | Salesforce-heavy orgs |
| Jiminny | $85/user/mo | Mid-market coaching |
| Aircall | $39/user/mo | SMB call scoring |
| CloudTalk | $19/user/mo | Budget-friendly AI scoring |
| Dialpad | $15/user/mo | Lightweight AI + calls |

Gong is the gold standard, but the math is real. A 10-user deployment runs roughly $28,500 in year one - $16K in licenses, $5K platform fee, and $7.5K for onboarding. Watch for 5-15% auto-renewal uplifts on multi-year contracts.
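The year-one figure is easy to reproduce, and the same arithmetic shows what a renewal uplift would add. The sketch below uses only the numbers quoted above. Treating onboarding as a one-time cost and applying the uplift to licenses plus platform fee are assumptions, so check the actual contract terms.

```python
# Figures quoted above for a 10-user deployment.
USERS = 10
LICENSES = 16_000
PLATFORM_FEE = 5_000
ONBOARDING = 7_500


def year_one_cost():
    """Total first-year spend: licenses + platform fee + onboarding."""
    return LICENSES + PLATFORM_FEE + ONBOARDING


def renewal_range(low=0.05, high=0.15):
    """Estimated renewal-year cost range after a 5-15% uplift.

    Assumes onboarding is one-time and the uplift applies to licenses
    plus platform fee (an assumption, not a quoted contract term).
    """
    recurring = LICENSES + PLATFORM_FEE
    return round(recurring * (1 + low)), round(recurring * (1 + high))


print(year_one_cost())                   # 28500
print(round(LICENSES / USERS / 12, 2))   # 133.33 per user per month in licenses
print(renewal_range())                   # (22050, 24150)
```

Note that $16K across 10 users works out to about $133 per user per month, a little under the ~$160 starting price in the table, so treat both figures as rough estimates rather than a quote.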

CloudTalk or Dialpad get you 80% of the scoring value at 10% of the cost. For most SMB teams, that's the right call. If your average deal size is under $15K, skip Gong - invest that budget in better data and more at-bats instead.

If you're comparing call platforms before you buy, start with Dialpad alternatives and Aircall vs CloudTalk.

The Data Quality Prerequisite

None of this matters if your reps are calling disconnected numbers. Your SDR team makes 200 dials a day, but 30% of the numbers bounce. You're scoring calls that never should have happened - and the noise corrupts every metric on your scorecard.

Before you score a single call, make sure your reps are reaching real people. Tools like Prospeo cover 300M+ professional profiles with 143M+ verified emails at 98% accuracy and 125M+ verified mobile numbers that hit a 30% pickup rate. Data refreshes every 7 days instead of the 6-week industry average. At roughly $0.01 per email with a free tier of 75 emails plus 100 Chrome extension credits per month, there's no reason to let bad data pollute your coaching metrics.

If you want to tighten targeting before your reps ever dial, use an ideal customer profile and layer in data enrichment to keep records current.

Your reps hit 41% close rates when they talk 38-46% of the time - but only if they're talking to the right buyer. Prospeo's intent data tracks 15,000 topics so your team calls prospects who are actively in-market. No more wasted discovery calls on cold leads.

Stop scoring calls that should never have been made.

FAQ

Should I use a 1-5 scale or pass/fail?

Use a 1-5 scale with behavioral anchors - it gives managers coaching specificity that binary scoring can't. Pass/fail works only for compliance items like "Did the rep log the call in CRM?" For skill-based criteria like discovery depth and objection handling, granularity drives better feedback and faster improvement.

How many calls should I score per rep per month?

Score 5-10 calls per rep per month: enough to show patterns without overwhelming managers. That's already 2-5x more than most teams review. AI tools like Gong or CloudTalk can score 100% of calls and flag outliers for human review, stretching your QA budget further.

What if my reps push back on being scored?

Share the scorecard criteria before you score anyone - reps resist surprise evaluations, not transparent coaching frameworks. It also helps to ensure reps are evaluated on real conversations with real prospects, not dead-end dials to disconnected numbers. Clean your contact data first, then score.

How is this different from call-center QA?

Call-center QA focuses on compliance - script adherence, disclaimers read, customer name used. A sales call scorecard focuses on revenue-driving behaviors like discovery depth, objection handling, and next-step commitment. The structure is similar, but the criteria and coaching outcomes are fundamentally different.