AI Lead Scoring: What Works, What Breaks, and What It Costs

A RevOps lead we know used AI lead scoring to cut 1,000+ "active" leads down to 400. They ditched firmographic-only scores and instead weighted repeat pricing page visits, demo watch time, and recent email clicks. Reply rates went from 3% to 8% in six weeks. For context, baseline cold email reply rates sit at 1-5%, so that jump put them well above average.

Every guide on this topic is written by a company selling you a scoring tool. This one isn't.

What You Need First

Before you read another word:

- 12-24 months of clean CRM history with consistent field hygiene

- ~1,000 converted leads (a commonly cited benchmark for Salesforce Einstein)

- Behavioral tracking in place - website visits, email engagement, content downloads

- Verified contact data - stale emails and bad phone numbers corrupt every model downstream

If you don't have those four things, stop here. A spreadsheet with three high-intent signals will outperform any AI-powered scoring tool running on garbage data.

What Is AI Lead Scoring?

AI lead scoring uses machine learning to analyze historical conversion patterns and rank incoming leads by likelihood to buy. Instead of a marketing manager manually assigning 10 points for "VP title" and 5 for "opened an email," the model finds patterns across hundreds of signals - firmographic, behavioral, technographic, intent - and weights them automatically.

Only 44% of organizations use lead scoring at all, and the ones that do tend to outperform: companies using lead scoring report 138% ROI on lead generation versus 78% for those without.

| Dimension | Traditional (Manual) | AI-Powered |
|---|---|---|
| Speed | Hours to set up rules | Hours to days to retrain |
| Data inputs | A handful of fields | Hundreds of signals |
| Scalability | Breaks at volume | Improves at volume |
| Accuracy | Decays without updates | Improves with retraining and new outcomes |
| Cost | Low (built-in CRM) | Free tiers to $300K+/year |

How Does It Actually Work?

The process breaks into four steps: collect signals, analyze patterns, score leads, and optimize over time. The key - and the failure point - is in what signals you collect and how you weight them.

Signals fall into three tiers:

- Low intent: Blog visits, social follows, generic page views - awareness, not buying behavior.

- Medium intent: Email opens, content downloads, webinar attendance - engagement, but not necessarily commercial.

- High intent: Pricing page visits, demo requests, form submissions - these are the money signals.

The practical rule: weight high-intent actions 5x higher than passive behaviors, and don't let passive volume pile up indefinitely. A single pricing page visit says more about buying intent than fifty blog reads.
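Here is a minimal sketch of tier-weighted behavioral scoring in Python. The event names, point values, and the cap on low-intent points are all hypothetical. The only number taken from the rule above is the 5x ratio between high-intent and passive actions. Without a cap, fifty blog reads at 1 point each would outscore one pricing page visit at 5 points, which is exactly what the rule is meant to prevent.

```python
from collections import Counter

# Hypothetical tier assignments; map your own tracked events here.
EVENT_TIER = {
    "blog_visit": "low",
    "social_follow": "low",
    "page_view": "low",
    "email_open": "medium",
    "content_download": "medium",
    "webinar_attended": "medium",
    "pricing_page_visit": "high",
    "demo_request": "high",
    "form_submission": "high",
}

# Assumed points per event: high intent is 5x low intent (the rule of thumb above).
TIER_POINTS = {"low": 1, "medium": 2, "high": 5}

# Assumed cap so passive volume can't outscore a single high-intent action.
LOW_INTENT_CAP = 3


def behavior_score(events: list[str]) -> int:
    """Sum tier-weighted points for a lead's events, capping low-intent points."""
    points = Counter()
    for event in events:
        tier = EVENT_TIER.get(event)
        if tier is not None:  # ignore events that haven't been classified
            points[tier] += TIER_POINTS[tier]
    points["low"] = min(points["low"], LOW_INTENT_CAP)
    return sum(points.values())


print(behavior_score(["blog_visit"] * 50))      # 3
print(behavior_score(["pricing_page_visit"]))  # 5
```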

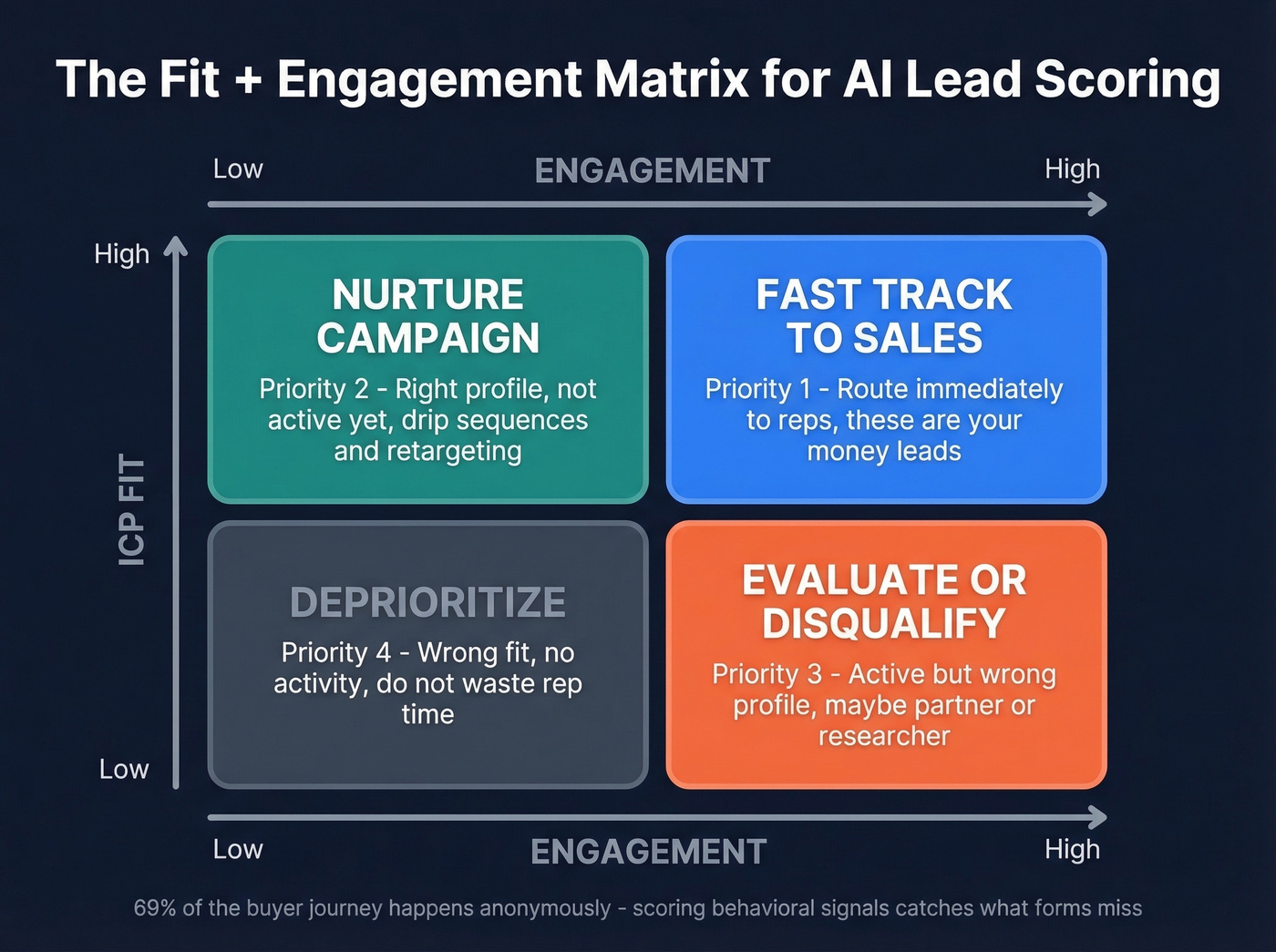

The best models combine fit (whether this person matches your ICP) with engagement (whether they're showing buying behavior). High-fit, high-engagement leads go straight to sales. High-fit, low-engagement leads get nurtured. Low-fit, high-engagement leads get routed differently or disqualified entirely. This fit + engagement matrix, outlined well in ZoomInfo's scoring guide, is the foundation most effective scoring models build on.
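The fit + engagement matrix can be expressed as a small routing function. This sketch assumes both scores are already on a 0-100 scale. The thresholds are hypothetical. The low-fit, low-engagement branch is our own assumption, since the matrix above doesn't assign those leads a route.

```python
from enum import Enum


class Route(Enum):
    SALES = "send to sales"
    NURTURE = "nurture"
    REVIEW = "route differently or disqualify"
    DEPRIORITIZE = "deprioritize"


# Hypothetical cutoffs on 0-100 fit and engagement scores; tune to your own data.
FIT_THRESHOLD = 60
ENGAGEMENT_THRESHOLD = 50


def route_lead(fit: float, engagement: float) -> Route:
    """Place a lead in one quadrant of the fit x engagement matrix."""
    high_fit = fit >= FIT_THRESHOLD
    high_engagement = engagement >= ENGAGEMENT_THRESHOLD
    if high_fit and high_engagement:
        return Route.SALES
    if high_fit:
        return Route.NURTURE
    if high_engagement:
        return Route.REVIEW
    # Assumption: low-fit, low-engagement leads aren't covered by the matrix;
    # deprioritizing them is one reasonable default.
    return Route.DEPRIORITIZE


print(route_lead(fit=80, engagement=70).value)  # send to sales
```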

Here's the thing that makes this urgent: buyers complete up to 69% of their journey anonymously before talking to sales. If you aren't scoring behavioral signals, you're blind to much of the buying process.

A common setup in practice is a 0-100 score with clear qualification bands - "Highly likely," "Likely," and "Unlikely" - so sales and marketing agree on what "qualified" actually means.
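The following sketch maps a 0-100 score into those three bands. The cutoffs of 70 and 40 are hypothetical placeholders. What matters is that the thresholds live in one agreed-upon place that both sales and marketing can read.

```python
def qualification_band(score: int) -> str:
    """Map a 0-100 lead score to a shared qualification band.

    The cutoffs (70 and 40) are hypothetical; agree on your own with sales.
    """
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    if score >= 70:
        return "Highly likely"
    if score >= 40:
        return "Likely"
    return "Unlikely"


print(qualification_band(82))  # Highly likely
```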

Real Benefits With Numbers

Forrester's AI in B2B Sales research is widely cited for outcomes like 38% higher conversion rates from lead to opportunity, 28% shorter sales cycles, 17% higher average deal values, and a 35% reduction in cost per acquisition.

These numbers align with what we've seen in practice. The 3% to 8% reply rate jump from the team above is a real-world example of what happens when you stop chasing every "looks right on paper" lead and start prioritizing actual buying behavior. Beyond conversion, predictive lead scoring also sharpens pipeline forecasting - when your lead quality inputs are reliable, weighted pipeline values and revenue projections get measurably more accurate.

The caveat: these numbers assume clean data, proper implementation, and enough historical volume. Without those, you're just adding complexity.
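Weighted pipeline is the sum of each open deal's value multiplied by its win probability. That is why better lead-quality inputs tighten the forecast. The sketch below assumes your model's output has already been calibrated into a probability between 0 and 1. A raw 0-100 score is not a probability and shouldn't be dropped in directly. The deals and amounts are made up.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    name: str
    amount: float           # expected deal value in your reporting currency
    win_probability: float  # assumed calibrated, between 0.0 and 1.0


def weighted_pipeline(opps: list[Opportunity]) -> float:
    """Sum of amount x win probability across open opportunities."""
    for opp in opps:
        if not 0.0 <= opp.win_probability <= 1.0:
            raise ValueError(f"{opp.name}: win probability must be in [0, 1]")
    return sum(opp.amount * opp.win_probability for opp in opps)


pipeline = [
    Opportunity("Hypothetical deal A", 50_000, 0.5),
    Opportunity("Hypothetical deal B", 20_000, 0.25),
]
print(weighted_pipeline(pipeline))  # 30000.0
```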

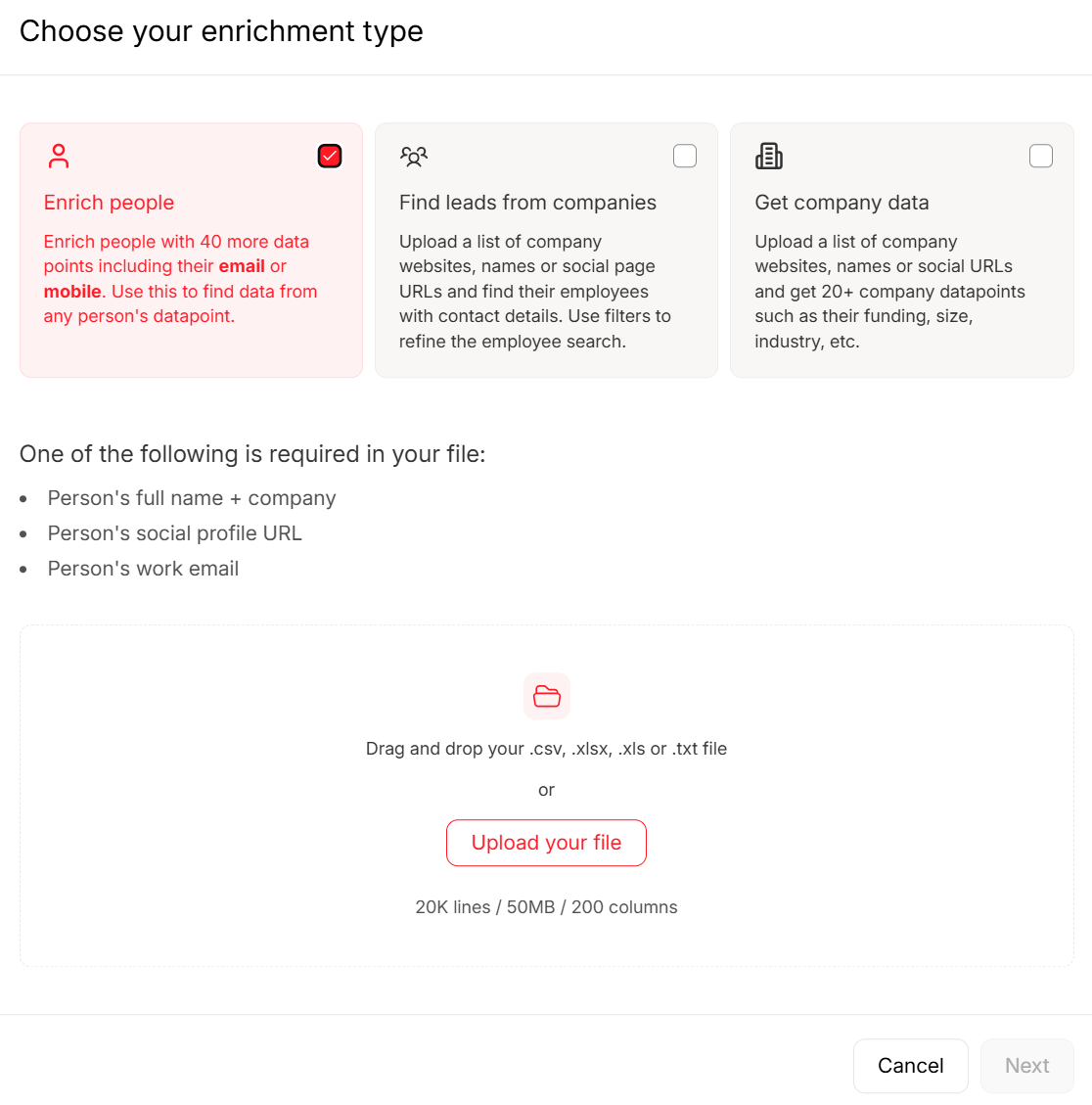

Your scoring model is only as good as the data feeding it. Prospeo refreshes 300M+ profiles every 7 days - not every 6 weeks - so your AI trains on current reality, not ghosts. 98% email accuracy, 5-step verification, spam-trap removal included.


Five Ways Scoring Models Break

This is the section most guides skip. AI-powered scoring fails more often than vendors admit.

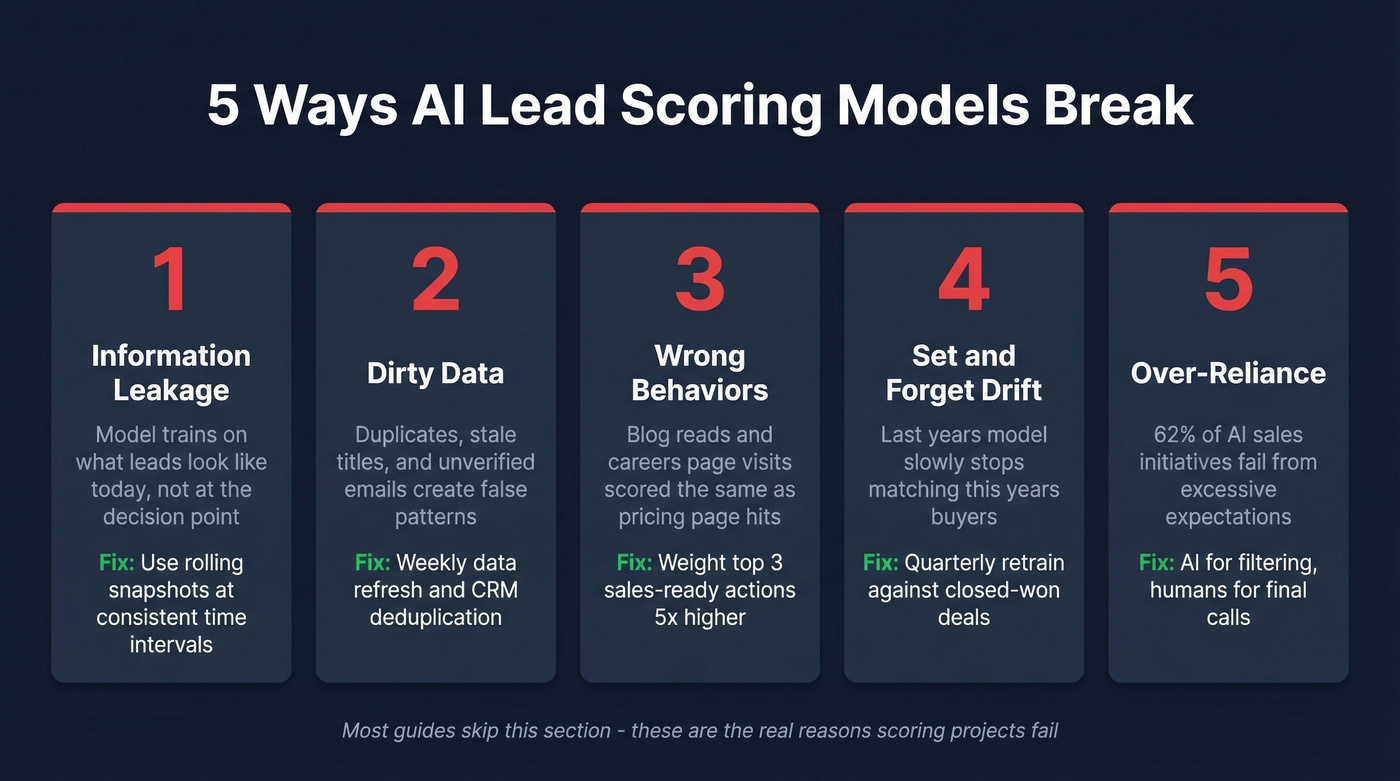

1. Information Leakage

Few vendors talk about this: CRMs store the "latest state" of a lead, not the state at the decision point. Your model trains on what the lead looks like today (after months of nurturing, field updates, and enrichment), not on what it looked like when the buying decision was actually made.

This creates three problems: information leakage from training on "future" signals, feature distribution bias where Day 30 data looks nothing like Day 0, and implicit penalties against new leads that haven't accumulated enough history. The fix is rolling snapshots - capturing lead data at consistent time intervals so your model trains on what leads actually looked like when decisions happened.
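Here is a minimal sketch of rolling snapshots. It assumes you can export timestamped activity events and conversion dates. The `Event` shape, the event names, and the 30/90-day intervals are hypothetical. Each training row counts only events that had happened by its snapshot date. It also records the lead's age, so new leads aren't implicitly penalized. Snapshots stop at the conversion date, or earlier if the outcome window hasn't fully elapsed.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Event:
    lead_id: str
    day: date
    kind: str  # hypothetical names, e.g. "pricing_page_visit", "email_click"


def build_snapshots(
    created: dict[str, date],
    events: list[Event],
    converted_on: dict[str, date],
    today: date,
    every_days: int = 30,
    n_snapshots: int = 3,
    horizon_days: int = 90,
) -> list[dict]:
    """Build point-in-time training rows at fixed intervals after lead creation."""
    rows = []
    for lead_id, start in created.items():
        conv = converted_on.get(lead_id)
        lead_events = [e for e in events if e.lead_id == lead_id]
        for i in range(n_snapshots):
            as_of = start + timedelta(days=i * every_days)
            label_cutoff = as_of + timedelta(days=horizon_days)
            if label_cutoff > today:
                break  # outcome window hasn't finished, so the label isn't known yet
            if conv is not None and conv <= as_of:
                break  # already converted; later snapshots would leak the outcome
            # Only events that had happened by the snapshot date count as features.
            past = [e for e in lead_events if e.day <= as_of]
            rows.append({
                "lead_id": lead_id,
                "as_of": as_of,
                "lead_age_days": (as_of - start).days,
                "pricing_visits": sum(e.kind == "pricing_page_visit" for e in past),
                "email_clicks": sum(e.kind == "email_click" for e in past),
                "converted_within_horizon": conv is not None and conv <= label_cutoff,
            })
    return rows


created = {"L1": date(2024, 1, 1), "L2": date(2024, 1, 1)}
events = [
    Event("L1", date(2024, 1, 20), "pricing_page_visit"),
    Event("L1", date(2024, 2, 10), "pricing_page_visit"),
]
rows = build_snapshots(created, events, {"L1": date(2024, 2, 15)}, today=date(2024, 12, 31))
```

In that example, the Feb 10 pricing visit never appears in a training row for L1, because both usable snapshots (Jan 1 and Jan 31) predate it. A "latest state" export would have shown two visits.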

2. Dirty Data, Garbage Scores

Your model is only as good as the data feeding it. Duplicates, outdated job titles, missing fields, and unverified emails create false patterns. A common example: Gmail addresses getting mistakenly treated as hot leads because a handful of early customers used personal email. The model learns "Gmail = buyer" and starts scoring every freemail address higher.
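One cheap guard against patterns like "Gmail = buyer" is to check how many leads actually sit behind a segment's conversion rate before trusting it. This sketch uses a short, illustrative freemail list and a hypothetical minimum of 30 leads per segment.

```python
# Illustrative, incomplete list of free email providers.
FREEMAIL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


def email_type(email: str) -> str:
    domain = email.rsplit("@", 1)[-1].strip().lower()
    return "freemail" if domain in FREEMAIL_DOMAINS else "corporate"


def conversion_by_segment(leads, min_support=30):
    """leads: iterable of (email, converted) pairs.

    Returns {segment: (lead_count, conversion_rate, enough_data)}, where
    enough_data is False when fewer than min_support leads back the rate.
    """
    counts: dict[str, list[int]] = {}
    for email, converted in leads:
        tally = counts.setdefault(email_type(email), [0, 0])
        tally[0] += 1
        tally[1] += int(converted)
    return {seg: (n, wins / n, n >= min_support) for seg, (n, wins) in counts.items()}


leads = [("a@gmail.com", True)] * 3 + [("x@acme.com", True)] * 20 + [("y@acme.com", False)] * 180
print(conversion_by_segment(leads))
# {'freemail': (3, 1.0, False), 'corporate': (200, 0.1, True)}
```

Here, freemail leads show a 100% conversion rate from just three records. That is the kind of small-sample pattern a model can latch onto and then apply to every freemail address it sees.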

CRM hygiene isn't optional - it's a prerequisite. Machine learning models trained on stale or unverified contact data produce unreliable scores every time. This is where a data layer with weekly refresh cycles matters: if your "current" data is six weeks old, your model is learning from ghosts.

3. Scoring the Wrong Behaviors

Not all engagement is buying intent. A prospect reading your blog about industry trends is showing educational interest. Someone visiting your Careers page is looking for a job. Someone hitting your pricing page three times in a week? That's commercial intent. If your model treats all three equally, it's useless.

Define your top three sales-ready actions and weight them 5x higher than passive behaviors. Use negative scoring for disqualifiers - Careers page visits, competitor research patterns, out-of-area locations.
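The following sketch layers negative scoring on top of positive intent points. The point values, the `ALLOWED_REGIONS` set, and the penalty sizes are hypothetical. Competitor research patterns are left out because detecting them depends on your own tracking data. Flooring at zero is a design choice: it keeps disqualified leads at the bottom of the list instead of producing negative scores.

```python
# Hypothetical point values: three sales-ready actions at 5x a passive action,
# plus a negative value for a Careers page visit.
POINTS = {
    "pricing_page_visit": 5,
    "demo_request": 5,
    "form_submission": 5,
    "blog_visit": 1,
    "careers_page_visit": -10,
}
ALLOWED_REGIONS = {"US", "CA"}  # assumption: the territories you actually serve
OUT_OF_AREA_PENALTY = -20       # assumption: large enough to sink most leads


def score_with_disqualifiers(events: list[str], region: str) -> int:
    """Add intent points, subtract disqualifier penalties, floor at zero."""
    score = sum(POINTS.get(event, 0) for event in events)
    if region not in ALLOWED_REGIONS:
        score += OUT_OF_AREA_PENALTY
    return max(score, 0)


print(score_with_disqualifiers(["pricing_page_visit", "careers_page_visit"], "US"))  # 0
```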

4. Set-and-Forget Drift

Markets shift. Your ICP evolves. A model trained on last year's conversion patterns will slowly drift out of alignment with reality. We've seen teams launch scoring, celebrate the initial lift, and then wonder six months later why lead quality feels worse than before.

Review closed-won deals every quarter. If the deals your model scores highest don't match the deals that actually close, retrain.
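The quarterly review can be reduced to one number: how much more often the top-scored slice of closed deals was won, compared with closed deals overall. This is a sketch. The 20% slice and the 1.5 lift threshold are hypothetical and should be set from your own baseline.

```python
def top_segment_lift(deals: list[tuple[float, bool]], top_fraction: float = 0.2) -> float:
    """deals: (model_score, won) for opportunities closed this quarter.

    Returns the win rate in the top-scored slice divided by the overall win rate.
    A lift near 1.0 means scores no longer separate winners from losers.
    """
    if not deals:
        raise ValueError("no closed deals to evaluate")
    ranked = sorted(deals, key=lambda d: d[0], reverse=True)
    k = max(1, int(len(ranked) * top_fraction))
    overall = sum(won for _, won in ranked) / len(ranked)
    if overall == 0:
        raise ValueError("no wins this quarter; lift is undefined")
    top = sum(won for _, won in ranked[:k]) / k
    return top / overall


def needs_retrain(deals: list[tuple[float, bool]], min_lift: float = 1.5) -> bool:
    """Hypothetical rule: retrain when the top slice barely beats the average."""
    return top_segment_lift(deals) < min_lift
```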

5. Over-Reliance Without Human Review

62% of AI initiatives in sales fail due to excessive expectations and inadequate preparation. The mood on r/sales is blunt - as one B2B marketer put it, the fear is teams using AI "as a crutch" and causing "havoc" in pipeline decisions. That worry is justified. Scoring algorithms are excellent at filtering, surfacing the 20% of leads worth a rep's time. They're terrible at reading context or catching the nuance that makes a "low-score" lead actually worth pursuing.

The rule: AI for filtering, humans for final calls.

Are You Ready?

Before you buy anything, honestly assess where you stand:

- 12-24 months of CRM history with consistent data entry

- ~1,000 converted leads (ideal for Salesforce Einstein)

- Clean, deduplicated contact records with verified emails

- Behavioral tracking active across website, email, and content

- Sales team willing to actually use scores in their workflow

- Budget for at least 6 months of tool + maintenance costs

If you can't check all six, you aren't ready. Here's our hot take: most teams with deal sizes under $10K don't need this at all. You need clean data, three high-intent behavioral signals, and a spreadsheet. Pricing page visits, demo requests, and return visits within 7 days - score those manually and you'll outperform most poorly implemented models. The tool doesn't create the insight; the signal hierarchy does.
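The three-signal approach fits in a spreadsheet, and the same logic fits in a few lines of Python. This sketch counts how many of the three signals a lead shows. Defining a return visit as two distinct visit days at most 7 days apart is our assumption about how to measure that signal.

```python
from datetime import date


def returned_within_7_days(visit_days: list[date]) -> bool:
    """True if any two consecutive distinct visit days are at most 7 days apart."""
    days = sorted(set(visit_days))
    return any((later - earlier).days <= 7 for earlier, later in zip(days, days[1:]))


def manual_priority(pricing_visits: int, demo_requested: bool, visit_days: list[date]) -> int:
    """Count how many of the three high-intent signals a lead shows (0-3)."""
    return (
        int(pricing_visits > 0)
        + int(demo_requested)
        + int(returned_within_7_days(visit_days))
    )


print(manual_priority(2, True, [date(2024, 3, 1), date(2024, 3, 6)]))  # 3
```

Sorting the call list by this count (highest first) is the whole model.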

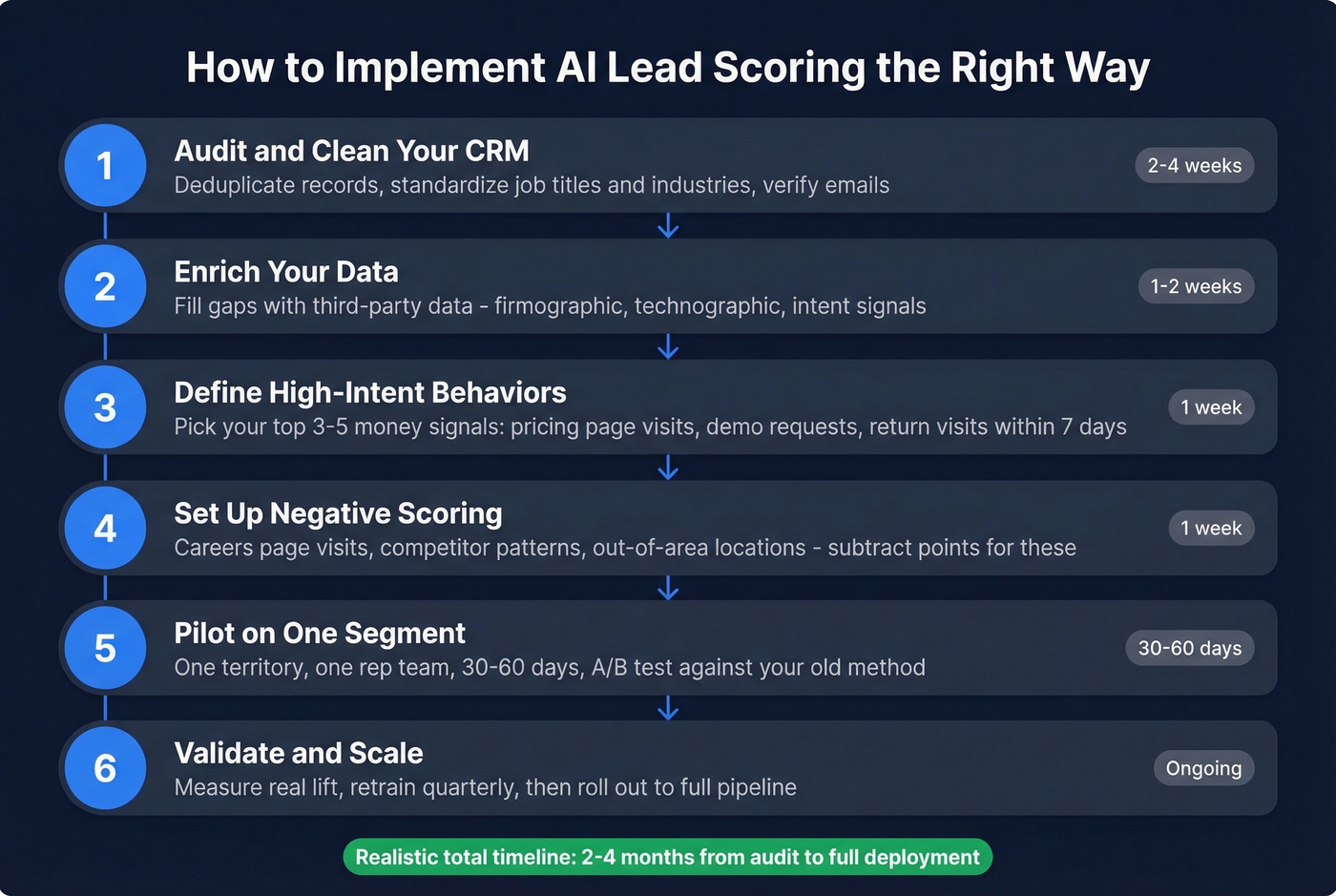

How to Implement It Right

Start with an audit. Deduplicate your CRM, verify email addresses, standardize job titles and industry fields. This alone often takes a few weeks, and it's the step everyone wants to skip. Don't.
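Here is a minimal audit pass. It assumes records are exported as dictionaries with `email` and `title` keys (hypothetical field names). It deduplicates on a normalized email, maps title variants through a hypothetical alias table, and sets aside malformed addresses. The regex is a syntax check only. It does not confirm that a mailbox exists, which still requires a separate verification step.

```python
import re

# Hypothetical alias table; extend it with the title variants in your own CRM.
TITLE_ALIASES = {
    "vp sales": "VP of Sales",
    "vp of sales": "VP of Sales",
    "vice president sales": "VP of Sales",
    "vice president of sales": "VP of Sales",
}
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # syntax check only


def normalize_title(title: str) -> str:
    """Lowercase, strip punctuation, collapse spaces, then look up an alias."""
    key = re.sub(r"[^a-z ]", "", title.lower())
    key = re.sub(r"\s+", " ", key).strip()
    return TITLE_ALIASES.get(key, title.strip())


def audit(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Return (clean, rejected); keeps the first record seen for each email."""
    clean, rejected, seen = [], [], set()
    for rec in records:
        email = rec["email"].strip().lower()
        if not EMAIL_PATTERN.match(email):
            rejected.append(rec)
            continue
        if email in seen:
            continue  # duplicate of a record already kept
        seen.add(email)
        clean.append({**rec, "email": email, "title": normalize_title(rec["title"])})
    return clean, rejected
```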

Next, enrich your records to cover the gaps your CRM can't fill on its own. Layer in intent data so your model has both fit and intent signals from day one, then define your top 3-5 high-intent behaviors, weight them, and set up negative scoring for disqualifiers. Keep it simple - you can add complexity later.

Pilot on a subset before rolling scoring out across your entire pipeline. Pick one segment, one rep team, or one territory. Run it for 30-60 days, then A/B test scored leads against a control group prioritized with your old method. Forbes recommends this hybrid approach - algorithmic scoring plus human validation - to build trust and measure real impact. Once you've validated lift, scale to the full pipeline.

Some teams also explore autoML platforms that automate model selection and hyperparameter tuning, reducing the need for a dedicated data science team. Deployment timelines vary by vendor: ProPair deploys in under 30 days, 6sense takes 1-3 months, and Leadspace typically needs 2+ months.
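For the pilot's A/B comparison, a two-proportion z-test is one standard way to check whether the scored group's conversion rate differs from the control group's by more than chance. This sketch uses only the Python standard library. It assumes independent groups with enough leads for the normal approximation to hold. The example counts are made up.

```python
from math import erfc, sqrt


def compare_conversion(scored_conv: int, scored_n: int, control_conv: int, control_n: int):
    """Two-proportion z-test: scored-lead pilot vs. control group.

    Returns (lift, z, two_sided_p_value).
    """
    p1 = scored_conv / scored_n
    p2 = control_conv / control_n
    pooled = (scored_conv + control_conv) / (scored_n + control_n)
    se = sqrt(pooled * (1 - pooled) * (1 / scored_n + 1 / control_n))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p1 - p2) / se
    p_value = erfc(abs(z) / sqrt(2))  # equals 2 * (1 - Phi(|z|))
    lift = p1 / p2 if p2 > 0 else float("inf")
    return lift, z, p_value


# Hypothetical pilot: 60 of 400 scored leads converted vs. 40 of 400 in control.
lift, z, p = compare_conversion(60, 400, 40, 400)
```

With these made-up counts the lift is 1.5x and p comes out a little above 0.03. A result like that is encouraging, but it's thin enough that it's worth running the pilot to its full 30-60 days before scaling.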

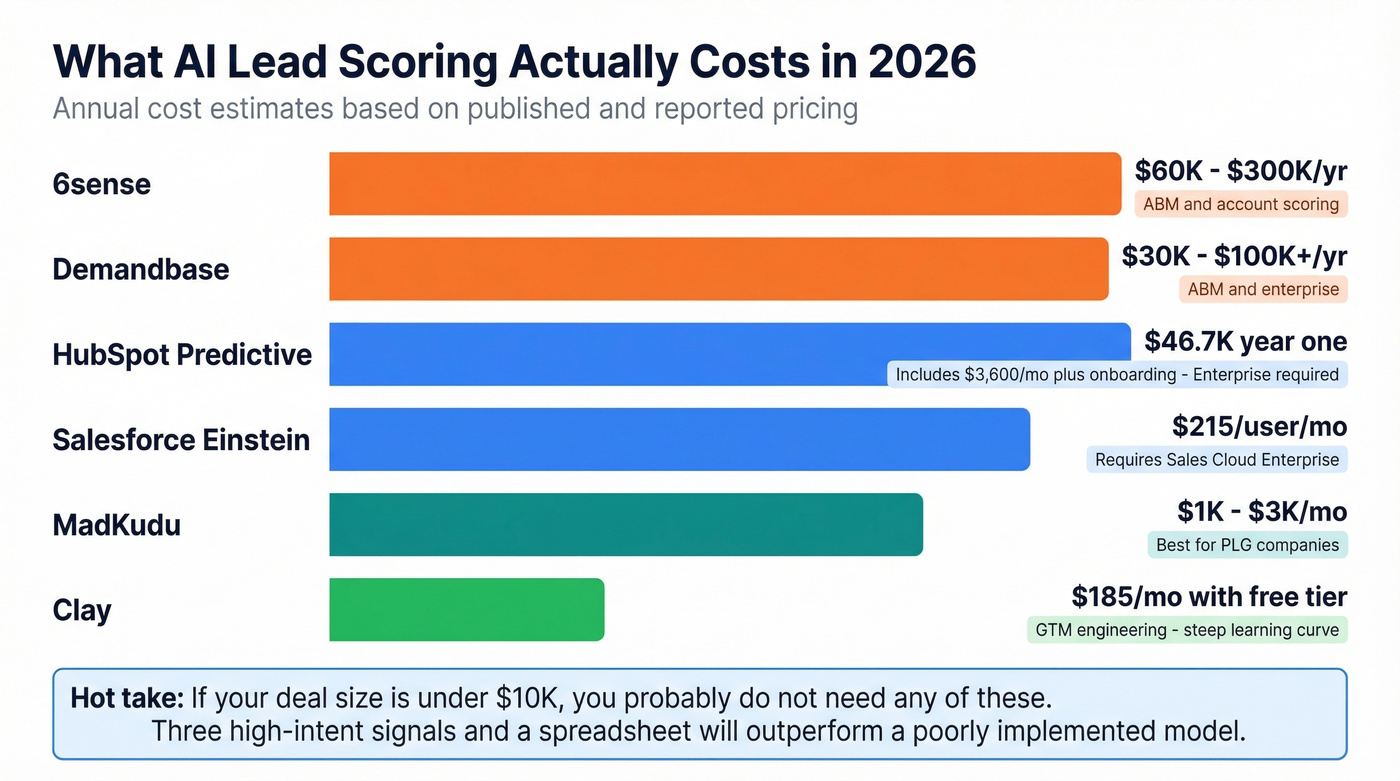

What It Actually Costs

Most vendors don't publish pricing. Here's what you can expect to pay, based on list prices and commonly reported ranges:

| Tool | Best For | Starting Price | Note |
|---|---|---|---|
| Salesforce Einstein | Enterprise SF shops | $215/user/mo | Requires Sales Cloud Enterprise |
| HubSpot Predictive | Mid-market HubSpot | $3,600/mo (10 seats) | $46.7K in year one with onboarding |
| 6sense | ABM / account scoring | $60K-$300K/year | 1-3 month deployment |
| Demandbase | ABM / enterprise | $30K-$100K+/year | Custom pricing only |
| Clay | GTM engineering | $185/mo (free tier available) | Steep learning curve |
| MadKudu | PLG companies | ~$1K-$3K/mo | Segment/Mixpanel integration |

Let's be real about HubSpot: predictive scoring requires Enterprise at $3,600/month with a 10-seat minimum plus $3,500 onboarding. That's $46.7K in year one. HubSpot also offers Breeze Intelligence as an add-on at $45/month for 100 credits - a lighter option if you don't need full Enterprise. If you're buying Enterprise just for scoring, the math doesn't work.
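The year-one HubSpot figure is straightforward arithmetic from the numbers above. The sketch makes the inputs explicit so you can plug in a current quote, since list prices change.

```python
def year_one_cost(monthly: float, months: int = 12, one_time: float = 0) -> float:
    """Recurring subscription for the first year plus any one-time fees."""
    return monthly * months + one_time


# Figures quoted above; confirm against a current quote before budgeting.
hubspot_enterprise = year_one_cost(monthly=3_600, one_time=3_500)
breeze_add_on = year_one_cost(monthly=45)
print(hubspot_enterprise)  # 46700
print(breeze_add_on)       # 540
```

The two totals aren't a like-for-like comparison, because Breeze Intelligence is a lighter, credit-based add-on. Still, the gap shows why buying Enterprise just for scoring rarely pencils out.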

Prospeo sits in a different category - it's the data quality layer that makes every scoring tool work better. Clean, verified, enriched contacts at $0.01 per email with a 92% API match rate and 50+ data points per contact. With a 7-day refresh cycle versus the 6-week industry average, your model trains on current reality instead of stale records.

Picking the Right Tool

Five criteria that actually matter:

- Data accuracy: Does the tool verify its own data, or are you scoring against stale records?

- First-party data support: Can you feed in your own CRM conversion history?

- Scoring transparency: Can you see why a lead scored high, or is it a black box? B2B scoring with machine learning demands explainability so reps trust the output.

- Integration depth: Does it connect natively to your CRM, sequencer, and enrichment tools?

- Pricing model: Per-seat, per-lead, or platform fee? Make sure it scales with your volume.

Start with what you already have. If you're on Salesforce Enterprise, try Einstein first. On HubSpot Enterprise, use their predictive scoring. Buy standalone only when you've outgrown native capabilities. Skip this entirely if you're processing fewer than 50 leads a month - you don't need a machine learning model, you need a prioritized call list.

FAQ

How many leads do I need?

Around 1,000 converted leads and 12-24 months of clean CRM history. Below that threshold, manual scoring with three high-intent signals works just as well and costs nothing.

Can scoring models work with bad CRM data?

No. Dirty data produces unreliable scores - duplicates, outdated titles, and unverified emails create false patterns that corrupt the model. Clean and verify contact data before it reaches your model, not after.

How often should I retrain?

Quarterly at minimum. Audit closed-won deals each quarter to detect model drift. Retrain immediately if your market, ICP, or product offering changes significantly - scoring models degrade faster than most teams expect when conditions shift.

Is it worth it for small teams?

Not until you have volume. Teams processing fewer than 50 leads per month get more value from manual prioritization than from implementing and maintaining a machine learning model. The ROI inflection point typically hits around 200+ inbound leads per month.

Dirty CRM data is the #1 scoring killer. Prospeo's enrichment API returns 50+ verified data points per contact at a 92% match rate - giving your AI model clean firmographic, technographic, and behavioral inputs at $0.01 per email.
