Deal Scoring: What It Is, How to Build It, and Why Most Teams Get It Wrong

Your VP just asked why forecast accuracy is still ±20%. The CRM says you've got $4.2M in pipeline, but half those deals haven't had a next step logged in three weeks. Before you buy a shiny AI tool, read this - because the problem with your deal scoring almost certainly isn't the model. It's the data underneath it.

What Is Deal Scoring?

Deal scoring assigns a numerical value to an open opportunity based on how likely it is to close and how healthy the deal looks right now. It's not lead scoring. Lead scoring measures a contact's engagement level - email opens, page visits, webinar attendance - and lives in marketing's world. Deal scoring lives in sales. It evaluates the opportunity itself: buyer behavior, deal progression, stakeholder engagement, and CRM completeness.

Most teams confuse three distinct score types, and the confusion wrecks their forecasts.

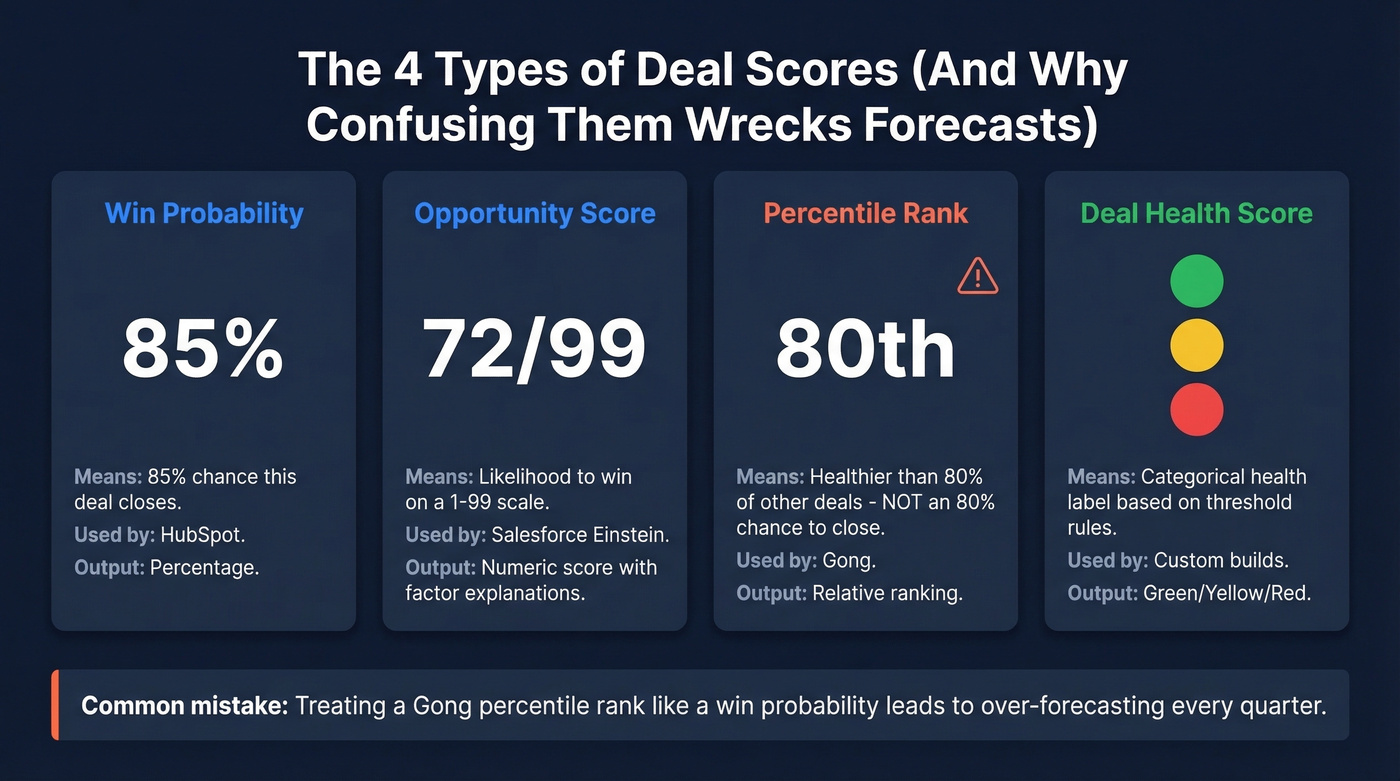

- Win probability is what HubSpot outputs: a percentage estimating the chance a deal closes. A score of 85 means roughly 85% likelihood. Salesforce Einstein Opportunity Scoring outputs a 1-99 score indicating likelihood to win, along with positive and negative factor explanations.

- Percentile rank is what Gong uses. A score of 80 means this deal is healthier than 80% of other deals in the same period, not that it has an 80% chance of closing. That distinction matters enormously, and Gong's own docs spell it out clearly.

- Deal health scores (Green/Yellow/Red) are the simplest version: categorical labels based on threshold rules. Less precise, but easier for reps to act on.

If your team treats a Gong percentile rank like a win probability, you'll over-forecast every quarter.
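To make the gap concrete, here is a minimal sketch that computes a percentile rank and an observed win rate side by side. The raw scores, the outcome history, and both function names are invented for illustration; this is not how Gong or HubSpot computes anything internally.

```python
from bisect import bisect_left

def percentile_rank(score: float, peer_scores: list[float]) -> float:
    """Percent of peer deals with a strictly lower raw score."""
    ordered = sorted(peer_scores)
    return 100.0 * bisect_left(ordered, score) / len(ordered)

def observed_win_rate(outcomes: list[bool]) -> float:
    """Percent of historical deals that actually closed-won."""
    return 100.0 * sum(outcomes) / len(outcomes)

# Hypothetical raw health scores for ten open deals in one quarter.
peers = [12, 18, 25, 31, 40, 44, 52, 60, 71, 90]
rank = percentile_rank(71, peers)  # healthier than 8 of the 10 peers

# Hypothetical history: ten past deals that sat at a similar rank, three won.
history = [True, False, False, True, False, False, False, True, False, False]
win_rate = observed_win_rate(history)

print(f"percentile rank: {rank:.0f}, observed win rate: {win_rate:.0f}%")
```

In this made-up data the deal ranks at 80 while similar deals only closed 30% of the time. Forecasting it at 80% is exactly the over-forecasting trap.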

What You Actually Need

Three decision paths based on where you are right now:

- Under 200 closed deals in the last two years? Use a manual, rules-based scoring framework. You don't have enough data for AI - it'll just produce confident-sounding noise.

- On HubSpot Sales Hub Professional/Enterprise or Salesforce Performance/Unlimited? Turn on native scoring first. It's included in your license on those tiers. Don't spend $50K on Gong before you've proven the concept with what you already have.

- Before you do anything: Fix your CRM data. Scores built on 50% field coverage are random number generators.

The Numbers Behind It

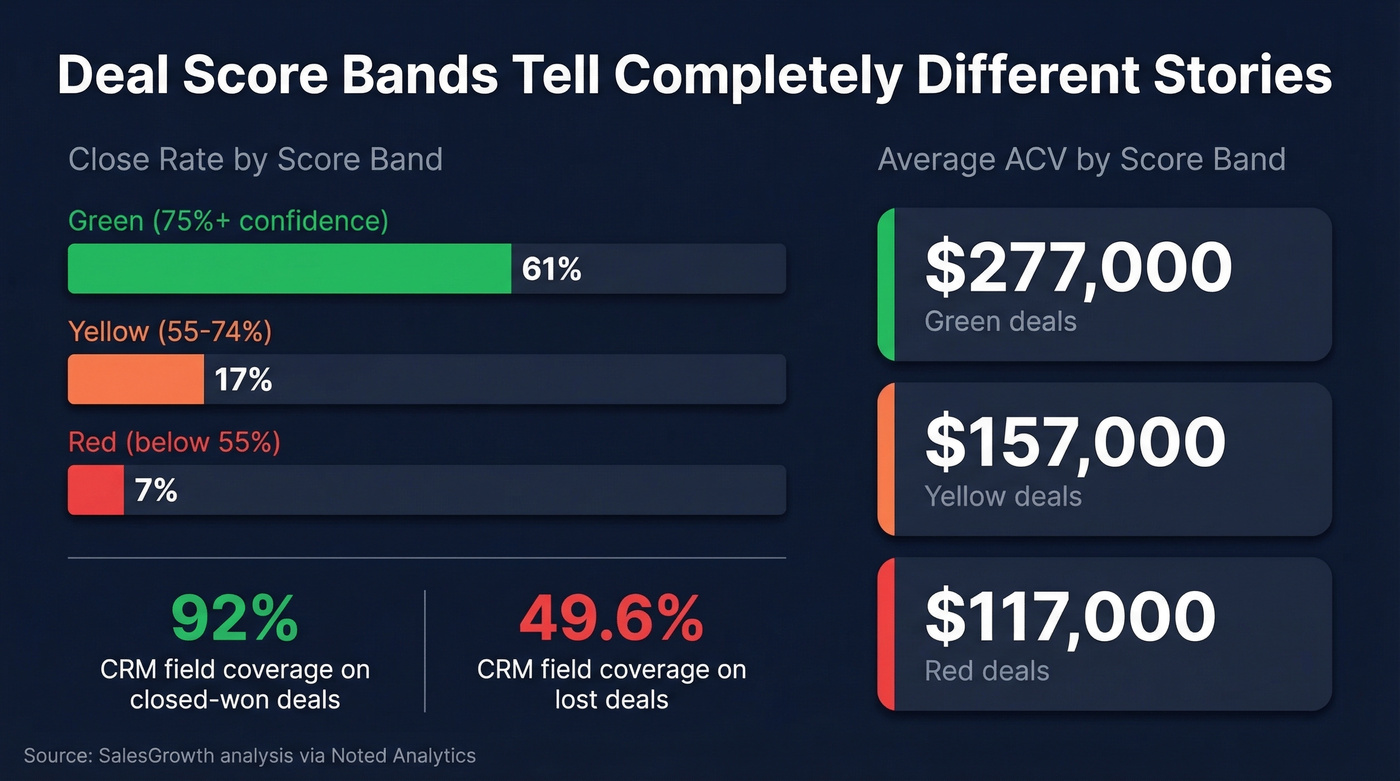

The business case isn't theoretical. A SalesGrowth analysis using data from Noted Analytics found stark differences across score bands.

Green-scored deals (≥75% confidence) closed 61% of the time at an average ACV of $277,000. Yellow deals (55-74%) closed just 17% at $157,000 ACV. Red deals? Seven percent close rate, $117,000 ACV. That's not a marginal difference - it's a completely different business depending on which deals your reps spend time on. Tracking a deal health score at each pipeline stage lets managers intervene before a Yellow deal slides to Red.

The data hygiene correlation is even more striking. Closed-won deals had 92% CRM field coverage. Lost deals sat at 49.6%. Winning deals moved through the pipeline in 74 days on average, while lost deals lingered for 143+ days, slowly decaying into pipeline fiction.

The model is rarely the bottleneck. The CRM is.
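Field coverage is easy to measure yourself. The sketch below assumes deals are exported as dictionaries and that you maintain a list of required fields; the field names and sample deals are hypothetical, not taken from any specific CRM.

```python
# Hypothetical list of fields your team treats as required on every deal.
REQUIRED_FIELDS = ["amount", "close_date", "stage", "next_step", "economic_buyer"]

def field_coverage(deal: dict) -> float:
    """Share of required fields that are filled (None and "" count as empty)."""
    filled = sum(1 for name in REQUIRED_FIELDS if deal.get(name) not in (None, ""))
    return filled / len(REQUIRED_FIELDS)

def average_coverage(deals: list[dict]) -> float:
    """Mean field coverage across a set of deals."""
    return sum(field_coverage(d) for d in deals) / len(deals)

# Two invented deals: one fully documented, one half-empty.
won_deal = {
    "amount": 120000,
    "close_date": "2026-03-31",
    "stage": "Negotiation",
    "next_step": "Legal review call",
    "economic_buyer": "CFO",
}
lost_deal = {"amount": 40000, "stage": "Proposal", "next_step": ""}

print(field_coverage(won_deal), field_coverage(lost_deal))
```

Run this separately on your closed-won and closed-lost exports to see whether your own pipeline shows the same coverage gap.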

Signals That Matter Most

One practitioner on r/SaaSSales shared a telling before-and-after. Their team shifted scoring from static firmographics like job title and company size to behavior signals - repeat pricing page visits, demo watch time, recent email engagement. Pipeline shrank from ~1,000 "active" leads to ~400 real opportunities. Reply rates jumped from 3% to 8% in six weeks.

The lesson: behavior signals beat fit signals. Fit tells you who could buy. Behavior tells you who is buying.

For teams running a structured sales process, MEDDPICC provides a natural scoring framework. 73% of SaaS companies selling above $100K ARR use some version of it, and full adoption is associated with 18% higher win rates and 24% larger deal sizes. Here's how the elements map to scoreable CRM fields:

| MEDDPICC Element | Scoreable CRM Signal |
|---|---|
| Metrics | Business case documented (Y/N) |
| Economic Buyer | EB identified + engaged |
| Decision Criteria | Criteria captured in notes |
| Decision Process | Timeline + steps mapped |
| Paper Process | Legal/procurement engaged |
| Implicate Pain | Pain quantified in $ |
| Champion | Internal advocate active |
| Competition | Competitive landscape known |

Each element becomes a binary or weighted input. A deal with six of eight elements filled scores differently than one with two. Simple, transparent, and it works without any AI at all. This kind of deal health scoring gives managers a shared, repeatable language for pipeline reviews instead of gut-feel guesses.
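A weighted MEDDPICC score can be sketched in a few lines. The weights below are placeholders chosen for illustration, not values from the source; tune them against your own win/loss history.

```python
# Hypothetical weights per MEDDPICC element (they sum to 10 here).
MEDDPICC_WEIGHTS = {
    "metrics": 1.0,
    "economic_buyer": 2.0,
    "decision_criteria": 1.0,
    "decision_process": 1.0,
    "paper_process": 1.0,
    "implicate_pain": 1.5,
    "champion": 1.5,
    "competition": 1.0,
}

def meddpicc_score(flags: dict[str, bool]) -> float:
    """Return a 0-100 score: weight earned divided by total weight."""
    total = sum(MEDDPICC_WEIGHTS.values())
    earned = sum(w for name, w in MEDDPICC_WEIGHTS.items() if flags.get(name, False))
    return round(100 * earned / total, 1)

# A deal with six of eight elements filled (paper process and competition missing).
strong = {name: True for name in MEDDPICC_WEIGHTS}
strong["paper_process"] = False
strong["competition"] = False

# A deal with only two elements filled.
weak = {"metrics": True, "champion": True}

print(meddpicc_score(strong), meddpicc_score(weak))
```

Setting every weight to 1.0 gives the plain binary version, where the score is simply the share of elements filled.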

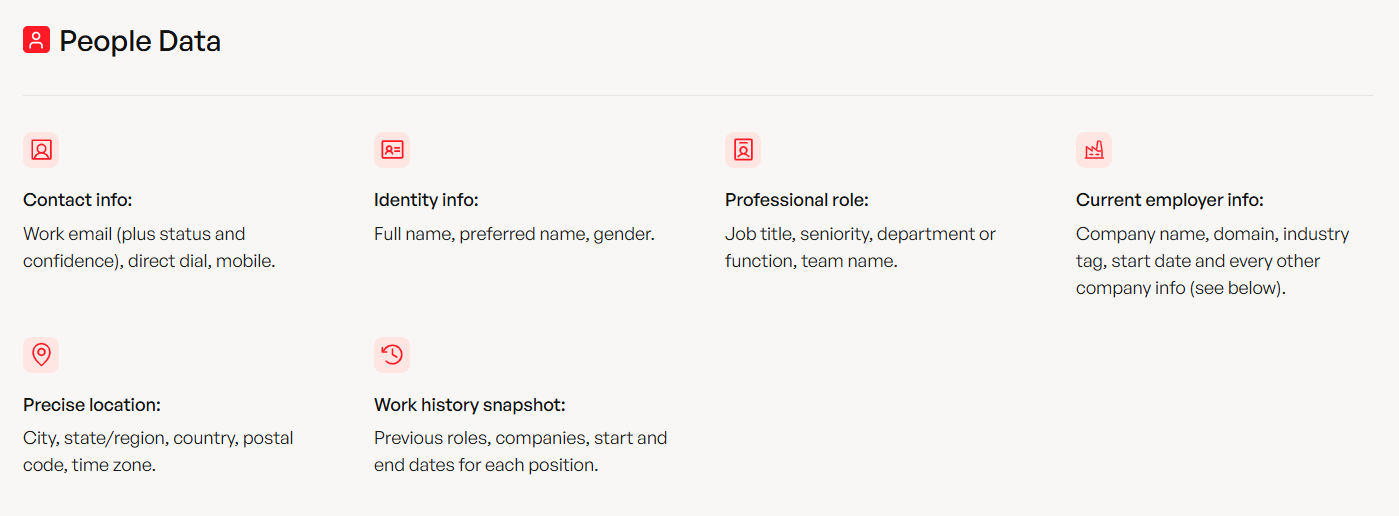

The Data Quality Prerequisite

Here's the thing: 44% of companies lose more than 10% of annual revenue to bad data. Scoring amplifies the problem - a model trained on incomplete CRM records learns the wrong patterns and confidently recommends the wrong deals.

Before you enable any scoring tool, run through this checklist:

- Standardize taxonomies. Industries, lifecycle stages, and deal stages should use picklists, not free text. "Enterprise" and "enterprise" and "Ent." shouldn't be three different segments.

- Enforce required fields. If close date, amount, and stage aren't mandatory, your scoring model has nothing to work with.

- Deduplicate. Match on email and domain. Duplicates poison both training data and live scores.

- Enrich. Fill in missing firmographics, job titles, verified emails, and direct dials. Prospeo handles this at scale - upload a CSV or connect your CRM, and it returns 50+ data points per contact at a 92% API match rate with verified emails, mobile numbers, and company firmographics. Snyk ran this playbook across 50 AEs and dropped their bounce rate from 35-40% to under 5%, generating 200+ new opportunities per month.

- Archive stale records. Anything with no activity in 18+ months is noise, not signal.
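Three of the checklist items (standardizing taxonomies, deduplicating, archiving stale records) can be sketched in plain Python. This assumes records are dictionaries with `email`, `segment`, and `last_activity` keys, all hypothetical names. It matches duplicates on lower-cased email only, leaving domain matching out for brevity, and approximates 18 months as 548 days.

```python
from datetime import date, timedelta

# Hypothetical alias map: free-text variants -> one picklist value.
SEGMENT_ALIASES = {"enterprise": "Enterprise", "ent": "Enterprise", "ent.": "Enterprise"}

def normalize_segment(raw: str) -> str:
    """Map known free-text variants to a canonical value; leave others trimmed."""
    cleaned = raw.strip()
    return SEGMENT_ALIASES.get(cleaned.lower(), cleaned)

def dedupe_by_email(records: list[dict]) -> list[dict]:
    """Keep one record per lower-cased email: the most recently active one."""
    newest: dict[str, dict] = {}
    for rec in records:
        key = rec["email"].strip().lower()
        if key not in newest or rec["last_activity"] > newest[key]["last_activity"]:
            newest[key] = rec
    return list(newest.values())

def split_stale(records: list[dict], today: date, max_age_days: int = 548):
    """Split into (active, stale); 548 days approximates 18 months."""
    cutoff = today - timedelta(days=max_age_days)
    active = [r for r in records if r["last_activity"] >= cutoff]
    stale = [r for r in records if r["last_activity"] < cutoff]
    return active, stale

# Invented sample data.
records = [
    {"email": "Ana@Acme.com", "segment": "Ent.", "last_activity": date(2025, 11, 3)},
    {"email": "ana@acme.com ", "segment": "enterprise", "last_activity": date(2025, 12, 1)},
    {"email": "raj@globex.com", "segment": "Enterprise", "last_activity": date(2023, 2, 14)},
]
unique = dedupe_by_email(records)
active, stale = split_stale(unique, today=date(2026, 1, 15))
segments = {normalize_segment(r["segment"]) for r in records}
```

Required-field enforcement and enrichment are better handled in the CRM itself or by a vendor, so they are left out of this sketch.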

We've seen teams waste six figures on Gong licenses before fixing their CRM data. Don't be that team.

Tools Compared (2026)

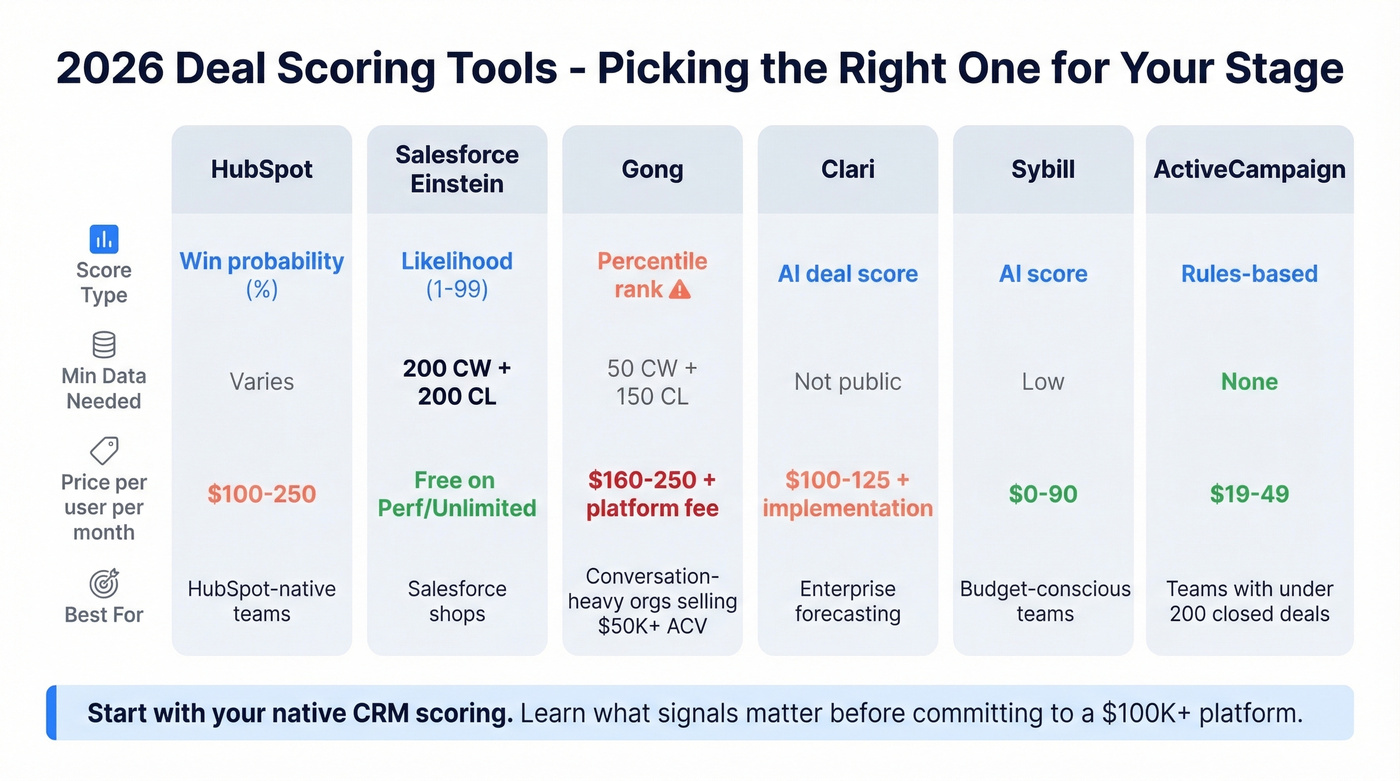

For most teams, HubSpot or Einstein native scoring is the right starting point - you'll learn what signals matter before committing to a $100K+ platform. If you're evaluating platforms, compare your options against dedicated sales forecasting tools to see where deal scoring fits in your stack.

| Tool | Score Type | Min Data (closed-won + closed-lost) | Pricing | Best For |
|---|---|---|---|---|
| HubSpot | Win probability (%) | Varies | $100-250/seat/mo | HubSpot-native teams |
| Einstein | Win likelihood (1-99) | 200 won + 200 lost | Free on Performance/Unlimited | Salesforce shops |
| Gong | Percentile rank | 50 won + 150 lost | ~$160-250/user/mo | Conversation-heavy orgs |
| Clari | AI deal score | Not public | ~$100-125/user/mo | Enterprise forecasting |
| Sybill | AI score | Low | $0-90/user/mo | Budget-conscious teams |
| ActiveCampaign | Rules-based | None | ~$19-49/user/mo | Teams with <200 closed deals |

HubSpot Sales Hub

Use this if you're already on HubSpot Sales Hub Professional or Enterprise and want to test scoring without adding another vendor. HubSpot's deal scores reflect a win probability percentage, included in your existing license. New deals get scored within about 36 hours (up to 48 during peak processing), and scores only shift when the change exceeds a ±3% significance threshold - so you won't see noise from minor CRM edits. Skip this if you need transparency into which signals drive the score. HubSpot's model is largely a black box, and the consensus on r/hubspot is that validating accuracy is harder than it should be.

Key limitation: Black-box model with no signal-level explainability.

Salesforce Einstein

Einstein Opportunity Scoring outputs a 1-99 deal likelihood score with positive and negative factor explanations. It's free on Performance and Unlimited editions, making it the obvious first move for Salesforce shops. The catch: you need 200 closed-won and 200 closed-lost opportunities in the last two years, and the model only refreshes monthly.

One detailed 18-month review reported close prediction accuracy of just 52% - though the "at-risk" flags were more useful, correctly identifying deals needing intervention about 68% of the time. An upside worth noting: Einstein Activity Capture improved email logging compliance from ~40% to 85% in the same org, which directly feeds better scoring inputs.

Key limitation: Monthly refresh only; 52% close prediction accuracy in real-world testing.

Gong

Gong's deal likelihood scoring pulls from 300+ signals - CRM data, call transcripts, email engagement, multi-threading patterns. It's the most signal-rich option on this list. But the score is a percentile rank, not a win probability, and it's expensive. Expect $160-250/user/month plus a $5K-$50K platform fee. Year-one TCO for a 50-person team runs $88.5K-$121K, a figure that implies per-seat rates negotiated below that range, since 50 seats at $160/month already comes to $96K before the platform fee.

You also need 50 closed-won and 150 closed-lost deals from the last two years before scores generate, and initial processing can take up to two weeks. For teams selling $50K+ ACV deals, the ROI math works. For teams closing deals under $20K, Gong is overkill.

Key limitation: $88.5K+ year-one TCO; percentile rank often misread as win probability.

Clari

Clari's RevAI includes AI-powered scoring and AI-driven forecasting. The fact that Clari still doesn't publish pricing in 2026 is absurd for a tool targeting revenue operations. Expect ~$100-125/user/month plus $15K-$75K in implementation fees.

Key limitation: No public pricing; enterprise-grade implementation required.

Sybill

Budget-friendly AI scoring. Plans run $0-90/user/month. The signal set is smaller than Gong's, but for teams that want AI-assisted deal insights without a five-figure commitment, it's worth testing.

Key limitation: Smaller signal set than conversation intelligence platforms.

ActiveCampaign

Rules-based scoring for SMB and mid-market teams. No AI, no ML - just weighted criteria you define. That's a feature, not a bug, when you've got fewer than 200 closed deals. You don't have enough data for machine learning anyway.

Key limitation: No AI/ML; manual rule maintenance required.

How to Build a Framework

For teams building a rules-based model or supplementing AI with manual inputs, the SalesGrowth five-area framework provides a solid foundation:

- Problem - Has the buyer articulated a specific, quantified problem?

- Root cause - Do they understand why the problem exists?

- Impact/cost of inaction - What happens if they do nothing?

- Buying process - Are stakeholders, timeline, and decision criteria mapped?

- Next steps - Is there a concrete, committed next action?

Score each area 0-20 and sum for a 0-100 total. Set thresholds: Green ≥75, Yellow 55-74, Red <55. Weight areas based on what predicts wins in your specific business - for most B2B teams, "buying process" and "next steps" carry more predictive weight than "problem." The resulting scores give your team a shared language for pipeline reviews instead of gut-feel guesses. If you want a tighter definition of what counts as a real signal, use a checklist for identifying buying signals before you assign weights.
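The five-area framework translates directly into code. The sketch below uses the 0-20 per-area scale and the Green/Yellow/Red thresholds described above; the area keys and function names are illustrative, and it applies equal weighting, so per-area weights would be an extension.

```python
AREAS = ("problem", "root_cause", "impact", "buying_process", "next_steps")

def total_score(area_scores: dict[str, int]) -> int:
    """Sum the five area scores (each 0-20) into a 0-100 total."""
    for area in AREAS:
        if not 0 <= area_scores[area] <= 20:
            raise ValueError(f"{area} must be between 0 and 20")
    return sum(area_scores[area] for area in AREAS)

def band(total: int) -> str:
    """Map a 0-100 total to a band: Green >= 75, Yellow 55-74, Red < 55."""
    if total >= 75:
        return "Green"
    if total >= 55:
        return "Yellow"
    return "Red"

# Invented example: strong on process and next steps, weaker on impact.
deal = {"problem": 18, "root_cause": 15, "impact": 12, "buying_process": 16, "next_steps": 17}
print(total_score(deal), band(total_score(deal)))
```

A rep-facing form with five 0-20 inputs and this function behind it is enough to start; you can layer weights on once you have a few quarters of outcomes to check against.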

Let's be honest: if your ACV is under $25K, you probably don't need AI at all. A five-question framework that reps actually fill out beats a 50-variable AI model trained on a half-empty CRM. In our experience, the five-area framework outperforms more complex models for any team under 500 closed deals.

Why 95% of AI Pilots Fail

An MIT Media Lab NANDA Initiative report reviewed 300 AI projects, interviewed 150 executives, and surveyed 350 employees. The finding: 95% of AI pilot projects failed to deliver measurable financial impact.

The failure drivers weren't about model sophistication. They were about organizational learning gaps, workflow design, and data quality. Teams bought AI scoring tools, plugged them into broken CRMs, and wondered why the scores didn't match reality. Purchased AI solutions succeeded 67% of the time, while internal builds succeeded only about 22%. The implication is clear - use your CRM's native scoring or a proven vendor before trying to build a custom model.

How to Measure Accuracy

Most CRMs don't include a score accuracy report. That's a product failure, frankly.

Here's how to build your own:

- Export closed deals with their scores at various pipeline stages.

- Segment by score band - Green, Yellow, Red - at each stage.

- Compare predicted vs actual - what percentage of Green deals actually closed? What percentage of Red deals were correctly flagged?

- Recalibrate quarterly. Markets shift, buyer behavior changes, and your model needs to keep up. Reassess whether your deal likelihood thresholds still align with actual close rates each quarter.
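The backtest steps above can be sketched as a small function. It assumes you've exported closed deals as `(band, won)` pairs, where the band is the one recorded at a given pipeline stage; the sample data is invented.

```python
from collections import defaultdict

def close_rate_by_band(deals: list[tuple[str, bool]]) -> dict[str, float]:
    """Actual close rate per score band from (band, won) pairs."""
    counts = defaultdict(lambda: [0, 0])  # band -> [wins, total]
    for deal_band, won in deals:
        counts[deal_band][0] += int(won)
        counts[deal_band][1] += 1
    return {b: wins / total for b, (wins, total) in counts.items()}

# Invented export: band at proposal stage, final outcome.
closed_deals = [
    ("Green", True), ("Green", True), ("Green", False), ("Green", True),
    ("Yellow", False), ("Yellow", True), ("Yellow", False), ("Yellow", False),
    ("Red", False), ("Red", False),
]
rates = close_rate_by_band(closed_deals)
for name in ("Green", "Yellow", "Red"):
    print(f"{name}: {rates[name]:.0%} actual close rate")
```

If Green deals aren't closing noticeably more often than Yellow ones in your own data, the thresholds or the inputs need recalibrating before anyone forecasts off the scores.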

The r/hubspot community has been asking for exactly this kind of reporting. Until platforms build it natively, even a quarterly backtest is better than blindly trusting scores you've never validated. If your pipeline is still bloated after backtesting, start with the root causes of your sales pipeline challenges and tighten rep execution with a consistent set of sales activities.

FAQ

What's the difference between deal scoring and lead scoring?

Lead scoring measures contact engagement and is primarily a marketing tool. Deal scoring evaluates an open sales opportunity based on buyer behavior, progression velocity, and CRM data completeness. Lead scoring asks "is this person interested?" Deal scoring asks "is this deal going to close?"

How many closed deals do I need for AI scoring?

Salesforce Einstein requires 200 closed-won and 200 closed-lost opportunities. Gong needs 50 closed-won and 150 closed-lost. Below those thresholds, use a rules-based framework - AI trained on too few examples produces unreliable scores.
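As a quick self-check, the thresholds quoted in this article can be encoded in a lookup. The numbers are the ones stated here for deals closed in the last two years. Confirm them against each vendor's current documentation before relying on them.

```python
# Minimum (closed_won, closed_lost) counts as quoted in this article.
MIN_HISTORY = {
    "einstein": (200, 200),
    "gong": (50, 150),
}

def eligible_tools(closed_won: int, closed_lost: int) -> list[str]:
    """Tools whose quoted minimums are met; an empty list means stay rules-based."""
    return [
        tool
        for tool, (min_won, min_lost) in MIN_HISTORY.items()
        if closed_won >= min_won and closed_lost >= min_lost
    ]

print(eligible_tools(80, 160))
```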

Is a score of 80 an 80% chance to close?

It depends on the tool. HubSpot outputs win probabilities, so 80 roughly means 80% likelihood. Gong's score is a percentile rank - 80 means healthier than 80% of comparable deals, not an 80% win probability. Always check which score type your platform uses before interpreting numbers.

Can I score deals without AI?

Yes. Rules-based frameworks outperform AI for teams with fewer than 200 closed deals. Score opportunities across five to eight qualification criteria like the MEDDPICC elements above, set Green/Yellow/Red threshold bands, and review weekly in pipeline meetings.