The ICP Framework That Actually Lives in Your CRM (Not a Slide Deck)

A RevOps lead we worked with ran the numbers on their pipeline and found something brutal: two-thirds of their demos were with companies that would never buy. Close rate? 12%. Not because the reps were bad - because the targeting was. The ICP lived on a slide deck from a 2023 offsite, and nobody had looked at it since.

Stop building ICPs in workshops. Build them in spreadsheets, score them with a rubric, and wire them into your CRM. That's what a proper ICP framework does.

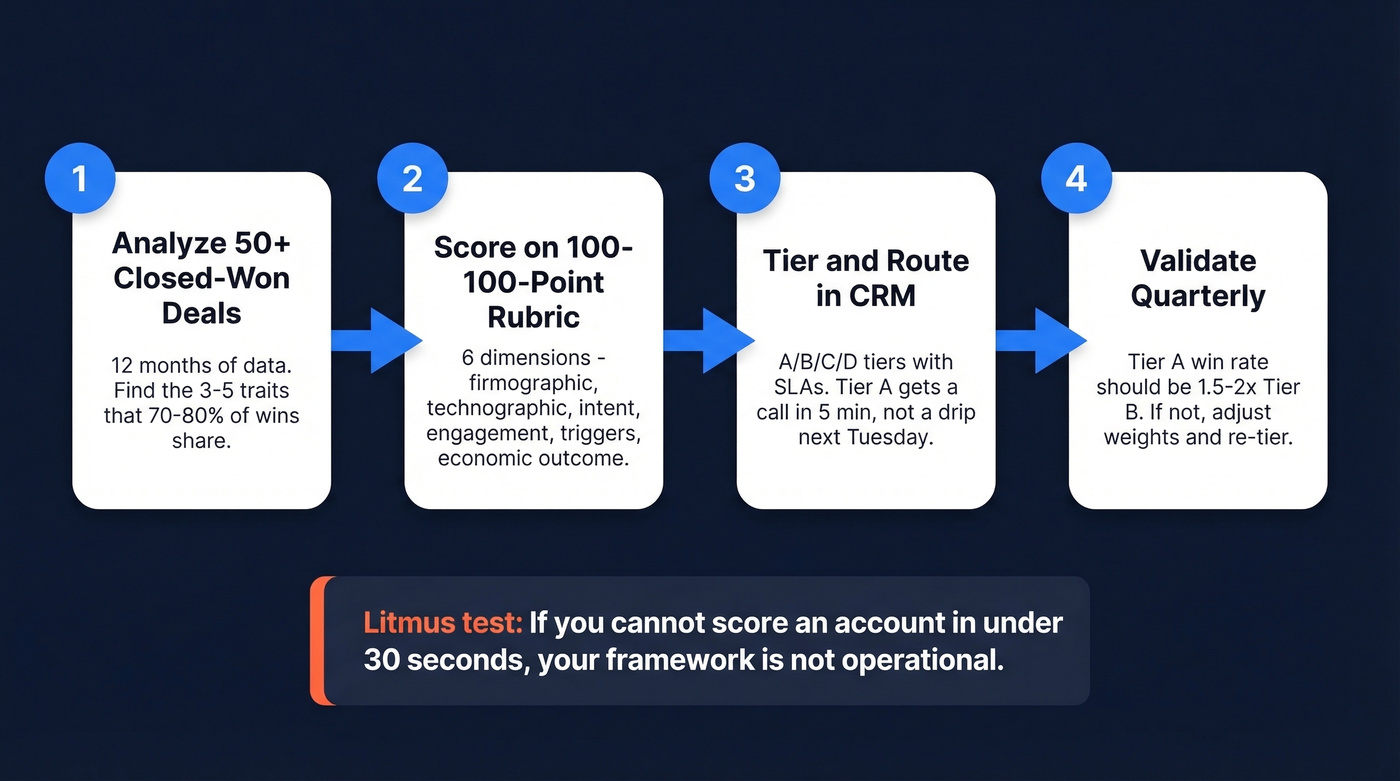

The Framework in Four Moves

If you're short on time, here's the entire thing:

- Analyze 50+ closed-won deals from the last 12 months. You'll find 70-80% share 3-5 common traits. Those traits are your ICP's skeleton.

- Score accounts on a 100-point rubric across six dimensions: firmographic, technographic, intent, engagement, triggers, and economic outcome.

- Tier accounts A/B/C/D and wire scoring into CRM routing with SLAs - Tier A gets a call within 5 minutes, not a drip sequence next Tuesday.

- Validate quarterly. If Tier A win rates aren't 1.5-2x Tier B, your ICP is wrong. Adjust the weights and re-tier.

Here's the litmus test you should carry through this entire guide: if you can't score an account against your ICP in under 30 seconds, your framework isn't operational.

Most ICP guides stop at definition. This one gives you a scoring rubric, negative scoring guardrails, routing SLAs, and validation benchmarks - everything you need to make your ideal customer profile framework actually work inside a CRM.

What an ICP Framework Actually Is

Most teams conflate three concepts that operate at completely different altitudes.

| Concept | What It Defines | Scope | Example |
|---|---|---|---|
| TAM | Total addressable market | Entire market | $4.2B global HR tech |
| ICP | Best-fit company type | Filtered segment | Series B+ SaaS, 100-500 emp, using Salesforce |
| Buyer Persona | Individual decision-maker | Person within ICP company | VP of Sales, 5+ yrs exp, manages 10+ reps |

Your TAM is the ocean. Your ICP is the fishing spot. Your buyer persona is the specific fish. You need all three, but the order matters - ICP comes before personas, because the same VP of Sales behaves completely differently at a 50-person startup versus a 2,000-person enterprise.

Within every ICP company, there's a buying committee with distinct roles: the decider (chooses the solution), the payer (signs the check), and the user (lives with it daily). These are often three different people. Your ICP model needs to account for all of them, because marketing to the user when the payer has veto power is how deals stall at stage 3.

Your ICP isn't a permanent truth - it's a hypothesis. The situational ICP approach frames it as a defined timeframe, a specific goal, and an honest assessment of your current capabilities. The best teams treat every 3-6 month window as a new hypothesis cycle: set milestones, measure, pivot if the data says you're wrong.

Why Your Ideal Customer Profile Matters

The business case isn't abstract. Companies using lead and account scoring models see a 77% boost in lead generation ROI compared to those flying blind. Tightly defined ICPs drive 25-30% improvements in marketing ROI by cutting spend on prospects who were never going to convert.

The flip side is uglier. When your ICP is wrong - or worse, when it doesn't exist - you get extended onboarding cycles for customers who shouldn't have been sold in the first place. Support tickets spike. Churn accelerates. Your brand takes hits from customers who were a bad fit and who tell everyone about it.

Look, most teams don't have an ICP problem. They have an operationalization problem. The ICP exists somewhere - in a founder's head, in a strategy deck, in a Notion doc nobody reads. The consensus on r/sales is that these profiles become shelfware: built once in a workshop, never wired into anything. That's exactly why this guide focuses on scoring and routing, not just definition.

Five Mistakes That Break Your Profile

1. Guessing without data. The most common ICP process is a whiteboard session where marketing and sales debate who the "ideal" customer is based on vibes. No closed-won analysis, no profitability data, no pattern recognition. You end up with a description that sounds right but doesn't predict anything.

2. Too broad to be useful. "Mid-market SaaS companies" isn't an ICP - it's a TAM description. If your profile doesn't narrow your total addressable market by at least 60-70%, it's not doing its job. You need specifics: revenue range, headcount band, tech stack requirements, geographic focus.

3. Ignoring profitability. The customers who respond to your marketing aren't necessarily the customers who make you money. MarTech's analysis of ICP mistakes highlights this: resonance doesn't equal profit. The 80/20 rule applies - 20% of your customers likely drive 80% of your profit. Use activity-based costing to figure out which segments are actually profitable after support, onboarding, and churn costs. And consider your adopter type: early adopters tolerate rough edges, but widespread adopters churn quietly if reliability drops. Your ICP should reflect which type you're targeting right now.

4. No scoring model. Binary fit/no-fit is how most teams operate. An account either "matches" the ICP or it doesn't. That's like grading exams pass/fail when you need a curve. Without a numerical score, you can't tier, you can't route, and you can't measure whether your ICP is actually predictive.

5. Never validating. Your ICP from 18 months ago was built for a different product, a different market, and a different competitive set. Treat every 3-6 months as a new hypothesis window - with a defined timeframe, a specific goal, and an honest assessment of your current capabilities.

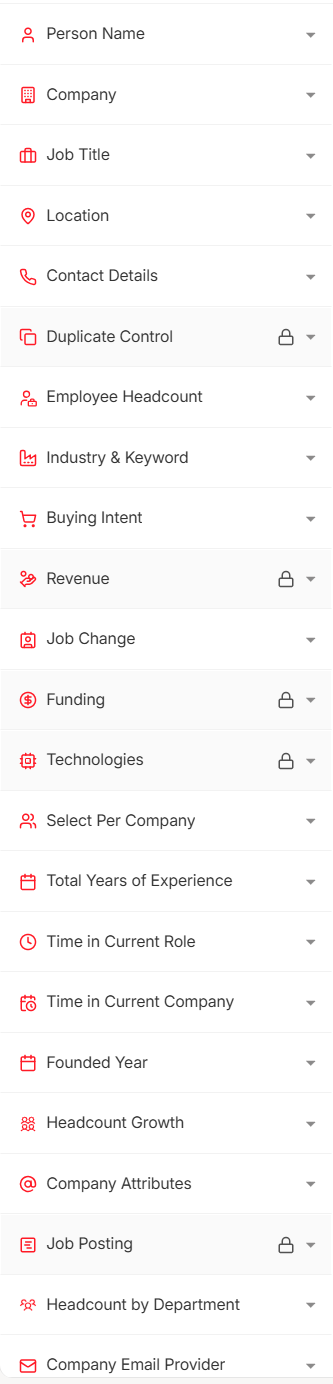


How to Build Your Ideal Customer Model

Step 1: Mine Closed-Won Deals

Pull your last 50+ closed-won deals from the past 12 months. Tag every one with firmographic attributes (industry, revenue, headcount, geography) and technographic attributes (CRM, marketing stack, data tools). You'll almost always find that 70-80% share 3-5 common traits. Those shared traits are your ICP's foundation - not your assumptions, not your aspirations.
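The trait count can be done in a spreadsheet, but here is a minimal Python sketch of the same idea. The trait labels, the five sample deals, and the `common_traits` helper are all hypothetical; a real analysis would run over 50+ deals exported from your CRM, and the 70% cutoff is simply the low end of the range mentioned above.

```python
from collections import Counter

def common_traits(deals, min_share=0.7):
    """Return {trait: share} for traits present in at least min_share of deals."""
    if not deals:
        return {}
    # set(deal) ensures a trait tagged twice on one deal is only counted once.
    counts = Counter(trait for deal in deals for trait in set(deal))
    return {
        trait: count / len(deals)
        for trait, count in counts.items()
        if count / len(deals) >= min_share
    }

# Hypothetical sample data, far smaller than the 50+ deals you would really use.
deals = [
    ["industry:saas", "crm:salesforce", "size:100-500", "geo:na"],
    ["industry:saas", "crm:salesforce", "size:100-500", "geo:emea"],
    ["industry:saas", "crm:hubspot", "size:100-500", "geo:na"],
    ["industry:saas", "crm:salesforce", "size:500-1000", "geo:na"],
    ["industry:fintech", "crm:salesforce", "size:100-500", "geo:na"],
]

shared = common_traits(deals)
for trait, share in sorted(shared.items()):
    print(f"{trait}: {share:.0%} of closed-won deals")
```

Traits that clear the cutoff become candidate ICP attributes; everything else is noise until the interviews in Step 2 say otherwise.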

Step 2: Interview Top Customers

Data tells you what happened. Interviews tell you why.

Talk to your top 10 customers - the ones with the highest NPS, the fastest onboarding, the biggest expansion revenue. Ask what problem they were solving, what alternatives they evaluated, and what almost stopped them from buying. The patterns here fill in the behavioral and trigger dimensions that CRM data misses.

Step 3: Document Your Attributes

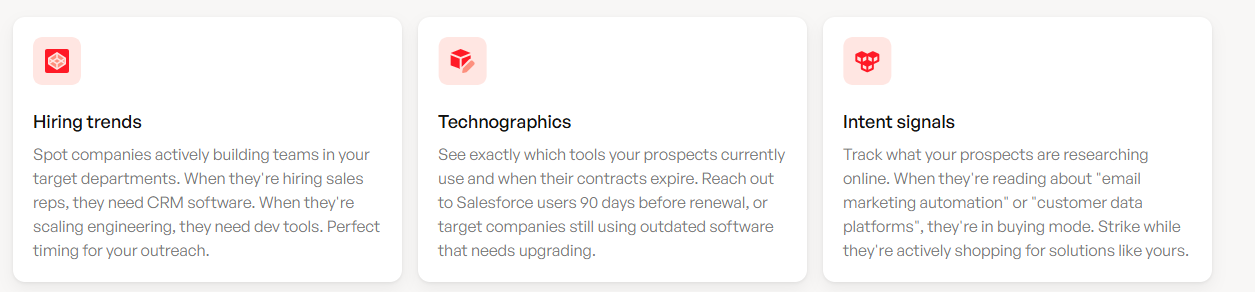

Organize everything into four categories:

- Firmographic: industry, revenue band, employee count, geography, growth stage.

- Technographic: specific tools in their stack that indicate compatibility or need.

- Behavioral: how they engage with your content, what pages they visit, how they enter your funnel.

- Trigger events: funding rounds, leadership changes, job postings, tech migrations.

Each category gets weighted in your scoring model - we'll build that next.

Step 4: Define Your Anti-ICP

This is the step most teams skip, and it's the most valuable.

Document who you should never sell to. Which industries churn at 3x your average? Which company sizes require custom onboarding that destroys your margins? Which tech stacks create integration nightmares? Your anti-ICP protects your pipeline from deals that close but never succeed.
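One way to answer the churn question with data rather than anecdotes is sketched below. It assumes you can export churned and total customer counts per segment; the segment names, the numbers, and the `anti_icp_segments` helper are made up for illustration.

```python
def anti_icp_segments(segments, multiple=3.0):
    """Return segments whose churn rate is at least `multiple` x the overall average.

    segments maps a segment name to a (churned_customers, total_customers) pair.
    """
    total_churned = sum(churned for churned, _ in segments.values())
    total_customers = sum(total for _, total in segments.values())
    if total_customers == 0 or total_churned == 0:
        return []  # no customers or no churn: nothing to flag
    average = total_churned / total_customers
    return sorted(
        name
        for name, (churned, total) in segments.items()
        if total > 0 and churned / total >= multiple * average
    )

# Hypothetical numbers: (churned, total) per industry segment.
segments = {
    "saas": (4, 100),
    "agency": (9, 30),
    "fintech": (2, 70),
}

print(anti_icp_segments(segments))
```

Here the overall churn rate is 7.5%, so only a segment churning at 22.5% or more gets flagged. Small segments can swing wildly, so treat a flag as a prompt to investigate, not an automatic exclusion.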

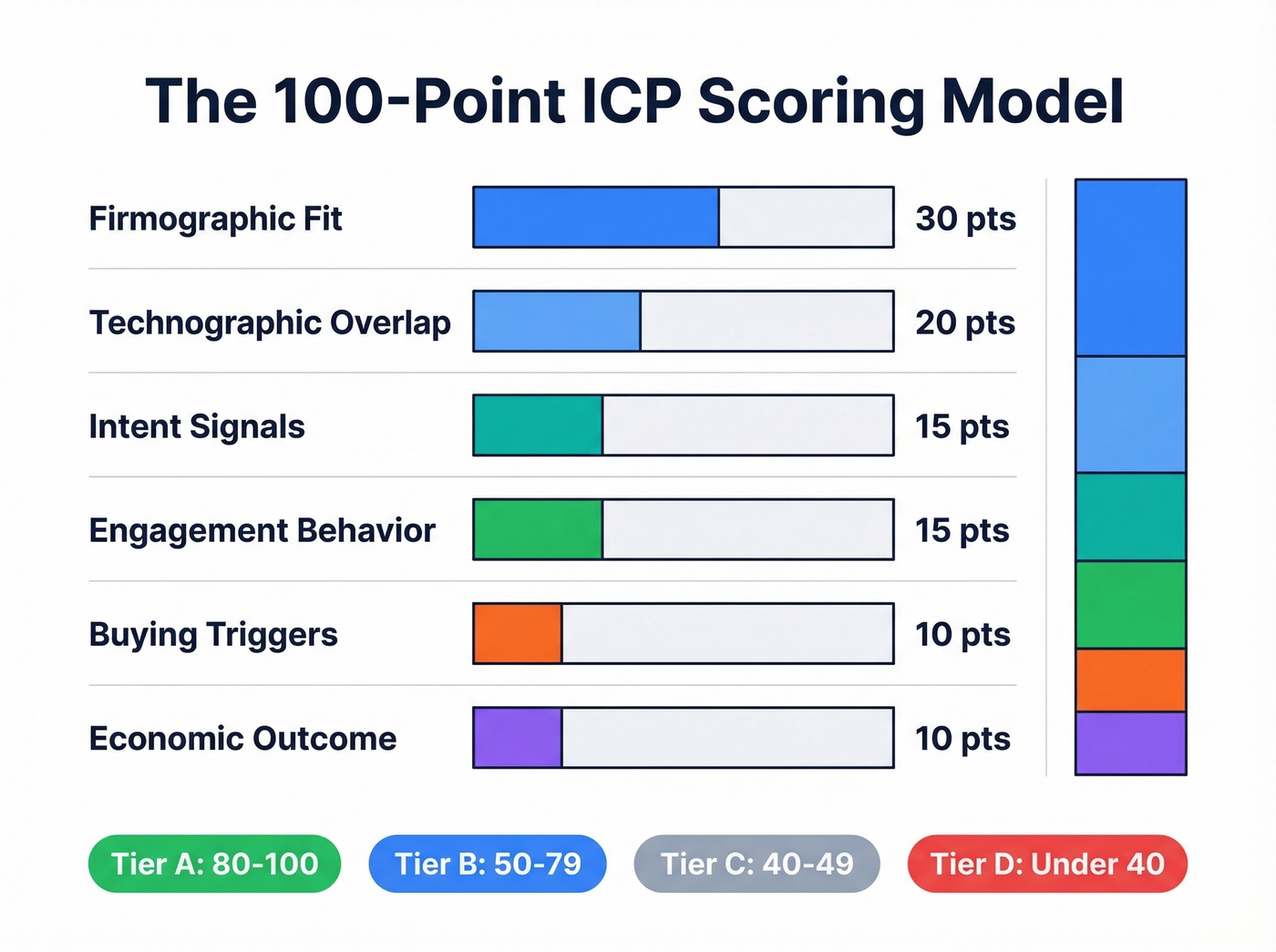

The 100-Point Scoring Model

A scoring model turns your ICP from a description into a decision engine. Here's the six-dimension model that works best for B2B SaaS teams.

How the Weights Work

| Dimension | Weight | What It Measures | Example Criteria |
|---|---|---|---|
| Firmographic fit | 30% | Industry, revenue, headcount, geo | SaaS, $10-100M ARR, 100-500 emp |
| Technographic overlap | 20% | Tech stack alignment | Uses Salesforce + Outreach + Segment |
| Intent signals | 15% | Active research on your category | Bombora topic surge in last 14 days |
| Engagement behavior | 15% | Interaction with your content/site | Pricing page visit, webinar attendance |
| Buying triggers | 10% | Events indicating readiness | New funding, leadership change, job posts |
| Economic outcome | 10% | Predicted LTV and profitability | ACV >$30K, <90-day implementation |

Firmographic fit gets the heaviest weight because it's the most stable signal - a company's industry and size don't change week to week. Intent and engagement are weighted lower individually but together they represent 30% of the score, which gives recent buying behavior real pull without letting a single webinar attendance override fundamental fit.

Let's score a real account to make this concrete:

| Dimension | Max | Score | Rationale |
|---|---|---|---|
| Firmographic | 30 | 25 | SaaS, $45M ARR, 280 emp; APAC HQ (-5) |
| Technographic | 20 | 20 | Salesforce + Outreach + Segment - full match |
| Intent | 15 | 10 | Bombora surge, no first-party touch yet |
| Engagement | 15 | 5 | One ebook download 3 weeks ago (decaying) |
| Triggers | 10 | 10 | Series C closed 6 wks ago, 3 SDR job posts |
| Economic | 10 | 5 | Est. ACV $25K (slightly below ideal) |
| Total | 100 | 75 | Tier B+ - nurture with targeted sequence |

This account scores 75/100 - solidly Tier B. The firmographic and technographic fit is strong, but engagement is weak and the economic outcome is slightly below threshold. The right play is a targeted sequence, not a cold call blitz. That's the power of scoring: it doesn't just tell you who to pursue, it tells you how.
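The arithmetic behind that total is simple enough to sketch in a few lines of Python. The dimension keys and the `total_score` function are illustrative names, not fields from any particular CRM; the caps come straight from the weights table.

```python
# Maximum points per dimension, taken from the weights table (sums to 100).
MAX_POINTS = {
    "firmographic": 30,
    "technographic": 20,
    "intent": 15,
    "engagement": 15,
    "triggers": 10,
    "economic": 10,
}

def total_score(points):
    """Sum per-dimension points after checking each against its cap."""
    for dimension, value in points.items():
        if dimension not in MAX_POINTS:
            raise ValueError(f"unknown dimension: {dimension}")
        if not 0 <= value <= MAX_POINTS[dimension]:
            raise ValueError(f"{dimension} must be between 0 and {MAX_POINTS[dimension]}")
    # Dimensions with no data yet simply contribute zero.
    return sum(points.get(dimension, 0) for dimension in MAX_POINTS)

# The worked example from the table above.
account = {
    "firmographic": 25,
    "technographic": 20,
    "intent": 10,
    "engagement": 5,
    "triggers": 10,
    "economic": 5,
}

print(total_score(account))  # 75
```

Validating against the caps matters more than it looks: without it, one over-enthusiastic engagement score can quietly outweigh firmographic fit.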

Tier thresholds: A (80-100) - pursue immediately with senior rep attention. B (60-79) - nurture with sequences and SDR qualification. C (40-59) - marketing nurture only. D (<40) - deprioritize, no active outreach.

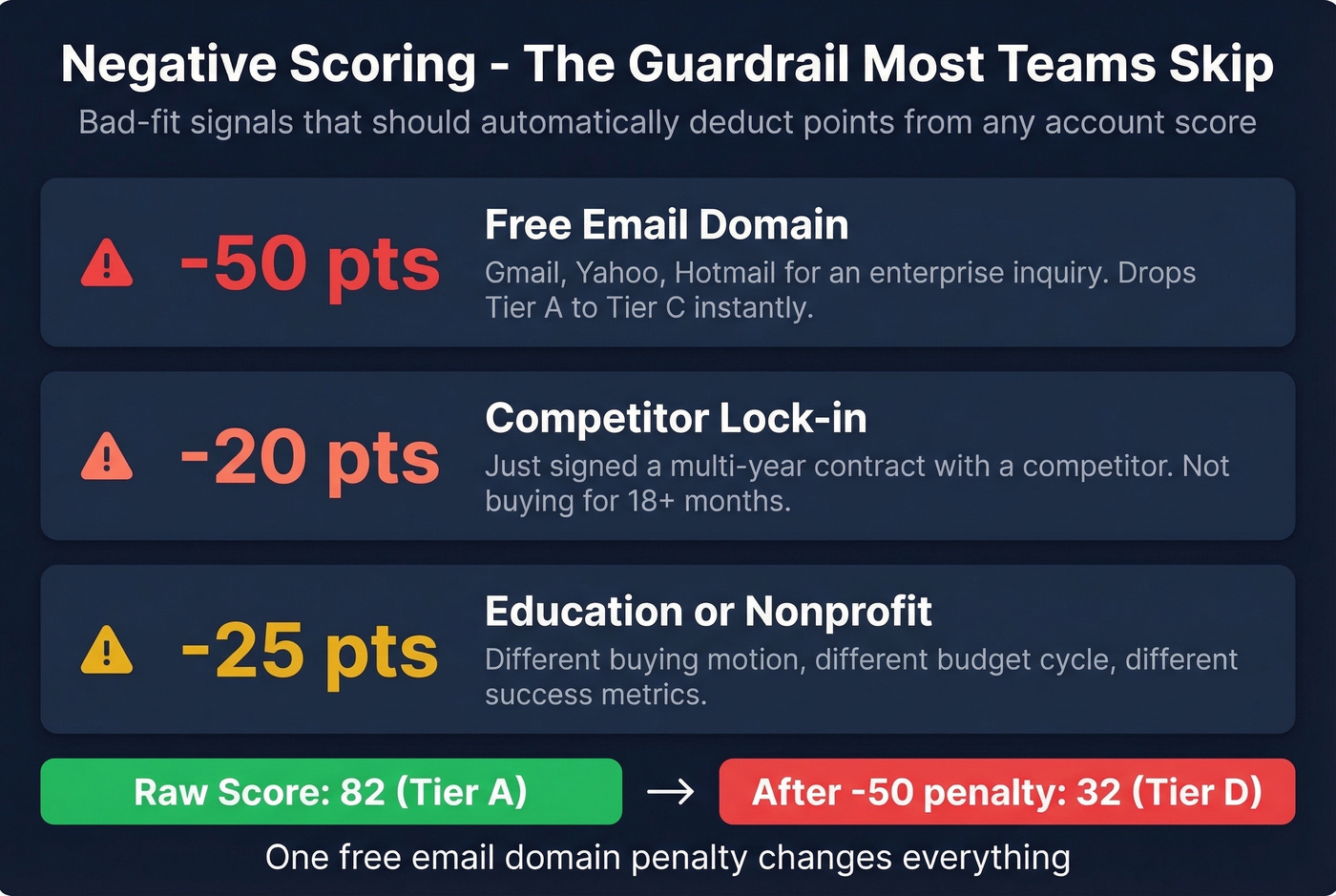

Negative Scoring: The Guardrail Most Teams Skip

Most rubrics only add points. That's a problem, because it means a fundamentally bad-fit account can score well if they happen to show intent and engagement. Negative scoring fixes this.

A prospect using a @gmail.com address for an "enterprise" inquiry should lose points, not gain them.

- Free email domain (Gmail, Yahoo, Hotmail): -50 points. This alone drops a Tier A account to Tier C or below, which is exactly the point.

- Competitor lock-in (just signed a multi-year contract): -20 points. They're not buying for 18+ months.

- Education/nonprofit (if you sell enterprise SaaS): -25 points. Different buying motion, different budget cycle.

- Legacy tech stack (no modern CRM, no marketing automation): -30 points. Integration costs eat your margins.

We've seen teams waste entire quarters chasing "high-intent" accounts that scored -70 once the guardrails were applied. Don't be that team.
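Here is a minimal sketch of how those guardrails might be applied on top of a base score. The penalty values are the ones listed above; the flag names, the three-domain list, and both helper functions are assumptions for illustration, and a production list of free email providers would be much longer.

```python
# Illustrative subset; a real deployment would use a fuller list of free providers.
FREE_EMAIL_DOMAINS = {"gmail.com", "yahoo.com", "hotmail.com"}

# Penalty values from the list above; the flag names are hypothetical.
PENALTIES = {
    "free_email": -50,
    "competitor_lock_in": -20,
    "education_nonprofit": -25,
    "legacy_stack": -30,
}

def uses_free_email(email):
    """True if the address belongs to one of the known free email domains."""
    domain = email.rsplit("@", 1)[-1].strip().lower()
    return domain in FREE_EMAIL_DOMAINS

def apply_penalties(base_score, flags):
    """Add each distinct penalty once. The result is deliberately not clamped at zero."""
    return base_score + sum(PENALTIES[flag] for flag in set(flags))

print(apply_penalties(85, ["free_email"]))
print(apply_penalties(30, ["free_email", "competitor_lock_in", "legacy_stack"]))
```

Leaving the result unclamped is a design choice: a score well below zero tells you the account is disqualified several times over, which is more useful than a flat 0.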

Operationalize Your ICP in the CRM

A scoring model that lives in a spreadsheet is only marginally better than one that lives on a slide deck. The goal is a unified, CRM-native scoring engine with automated routing so every team works from the same source of truth.

Signal Decay and Intent Validation

Not all signals age equally. A pricing page visit from yesterday is gold. The same visit from 45 days ago is noise.

Apply a 30-day half-life rule: a signal loses half its value for every 30 days of age, so a 10-point pricing page visit is worth 5 points after 30 days and 2.5 after 60. Engagement scores should decay automatically in your CRM - if they don't, you're routing based on stale data.
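A minimal sketch of the decay, assuming a continuous exponential curve in which a signal's value halves every 30 days (a hard cut at day 30 would be a simpler alternative). The `decayed_value` function and the 10-point example are illustrative.

```python
HALF_LIFE_DAYS = 30

def decayed_value(points, age_days, half_life_days=HALF_LIFE_DAYS):
    """Exponential decay: the value halves every half_life_days."""
    if age_days < 0:
        raise ValueError("age_days cannot be negative")
    return points * 0.5 ** (age_days / half_life_days)

# A hypothetical 10-point pricing page visit at different ages.
for age in (0, 30, 45, 60):
    print(age, round(decayed_value(10, age), 2))
```

Most CRMs won't run this formula natively, so in practice it often lives in a scheduled job that rewrites the engagement score field.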

For third-party intent signals, add a validation layer. Only count an intent spike if at least one first-party touch occurred in the same 14-day window. This filters out the noise that plagues intent platforms when used without guardrails. Intent without engagement is curiosity, not buying behavior.
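The validation rule can be expressed as a small date check. This sketch assumes "same 14-day window" means a first-party touch within 14 days either side of the spike; if your intent vendor reports spikes weekly, you may want a different interpretation. The function name and dates are hypothetical.

```python
from datetime import date, timedelta

def intent_spike_counts(spike_date, first_party_touches, window_days=14):
    """True if any first-party touch falls within window_days of the intent spike."""
    window = timedelta(days=window_days)
    return any(abs(touch - spike_date) <= window for touch in first_party_touches)

spike = date(2024, 3, 15)

# A webinar signup five days before the spike validates it.
print(intent_spike_counts(spike, [date(2024, 3, 10)]))

# A lone ebook download six weeks earlier does not.
print(intent_spike_counts(spike, [date(2024, 2, 1)]))
```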

Routing SLAs by Tier

| Score Range | Tier | Action | SLA |
|---|---|---|---|
| 80-100 | A (Hot) | SDR direct call + personalized email | Within 5 min |
| 60-79 | B (Warm) | Automated sequence + SDR follow-up | Within 24 hrs |
| 40-59 | C (Nurture) | Marketing nurture track | Within 48 hrs |
| <40 | D (Deprioritize) | Passive content only | No active outreach |
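In a CRM this table usually becomes a workflow rule, but the logic is easy to sketch in Python. The `Route` type and `route` function are illustrative names; the score floors, actions, and SLAs are copied from the table above.

```python
from typing import NamedTuple, Optional

class Route(NamedTuple):
    tier: str
    action: str
    sla_minutes: Optional[int]  # None means no active outreach

# Score floors in descending order, mirroring the routing table.
ROUTES = [
    (80, Route("A", "SDR direct call + personalized email", 5)),
    (60, Route("B", "Automated sequence + SDR follow-up", 24 * 60)),
    (40, Route("C", "Marketing nurture track", 48 * 60)),
]
DEFAULT_ROUTE = Route("D", "Passive content only", None)

def route(score):
    """Return the first route whose floor the score meets, else Tier D."""
    for floor, candidate in ROUTES:
        if score >= floor:
            return candidate
    return DEFAULT_ROUTE

print(route(75))
print(route(-70))
```

Because anything below 40 falls through to Tier D, accounts pushed negative by guardrail penalties are handled without a special case.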

These SLAs only work if the data feeding your scoring model is accurate and current. Stale firmographic data means wrong scores. Wrong scores mean wrong routing. Wrong routing means your best reps are calling Tier C accounts while Tier A prospects sit in a nurture drip for three days.

The Data Layer That Makes It Work

Building an operational ICP framework requires enrichment and intent data, and the cost range is enormous. Enterprise intent platforms like 6sense and Bombora typically run $30K-$100K+/year. Enrichment tools like ZoomInfo typically cost $15K-$40K/year depending on seats and modules. Self-serve alternatives like Prospeo offer 300M+ profiles at roughly $0.01 per lead with no annual contracts - 30+ search filters covering buyer intent, technographic installs, headcount growth, and funding events, with a 7-day data refresh cycle and 98% email accuracy.

The refresh cadence matters more than most teams realize. If your data layer runs on the 6-week industry average, your intent and trigger scores are already decayed by the time they hit the CRM.

Skip the enterprise stack if your average deal size is under $10K. A self-serve data tool plus a well-built scoring model in HubSpot or Salesforce will outperform a $50K/year intent platform that nobody configures properly. The framework matters more than the tooling.


ICP-Specific Messaging

Once your ICP is defined, your messaging should reflect it. Instead of generic value props, use ICP-specific proof points: "Cut manual reconciliation time by 40% in 90 days" beats "improve efficiency" every time. If you can't write a proof point that names the specific pain, timeline, and outcome for your ICP, your profile isn't specific enough yet.

How to Tell Your ICP Is Wrong

Your ICP isn't a set-it-and-forget-it artifact. Five diagnostic red flags signal it needs revision:

Bounce rate above 55%. If more than half your outbound emails bounce, you're either targeting the wrong companies or using bad data - both are ICP problems.

Email unsubscribe rate over 2%. High unsubscribes mean your messaging doesn't resonate with the audience you're reaching. That's a targeting issue, not a copy issue.

Site conversion below 3-5%. If ICP-matched traffic isn't converting at baseline SaaS benchmarks, your definition and your positioning are misaligned.

Tier A win rates aren't 1.5-2x Tier B. This is the most important diagnostic. If your top-tier accounts don't close at materially higher rates, your scoring model isn't predictive - which means your attributes aren't the right ones.

Sales cycles aren't shortening by tier. You should see a 15-20% gap between Tier A and Tier B cycle times. If Tier A deals take just as long, your "best-fit" accounts aren't actually easier to sell.

Run these diagnostics quarterly. If two or more flags are red, don't tweak - rebuild. Go back to Step 1, pull fresh closed-won data, and re-derive your attributes.
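The quarterly check can be sketched as a small script. The thresholds come from the five flags above, with two assumptions: the low ends of the stated ranges are used as cutoffs (3% site conversion, 1.5x win rate, 15% cycle gap), and all rates are expressed as fractions. The `Metrics` fields and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Metrics:
    bounce_rate: float        # fraction, e.g. 0.55 for 55%
    unsubscribe_rate: float
    site_conversion: float
    tier_a_win_rate: float
    tier_b_win_rate: float
    tier_a_cycle_days: float
    tier_b_cycle_days: float

def red_flags(m):
    """Return the names of the diagnostics that are currently red."""
    flags = []
    if m.bounce_rate > 0.55:
        flags.append("bounce_rate")
    if m.unsubscribe_rate > 0.02:
        flags.append("unsubscribe_rate")
    if m.site_conversion < 0.03:
        flags.append("site_conversion")
    if m.tier_a_win_rate < 1.5 * m.tier_b_win_rate:
        flags.append("win_rate_gap")
    # Tier A cycles should be at least 15% shorter than Tier B cycles.
    if m.tier_a_cycle_days > 0.85 * m.tier_b_cycle_days:
        flags.append("cycle_gap")
    return flags

def needs_rebuild(m):
    """Two or more red flags means rebuild rather than tweak."""
    return len(red_flags(m)) >= 2

healthy = Metrics(0.02, 0.005, 0.04, 0.40, 0.20, 40, 50)
drifting = Metrics(0.02, 0.005, 0.04, 0.22, 0.20, 50, 50)

print(red_flags(healthy), needs_rebuild(healthy))
print(red_flags(drifting), needs_rebuild(drifting))
```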

From 12% to 47% Close Rate in 90 Days

A B2B SaaS startup was running a pipeline that looked healthy on the surface - plenty of demos, decent volume. But when they audited the numbers, two-thirds of those demos were with companies that didn't match their ICP. Close rate: 12%. Forecast accuracy: 23%. The pipeline was fiction.

They analyzed their last 50 closed-won deals for patterns beyond basic demographics - pain intensity, buying behavior, tech stack signals, trigger events. They used this data to build an ideal customer model, tiered their pipeline, and routed high-scoring leads to senior reps while mid-tier leads went through SDR qualification. Low-scoring leads went to nurture instead of clogging the demo calendar.

The results in 90 days: close rate jumped from 12% to 47%. Pipeline stages collapsed from 11 to 5. Forecast accuracy went from 23% to 81%. Sales cycle compressed by 22% - 15 days faster. They didn't hire a single new rep. They just stopped wasting the reps they had on accounts that were never going to close.

That's what an operational ICP framework does. It doesn't generate more pipeline. It makes the pipeline you have actually convert.

FAQ

Can you have more than one ICP?

Yes - most scaling companies run 2-3 ICPs for different products or motions, like PLG versus enterprise sales-led. Score and tier each separately with its own rubric. But start with one. Trying to serve three ICPs before nailing the first is how teams end up with a broad, useless profile that predicts nothing.

How often should you update your ICP?

Quarterly at minimum. Revisit whenever your product ships major features, you enter a new market, or your diagnostic metrics drift. Treat every 3-6 months as a new hypothesis window with defined milestones.

What's the difference between ICP and buyer persona?

ICP defines the company type - firmographics, technographics, triggers, economic fit. Buyer persona defines the individual - job title, goals, objections, daily workflow. Build ICP first, because the same title behaves differently at different company types. Personas layer on after.

How do you get the data to score accounts?

You need firmographic, technographic, and intent data layered together. Prospeo's 30+ search filters cover buyer intent across 15,000 topics, tech stack detection, headcount growth, and funding - letting you filter and export contacts matching your ICP attributes with 98% email accuracy and a 7-day refresh cycle.

What tools do you need to operationalize an ICP framework?

At minimum: a CRM with custom scoring fields (HubSpot or Salesforce), an enrichment layer for firmographic and technographic data, and an intent data source. Enterprise stacks (6sense + ZoomInfo) run $50K-$140K/year. Self-serve stacks built around native CRM scoring and a good enrichment tool deliver comparable results at a fraction of the cost - especially for teams with average deal sizes under $25K.