How to Automate Lead Qualification (With a Scoring Template You Can Copy)

A RevOps lead on r/b2bmarketing described processing 3,000 target accounts a month through Apollo and Lusha - and still manually qualifying every single one because the data was too unreliable to trust downstream. That's not a workflow. That's a bottleneck dressed up as automation.

79% of leads never convert into sales due to poor qualification, and lead qualification automation exists to kill exactly this problem. You need three things: clean data, a simple scoring model, and a router that books meetings the moment a lead qualifies. The scoring template below is the centerpiece - copy it, adjust the weights, and you're live in an afternoon.

What Is Automated Lead Qualification?

Manual qualification means a human reviews every lead before routing it to sales. Automated lead qualification replaces that bottleneck with scoring rules, enrichment triggers, and instant routing so reps only see leads that already meet your criteria. It also forces sales and marketing to agree on what "qualified" actually means, which is half the battle at most orgs.

61% of marketers say generating quality leads is their top challenge. Automation won't fix lead quality directly, but it stops your team from burning cycles on leads that were never going to close.

How to Automate Lead Qualification

Fix Your Data First

Automation built on bad data scales bad decisions. Only 56% of B2B companies verify leads before passing them to sales - nearly half are feeding unverified contacts into scoring models and wondering why AEs don't trust the MQL label.

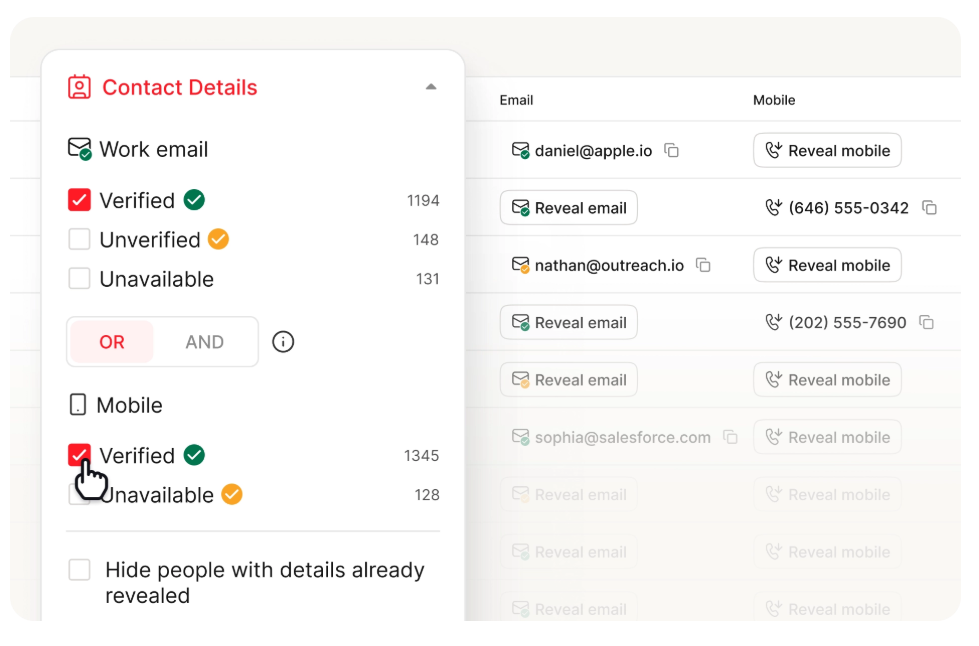

Here's the thing: if your enrichment tool refreshes data every six weeks, your scoring model is making decisions on information that can be up to six weeks old. We've seen teams run their lists through Prospeo's bulk verification before anything touches the scoring engine - 98% email accuracy, a 92% API match rate, and a 7-day refresh cycle mean your scores reflect reality, not stale records.
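
One way to enforce "verify before you score" is a small gate in front of the scoring engine. The sketch below is illustrative only: the `Lead` fields (`email_verified`, `last_refreshed`) and the 7-day default are assumptions for the example, not the schema of any particular enrichment tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Lead:
    email: str
    email_verified: bool  # hypothetical flag written by your verification step
    last_refreshed: date  # hypothetical: date the record was last enriched


def is_scoreable(lead: Lead, today: date, max_age_days: int = 7) -> bool:
    """True only when the email is verified and the record is recent enough."""
    age = today - lead.last_refreshed
    return lead.email_verified and age <= timedelta(days=max_age_days)


def split_leads(leads: list[Lead], today: date, max_age_days: int = 7):
    """Separate leads that can be scored now from those that need re-verification."""
    ready = [l for l in leads if is_scoreable(l, today, max_age_days)]
    held = [l for l in leads if not is_scoreable(l, today, max_age_days)]
    return ready, held
```

Leads in `held` go back to enrichment instead of into the scoring model, so a stale record never earns points it doesn't deserve.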

Choose Your Framework

BANT is fine for 80% of teams. Stop overthinking frameworks.

| Framework | Best For | Automatable? | Key Rule |
|---|---|---|---|
| BANT | High-volume, transactional | Yes - 3 of 4 criteria | Qualified if 3 of 4 met |
| CHAMP | Consultative, mid-market | Partially - needs rep input | Challenges lead the convo |
| MEDDIC | Enterprise, complex deals | Mostly manual | Avg 7 stakeholders involved |

BANT maps cleanly to firmographic and behavioral data you can score automatically. CHAMP flips the order to lead with challenges - better for consultative sales but harder to automate fully. MEDDIC is the enterprise heavyweight; PTC grew from ~£195M to £650M in four years after implementing it, but it requires human judgment at nearly every step.

For volume outbound, BANT plus a solid scoring model gets you 80% of the way there.
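
The "qualified if 3 of 4 met" rule is simple enough to express directly. This is a minimal sketch; how you derive each boolean (form fields, enrichment data, rep input) is up to you, and the function name is made up for illustration.

```python
def bant_qualified(budget: bool, authority: bool, need: bool, timeline: bool,
                   required: int = 3) -> bool:
    """Apply the 3-of-4 BANT rule: count the criteria met and compare to the bar."""
    return sum([budget, authority, need, timeline]) >= required
```

A lead with budget, authority, and need but no confirmed timeline still qualifies; one with only budget and authority does not.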

Build Your Scoring Model

Most lead qualification guides skip the actual template - because they were written by content marketers, not practitioners. Here's one you can implement today.

Positive signals:

| Signal | Points | Type |
|---|---|---|
| C-suite title | +20 | Firmographic |
| Job title matches ICP | +10 | Firmographic |
| Company size >100 | +5 | Firmographic |
| Form submission | +20 | Behavioral |
| Pricing page visit | +15 | Behavioral |
| Opened 3+ emails | +8 | Behavioral |
| Clicked email link | +10 | Behavioral |

Negative signals:

| Signal | Points |
|---|---|
| Unsubscribed | -15 |
| No company email | -15 |
| No activity 30 days | -20 |
| Careers page visit | -10 |
| Competitor domain | -10 |

Score above 50 = MQL. Above 70 = SQL, trigger a sales alert. Apply time decay - 30 days of silence docks 20 points automatically.
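
If your scoring engine accepts custom logic, or you just want to sanity-check the weights in a notebook, the template translates almost line for line into code. This is a rough sketch: the signal keys are invented names for the table rows above, and "above 50" / "above 70" are read as strictly greater than.

```python
# Point values copied from the template; the key names are illustrative.
WEIGHTS = {
    "c_suite_title": 20,
    "title_matches_icp": 10,
    "company_size_over_100": 5,
    "form_submission": 20,
    "pricing_page_visit": 15,
    "opened_3plus_emails": 8,
    "clicked_email_link": 10,
    "unsubscribed": -15,
    "no_company_email": -15,
    "no_activity_30_days": -20,  # the time-decay rule
    "careers_page_visit": -10,
    "competitor_domain": -10,
}

MQL_THRESHOLD = 50
SQL_THRESHOLD = 70


def score_lead(signals: set[str]) -> int:
    """Sum the points for every signal observed on a lead."""
    unknown = signals - set(WEIGHTS)
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    return sum(WEIGHTS[s] for s in signals)


def stage(score: int) -> str:
    """Map a score to a stage using the two thresholds."""
    if score > SQL_THRESHOLD:
        return "SQL"
    if score > MQL_THRESHOLD:
        return "MQL"
    return "unqualified"


hot = {"c_suite_title", "form_submission", "pricing_page_visit",
       "clicked_email_link", "opened_3plus_emails"}
print(score_lead(hot), stage(score_lead(hot)))  # 73 SQL
```

Add `"no_activity_30_days"` to that same lead and the score falls to 53, which drops it from SQL back to MQL. That is the time decay doing its job.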

One thing we've learned from running this model across different teams: the negative signals matter more than most people think. A competitor-domain flag or a careers-page visit saves reps from chasing leads who were never buyers in the first place, and those small deductions compound across hundreds of leads per week.

Pick Your Tool Stack

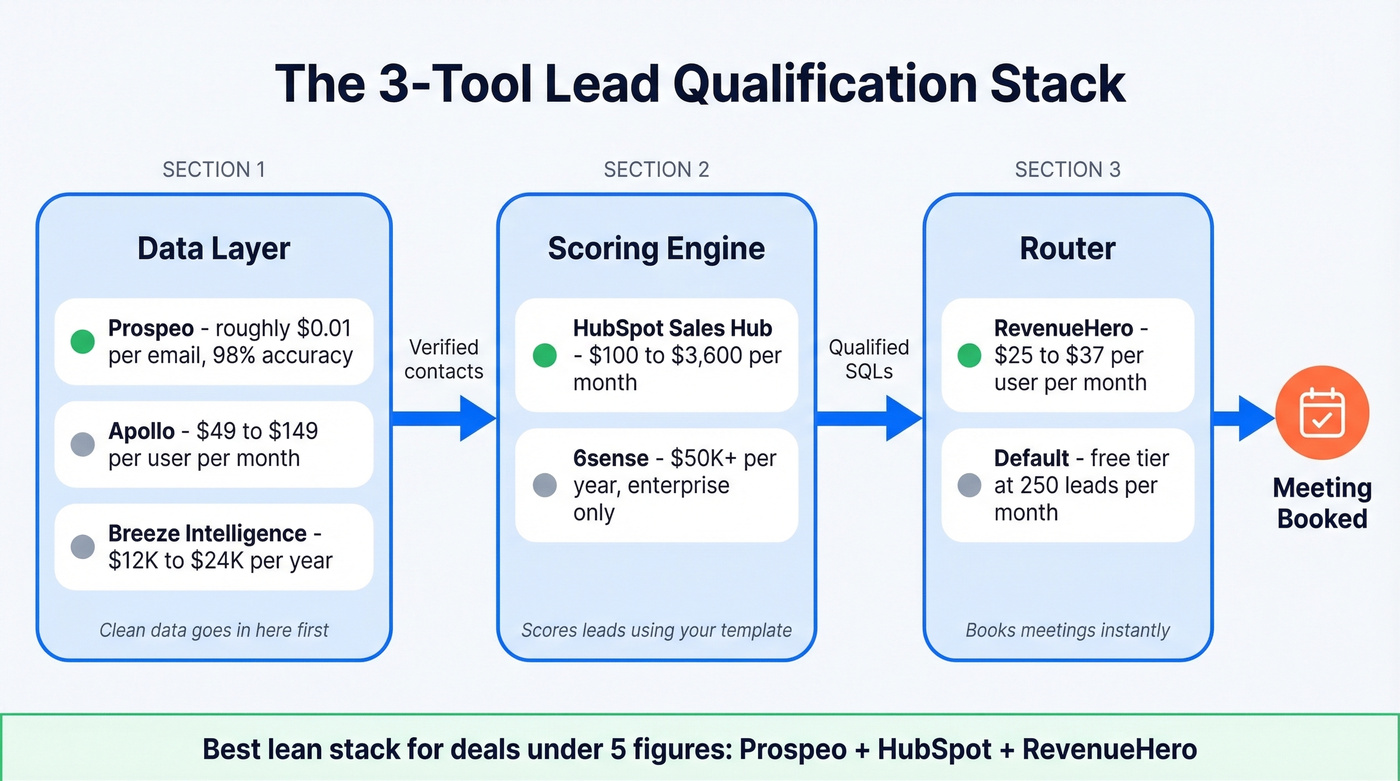

You need three tools, not ten: a data layer, a scoring engine, and a router.

| Function | Tool | Pricing |
|---|---|---|
| Data layer | Prospeo | ~$0.01/email, free tier available |
| Data layer | Apollo | $49-$149/user/mo |
| Data layer | Breeze Intelligence (Clearbit) | ~$12K-$24K/yr |
| Scoring engine | HubSpot Sales Hub | $100-$3,600/mo |
| Scoring engine | 6sense | $50K+/yr (enterprise) |
| Router | RevenueHero | $25-$37/user/mo |
| Router | Default | Free tier at 250 enriched leads/mo |

Let's be honest: if your average deal size sits below five figures, you don't need 6sense or Clearbit-level spend. A verified data layer at $0.01/email plus HubSpot's scoring plus RevenueHero for routing gets you the most for the least - and that stack outperforms bloated enterprise setups when the data underneath is actually accurate.

Half of B2B teams feed unverified contacts into scoring models, then wonder why sales ignores the MQL label. Prospeo's 5-step verification and 7-day data refresh mean your qualification scores reflect real buyers - not stale records from six weeks ago. At $0.01/email with a 98% accuracy rate, your scoring engine finally has a data layer it can trust.

Benchmarks That Matter in 2026

Once your automation is live, here's what good looks like:

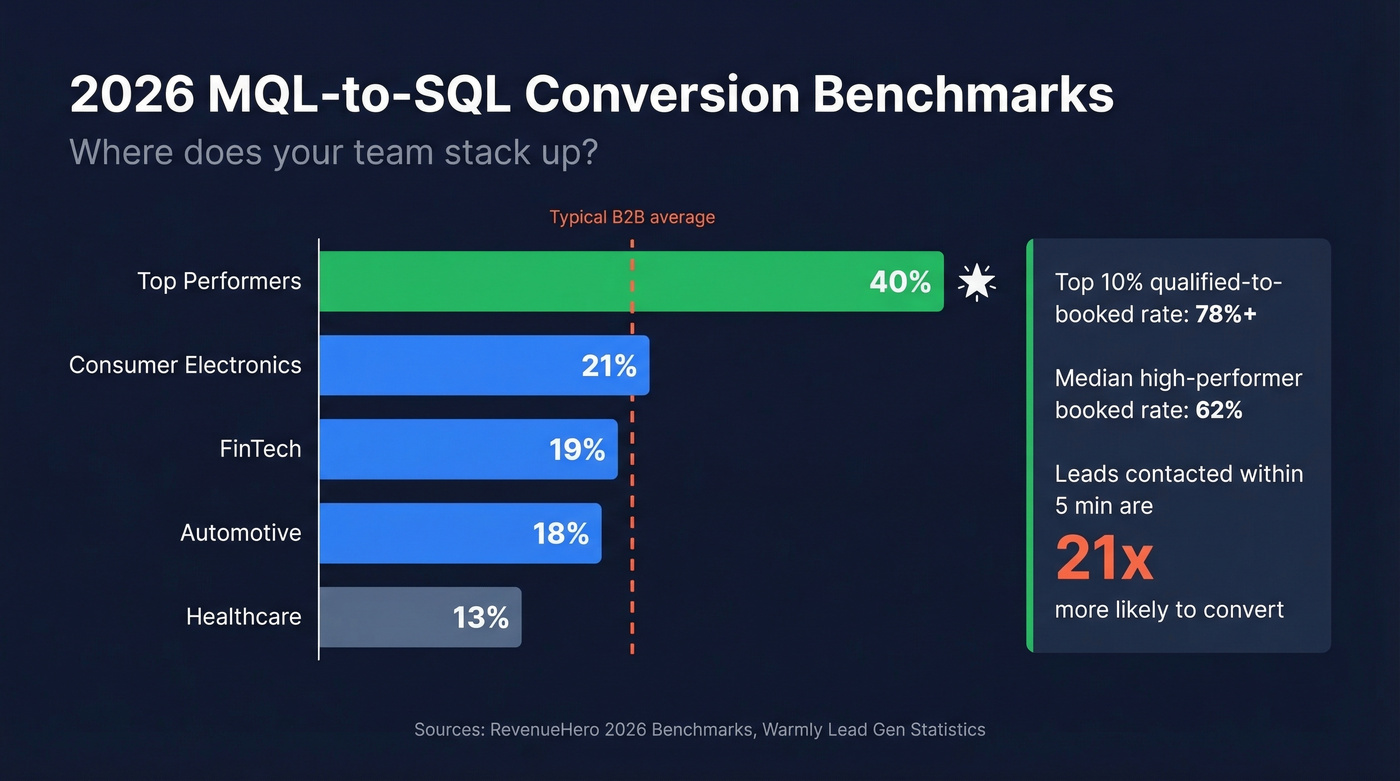

| Industry | MQL-to-SQL Rate |
|---|---|
| Consumer Electronics | 21% |
| FinTech | 19% |
| Automotive | 18% |
| Healthcare | 13% |
| Top performers | 40% |

The median qualified-to-booked rate for high-performing B2B SaaS companies is 62%, with the top 10% hitting 78%+. Speed matters enormously here - leads contacted within 5 minutes are 21x more likely to convert than those contacted after 30 minutes. That stat alone justifies the router in your stack.

Keep Your Scoring Model Calibrated

You set up scoring six months ago and nobody's touched it since. Your AEs have stopped trusting the MQL label. Sound familiar?

Refresh training data every 30-90 days. Trigger a full retrain when feature importance shifts more than 25% - for example, "pricing page visit" was your top signal last quarter but "webinar attendance" now outranks it. That's model drift, and it quietly turns your automation back into manual work. Audit MQL-to-SQL conversion monthly. If it drops below your industry benchmark, your weights are stale.
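
Both checks are easy to script. The sketch below assumes "shifts more than 25%" means a relative change in a feature's importance from one period to the next, which is one reasonable reading rather than a standard definition. The function names and sample numbers are made up for illustration.

```python
def importance_shifts(previous: dict[str, float],
                      current: dict[str, float]) -> dict[str, float]:
    """Relative change in importance for each feature present in both periods."""
    shifts = {}
    for name, old in previous.items():
        if name in current and old > 0:
            shifts[name] = abs(current[name] - old) / old
    return shifts


def needs_retrain(previous: dict[str, float], current: dict[str, float],
                  threshold: float = 0.25) -> bool:
    """True if any feature's importance moved by more than the threshold."""
    return any(s > threshold for s in importance_shifts(previous, current).values())


def conversion_is_stale(mqls: int, sqls: int, benchmark_rate: float) -> bool:
    """True if this month's MQL-to-SQL rate fell below the benchmark."""
    if mqls == 0:
        return False  # nothing to audit this month
    return sqls / mqls < benchmark_rate


last_quarter = {"pricing_page_visit": 0.40, "webinar_attendance": 0.20}
this_quarter = {"pricing_page_visit": 0.28, "webinar_attendance": 0.35}
print(needs_retrain(last_quarter, this_quarter))  # True
```

Run the audit on a schedule, not when someone remembers. A FinTech team converting 30 of 200 MQLs (15%) against the 19% benchmark would get a stale-weights flag from `conversion_is_stale(200, 30, 0.19)`.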

70% of sales teams spend too much time on manual data entry, so don't count on reps to feed deal outcomes back into the model by hand. Bi-directional CRM syncing keeps scores tied to real pipeline outcomes instead of decaying assumptions.

Your scoring template assigns +20 for a C-suite title and -15 for missing a company email - but none of that matters if the contact data underneath is wrong. Prospeo returns 50+ enrichment data points per contact at a 92% match rate, giving your scoring engine the firmographic and behavioral inputs it needs to route real SQLs, not ghosts.

Does It Actually Work?

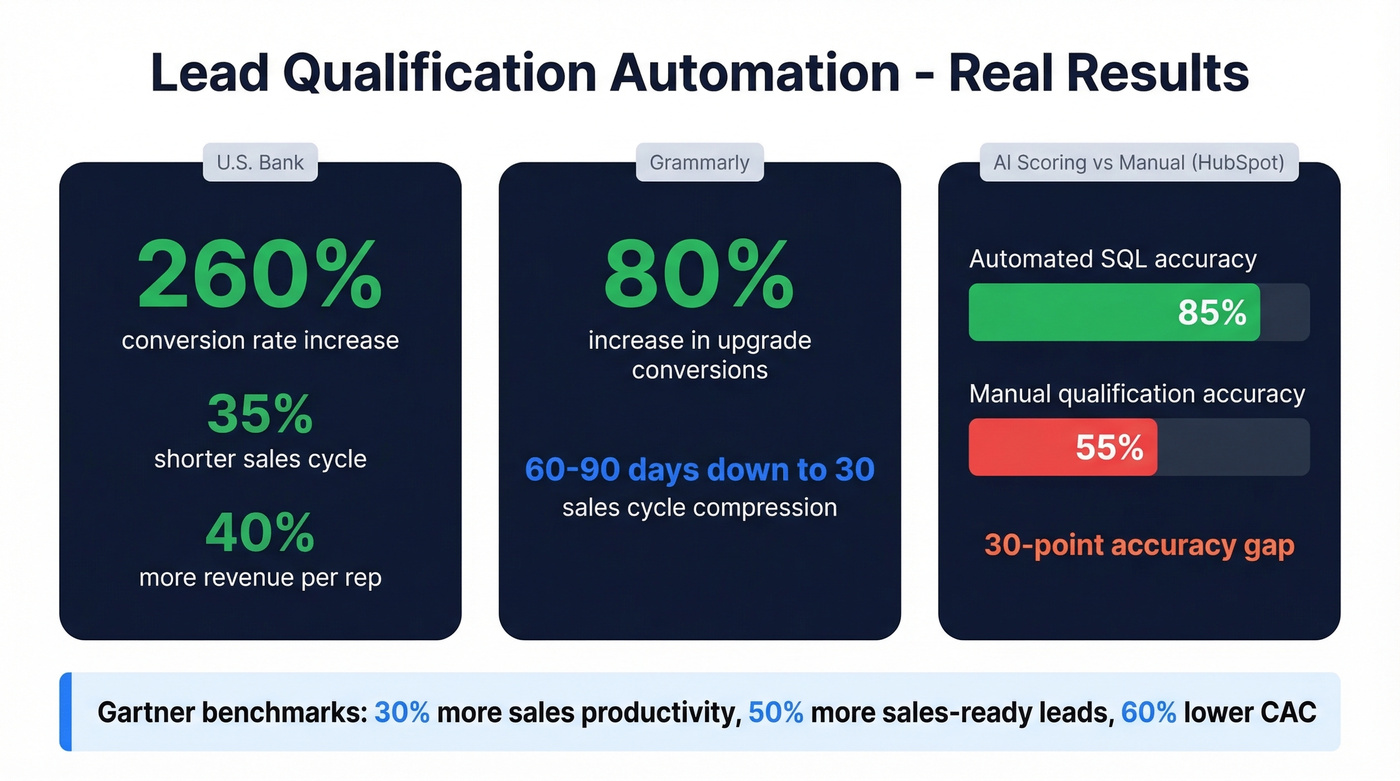

The short answer: yes, but only when the data layer is clean. Here are the numbers we keep coming back to.

U.S. Bank saw a 260% conversion rate increase, a 35% shorter sales cycle, and revenue per rep up 40% after deploying AI lead scoring. Grammarly got an 80% increase in upgrade conversions and compressed their sales cycle from 60-90 days down to 30 using Salesforce Einstein. HubSpot's internal benchmark showed automated SQL accuracy hitting 85% vs 55% with manual qualification - a 30-point accuracy gap that compounds across every rep on the team.

At the macro level, Gartner's benchmark data shows AI-driven scoring yields a 30% increase in sales productivity, 50% more sales-ready leads, and 60% lower CAC.

Same story every time - accurate data in, faster deals out. The teams winning aren't using fancier tools. They're the ones who actually recalibrate their scoring weights and keep their data layer fresh.

FAQ

What's the difference between lead scoring and lead qualification?

Scoring assigns points based on firmographic and behavioral signals. Qualification is the binary decision - MQL or SQL - made when those points cross a threshold. Scoring is the input; qualification is the output.

How often should I recalibrate my scoring model?

Every 30-90 days, or immediately when feature importance shifts more than 25%. Stale models erode sales trust fast. Monthly MQL-to-SQL audits catch drift before it compounds.

Can I trigger an automated email when a lead hits the SQL threshold?

Yes, and you should. When a lead crosses your SQL score, fire an automated email through your router or CRM that confirms interest, shares a booking link, and gives the rep context before the call. This closes the gap between qualification and first touch, where most pipeline leaks happen.
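
The detail that trips people up is firing the email once, on the crossing, not every time the score updates. Here is a minimal sketch of that logic. The lead dictionary shape and the `send` callable are placeholders for whatever your router or CRM exposes, not a real API.

```python
from typing import Callable

SQL_THRESHOLD = 70


def crossed_sql(previous_score: int, new_score: int,
                threshold: int = SQL_THRESHOLD) -> bool:
    """True only on the update that moves a lead from at-or-below to above the threshold."""
    return previous_score <= threshold < new_score


def on_score_change(lead: dict, new_score: int,
                    send: Callable[[dict], None]) -> bool:
    """Store the new score and send the booking email if the lead just became an SQL."""
    fired = crossed_sql(lead["score"], new_score)
    lead["score"] = new_score
    if fired:
        send({
            "to": lead["email"],
            "subject": "Thanks for your interest - grab a time that works",
            "body": f"Hi {lead['name']}, book a call here: {lead['booking_url']}",
        })
    return fired
```

A lead moving from 65 to 73 triggers one email; a later bump from 73 to 80 triggers nothing, so nobody gets spammed for staying qualified.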

What's the cheapest way to start?

Skip the enterprise tools. HubSpot's free CRM handles basic scoring and routing at zero cost. Pair it with a free-tier data verification tool for your data layer, and you've got a working automation stack without budget approval - enough to validate the model before scaling spend.