Lead Scoring Automation: Build a Model Sales Won't Ignore

A RevOps lead shared a story on Reddit that should be required reading for anyone building a scoring model. Their "perfect" lead - scored 89 out of 100 in HubSpot - turned out to be a college student researching for a class project. Meanwhile, the lead that scored 12? A CTO who booked a demo from a cold outbound message and closed as their biggest deal of the quarter.

Most lead scoring automation measures curiosity, not buying intent. That's exactly why sales ignores it. The gap between what marketing thinks is a "hot lead" and what actually closes is where scoring models go to die.

Let's fix that.

The Quick Version

- Start with 5-7 scoring criteria, not 50. Use a signal-tier framework that weights intent signals above activity and demographics.

- Set your MQL threshold at the top 20% of leads by score - typically 50-75 points on a 100-point scale.

- Clean your data first. Your scoring model is only as good as the contact records feeding it. Use an enrichment layer to keep job titles, emails, and firmographics current before you score anything.

What Is Automated Lead Scoring?

Lead scoring automation assigns numerical values to leads based on their behavior and demographic fit, then routes them automatically via CRM or marketing platform rules. Instead of a rep eyeballing a list and guessing who's ready to buy, the system surfaces the top 10-20% based on criteria you define - or, with predictive models, criteria the algorithm discovers.

Here's the thing: only about 44% of organizations actually use lead scoring, despite data showing that teams that score leads see 138% ROI on lead gen spend versus 78% for teams that don't. That's a massive gap between what works and what teams implement.

Why Scoring and Prioritization Matter

79% of marketing leads never convert into sales - mostly due to poor prioritization. And 41% of B2B marketers struggle to align marketing-generated leads with sales expectations. Scoring creates a shared language between the two teams.

Machine learning-based scoring delivers roughly 75% higher conversion rates compared to no scoring at all. For context on channel quality, SEO-driven leads close at 14.6% vs. outbound at 1.7% - scoring helps you treat those channels differently instead of dumping everything into the same queue.

The real win isn't the numbers themselves. It's that sales stops cherry-picking leads based on gut feeling and starts working a prioritized queue backed by data.

Intent Signals vs. Activity Signals

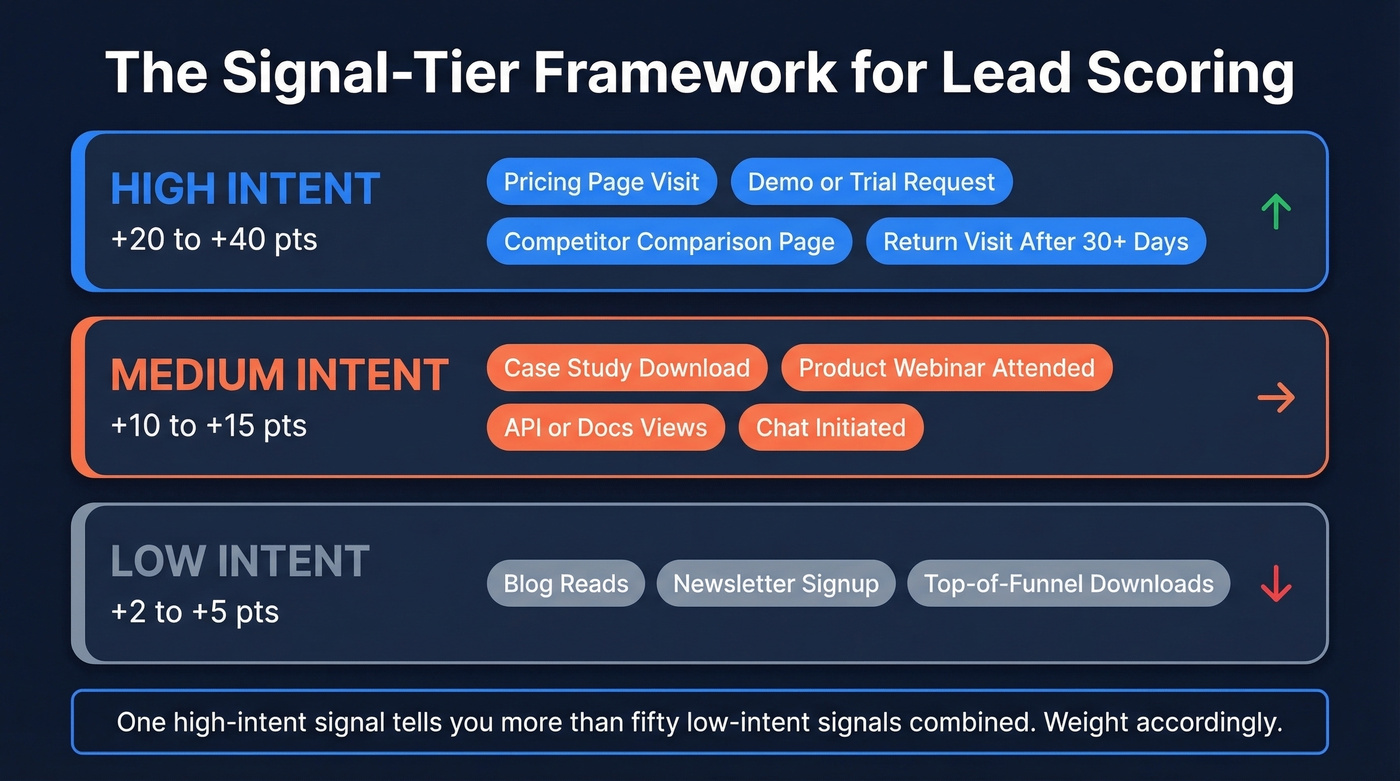

Not all engagement is created equal. A pricing page visit and a blog read are both "engagement," but they signal completely different things. We use a three-tier signal framework to make scoring concrete:

| Signal Tier | Examples | Score Weight |
|---|---|---|
| High intent | Pricing page, demo/trial request, competitor comparison, return visit after 30+ days | +20 to +40 |
| Medium intent | Case study download, product webinar, API/docs views, chat initiated | +10 to +15 |
| Low intent | Blog reads, newsletter signup, TOFU content downloads | +2 to +5 |

Remember the 89-point college student from the intro? They racked up dozens of low-intent signals - blog reads, newsletter opens, whitepaper downloads. The CTO who scored 12 hit one high-intent signal: a pricing page visit followed by a demo request. One signal told you more than fifty.
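Here is a minimal Python sketch of the three-tier weighting, set against a flat model that treats every touchpoint the same. The signal names and the single point value chosen for each tier are illustrative assumptions picked from inside the ranges in the table above, not values from any particular platform.

```python
# Illustrative tier weights; each value is one assumed point inside the
# ranges from the signal-tier table (high +20..+40, medium +10..+15, low +2..+5).
TIER_WEIGHTS = {"high": 30, "medium": 12, "low": 3}

# Hypothetical signal names mapped to tiers.
SIGNAL_TIERS = {
    "pricing_page_visit": "high",
    "demo_request": "high",
    "case_study_download": "medium",
    "product_webinar": "medium",
    "blog_read": "low",
    "newsletter_signup": "low",
}


def tiered_score(signals: list[str], cap: int = 100) -> int:
    """Sum the tier weight of each observed signal, capped at `cap`."""
    total = sum(TIER_WEIGHTS[SIGNAL_TIERS[s]] for s in signals)
    return min(total, cap)


def flat_score(signals: list[str], points_each: int = 5, cap: int = 100) -> int:
    """The failure mode: every touchpoint is worth the same."""
    return min(len(signals) * points_each, cap)


student = ["blog_read"] * 15 + ["newsletter_signup"]
cto = ["pricing_page_visit", "demo_request"]

print("flat:  ", flat_score(student), flat_score(cto))      # 80 vs 10
print("tiered:", tiered_score(student), tiered_score(cto))  # 48 vs 60
```

Even with tier weights, repeated low-intent activity still adds up, so capping how many times a single signal can count is a natural refinement.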

This matters because 38% of B2B deals are lost to "no decision" - not to a competitor. Your model needs to identify leads who are actively evaluating, not just passively learning.

Rule-Based vs. Predictive vs. Hybrid

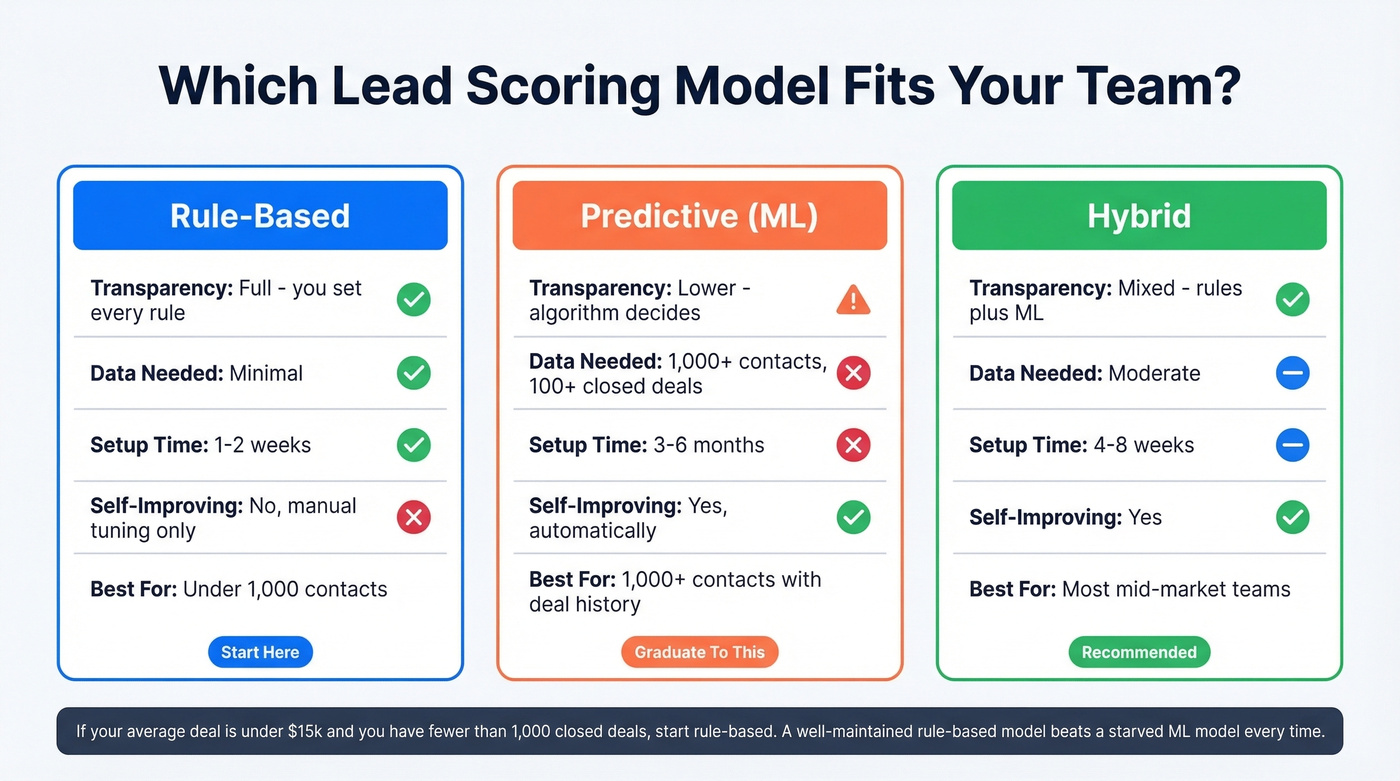

| Dimension | Rule-Based | Predictive (ML) | Hybrid |
|---|---|---|---|
| Transparency | Full - you set every rule | Lower - algorithm decides | Mixed |
| Data needed | Minimal | 1,000+ contacts, 100+ closed deals | Moderate |
| Setup time | 1-2 weeks | 3-6 months | 4-8 weeks |
| Improves over time | Only with manual tuning | Yes, automatically | Yes |
| Best for | <1,000 contacts | 1,000+ contacts with history | Most mid-market teams |

Rule-based scoring wins on transparency and speed. You can launch in one to two weeks with 5-7 criteria, and every rep can understand why a lead scored the way it did. Predictive scoring discovers patterns you'd never think to codify - but it needs volume.

HubSpot supports both rules-based and AI-driven scoring. AI scoring is available on Enterprise tiers, and many teams treat 1,000+ contacts and 100+ closed deals as the practical minimum for generating a useful model.

Our take: If your average deal size is under $15k and you have fewer than 100 closed deals, predictive scoring is a distraction. A well-maintained rule-based model will outperform a starved ML model every time. Save the AI budget for something with enough data to learn from.

Start rule-based. Graduate to predictive once you've got the data volume. Most teams we've talked to end up running a hybrid - rule-based for initial qualification (does this lead match our ICP?), predictive for prioritization within the qualified pool. Some even run parallel models for different segments, one for inbound and one for outbound, then A/B test scoring criteria quarterly to see which converts better.
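The hybrid pattern can be sketched as a rule-based gate followed by a ranking step. Everything here is hypothetical: the `Lead` fields, the ICP rule, and `win_probability`, which stands in for whatever a trained predictive model would output.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    name: str
    industry: str
    employees: int
    win_probability: float  # placeholder for a predictive model's output


# Assumed ICP definition, for illustration only.
TARGET_INDUSTRIES = {"saas", "fintech"}
MIN_EMPLOYEES, MAX_EMPLOYEES = 50, 1000


def matches_icp(lead: Lead) -> bool:
    """Rule-based gate: transparent and easy to explain to reps."""
    return (
        lead.industry in TARGET_INDUSTRIES
        and MIN_EMPLOYEES <= lead.employees <= MAX_EMPLOYEES
    )


def prioritize(leads: list[Lead]) -> list[Lead]:
    """Keep ICP matches, then rank them by predicted likelihood to close."""
    qualified = [lead for lead in leads if matches_icp(lead)]
    return sorted(qualified, key=lambda lead: lead.win_probability, reverse=True)


leads = [
    Lead("A", "saas", 200, 0.35),
    Lead("B", "retail", 300, 0.90),  # high model score, but fails the ICP gate
    Lead("C", "fintech", 80, 0.60),
]
queue = prioritize(leads)
print([lead.name for lead in queue])  # ['C', 'A']
```

Keeping the gate as plain rules means a rep can always see why a lead was excluded, even when the ranking inside the qualified pool comes from a model.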

Your scoring model collapses when job titles are outdated and emails bounce. Prospeo refreshes 300M+ profiles every 7 days - not every 6 weeks - so your firmographic and intent signals stay accurate. 83% of leads come back enriched with 50+ data points, giving your scoring rules something real to work with.

Stop scoring leads against stale data. Enrich first, score second.

How to Build a Model Sales Trusts

Step-by-Step Implementation

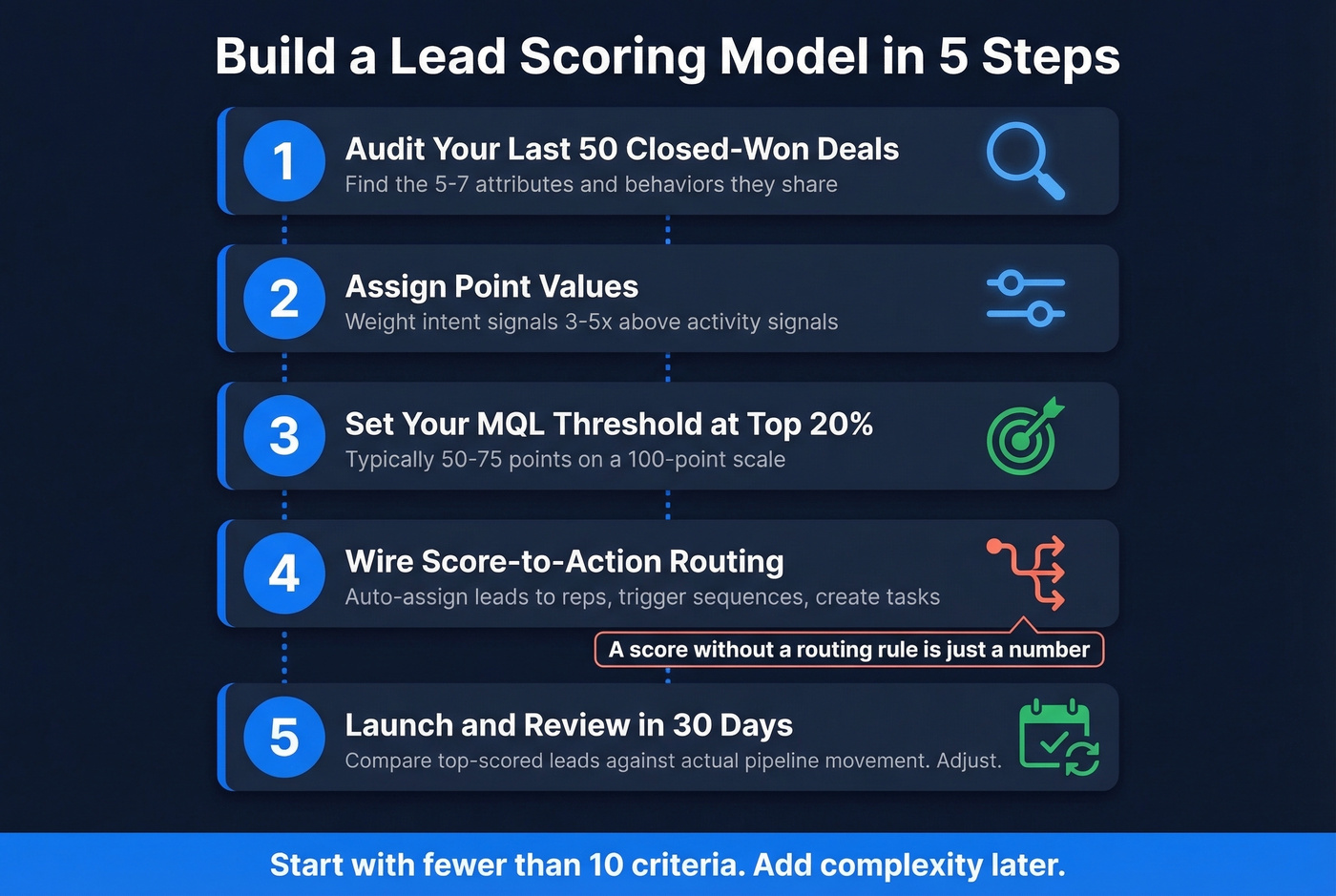

- Audit your last 50 closed-won deals. Identify the 5-7 attributes and behaviors they share.

- Assign point values using the template below. Weight intent signals 3-5x above activity signals.

- Set your MQL threshold at the top 20% of leads by score (typically 50-75 points).

- Wire score-to-action routing. A score without a routing rule is just a number. Auto-assign leads above threshold to a rep, trigger a sales sequence, or create a CRM task. Scoring in isolation doesn't close deals - connecting scores to outreach is what drives revenue.

- Launch and review in 30 days. Compare top-scored leads against actual pipeline movement. Adjust.
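The closed-won audit in the first step can be approximated with a frequency count. This is a sketch, assuming each deal is exported as a set of hypothetical attribute tags. The 60% cutoff for what counts as "shared" is an arbitrary starting point to tune.

```python
from collections import Counter


def shared_attributes(
    deals: list[set[str]], min_share: float = 0.6
) -> list[tuple[str, float]]:
    """Return attributes present in at least `min_share` of deals, most common first."""
    counts = Counter(attr for deal in deals for attr in deal)
    n = len(deals)
    return [
        (attr, count / n)
        for attr, count in counts.most_common()
        if count / n >= min_share
    ]


# Toy stand-in for the last 50 closed-won deals.
closed_won = [
    {"demo_request", "c_level", "saas"},
    {"demo_request", "pricing_page", "saas"},
    {"demo_request", "c_level", "pricing_page"},
    {"pricing_page", "saas", "webinar"},
    {"demo_request", "c_level", "saas"},
]

for attr, share in shared_attributes(closed_won):
    print(f"{attr}: {share:.0%}")
```

Attributes that clear the cutoff are candidates for the 5-7 scoring criteria. Anything that shows up in only a handful of deals, like `webinar` here, is probably noise.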

Your Scoring Template

Combine firmographic fit with behavioral signals. Here's a starting rubric built from Monday.com's framework and practitioner templates:

Positive signals:

| Criterion | Points | Category |
|---|---|---|
| Demo request | +40 | Intent |
| C-level decision maker | +30 | Fit |
| Target industry match | +25 | Fit |
| Pricing page visit | +20 | Intent |
| Company size match | +15 | Fit |

Negative signals:

| Criterion | Points | Category |
|---|---|---|
| Competitor employee | -50 | Disqualifier |
| Email unsubscribe | -25 | Disengagement |
| Wrong company size | -20 | Fit mismatch |
| Personal email (B2B) | -15 | Fit mismatch |
| Single page bounce | -10 | Low engagement |
| 30+ days no engagement | -10 | Decay |

The negative signals are just as important as the positive ones. A competitor employee who downloads every piece of content you publish will score sky-high without them.
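The rubric above maps directly to a lookup table. In this minimal sketch the point values are the ones in the two tables, while the snake_case keys and the clamp to a 0-100 range are assumptions for illustration.

```python
# Point values taken from the template tables; key names are hypothetical.
POSITIVE = {
    "demo_request": 40,
    "c_level": 30,
    "target_industry": 25,
    "pricing_page_visit": 20,
    "company_size_match": 15,
}
NEGATIVE = {
    "competitor_employee": -50,
    "email_unsubscribe": -25,
    "wrong_company_size": -20,
    "personal_email": -15,
    "single_page_bounce": -10,
    "inactive_30_days": -10,
}
RUBRIC = {**POSITIVE, **NEGATIVE}


def score_lead(signals: set[str]) -> int:
    """Sum rubric points for the signals present, clamped to 0..100."""
    raw = sum(RUBRIC.get(signal, 0) for signal in signals)
    return max(0, min(100, raw))


buyer = {"demo_request", "c_level", "pricing_page_visit"}
competitor = {
    "pricing_page_visit",
    "target_industry",
    "company_size_match",
    "competitor_employee",
}

print(score_lead(buyer))       # 40 + 30 + 20 = 90
print(score_lead(competitor))  # 20 + 25 + 15 - 50 = 10
```

Without the `competitor_employee` penalty, the second lead would score 60 and land in a rep's queue.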

Setting Your MQL Threshold

Set your threshold to capture the top 20% of leads by score. On a 100-point scale, that typically lands between 50 and 75 points. Expect 15-25% conversion rates from qualified leads to closed deals - if you're seeing significantly less, your criteria are off.
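One way to find the cutoff that captures the top 20% is to rank current scores and read off the lowest score inside that slice. This is a sketch with made-up sample scores. How ties are handled is a choice made here, not a rule from any platform.

```python
import math


def mql_threshold(scores: list[int], top_share: float = 0.20) -> int:
    """Lowest score that still falls inside the top `top_share` of leads."""
    if not scores:
        raise ValueError("need at least one score")
    ranked = sorted(scores, reverse=True)
    # Number of leads allowed through; always at least one.
    keep = max(1, math.floor(len(ranked) * top_share))
    return ranked[keep - 1]


scores = [5, 12, 18, 22, 30, 35, 41, 48, 62, 77]  # fabricated sample
threshold = mql_threshold(scores)
mqls = [s for s in scores if s >= threshold]
print(threshold, mqls)  # 62 [62, 77]
```

If several leads tie at the threshold, slightly more than 20% will qualify. Recomputing the cutoff on a schedule keeps it tracking the actual score distribution.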

Start with fewer than 10 criteria. We've seen teams launch with 50 rules and spend more time debugging the model than actually selling. You can always add complexity later.

Score Decay Rules

Leads go stale. A prospect who was hot three months ago and hasn't engaged since isn't hot anymore.

Gradual decay reduces points by 25% monthly without new activity. A lead scored at 80 drops to 60 after one quiet month, 45 after two. Cliff decay cuts points by 50% after 30 days of inactivity - more aggressive, but simpler to implement. Pick one and stick with it. The specific model matters less than having one at all. Scoring isn't "set and forget" - it's a living system that needs maintenance.
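Both decay styles reduce to a few lines. This sketch follows one reading of the rules above: gradual decay compounds a 25% reduction for each full quiet month, and cliff decay applies a single 50% cut once 30 days pass without activity. Treating a month as 30 days is an assumption.

```python
def gradual_decay(score: float, days_inactive: int, rate: float = 0.25) -> float:
    """Compound a `rate` reduction for every full 30-day period of inactivity."""
    quiet_months = days_inactive // 30
    return score * (1 - rate) ** quiet_months


def cliff_decay(score: float, days_inactive: int, cut: float = 0.50) -> float:
    """Apply a one-time `cut` once the lead has been inactive for 30+ days."""
    return score * (1 - cut) if days_inactive >= 30 else score


print(gradual_decay(80, 30))  # 60.0
print(gradual_decay(80, 60))  # 45.0
print(cliff_decay(80, 29))    # 80
print(cliff_decay(80, 30))    # 40.0
```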

Common Mistakes That Kill Scoring Models

The most common failure mode is over-engineering at launch. A team spends two weeks in a conference room building a 50-rule model, launches it, and wonders why sales ignores it three months later. Start with 5-7 criteria that map to your actual closed-won patterns and review quarterly. When models get too complex, some teams revert to a simple qualification triad - problem, budget, decision-maker - which defeats the purpose of automation entirely.

The second killer is weighting every touchpoint equally because "all engagement matters." It doesn't. That's how college students outscore CTOs. Weight intent signals - pricing page visits, demo requests, competitor comparisons - 3-5x higher than activity signals like blog reads and newsletter opens.

Third, nobody owns the model. Assign a scoring model owner who reviews performance quarterly by comparing top-scored leads against actual closed deals. The model you built in Q1 won't work in Q4. Buying patterns shift, your ICP evolves, and new content changes the signal mix.

And finally - don't build a sophisticated model on top of garbage data. If your contacts have bounced emails, outdated job titles, and wrong company sizes, no amount of scoring logic saves you.

Data Quality: The Foundation Most Teams Skip

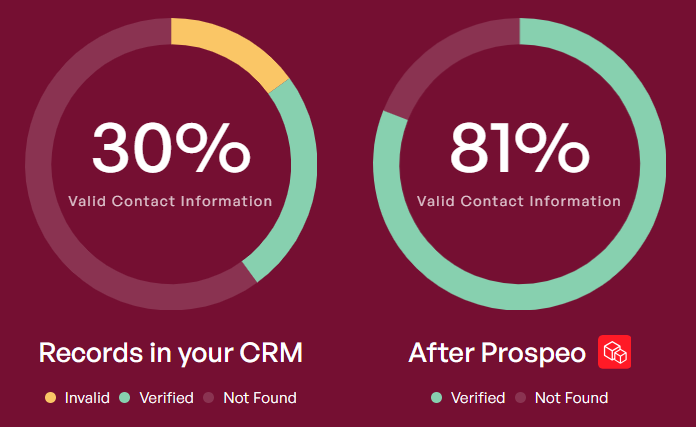

Your scoring model is only as good as the data feeding it. If 30% of your emails bounce and job titles are six months stale, your fit scores are fiction. A lead who changed roles from SDR to VP of Sales three months ago is getting scored as an SDR - and your reps are either skipping them or pitching wrong.

This is where an enrichment layer becomes non-negotiable. Prospeo enriches CRM contacts with 50+ data points per record at an 83% match rate, on a 7-day data refresh cycle versus the six-week industry average. Email accuracy runs 98%. That means your fit scores reflect reality, not a snapshot from last quarter. It also layers in Bombora intent data across 15,000 topics, giving your model a second signal axis: not just "does this lead match our ICP" but "are they actively researching solutions right now."

That CTO who scored 12 in the intro? With enriched data, your model would have known their title, company size, and tech stack before they ever hit your pricing page. Prospeo's 30+ filters - including buyer intent powered by 15,000 Bombora topics - let you build scoring criteria against verified, current records at $0.01 per email.

Give your scoring model the data quality it deserves for a penny per lead.

Best Lead Scoring Tools in 2026

| Tool | Best For | Scoring Type | Starting Price | Notes |
|---|---|---|---|---|
| HubSpot | HubSpot ecosystem teams | Rules + AI scoring | ~$890/mo (3 seats) | AI scoring on Enterprise tiers |
| Prospeo | Data layer for any scoring tool | Enrichment + intent | Free / ~$39/mo | Pair with a CRM scoring engine |
| Salesforce Einstein | Large Salesforce orgs | Predictive (ML) | $165/user/mo + $50/user/mo | $50k-$500k implementation |
| 6sense | Enterprise ABM | Account-level intent | ~$60k-$300k/yr | Overkill for SMB |
| Apollo | SMB/mid-market outbound | Rules-based | Free / $79/user/mo | Less depth than enterprise |
| ActiveCampaign | Email-heavy B2B | Rules + automation | ~$49/mo | Limited firmographic scoring |
| Pipedrive | Small sales teams | Rules-based | $79/seat/mo (Premium) | Basic scoring only |
| MadKudu | PLG companies | Predictive | ~$1k-$3k/mo | Niche use case |

Other enrichment layers worth evaluating include Clearbit and Demandbase for account-level scoring.

HubSpot: The Default for Marketing Automation Scoring

HubSpot is the obvious pick for teams already running their CRM and marketing automation on the platform. Manual scoring is available on Professional tiers - you set positive and negative criteria, and scores update as contact properties change. AI scoring requires Enterprise.

Pricing benchmarks: Professional starts around $890/month (commonly packaged with 3 seats), while Enterprise often starts around $3,600/month. The AI gating at Enterprise is a common frustration in r/hubspot - teams on Professional feel locked out of the feature they actually need. Breeze Intelligence adds enrichment from $45/month for 100 credits. Solid all-in-one if you're already paying for the ecosystem, but expensive to grow into if you're not.

Salesforce Einstein

Einstein Lead Scoring typically sits on top of Sales Cloud Enterprise at $165/user/month, with the Einstein add-on starting at $50/user/month. For a 20-person sales team, you're looking at $50k+/year in licensing before implementation costs of $50k-$500k+. Skip this if you don't have a Salesforce admin on staff.
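The licensing figure follows from the list prices quoted above. Here is a quick check, assuming all 20 seats carry both the Sales Cloud Enterprise license and the Einstein add-on at its starting price:

```python
seats = 20
sales_cloud_per_user = 165  # USD per user per month, as quoted above
einstein_per_user = 50      # USD per user per month, starting price

annual_licensing = seats * (sales_cloud_per_user + einstein_per_user) * 12
print(f"${annual_licensing:,}/year")  # $51,600/year
```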

6sense

Running enterprise ABM with 10,000+ leads per month? This is your shortlist. Annual contracts run $60k-$300k/year. Reddit threads show buyers actively evaluating 6sense against Leadspace for dedicated scoring. Skip this if you're a Series A company or your total lead volume is under 1,000/month.

Apollo

The obvious starting point for SMB and mid-market teams that want scoring and outbound in one platform without a five-figure annual commitment. Professional at $79/user/month adds more sophistication. For teams under 50 reps, it covers the basics - just don't expect HubSpot Enterprise depth.

ActiveCampaign

Plans start around $49/month. Strong for email-heavy B2B teams that need scoring tightly integrated with automation workflows - when a lead hits your threshold, trigger a sequence automatically. Less suited for complex firmographic scoring, but the price-to-value ratio is hard to beat for email-first teams.

Pipedrive

Starts at $24/seat/month; scoring requires Premium at $79/seat/month or higher. Simple rules-based scoring for small sales teams who need something basic without the HubSpot price tag.

MadKudu

Purpose-built for product-led growth companies that need to score based on in-app behavior - free-to-paid conversion signals, feature adoption patterns, usage frequency. Custom pricing, typically $1,000-$3,000/month. Niche but effective if that's your motion.

Building a CRM Workflow for Scored Leads

The scoring model itself is only half the equation. Without a CRM workflow that routes scored leads to the right action, scores sit in a field nobody checks.

Here's how to close the loop: when a lead crosses your MQL threshold, auto-assign it to a rep and create a CRM task with a 24-hour SLA. Leads that score between 30 and your threshold enter a nurture sequence - marketing automation keeps them warm until intent signals push them over. Leads that decay below 20 get recycled back to marketing or archived, freeing your reps to focus on active opportunities.

The goal is a closed system: score triggers action, action generates data, data refines the score. That feedback loop is what separates lead scoring automation that drives pipeline from a vanity metric nobody trusts.
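The routing rules described above can be sketched as a single function. The MQL threshold of 60 and the action names are assumptions. So is the `HOLD` bucket for scores from 20 up to 30, a band the rules above leave unassigned.

```python
from enum import Enum


class Action(Enum):
    ASSIGN_TO_REP = "assign_to_rep"  # auto-assign plus a task with a 24-hour SLA
    NURTURE = "nurture"              # marketing automation sequence
    HOLD = "hold"                    # assumed: no rule is given for this band
    RECYCLE = "recycle"              # back to marketing or archived


def route(score: float, mql_threshold: float = 60) -> Action:
    """Map a lead score to the next action in the CRM workflow."""
    if score >= mql_threshold:
        return Action.ASSIGN_TO_REP
    if score >= 30:
        return Action.NURTURE
    if score >= 20:
        return Action.HOLD
    return Action.RECYCLE


for s in (72, 45, 25, 10):
    print(s, route(s).value)
```

In a real CRM each branch would trigger a workflow rather than return a value, but writing the bands out this way makes gaps and overlaps in the routing rules easy to spot.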

FAQ

How long does it take to implement lead scoring automation?

Rule-based models launch in 1-2 weeks with 5-7 criteria - you can have scores flowing by end of sprint. Predictive scoring takes 3-6 months and requires at least 1,000 contacts with 100+ closed deals to train a reliable model. Start with rules, graduate to predictive once you've got the data volume.

What's the difference between lead scoring and lead grading?

Scoring measures behavioral engagement - what a lead does (pages visited, demos requested). Grading measures demographic and firmographic fit - who the lead is (title, company size, industry). The best models combine both: a high-fit, high-engagement lead gets fast-tracked, while a high-engagement, low-fit lead gets flagged before a rep wastes time.
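Combining the two axes can be sketched as a small decision matrix. The cutoffs (an engagement score of 50, fit grades A and B counted as high fit) and the labels for the two low-engagement cases are hypothetical.

```python
def next_step(engagement_score: int, fit_grade: str) -> str:
    """Combine a behavioral score with a fit grade (A best, D worst)."""
    high_engagement = engagement_score >= 50    # assumed cutoff
    high_fit = fit_grade.upper() in {"A", "B"}  # assumed grading scale

    if high_fit and high_engagement:
        return "fast-track to sales"
    if high_engagement:
        return "flag for review before rep outreach"
    if high_fit:
        return "nurture until intent appears"
    return "deprioritize"


print(next_step(80, "A"))  # fast-track to sales
print(next_step(80, "D"))  # flag for review before rep outreach
```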

How do I keep my scoring model accurate over time?

Review quarterly by comparing top-scored leads against actual closed deals. If your highest-scoring leads aren't converting, recalibrate your criteria. Keep contact data fresh with enrichment tools on a weekly refresh cycle - stale job titles and dead emails corrupt fit scores silently, and no amount of model tuning fixes bad underlying data.
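A quarterly review can start with close rates by score band. This sketch assumes each record is a `(score, closed_won)` pair exported from the CRM. The band edges and sample rows are invented.

```python
def close_rate_by_band(
    records: list[tuple[int, bool]], edges=(0, 25, 50, 75, 101)
) -> dict[str, float]:
    """Closed-won rate for each half-open score band [low, high)."""
    rates = {}
    for low, high in zip(edges, edges[1:]):
        band = [won for score, won in records if low <= score < high]
        if band:  # skip bands with no leads to avoid dividing by zero
            rates[f"{low}-{high - 1}"] = sum(band) / len(band)
    return rates


sample = [
    (90, True), (82, False), (78, True), (60, False),
    (55, True), (40, False), (30, False), (10, False),
]
rates = close_rate_by_band(sample)
print(rates)
```

If the top band does not close at a clearly higher rate than the bands below it, that is the signal to recalibrate criteria or weights.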

How does marketing automation scoring differ from manual scoring?

Manual scoring relies on a rep reviewing each lead against a checklist - it works at low volume but breaks past 100 leads per week. Automated scoring applies your rules programmatically across every lead in real time, updating scores as new signals arrive. The automation layer is what makes scoring scalable and consistent enough for sales to trust.