Open-Ended vs Closed-Ended Questions: When to Use Each (With Evidence)

Your NPS came back at 7.2. Decent, not great. You scroll down to the open-ended field - "Any additional feedback?" - and find 340 responses. Sixty percent say "N/A." Another twenty percent say "No."

The rest are a mix of one-word answers and a few paragraphs from people who clearly had a bad day. You've got a number that tells you nothing specific and a text field that tells you even less. The problem isn't your respondents - it's the questions you asked them. Choosing between open-ended and closed-ended questions changes the data you collect: not just how deep it goes, but which direction it points.

Definitions and examples aren't enough. The format of your question literally shapes your results. Let's get into what actually matters.

The Short Version

- Use open-ended questions for discovery - the things you don't know yet. Use closed-ended for measurement - tracking variables you've already identified.

- A practical survey mix is often 70-80% closed-ended and 20-30% open-ended. Don't go all-in on just one format.

- Open-ended data is only valuable if you have a plan to analyze it. Without a coding framework, it's just a pile of text you'll never use.

Definitions That Actually Matter

An open-ended question lets the respondent answer in their own words, with no predefined options. "What was your experience with our onboarding process?" is open-ended. The answer could be three words or three paragraphs.

A closed-ended question constrains the response to a fixed set of options - yes/no, multiple choice, rating scales, rankings. "How would you rate our onboarding process on a scale of 1-5?" is closed-ended. The answer is always a number.

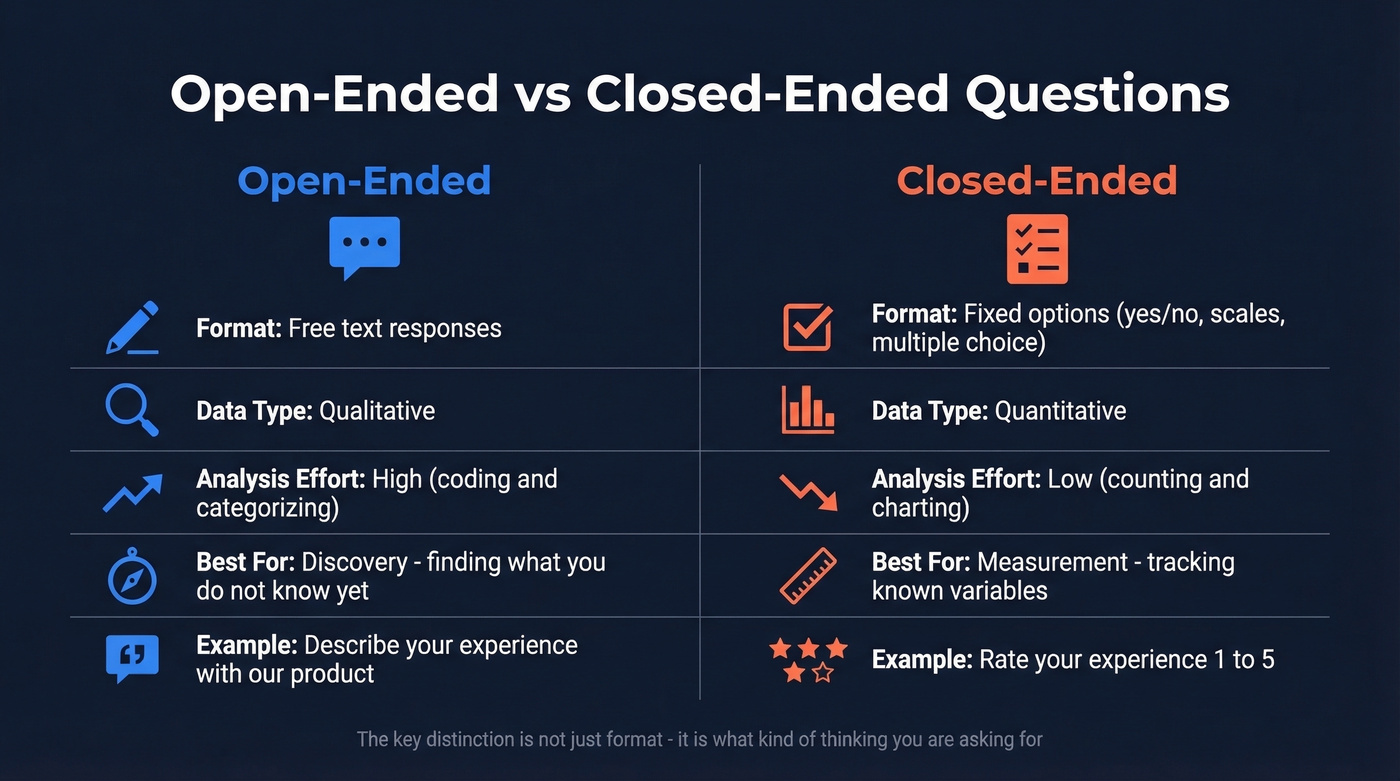

There's a nuance most people miss. As NN/g points out, some questions are technically open-ended but function as closed in practice. "How many times did you contact support last month?" has infinite possible numeric answers, but it's seeking a short factual response, not rich qualitative insight. The distinction isn't just about format - it's about what kind of thinking you're asking for. Think of it like an exam: closed-ended questions are the multiple-choice section, open-ended are the essay questions.

| Dimension | Closed-Ended | Open-Ended |
|---|---|---|
| Format | Fixed options | Free text |
| Data type | Quantitative | Qualitative |
| Analysis effort | Low (counting) | High (coding) |
| Best for | Measurement | Discovery |
| Example | "Rate 1-5" | "Describe your experience" |

Why Question Format Changes What You Learn

Closed-ended questions aren't inferior - they're essential for measurement. The mistake is using them for discovery.

A 2024 study comparing open-ended and closed-ended questions about refugee policy in Poland found that closed-ended questions produced more negative-leaning results, while open-ended responses surfaced richer, more positive nuance. The predetermined options in the closed format boxed respondents into the researcher's framing. Open-ended questions let attitudes emerge from the bottom up.

This isn't just an academic curiosity. NN/g warns that closed-ended questions become leading by revealing what the researcher cares about. "Was that experience helpful?" tells the respondent you're interested in helpfulness and primes them to evaluate along that axis. "How did you find that experience?" leaves the door open for answers you didn't anticipate.

Here's a concrete rewrite that shows the difference:

- Closed (leading): "Did you find that task difficult?"

- Open (neutral): "How did you find that task?"

The first question tells the respondent you suspect it was difficult. The second lets them tell you what actually happened.

Decision Framework: Discovery or Measurement?

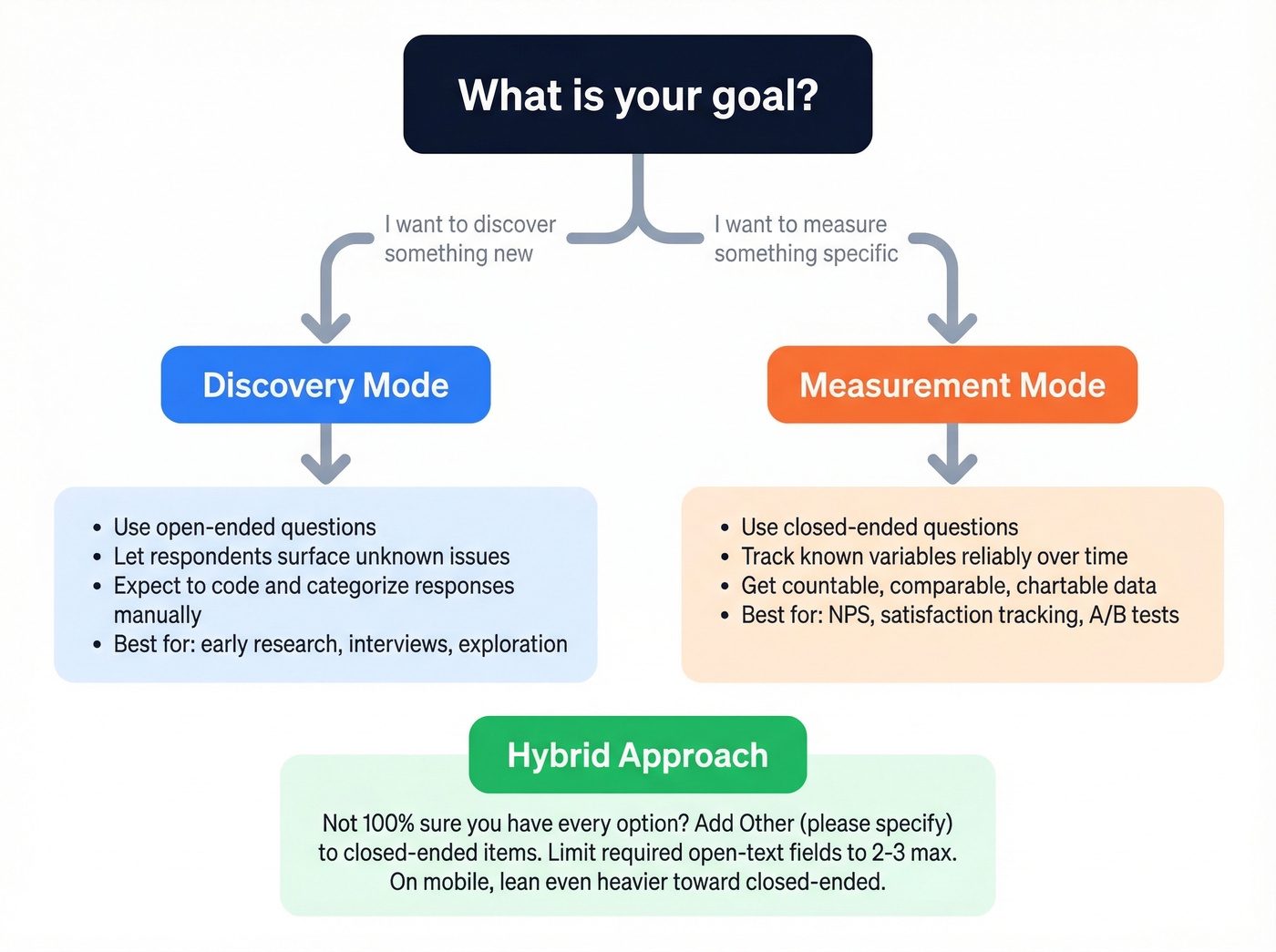

The cleanest framework we've found comes from MeasuringU's survey question decision tree. It starts with one question: are you trying to discover something or measure something?

If discovery: you don't know what the issues are yet. Open-ended questions let respondents surface problems, ideas, and language you haven't thought of. The tradeoff is that you'll need to categorize and code the responses manually - there's no automatic chart at the end.

If measurement: you already know the variables and want to track them reliably. Closed-ended questions give you countable, comparable data. A rating scale, a multiple-choice set, a yes/no - these produce numbers you can trend over time.

The hybrid move: for measurement questions where you're not 100% sure you've captured every option, add an "Other (please specify)" field. This gives you the structure of closed-ended with an escape hatch for surprises. But don't overdo it - adding "and why?" after every closed item causes fatigue and dropout. Keep required open-text to two or three fields max.

On mobile surveys, open-ended questions yield lower response rates, so weight your ratio even more heavily toward closed-ended for mobile-first audiences.
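As a rough sketch of the guidance above, a draft survey can be checked against the 20-30% open-ended share and the two-to-three required open-text limit before it ships. The `Question` class, the `check_survey_mix` function, and the default thresholds are illustrative assumptions, not part of any survey tool.

```python
from dataclasses import dataclass


@dataclass
class Question:
    text: str
    open_ended: bool
    required: bool = True


def check_survey_mix(questions, max_open_share=0.30, max_required_open=3):
    """Return a list of warnings for a draft survey (empty list means no issues found)."""
    warnings = []
    if not questions:
        return warnings

    open_questions = [q for q in questions if q.open_ended]

    # Share of all questions that are open-ended.
    open_share = len(open_questions) / len(questions)
    if open_share > max_open_share:
        warnings.append(
            f"open-ended share is {open_share:.0%}, above {max_open_share:.0%}"
        )

    # Required open-text fields are the ones most likely to cause dropout.
    required_open = sum(1 for q in open_questions if q.required)
    if required_open > max_required_open:
        warnings.append(
            f"{required_open} required open-text fields, above {max_required_open}"
        )
    return warnings
```

For a mobile-first audience, passing a lower `max_open_share` (for example 0.20) tightens the check in the direction the article suggests.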

Examples by Domain

Surveys and Market Research

The biggest pitfall in survey design is adding "please explain" after every item. It feels thorough. It's actually a recipe for garbage data and abandoned surveys.

Concrete rewrites that show when to switch formats:

- Closed: "Are you satisfied with our product? (Yes/No)" --> Open: "What has your experience been with our product over the past 3 months?"

- Closed: "Which feature do you use most? (A/B/C/D)" --> Open: "Walk me through how you use our product in a typical week."

- Closed: "Would you recommend us? (1-10)" --> Open: "If a colleague asked about us, what would you tell them?"

The closed versions are fine for tracking. The open versions are better for learning. Use both - just don't stack five open-text boxes in a row and expect people to finish.

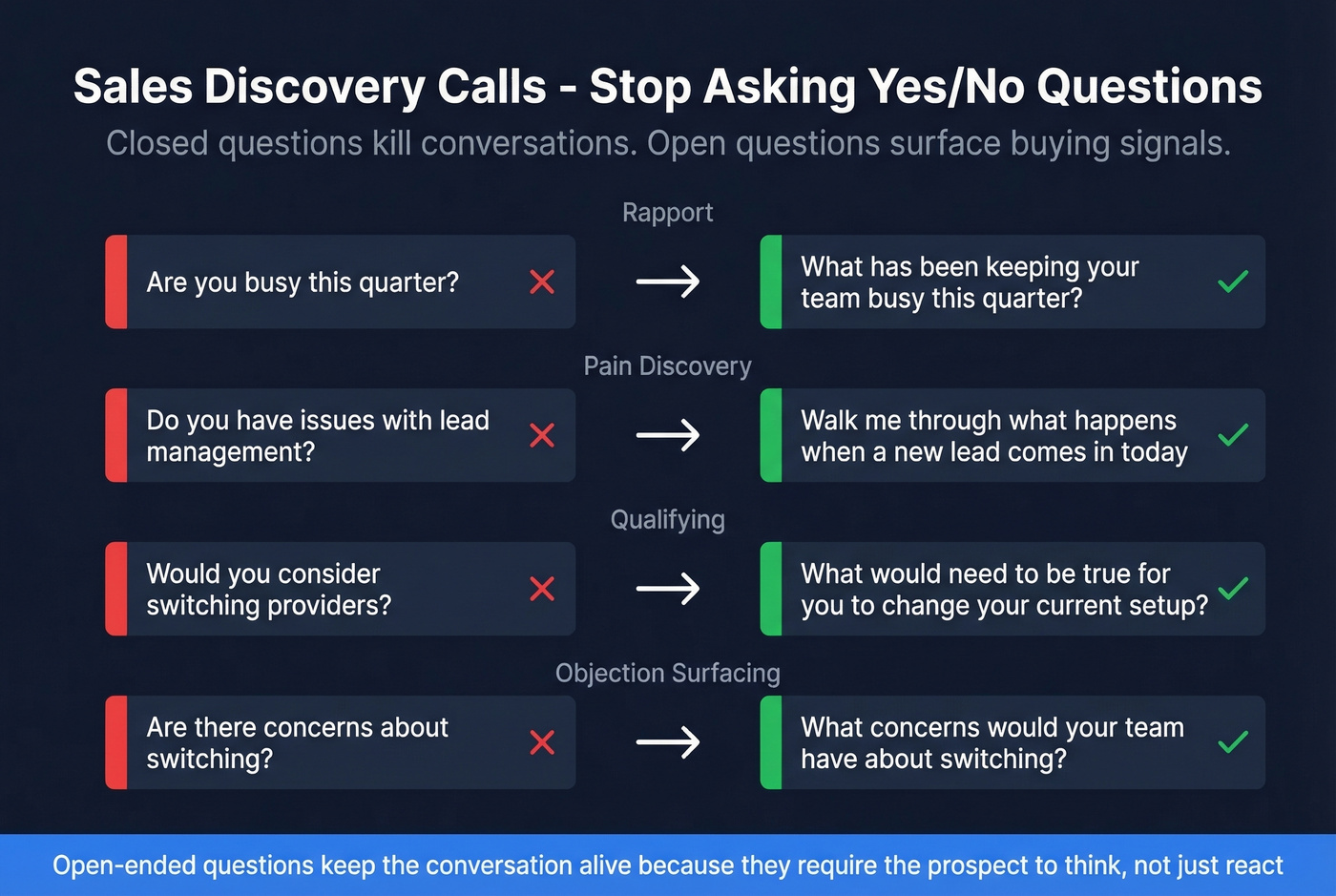

Sales Discovery Calls

Every closed-ended question on a discovery call is a missed opportunity to learn something unexpected. "Are you happy with your current provider?" gets you a yes or no. "Tell me about your experience with [current solution]" gets you a story - and stories contain objections, pain points, and buying signals.

Open-ended prompts that actually work:

- Rapport: "What's been keeping your team busy this quarter?"

- Pain discovery: "Walk me through what happens when a new lead comes in today."

- Qualifying: "What would need to be true for you to change your current setup?"

- Objection surfacing: "What concerns would your team have about switching?"

We've watched SDR calls die in under three minutes because the rep runs through a checklist of yes/no questions. The prospect gives short answers, the energy flatlines, and the call ends with "send me some info." Open-ended questions keep the conversation alive because they require the prospect to think, not just react.

Of course, great discovery questions are wasted if you're calling the wrong person. Before your next call block, verify your prospect list with Prospeo - 98% email accuracy and verified direct dials mean your questions actually reach decision-makers.

UX Research and Usability Testing

NN/g recommends a funnel technique: start broad with open-ended questions, then narrow to closed-ended for specifics. In a usability test, that looks like opening with "How did you find that task?" before drilling into "On a scale of 1-5, how confident were you that you completed it correctly?"

The classic mistake is leading with closed questions that telegraph your hypothesis. "Did you find the navigation confusing?" tells the participant you think it's confusing. "What stood out to you about the navigation?" doesn't.

Interviews and Healthcare

In job interviews, open-ended behavioral questions like "Tell me about a time you handled a difficult stakeholder" reveal how candidates actually think and act. Closed-ended screening questions filter efficiently but tell you nothing about capability.

Healthcare follows a similar pattern. "Are you experiencing pain?" gets a yes. "Describe what you've been feeling over the past week" gets context - location, intensity, triggers, duration - that changes the diagnosis.

Mixed-Method Survey Design

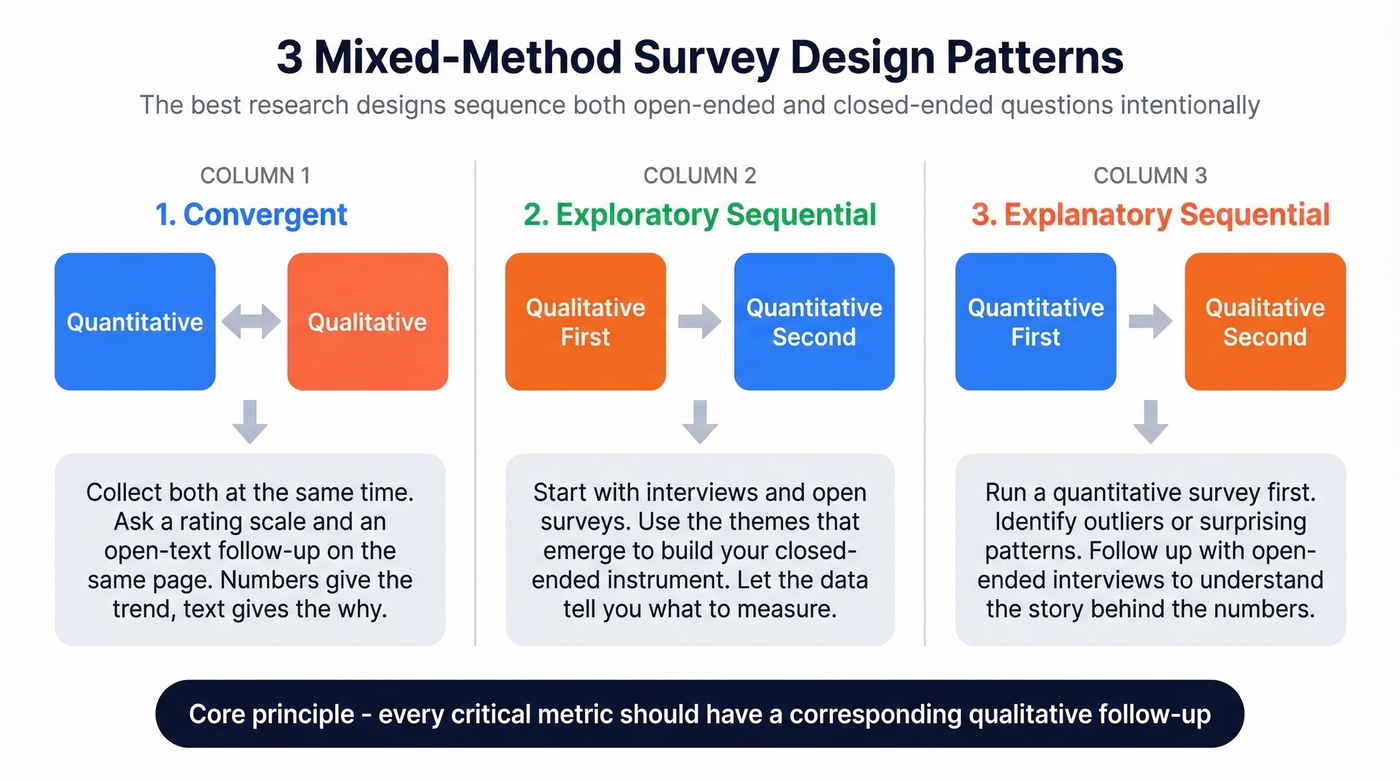

The best research designs don't pick one format. They sequence both intentionally. Three established design patterns are worth knowing.

Convergent design collects both simultaneously. You ask a rating scale question and an open-text follow-up on the same page. The numbers give you the trend; the text gives you the why.

Exploratory sequential starts with open-ended questions - interviews, focus groups, open surveys - then uses the themes that emerge to build a closed-ended instrument. You're letting the data tell you what to measure before you measure it.

Explanatory sequential goes the other direction. You run a quantitative survey first, identify outliers or surprising patterns, then follow up with open-ended interviews to understand what's behind the numbers.

The principle underneath all three: every critical quantitative metric should have a corresponding qualitative follow-up. A satisfaction score without context is just a number. Add the story behind it and you have something actionable.

Common Mistakes and Fixes

Too many open-ended questions. If your survey has more than three required open-text fields, expect garbage data. People rush, copy-paste, or abandon. Fix: limit required open-ended to two or three. Make the rest optional.

False open-ended questions. "What did you like about it?" feels open-ended but still constrains the respondent to the positive dimension. A thread on r/NoStupidQuestions captures this misconception well - some "what" questions can still feel boxed-in. Fix: use genuinely neutral stems like "Describe your experience with..." or "What stood out to you about..."

Leading questions that reveal researcher bias. "To what extent was the visual design a factor in your decision?" tells the respondent you think visual design matters. Fix: "What factors did you consider?" lets them bring up design - or not.

Overlapping response options. Ranges like "1-5, 5-10" or checkboxes that should be radio buttons create ambiguity and dirty data. Fix: test your closed-ended options for mutual exclusivity before launching.
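The mutual-exclusivity test for numeric ranges can be automated. The sketch below treats each option as an inclusive `(low, high)` pair and reports every pair that shares a value; `find_overlaps` is a hypothetical helper name, and the inclusive-bounds convention is an assumption about how the options are worded.

```python
from itertools import combinations


def find_overlaps(ranges):
    """Return all pairs of inclusive (low, high) ranges that share at least one value."""
    ordered = sorted(ranges)
    # After sorting, a starts no later than b, so they overlap
    # exactly when b starts at or before the point where a ends.
    return [(a, b) for a, b in combinations(ordered, 2) if b[0] <= a[1]]


# "1-5" and "5-10" both accept the answer 5, so the pair is reported.
print(find_overlaps([(1, 5), (5, 10)]))  # [((1, 5), (5, 10))]

# "1-5" and "6-10" are mutually exclusive.
print(find_overlaps([(1, 5), (6, 10)]))  # []
```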

The "and why?" trap. Adding an open-text "why?" after every closed item seems like good practice. It's actually the fastest way to tank completion rates. Fix: pick the two or three most important items and add a follow-up. Leave the rest alone.

Analyzing Open-Ended Responses

Here's the thing most articles skip: open-ended questions give you "richer data" only if you have a plan to analyze it. Without a coding framework, you have a pile of text you'll never use.

The state of open-ended analysis is worse than you'd think. A systematic review of 956 psychology articles found that only 21% included human-coded open-ended data - and of those, only about a third reported enough detail to evaluate the validity of their coding process. The rest were black boxes.

That same review introduces the concept of "kappa-hacking" - where researchers artificially inflate inter-rater reliability by repeatedly testing or manipulating categories until the numbers look good. It's the qualitative equivalent of p-hacking, and it's more common than anyone admits.

For practical analysis, start with manual coding on your first pass. Expect roughly 2-4 hours per 100 medium-length responses. Tools like MEH (Meaning Extraction Helper) and LIWC can automate theme extraction and emotional tone analysis at scale. LLMs can assist with initial coding passes too, but the same transparency concerns apply: if you can't explain how your categories were derived, the analysis is a black box regardless of whether a human or a model did the work.

One underappreciated use of open-ended responses: data quality screening. Across four online surveys totaling 3,771 participants, 18-35% of respondents were flagged for copying their open-ended answers from online sources. Those same participants showed lower reliability on the closed-ended scales. Open-ended fields don't just collect qualitative data - they help you identify who's actually paying attention.
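The surveys cited above screened for copied answers. A simpler first pass, offered here as an assumption of this sketch and not the method those surveys used, is to measure how many responses are non-answers like "N/A" or "No" before any coding begins. The `NON_ANSWERS` set and both function names are illustrative.

```python
# Illustrative list of low-effort replies; extend it for your own data.
NON_ANSWERS = {"", "n/a", "na", "no", "none", "nothing", "-"}


def is_non_answer(response):
    """True if the response, ignoring case, spacing, and trailing punctuation, is a non-answer."""
    normalized = response.strip().lower().rstrip(".!")
    return normalized in NON_ANSWERS


def non_answer_share(responses):
    """Fraction of responses that are non-answers (0.0 for an empty list)."""
    if not responses:
        return 0.0
    return sum(is_non_answer(r) for r in responses) / len(responses)
```

A high share on an optional field is a signal about the question wording as much as about the respondents.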

Let's be honest: if your average deal size is under $15k and you're running customer surveys, you probably don't need a sophisticated analysis platform. A spreadsheet with a consistent coding manual and two independent coders will outperform any tool used sloppily. The framework matters more than the software.
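One common way to check agreement between two independent coders is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a minimal hand-rolled version for two coders who each assign one category per response; the function name and the sample theme labels are made up for illustration.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who each labeled the same responses once."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of responses")

    n = len(coder_a)
    # Observed agreement: share of responses given the same label by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n

    # Chance agreement: based on how often each coder used each label.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)

    if expected == 1:
        return 1.0  # both coders used one identical label for everything
    return (observed - expected) / (1 - expected)


coder_a = ["price", "price", "ux", "ux"]
coder_b = ["price", "price", "ux", "price"]
print(cohens_kappa(coder_a, coder_b))  # 0.5
```

Compute it once on the final coding manual and report it as is. Re-running with reshuffled categories until the number looks good is the kappa-hacking described above.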

If you're using these insights in a revenue context, tie your findings back to lead scoring and pipeline health so qualitative themes translate into action.

FAQ

What's the ideal ratio of open-ended to closed-ended questions?

A practical target is 70-80% closed-ended and 20-30% open-ended. Keep required open-text fields to two or three per survey to prevent dropout - completion rates fall sharply beyond that threshold.

Can you convert a closed-ended question into an open-ended one?

Yes. Replace yes/no stems with "How," "Tell me about," or "What was your experience with" to remove the leading frame and invite richer responses. "Was onboarding easy?" becomes "Describe your onboarding experience."

How long does it take to analyze open-ended survey responses?

Expect 2-4 hours per 100 medium-length responses for first-pass manual coding. Automated tools like MEH and LIWC cut theme extraction time by roughly 60-70%, making them worthwhile above 200 responses.

How do I prepare for sales discovery calls with open-ended questions?

Write 5-7 open-ended prompts covering rapport, pain discovery, and qualifying. Verify your prospect's contact data beforehand so your questions actually reach the right decision-maker - bad contact info wastes everyone's time.