Qualitative vs Quantitative Forecasting: How to Choose, Measure, and Combine Both

Global organizations lose an average of $184M annually from supply chain disruptions. The qualitative vs quantitative forecasting debate is usually framed as either/or. It's not. The real question is which approach to weight given your data maturity, forecast horizon, and how much uncertainty you're actually dealing with.

Quick Decision Guide

- 2+ years of clean historical data? Start quantitative with exponential smoothing, then benchmark against a naive forecast.

- New market or product launch? Start with a structured Delphi process, then layer in quantitative models as data accumulates.

- Sales forecasting? Combine CRM pipeline data with weekly deal-level qualitative review. Your forecast is only as good as your input data.

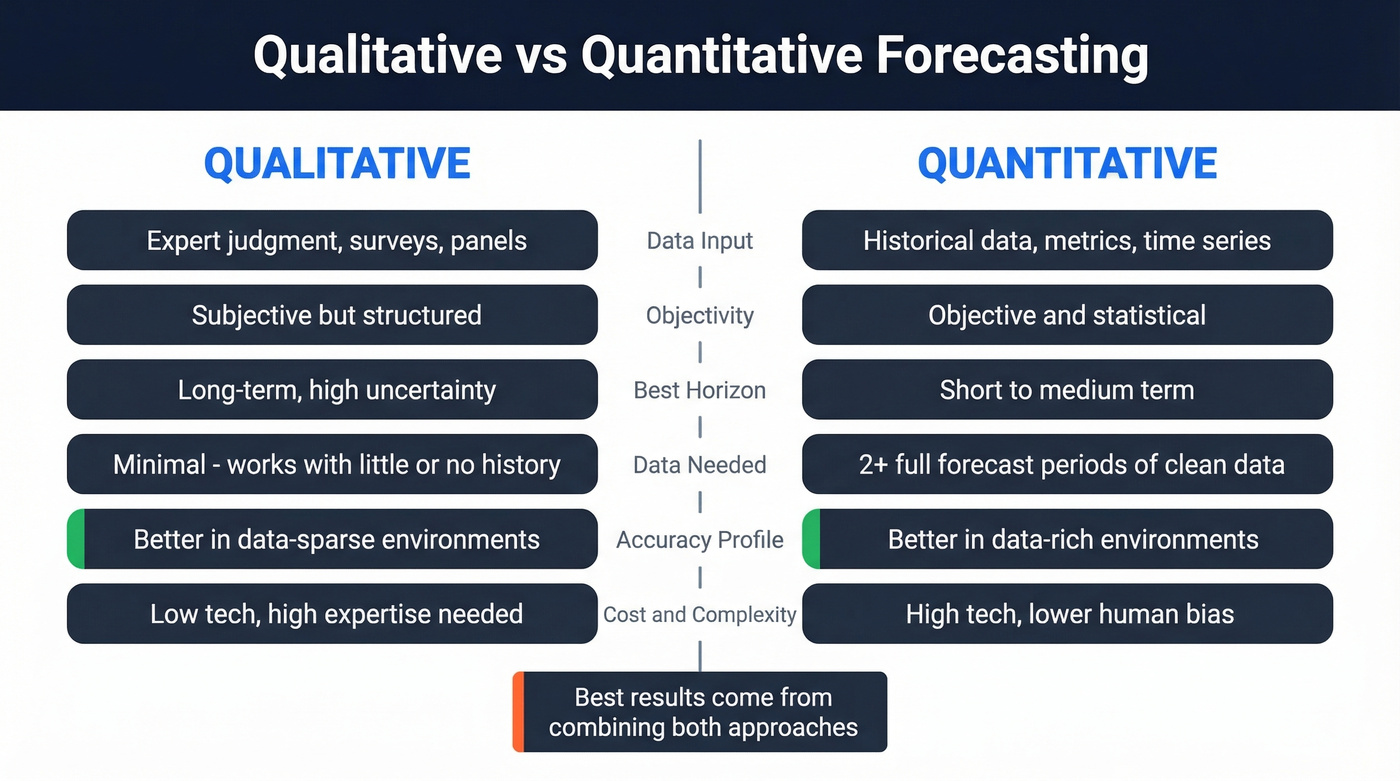

Both Methods at a Glance

| Dimension | Qualitative | Quantitative |
|---|---|---|
| Data input | Expert judgment, surveys | Historical data, metrics |
| Objectivity | Subjective, structured | Objective, statistical |
| Best horizon | Long-term, uncertain | Short-to-medium term |
| Data needed | Minimal | 2+ full forecast periods |
| Accuracy profile | Better in data-sparse | Better in data-rich |
| Cost/complexity | Low tech, high expertise | High tech, lower bias |

Stop treating qualitative as the "less rigorous" option. In data-sparse environments, structured expert judgment consistently matches or outperforms statistical models. The rigor comes from the process, not the math.

Common Qualitative Methods

Delphi Method is the gold standard for structured judgment-based forecasting. You assemble 5-20 experts with diverse backgrounds - for a retail chain, that might mean store managers, an economist, and a supply chain lead. Distribute forecasting questions anonymously, collect initial estimates with written justifications, then summarize and redistribute. Two to three rounds typically produce consensus, and equal weighting of final estimates keeps any single voice from dominating. The anonymity is what makes it work - it kills hierarchy bias.
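
To make the round-by-round mechanics concrete, here's a minimal sketch of the aggregation step. It assumes each round's anonymous estimates have already been collected as a list of numbers; the function names, the interquartile-range stopping rule, and the sample figures are illustrative assumptions, not part of any standard Delphi tooling.

```python
from statistics import mean, median, quantiles

def summarize_round(estimates):
    """Summary that gets redistributed to the panel: median and spread."""
    q1, _, q3 = quantiles(estimates, n=4)
    return {"median": median(estimates), "iqr": q3 - q1}

def run_delphi(rounds, iqr_tolerance):
    """Walk the rounds in order and stop once the spread is tight enough.

    Returns (round_number, forecast). The forecast is a plain mean of the
    final round, i.e. every expert gets equal weight.
    """
    final = None
    for round_number, estimates in enumerate(rounds, start=1):
        final = (round_number, estimates)
        if summarize_round(estimates)["iqr"] <= iqr_tolerance:
            break  # consensus reached (hypothetical stopping rule)
    round_number, estimates = final
    return round_number, mean(estimates)

# Hypothetical unit-demand estimates from five experts over three rounds
rounds = [
    [100, 140, 90, 160, 120],
    [110, 130, 105, 135, 120],
    [115, 125, 118, 122, 120],
]
stopped_at, forecast = run_delphi(rounds, iqr_tolerance=10)
```

In practice the later rounds only exist after experts have seen the previous summary, so a real process would collect them one at a time rather than from a prepared list.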

Executive opinion aggregates senior leadership judgment. Fast, but prone to the loudest-voice-in-the-room problem.

A practical way to make qualitative forecasting real instead of "gut feel" is to score specific drivers: switching costs, network effects, barriers to entry, competitive intensity, and management execution. Qualitative is still structured work - it just isn't expressed as a single equation.
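
One way to keep driver scoring honest is a simple weighted scorecard. The sketch below is an illustration only: the weights, the 1-5 scale, and the function name are assumptions you'd replace with whatever your panel agrees on.

```python
# Hypothetical weights for the five drivers; they sum to 1.0
DRIVER_WEIGHTS = {
    "switching_costs": 0.25,
    "network_effects": 0.20,
    "barriers_to_entry": 0.20,
    "competitive_intensity": 0.15,
    "management_execution": 0.20,
}

def driver_score(scores, weights=DRIVER_WEIGHTS):
    """Weighted average of driver scores on a 1-5 scale.

    Assumes every driver is scored so that 5 is favorable, which means
    competitive intensity is scored in reverse (5 = weak competition).
    """
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing driver scores: {sorted(missing)}")
    return sum(weights[d] * scores[d] for d in weights)

# Example assessment for a hypothetical product line
example = driver_score({
    "switching_costs": 4,
    "network_effects": 2,
    "barriers_to_entry": 3,
    "competitive_intensity": 2,
    "management_execution": 5,
})
```

The number itself matters less than the discipline: each reviewer has to commit to a score per driver, which makes disagreements visible.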

Market research and surveys capture customer intent directly, best for launches where no historical signal exists. Scenario planning models best, worst, and most-likely cases - essential for strategic decisions under genuine uncertainty.

Common Quantitative Methods

Naive forecasting is your baseline: "next period = last period." If your fancy model can't beat this, it's not adding value. Simple as that.

Moving averages are another straightforward benchmark. Easy to implement, but they give equal weight to each period and lag when trends shift.
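
Both baselines fit in a few lines. This sketch assumes the history is a plain list ordered oldest to newest; the function names and sample numbers are illustrative.

```python
def naive_forecast(history):
    """Next period = last observed period."""
    return history[-1]

def moving_average_forecast(history, window=3):
    """Equal-weight mean of the most recent `window` periods."""
    if len(history) < window:
        raise ValueError("not enough history for the chosen window")
    recent = history[-window:]
    return sum(recent) / window

# A series that starts trending upward in its last three periods
history = [100, 102, 101, 110, 120, 130]
naive = naive_forecast(history)                 # 130
smoothed = moving_average_forecast(history, 3)  # 120.0
```

On this trending series the moving average sits well below the latest value, which is the lag described above.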

Exponential smoothing (ETS / Holt-Winters) weights recent data more heavily and handles trend and seasonality well. This is where we'd recommend most teams start their quantitative journey.
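
To show what "weights recent data more heavily" means, here's a sketch of simple exponential smoothing, the most basic member of the family. It deliberately leaves out the trend and seasonal components that Holt-Winters adds, and the initialization (level starts at the first observation) and default `alpha` are assumptions.

```python
def ses_forecast(history, alpha=0.3):
    """One-step-ahead forecast from simple exponential smoothing.

    Each update blends the newest observation with the previous level,
    so older observations decay geometrically in influence.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]  # assumed initialization: first observation
    for y in history[1:]:
        level = alpha * y + (1 - alpha) * level
    return level

history = [100, 102, 101, 110, 120, 130]
reactive = ses_forecast(history, alpha=0.8)  # tracks recent values closely
smooth = ses_forecast(history, alpha=0.2)    # reacts slowly, filters noise
```

With `alpha=1.0` this collapses to the naive forecast, which is a handy sanity check. For production use, a maintained library implementation that also fits trend and seasonality is the more sensible choice.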

ARIMA/SARIMA is often assumed to be "more general" than ETS, but that's a myth - neither model class strictly dominates the other. Use time series cross-validation to compare them; AICc can't compare ETS vs ARIMA directly because likelihoods are computed differently.
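
Time series cross-validation is easier to see in code. The sketch below uses a rolling origin: fit on everything up to period t, forecast period t, then move the origin forward. The two forecasters are trivial stand-ins so the example runs on its own; in a real comparison you'd pass in functions that fit an ETS or ARIMA model on each training slice. All names here are illustrative.

```python
def naive(history):
    return history[-1]

def mean_of_last_three(history):
    return sum(history[-3:]) / 3

def rolling_origin_mae(series, forecaster, min_train=3):
    """Mean absolute error of one-step forecasts over a rolling origin."""
    errors = []
    for t in range(min_train, len(series)):
        prediction = forecaster(series[:t])  # only data before period t
        errors.append(abs(series[t] - prediction))
    return sum(errors) / len(errors)

series = [10, 12, 14, 16, 18, 20]
scores = {
    f.__name__: rolling_origin_mae(series, f)
    for f in (naive, mean_of_last_three)
}
best = min(scores, key=scores.get)
```

Because both candidates are scored on the same out-of-sample periods with the same error metric, the comparison holds regardless of how each model computes its likelihood.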

Regression and causal models matter when external drivers like price changes, promotions, or macro indicators influence demand.

ML models like Random Forest and LSTM can outperform traditional methods on non-linear patterns and massive datasets, but 63% of employers cite a skills gap as a primary adoption barrier. Don't reach for ML until you've exhausted simpler methods.

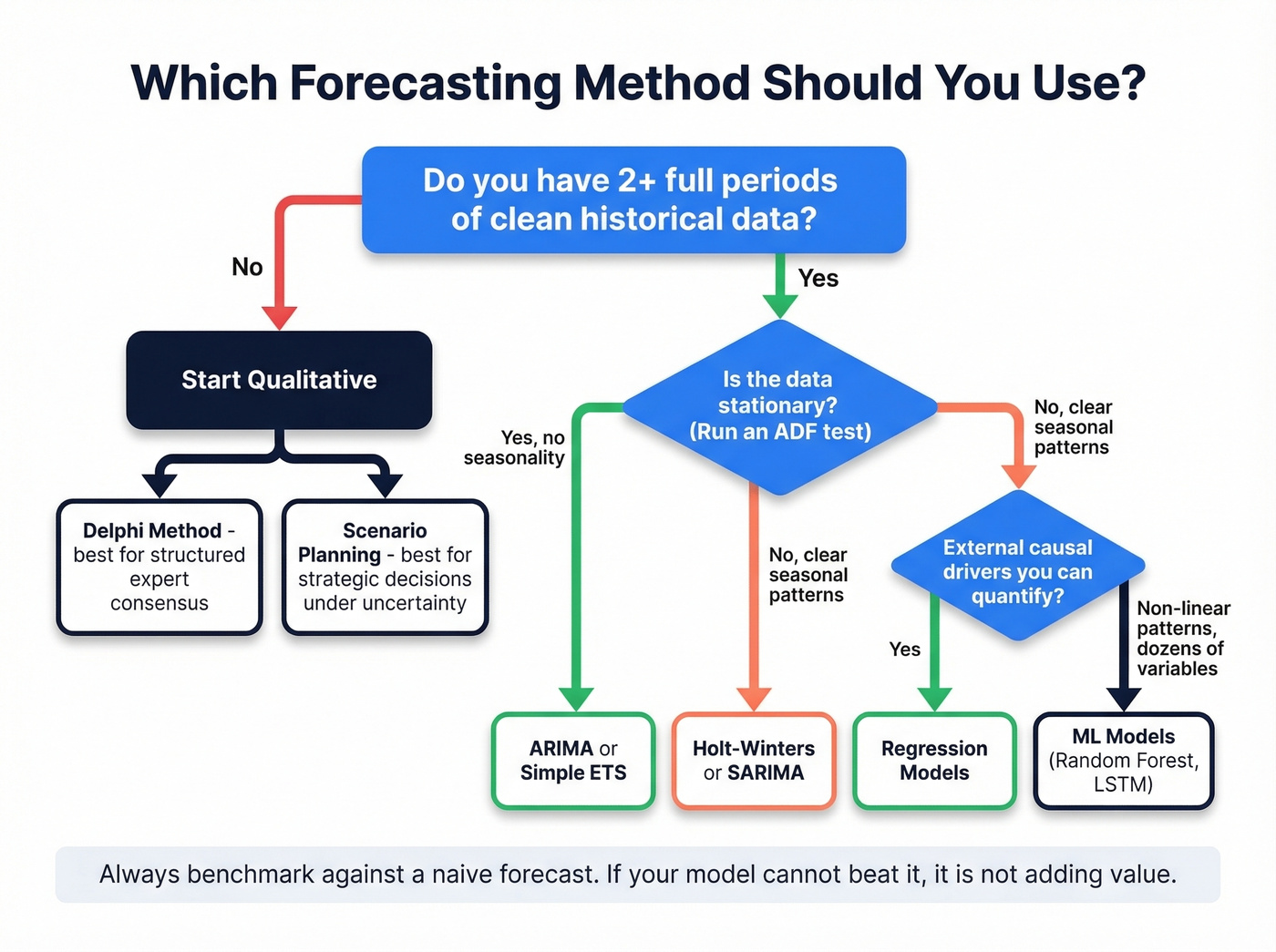

How to Choose a Method

Start with data volume. Do you have at least two full forecast periods of clean historical data? If not, you're in qualitative territory - run a Delphi or structured expert panel.

If you do have sufficient data, assess stationarity with an ADF test; the result tells you whether the series needs differencing before an ARIMA fit. No seasonality points to ARIMA or simple ETS. Clear seasonal patterns? Holt-Winters or SARIMA. External causal drivers you can quantify? Regression. Non-linear relationships across dozens of variables? Now you're in ML territory.
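
The selection logic above can be written down as a small function. This is a sketch under assumptions: the function name, the boolean inputs, and the order in which the checks are applied are illustrative choices, and the ADF test itself is left out because it would come from a statistics library.

```python
def suggest_method(n_full_periods, seasonal=False,
                   causal_drivers=False, nonlinear_many_vars=False):
    """Map the decision steps to a starting method family."""
    if n_full_periods < 2:
        return "Qualitative: Delphi or structured expert panel"
    if nonlinear_many_vars:
        return "ML models"
    if causal_drivers:
        return "Regression / causal model"
    if seasonal:
        return "Holt-Winters or SARIMA"
    return "ARIMA or simple ETS"

new_launch = suggest_method(n_full_periods=0)
retail_sku = suggest_method(n_full_periods=3, seasonal=True)
```

Treat the output as a starting point to benchmark, not a verdict.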

For inventory planning, forecast error directly drives carrying costs and stockouts, so the method choice has immediate financial consequences. Intermittent demand - common in spare parts and specialty manufacturing - often needs specialized handling or a qualitative overlay, because simple time series baselines behave badly when demand is mostly zeros.
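
One example of "specialized handling" is Croston's method, a technique this article doesn't otherwise cover. It smooths the size of non-zero demands and the gap between them separately, then forecasts their ratio. The sketch below is a minimal version; the initialization from the first non-zero demand and the default `alpha` are assumptions, and variants of the method differ on both.

```python
def croston_forecast(demand, alpha=0.1):
    """Per-period demand rate for an intermittent series (Croston's method)."""
    size = None        # smoothed size of non-zero demands
    interval = None    # smoothed number of periods between non-zero demands
    periods_since = 1  # periods elapsed since the last non-zero demand
    for y in demand:
        if y > 0:
            if size is None:
                # Assumed initialization: first non-zero demand and its gap
                size, interval = float(y), float(periods_since)
            else:
                size = alpha * y + (1 - alpha) * size
                interval = alpha * periods_since + (1 - alpha) * interval
            periods_since = 1
        else:
            periods_since += 1
    if size is None:
        return 0.0  # no demand observed at all
    return size / interval

# Hypothetical spare part: 6 units every third period
spare_part = [0, 0, 6, 0, 0, 6, 0, 0, 6]
rate = croston_forecast(spare_part)
```

A naive forecast on this series would flip between 0 and 6 depending on where the history ends, while the rate of roughly 2 units per period is far more useful for setting stock levels.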

Always benchmark against a baseline forecast. Skip this step and you've got no idea whether your model is adding value.

Measuring Forecast Accuracy

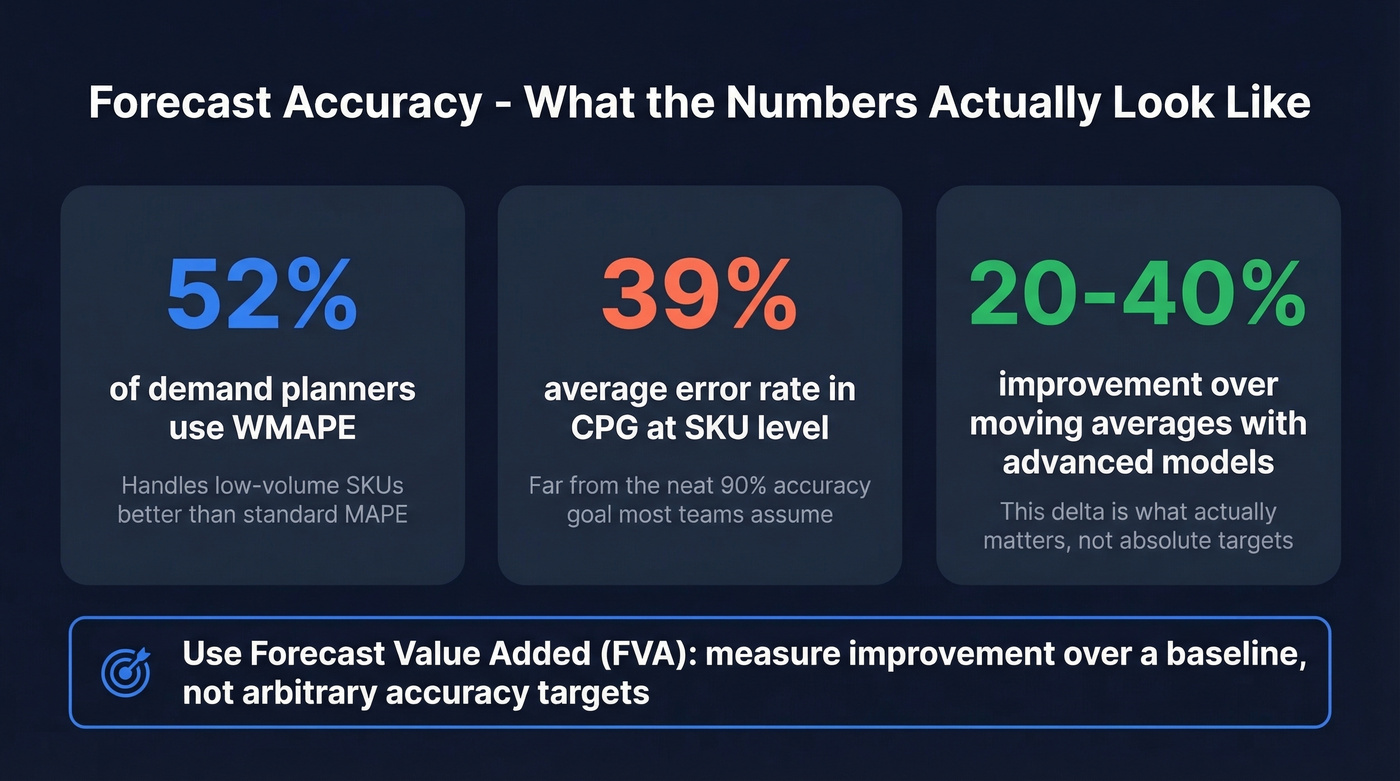

Most teams measure accuracy wrong. Or don't measure it at all.

In a demand planning survey, 52% of planners used WMAPE because it handles low-volume SKUs more gracefully than standard MAPE. That's a good instinct.
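
The difference between the two metrics shows up as soon as a low-volume SKU enters the mix. Here's a minimal sketch with made-up numbers; the function names are illustrative.

```python
def mape(actuals, forecasts):
    """Mean of per-item absolute percentage errors.

    Undefined when any actual is zero, since each error is divided
    by its own actual.
    """
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)]
    return sum(errors) / len(errors)

def wmape(actuals, forecasts):
    """Total absolute error divided by total actual volume."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    total_volume = sum(abs(a) for a in actuals)
    return total_error / total_volume

# One high-volume SKU and one low-volume SKU (hypothetical)
actuals = [1000, 2]
forecasts = [950, 4]
plain = mape(actuals, forecasts)      # 0.525
weighted = wmape(actuals, forecasts)  # about 0.052
```

Missing the tiny SKU by two units drags MAPE above 50%, while WMAPE weights each miss by volume and lands near 5%.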

Here's the thing: absolute accuracy targets are misleading. A 70% target means nothing without context. Real-world error rates run around 39% in CPG and 36% in chemicals at the SKU level - numbers that would terrify anyone anchored to a neat "90% accuracy" goal.

The better framework is Forecast Value Added (FVA): measure your model's improvement over a baseline, not some arbitrary number. Advanced models often outperform moving averages by 20-40%, and that delta is what actually matters. If your ARIMA model cuts error by 25% relative to the naive forecast, that's real value. If it cuts error by 2%, you're paying for complexity you don't need.
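
FVA reduces to a small calculation once you have both sets of forecasts. The sketch below expresses it as relative error reduction using mean absolute error; that definition, the function names, and the numbers are assumptions, and some teams report FVA as a percentage-point difference in their error metric instead.

```python
def mae(actuals, forecasts):
    """Mean absolute error."""
    return sum(abs(a - f) for a, f in zip(actuals, forecasts)) / len(actuals)

def forecast_value_added(actuals, model_forecasts, baseline_forecasts):
    """Share of baseline error removed by the model.

    Positive means the model adds value; negative means the baseline
    was better.
    """
    baseline_error = mae(actuals, baseline_forecasts)
    model_error = mae(actuals, model_forecasts)
    return (baseline_error - model_error) / baseline_error

actuals = [100, 110, 120, 130]
baseline = [90, 100, 110, 120]      # naive: off by 10 every period
model = [92.5, 117.5, 112.5, 137.5] # off by 7.5 every period
fva = forecast_value_added(actuals, model, baseline)  # 0.25
```

A negative result is the useful warning sign: the model is costing you accuracy as well as effort.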

Why You Need Both

Let's be honest: the biggest forecasting failures aren't model failures - they're communication failures. This is what hybrid forecasting solves. Quantitative models provide consistency and scale; qualitative review surfaces context the model can't see.

In sales forecasting, that looks like weekly deal reviews layered on pipeline analytics. If nobody challenges whether the biggest deals have real executive sponsorship, your "quantitative" forecast can still be wildly wrong for very human reasons. We've seen teams with sophisticated pipeline scoring still miss quarters because a single whale deal was stuck in legal and nobody flagged it.
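
Here's a rough sketch of how a weekly review can sit on top of pipeline math. The `Deal` fields, probabilities, and deal names are hypothetical; the idea is simply that a reviewer's probability, when present, overrides the stage-based one.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Deal:
    name: str
    amount: float
    stage_probability: float                    # from pipeline analytics
    review_probability: Optional[float] = None  # set in the weekly review

def pipeline_forecast(deals, use_review=True):
    """Probability-weighted pipeline value, with optional review overrides."""
    total = 0.0
    for deal in deals:
        probability = deal.stage_probability
        if use_review and deal.review_probability is not None:
            probability = deal.review_probability
        total += deal.amount * probability
    return total

deals = [
    # Whale deal flagged in review as stuck in legal
    Deal("whale", 500_000, stage_probability=0.8, review_probability=0.2),
    Deal("mid-market A", 50_000, stage_probability=0.5),
    Deal("mid-market B", 50_000, stage_probability=0.5),
]
model_only = pipeline_forecast(deals, use_review=False)  # about 450,000
with_review = pipeline_forecast(deals)                   # about 150,000
```

One flagged deal moves the number by roughly 300,000, which is the gap between a quarter that looks safe and one that isn't.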

Upwork achieves 95% forecast accuracy using weekly deal reviews layered on quantitative pipeline analysis - that's the hybrid in action.

For sales forecasting specifically, accuracy starts with data accuracy. If your CRM is full of stale contacts and outdated org charts, your pipeline numbers are fiction. Tools like Prospeo, which refreshes contact data every 7 days at 98% email accuracy, keep the data feeding your pipeline current so your quantitative forecast reflects reality rather than last quarter's headcount.

If you're pressure-testing your pipeline inputs, start with pipeline analytics and the most common sales pipeline challenges that distort forecasts.

Mistakes That Kill Forecasts

Over-reliance on historical data during regime shifts. COVID proved this. So did every tariff change and supply chain shock since.

Ignoring external factors. Promotions, competitor moves, and macro shifts aren't in your time series. Build them in or overlay them qualitatively.

Overcomplicating models. Overfitting to past data destroys future predictive power. We've watched teams spend months tuning an LSTM when a Holt-Winters model with a qualitative overlay would've gotten them 90% of the way there in a week.

Using static forecasts instead of rolling forecasts. A forecast locked in January is useless by March.

Siloed departments. When sales, finance, and ops forecast independently, you get three different numbers and zero alignment. If your forecasting process doesn't include a cross-functional review step, skip everything else in this article and fix that first.

If your forecast is tied to revenue operations, align on definitions like sales forecast vs sales goal and standardize your sales operations metrics.

FAQ

Is quantitative forecasting more accurate than qualitative?

Not inherently. In stable, data-rich environments, quantitative methods win. In data-sparse contexts - new markets, regime shifts - structured qualitative approaches like Delphi consistently match or beat statistical models. Choose based on data availability, not perceived rigor.

Can you combine both approaches?

Yes, and most high-performing teams do. Use quantitative models as the baseline, then apply qualitative overlays for factors the model can't capture: competitive moves, regulatory changes, deal-level intelligence. Upwork hits 95% accuracy this way.

What tools help improve forecast accuracy?

For demand planning, tools like Forecast Pro, SAP IBP, or Kinaxis handle quantitative modeling ($5K-$500K+/year depending on scale). For sales forecasting, your CRM's native forecasting plus verified contact data is the foundation - stale prospect data silently kills pipeline forecasts, which is why a weekly data refresh cycle matters more than most teams realize.