Sales Demo Training: What 67,149 Recorded Demos Reveal About Winning

Your AE just bombed a demo with a $200K opportunity. You watched the recording - forty-five minutes of feature walkthrough, zero discovery recap, one buyer question. You don't need motivation. You need a sales demo training system that forces better behavior on the next call.

Sandler Training's research shows 75% of buyers decide who they're going with before a proposal even lands. Pair that with an average AE ramp time of 4.9 months, and "they'll figure demos out over time" becomes an expensive fantasy.

Here's the thing: most demo training programs teach theater. We've spent years watching teams run demos, reviewing recordings, and tracking what actually moves pipeline. The answer isn't charisma or slide design. It's structure, measurement, and relentless coaching against a handful of specific benchmarks.

Demo Mistakes That Kill Deals

These seven anti-patterns show up in nearly every underperforming team we've reviewed.

- Demoing on the first call. Without discovery, you're guessing what matters.

- Playing tour guide. A feature parade signals you don't understand the buyer's priority.

- Showing everything. Pick three moments max. Everything else is noise.

- Monologuing past 76 seconds. Winners don't do it. Full stop.

- Skipping the discovery recap. If you don't restate the pain, the buyer assumes you missed it.

- Ending without a next step. "We'll follow up" is where deals go to die.

- Running demos for bad-fit prospects. A demo is a diagnostic tool - if the problem isn't real or you can't solve it, walk away fast.

Hot take: if your team's average deal size sits below $10K, you probably don't need a "perfect" demo. You need tight qualification and a clean next step. Most teams over-train demos and under-train the decision process.

What 67,149 Recorded Demos Actually Show

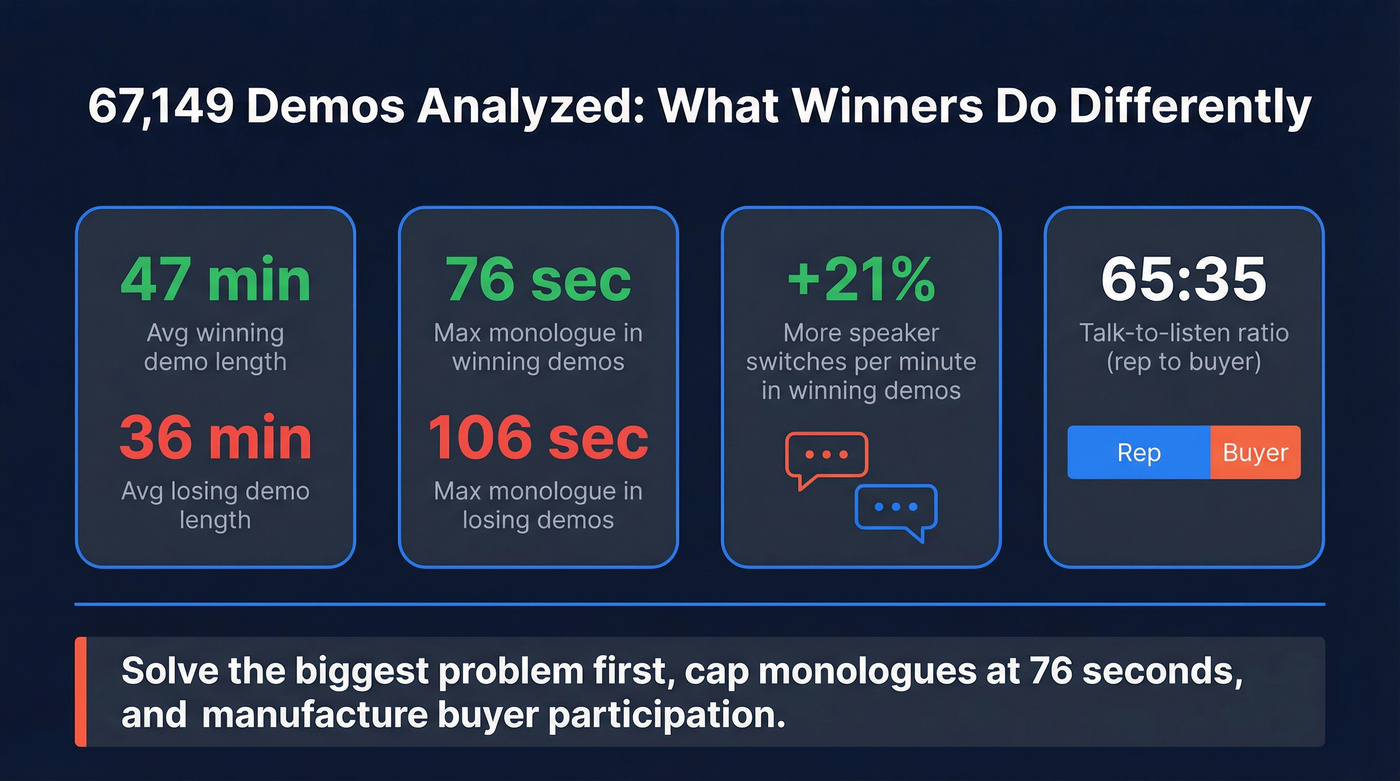

Gong analyzed 67,149 sales demos over ten weeks, tying screen-share recordings to CRM outcomes. The benchmarks are specific enough to coach to.

Winning demos averaged 47 minutes compared to 36 minutes for unsuccessful ones. But the real separator wasn't duration - it was interaction.

No winning demo had an uninterrupted monologue longer than 76 seconds. Unsuccessful demos had uninterrupted stretches up to 106 seconds. Successful demos also had 21% more speaker switches per minute, which is a fancy way of saying the buyer stayed involved. The talk-to-listen ratio landed around 65:35 (rep to buyer), though the ratio alone didn't predict outcomes. Cadence did. Top reps get more buyer questions because they run one simple loop: show the end result, state the value, and stop talking.

If you remember nothing else from the 67K-demo analysis, remember this: solve the biggest problem first, cap monologues at 76 seconds, and manufacture buyer participation. Everything else is decoration.
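The three interaction benchmarks above can be computed from any diarized transcript. Here is a minimal sketch in Python, assuming a hypothetical export where each segment carries a speaker label and start/end times in seconds. The `Segment` type, the `"rep"`/`"buyer"` labels, and the function names are illustrative assumptions, not the format of Gong or any other tool.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str  # hypothetical labels: "rep" or "buyer"
    start: float  # seconds from the start of the call
    end: float    # seconds from the start of the call


def merge_turns(segments):
    """Merge back-to-back segments by the same speaker into one turn.

    A pause with no other speaker in between still counts as part of
    the same uninterrupted stretch.
    """
    turns = []
    for seg in sorted(segments, key=lambda s: s.start):
        if turns and turns[-1].speaker == seg.speaker:
            turns[-1] = Segment(seg.speaker, turns[-1].start, seg.end)
        else:
            turns.append(Segment(seg.speaker, seg.start, seg.end))
    return turns


def demo_metrics(segments, rep="rep"):
    """Return longest rep monologue, speaker switches per minute, rep talk share."""
    turns = merge_turns(segments)
    if not turns:
        raise ValueError("no segments to analyze")
    duration_s = turns[-1].end - turns[0].start
    if duration_s <= 0:
        raise ValueError("call duration must be positive")
    talk_s = sum(t.end - t.start for t in turns)
    rep_s = sum(t.end - t.start for t in turns if t.speaker == rep)
    longest = max((t.end - t.start for t in turns if t.speaker == rep), default=0.0)
    return {
        "longest_rep_monologue_s": longest,
        "switches_per_min": (len(turns) - 1) / (duration_s / 60),
        "rep_talk_share": rep_s / talk_s,
    }


# Made-up example call: the rep talks for 90 seconds before the buyer speaks.
example = [
    Segment("rep", 0, 60),
    Segment("rep", 62, 90),
    Segment("buyer", 90, 120),
    Segment("rep", 120, 180),
]
metrics = demo_metrics(example)
over_ceiling = metrics["longest_rep_monologue_s"] > 76  # the 76-second benchmark
```

In this made-up call, the opening stretch runs past the 76-second ceiling, so it would be the first thing to coach. Merging same-speaker segments before measuring is a design choice: a rep who pauses for breath but never yields the floor is still monologuing.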

A Repeatable Demo Structure

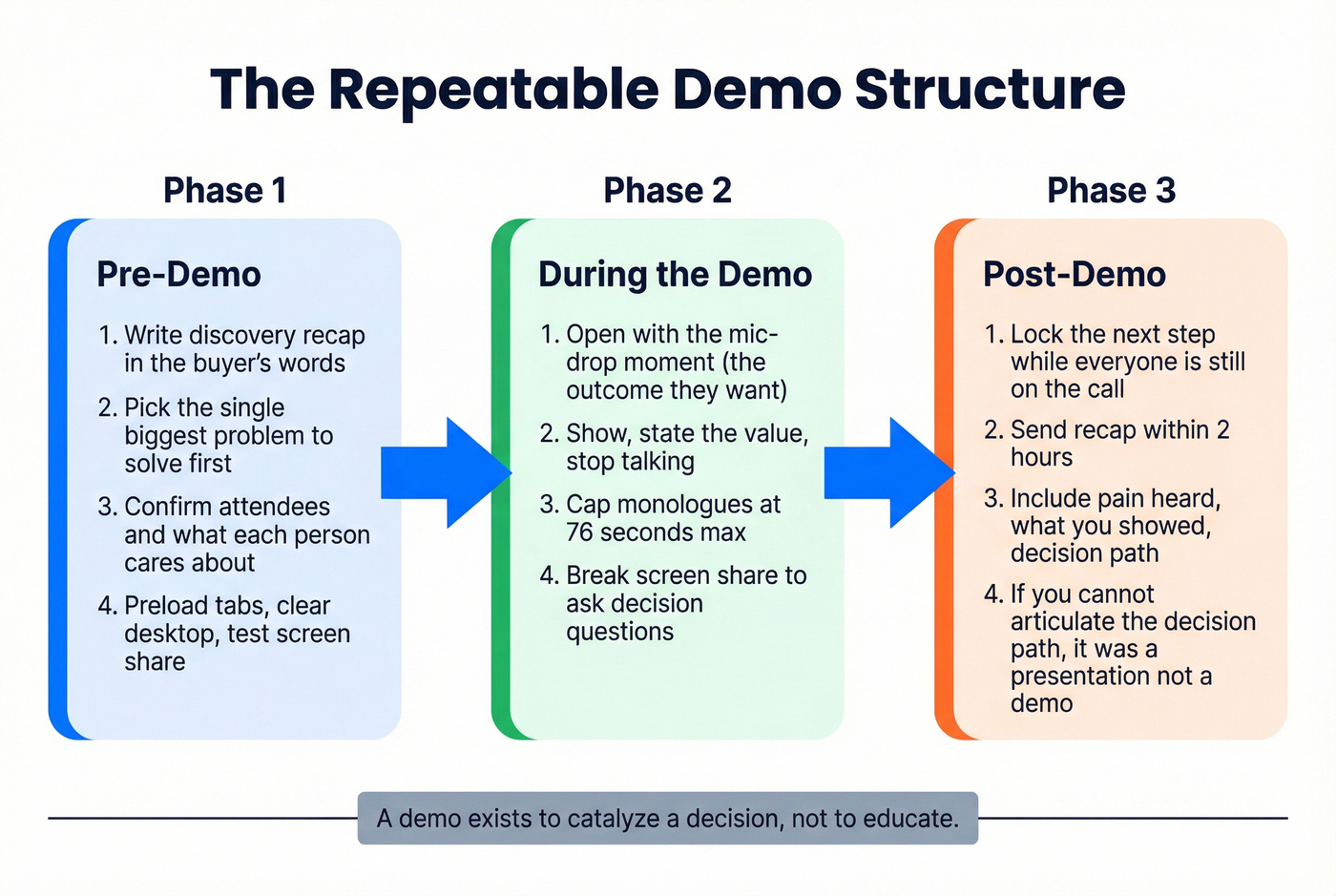

A demo exists to catalyze a decision, not to educate. The "demo trap" is real: show too little and you feel evasive, show too much and you drown the buyer in features. The way out is structure.

Pre-Demo

Ten minutes of prep saves ten hours of follow-up. Start by writing a one-paragraph discovery recap in the buyer's words - not yours, theirs. Then pick the single problem you'll solve first on-screen. Confirm who's attending and what each person cares about. Remove friction: preload tabs, clear your desktop, test screen share.

During the Demo

Open with the "mic-drop" moment: the outcome they want, not your navigation menu. Then run a tight loop - show, state the value, stop. Treat 76 seconds as a hard ceiling for uninterrupted talking. Break screen share every few minutes to ask a decision question: "Is this the workflow you're trying to replace?" or "What would block this internally?"

Let's be honest - this feels awkward at first. Reps tell us the silence after stopping feels like an eternity. It isn't. It's three seconds, and it's where the buyer starts selling themselves.

Post-Demo

Lock the next step while everyone's still on the call. Send a recap within two hours that includes the pain you heard, what you showed, and the decision path - who needs to approve what, by when. If you can't articulate the decision path, you didn't run a demo. You ran a presentation.

Demo Coaching Scorecard

Most teams track pipeline metrics but never score the demo itself. That's the gap. Use this weekly on recorded demos to turn benchmarks into coaching conversations.

| Criterion | What to Measure | Target |
|---|---|---|
| Discovery recap | Delivered at demo start | Yes |
| Longest monologue | Uninterrupted pitch length | < 76 seconds |
| Speaker switches | Switches per minute | ≥ 21% above team avg |
| Buyer questions | Unprompted questions received | ≥ 3 |
| Talk-to-listen ratio | Rep vs. buyer talk time | ≤ 65:35 |
| Biggest pain first | Top issue addressed early | Yes |
| Next step secured | Booked on the call | Yes |
| Contact data verified | Pre-demo data check done | Yes |

Score each criterion 1-5 after reviewing a recording. Any rep averaging below 3 doesn't need another workshop - they need two targeted fixes and a rewatch.
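The scoring rule can be sketched in a few lines of Python. This is one possible reading, not a prescribed method: it assumes the eight criteria are weighted equally and that the "two targeted fixes" are simply the two lowest-scoring criteria. The criterion keys and the `review_demo` function are hypothetical names.

```python
from statistics import mean

# Hypothetical keys for the eight scorecard criteria, in table order.
CRITERIA = [
    "discovery_recap",
    "longest_monologue",
    "speaker_switches",
    "buyer_questions",
    "talk_listen_ratio",
    "biggest_pain_first",
    "next_step_secured",
    "contact_data_verified",
]


def review_demo(scores, threshold=3.0, max_fixes=2):
    """Average the 1-5 scores and, if below threshold, pick the weakest criteria."""
    missing = [c for c in CRITERIA if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for c in CRITERIA:
        if not 1 <= scores[c] <= 5:
            raise ValueError(f"{c} must be scored 1-5, got {scores[c]}")
    average = mean(scores[c] for c in CRITERIA)
    needs_fixes = average < threshold
    # sorted() is stable, so ties fall back to scorecard order.
    fixes = sorted(CRITERIA, key=lambda c: scores[c])[:max_fixes] if needs_fixes else []
    return {"average": average, "needs_targeted_fixes": needs_fixes, "fixes": fixes}


# Made-up scores for one recorded demo.
result = review_demo({
    "discovery_recap": 2,
    "longest_monologue": 1,
    "speaker_switches": 3,
    "buyer_questions": 2,
    "talk_listen_ratio": 3,
    "biggest_pain_first": 4,
    "next_step_secured": 3,
    "contact_data_verified": 5,
})
```

For these made-up scores the average is 2.875, so the rep gets two fixes: monologue length and the discovery recap.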

One thing we've learned from running this internally: don't score more than two demos per rep per week. Managers burn out, reps feel surveilled, and the coaching quality drops. Two scored demos with focused feedback beats five with vague notes every time.

Before the Demo - Fix Your Data

We see this constantly: reps prep a sharp demo, then the prospect's email bounces or the phone number is dead. Chili Piper's analysis of roughly 4 million form submissions found 14.1% of form fills are unqualified from the start - wasted demo slots before a rep even opens their deck.

Skip this section if your bounce rate is already under 5%. But if it's higher, your demo training won't matter until you fix what's upstream.
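As a quick worked check of the 5% rule, here is a small Python sketch. It assumes you can pull sent and bounced counts from your email tool. The function names and sample numbers are illustrative only.

```python
def bounce_rate(sent, bounced):
    """Fraction of sent emails that bounced."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    if not 0 <= bounced <= sent:
        raise ValueError("bounced must be between 0 and sent")
    return bounced / sent


def data_needs_fixing(sent, bounced, threshold=0.05):
    """True when the bounce rate is not under the 5% threshold."""
    return bounce_rate(sent, bounced) >= threshold


# Made-up numbers: 30 bounces out of 400 sends is 7.5%.
needs_fix = data_needs_fixing(sent=400, bounced=30)
```

At 30 bounces on 400 sends, the list is above the threshold, so data cleanup comes before demo coaching.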

GreyScout cut rep ramp time from 8-10 weeks down to 4 weeks after fixing their data pipeline with Prospeo's 98% email accuracy and 7-day refresh cycle, so reps stopped prepping demos for contacts that don't exist. You can test this yourself with 75 verified emails free each month - enough to pressure-test your list quality immediately. (If you're diagnosing deliverability, start with bounce rate and work backward.)

The 67K-demo study proves structure wins deals. But the scorecard item most teams skip? Pre-demo data verification. Prospeo gives you 300M+ profiles with verified emails and direct dials so your AEs spend prep time on discovery recaps, not hunting for working contact info.

Reps who reach real buyers book 26% more meetings.

Demo Training Programs and Tools

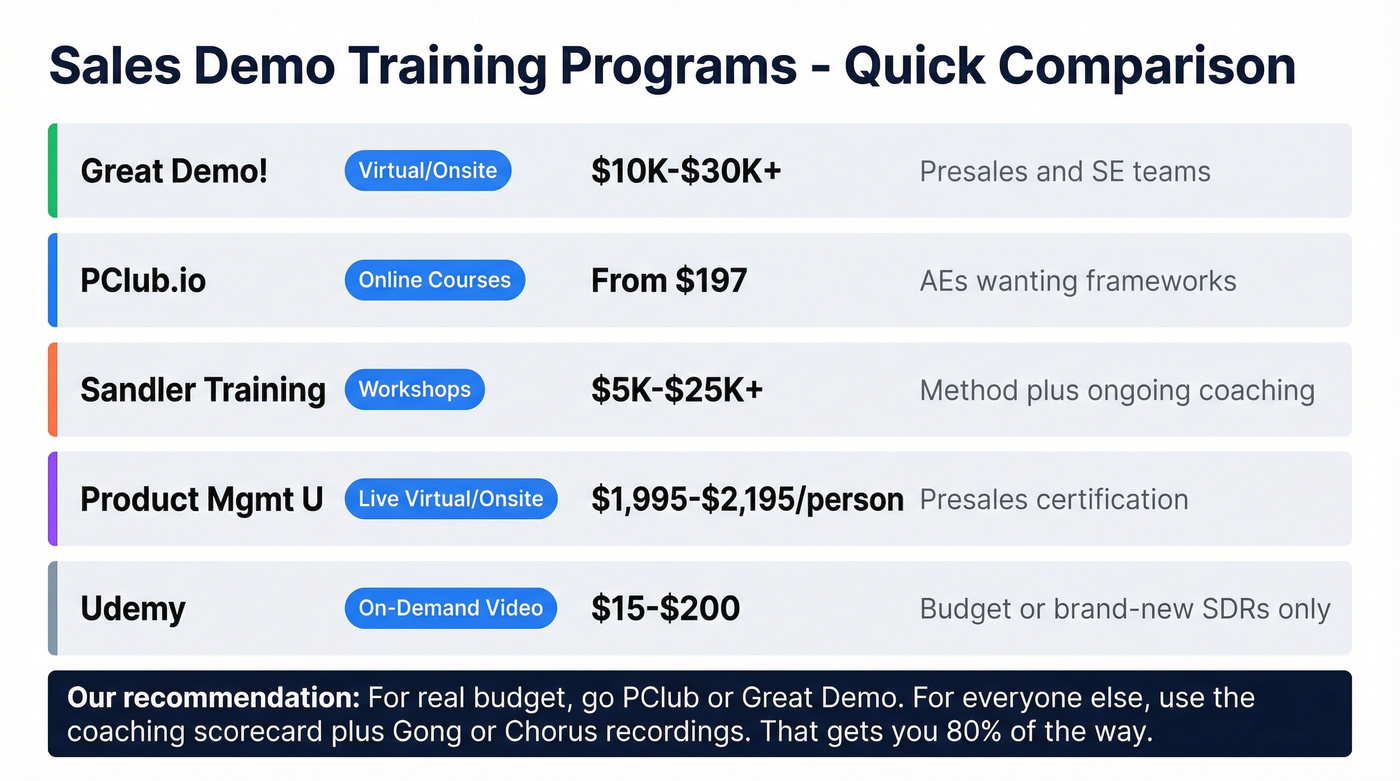

If you're picking one training investment, start with reviewing your own recorded demos against the scorecard. That's the fastest path to better calls because it's your market, your product, your objections. The consensus on r/sales backs this up - most reps who post about "demo training that actually worked" point to call reviews with their manager, not a $20K workshop.

If you want a tighter pre-call system, use a product demo checklist so reps stop winging it.

Training Programs

| Provider | Format | Price | Best For |
|---|---|---|---|
| Great Demo! | Virtual/onsite | ~$10K-$30K+ | Presales / SE teams |
| PClub.io | Online courses | From $197 | AEs wanting frameworks |
| Sandler Training | Workshops | ~$5K-$25K+ | Method + ongoing coaching |
| Product Mgmt U | Live virtual/onsite | $1,995-$2,195/person | Presales certification |
| Udemy | On-demand video | ~$15-$200 | Budget only |

Real talk: Udemy courses won't change your win rate. They're fine for a brand-new SDR who's never seen a demo, but if you're reading this article, you're past that. PClub and Great Demo! are where we'd point teams with real budget. For everyone else, the scorecard above and a Gong/Chorus subscription get you 80% of the way there.

If your team struggles to keep demos moving after the call, steal a few sales follow-up templates and standardize the recap.

Demo Software

| Tool | Starting Price | Enterprise |
|---|---|---|
| Storylane | Free / $40/mo | $1,200/mo |
| Navattic | Free / $500/mo | $2,000-$6,000+/mo |
| Consensus | $600/mo | $2,000-$8,000+/mo |
| Walnut | $750/mo | $3,000-$10,000+/mo |
| Demostack | ~$2,750/mo | $6,000-$15,000+/mo |
| Reprise | ~$2,620/mo | $6,000-$20,000+/mo |

Storylane and Navattic are solid entry points if you want interactive product tours for async demos. Demostack and Reprise make sense for enterprise teams running complex, multi-persona demos where you need sandboxed environments. For most mid-market teams, we'd start with Storylane's free tier and see if async demos actually shorten your sales cycle before committing to a $3K/month tool.

If you're selling six-figure deals, align the demo to your broader enterprise B2B sales motion (multi-threading, mutual plans, and tighter qualification).

FAQ

How long should a sales demo be?

Winning demos average 47 minutes based on the 67,149-demo analysis, but time isn't the lever. Keep monologues under 76 seconds, increase speaker switches, and secure a next step live on the call. A tight 30-minute demo with high interaction beats a rambling 60-minute one every time.

What's the best free resource for demo training?

Your own call recordings plus the coaching scorecard above. Review one demo per rep per week, grade it against the eight criteria, and coach one behavior change at a time. Supplement with free research from Gong's blog and tactical frameworks from PClub.

How do you measure demo quality?

Score the demo itself: longest monologue, speaker switches per minute, unprompted buyer questions, discovery recap delivery, and whether a next step was booked live. Track these weekly - if those metrics improve, win rates follow within a quarter.

Does product demo training actually improve win rates?

Yes, but only when tied to observable behavior. Teams that review real recordings and coach against specific benchmarks see measurable improvement within a single quarter. One-off workshops with no follow-up scoring rarely move the needle. Sandler's research confirms that reinforcement - not the initial training event - drives lasting behavior change.