Click to Open Rate: What It Means, Why It's Broken, and How to Fix It

Your click to open rate dropped from 18% to 9% over six months, and you didn't change a single thing about your emails. Same subject lines. Same CTAs. Same content. What changed wasn't your performance - it was the way opens get measured.

That distinction matters. Teams that chase a falling CTOR without understanding why it fell end up rewriting emails that were working fine. Here's what this metric actually tells you, where it breaks down, and what to do about it.

The Quick Version

- Formula: (Unique clicks / Unique opens) x 100

- 2026 realistic range: 10-20% for many marketing emails, depending on industry and Apple Mail share of your list

- All-industry average CTOR: 5.3% per HubSpot's latest benchmarks

- Honest verdict: CTOR is still useful for A/B testing creative and CTA performance. It's unreliable as an absolute benchmark post-Apple MPP because the denominator is inflated.

- Single most impactful fix: Clean your email list before optimizing anything else. Bad addresses tank deliverability, which distorts every metric downstream.

What Is CTOR?

Click to open rate measures how many people who opened your email actually clicked something inside it. Sometimes called click through open rate in industry shorthand, the formula is simple:

CTOR = (Unique clicks / Unique opens) x 100

Say you send a campaign that gets 200 unique opens and 30 unique clicks. That's a 15% CTOR.
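As a minimal sketch of the formula above, here is the same calculation in Python. The function name `ctor` is just an illustrative choice, not something from an email platform's API.

```python
def ctor(unique_clicks: int, unique_opens: int) -> float:
    """Return click to open rate as a percentage."""
    if unique_opens <= 0:
        raise ValueError("unique_opens must be positive")
    # Multiply before dividing to keep the arithmetic exact for round inputs.
    return 100 * unique_clicks / unique_opens


# The campaign from the example: 200 unique opens, 30 unique clicks.
print(ctor(30, 200))  # 15.0
```

Feeding it total (non-unique) counts would overstate the result, so deduplicate per recipient first.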

The reason this metric exists is isolation. Regular clickthrough rate blends everything together - deliverability, subject line performance, and content quality all affect the number. CTOR strips away the first two layers. It only looks at people who already opened, so it isolates whether your email body and CTA are doing their job. That's the theory, at least. In practice, the "opened" part of the equation has gotten messy.

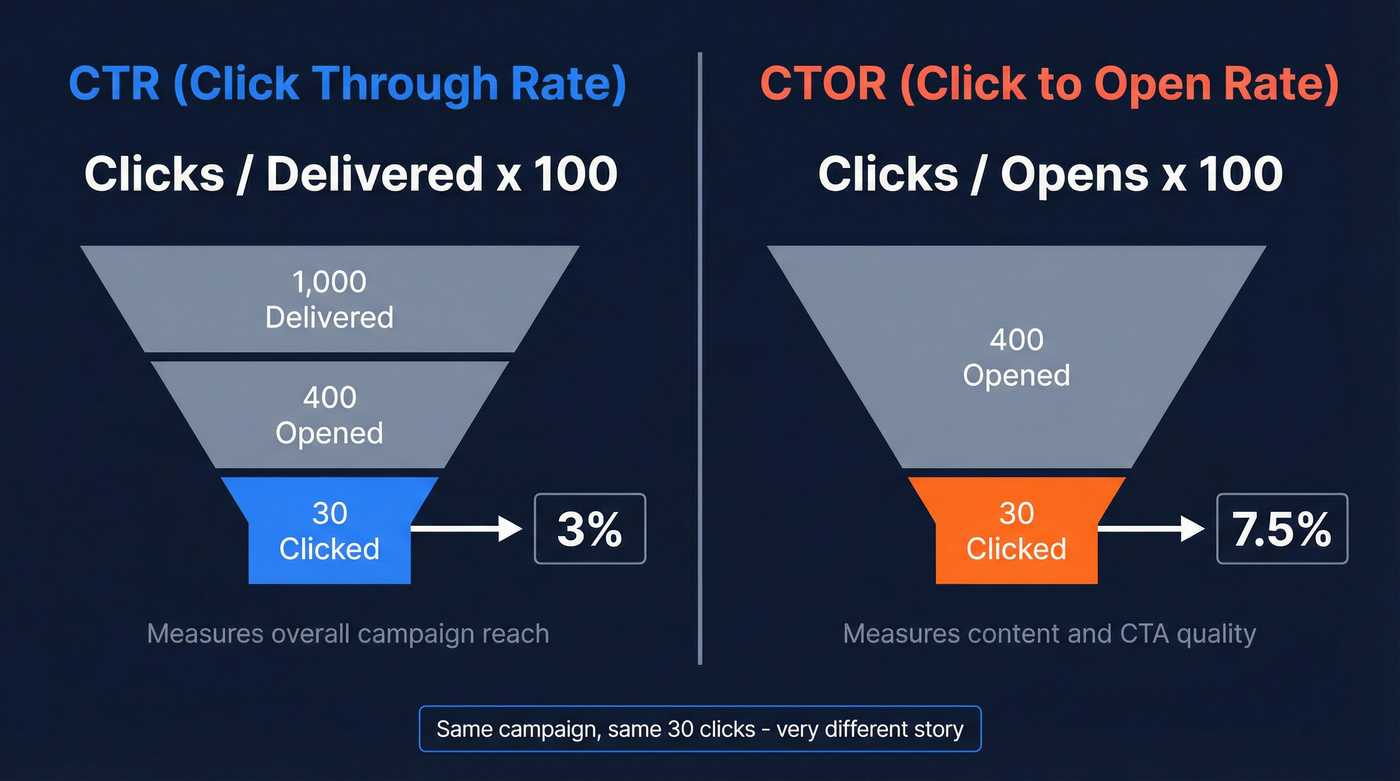

CTR vs CTOR: Which One to Use

These two get confused constantly, and they answer different questions.

| | CTR | CTOR |
|---|---|---|
| Formula | Clicks / Delivered | Clicks / Opens |
| Denominator | All delivered emails | Only opened emails |
| What it measures | Overall campaign reach | Content/CTA quality |
| Best used for | Campaign health | Creative diagnostics |

Here's a concrete example. You send 1,000 emails. 400 get opened. 30 get clicked.

- CTR = 30 / 1,000 = 3%

- CTOR = 30 / 400 = 7.5%

Same campaign, very different numbers. Your CTR tells you how the whole funnel performed. CTOR tells you whether the email content converted the people who actually saw it.
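To make the difference in denominators concrete, here is a small sketch that computes both rates from the same campaign counts. The helper name `campaign_rates` is hypothetical.

```python
def campaign_rates(delivered: int, unique_opens: int, unique_clicks: int) -> tuple[float, float]:
    """Return (CTR, CTOR) as percentages for one campaign."""
    if delivered <= 0 or unique_opens <= 0:
        raise ValueError("delivered and unique_opens must be positive")
    ctr = 100 * unique_clicks / delivered      # denominator: all delivered emails
    ctor = 100 * unique_clicks / unique_opens  # denominator: opened emails only
    return ctr, ctor


# 1,000 delivered, 400 opened, 30 clicked.
ctr, ctor = campaign_rates(1000, 400, 30)
print(ctr, ctor)  # 3.0 7.5
```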

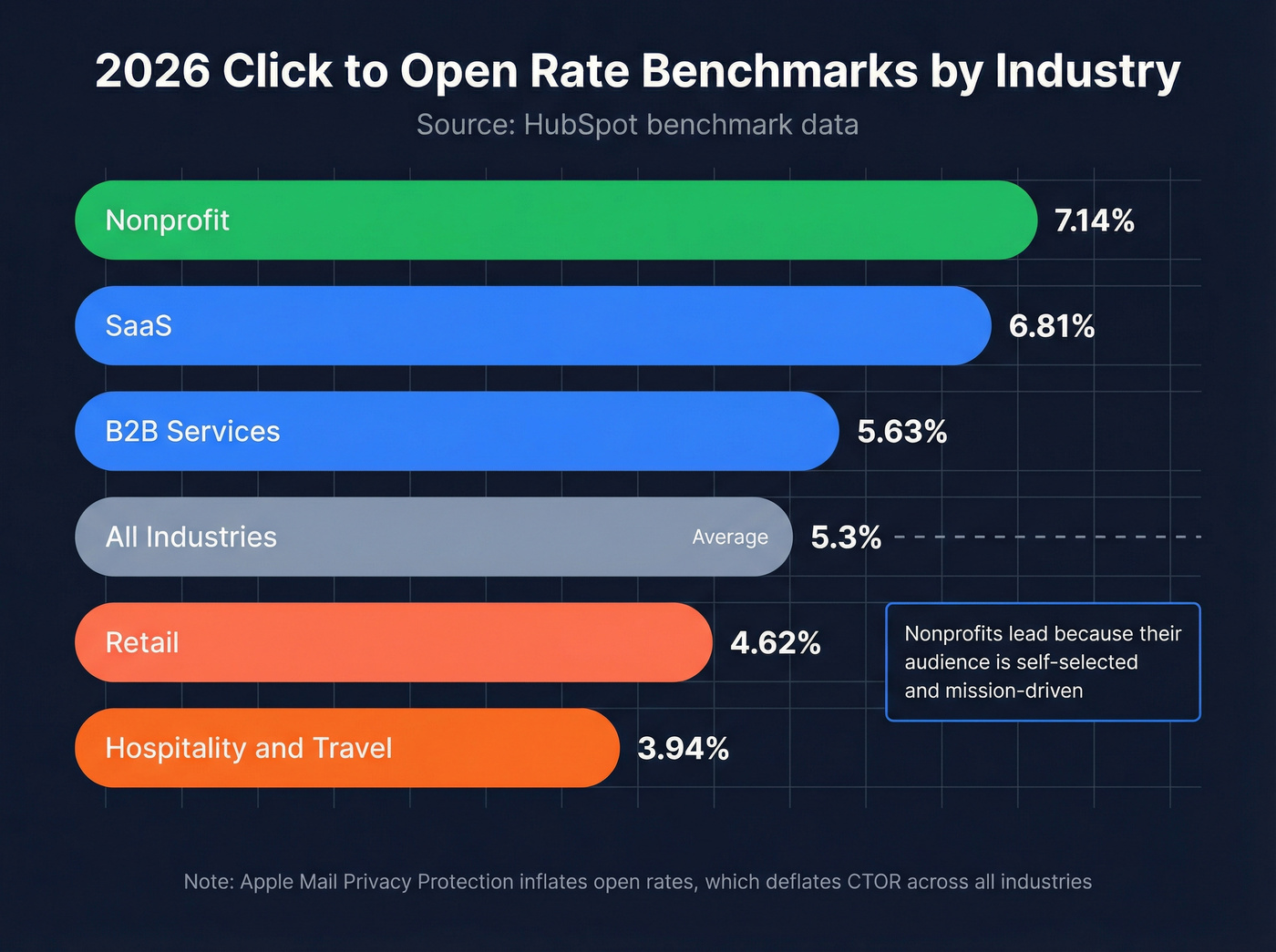

2026 CTOR Benchmarks by Industry

Let's ground this in real numbers. HubSpot's latest benchmarks give us the clearest CTOR-by-industry picture:

| Industry | Open Rate | CTR | CTOR |
|---|---|---|---|
| All industries | 42.35% | 2.3% | 5.3% |
| Retail | 38.58% | 1.34% | 4.62% |
| B2B Services | 39.48% | 2.21% | 5.63% |
| Nonprofit | 46.49% | 2.66% | 7.14% |
| SaaS | 38.14% | 1.19% | 6.81% |
| Hospitality & Travel | 45.21% | 2.43% | 3.94% |

A few things jump out. Nonprofits lead on CTOR because their audience is self-selected and mission-driven - people open those emails because they care. SaaS CTOR looks decent at 6.81%, but that's partly because SaaS open rates are lower, meaning fewer casual opens inflate the denominator.

Mailchimp's dataset of billions of emails shows a lower all-user open rate of 35.63%. The gap between that and HubSpot's 42.35% shows how much reported open rates vary from one dataset to the next, and Apple MPP inflation is a likely contributor.

For a quick gut-check by email category, Pushwoosh's benchmark ranges for CTR are a useful reality check: welcome emails land around 16-26%, triggered automations hit 5-10%+, newsletters sit at roughly 3-4%, and promotional emails hover between 1-3%.

Litmus and Validity position 10-15% as a healthy CTOR target. That's a reasonable goal for well-segmented lists, but the average of 5.3% tells you most senders aren't there. The biggest reason? Apple Mail Privacy Protection is inflating the denominator for nearly half of all email clients.

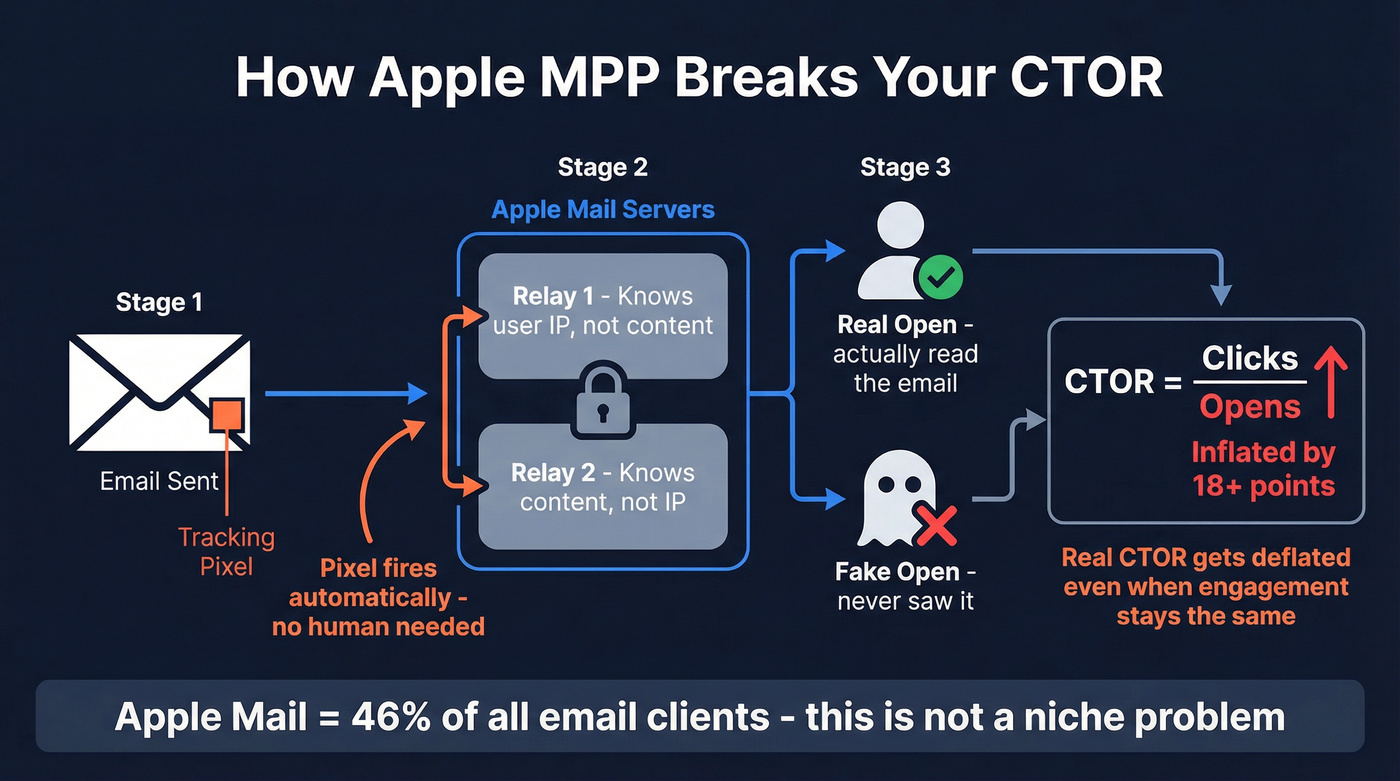

Why CTOR Is Partially Broken

Apple Mail Privacy Protection, introduced with iOS 15, fundamentally changed how opens get tracked. When an Apple Mail user receives an email, Apple's servers preload the content - including tracking pixels - through a two-relay proxy system. One relay knows the user's IP but not the content. The other knows the content but not the IP. Every email appears "opened" whether the human actually looked at it or not.

Apple Mail accounts for roughly 46% of email client usage. That's not a niche edge case.

The impact is measurable. HubSpot cites a study of 80,000+ email accounts that found open rates increased by 18 points, jumping above 40%, within six months of MPP's rollout. beehiiv documented newsletters seeing open rates leap from 28% to 55% overnight - same content, same list, completely different measurement.

This creates a double distortion. Inflated opens push the denominator up, which deflates your CTOR even when real engagement hasn't changed. Meanwhile, security scanners can pre-click links in emails before a human ever sees them, inflating the numerator. The metric gets pulled in both directions by non-human activity. We've seen teams panic over a CTOR drop that was entirely explained by a shift in their list's Apple Mail composition - no content change needed, just a measurement artifact.
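A rough sketch of the denominator side of that distortion follows. It rests on simplifying assumptions that are not from any dataset: every Apple Mail recipient registers as an open, the human open rate is a hypothetical 20% for everyone, and all clicks are human. The function names are illustrative only.

```python
def true_ctor(delivered: int, human_open_rate: float, human_clicks: int) -> float:
    """CTOR if only real human opens were counted."""
    true_opens = round(delivered * human_open_rate)
    return 100 * human_clicks / true_opens


def reported_ctor(delivered: int, apple_share: float,
                  human_open_rate: float, human_clicks: int) -> float:
    """CTOR as a platform would report it when MPP preloads tracking pixels."""
    apple_recipients = round(delivered * apple_share)
    other_recipients = delivered - apple_recipients
    # Assumption: every Apple Mail recipient counts as an open,
    # while non-Apple opens still reflect real human behaviour.
    reported_opens = apple_recipients + round(other_recipients * human_open_rate)
    return 100 * human_clicks / reported_opens


# Hypothetical send: 1,000 delivered, 46% Apple Mail, 30 human clicks.
print(round(true_ctor(1000, 0.20, 30), 2))            # 15.0
print(round(reported_ctor(1000, 0.46, 0.20, 30), 2))  # 5.28
```

Under these assumptions, identical human behaviour reads as 15% or about 5.3% depending only on how opens are counted. Raising `apple_share` pushes the reported figure lower still.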

Here's the thing: CTOR was the gold standard for email content measurement for a decade. It isn't anymore. It's still useful for relative A/B comparisons, but if you're using it as your primary email KPI in 2026, you're optimizing against a broken ruler. Revenue per recipient is the metric that actually correlates with business outcomes.

How to Interpret Your Results

Rather than obsessing over a single number, use CTOR as a diagnostic signal alongside other metrics:

| Pattern | Likely Diagnosis | Fix |
|---|---|---|
| High opens, low CTOR | Content/CTA mismatch | Fix body/CTA alignment |
| Low opens, high CTOR | Subject line or deliverability issue | Test subjects; check reputation |
| High clicks, low conversions | Landing page problem | Optimize post-click experience |
| CTOR 20%+ consistently | Strong - or bot-inflated | Check click timing and user-agents |

Under 10% CTOR suggests a content or CTA mismatch. 10-15% is healthy. Above 20% is either excellent or suspicious - investigate before celebrating.
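Those bands can be sketched as a simple lookup. The thresholds come from the text above, but the label for the 15-20% band is an assumption, since the text does not name it.

```python
def interpret_ctor(ctor_pct: float) -> str:
    """Map a CTOR percentage to the rough diagnostic bands described above."""
    if ctor_pct < 10:
        return "possible content/CTA mismatch"
    if ctor_pct <= 15:
        return "healthy"
    if ctor_pct < 20:
        # Assumption: the article does not label this band explicitly.
        return "above target"
    return "excellent or bot-inflated: investigate"


for value in (7.5, 12.0, 17.0, 24.0):
    print(value, "->", interpret_ctor(value))
```

Treat the output as a prompt for investigation, not a verdict. Your own baseline matters more than fixed cutoffs.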

The most practical advice? Track your own 90-day baseline. The consensus across email marketing communities and r/emailmarketing threads is that CTOR works best for relative A/B comparisons, not absolute benchmarking - and practitioners are right. A 7% rate that's stable and trending up is more meaningful than chasing someone else's 15%.
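One way to compute that baseline, sketched under the assumption that you can export per-send tuples of (send date, unique clicks, unique opens). The export format and the function name `baseline_ctor` are hypothetical.

```python
from datetime import date, timedelta
from typing import Optional


def baseline_ctor(sends: list[tuple[date, int, int]], as_of: date,
                  window_days: int = 90) -> Optional[float]:
    """Pooled CTOR (%) over the trailing window, or None if there were no opens."""
    window_start = as_of - timedelta(days=window_days)
    clicks = opens = 0
    for sent_on, unique_clicks, unique_opens in sends:
        if window_start < sent_on <= as_of:
            clicks += unique_clicks
            opens += unique_opens
    if opens == 0:
        return None
    # Pooling clicks and opens weights each send by its volume.
    return 100 * clicks / opens


history = [
    (date(2025, 6, 1), 90, 300),   # outside the 90-day window, ignored
    (date(2026, 1, 10), 14, 200),
    (date(2026, 2, 10), 16, 200),
]
print(baseline_ctor(history, as_of=date(2026, 3, 1)))  # 7.5
```

Pooling rather than averaging per-send percentages keeps one tiny send from skewing the baseline.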

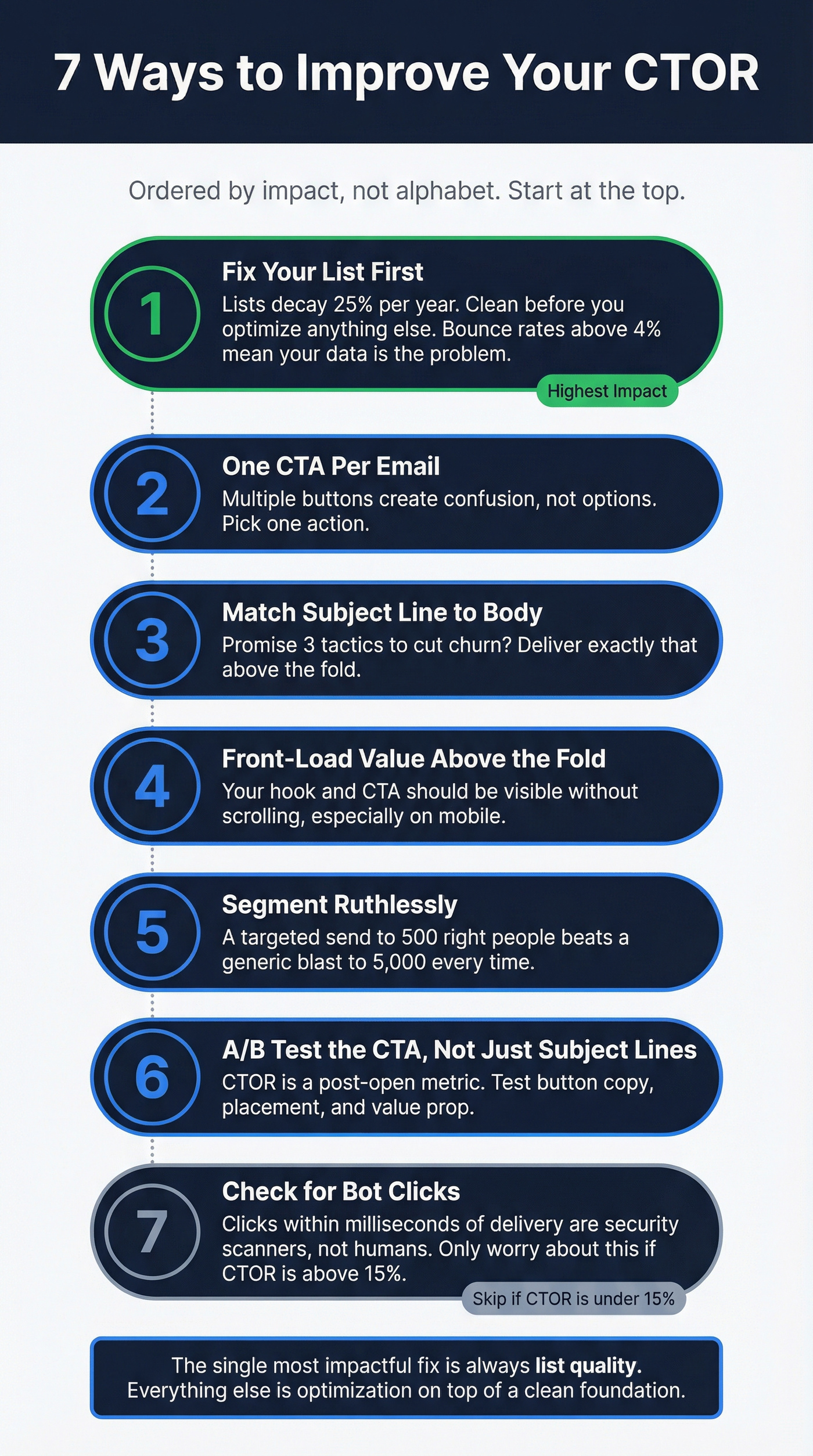

How to Improve Your CTOR

These are ordered by impact, not alphabetical convenience. Start at the top.

1. Fix Your List First

Every metric downstream - open rate, CTOR, conversion rate - is only as reliable as your deliverability. And deliverability starts with list quality. Email lists decay at roughly 25% per year as people change jobs, abandon addresses, and domains go stale. If you're running campaigns against a list you haven't cleaned in six months, skip every other optimization on this page until you've dealt with that.
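To put the six-month figure in perspective, here is a quick estimate of how much of a list goes stale over a given period. It assumes the roughly 25% annual decay compounds smoothly across the year, which is a simplification. Real decay is lumpier.

```python
def decayed_fraction(annual_decay: float, months: float) -> float:
    """Estimated share of a list gone stale after `months`, assuming compounding decay."""
    if not 0 <= annual_decay < 1:
        raise ValueError("annual_decay must be in [0, 1)")
    return 1 - (1 - annual_decay) ** (months / 12)


list_size = 10_000  # hypothetical list
stale = decayed_fraction(0.25, 6)
print(f"{stale:.1%} stale after 6 months, about {round(list_size * stale)} addresses")
```

On these assumptions, a list left uncleaned for six months has a bit over 13% of its addresses gone bad.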

Prospeo's 5-step verification catches invalid addresses, spam traps, and honeypots, delivering 98% email accuracy on a 7-day refresh cycle. The free tier covers 75 verifications per month - enough to audit a segment and see the difference. Meritt cut their bounce rate from 35% to under 4% after running their list through verification, which made every downstream metric actually reliable.

2. One CTA Per Email

Multiple CTAs split attention and dilute clicks. Pick the single action you want the reader to take and make it unmissable. This sounds obvious, but we still see campaigns with three buttons pointing to three different pages. That's not giving options - it's creating confusion.

3. Match Your Subject Line to Your Body

A subject line that promises "3 tactics to cut churn" needs to deliver exactly that above the fold. Bait-and-switch kills engagement because people open, see something different, and bounce without clicking anything.

4. Front-Load Value Above the Fold

Your hook and primary CTA should be visible immediately, without forcing a scroll. Mobile readers especially won't dig for it.

5. Segment Ruthlessly

A generic blast to your entire list will always underperform a targeted send to 500 people who match the message. Segment by engagement recency, role, industry, or buying stage. For teams doing outbound, this is where having accurate contact data matters most - you can't segment by role if half your job titles are outdated.

6. A/B Test the CTA, Not Just the Subject Line

Most teams only test subject lines. But CTOR is a post-open metric - the subject line is already done. Test button copy, placement, color, and the value proposition around the CTA. We've seen a single word change on a button ("Get the report" vs "Download now") swing CTOR by 4 points.

7. Check for Bot Clicks

If your CTOR looks suspiciously high, analyze click timing. Clicks that happen within milliseconds of delivery are typically security scanners, not humans. Filter by user-agent patterns and look for clusters of instant clicks from the same IP ranges. Skip this step if your CTOR is under 15% - bots aren't your problem at that level.
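A minimal sketch of the timing check, assuming you can export click events with the delay since delivery and the source IP. The `Click` record, the one-second cutoff, and the grouping by the first three IP octets are all illustrative assumptions, not a standard. Tune them against your own data.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Click:
    recipient: str
    seconds_after_delivery: float
    ip: str


def split_clicks(clicks: list[Click], min_human_delay: float = 1.0):
    """Separate clicks that arrived implausibly fast from the rest."""
    suspected, human = [], []
    for click in clicks:
        if click.seconds_after_delivery < min_human_delay:
            suspected.append(click)
        else:
            human.append(click)
    return suspected, human


def ip_clusters(clicks: list[Click]) -> Counter:
    """Count clicks per IPv4 /24-style prefix (first three octets)."""
    return Counter(click.ip.rsplit(".", 1)[0] for click in clicks)


events = [
    Click("a@example.com", 0.05, "203.0.113.10"),
    Click("b@example.com", 0.08, "203.0.113.11"),
    Click("c@example.com", 340.0, "198.51.100.7"),
]
suspected, human = split_clicks(events)
print(len(suspected), len(human))  # 2 1
print(ip_clusters(suspected))      # Counter({'203.0.113': 2})
```

Recomputing CTOR from the `human` list only gives a second number to compare against the platform's reported figure.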

What to Track Alongside CTOR

CTOR is still useful for one thing: relative comparisons. If Version A of your email gets a 22% CTOR and Version B gets 14%, Version A's content is resonating better. That signal holds even with MPP distortion, because both versions are affected equally.
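Before declaring a winner, it helps to check that the gap is larger than noise. One common approach, which is our suggestion and not something prescribed above, is a two-proportion z-test. The sketch below uses only the Python standard library, and the 500 opens per variant are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def ctor_z_test(clicks_a: int, opens_a: int, clicks_b: int, opens_b: int):
    """Two-proportion z-test on CTOR. Returns (z, two-sided p-value)."""
    p_a = clicks_a / opens_a
    p_b = clicks_b / opens_b
    pooled = (clicks_a + clicks_b) / (opens_a + opens_b)
    if pooled in (0, 1):
        raise ValueError("test is undefined when nobody or everybody clicked")
    standard_error = sqrt(pooled * (1 - pooled) * (1 / opens_a + 1 / opens_b))
    z = (p_a - p_b) / standard_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Version A: 110 clicks / 500 opens (22%). Version B: 70 clicks / 500 opens (14%).
z, p = ctor_z_test(110, 500, 70, 500)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With small open counts the same 8-point gap can easily be noise, so run the numbers before acting on a test.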

But don't use CTOR as your north star. Revenue per recipient is a better one. We've seen cases where the lower-CTOR email generated 3x more revenue because it attracted higher-intent clicks. Vanity engagement metrics mislead. Klaviyo's dataset of 325B+ emails shows abandoned cart automations averaging $3.65 revenue per recipient versus $0.11 for standard campaigns - a gap of roughly 33x that CTOR alone would never reveal.
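Here is a small sketch of why the two metrics can disagree. The two emails and their revenue figures are hypothetical, and only the $3.65 and $0.11 averages come from the Klaviyo figures cited above.

```python
def revenue_per_recipient(revenue: float, recipients: int) -> float:
    """Attributed revenue divided by the number of recipients."""
    if recipients <= 0:
        raise ValueError("recipients must be positive")
    return revenue / recipients


def ctor(unique_clicks: int, unique_opens: int) -> float:
    return 100 * unique_clicks / unique_opens


# Hypothetical emails, each sent to 1,000 recipients with 400 opens.
email_a = {"clicks": 88, "revenue": 500.0}   # 22% CTOR
email_b = {"clicks": 56, "revenue": 1500.0}  # 14% CTOR

for name, email in (("A", email_a), ("B", email_b)):
    rpr = revenue_per_recipient(email["revenue"], 1000)
    print(name, f"CTOR {ctor(email['clicks'], 400):.0f}%", f"RPR ${rpr:.2f}")

# Ratio between the two cited averages.
print(round(3.65 / 0.11, 1))  # 33.2
```

Email A wins on CTOR while email B earns three times as much per recipient.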

Track these alongside your click to open rate for a complete picture: reply rate, conversion rate, revenue per email sent, and retention cohorts over time. Consumers now engage with an average of eight channels per brand. Email CTOR is one signal in a much bigger picture.

If you want a broader framework for email metrics, see Open Rate vs Click Rate and how teams are adapting post-MPP.

FAQ

What is a good click to open rate?

10-15% is the standard target per Litmus and Validity, while the all-industry average sits at 5.3%. Track your own 90-day baseline rather than chasing a universal number - list composition and Apple Mail share heavily influence results.

How do you calculate CTOR?

Divide unique clicks by unique opens, then multiply by 100. Always use unique counts - totals inflate the number with repeat actions from the same recipient.

Is CTOR the same as CTR?

No. CTR uses all delivered emails as the denominator, while CTOR uses only opened emails. CTR measures overall campaign reach; CTOR isolates content and CTA quality. Both are useful for different diagnoses.

Does Apple Mail Privacy Protection affect CTOR?

Yes - significantly. MPP preloads tracking pixels via proxy servers, inflating open counts for Apple Mail users, who make up roughly 46% of email client usage. This deflates CTOR regardless of real engagement, making absolute benchmarks unreliable.

How can I make sure my CTOR reflects real engagement?

Start by verifying your email list to remove invalid addresses and spam traps. Clean data ensures bounces aren't distorting deliverability, which cascades into every engagement metric. From there, filter out bot clicks by analyzing click timing, and use CTOR for relative A/B comparisons rather than absolute targets.