Data Collection Best Practices: The 2026 Playbook With Numbers, Not Platitudes

Every guide on data collection best practices tells you to "define clear objectives." That's like telling a chef to "use good ingredients." Here's what they leave out: the dollar cost when you don't, the KPIs that prove you did, and the compliance checklist that keeps you out of court.

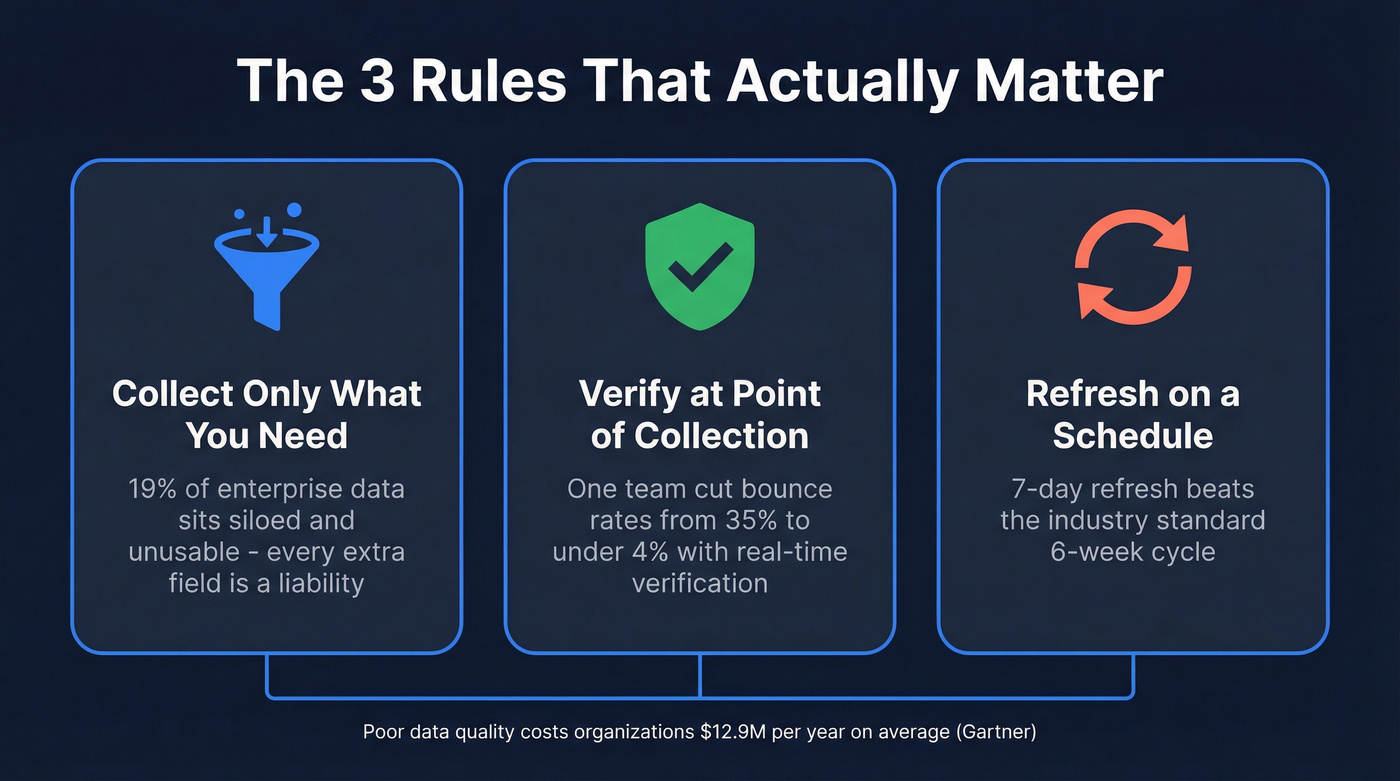

Over a quarter of organizations lose more than $5M annually to poor data quality. Gartner pegs the average at $12.9M per organization per year. And Gartner predicted that at least 30% of GenAI projects would be abandoned after proof of concept by the end of 2025, with poor data quality among the main reasons. Data quality hasn't gotten easier since.

What You Actually Need

You don't need ten rules. You need three you follow every single time:

- Collect only what you need. Every unnecessary field is a liability, a compliance risk, and a maintenance burden.

- Verify at the point of collection. Catch bad data on entry instead of cleaning it up downstream.

- Refresh on a schedule. Stale data bounces. Fresh data books meetings.

Everything below adds KPIs, dollar figures, and compliance checklists that make these three rules stick.

Practices That Move the Needle

Define Your Objective First

The SurveyCTO process framework gets this right: goals first, then methods, then QA. We've seen teams spend six months building a 40-field intake form, only to discover sales reps skip everything after field 5. Before you build a form, write a query, or buy a database, answer one question: what decision will this data inform?

If you can't answer that in one sentence, you're not ready to collect. Inventory your current sources, formats, and volume before setting new collection goals - you'll often find you already have half of what you need buried in a tool nobody checks.

Collect Only What You Need

Do this: Define a consumer - a report, workflow, or model - for every field you collect. If nobody's using it, stop collecting it.

Avoid this: hoarding fields "just in case." About 19% of enterprise data sits siloed and unusable, and 70% of leaders believe valuable insights are trapped in data they can't access. That's not a storage problem. It's a collection problem. Data minimization isn't just a GDPR/CCPA requirement - it's operational discipline that saves you from drowning in fields nobody reads.

Standardize Definitions and Formats

Here's the thing: the average enterprise runs 897 applications, and only 29% of them are connected. The usual culprit isn't technology - it's definitions. What "MQL" means to marketing and what it means to sales are often two different things, and that mismatch cascades through every downstream report and dashboard.

Before you integrate anything, get your teams to agree on what each field means, how it's formatted, and who owns it.
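One lightweight way to make those agreements checkable is a shared data dictionary where every field carries a definition, a format, and an owner. This is a minimal sketch, assuming the dictionary lives as plain Python objects; `FieldSpec`, `missing_agreements`, and the sample entries are hypothetical, and the check only catches blank attributes, not disagreements hiding behind vague wording.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    """One entry in a shared data dictionary that every team has agreed to."""
    name: str
    definition: str  # plain-language meaning, e.g. what counts as an MQL
    fmt: str         # expected format, e.g. "ISO 8601 date (YYYY-MM-DD)"
    owner: str       # team or person accountable for the field


def missing_agreements(specs: list[FieldSpec]) -> dict[str, list[str]]:
    """Map each field name to the attributes that are still blank."""
    gaps: dict[str, list[str]] = {}
    for spec in specs:
        blank = [attr for attr in ("definition", "fmt", "owner")
                 if not getattr(spec, attr).strip()]
        if blank:
            gaps[spec.name] = blank
    return gaps


# Hypothetical entries for illustration.
DICTIONARY = [
    FieldSpec("mql_date",
              "Date the lead first met the MQL criteria signed off by both marketing and sales",
              "ISO 8601 date (YYYY-MM-DD)", "RevOps"),
    FieldSpec("lead_source", "Channel that created the record",
              "One value from the approved source list", ""),
]

print(missing_agreements(DICTIONARY))
```

Any field that shows up in the output isn't ready to integrate yet.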

Automate Collection, Kill Manual Entry

Ask any data engineer what kills their pipeline, and the answer is almost always upstream collection, not downstream modeling. A human typing company names into a spreadsheet will produce "Salesforce," "salesforce.com," "SFDC," and "Salesforce Inc." in the same column. A Salesforce report found that 84% of people who work with data daily say their data strategy needs a significant overhaul before AI can deliver value.

Automate ingestion wherever possible. And assign a clear automation owner - someone accountable for pipeline health, not just pipeline existence. If you're mapping this into your RevOps tech stack, document ownership the same way you document SLAs.
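Normalizing at ingestion, rather than cleaning later, can be as simple as mapping known variants like "salesforce.com" and "SFDC" onto one canonical value. The sketch below assumes a hand-maintained alias table (`CANONICAL`, hypothetical) and strips one trailing domain or legal suffix before lookup; it illustrates the idea and is not a full entity-resolution approach.

```python
import re

# Hypothetical alias table: lowercase key -> canonical company name.
CANONICAL = {
    "salesforce": "Salesforce",
    "sfdc": "Salesforce",
}

# Strip one trailing web domain or legal suffix before lookup (illustrative, not exhaustive).
_TRAILING_NOISE = re.compile(r"(\.com|\.io|,?\s+(inc|llc|ltd|corp)\.?)$", re.IGNORECASE)


def normalize_company(raw: str) -> str:
    """Return the canonical name for a known alias, else the trimmed input unchanged."""
    cleaned = raw.strip()
    key = _TRAILING_NOISE.sub("", cleaned).strip().lower()
    return CANONICAL.get(key, cleaned)


for variant in ["Salesforce", "salesforce.com", "SFDC", "Salesforce Inc."]:
    print(f"{variant!r:20} -> {normalize_company(variant)}")
```

Unknown names pass through unchanged instead of being guessed at, so a miss shows up as a new value to review rather than a silent mis-merge.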

Verify at the Point of Collection

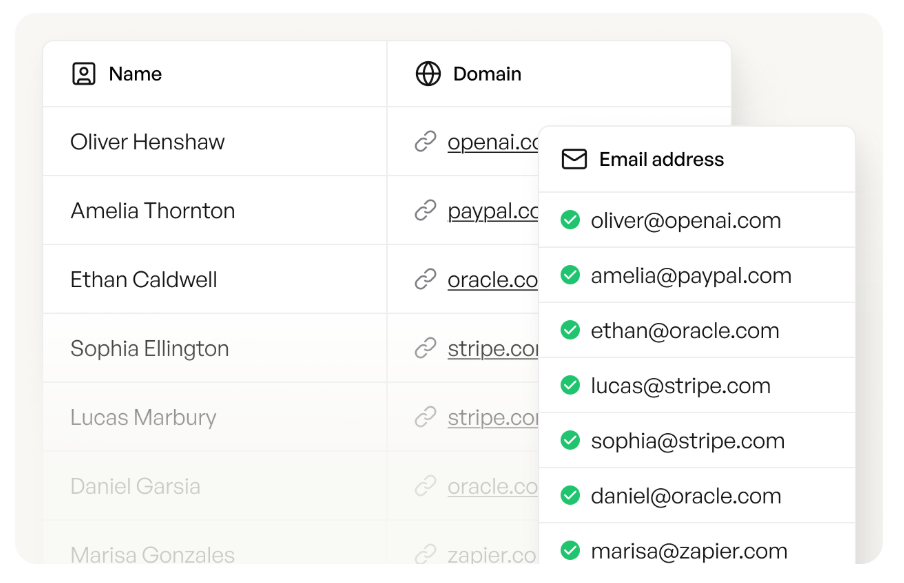

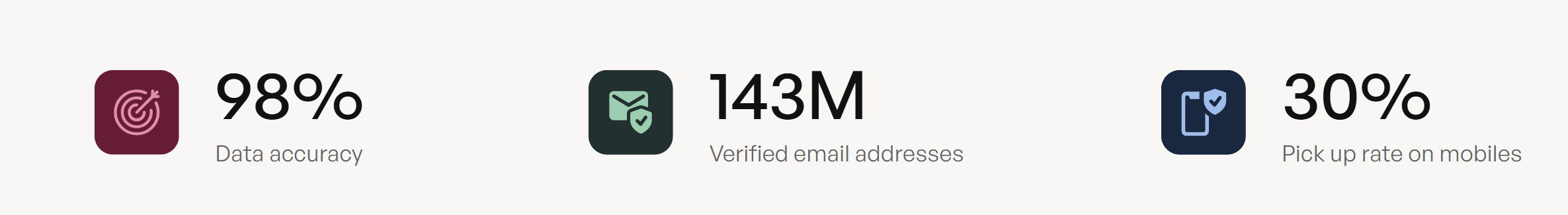

Pilot testing and schema validation catch structural errors, but they don't catch stale or fabricated data. For B2B contact data, verification at the point of collection is non-negotiable. Prospeo runs every record through a 5-step verification process, including catch-all handling, spam-trap removal, and honeypot filtering, delivering 98% email accuracy. One customer saw bounce rates drop from 35% to under 4% after switching away from unverified providers.
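Validation (is the format right?) and verification (is the record real and deliverable?) can be enforced together as a single gate on every incoming record. This is a minimal sketch, assuming records arrive as dicts and that `verify` is a hypothetical callable wrapping whatever email-verification service you use; no specific vendor API is implied, and the regex is a deliberately simple syntax check, not a full RFC 5322 parser.

```python
import re
from typing import Callable

# Deliberately simple syntax check: something@domain.tld with no spaces.
EMAIL_SYNTAX = re.compile(r"^[^@\s]+@[^@\s]+\.[A-Za-z]{2,}$")
REQUIRED_FIELDS = ("email", "company")


def accept_record(record: dict[str, str],
                  verify: Callable[[str], bool]) -> tuple[bool, str]:
    """Gate a record at entry: required fields, then syntax, then deliverability."""
    for field in REQUIRED_FIELDS:
        if not record.get(field, "").strip():
            return False, f"missing {field}"
    email = record["email"].strip()
    if not EMAIL_SYNTAX.match(email):
        return False, "invalid email syntax"        # validation failure
    if not verify(email):
        return False, "email failed verification"   # verification failure
    return True, "accepted"


def fake_verify(email: str) -> bool:
    """Stand-in for a real verification call; treats one address as deliverable."""
    return email in {"ana@example.com"}


print(accept_record({"email": "ana@example.com", "company": "Acme"}, fake_verify))
print(accept_record({"email": "not-an-email", "company": "Acme"}, fake_verify))
```

Ordering the checks cheapest-first means the verification call only runs on records that are already well-formed.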

If you're evaluating vendors, start with a verified contact database and an email verifier benchmark before you buy.

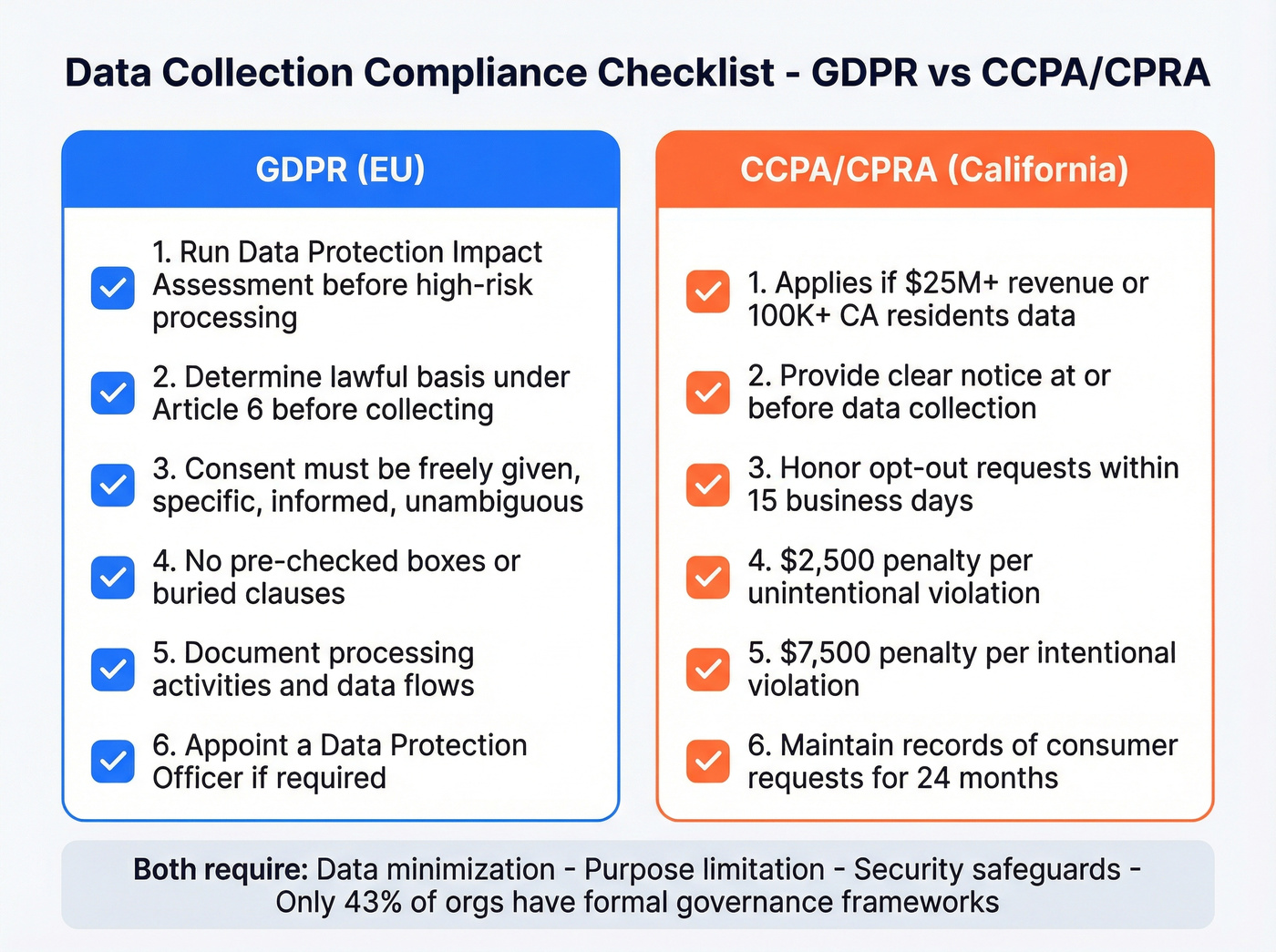

Build Compliance In

Compliance isn't a checkbox at the end. It's architecture at the beginning. Only 43% of organizations report having formal governance frameworks, which means the majority are flying blind on regulatory exposure.

If you're collecting B2B contact data at scale, align your process with B2B compliance requirements early, not after the first complaint.

GDPR essentials: Run a Data Protection Impact Assessment (Article 35) before high-risk processing. Determine your lawful basis under Article 6 before collecting a single record. If that basis is consent, it must be freely given, specific, informed, and unambiguous - no pre-checked boxes, no buried clauses.

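CCPA/CPRA: Applies to for-profit businesses operating in California that have more than $25M in annual gross revenue, buy, sell, or share the personal information of 100,000+ California consumers or households, or earn 50%+ of annual revenue from selling or sharing personal information. Penalties run up to $2,500 per violation and up to $7,500 per intentional violation, and the dollar figures are adjusted periodically for inflation. Those add up fast when you're processing records at scale.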
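To see how fast those penalties add up, it's worth running the arithmetic once. The sketch below encodes only the revenue and consumer thresholds and the two penalty tiers, using the statute's "in excess of $25M" wording for revenue; the function names are hypothetical, the screen ignores the other applicability criteria, and the exposure figure assumes each affected record counts as a separate violation, which is an upper-bound assumption rather than how any particular enforcement action would be calculated. Not legal advice.

```python
# Dollar figures are adjusted periodically for inflation; check current values.
REVENUE_THRESHOLD_USD = 25_000_000
CONSUMER_THRESHOLD = 100_000
FINE_PER_VIOLATION_USD = 2_500
FINE_PER_INTENTIONAL_VIOLATION_USD = 7_500


def ccpa_may_apply(annual_gross_revenue_usd: int, ca_consumers: int) -> bool:
    """Rough screen on two thresholds only: revenue over $25M, or 100,000+ consumers."""
    return (annual_gross_revenue_usd > REVENUE_THRESHOLD_USD
            or ca_consumers >= CONSUMER_THRESHOLD)


def max_exposure_usd(violations: int, intentional: bool = False) -> int:
    """Upper bound on penalties if each affected record is a separate violation."""
    per_violation = (FINE_PER_INTENTIONAL_VIOLATION_USD if intentional
                     else FINE_PER_VIOLATION_USD)
    return violations * per_violation


print(ccpa_may_apply(30_000_000, 0))                        # True: revenue alone triggers it
print(f"${max_exposure_usd(10_000):,}")                     # $25,000,000
print(f"${max_exposure_usd(10_000, intentional=True):,}")   # $75,000,000
```

Mishandling 10,000 records carries a theoretical ceiling of $25M at the lower tier and $75M if intentional, which is why compliance belongs at the start of the pipeline.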

Keep Data Fresh

Data freshness is the most underrated practice on this list. In our experience, the freshness gap is where most B2B pipelines silently bleed revenue - you're emailing people who changed jobs two months ago and wondering why reply rates tanked. Most providers refresh contact data about every 6 weeks. Prospeo refreshes 300M+ professional profiles on a 7-day cycle. In B2B, job changes and company moves happen constantly, and a weekly refresh means your outbound hits current inboxes, not dead ones.
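Freshness is easiest to enforce when every record carries a last-verified date. A minimal sketch, assuming a hypothetical mapping of record IDs to those dates and a 7-day window to mirror the weekly cycle above, that returns the records due for re-verification, oldest first:

```python
from datetime import date, timedelta

# Target refresh window; 7 days mirrors a weekly refresh cycle.
MAX_AGE = timedelta(days=7)


def stale_records(last_verified: dict[str, date], today: date,
                  max_age: timedelta = MAX_AGE) -> list[str]:
    """Return IDs of records not re-verified within max_age, oldest first."""
    overdue = [(verified, record_id)
               for record_id, verified in last_verified.items()
               if today - verified > max_age]
    return [record_id for _, record_id in sorted(overdue)]


# Hypothetical record IDs and last-verified dates.
LAST_VERIFIED = {
    "contact-001": date(2026, 3, 14),
    "contact-002": date(2026, 1, 30),
    "contact-003": date(2026, 3, 1),
}

print(stale_records(LAST_VERIFIED, today=date(2026, 3, 15)))
```

Sorting oldest first means that if re-verification capacity runs short, the most decayed records get refreshed before the rest.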

If job changes are a core trigger in your outbound motion, build a system for automated champion tracking so your data stays aligned with reality.


How to Measure Collection Quality

Practices without metrics are just opinions. These KPIs tell you whether your collection is actually working; to tie collection quality to revenue outcomes, map them to account executive KPIs and pipeline reporting.

| KPI | Formula | Flag Threshold |
|---|---|---|
| Accuracy | Accurate records / total records × 100 | Below 95% |
| Completeness | (Total values - null values) / total values × 100 | Below 90% |
| Reliability | Data incidents per period | Rising trend |
| Usability | Documented columns / total columns × 100 | Below 80% |

Track these monthly. If accuracy dips below 95%, audit your sources before adding more data. If completeness drops, you're collecting fields nobody fills in - cut them or make them required. Don't just dashboard these numbers; assign someone to act on them when they cross a threshold.
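The table's formulas translate directly into code. This sketch makes a few assumptions the table leaves open: accuracy comes from an audited sample (`accurate` out of `audited`), completeness is counted per cell and treats empty strings as nulls, usability checks column names against a set of documented columns, and the reliability trend is a crude last-period-versus-previous comparison. All names and sample data are hypothetical.

```python
from typing import Optional

Record = dict[str, Optional[str]]

# Flag thresholds from the KPI table (percent).
THRESHOLDS = {"accuracy": 95.0, "completeness": 90.0, "usability": 80.0}


def pct(part: int, whole: int) -> float:
    """Percentage helper that returns 0.0 instead of dividing by zero."""
    return 100.0 * part / whole if whole else 0.0


def accuracy(accurate: int, audited: int) -> float:
    """Share of audited records confirmed correct."""
    return pct(accurate, audited)


def completeness(records: list[Record], columns: list[str]) -> float:
    """Share of cells that are non-null; empty strings count as null here."""
    filled = sum(1 for r in records for c in columns if r.get(c) not in (None, ""))
    return pct(filled, len(records) * len(columns))


def usability(documented: set[str], columns: list[str]) -> float:
    """Share of columns that have an entry in the data dictionary."""
    return pct(sum(1 for c in columns if c in documented), len(columns))


def incidents_rising(incidents_per_period: list[int]) -> bool:
    """Crude trend check: did the latest period log more incidents than the one before?"""
    return len(incidents_per_period) >= 2 and incidents_per_period[-1] > incidents_per_period[-2]


def flagged(kpis: dict[str, float]) -> list[str]:
    """Names of KPIs that fell below their flag threshold."""
    return [name for name, floor in THRESHOLDS.items() if kpis[name] < floor]


# Hypothetical sample data.
COLUMNS = ["email", "title", "phone"]
RECORDS: list[Record] = [
    {"email": "ana@example.com", "title": "CTO", "phone": None},
    {"email": "ben@example.com", "title": "", "phone": "555-0100"},
]
kpis = {
    "accuracy": accuracy(accurate=96, audited=100),
    "completeness": completeness(RECORDS, COLUMNS),
    "usability": usability({"email", "title"}, COLUMNS),
}
print(kpis, flagged(kpis), incidents_rising([3, 2, 5]))
```

Wiring `flagged` into an alert with a named owner is one way to make sure threshold crossings get acted on rather than just charted.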

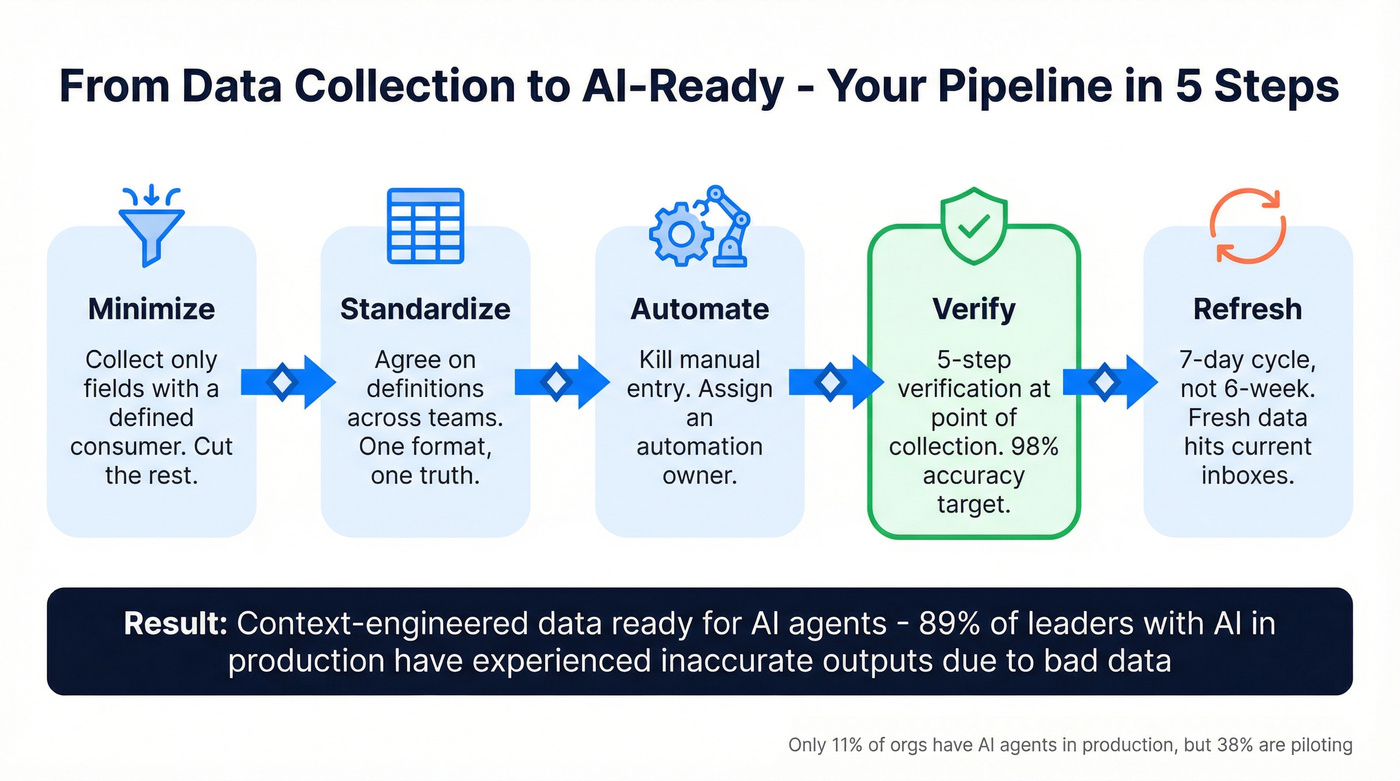

Preparing Your Collection for AI Agents

Let's be honest: most AI failures aren't model problems. They're data collection problems wearing a model-shaped mask. Only 11% of organizations have AI agents in production, but 38% are piloting. And 89% of leaders with AI in production have already experienced inaccurate or misleading outputs. A big part of the reason: 45% of business leaders cite data accuracy and bias as the primary barrier to scaling AI.

If you're planning an AI rollout, treat collection as part of your GTM AI foundation, not a separate project.

The emerging discipline is context engineering: delivering fresh, structured data fast enough for AI agents that consume it in real time. Every practice above - minimization, standardization, verification, freshness - is infrastructure for the AI workflows your team will run within 18 months. If your collection practices aren't solid now, your AI rollout won't magically fix them.


FAQ

What's the biggest data collection mistake teams make?

Collecting everything without a defined objective. About 19% of enterprise data ends up siloed and unusable. Start with the decision you need to make, then collect only the fields required to support it.

How often should you refresh collected data?

For B2B contact data, weekly is the gold standard: job changes happen constantly, and a 7-day refresh cycle like Prospeo's keeps outbound hitting current inboxes. For survey or analytics data, quarterly reviews with monthly spot-checks catch most decay before it compounds.

What's the difference between data validation and verification?

Validation checks format - is this a real email syntax? Verification confirms the record is accurate and deliverable right now. You need both. Skipping verification is how bounce rates climb past 20%.