Lead Capture and Scoring: The Practitioner's Playbook for 2026

A founder we know spent three weeks building an "elaborate" scoring model in HubSpot. Whitepaper downloads got +15 points. Pricing page views got +25. Case studies got +10. His highest-scoring lead - 89 points - was a grad student writing a thesis. His biggest deal that quarter? A $20K-ARR close from a lead who scored 12 out of 100 and booked a demo cold.

That's the gap between measuring curiosity and measuring intent. It's the core problem that lead capture and scoring systems need to solve.

96% of website visitors aren't ready to buy. Even among those who convert into leads, only about 20% will ever close. If your capture and scoring system can't separate the 20% from the noise, you're just building a very organized list of people who'll never pay you.

What You Need (Quick Version)

Three things to get right, in order:

- Capture leads with short forms (3 fields max) and exclusivity-driven copy - then score them on buying signals (budget, problem, decision-maker), not engagement vanity metrics. When that founder switched to manually reviewing leads on those three criteria, his close rate tripled.

- Start with rule-based scoring using the copy-paste matrix below. Predictive AI is overkill unless you've got 1,000+ contacts and 100+ closed deals to train on.

- None of it works if your contact data is stale. Verify emails and enrich records before you assign a single point. Garbage data in means garbage scores out.

That's the whole system. The rest of this playbook shows you how to build each piece.

What Are Capture and Scoring?

Lead capture and lead scoring are two halves of one system. Capture is how you collect contact information - forms, gated content, webinar registrations, chatbots, demo requests. Scoring is how you rank those contacts by their likelihood to actually buy.

Together, they form the handoff mechanism between Marketing and Sales. Marketing captures and nurtures. The scoring model decides when a lead becomes an MQL (Marketing Qualified Lead) and gets routed to Sales. Sales accepts it as an SQL (Sales Qualified Lead) - or kicks it back. That handoff, governed by an SLA between the two teams, is where most pipelines either accelerate or die.

Here's the math: even among leads who convert from visitor to contact, only about 20% will close. Your scoring model's job isn't to predict the future - it's to make sure your reps spend their limited hours on that 20%, not the other 80%.

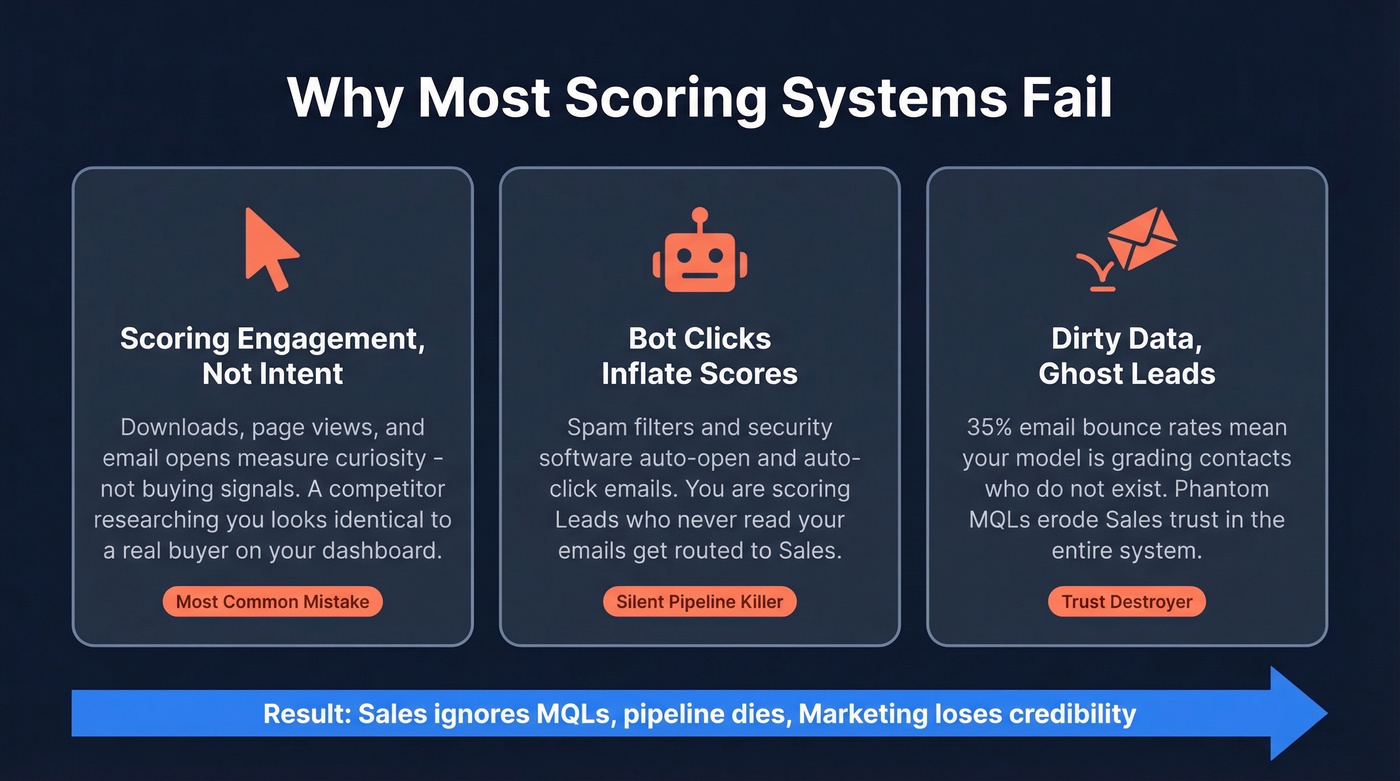

Why Most Scoring Systems Fail

The #1 failure mode is simple: teams score engagement instead of intent. Downloads, page views, email opens - these measure curiosity. A competitor researching your product looks identical to a buyer researching your product, at least on a dashboard.

The bot-click problem makes this worse. Spam filters and security software auto-open and auto-click emails. If you're scoring email opens at +5 points each, you're inflating scores for leads who've never actually read a word you've sent. The consensus on r/sales is clear: engagement-heavy scoring misclassifies "curious" non-buyers as hot leads while missing real buyers who convert fast with minimal tracked engagement.

Then there's the data quality problem. If 35% of your emails bounce, your scoring model is grading ghosts. You're assigning points to contacts who don't exist, routing phantom MQLs to Sales, and eroding trust in the entire system.

This is where data hygiene becomes non-negotiable. Before you score a single lead, you need to know the contact is real. Prospeo runs a 5-step email verification process - catch-all handling, spam-trap removal, honeypot filtering - delivering 98% email accuracy on 143M+ verified addresses. Records refresh every 7 days, not the 6-week industry average. When Snyk's 50-person AE team plugged this into their workflow, bounce rates dropped from 35-40% to under 5%, and AE-sourced pipeline jumped 180%.

Capture Forms That Convert

Form Design Benchmarks

A 2025 Typeform study of 10,000 forms surfaced three findings worth building around. Copy that implies exclusivity - "Get early access" instead of "Download now" - correlated with a 10.6% increase in completion rates. Forms using hidden fields for personalization saw a 4.8% lift. And adding a question to the welcome screen actually decreased completion by 5%.

The takeaway: keep forms short, make the offer feel scarce, and personalize behind the scenes. Three-field forms convert 27% better than five-field forms. Every additional field you add is a tax on conversion. Ask for name, email, and company. Everything else - title, phone, company size - you can enrich after capture with a CRM enrichment platform that returns 50+ data points per contact.

71% of customers expect personalized interactions, but that doesn't mean you need to front-load a dozen questions. Use hidden fields, UTM parameters, and post-capture enrichment to build the profile without killing your conversion rate.
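Here's a minimal sketch of the hidden-field idea, assuming your form handler can see the landing-page URL. The function names and the example URL are hypothetical; the only library used is Python's standard `urllib.parse`.

```python
from urllib.parse import urlparse, parse_qs

# Standard UTM parameter names; trim this list to what you actually tag.
UTM_KEYS = ("utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content")


def hidden_fields_from_url(landing_url: str) -> dict[str, str]:
    """Pull UTM parameters out of a landing-page URL for use as hidden fields."""
    query = parse_qs(urlparse(landing_url).query)
    # parse_qs returns a list per key; keep the first value of each UTM key present.
    return {key: query[key][0] for key in UTM_KEYS if key in query}


def build_lead(visible_fields: dict[str, str], landing_url: str) -> dict[str, str]:
    """Merge the three visible fields with attribution captured behind the scenes."""
    return {**visible_fields, **hidden_fields_from_url(landing_url)}


lead = build_lead(
    {"name": "Ada", "email": "ada@example.com", "company": "Acme"},
    "https://example.com/webinar?utm_source=linkedin&utm_medium=paid&ref=abc",
)
```

The visitor still fills in three fields, and the record arrives with source and medium attached for later scoring.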

Channel Benchmarks

Not all channels produce leads at the same cost or quality. Here's where the numbers land for B2B:

B2B benchmarks, 2026 data. Ranges are directional - your vertical will vary.

| Channel | Conversion Rate | CPL Range |
|---|---|---|
| Webinars | 11.2% | $60-$80 |
| | 6.5% | $30-$45 |
| Google Search Ads | 4.5% | $90-$150 |
| LinkedIn Ads | 3.2% | $120-$200 |
| SEO / Content | 1.8% | $30-$60 |

Webinars dominate on conversion rate and deliver a reasonable CPL. LinkedIn ads convert at a fraction of the rate but give you precise targeting. SEO is the long game - lowest conversion rate, but the CPL stays low and compounds over time.

The channel mix matters for scoring, too. A webinar attendee who stayed for the Q&A signals different intent than someone who clicked a LinkedIn ad and bounced after 8 seconds. Weight your scoring model accordingly.

You just read it: 35% bounce rates mean you're scoring ghosts. Prospeo's 5-step verification delivers 98% email accuracy on 143M+ verified addresses - refreshed every 7 days, not 6 weeks. Enrich 3-field form captures into 50+ data point profiles automatically. Snyk cut bounces from 40% to under 5% and grew pipeline 180%.

Stop assigning points to contacts that don't exist.

How to Build a Scoring Model

Rule-Based vs. Predictive

Predictive scoring uses machine learning to analyze historical conversion patterns and assign scores automatically. Sounds great on a vendor slide deck. It's also overkill for 90% of B2B teams.

HubSpot's predictive scoring needs at least 1,000 contacts and 100 closed deals to produce reliable models. Salesforce Einstein has similar data requirements. Enterprise intent platforms like 6sense take 1-3 months to deploy. If you don't have that volume, the model is guessing - and you won't understand why a lead scored high or low.

Rule-based scoring is transparent. You define the signals, assign the weights, and can explain to Sales exactly why a lead hit MQL. Start here. Graduate to predictive when your data justifies it.

We've seen this play out dozens of times: a well-maintained rule-based model built with Sales input will outperform a predictive model trained on thin data every single time. Don't let a vendor upsell you into complexity you don't need.

A Matrix You Can Copy

This model combines practitioner frameworks from SaaS outbound teams and proven marketing-ops patterns. We built it for teams of 2-20 people - small enough to move fast, big enough to have budget. Copy it, adjust the weights for your ICP, and ship it this week.

| Signal | Category | Points | Rationale |
|---|---|---|---|
| Demo request | Behavior | +100 | Route immediately - skip scoring |
| Funding milestone | Firmographic | +30 | Budget signal |
| Webinar attended (full session) | Behavior | +30 | High engagement; calibrate to your funnel |
| Public pain signal | Intent | +25 | Founder posts about the problem you solve |
| Decision-maker accessible | Fit | +20 | Can reach VP/founder in 2 touches |
| ICP industry match | Demographic | +15 | Right vertical |
| Pricing page 3x post-demo | Behavior | +15 | High buying intent in context |
| Stack investment | Technographic | +10 | Already paying for adjacent tools |
| Timing trigger | Intent | +10 | New hire, planning cycle, product launch |
| Consumer email domain | Negative | -10 | Gmail/Yahoo in B2B = weaker signal |
| Unsubscribe | Negative | -15 | Disengaged |
| 90-day inactivity | Decay | -10 | Stale lead |
| Competitor domain | Negative | -50 | Not a buyer |

Here's the thing: the specific point values matter less than the relative weights. A demo request should always massively outweigh a webinar registration. A competitor domain should nuke the score. In one SaaS team's implementation, leads scoring 70+ closed at 3x the rate of those under 50 - but only because the signals were intent-based, not engagement-based. Get the hierarchy right and the exact numbers will calibrate themselves over a quarter or two.
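If you'd rather prototype the matrix outside your CRM first, a rough sketch might look like this. The point values come straight from the table; the signal key names and the `score_lead` function are hypothetical labels, not fields from any particular platform.

```python
# Point values copied from the matrix; key names are illustrative only.
SIGNAL_POINTS = {
    "demo_request": 100,
    "funding_milestone": 30,
    "webinar_attended_full": 30,
    "public_pain_signal": 25,
    "decision_maker_accessible": 20,
    "icp_industry_match": 15,
    "pricing_page_3x_post_demo": 15,
    "stack_investment": 10,
    "timing_trigger": 10,
    "consumer_email_domain": -10,
    "unsubscribe": -15,
    "inactive_90_days": -10,
    "competitor_domain": -50,
}


def score_lead(signals: set[str]) -> int:
    """Sum the points for every signal observed on a lead."""
    unknown = signals - set(SIGNAL_POINTS)
    if unknown:
        # Fail loudly so a typo in a signal name never silently scores zero.
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    return sum(SIGNAL_POINTS[name] for name in signals)


funded_icp_lead = score_lead(
    {"funding_milestone", "public_pain_signal", "decision_maker_accessible", "icp_industry_match"}
)  # 30 + 25 + 20 + 15 = 90
competitor = score_lead({"icp_industry_match", "competitor_domain"})  # 15 - 50 = -35
```

Keeping the weights in one dictionary makes the model easy to show to Sales and easy to adjust when you recalibrate.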

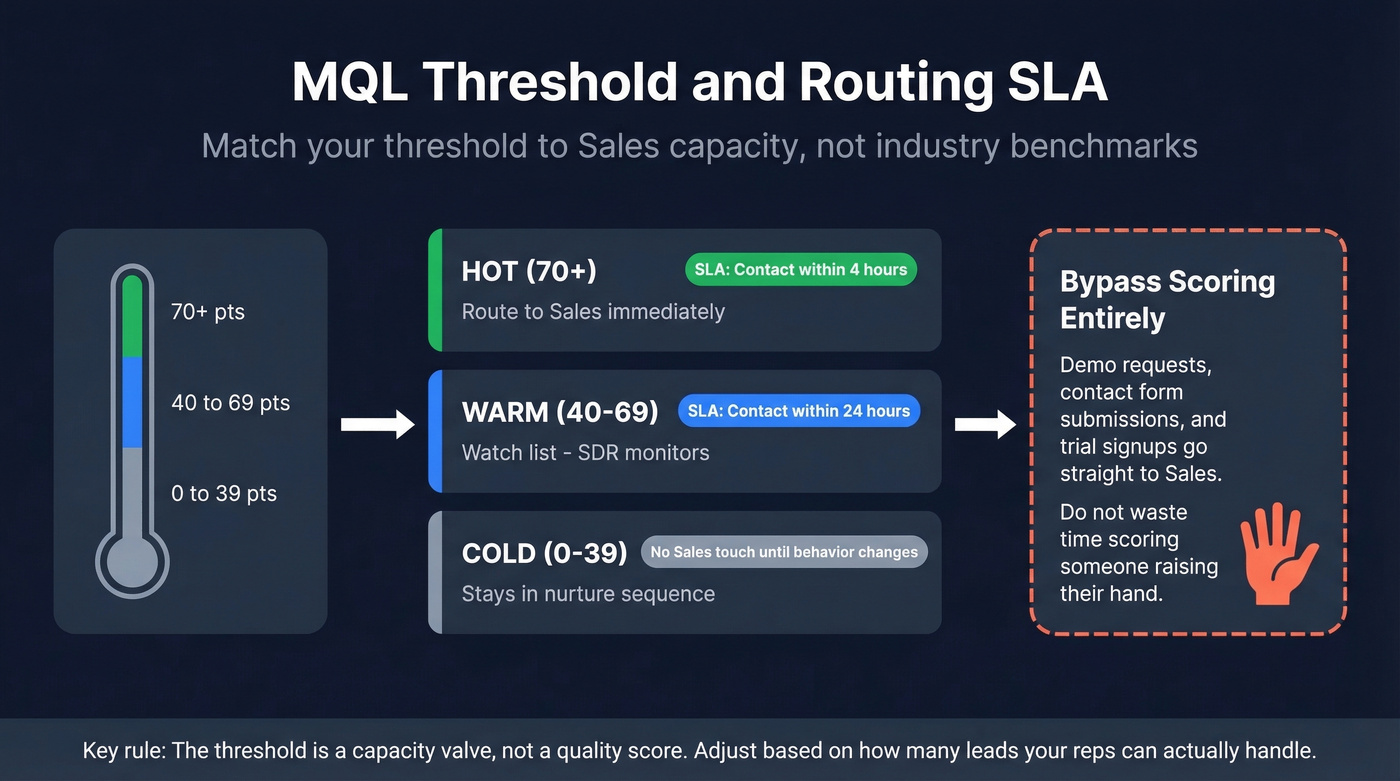

Setting Your MQL Threshold

Most B2B teams set their MQL threshold between 60 and 100 points. The right number isn't an industry benchmark - it's driven by your Sales team's capacity.

If your reps can handle 50 leads per week and you're sending them 200, tighten the threshold. If they're sitting idle, loosen it. The threshold is a capacity valve, not a quality score.
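One way to treat the threshold as a capacity valve is to pick the lowest cutoff whose weekly MQL volume still fits what reps can work. This is a sketch under assumptions: the function name is hypothetical, and the 60 and 100 bounds simply mirror the typical range mentioned above.

```python
def threshold_for_capacity(
    weekly_scores: list[int],
    weekly_capacity: int,
    floor: int = 60,
    ceiling: int = 100,
) -> int:
    """Return the lowest threshold in [floor, ceiling] whose MQL count fits capacity."""
    for threshold in range(floor, ceiling + 1):
        mql_count = sum(1 for score in weekly_scores if score >= threshold)
        if mql_count <= weekly_capacity:
            return threshold
    # Even the strictest cutoff overflows capacity; cap at the ceiling.
    return ceiling


scores = [95, 90, 85, 80, 75, 70, 65, 60]
tight = threshold_for_capacity(scores, weekly_capacity=3)   # only 85, 90, 95 pass
loose = threshold_for_capacity(scores, weekly_capacity=10)  # everyone fits at the floor
```

Rerun it whenever headcount or lead volume shifts, rather than treating the number as fixed.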

A simple three-tier band works for most teams:

- 0-39 = Cold. Stays in nurture.

- 40-69 = Warm. Watch list, SDR monitors.

- 70+ = Hot. Route to Sales immediately.

Pair your threshold with a response-time SLA. Score 80+ means Sales contacts within 4 hours. Score 60-79 means Sales contacts within 24 hours. Under 60 stays in nurture until behavior changes. Without an SLA, scoring is academic - leads rot while reps cherry-pick.

One critical rule: demo requests, contact form submissions, and trial signups bypass scoring entirely. Route them to Sales immediately. Don't waste time scoring someone who's raising their hand.
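The SLA bands and the bypass rule can be sketched as a single routing function. The event names, the `Route` type, and the use of `0` to mean "contact immediately" are assumptions for illustration; the 80+, 60-79, and under-60 cutoffs are the ones described above.

```python
from dataclasses import dataclass
from typing import Optional

# Hand-raiser events that skip scoring entirely (names are hypothetical).
BYPASS_EVENTS = {"demo_request", "contact_form", "trial_signup"}


@dataclass
class Route:
    destination: str           # "sales" or "nurture"
    sla_hours: Optional[int]   # None means no response-time SLA applies


def route_lead(score: int, events: set[str]) -> Route:
    """Route a lead using the bypass rule first, then the score-based SLA bands."""
    if events & BYPASS_EVENTS:
        return Route("sales", 0)    # hand-raiser: route now, whatever the score
    if score >= 80:
        return Route("sales", 4)    # contact within 4 hours
    if score >= 60:
        return Route("sales", 24)   # contact within 24 hours
    return Route("nurture", None)   # stays in nurture until behavior changes


cold_hand_raiser = route_lead(12, {"demo_request"})
```

Note that a lead scoring 12 with a demo request still goes straight to Sales, which is exactly the case from the opening story.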

One team we worked with tightened their title/seniority filters while lowering the activity threshold - the result was a 13% increase in MQL-to-meeting rate. Sometimes the fix isn't more points; it's better filters.

Mistakes That Tank Your Pipeline

Eight anti-patterns that kill scoring models in production:

Scoring email opens. Bots and security software auto-open and auto-click emails, so you're scoring spam filters, not humans. Remove email opens from your model entirely.

Over-relying on job titles. Free-text title fields are chaos - "VP Sales," "Vice President, Sales," and "Head of Revenue" can all describe the same role. Normalize titles before scoring, or you'll miss half your decision-makers.
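A simple normalization pass, sketched here with a hand-maintained alias table, is often enough to start. The alias table and the choice of "VP Sales" as the canonical label are assumptions; you'd build your own mapping from the titles actually sitting in your CRM.

```python
import re

# Hypothetical alias table: normalized free-text title -> canonical title.
TITLE_ALIASES = {
    "vp sales": "VP Sales",
    "vice president sales": "VP Sales",
    "head of revenue": "VP Sales",
}


def normalize_title(raw_title: str) -> str:
    """Map a free-text job title to a canonical form when an alias is known."""
    # Lowercase, turn punctuation into spaces, then collapse repeated whitespace.
    key = re.sub(r"[^a-z0-9 ]+", " ", raw_title.lower())
    key = " ".join(key.split())
    # Unknown titles pass through unchanged so nothing is silently dropped.
    return TITLE_ALIASES.get(key, raw_title.strip())


titles = [normalize_title(t) for t in ("VP Sales", "Vice President, Sales", "Head of Revenue")]
```

All three variants collapse to one value, so a single seniority rule can score them consistently.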

Building without Sales input. If Sales doesn't trust the model, they'll ignore MQLs. Involve them from day one.

Scoring demo requests instead of routing them. Someone who asks for a demo is a hand-raiser. Send them to a rep within the hour - leads contacted in the first hour are 7x more likely to qualify.

Ignoring inactivity. A lead who scored 75 six months ago and hasn't engaged since isn't an MQL - they're a ghost. Apply negative scoring for inactivity. At 365 days of silence, consider removing the record.
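Decay can be sketched as a small function over the last-activity date. Two assumptions are baked in: the -10 from the matrix is applied once per full 90 days of silence, and a return value of `None` flags the record as a removal candidate at 365 days. Both are illustrative choices, not a rule from any platform.

```python
from datetime import date, timedelta
from typing import Optional

DECAY_POINTS = 10        # from the matrix: 90-day inactivity = -10
DECAY_PERIOD_DAYS = 90
REMOVAL_AFTER_DAYS = 365


def apply_inactivity_decay(score: int, last_activity: date, today: date) -> Optional[int]:
    """Return the decayed score, or None when the record is a removal candidate."""
    idle_days = (today - last_activity).days
    if idle_days >= REMOVAL_AFTER_DAYS:
        return None
    # Assumption: subtract the decay once for every full 90-day period of silence.
    periods = max(idle_days, 0) // DECAY_PERIOD_DAYS
    return score - periods * DECAY_POINTS


today = date(2026, 6, 30)
six_months_stale = apply_inactivity_decay(75, today - timedelta(days=180), today)
```

Under these assumptions, the lead that scored 75 six months ago drops to 55 and falls out of the hot band on its own.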

Treating the model as set-and-forget. Your ICP evolves. Your product changes. Recalibrate quarterly using conversion data and Sales feedback. A model you built six months ago is scoring against an ICP that no longer exists.

Interpreting behavior in isolation. A single pricing page view means nothing. Three pricing page views in 48 hours after a demo? That's buying intent. Score behavior in sequence and context, not as isolated events. Combining scoring with lead tracking - watching both the point total and the behavioral timeline - gives reps the full picture before they pick up the phone.
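Scoring in sequence means checking events against a timeline instead of counting them. A rough sketch, assuming events arrive as `(name, timestamp)` pairs and that "after a demo" means within 48 hours following the most recent demo; the event names are hypothetical.

```python
from datetime import datetime, timedelta


def pricing_intent_after_demo(
    events: list[tuple[str, datetime]],
    window: timedelta = timedelta(hours=48),
    min_views: int = 3,
) -> bool:
    """True when enough pricing-page views land inside the window after a demo."""
    demo_times = [ts for name, ts in events if name == "demo_completed"]
    if not demo_times:
        return False  # pricing views without a demo are treated as noise here
    demo_at = max(demo_times)  # anchor on the most recent demo
    views = sum(
        1
        for name, ts in events
        if name == "pricing_page_view" and demo_at < ts <= demo_at + window
    )
    return views >= min_views


demo = datetime(2026, 3, 2, 10, 0)
timeline = [
    ("demo_completed", demo),
    ("pricing_page_view", demo + timedelta(hours=1)),
    ("pricing_page_view", demo + timedelta(hours=20)),
    ("pricing_page_view", demo + timedelta(hours=47)),
]
hot = pricing_intent_after_demo(timeline)
```

Only when this returns true would you award the "Pricing page 3x post-demo" points from the matrix.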

Ignoring consumer email domains in B2B. A VP who signs up with a Gmail address might still be legit, but it's a weaker signal than a corporate domain. Apply a small negative score - not enough to disqualify, but enough to flag it.
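The domain check is easy to sketch. The consumer list below only covers the two providers named in the matrix and would need extending; the competitor set and the function name are hypothetical, while the -10 and -50 values come from the matrix.

```python
# Only the providers named in the matrix; extend with your own list.
CONSUMER_DOMAINS = frozenset({"gmail.com", "yahoo.com"})


def email_domain_adjustment(
    email: str,
    competitor_domains: frozenset[str] = frozenset(),
) -> int:
    """Return the score adjustment implied by the email's domain."""
    domain = email.rsplit("@", 1)[-1].strip().lower()
    if domain in competitor_domains:
        return -50  # competitor domain: not a buyer
    if domain in CONSUMER_DOMAINS:
        return -10  # weaker signal, but not disqualifying
    return 0


gmail_vp = email_domain_adjustment("vp.sales@Gmail.com")
rival = email_domain_adjustment("pm@rival.example", frozenset({"rival.example"}))
```

The small penalty flags the Gmail VP without knocking a strong lead out of contention.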

Best Tools for the Job

| Tool | Best For | Scoring Type | Starting Price | Key Limit |
|---|---|---|---|---|
| Prospeo | Data quality layer | Enrichment + verify | Free tier; ~$39/mo | Pair with CRM for scoring |
| HubSpot | Mid-market all-in-one | Rule + predictive | $890/mo (3 seats) | Predictive = Enterprise only |
| Salesforce Einstein | Enterprise CRM teams | AI predictive | ~$215/user/mo | Expensive for scoring alone |
| ActiveCampaign | Budget scoring + email | Rule-based | ~$49/mo | Less sophisticated |
| Marketo | Enterprise marketing ops | Behavior + demographic | ~$1,500-$3,500/mo | Complex setup |
| Freshsales | SMB AI scoring | Freddy AI | ~$15-$39/user/mo | Limited integrations |

HubSpot

The default choice for mid-market teams who want capture, scoring, and nurture in one platform. Rule-based scoring comes with the Professional plan at $890/mo for 3 seats. Predictive scoring requires Enterprise at $3,600/mo with a 10-seat minimum plus $3,500 onboarding. Breeze Intelligence adds enrichment from $45/mo. The gap between Professional and Enterprise is steep - and predictive is the feature most teams want but can't justify paying for.

If you're building this inside HubSpot, use a dedicated HubSpot lead scoring setup so Sales can audit the logic.

Salesforce Einstein

Requires Enterprise Sales Cloud ($165/user/mo) plus the Einstein add-on ($50/user/mo). For a 10-person team, that's $215 x 10 seats x 12 months, or about $25,800 a year at list price, just to get AI scoring. The models are powerful if you've got the data volume, but the pricing is absurd for what should be a core CRM feature. Skip it if you're evaluating CRM and scoring together - HubSpot gives you more for less at the mid-market level.

ActiveCampaign

If you need email automation first and scoring second, ActiveCampaign is the pragmatic pick. Scoring lives on the Plus plan at ~$49/mo. You won't get the depth of HubSpot's reporting or Marketo's routing logic, but for teams running straightforward nurture sequences with basic lead prioritization, it handles the job without the sticker shock.

Marketo

Marketo uses a two-field approach - separate Behavior Score and Demographic Score - which is elegant for complex routing where marketing ops teams need granular control. Custom pricing, typically $1,500-$3,500/mo. This is a tool that demands a dedicated admin. If you don't have one, you'll spend more time configuring than converting.

Freshsales

Small team, tight budget, want AI scoring without a six-figure contract? Freshsales Freddy starts around $15/user/mo and is far cheaper than Salesforce Einstein. The trade-off is a thinner integration ecosystem, so make sure it connects to your existing stack before committing.

If you're comparing platforms, start with a shortlist of lead scoring software and work backward from your routing needs.

Three-field forms convert 27% better - but you still need title, company size, and phone to score leads properly. Prospeo's CRM enrichment returns 50+ data points per contact at a 92% match rate, so you capture more leads and score them on real buying signals, not form-field guesses.

Capture less, know more. Enrich every lead for $0.01.

When NOT to Score

If you're getting fewer than 100 leads per month, don't build a scoring model. Just talk to every lead.

Let's be honest: scoring adds value only when Sales can't talk to everyone. For early-stage teams, a simple routing rule beats a point system. Demo request? Goes to Sales. Everything else? Goes to nurture. That's your "model." Graduate to the full matrix when volume demands it.

If you're still building the foundation, start with a clean lead management process and add scoring once routing and follow-up are consistent.

FAQ

What's the difference between lead capture and lead scoring?

Capture is collecting contact information through forms, gated content, or demo requests. Scoring is ranking those contacts by purchase likelihood. They're two halves of one system - capture fills the funnel, scoring decides who moves through it.

How many points should trigger an MQL?

Most B2B teams set thresholds between 60 and 100 points, but the right number depends on Sales capacity. If reps are overwhelmed with 200 leads a week, raise the threshold. If they're idle, lower it. It's a capacity dial, not a quality benchmark.

Is predictive lead scoring worth it?

Only if you have 1,000+ contacts and 100+ closed deals to train the model. Below that, rule-based scoring is simpler, cheaper, and more transparent - and you can always upgrade later when data volume justifies the investment.

How often should I recalibrate my scoring model?

Quarterly at minimum. Pull conversion data, gather Sales feedback, and adjust weights. The ICP you defined six months ago might not match the deals you're actually closing today.

What's a good free tool for cleaning data before scoring?

Prospeo's free tier includes 75 email verifications per month - enough to test data quality on a small list before committing. For larger volumes, paid plans start at ~$39/mo with 98% accuracy and a 7-day refresh cycle, which keeps scores grounded in current, deliverable contacts.