Lead Scoring and Qualification: A Practitioner's Playbook

A RevOps lead we work with ran their first scoring model last year. Within two weeks, the top-scored "leads" were marketing students downloading every whitepaper on the site, while actual buyers - the ones who skipped content and went straight to the demo form - sat at the bottom of the queue. That's the classic failure mode of lead scoring and qualification done poorly, and it's far more common than anyone admits. Companies using lead scoring see 138% ROI versus 78% without it, but only when the model actually reflects buying behavior.

The Short Version

Lead scoring assigns points based on fit and behavior. Lead qualification confirms readiness through a framework like BANT or MEDDIC. Most scoring fails because of dirty data and overcomplicated models, not bad logic. Build a simple model this week, route leads by score band, and clean your contact data before you score anything.

Scoring vs. Qualification

These two terms get used interchangeably, and that's where confusion starts.

Scoring is automated - your marketing platform assigns points based on who someone is (fit) and what they do (behavior). Qualification is human-driven - a rep confirms need, budget, authority, and timing using a structured framework. They work in sequence: scoring triages the firehose so reps aren't manually reviewing every inbound lead, and qualification validates that the high-scoring leads are actually worth a meeting. One without the other breaks down fast.

Build Your Scoring Model This Week

The biggest mistake teams make is trying to build a perfect 20-variable model before launching anything. A 5-variable model that's running beats a 20-variable model stuck in a spreadsheet every single time. Let's start with the two-score approach that Marketo practitioners recommend: separate fit (who they are) from behavior (what they've done).

The Scoring Matrix

Here's a copy-paste starting point. Adjust the numbers for your business, but don't overthink v1.

| Signal | Points | Type |
|---|---|---|
| Demo request | +100 | Behavior |
| Webinar attended | +30 | Behavior |
| Pricing page visit | +20 | Behavior |
| Whitepaper download | +15 | Behavior |
| Email open | 0 | - |
| ICP industry match | +10 | Fit |
| Wrong industry | -20 | Fit |
| Bounced email | -10 | Fit |

Why are email opens set to zero? Because automated traffic is everywhere: bots account for over 40% of internet traffic, and spam filters and security tools auto-open emails constantly. Scoring opens injects that noise directly into your model.
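If you want to prototype the matrix before touching your marketing platform, a minimal Python sketch might look like the one below. It keeps fit and behavior as two separate subtotals, following the two-score approach. The signal identifiers (`demo_request`, `icp_industry_match`, and so on) are hypothetical names chosen for illustration, not fields from any particular platform.

```python
from dataclasses import dataclass

# Point values copied from the v1 matrix; signal names are hypothetical identifiers.
BEHAVIOR_POINTS = {
    "demo_request": 100,
    "webinar_attended": 30,
    "pricing_page_visit": 20,
    "whitepaper_download": 15,
    "email_open": 0,  # opens are mostly bot/scanner noise, so they earn nothing
}

FIT_POINTS = {
    "icp_industry_match": 10,
    "wrong_industry": -20,
    "bounced_email": -10,
}


@dataclass
class LeadScore:
    fit: int = 0
    behavior: int = 0

    @property
    def total(self) -> int:
        return self.fit + self.behavior


def score_lead(fit_signals: list[str], behavior_events: list[str]) -> LeadScore:
    """Sum fit and behavior points separately; unknown signal names score 0.

    Every behavior event counts, so two pricing-page visits earn 2 x 20 points.
    """
    fit = sum(FIT_POINTS.get(s, 0) for s in fit_signals)
    behavior = sum(BEHAVIOR_POINTS.get(e, 0) for e in behavior_events)
    return LeadScore(fit=fit, behavior=behavior)
```

For example, an ICP-industry lead who viewed pricing, downloaded a whitepaper, and "opened" an email scores 10 fit + 35 behavior = 45. Keeping the two subtotals separate lets you spot a high-behavior, poor-fit lead (the whitepaper-collecting student) before they reach a rep.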

Routing by Score Band

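Once you've got scores flowing, route them into three buckets. The example cutoffs below assume a low-point model; if your MQL threshold sits in the 60-100 range described next, scale the bands up to match: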

- 25+ points - Sales alert, immediate follow-up

- 10-24 points - Mid-funnel nurture sequence

- Under 10 - Top-of-funnel, keep warming

Most B2B teams set their MQL threshold between 60 and 100 points. Start at 70 and adjust after 30-45 days of data. Getting your MQL scoring criteria right at this stage prevents reps from chasing leads that were never ready for a conversation.
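Band routing is a simple cascade of threshold checks. Here is a minimal sketch, assuming scores are already computed; the bucket labels are hypothetical, and the cutoffs are parameters so the same function works whether your top band starts at 25 or at a 70-point MQL threshold.

```python
def route_by_band(score: int, sales_cutoff: int = 25, nurture_cutoff: int = 10) -> str:
    """Map a lead score to one of three routing buckets.

    Defaults mirror the example bands (25+, 10-24, under 10); pass higher
    cutoffs if your MQL threshold sits higher. Bucket names are illustrative.
    """
    if score >= sales_cutoff:
        return "sales_alert"          # immediate follow-up
    if score >= nurture_cutoff:
        return "mid_funnel_nurture"   # nurture sequence
    return "top_of_funnel"            # keep warming
```

Checking the higher band first matters: a score of 30 satisfies both conditions, and the order guarantees it lands in the sales bucket.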

The Hand-Raiser Rule

If someone requests a demo, starts a trial, or fills out a "contact sales" form, route them to a rep immediately. No formula override. No waiting for them to accumulate enough points.

This is the one rule that should never be automated away - qualification at that stage should be human, not formula-based.
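In routing logic, the hand-raiser rule amounts to checking for explicit requests before the score is ever consulted. A minimal sketch, assuming hypothetical event names for demo requests, trial starts, and contact-sales forms, and an example MQL threshold of 70:

```python
# Hypothetical event names; map these to whatever your platform actually records.
HAND_RAISER_EVENTS = {"demo_request", "trial_start", "contact_sales_form"}


def route_lead(score: int, events: set[str], mql_threshold: int = 70) -> str:
    """Route hand-raisers to a rep regardless of score, then apply the threshold."""
    if events & HAND_RAISER_EVENTS:
        return "rep_now"    # explicit request: no formula override
    if score >= mql_threshold:
        return "mql"
    return "nurture"
```

With this ordering, a demo request from a lead scoring 12 still goes straight to a rep.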

Score Decay

A lead who scored 150 points six months ago and hasn't visited since isn't hot. They're stale. Add decay after 30-45 days of inactivity, and reset to 50% of your MQL threshold rather than subtracting a flat amount. If your threshold is 70, decay inactive leads to 35. This prevents ancient leads with inflated scores from clogging your pipeline.
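The decay rule translates to a date comparison plus a cap. A minimal sketch, assuming a 30-day inactivity window (the low end of the 30-45 day range) and a 70-point threshold. One design choice here is an assumption: a lead already below the 50% floor keeps its lower score, because "resetting" it upward would reward inactivity.

```python
from datetime import date, timedelta


def apply_decay(score: int, last_activity: date, today: date,
                mql_threshold: int = 70, inactivity_days: int = 30) -> int:
    """Cap an inactive lead's score at 50% of the MQL threshold.

    Leads active within the window are untouched; decay never raises a score.
    """
    if today - last_activity < timedelta(days=inactivity_days):
        return score
    return min(score, mql_threshold // 2)  # integer floor, e.g. 70 -> 35
```

A lead at 150 points whose last visit was six months ago comes back as 35, while one last seen 11 days ago keeps all 150.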

Run v1 for 30-45 days, track which scored leads actually convert to meetings, then tune.
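One way to "track which scored leads actually convert" is to bucket historical leads by score and compute a meeting rate per bucket. A minimal sketch, assuming you can export `(score, booked_meeting)` pairs from your CRM; the 25-point bucket width is an arbitrary illustrative choice.

```python
from collections import defaultdict


def meeting_rate_by_band(leads: list[tuple[int, bool]], band_size: int = 25) -> dict[int, float]:
    """Return the share of leads in each score band that booked a meeting.

    Keys are each band's lower bound (0, 25, 50, ...); negative scores fall into band 0.
    """
    totals: dict[int, int] = defaultdict(int)
    meetings: dict[int, int] = defaultdict(int)
    for score, booked in leads:
        band = (max(score, 0) // band_size) * band_size
        totals[band] += 1
        meetings[band] += int(booked)
    return {band: meetings[band] / totals[band] for band in sorted(totals)}
```

If the meeting rate barely changes between the lowest and highest bands, your points are not separating buyers from browsers, and the weights need tuning before the threshold does.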

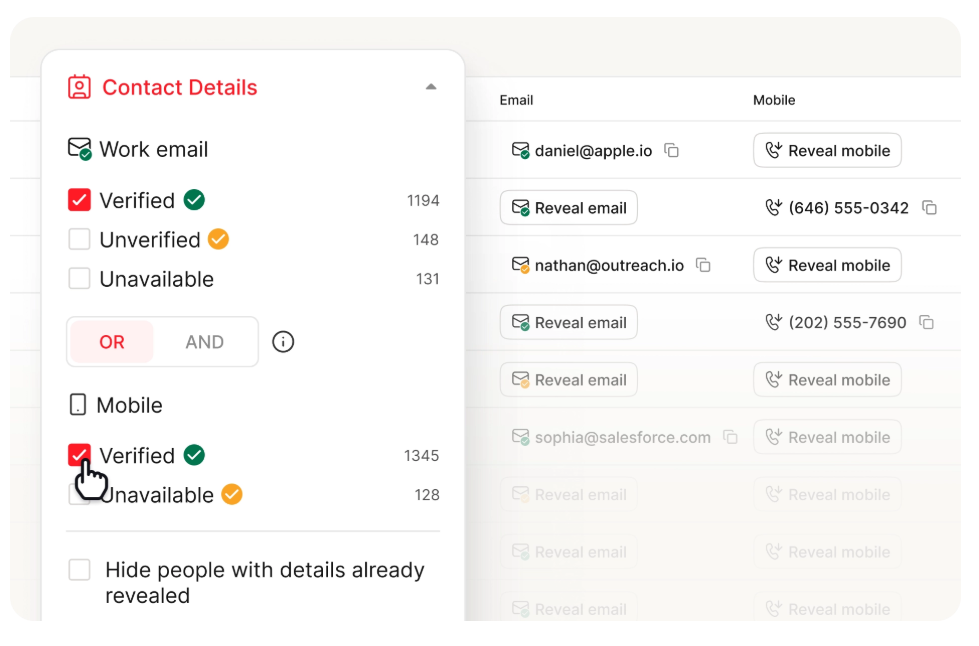

Scoring contacts whose emails bounce tanks your model. If 20% of contacts are unreachable, your reps are chasing ghosts. Prospeo delivers 98% email accuracy on a 7-day refresh cycle - so every scored lead is actually contactable when it hits the queue.

Fix the data layer before you score a single lead.
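Even before wiring in a verification service, you can keep obviously broken records out of the scoring run. The sketch below assumes a hypothetical `Contact` record with a `hard_bounced` flag from your email platform. The regex is deliberately loose: it catches typos and junk values, but it cannot tell whether a mailbox actually exists, which is what a real verifier is for.

```python
import re
from dataclasses import dataclass

# Loose syntax check only: something@domain.tld with no spaces. It does NOT verify deliverability.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


@dataclass
class Contact:
    email: str
    hard_bounced: bool = False  # hypothetical flag synced from your email platform


def scoreable(contacts: list[Contact]) -> list[Contact]:
    """Keep contacts worth scoring: not hard-bounced and syntactically plausible."""
    return [
        c for c in contacts
        if not c.hard_bounced and EMAIL_PATTERN.match(c.email.strip())
    ]
```

Running this filter first means the -10 "bounced email" penalty in the matrix handles edge cases rather than carrying the whole burden of data hygiene.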

Pick a Qualification Framework

Once scoring surfaces the right leads, reps need a consistent way to qualify them. B2B buying decisions often involve around 7 stakeholders, and winging it doesn't scale.

| Framework | Best For | Core Questions |
|---|---|---|
| BANT | High-volume, <$50k deals | Budget, Authority, Need, Timeline |
| CHAMP | Consultative sales | Challenges, Authority, Money, Priority |
| MEDDIC | Enterprise, 5+ stakeholders | Metrics, Economic Buyer, Decision Criteria, Decision Process, Pain, Champion |

BANT is fast and works for transactional cycles. MEDDIC is heavier but essential when you're navigating complex orgs with six-figure deals. CHAMP sits in between - it leads with the prospect's challenges rather than their budget, which tends to produce better discovery conversations.
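If you want reps (or a routing rule) to default to a framework, the table can be collapsed into a rough picker. This is a minimal sketch, not a rule from the table itself: the 5-stakeholder and $50k cutoffs come from the "Best For" column, and sending mid-size, non-consultative deals to CHAMP is an assumption based on CHAMP sitting "in between."

```python
def pick_framework(deal_size_usd: float, stakeholders: int, consultative: bool = False) -> str:
    """Suggest a qualification framework from deal size, buying-group size, and sales motion."""
    if stakeholders >= 5:
        return "MEDDIC"   # complex orgs, many stakeholders
    if consultative:
        return "CHAMP"    # lead with the prospect's challenges
    if deal_size_usd < 50_000:
        return "BANT"     # fast, transactional cycles
    return "CHAMP"        # assumption: the in-between default
```

Checking stakeholders first reflects the table's emphasis: a large buying committee needs MEDDIC's rigor even when the deal itself is modest.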

Lead Stages in Brief

- PQL (Product Qualified): Hit usage thresholds in a trial or freemium product

- MQL (Marketing Qualified): Engaged enough to meet your scoring threshold

- SAL (Sales Accepted): Rep reviewed the MQL and agreed to work it

- SQL (Sales Qualified): Confirmed need, timing, budget, and authority - ready for a proposal
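If you track these stages in code or in CRM automation, it helps to make the allowed moves explicit so a lead can't skip rep acceptance. A minimal sketch, assuming both PQLs and MQLs feed the sales-accepted step; rejection paths (for example, a rep sending a lead back to nurture) are left out for brevity.

```python
from enum import Enum


class Stage(Enum):
    PQL = "product_qualified"
    MQL = "marketing_qualified"
    SAL = "sales_accepted"
    SQL = "sales_qualified"


# Allowed forward moves; the PQL -> SAL path is an assumption, not stated in the stage list.
ALLOWED = {
    Stage.PQL: {Stage.SAL},
    Stage.MQL: {Stage.SAL},
    Stage.SAL: {Stage.SQL},
    Stage.SQL: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move a lead to the next stage, refusing skips such as MQL -> SQL."""
    if target not in ALLOWED[current]:
        raise ValueError(f"cannot move {current.name} -> {target.name}")
    return target
```

Forcing every lead through SAL means a rep has explicitly agreed to work it before it counts as sales-qualified.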

The handoff from MQL to SAL is where most leads die. An agreed SLA between marketing and sales - response time, follow-up cadence, feedback loop - is non-negotiable. A well-calibrated scoring model makes this handoff smoother because sales trusts the scores they're receiving.
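The response-time part of that SLA is easy to monitor automatically. A minimal sketch, assuming each handed-off lead is a hypothetical `(lead_id, handed_off_at, first_touch_at)` tuple where `first_touch_at` is `None` until a rep responds; the 24-hour window is an illustrative default, not a recommended SLA.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple


def sla_breaches(
    mqls: List[Tuple[str, datetime, Optional[datetime]]],
    now: datetime,
    max_response: timedelta = timedelta(hours=24),
) -> List[str]:
    """Return IDs of leads whose first rep touch came, or is still pending, past the window."""
    breached = []
    for lead_id, handed_off_at, first_touch_at in mqls:
        # Untouched leads are measured against the current time.
        responded_by = first_touch_at if first_touch_at is not None else now
        if responded_by - handed_off_at > max_response:
            breached.append(lead_id)
    return breached
```

Counting untouched leads against the clock is the important detail: a lead nobody has called yet is the most urgent breach, not an exempt one.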

Mistakes That Break Scoring

Scoring email opens. Over 40% of internet traffic is bots. Your "engaged" leads are often just spam filters clicking every link. Set opens to zero points and weight actual page visits and form fills instead.

Overcomplicating the model. We've seen teams spend months building a 20-variable model and never ship it. Start with 5 variables. A simple model running in production generates data you can learn from. A complex model in a spreadsheet generates nothing.

Blocking hand-raisers with formulas. If someone asks to talk to sales, send them to sales. A demo request from a lead with a score of 12 is still a demo request.

Scoring on dirty data. Here's the thing - if 20% of your emails bounce, one in five "hot leads" is literally unreachable. Scoring on top of bad data is worse than no scoring at all. Prospeo verifies emails at 98% accuracy on a 7-day refresh cycle, which means scored leads are actually reachable when reps pick up the phone. Clean your contact data before you score a single lead.

No SLA with sales. If you launch scoring without an agreed routing and response-time SLA, sales will ignore scores within a week. A common takeaway on r/hubspot is simple: scoring without sales buy-in is a marketing exercise, not a revenue tool.

Do You Need AI Scoring?

Probably not yet. Only 13% of marketers use AI for predictive scoring. HubSpot's predictive model requires at least 1,000 contacts and 100 closed deals. Salesforce Einstein needs roughly 1,000 converted leads to build an accurate model.

If you don't have that volume, a rules-based model will outperform a starved AI model every time. Start with rules, accumulate data, and revisit predictive scoring once you've got the historical volume to feed it. Skip predictive scoring entirely if your CRM has fewer than 5,000 contacts - you'll get better results from a rules-based model and manual qualification.
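Before paying for a predictive tier, you can check your CRM counts against the minimums quoted above. A minimal sketch; the numbers are copied from this section and vendors change requirements, so treat them as assumptions to re-check against current vendor documentation.

```python
# Minimums as quoted in this section; re-check vendor documentation before relying on them.
RULE_OF_THUMB_CONTACTS = 5_000


def predictive_readiness(contacts: int, closed_deals: int, converted_leads: int) -> dict[str, bool]:
    """Report which predictive-scoring data minimums your CRM currently meets."""
    return {
        "meets_rule_of_thumb": contacts >= RULE_OF_THUMB_CONTACTS,
        "hubspot_minimum": contacts >= 1_000 and closed_deals >= 100,
        "einstein_minimum": converted_leads >= 1_000,
    }
```

A CRM with 3,000 contacts and 150 closed deals clears HubSpot's stated floor but not the 5,000-contact rule of thumb, which is exactly the case where a rules-based model is the better bet.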

Tools and What They Cost

| Tool | Scoring Type | Approx. Cost |
|---|---|---|
| Salesforce Einstein | Predictive | ~$165/user/mo (Sales Cloud Enterprise) + ~$50/user/mo add-on |
| HubSpot Professional | Manual rules | $890/mo (3 seats) |
| HubSpot Enterprise | Predictive | $3,600/mo + $3,500 onboarding |
| 6sense | Intent + predictive | $19k-$300k/yr |
| Prospeo | Data quality layer | Free tier, ~$0.01/email |

Prospeo isn't a scoring tool - it's the data layer underneath your scoring tool. At a fraction of what you'd spend on any platform above, it ensures the contacts you're scoring actually have valid emails and working phone numbers. Its 92% API match rate and 83% enrichment match rate mean your CRM stays current without manual cleanup.

If you’re evaluating providers, start with a quick scan of data enrichment services and a practical lead enrichment workflow.

Your scoring model routes leads by fit and behavior - but fit scores are worthless without accurate contact data. Prospeo enriches leads with 50+ data points at a 92% match rate, giving you verified emails, direct dials, job titles, and company signals to score against.

Enrich first, score second. That's how top teams operate.

FAQ

What's the difference between lead scoring and lead qualification?

Lead scoring is automated point assignment based on fit (job title, industry, company size) and behavior (page visits, demo requests, content downloads). Lead qualification is a human-driven step where a rep confirms need, budget, authority, and timing using BANT or MEDDIC. Scoring triages at scale; qualification validates one-to-one.

What's a good MQL threshold to start with?

Most B2B teams set their MQL threshold between 60 and 100 points. Start at 70, run for 30-45 days, then adjust based on how many scored leads convert to meetings. If sales is drowning in unqualified calls, raise it. If pipeline is thin, lower it.
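The raise-or-lower rule can be written as a small review step you run at the end of each 30-45 day window. A minimal sketch; the 20% meeting-rate floor, the 10-MQLs-per-week floor, and the 10-point step are hypothetical values chosen for illustration, so substitute your own definitions of "drowning" and "thin."

```python
def adjust_threshold(threshold: int, mql_to_meeting_rate: float, mqls_per_week: int,
                     min_rate: float = 0.2, min_weekly_mqls: int = 10, step: int = 10) -> int:
    """Nudge the MQL threshold after a review window (all cutoffs are illustrative)."""
    if mql_to_meeting_rate < min_rate:
        return threshold + step            # sales is drowning in unqualified calls
    if mqls_per_week < min_weekly_mqls:
        return max(threshold - step, 0)    # pipeline is thin
    return threshold                       # leave it alone
```

The quality check runs first on purpose: if MQLs rarely turn into meetings, lowering the threshold to get more of them would only make the problem bigger.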

How do I keep lead scores from going stale?

Add score decay after 30-45 days of inactivity - reset to 50% of your MQL threshold, not a flat subtraction. This prevents ancient leads with inflated scores from staying "hot." Pairing decay with a data provider that refreshes on a weekly cycle keeps underlying contact data current so you're not scoring leads whose emails have already bounced.

Can I run lead scoring without expensive software?

Yes. HubSpot's free CRM supports basic list segmentation, and you can build a manual scoring model in any spreadsheet. The critical investment isn't the platform - it's clean data. A free verification tool lets small teams validate contacts before scoring, which matters more than a fancy predictive engine running on bad records.