MQLs Aren't Dead - Your Process Is Just Stuck in 2019

HubSpot's own research found that 95% of salespeople say they receive low-quality leads from marketing. On Reddit, the sentiment is even blunter - one r/AskMarketing thread calls MQLs "marketing theater." And yet nearly every B2B company still runs some version of the marketing qualified lead model.

Picture the quarterly review. Marketing slides show MQL volume up 40% year-over-year. Sales sits there, arms crossed, because pipeline is flat and half those "qualified" leads never responded to a single outreach. The CFO asks why the funnel chart looks like a megaphone pointed at a brick wall.

The problem isn't the concept. It's that most teams are running a 2019 scoring model against a 2026 buying process and wondering why nothing converts.

What You Need (Quick Version)

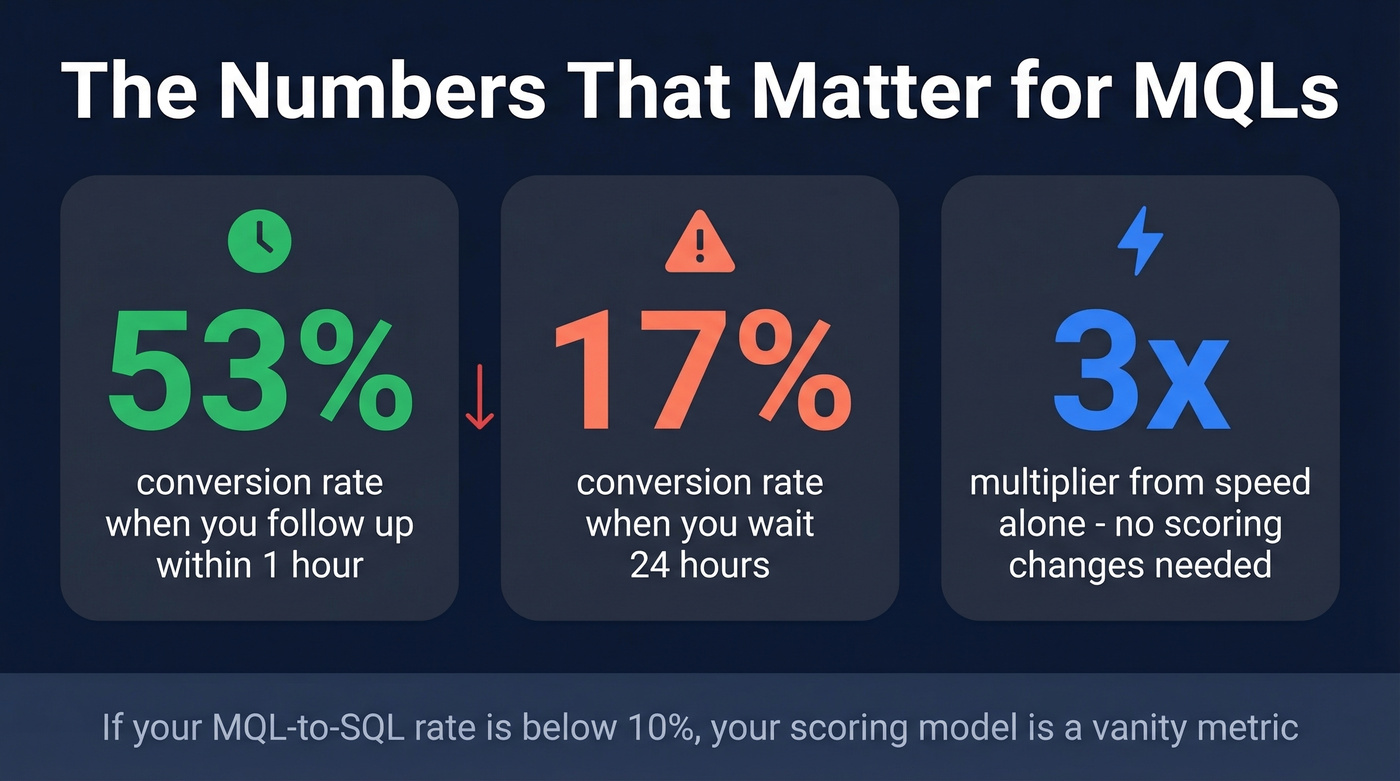

MQLs still work when you get three things right: a shared definition between sales and marketing, a scoring model that includes negative signals, and an SLA with time-bound handoff targets. If your MQL-to-SQL rate is below 10%, your scoring model is a vanity metric.

One stat to keep in your back pocket: following up within the first hour converts leads at 53%, while waiting 24 hours drops that to 17%. Speed alone is a 3x multiplier. The rest of this piece delivers the operational playbook - plus benchmarks by industry and channel so you know what "good" actually looks like.

What Does MQL Stand For?

MQL stands for Marketing Qualified Lead - a prospect who's shown enough engagement with your marketing and fits your ideal customer profile well enough to warrant sales attention. Not a customer. Not an opportunity. A signal that says "this person is worth a phone call."

The term traces back to SiriusDecisions, now part of Forrester, which built it into its Demand Waterfall framework in the mid-2000s. The framework gave marketing and sales a shared vocabulary: leads move from inquiry to MQL to SQL to opportunity to closed-won. It stuck because it was easy to explain in a board meeting and easy to report in a dashboard. If you've searched for the MQL acronym and landed on conflicting definitions, that's because every company adapts the framework to its own funnel - but the core meaning hasn't changed.

That simplicity is also its curse. Because marketing qualified leads are easy to count, they became the metric marketing optimized for - regardless of whether those leads ever turned into revenue. The concept is sound. The execution, at most companies, is broken.

MQL vs SQL vs SAL vs PQL

These acronyms get thrown around interchangeably, which creates exactly the kind of misalignment that kills handoffs.

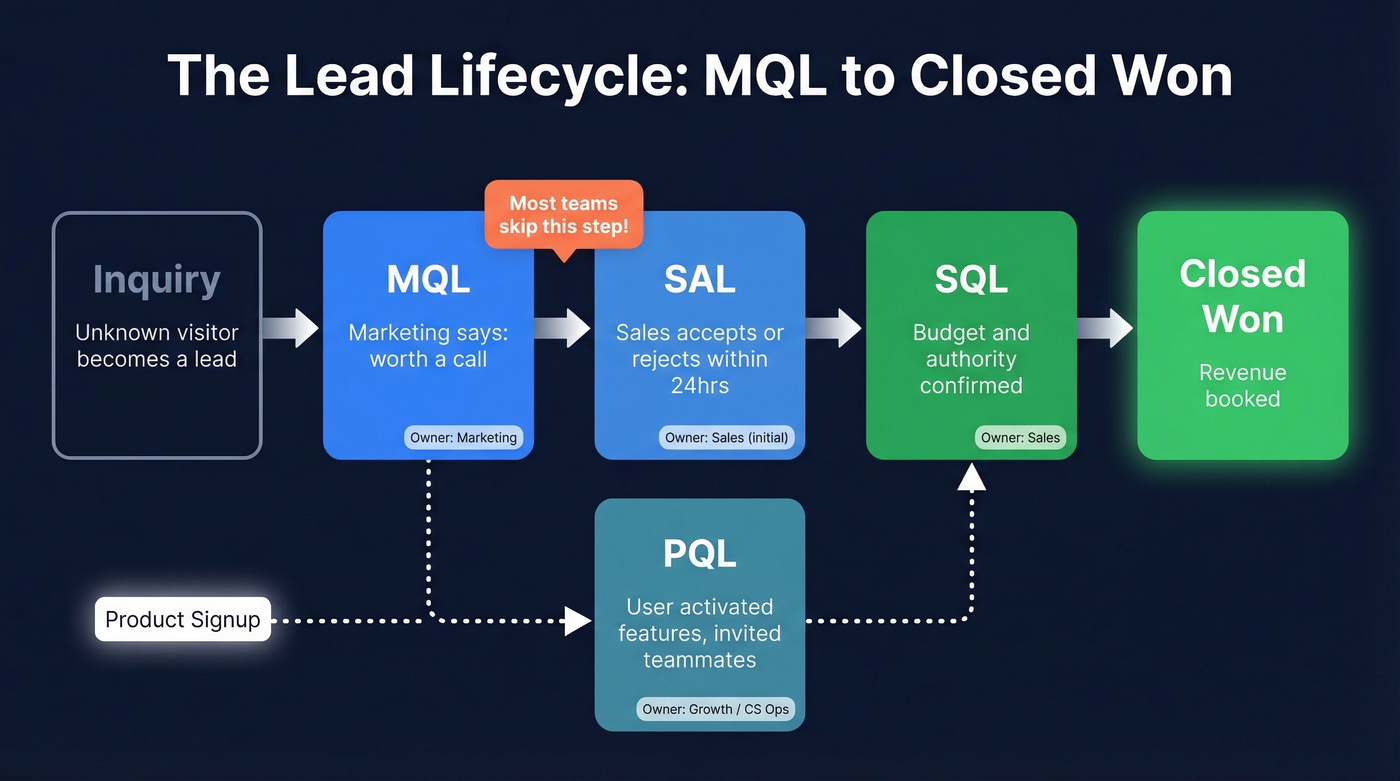

| Stage | Owner | Qualification Signal | Readiness | Example Behavior |
|---|---|---|---|---|
| MQL | Marketing | Engagement + fit | Low-Medium | 3+ assets, pricing page visit, ICP match |
| SAL | Sales (initial) | Sales accepted | Medium | Rep reviewed, confirmed fit, started outreach |
| SQL | Sales | Budget/authority confirmed | High | Meeting held, BANT validated, active deal |
| PQL | Growth / CS Ops | Product usage milestones | High | Signed up, activated feature, invited teammates |

The SAL stage is the one most teams skip - and it's the one that matters most. Without it, leads get tossed over the wall and sit in a sales queue untouched. SAL creates accountability: a rep has to actively accept or reject the lead within a defined window. If they don't, it reverts to marketing for nurturing.

PQLs deserve attention if you're running a product-led growth motion. A whitepaper download is an MQL. A user who signs up, invites three teammates, and activates a core feature is a PQL - and the intent signal is far stronger.

Here's the key distinction: marketing owns the MQL definition, and sales owns the SQL definition. When the two teams haven't agreed on those definitions together, you get the quarterly review disaster from the intro.

How to Define MQLs for Your Business

B2B vs B2C Criteria

In B2B, firmographics do the heavy lifting: company size, industry, tech stack, budget authority, job title, department. A director of marketing at a 200-person SaaS company downloading your ROI calculator is a completely different signal than a student doing the same thing.

In B2C, demographics and behavioral patterns matter more - purchase history, engagement frequency, cart abandonment, email click patterns, product browsing depth. A consumer who's visited your pricing page three times and abandoned a cart twice is showing real purchase intent.

The mistake is applying B2C scoring logic to B2B leads. Email opens and content downloads are weak signals in B2B. They tell you someone is researching, not buying. Firmographic fit is the foundation; behavior is the accelerant.

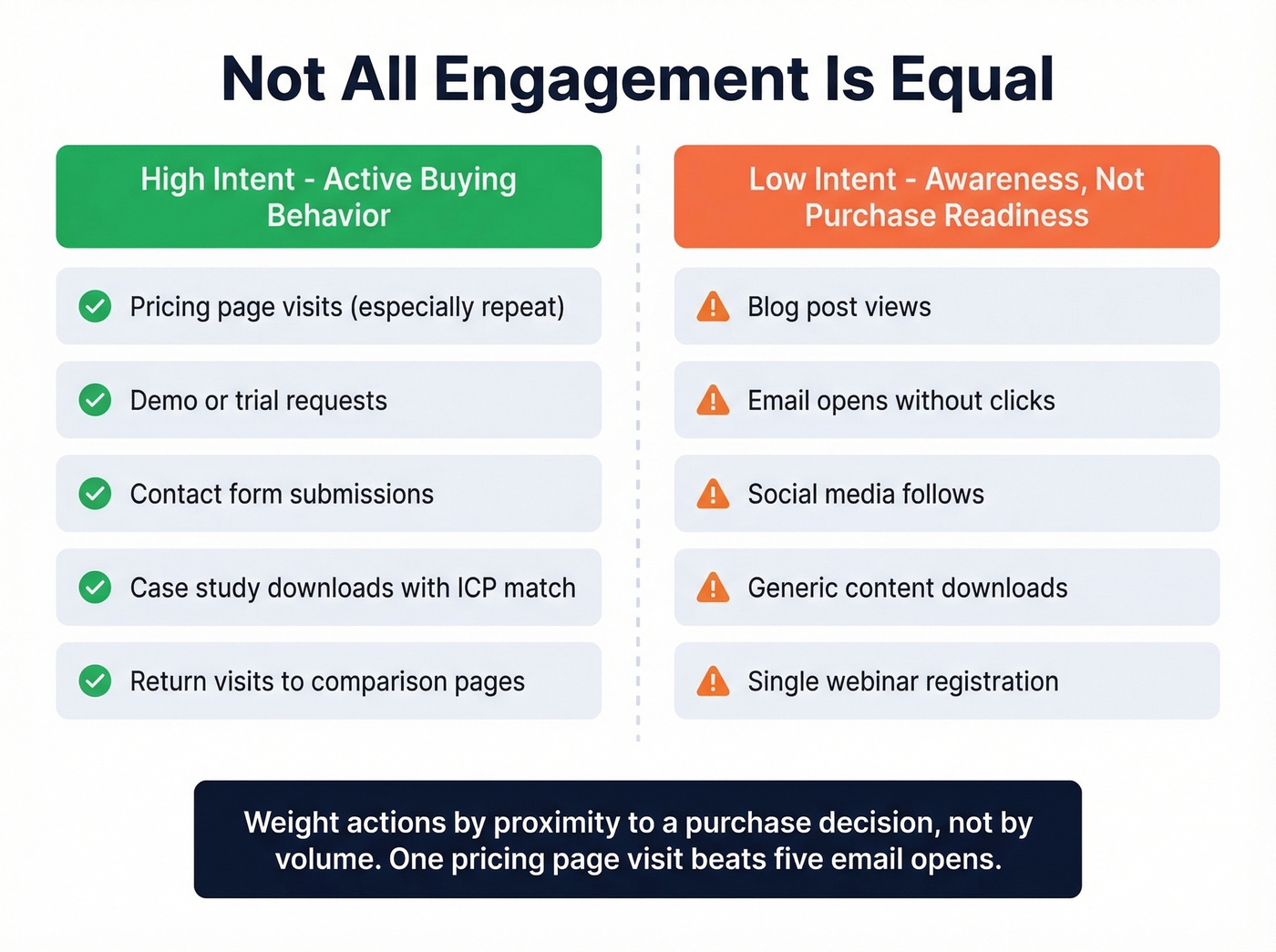

Behavioral Signals That Matter

High-intent signals - active buying behavior:

- Pricing page visits, especially repeat visits

- Demo or trial requests

- Contact form submissions

- Case study downloads with matching firmographics

- Return visits to product comparison pages

Low-intent signals - awareness, not purchase readiness:

- Blog post views

- Email opens without clicks

- Social media follows

- Generic content downloads

Someone who opened five emails but never clicked a link isn't more qualified than someone who visited your pricing page once. Weight actions by proximity to a purchase decision, not by volume.

Five Mistakes That Kill Your Pipeline

1. Setting the bar too low to inflate numbers. If downloading a single checklist makes someone qualified, you're optimizing for dashboard vanity, not pipeline. Reddit threads consistently flag this as the top problem - marketing sets the bar low to show growth, and sales stops trusting the leads entirely.

2. No feedback loop between sales and marketing. If sales rejects 70% of leads and marketing never hears about it, the definition stays broken forever. Monthly reviews of rejected leads with specific rejection reasons are non-negotiable.

3. Overweighting surface engagement. Three email opens and a blog visit shouldn't cross the threshold. Score them in low single digits, not double digits.

4. Not updating criteria as your ICP evolves. Your ideal customer profile from 2022 probably doesn't match your 2026 reality. Anteriad's research found many senior marketers haven't revisited their qualification definitions in over five years. If you've moved upmarket or shifted verticals, your definition needs to follow.

5. Sales and marketing disagreeing on what "qualified" means. This is the root cause of most dysfunction. If marketing thinks a content download plus firmographic fit equals qualified, but sales won't touch anything without a demo request, you've got a definitional gap that no amount of scoring can fix. Sit in a room together and write it down.


How to Build a Lead Scoring Model

Score vs Grade - You Need Both

Lead scoring measures intent and behavior - how engaged is this person? Lead grading measures fit - does this person match your ICP? Some platforms use letter grades A-F for fit, with adjustments like +1 letter for decision-maker titles and -1 for contacts outside your target region.

You need both because they fail independently. A VP of Marketing who's visited your pricing page three times is a high-score, high-grade lead. A college student who downloaded your ebook is high-score, low-grade. Without the grade, you're sending students to your sales team.
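
To make the "fail independently" point concrete, here is a minimal sketch of routing on both dimensions at once. Everything in it is an assumption for illustration: the five-letter grade scale, the default score threshold of 70, the minimum grade of B, and the function names are hypothetical and not taken from any specific platform.

```python
# Hypothetical sketch: grade scale, cutoffs, and names are illustrative assumptions.
GRADES = ["F", "D", "C", "B", "A"]  # ordered worst to best


def adjust_grade(grade: str, steps: int) -> str:
    """Move a letter grade up (+) or down (-) by whole letters, clamped to F..A."""
    idx = GRADES.index(grade) + steps
    return GRADES[max(0, min(idx, len(GRADES) - 1))]


def route(score: int, grade: str, score_threshold: int = 70, min_grade: str = "B") -> str:
    """Combine intent (score) and fit (grade) into a single routing decision."""
    fits = GRADES.index(grade) >= GRADES.index(min_grade)
    engaged = score >= score_threshold
    if fits and engaged:
        return "send to sales"
    if fits:
        return "nurture"  # right person, not enough intent yet
    if engaged:
        return "hold"     # plenty of activity, wrong profile
    return "ignore"


# A decision-maker title bumps a C up one letter to a B.
print(adjust_grade("C", 1))
# High score, low grade: the engaged student stays out of the sales queue.
print(route(85, "D"))
```

The useful property is that neither number can qualify a lead on its own: a high score with a low grade lands in "hold", and a high grade with a low score lands in "nurture".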

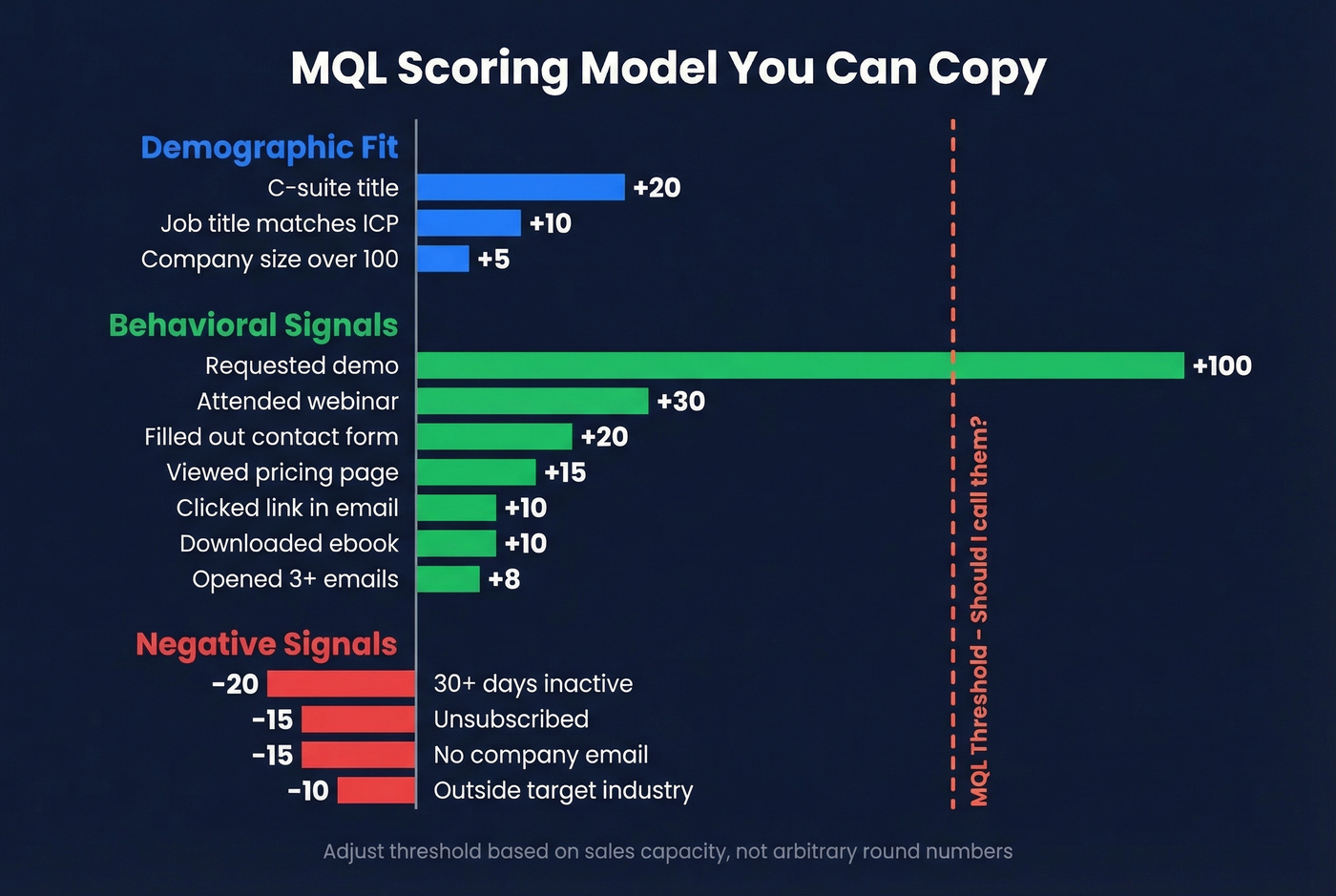

A Scoring Model You Can Copy

Here's a point-value model based on common implementations across HubSpot, Pardot, and Marketo environments. Adjust the specific numbers to your business, but the relative weighting holds across most B2B motions.

| Action / Attribute | Points | Category |
|---|---|---|
| Job title matches ICP | +10 | Demographic |
| Company size >100 | +5 | Demographic |
| C-suite title | +20 | Demographic |
| Opened 3+ emails | +8 | Behavioral |
| Clicked link in email | +10 | Behavioral |
| Viewed pricing page | +15 | Behavioral |
| Filled out contact form | +20 | Behavioral |
| Requested demo | +100 | Behavioral |
| Attended webinar | +30 | Behavioral |
| Downloaded ebook | +10 | Behavioral |
| Unsubscribed | -15 | Negative |
| No company email | -15 | Negative |
| Outside target industry | -10 | Negative |
| 30+ days inactive | -20 | Negative |
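
If you want to see the table as logic rather than as a spreadsheet, a rough sketch follows. The point values come straight from the table above; the signal key names and the `score_lead` function are hypothetical, and a real marketing automation platform would express the same rules through its own scoring UI rather than code like this.

```python
# Point values copied from the table above; key names are illustrative assumptions.
POINTS = {
    "job_title_matches_icp": 10,
    "company_size_over_100": 5,
    "c_suite_title": 20,
    "opened_3_plus_emails": 8,
    "clicked_email_link": 10,
    "viewed_pricing_page": 15,
    "filled_contact_form": 20,
    "requested_demo": 100,
    "attended_webinar": 30,
    "downloaded_ebook": 10,
    "unsubscribed": -15,
    "no_company_email": -15,
    "outside_target_industry": -10,
    "inactive_30_plus_days": -20,
}


def score_lead(signals: set[str]) -> int:
    """Sum the points for every signal observed on a lead."""
    unknown = signals - set(POINTS)
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    return sum(POINTS[s] for s in signals)


# ICP title + pricing page view + email click = 10 + 15 + 10
print(score_lead({"job_title_matches_icp", "viewed_pricing_page", "clicked_email_link"}))
# An ebook download from a personal email address nets out negative: 10 - 15
print(score_lead({"downloaded_ebook", "no_company_email"}))
```

Each signal counts once here, which is a simplification. If you want repeat pricing page visits to add more points, you would track counts instead of a set.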

Common thresholds range from 50 to 75, and many Marketo-style models use ~70 as the "sales alert" line. But here's the critical nuance: your threshold should be driven by sales capacity, not arbitrary round numbers. If your team can handle 50 marketing qualified leads per week, set the threshold so roughly 50 leads cross it each week. The question is "should I call them?" - not "is this an opportunity?"
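
One way to back into a capacity-driven threshold is to rank a typical week's scores and find the cutoff that lets through no more leads than reps can work. This is a sketch under assumptions: the function name and the sample scores are hypothetical, and in practice you would average over several weeks rather than a single one.

```python
def capacity_threshold(weekly_scores: list[int], weekly_capacity: int) -> int:
    """Return the lowest threshold that lets at most `weekly_capacity` leads through."""
    if weekly_capacity <= 0:
        raise ValueError("weekly_capacity must be positive")
    ranked = sorted(weekly_scores, reverse=True)
    if len(ranked) <= weekly_capacity:
        # Fewer leads than capacity: everyone can be worked.
        return min(ranked, default=0)
    cutoff = ranked[weekly_capacity - 1]
    # If the next lead ties with the cutoff, raise the bar by one point
    # so the tie does not push the team over capacity.
    if ranked[weekly_capacity] == cutoff:
        return cutoff + 1
    return cutoff


scores = [95, 88, 72, 72, 60, 41, 35]  # made-up sample week
print(capacity_threshold(scores, weekly_capacity=4))  # 72
print(capacity_threshold(scores, weekly_capacity=3))  # 73, because of the tie at 72
```

If the number this produces is far below the 50 to 75 range, that is a sign of thin lead flow or inflated capacity, not a reason to start calling low scorers.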

Notice the weighting gap between a demo request (+100) and webinar attendance (+30). That's intentional. A demo request is a hand-raise. A webinar is research. We've seen teams report a 13% jump in MQL-to-meeting rate after tightening title and seniority filters and reweighting activity thresholds along these lines.

Negative Scoring and Decay

A scoring model without negative signals is just an inflation machine. Scores only go up, which means every lead eventually qualifies if they stick around long enough. That's not qualification - that's time passing.

Build in decay: if a lead goes 30 days without engagement, subtract 20 points. Unsubscribes, personal email addresses, wrong geography - all penalties. The goal is a scoring model that breathes. Your qualified lead list should be a living snapshot of current intent, not a historical record of everyone who ever clicked something.
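
Here is a small sketch of the decay rule. The 30-day window and 20-point penalty come from the paragraph above. Two things are assumptions on our part: the penalty repeats for every additional full 30-day idle period, and the score is floored at zero. The function name is hypothetical.

```python
from datetime import date


def decayed_score(score: int, last_engaged: date, today: date,
                  window_days: int = 30, penalty: int = 20) -> int:
    """Subtract `penalty` for each full `window_days` of inactivity, never below 0."""
    idle_days = max((today - last_engaged).days, 0)
    periods = idle_days // window_days  # assumption: decay repeats every window
    return max(score - periods * penalty, 0)


last_touch = date(2026, 1, 1)
print(decayed_score(75, last_touch, date(2026, 1, 20)))  # 75: only 19 idle days
print(decayed_score(75, last_touch, date(2026, 1, 31)))  # 55: one full 30-day window
print(decayed_score(75, last_touch, date(2026, 3, 2)))   # 35: two full windows
```

With a threshold around 70, the lead in this example drops out of the qualified list after one quiet month, which is exactly the "living snapshot" behavior you want.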

MQL-to-SQL Conversion Benchmarks

Benchmarks by Industry

First Page Sage analyzed client data from 2019-2025 to produce widely cited industry benchmarks:

| Industry | MQL-to-SQL Rate |
|---|---|
| Business Insurance | 26% |
| HVAC | 26% |
| eCommerce | 23% |
| Heavy Equipment | 23% |
| Higher Education | 21% |
| Consumer Electronics | 21% |
| FinTech | 19% |
| Automotive | 18% |
| B2B SaaS | 13% |
| Healthcare | 13% |
| Construction | 12% |

Most B2B motions land between 10% and 20%. Above 20% means your scoring model is tight and your sales-marketing alignment is working. Below 10% means something is structurally broken - either the definition is too loose, the handoff is too slow, or sales doesn't trust the leads. Top-performing teams with advanced scoring and strong alignment can push toward 40%.

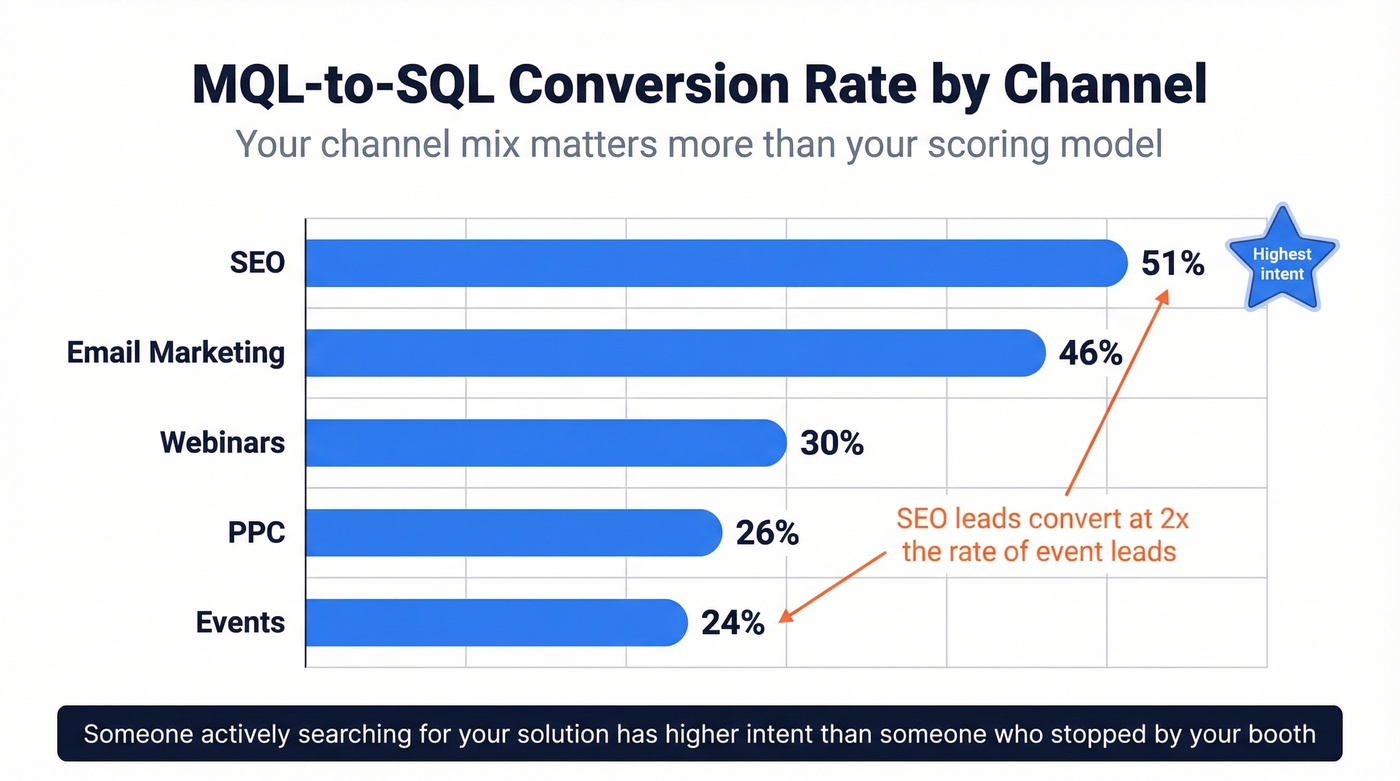

Benchmarks by Channel

Here's the data point most benchmark articles miss entirely. Your channel mix affects your overall conversion rate more than your scoring model does:

| Channel | MQL-to-SQL Rate |
|---|---|
| SEO | 51% |
| Email Marketing | 46% |
| Webinars | 30% |
| PPC | 26% |
| Events | 24% |

SEO leads convert at more than double the rate of event leads. Someone actively searching for your solution category has higher intent than someone who wandered past your booth. If your marketing mix is heavy on events and light on inbound, your overall conversion rate will look worse even if your scoring model is solid.
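
To see how much the mix alone moves the number, you can compute a blended rate as a weighted average of the channel rates. The rates below are the ones in the table above; the lead counts are invented for illustration, and the function name is hypothetical.

```python
# Channel rates from the table above, expressed as fractions.
CHANNEL_RATES = {
    "SEO": 0.51,
    "Email Marketing": 0.46,
    "Webinars": 0.30,
    "PPC": 0.26,
    "Events": 0.24,
}


def blended_rate(mql_counts: dict[str, int]) -> float:
    """Expected overall MQL-to-SQL rate for a given number of MQLs per channel."""
    total = sum(mql_counts.values())
    if total == 0:
        raise ValueError("need at least one MQL")
    expected_sqls = sum(CHANNEL_RATES[ch] * n for ch, n in mql_counts.items())
    return expected_sqls / total


# Made-up mixes: same 1,000 MQLs, same scoring model, different channels.
events_heavy = {"Events": 700, "SEO": 300}
inbound_heavy = {"Events": 300, "SEO": 700}
print(round(blended_rate(events_heavy), 3))   # 0.321
print(round(blended_rate(inbound_heavy), 3))  # 0.429
```

Under those benchmark rates, flipping the mix moves the blended rate by about eleven points without touching a single scoring rule.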

The Conversion Rate Formula

MQL-to-SQL conversion rate = (SQLs / MQLs) x 100

Worked example: 400 MQLs in Q1, of which 120 became SQLs, gives (120 / 400) x 100 = 30%.

Measure by cohort, not calendar month. An MQL created in March might not become an SQL until May. If you're dividing March SQLs by March MQLs, you're mixing cohorts and getting meaningless numbers.
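
A cohort calculation groups every lead by the month it became an MQL and asks whether that same lead ever became an SQL, regardless of when. A minimal sketch, assuming each lead is a pair of dates (the record shape, sample data, and function name are hypothetical):

```python
from collections import defaultdict
from datetime import date
from typing import Optional


def cohort_conversion(leads: list[tuple[date, Optional[date]]]) -> dict[tuple[int, int], float]:
    """Map each MQL cohort (year, month) to its MQL-to-SQL rate in percent.

    Each lead is (mql_date, sql_date), with sql_date None if it never converted.
    """
    mqls: dict[tuple[int, int], int] = defaultdict(int)
    sqls: dict[tuple[int, int], int] = defaultdict(int)
    for mql_date, sql_date in leads:
        cohort = (mql_date.year, mql_date.month)  # keyed on MQL date, not SQL date
        mqls[cohort] += 1
        if sql_date is not None:
            sqls[cohort] += 1
    return {cohort: 100 * sqls[cohort] / mqls[cohort] for cohort in mqls}


leads = [
    (date(2026, 3, 3), date(2026, 5, 12)),   # March MQL that converted in May
    (date(2026, 3, 10), None),
    (date(2026, 3, 20), date(2026, 3, 28)),
    (date(2026, 3, 25), None),
    (date(2026, 4, 2), date(2026, 4, 9)),
]
print(cohort_conversion(leads))  # {(2026, 3): 50.0, (2026, 4): 100.0}
```

The May conversion is credited to the March cohort, which is the whole point. Recent cohorts will look artificially low until they have had time to mature, so compare cohorts of similar age.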

The Handoff: SLA Framework

58% of organizations rate their sales-marketing alignment as "poor." Only 43% have SLAs in place, and just 11% have jointly managed SLAs. That's a staggering gap given what's at stake - Aberdeen Group research shows companies with strong alignment achieve 20% annual revenue growth, while poorly aligned organizations see a 4% decline.

Speed matters more than almost anything else in the handoff. We keep coming back to that stat: 53% conversion within the first hour, 17% after 24 hours.

Your SLA should specify:

- Response time: Sales accepts or rejects within 8 hours, or the lead reverts to marketing

- Advancement window: SAL must advance to SQL within 4 business days, or revert

- First outreach timing: Within 1 hour of acceptance for high-score leads

- Outreach channels and cadence: Email, phone, social - with defined attempt counts and spacing

- Disqualification criteria: What specifically makes a lead "not qualified" - documented, not vibes

- Recycling rules: Where rejected leads go, what nurture track they enter, when they can re-qualify
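
The two time-bound rules in that list are easy to automate. The sketch below uses the 8-hour response window and the 4-business-day advancement window from the list above. Treat the rest as assumptions: the 8 hours are counted as wall-clock time, business days are Monday to Friday with holidays ignored, and the function names and status strings are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Optional

RESPONSE_WINDOW = timedelta(hours=8)  # accept or reject within 8 hours
ADVANCEMENT_BUSINESS_DAYS = 4         # SAL must reach SQL within 4 business days


def add_business_days(start: datetime, days: int) -> datetime:
    """Advance by `days` weekdays (Mon-Fri). Holidays are ignored (assumption)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday-Friday
            remaining -= 1
    return current


def sla_status(handed_off: datetime, accepted: Optional[datetime],
               advanced: Optional[datetime], now: datetime) -> str:
    """Report where a lead stands against the handoff SLA."""
    if accepted is None:
        if now - handed_off > RESPONSE_WINDOW:
            return "revert: no response"
        return "pending response"
    if advanced is None:
        if now > add_business_days(accepted, ADVANCEMENT_BUSINESS_DAYS):
            return "revert: stalled SAL"
        return "SAL in progress"
    return "SQL"


handoff = datetime(2026, 3, 2, 9, 0)  # a Monday morning
print(sla_status(handoff, None, None, datetime(2026, 3, 2, 18, 0)))  # revert: no response
```

Run something like this on a schedule and route the "revert" statuses back into nurture automatically, so nobody has to remember to chase stale leads.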

The SLA isn't a document that lives in a shared drive. It's a weekly operational review. When sales rejects a lead, they log why. Marketing reviews those reasons monthly and adjusts scoring. That feedback loop is the entire point.

How to Improve Your Conversion Rate

Speed-to-lead is your #1 lever. Teams obsess over scoring model refinements when the real problem is that leads sit untouched for 48 hours. Automate the routing. Alert reps in Slack. Do whatever it takes to compress that window.

Behavioral scoring lifts conversion by up to 40%. If you're still scoring leads purely on firmographic fit without behavioral weighting, you're leaving massive conversion gains on the table. Implement the scoring model from the previous section, measure for 90 days, then refine based on which signals actually predict SQL conversion.

Tighten your ICP filters. Broad targeting feels productive because it generates volume. But volume without fit is noise. Fewer leads in, more SQLs out - that's the trade-off you want.

Build the feedback loop. Every rejected lead should come with a reason code. Every month, marketing and sales should review the top rejection reasons and adjust scoring accordingly. Without this loop, your model degrades as your market shifts.
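
The monthly review gets much easier if rejection reasons are logged as fixed codes rather than free text. A tiny sketch of the tally, where the reason codes and the function name are made up for illustration:

```python
from collections import Counter


def top_rejection_reasons(reason_codes: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Return the `n` most frequent rejection reason codes with their counts."""
    return Counter(reason_codes).most_common(n)


# Hypothetical reason codes logged by reps over a month.
logged = ["no_budget", "wrong_title", "unreachable",
          "wrong_title", "unreachable", "wrong_title"]
print(top_rejection_reasons(logged, n=2))  # [('wrong_title', 3), ('unreachable', 2)]
```

If "wrong_title" leads the list, that points at grading rules. If "unreachable" does, that points at data quality, not scoring.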

Fix your data quality. The most common reason leads die in the handoff isn't bad scoring - it's bad data. If sales can't reach the lead, the lead doesn't exist. Prospeo verifies emails at 98% accuracy and refreshes records every 7 days, so your lead list doesn't rot between quarterly reviews. Our enrichment returns 50+ data points per contact with a 92% API match rate, giving reps the context they need to have a real conversation instead of a cold guess.


Are MQLs Dead in 2026?

The consensus on r/AskMarketing is blunt: "MQLs are marketing theater." One practitioner put it this way - "90% of those leads have zero intent to buy." Over on r/b2bmarketing, fewer teams celebrate lead volume and more focus on pipeline velocity, SQL conversion, and revenue impact.

They're not wrong about the symptom. But they're wrong about the diagnosis.

The problem isn't the concept. It's that most teams haven't updated their definitions in five-plus years, their scoring models reward activity over intent, and their handoff processes have no accountability. Kill the metric and you still have the same underlying problem - marketing and sales disagreeing on what constitutes a qualified buyer.

Let's be honest: if your average deal size is under $10k, you probably don't need a sophisticated scoring model at all. Route demo requests straight to sales, nurture everything else with automation, and skip the scoring theater. The infrastructure pays for itself when deal sizes justify the operational overhead. Below that threshold, you're building a machine that costs more to maintain than the deals it produces.

Anteriad has proposed a useful reframe: the "Marketing Qualified Spectrum." Instead of one binary gate, they suggest a continuum - Marketing Qualified Contact, Marketing Qualified Trial, Marketing Qualified Demand, Marketing Qualified Account, and Marketing Qualified Lead. Each maps to a different level of commercial readiness. It's more nuanced, and nuance is exactly what the traditional model lacks.

The bigger structural shift is toward buying groups. Forrester's Buyers' Journey Survey found that an average of 13 people are involved in a purchasing decision. Qualifying a single lead when the buying committee has a decision-maker, champion, influencer, ratifier, and end-user is like qualifying one wheel and calling the car ready to drive.

Palo Alto Networks ran a "revenue process transformation" built around buying-group qualification. The results: win rates doubled in the pilot, opportunity progression increased 800% after scaling, and they estimated a 13% revenue increase if applied retroactively. That's not a marginal improvement - that's a different operating model entirely.

MQLs aren't dead. But the single-lead, single-score, throw-it-over-the-wall version absolutely should be. The teams winning in 2026 are qualifying buying groups, scoring accounts alongside individuals, and measuring marketing on pipeline contribution rather than lead volume.

MQL vs PQL - When Product Usage Wins

If you're running a product-led growth motion, PQLs are a stronger signal than marketing qualified leads will ever be. A PQL is a user who's signed up for your free tier or trial, hit core value milestones, and matches your ICP.

The concrete triggers look different for every product. For an email marketing tool: connected a domain, imported a list, and sent at least one campaign. For project management: created a project, invited a teammate, and moved tasks through a workflow. As one r/SaaS practitioner put it: "Your product is your funnel." A whitepaper download tells you someone is curious. A user who's invited three teammates and activated core features tells you someone is buying.
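
As a rough illustration, the email marketing example above translates into a simple milestone check. The three milestones come from that example; the event names, the `is_pql` function, and the idea of passing ICP fit as a boolean are assumptions for the sketch.

```python
# Milestones from the email marketing tool example; event names are illustrative.
EMAIL_TOOL_MILESTONES = frozenset({"connected_domain", "imported_list", "sent_campaign"})


def is_pql(completed_events: set[str], matches_icp: bool,
           milestones: frozenset[str] = EMAIL_TOOL_MILESTONES) -> bool:
    """A user is a PQL once they fit the ICP and have hit every core milestone."""
    return matches_icp and milestones <= completed_events


print(is_pql({"connected_domain", "imported_list", "sent_campaign"}, matches_icp=True))  # True
print(is_pql({"connected_domain", "imported_list"}, matches_icp=True))                   # False
```

Swap in your own product's milestones. The structure stays the same: fit plus demonstrated value, not fit plus content consumption.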

PQLs don't replace marketing qualified leads for every business. If you don't have a self-serve product, you don't have PQLs. But if you do have a free tier and you're still qualifying leads based on content downloads instead of product usage, you're leaving the strongest signal on the table.

FAQ

What does MQL stand for?

MQL stands for Marketing Qualified Lead - a prospect who meets predefined engagement and firmographic criteria set jointly by marketing and sales. It's a handoff signal indicating the lead is worth direct outreach, not a guarantee they'll buy.

What's a good MQL-to-SQL conversion rate?

Most B2B companies see 10-20%. Top-performing teams with tight scoring and strong sales-marketing alignment reach 30-40%. Anything below 10% signals a broken definition or scoring model - start by auditing your criteria against actual SQL outcomes.

What's the difference between lead scoring and lead grading?

Lead scoring measures behavior - email clicks, pricing page visits, demo requests. Lead grading measures fit - job title, company size, industry, budget authority. You need both: a high score with a low grade sends unqualified contacts to sales, while a high grade with a low score means the right person hasn't shown interest yet.

How do you keep MQL data accurate before handoff?

Validate emails and phone numbers before passing leads to sales. Bad contact data is the silent killer of otherwise well-scored leads - tools like Prospeo, ZoomInfo, or Clearbit can automate this step with real-time verification.

Are MQLs still relevant in 2026?

Yes, but the model needs updating. Many teams now supplement them with PQLs and buying-group qualification. The concept works - the problem is stale definitions, broken handoff processes, and scoring models that haven't changed in years. Fix the process, and the metric delivers.