Sales Predictive Analytics: Why Most Implementations Fail (and How to Fix Yours)

A RevOps lead we know spent $180,000 on a sales predictive analytics platform last year. Twelve months later, his VP of Sales still pulls the "real" forecast from a Google Sheet every Sunday night. The AI tool sits in a browser tab nobody opens.

This isn't an outlier - it's the norm.

Global IT spending is set to hit $6.08 trillion in 2026, up nearly 10% year-over-year. Companies are pouring money into AI-powered everything, including sales forecasting. Yet most revenue teams still rely on spreadsheets and gut calls for their pipeline predictions. MIT Sloan Management Review illustrated this gap with a composite case study of a medical device company ("NovaMed") where revenue declined three consecutive years and annual sales fell 20% short of target - not because it lacked data, but because its territory structures and quotas were built on outdated assumptions no algorithm could fix.

You don't need a better algorithm. You need better data. That thesis runs through everything below.

The Short Version

If you're pressed for time, here's the playbook:

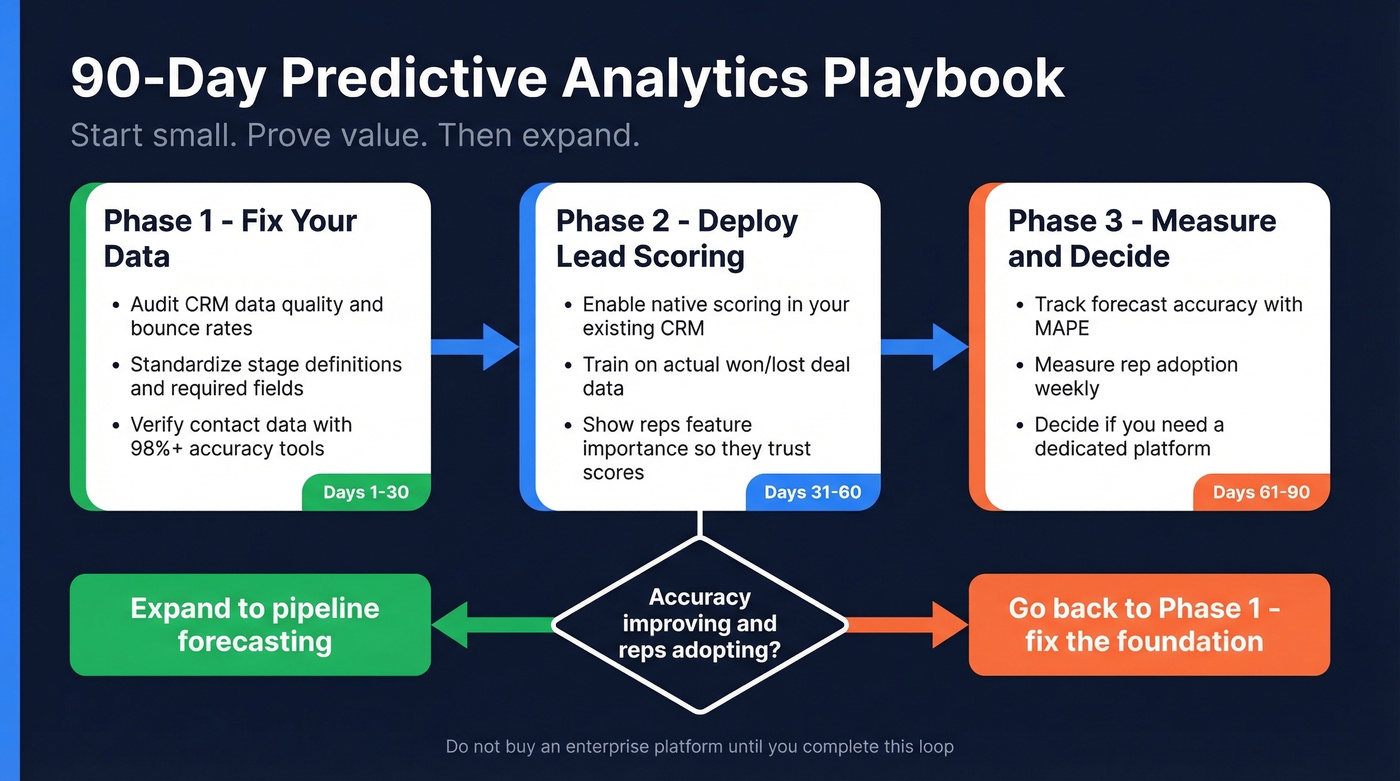

- Start with predictive lead scoring in your existing CRM. Salesforce Einstein and HubSpot both have native scoring. Don't buy a standalone platform yet.

- Run for 90 days before expanding. Measure accuracy, track rep adoption, then decide if you need a dedicated forecasting tool.

Here's the contrarian take most vendor guides won't give you: enterprise forecasting platforms aren't worth evaluating until your data layer is solid.

What Predictive Analytics in Sales Actually Means

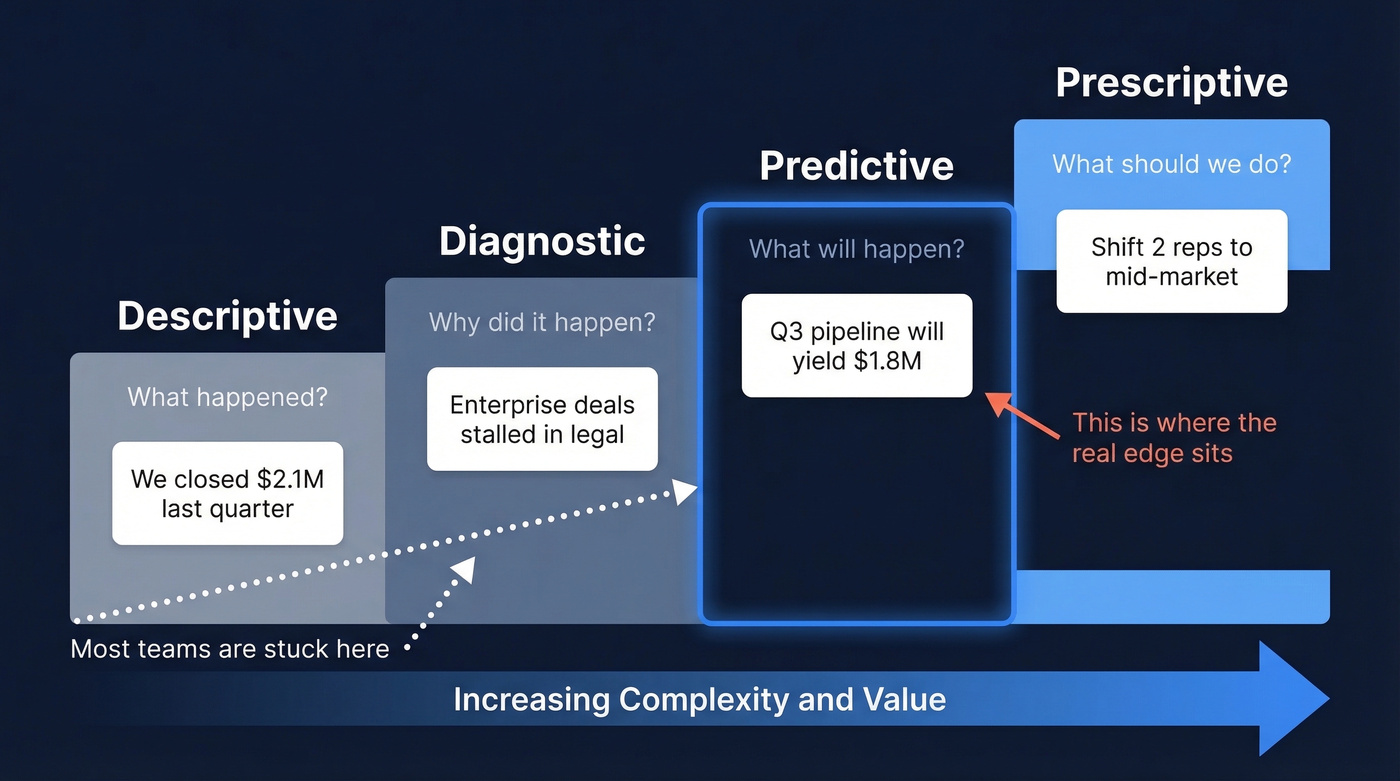

Sales predictive analytics uses historical data, statistical models, and machine learning to forecast future sales outcomes - which leads will convert, what Q3 revenue will look like, which accounts are about to churn. It's pattern recognition at scale, trained on outcomes your team has already generated.

| Type | What It Does | Sales Example |
|---|---|---|
| Descriptive | What happened? | "We closed $2.1M last quarter" |
| Diagnostic | Why did it happen? | "Enterprise deals stalled in legal" |
| Predictive | What will happen? | "Q3 pipeline will yield $1.8M" |
| Prescriptive | What should we do? | "Shift 2 reps to mid-market" |

Most teams live in descriptive and diagnostic. Predictive is where the real edge sits - but only if the inputs are clean.

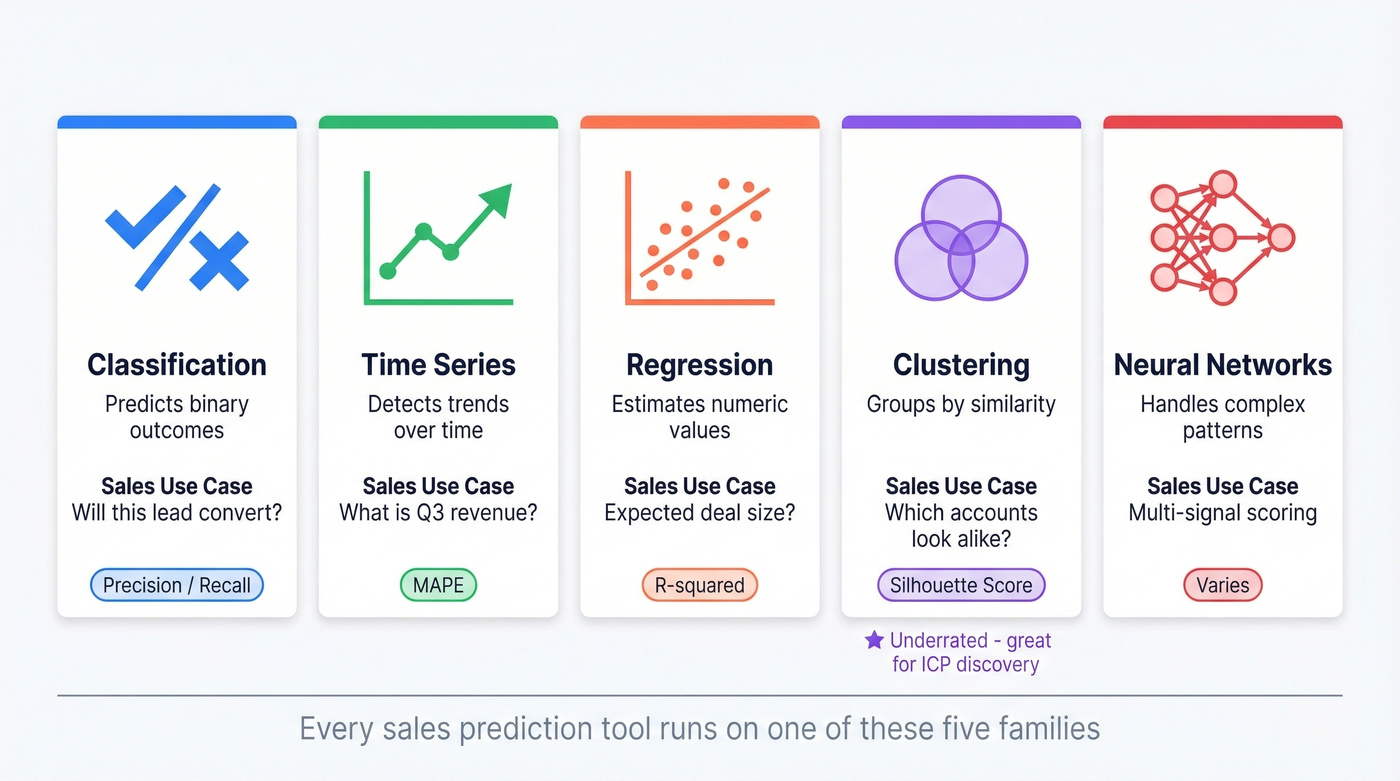

Five Model Families Behind Every Prediction

Every sales prediction tool, whether it's Salesforce Einstein or a custom Python model, runs on one of five model families. Understanding which one powers your tool helps you diagnose why it's wrong.

| Model Family | What It Predicts | Sales Use Case | Key Metric |
|---|---|---|---|
| Classification | Binary outcomes | Will this lead convert? | Precision / Recall |
| Time Series | Trends over time | What's Q3 revenue? | MAPE |
| Regression | Numeric values | Expected deal size? | R-squared / MSE |
| Clustering | Group similarity | Which accounts look alike? | Silhouette score |
| Neural Networks | Complex patterns | Multi-signal scoring | Varies |

Classification is the workhorse behind lead scoring - it looks at hundreds of signals from past won/lost deals and assigns a probability. Time series models power revenue forecasting by detecting seasonality in your historical bookings. Regression estimates continuous values like deal size or days-to-close.

Clustering is underrated. It groups accounts by behavioral similarity, which is powerful for territory planning and ICP refinement. We've seen teams uncover entirely new segments just by running clustering on their existing customer base - segments that manual analysis missed for years.
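To make the clustering idea concrete, here's a minimal k-means sketch in plain Python. The choice of k-means, the two features (product usage and support load, both scaled to 0-1), and the sample accounts are our assumptions for illustration. A real project would likely lean on a library and many more features.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(points, k, iterations=20):
    """Tiny k-means. Starts from the first k points so runs are repeatable."""
    centroids = list(points[:k])
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each account joins its nearest centroid.
        assignments = [
            min(range(k), key=lambda c: squared_distance(point, centroids[c]))
            for point in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = tuple(sum(dim) / len(members) for dim in zip(*members))
    return assignments, centroids


# Hypothetical accounts: (product usage, support load), both scaled to 0-1.
accounts = [(0.90, 0.10), (0.20, 0.80), (0.85, 0.15),
            (0.25, 0.90), (0.95, 0.20), (0.15, 0.85)]
segments, centers = kmeans(accounts, k=2)
```

Here `segments` labels each account with a cluster index and `centers` gives the "typical" account in each group. Scaling features before clustering matters, because otherwise the largest-valued field dominates the distance.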

Neural networks handle the most complex scenarios but require more data and are harder to explain to your VP, which matters more than most data teams admit. Conversation intelligence data from tools like Gong - call transcripts, objection patterns, sentiment shifts - is an emerging signal layer that feeds these models beyond traditional CRM fields.

MAPE (Mean Absolute Percentage Error) is the standard metric for forecasting accuracy: the average gap between forecast and actual, expressed as a percentage of the actual. Precision and recall matter for lead scoring: high precision means fewer false positives wasting rep time, high recall means you aren't missing good leads.
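If you want to sanity-check a vendor's accuracy claims against your own numbers, these metrics are simple enough to compute by hand. The sketch below uses only the standard library. The function names and all sample figures are ours, invented for illustration.

```python
def mape(actuals, forecasts):
    """Mean Absolute Percentage Error, in percent. Skips periods with zero actuals."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("need at least one non-zero actual")
    return 100.0 * sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


def precision_recall(y_true, y_pred):
    """Precision and recall for binary labels (1 = converted / flagged as likely)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Illustrative numbers only, not from any real pipeline.
quarterly_actuals = [2.0, 2.5, 1.6, 2.0]      # $M booked
quarterly_forecasts = [1.8, 2.5, 2.0, 2.2]    # $M forecast
forecast_mape = mape(quarterly_actuals, quarterly_forecasts)

converted = [1, 0, 1, 1, 0, 0, 1, 0]   # what actually happened
flagged = [1, 1, 1, 0, 0, 0, 1, 0]     # what the model predicted
precision, recall = precision_recall(converted, flagged)
```

In this toy data the model flags four leads and three convert (precision 0.75), while one of the four real converters goes unflagged (recall 0.75).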

High-Impact Use Cases

Forecasting and Pipeline Prediction

This is the use case everyone buys for and the one most likely to disappoint.

Pipeline prediction models analyze deal velocity, stage progression, and historical conversion rates to estimate future revenue. They work when your CRM data is disciplined - consistent stage definitions, accurate close dates, complete activity logging. They fall apart when reps skip stages or sandbag deals. If you want forecasting to work, treat data cleanup as part of the implementation, not a "nice to have." Forecasting models don't fix broken pipeline hygiene. They amplify it.
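The simplest version of this idea is a weighted pipeline: multiply each open deal by the historical win rate of deals that reached its stage. The sketch below is a deliberately naive baseline under our own assumptions (the field names `stages_reached`, `won`, `amount`, and `stage` are hypothetical), not how any particular forecasting product works.

```python
from collections import defaultdict


def stage_win_rates(closed_deals):
    """Win rate among closed deals that passed through each stage."""
    reached = defaultdict(int)
    won = defaultdict(int)
    for deal in closed_deals:
        for stage in deal["stages_reached"]:
            reached[stage] += 1
            if deal["won"]:
                won[stage] += 1
    return {stage: won[stage] / reached[stage] for stage in reached}


def weighted_pipeline(open_deals, win_rates):
    """Expected revenue: deal amount times the win rate of its current stage."""
    return sum(d["amount"] * win_rates.get(d["stage"], 0.0) for d in open_deals)


# Hypothetical closed-deal history.
history = [
    {"stages_reached": ["discovery", "proposal", "negotiation"], "won": True},
    {"stages_reached": ["discovery", "proposal", "negotiation"], "won": False},
    {"stages_reached": ["discovery", "proposal"], "won": False},
    {"stages_reached": ["discovery"], "won": False},
]
open_deals = [
    {"amount": 40_000, "stage": "discovery"},
    {"amount": 30_000, "stage": "proposal"},
    {"amount": 20_000, "stage": "negotiation"},
]
rates = stage_win_rates(history)
expected_revenue = weighted_pipeline(open_deals, rates)
```

Notice what happens when a rep skips a stage: that deal never counts toward the skipped stage's win rate, so every estimate built on that stage drifts. That's the hygiene problem in miniature.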

Lead Scoring and Prioritization

This is the highest-ROI starting point for most teams. Predictive lead scoring replaces manual point systems with ML models trained on your actual conversion data. Instead of "downloaded a whitepaper = +10 points," the model identifies that leads from the financial industry with 200+ employees who visit the pricing page twice convert at 3x the average rate.

| Dimension | Rules-Based | Predictive |
|---|---|---|
| How it works | Manual point assignments | ML trained on outcomes |
| Signals used | 5-15 (manually chosen) | Hundreds (auto-selected) |
| Maintenance | Breaks when behavior shifts | Auto-retrains on new data |
| Explainability | Transparent by design | Requires feature importance UI |
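A statement like "this segment converts at 3x the average" is a conversion lift, and you can check one by hand before trusting a model's version of it. The sketch below is a rough illustration: the lead fields and sample data are hypothetical, and a real predictive model weighs many signals jointly rather than testing one segment at a time.

```python
def conversion_lift(leads, in_segment):
    """Segment conversion rate divided by the overall conversion rate."""
    overall = sum(lead["converted"] for lead in leads) / len(leads)
    segment = [lead for lead in leads if in_segment(lead)]
    if not segment or overall == 0:
        return None  # not enough data to say anything
    segment_rate = sum(lead["converted"] for lead in segment) / len(segment)
    return segment_rate / overall


def make_lead(industry, employees, pricing_visits, converted):
    return {"industry": industry, "employees": employees,
            "pricing_visits": pricing_visits, "converted": converted}


# Hypothetical history: 20 leads, 5 conversions overall.
leads = (
    [make_lead("financial", 500, 2, True)] * 3
    + [make_lead("financial", 250, 3, False)]
    + [make_lead("retail", 50, 0, True)] * 2
    + [make_lead("retail", 50, 1, False)] * 14
)


def is_target_segment(lead):
    return (lead["industry"] == "financial"
            and lead["employees"] >= 200
            and lead["pricing_visits"] >= 2)


lift = conversion_lift(leads, is_target_segment)
```

With only four leads in the segment, a 3x lift is suggestive at best. Small segments are exactly where both manual rules and models overreach.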

Dynamics 365's implementation is a solid reference. It assigns a 0-100 score with A-D grades, scores new leads within minutes, and refreshes every 24 hours. The UI shows top positive and negative reasons - a tooltip might read: "64% of leads from the financial industry are qualified within 3 days."

The black-box concern is real, though. On r/Business_Ideas, "deployment hell" and explainability consistently rank as top concerns with predictive tools. Modern platforms address this with feature importance displays showing which signals drove each score. If your tool can't explain its scores, pick a different tool.

Churn, Cross-Sell, and Territory Optimization

Churn prediction is significantly more reliable than acquisition prediction. The reason is straightforward: churn models run on first-party product usage data, support tickets, and contract timelines. Signals you control. Acquisition models depend on third-party contact data and intent signals that are noisier by nature.

Clustering models shine for cross-sell and upsell, grouping existing customers by product usage and firmographic similarity to surface expansion-ready accounts. Territory optimization - the NovaMed problem - uses predictive models to identify overcrowded territories and misaligned quotas. High-impact, but it requires organizational buy-in most sales orgs simply don't have. (If you need examples to operationalize this, start with cross-sell and upsell.)

Every predictive model in this article fails the same way: garbage in, garbage out. Prospeo's 5-step verification delivers 98% email accuracy with a 7-day refresh cycle - not the 6-week industry average. Clean inputs mean your lead scoring and forecasting models actually work.

Fix your data layer before you buy another AI tool.

Accuracy Benchmarks Worth Knowing

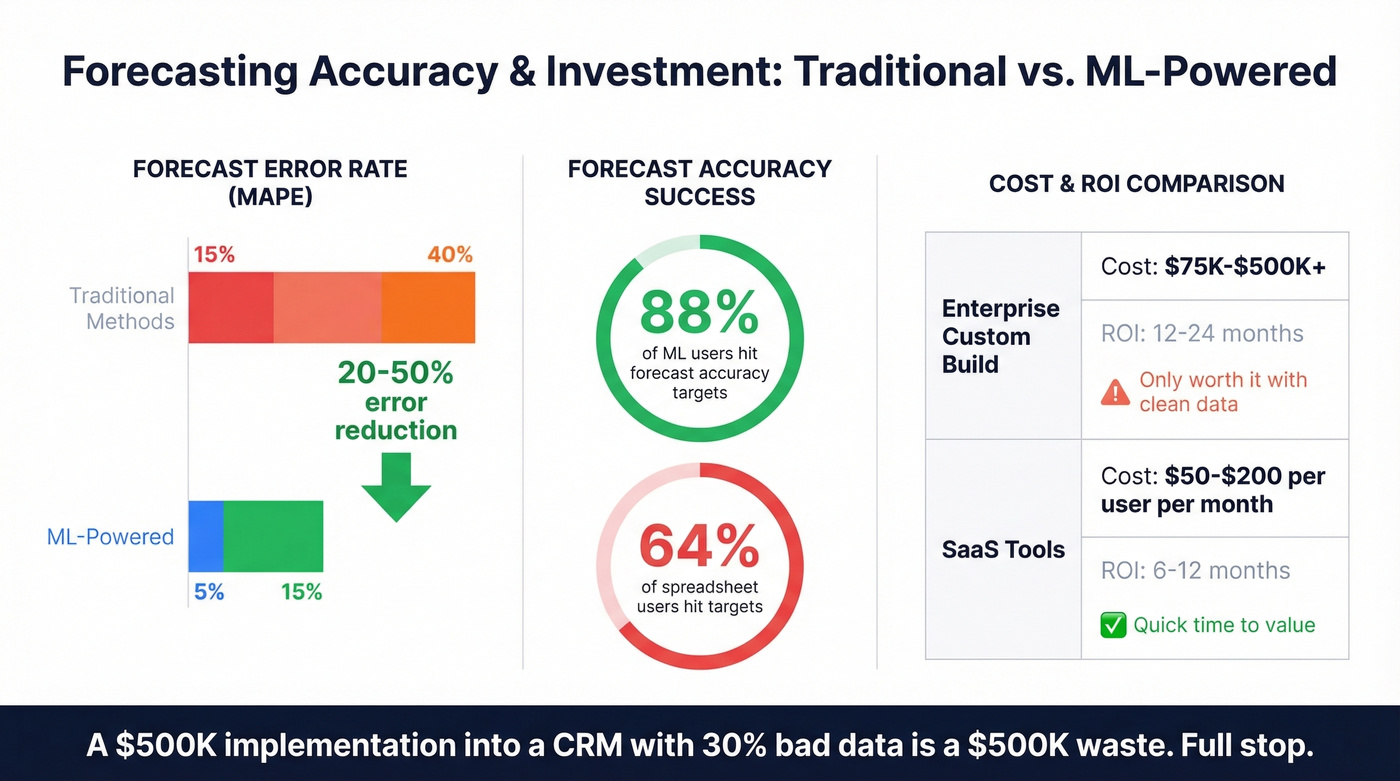

ML-powered forecasting reduces errors by 20-50% compared to traditional methods. Traditional approaches typically produce 15-40% MAPE; ML models bring that down to 5-15%. About 88% of businesses using machine learning hit their forecast accuracy targets, compared to 64% relying on spreadsheets.
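To translate those percentages into dollars, a quick back-of-the-envelope calculation helps. The sketch below treats MAPE as a rough "typical miss" on a single quarter, which is a simplification. The $2M quarter and the 20% and 12% MAPE values are our own illustrative picks from within the ranges above.

```python
def relative_error_reduction(baseline_mape, new_mape):
    """Fraction by which forecast error shrinks, e.g. 0.4 means 40% less error."""
    return (baseline_mape - new_mape) / baseline_mape


def typical_miss(revenue, mape_pct):
    """Rough dollar size of the average forecast miss at a given MAPE."""
    return revenue * mape_pct / 100.0


# Illustrative scenario: a $2M quarter, MAPE improving from 20% to 12%.
quarter_revenue = 2_000_000
reduction = relative_error_reduction(20.0, 12.0)
miss_before = typical_miss(quarter_revenue, 20.0)
miss_after = typical_miss(quarter_revenue, 12.0)
```

In this scenario a 40% error reduction shrinks the typical miss from $400K to $240K, which is a number a CFO can weigh against the cost of the tool.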

Implementation costs vary wildly. Enterprise custom builds run $75K-$500K+ with 12-24 month ROI timelines. SaaS tools range from $50-$200/user/month and deliver faster returns, typically 6-12 months. But a $500K implementation into a CRM with 30% bad data is a $500K waste. Full stop.

Why Most Implementations Fail

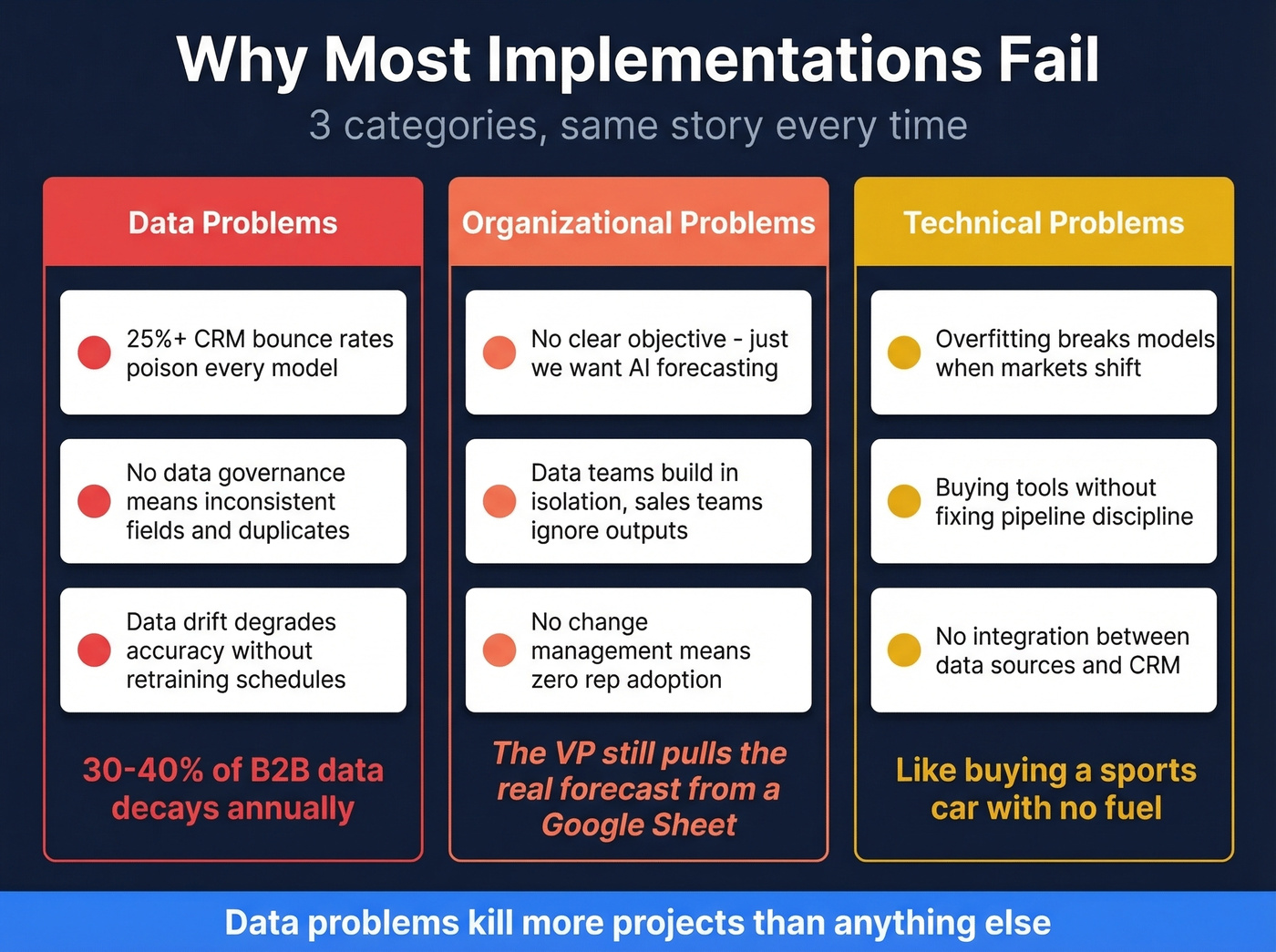

We've watched the same failure patterns repeat across nearly every stalled implementation. They fall into three categories.

Data problems kill more projects than anything else. Poor data quality tops the list - if your CRM contacts bounce at 25%+, every model built on that data is compromised from day one. No data governance means inconsistent stage definitions, missing fields, and duplicate records that make models unreliable. And data drift degrades accuracy over time as buyer behavior changes; without retraining schedules, models go stale within months.
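Before buying anything, it's worth measuring these problems in your own CRM export. Here's a minimal audit sketch. It assumes each contact is a dict with `email`, `title`, `company`, and a `bounced` flag, all hypothetical field names that you'd map to your own schema.

```python
def audit_contacts(contacts, required_fields=("email", "title", "company")):
    """Return bounce, duplicate, and incomplete-record rates for a contact list."""
    total = len(contacts)
    if total == 0:
        raise ValueError("no contacts to audit")

    bounced = sum(1 for c in contacts if c.get("bounced"))

    # Duplicates: the same email after trimming whitespace and lowercasing.
    seen, duplicates = set(), 0
    for c in contacts:
        key = (c.get("email") or "").strip().lower()
        if not key:
            continue
        if key in seen:
            duplicates += 1
        else:
            seen.add(key)

    incomplete = sum(
        1 for c in contacts if any(not c.get(field) for field in required_fields)
    )
    return {
        "bounce_rate": bounced / total,
        "duplicate_rate": duplicates / total,
        "incomplete_rate": incomplete / total,
    }


# Hypothetical export rows.
contacts = [
    {"email": "a@example.com", "title": "CFO", "company": "Acme", "bounced": False},
    {"email": "A@Example.com ", "title": "CFO", "company": "Acme", "bounced": False},
    {"email": "b@example.com", "title": "", "company": "Beta", "bounced": True},
    {"email": "c@example.com", "title": "VP Sales", "company": "Gamma", "bounced": False},
]
report = audit_contacts(contacts)
```

A report like this gives you a baseline to re-measure after cleanup, which is more useful than a one-time gut check.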

Organizational problems are harder to fix. "We want AI forecasting" isn't an objective - "reduce Q3 forecast variance from plus-or-minus 30% to plus-or-minus 10%" is. Without cross-team collaboration, data teams build models in isolation and sales teams ignore outputs they didn't help design. Without change management, reps won't trust a score they don't understand.
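An objective like "plus-or-minus 10%" only works if everyone computes variance the same way. One common convention, and the one we assume here, is the gap between actual and forecast as a percentage of the forecast. The figures are illustrative.

```python
def forecast_variance_pct(forecast, actual):
    """Signed variance: negative means the team landed below forecast."""
    return 100.0 * (actual - forecast) / forecast


def within_target(forecast, actual, target_pct):
    """True if the miss, in either direction, is inside the agreed band."""
    return abs(forecast_variance_pct(forecast, actual)) <= target_pct


# Illustrative quarter: forecast $1.8M, actual $1.53M.
variance = forecast_variance_pct(1_800_000, 1_530_000)
meets_old_band = within_target(1_800_000, 1_530_000, target_pct=30)
meets_new_band = within_target(1_800_000, 1_530_000, target_pct=10)
```

A 15% miss passes the old plus-or-minus 30% band and fails the new plus-or-minus 10% one, which is the kind of unambiguous pass/fail a project objective needs.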

Technical problems round it out. Overfit models, trained too tightly on historical data, break when market conditions shift. And buying Gong Forecast or Clari without fixing pipeline discipline is like buying a sports car with no fuel.

A 2024 research paper on AI-powered sales forecasting validated what practitioners already know: data quality, system integration, and ongoing model refinement remain the persistent barriers. Nothing has changed in two years.

Let's be honest about what predictive analytics is good at. It works beautifully as decision support - highlighting risk bands, surfacing anomalies, prioritizing rep attention. It fails when treated as autonomous decision-making. Garbage in, garbage out isn't a cliche here. It's the literal failure mode.

The Data Quality Foundation

Your lead scoring model rates a prospect at 85. A rep spends 45 minutes researching and crafting a personalized email. The email bounces. The prospect left the company eight months ago.

Multiply that by hundreds of leads per quarter, and you start to see why industry estimates of 30-40% annual B2B data decay aren't just a nuisance - they're the reason your predictive models underperform. Bounced emails, wrong phone numbers, and outdated job titles don't just waste rep time. They poison every downstream model. A lead scoring algorithm trained on engagement data that includes bounced emails is learning from noise.
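To put rough numbers on that, the sketch below compounds an annual decay rate over time and converts stale records into wasted rep hours. It assumes decay happens at a constant rate through the year, which is a simplification. The 300 leads per quarter is a hypothetical volume, while the 45 minutes per lead comes from the scenario above.

```python
def fraction_still_valid(annual_decay, months):
    """Share of records still accurate after `months`, assuming constant decay."""
    return (1.0 - annual_decay) ** (months / 12.0)


def wasted_rep_hours(leads_worked, minutes_per_lead, stale_fraction):
    """Hours spent researching and writing to contacts that are no longer valid."""
    return leads_worked * (minutes_per_lead / 60.0) * stale_fraction


# 30% annual decay, records last verified 8 months ago.
valid_after_8_months = fraction_still_valid(0.30, 8)
stale_share = 1.0 - valid_after_8_months

# Hypothetical volume: 300 leads per quarter at 45 minutes each.
hours_lost = wasted_rep_hours(300, 45, stale_share)
```

Even at the low end of the decay range, roughly a fifth of records verified eight months ago have gone stale, and the rep hours spent on them add up fast.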

Snyk saw this firsthand. With 50 AEs prospecting 4-6 hours per week, their bounce rate sat at 35-40%. After implementing Prospeo for contact verification and enrichment, bounces dropped under 5%, and AE-sourced pipeline jumped 180% - generating 200+ new opportunities per month. The predictive models didn't change. The data feeding them did. A 7-day data refresh cycle (versus the 6-week industry average) meant their CRM stayed current, which is critical when models retrain on recent engagement signals.

Here's our hot take: if your average deal size is under $25K, skip the six-figure forecasting platform entirely. Spend $5K on data enrichment and verification, use your CRM's native scoring, and you'll outperform 80% of teams running expensive AI tools on dirty data. (If you're shopping, start with the best data enrichment tools and a dedicated email verifier.)

You read it above - churn models beat acquisition models because they run on first-party data you control. But acquisition predictions improve dramatically when your contact data is accurate. Prospeo enriches leads with 50+ data points at a 92% match rate, giving your models the clean signal they need.

Stop feeding your predictive models stale, unverified contacts.

A 90-Day Implementation Playbook

Phase 1: Audit and Define (Days 1-30)

Start by measuring your data quality. Run your contact database through a verification tool to identify how much is actually usable. Then pick a single pilot use case - we recommend lead scoring because it has the fastest feedback loop and doesn't require forecasting infrastructure. If you're on Salesforce Enterprise+ or HubSpot Sales Hub Pro, start with native predictive features before evaluating standalone platforms.

Phase 2: Build and Integrate (Days 31-60)

Configure your model using 12+ months of CRM history with deal outcomes. Key inputs: historical data, engagement signals, firmographic attributes, and clean contact records. Split historical data into training and validation sets to avoid overfitting. Integrate scoring outputs directly into your CRM so reps see scores in their workflow - not in a separate dashboard they'll never check. This is where most teams lose adoption, and it's entirely preventable.
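One way to do the training and validation split is chronologically: train on older deals and validate on the most recent ones, so the test mimics predicting the future. That's our suggestion rather than the only valid approach, and the deal fields and 25% holdout below are illustrative assumptions.

```python
from datetime import date


def chronological_split(deals, validation_fraction=0.25):
    """Train on the oldest deals, validate on the most recent ones."""
    if not 0 < validation_fraction < 1:
        raise ValueError("validation_fraction must be between 0 and 1")
    ordered = sorted(deals, key=lambda d: d["closed_on"])
    cut = int(len(ordered) * (1 - validation_fraction))
    return ordered[:cut], ordered[cut:]


# Hypothetical closed deals, deliberately out of order.
deals = [
    {"id": i, "closed_on": date(2025, month, 15), "won": won}
    for i, (month, won) in enumerate(
        [(5, True), (1, False), (8, True), (3, True),
         (7, False), (2, True), (6, False), (4, True)]
    )
]
train, validation = chronological_split(deals)
```

A random split can leak information from the future into training and make a model look better than it will perform live. A chronological split is a stricter, more honest test.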

Phase 3: Measure and Iterate (Days 61-90)

Track three things: model accuracy (are high-scored leads actually converting?), rep adoption (are reps using scores to prioritize?), and pipeline impact (has velocity or conversion rate improved?). Iterate based on what you learn. Then - and only then - consider expanding to pipeline forecasting or churn prediction.
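The first two checks can start as a few lines of analysis on exported data. In the sketch below, the score bands, the 80-point "top band" threshold, and the sample leads are all our assumptions. Pipeline impact needs before-and-after velocity data and isn't covered here.

```python
def conversion_by_band(leads, bands=((0, 50), (50, 80), (80, 101))):
    """Conversion rate per score band; each band is [low, high) on a 0-100 score."""
    rates = {}
    for low, high in bands:
        in_band = [lead for lead in leads if low <= lead["score"] < high]
        label = f"{low}-{high - 1}"
        rates[label] = (
            sum(lead["converted"] for lead in in_band) / len(in_band)
            if in_band else None
        )
    return rates


def adoption_rate(first_touch_scores, top_band_floor=80):
    """Share of reps' first touches that went to top-band leads."""
    if not first_touch_scores:
        return 0.0
    hits = sum(1 for score in first_touch_scores if score >= top_band_floor)
    return hits / len(first_touch_scores)


# Hypothetical 90-day results.
scored_leads = [
    {"score": s, "converted": c}
    for s, c in [(90, True), (85, True), (82, False), (60, True),
                 (55, False), (70, False), (30, False), (10, False)]
]
band_rates = conversion_by_band(scored_leads)
rep_adoption = adoption_rate([90, 85, 60, 82, 30])
```

If conversion rates don't climb as you move up the bands, the model isn't separating good leads from bad ones, and no amount of rep adoption will fix that.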

Tools Worth Evaluating in 2026

The market splits into two layers: analytics platforms that generate predictions, and data platforms that feed them clean inputs.

Salesforce Einstein is the default for teams already deep in the Salesforce ecosystem. Native integration, typically $150+/user/month depending on edition and add-ons. Skip it if you're on Professional or below - the ROI math doesn't work at that tier.

Gong Forecast combines conversation intelligence with pipeline forecasting, giving it a unique signal layer most competitors lack. Expect $30K-$100K+/year depending on team size. Best for teams that want deal inspection, not just top-line numbers.

Clari is the revenue platform focused on pipeline visibility. Enterprise pricing, typically $30K+/year. Strong when your problem is pipeline inspection across multiple teams and regions.

HubSpot Forecasting comes with Sales Hub Pro at around $90-$100/user/month. Good enough for teams under 50 reps who don't need a standalone tool - and honestly, most teams under 50 reps don't.

Monday CRM at roughly $12-$15/seat/month is the lightweight option. Limited predictive features but surprisingly capable for small teams running simple pipelines. For teams with fewer than 10 reps and straightforward deal stages, it's worth a look before spending more.

Scoop Analytics offers spreadsheet-native forecasting starting at $299/month. If your team lives in Google Sheets and refuses to leave, this is the bridge.

Every analytics tool on this list assumes your CRM data is clean. None of them will tell you it isn't. If you're rebuilding your stack, start with a tighter RevOps tech stack and basic CRM automation before adding more AI.

FAQ

How much does predictive analytics for sales cost?

SaaS tools range from $12-$200/user/month; enterprise platforms run $30K-$100K+/year. Custom implementations cost $75K-$500K+. Start with your CRM's native scoring features - they're included in most Pro-tier plans and require zero data science headcount.

Can small sales teams benefit from predictive models?

Yes. CRM-native features in HubSpot and Salesforce require no data science team. Start with lead scoring, which delivers the highest ROI for teams under 20 reps. Pair it with verified contact data to avoid training models on noise.

What data do I need to get started?

At minimum: 12+ months of CRM history with deal outcomes, contact engagement data, and verified contact records. Without clean emails and current job titles, your model trains on decay.

How long before I see measurable results?

Most teams see measurable improvement in lead prioritization within 60-90 days of deploying lead scoring. Full ROI on SaaS tools typically takes 6-12 months. Enterprise custom builds take 12-24 months - another reason to start with native CRM features.

Does data quality really matter that much?

It's the number one reason implementations fail. If 30% of your CRM emails bounce, your lead scoring model is blind to nearly a third of your pipeline. Weekly data refresh cycles keep models accurate - no algorithm compensates for records that are simply wrong.

Fix the data first. Then the models follow. Every team we've seen succeed with sales predictive analytics started not with a better tool, but with a cleaner database.