AI BDR: What They Cost, Why They Fail, and How to Make Them Work

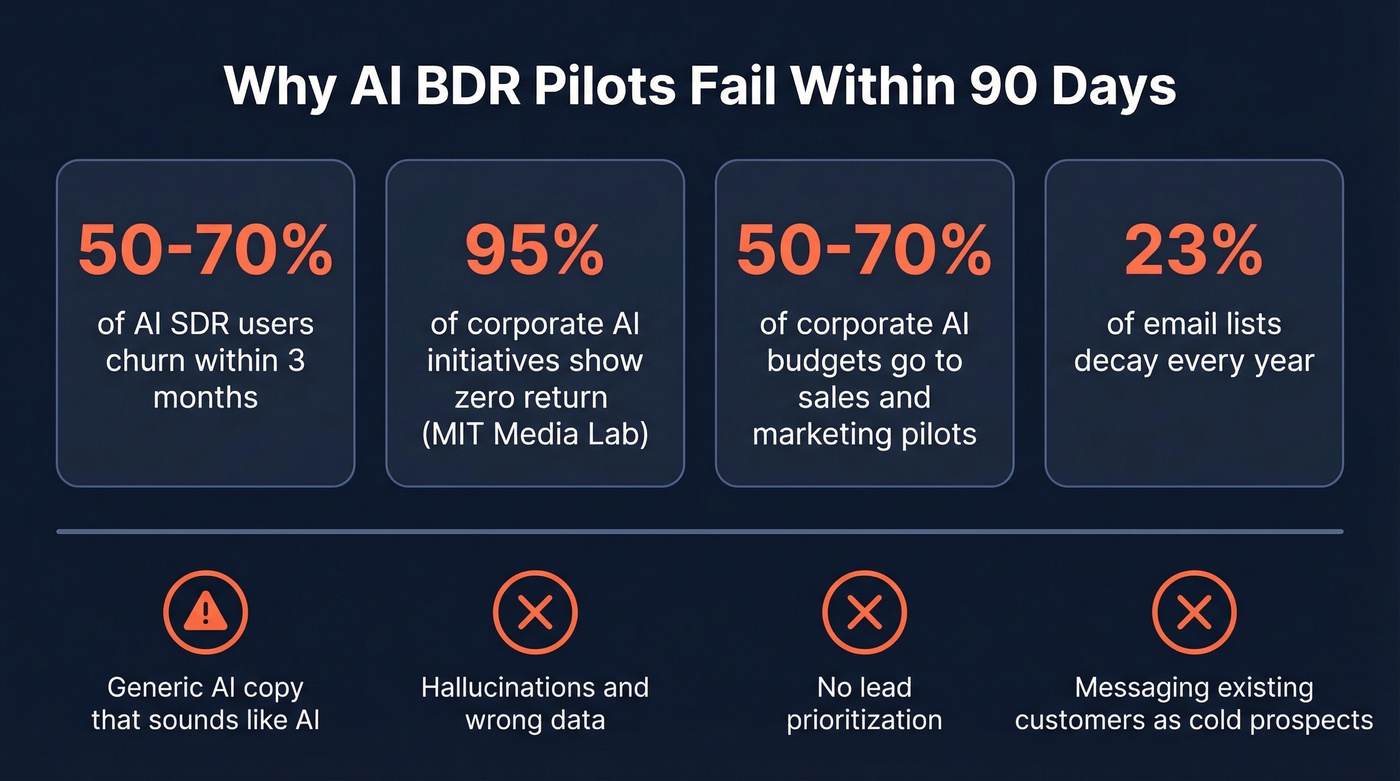

Your CEO just forwarded you an article about the AI BDR revolution. The subject line says "thoughts?" - which really means "why aren't we doing this yet?" Here's what no vendor page will tell you: 50-70% of AI SDR users churn within three months. The tools work, but only under specific conditions, and most teams don't meet them.

What You Need (Quick Version)

Want a turnkey AI BDR? [AiSDR](#aisdr---best-value-dedicated-option) at $900/mo or [Reply.io](#replyio-jason-ai---ai-on-a-proven-sequencer) from $259/mo deliver the best value-to-capability ratio right now. Both let you run a real pilot without a five-figure annual commitment.

Not sure yet? Read the failure data below before signing anything.

What Is an AI BDR?

It's software that automates the core tasks of a business development rep: prospecting, writing and sending cold outreach, following up, qualifying inbound leads, and booking meetings. The "AI" part means the tool uses language models to personalize messages, research prospects, and make routing decisions - not just run static sequences.

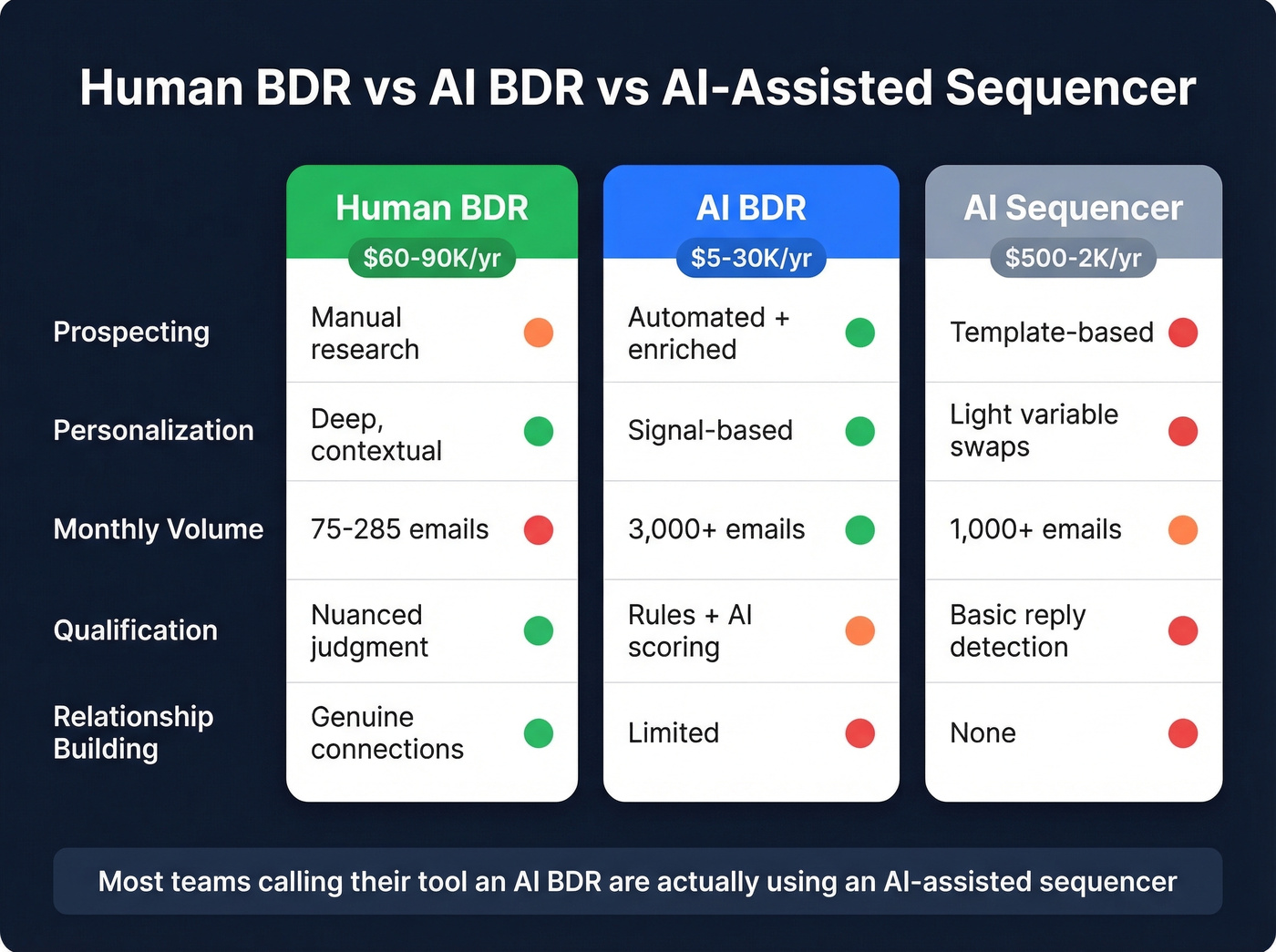

Here's the thing: most tools calling themselves AI-powered BDRs are sequencers with a ChatGPT wrapper. They write slightly varied email copy and send it on a schedule. A real automated business development agent handles research, adapts messaging based on prospect signals, qualifies responses, and routes hot leads to humans. The gap between those two categories is enormous, and vendors have every incentive to blur it.

| | Human BDR | AI BDR | AI-Assisted Sequencer |
|---|---|---|---|
| Prospecting | Manual research | Automated + enriched | Template-based |
| Personalization | Deep, contextual | Signal-based | Light variable swaps |
| Volume | 75-285 emails/mo | 3,000+ emails/mo | 1,000+ emails/mo |
| Qualification | Nuanced judgment | Rules + AI scoring | Basic reply detection |
| Cost | $60-90K/yr total comp | $5-30K/yr | $500-2K/yr |

The outbound world has split into three camps: teams that blast volume, teams that spend two hours researching every prospect, and teams that have abandoned cold outreach entirely for intent-based warm leads. AI-driven prospecting tools are an attempt to combine the volume of camp one with the quality of camp two. When they work, they deliver. When they don't, they burn your domain and your pipeline.

| Can Do | Can't Do |
|---|---|
| Research prospects at scale | Navigate procurement committees |
| Personalize based on signals | Build genuine relationships |
| Follow up consistently | Read political dynamics |
| Qualify inbound 24/7 | Handle complex discovery |
| Send 3,000+ emails/month | Know the difference between a lead and a customer |

Why Most Pilots Fail Within 90 Days

That 50-70% churn figure isn't a rounding error - it's a category-level problem. And the stakes are high: 50-70% of corporate AI budgets flow to sales and marketing pilots, making this the most expensive category to get wrong. Across all industries, 95% of corporate AI initiatives show zero return, per an MIT Media Lab study that reviewed 300+ initiatives.

The failure modes are predictable and well-documented on r/gtmengineering:

- Generic AI copy that reads like AI copy. The "I noticed your company recently..." opener is now the cold email equivalent of "Dear Sir/Madam."

- Hallucinations and embarrassing errors. AI confidently referencing a prospect's role at a company they left two years ago, or congratulating someone on a funding round that never happened.

- Weak lead prioritization. The AI treats every contact equally, burning through your best prospects with the same generic sequence it sends to everyone else.

- Context failures that damage relationships. One Bain Capital VC documented a case where AI outbound accidentally messaged existing customers as cold prospects. That's not a bug - it's a fundamental limitation of tools that don't understand relationships.

The common thread? Bad data in, bad outreach out. Most teams blame the AI when the real problem is the contact data feeding it.

The Deliverability Problem Nobody Warns About

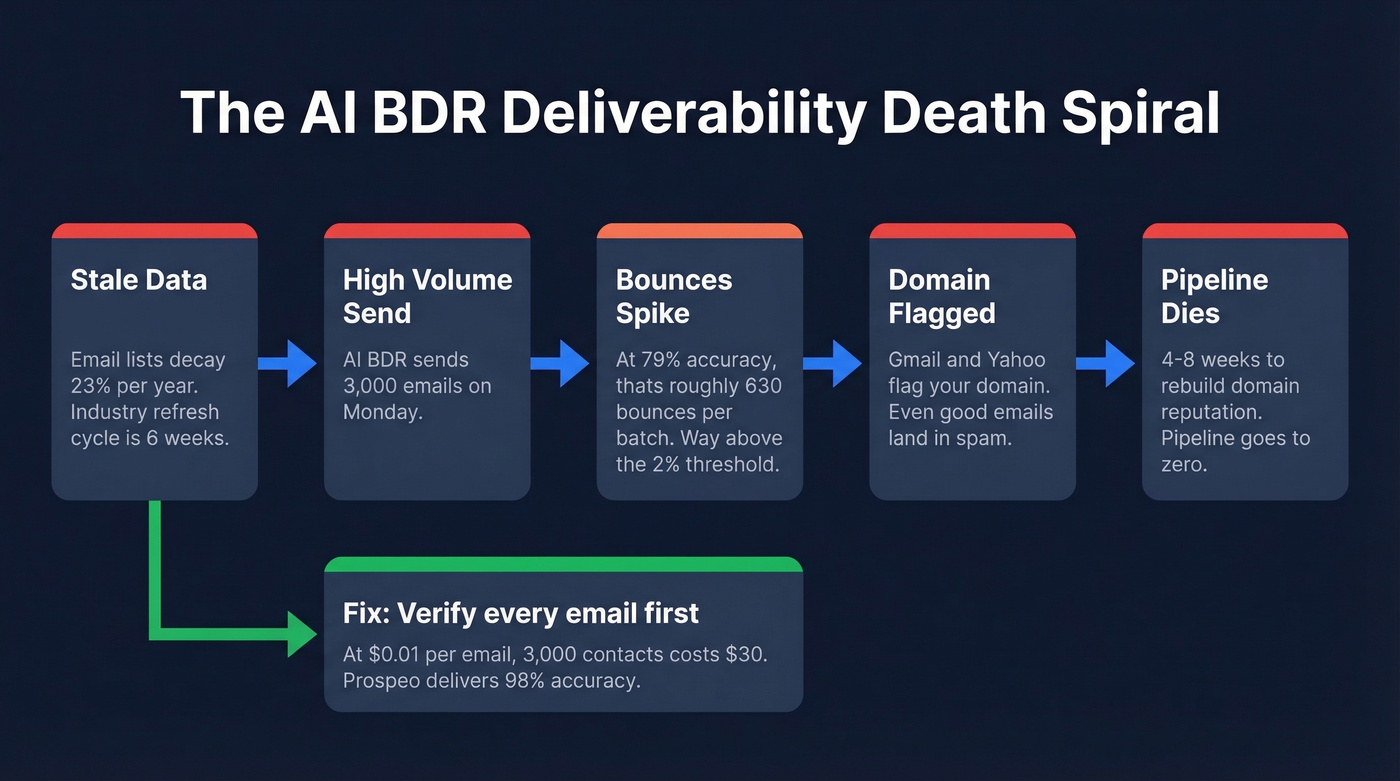

Your shiny new automated rep sends 3,000 emails on Monday. By Wednesday, your domain is flagged. What happened?

Email lists decay at 23% per year. People change jobs, companies rebrand, domains expire. Industry-average data refresh runs about six weeks, which means a meaningful chunk of contacts are already stale by the time you hit "send." Bounce rates above 2% or spam complaint rates above 0.3% can severely damage your domain reputation, and six weeks of 23% annual decay is roughly 3% of a list on its own. Once you're flagged, even your good emails land in spam.

On r/b2bmarketing, users report that even with "solid email infrastructure," tools like Apollo combined with Smartlead kept landing in spam. Apollo's email accuracy runs around 79% - at high-volume outbound, that's roughly 630 bounces per 3,000-email batch. That's a domain killer.
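To make that arithmetic explicit, here is a minimal Python sketch comparing expected bounces against the 2% bounce ceiling. It assumes, pessimistically, that every address outside the quoted accuracy figure hard-bounces; in practice some inaccurate addresses are silently accepted by catch-all servers, so treat the result as a worst case rather than a prediction.

```python
import math

def bounce_budget(batch_size: int, max_bounce_rate: float = 0.02) -> int:
    """Largest number of bounces a batch can absorb without exceeding the ceiling."""
    return math.floor(batch_size * max_bounce_rate)

def expected_bounces(batch_size: int, email_accuracy: float) -> int:
    """Worst-case bounces, assuming every inaccurate address hard-bounces."""
    return round(batch_size * (1 - email_accuracy))

batch = 3_000
budget = bounce_budget(batch)  # 2% of 3,000 = 60 bounces
for accuracy in (0.79, 0.98):
    bounces = expected_bounces(batch, accuracy)
    status = "OK" if bounces <= budget else "over budget"
    print(f"{accuracy:.0%} accurate -> ~{bounces} bounces vs. budget of {budget}: {status}")
```

Note that even 98% accuracy lands exactly on the 2% line, which leaves no margin for list decay between verification and send.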

Gmail and Yahoo's bulk sender requirements made this worse. You need SPF/DKIM/DMARC authentication, one-click unsubscribe, and spam complaint rates under 0.3%. Automated outbound tools that blast volume without verified data trip every one of these thresholds.
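It can help to treat these requirements as a pre-flight checklist before any automated sequence goes live. The Python sketch below assumes you have already looked up each item yourself (in your DNS records and your sending tool's settings) and recorded it in a hypothetical `SenderSetup` record; it does not query DNS or any mailbox provider.

```python
from dataclasses import dataclass

@dataclass
class SenderSetup:
    """Hypothetical summary of a sending domain's configuration and recent stats."""
    spf: bool
    dkim: bool
    dmarc: bool
    one_click_unsubscribe: bool
    spam_complaint_rate: float  # e.g. 0.002 means 0.2%

def bulk_sender_gaps(setup: SenderSetup, max_complaint_rate: float = 0.003) -> list[str]:
    """Return the requirements this setup misses; an empty list means all checks pass."""
    gaps = []
    if not setup.spf:
        gaps.append("SPF record missing")
    if not setup.dkim:
        gaps.append("DKIM signing not configured")
    if not setup.dmarc:
        gaps.append("DMARC policy missing")
    if not setup.one_click_unsubscribe:
        gaps.append("one-click unsubscribe missing")
    if setup.spam_complaint_rate >= max_complaint_rate:
        gaps.append(
            f"spam complaint rate {setup.spam_complaint_rate:.2%} "
            f"is not under {max_complaint_rate:.1%}"
        )
    return gaps

print(bulk_sender_gaps(SenderSetup(True, True, False, True, 0.004)))
```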

This is where your data provider matters more than your AI provider. We've seen this play out repeatedly with our own users: Snyk's team of 50 AEs went from 35-40% bounce rates to under 5% after switching to Prospeo for email verification, and Stack Optimize maintains 94%+ deliverability with zero domain flags across all their clients. At ~$0.01 per email, verifying 3,000 contacts costs about $30 - about the same as one month of Instantly. Rebuilding a burned domain takes 4-8 weeks.
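The verify-then-send step can be as simple as a filter in front of your sequencer. This Python sketch assumes your verification provider returns a status string per contact; the status names used here (`valid`, `invalid`, `catch_all`) are hypothetical placeholders, not any specific vendor's API. It passes only `valid` addresses through and prices verification at the ~$0.01 per email quoted above.

```python
from collections import Counter

COST_PER_VERIFICATION = 0.01  # USD, the approximate per-email price quoted above

def presend_gate(contacts: list[dict]) -> tuple[list[dict], Counter, float]:
    """Keep only contacts whose (hypothetical) verification status is 'valid'.

    Returns the sendable contacts, a tally of every status, and the verification cost.
    """
    statuses = Counter(c["status"] for c in contacts)
    sendable = [c for c in contacts if c["status"] == "valid"]
    cost = round(len(contacts) * COST_PER_VERIFICATION, 2)
    return sendable, statuses, cost

contacts = [
    {"email": "ana@example.com", "status": "valid"},
    {"email": "old.role@example.org", "status": "invalid"},
    {"email": "info@example.net", "status": "catch_all"},
]
sendable, statuses, cost = presend_gate(contacts)
print(len(sendable), dict(statuses), cost)
```

Holding back catch-all and unknown results is a conservative choice; you may decide to route them into a separate, smaller batch instead of dropping them outright.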

50-70% of AI BDR pilots fail because of bad data, not bad AI. Prospeo refreshes 300M+ profiles every 7 days - not every 6 weeks like the providers your AI agent is pulling from. At $0.01 per verified email, protecting your domain costs less than one day of your AI BDR subscription.

Fix the data layer before you automate the outreach layer.

Snyk's 50 AEs dropped bounce rates from 35-40% to under 5% with Prospeo. Stack Optimize runs 94%+ deliverability across every client with zero domain flags. Your AI BDR can send 3,000 emails a month - but only if every address is real. Prospeo's 5-step verification and 98% accuracy make sure they are.

Stop feeding your AI BDR dead emails. Start with verified data.

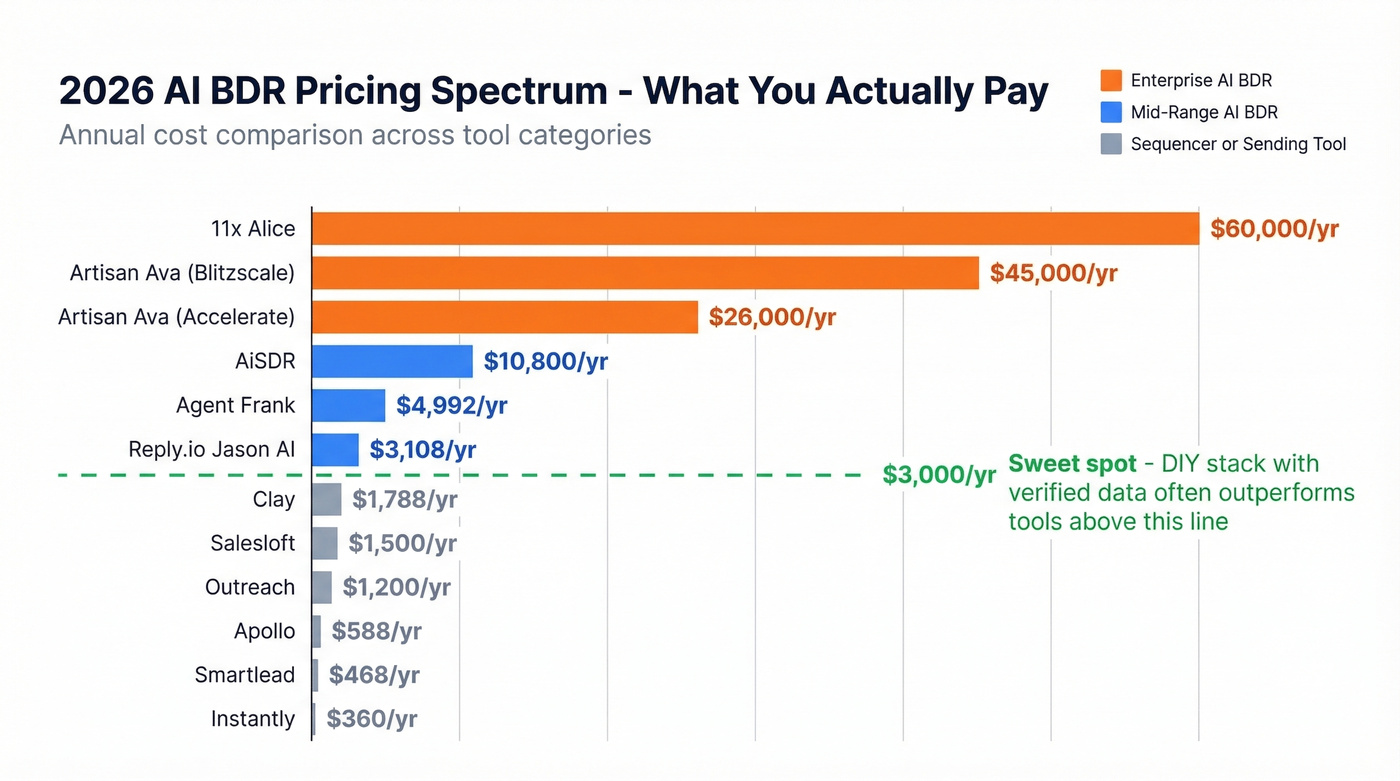

Real Pricing in 2026

Pricing transparency in this category is terrible. Most vendors hide behind "request a demo" buttons. Here are real numbers we've gathered from procurement data, G2, Reddit buyer reports, and vendor pages.

| Tool | Type | Starting Price |
|---|---|---|
| Artisan (Ava) | Full AI BDR | ~$9,000-$57,000+/yr |
| 11x (Alice) | Full AI BDR | ~$5,000+/mo |
| AiSDR | Full AI BDR | $900/mo |
| Agent Frank | Full AI BDR | $416/mo |
| Reply.io (Jason AI) | AI sales engagement | From $259/mo |
| Clay | Build-your-own | $149/mo |
| Apollo | Sequencer + data | $49/mo (free tier available) |
| Instantly | Sending infra | $30/mo |
| Smartlead | Sending infra | $39/mo |
| Outreach | Sales engagement | ~$100/user/mo |
| Salesloft | Sales engagement | ~$125/user/mo |

Other tools like Lindy.ai and Regie.ai exist in this space but don't publish clear pricing.

Let's be honest about what these numbers tell you. The pricing gap between sequencers ($30-$50/mo) and dedicated AI BDR platforms ($416-$5,000+/mo) is the clearest signal of what you're actually buying. Artisan at a median contract of $26,250/year with no free trial is betting you won't comparison-shop. 11x refusing to publish pricing is a red flag, not a feature - if a vendor won't tell you what it costs, they're optimizing for their sales team's commission, not your buying experience.

Most teams would get better results from a $250/mo DIY stack with verified data than from a $2,000/mo agent running on stale contacts.

Tools Worth Evaluating

AiSDR - Best Value Dedicated Option

Use this if you want a turnkey solution without enterprise pricing. AiSDR's Explore tier runs $900/mo and covers prospecting, personalized outreach, and follow-up sequencing. G2 reviewers give it 4.6/5 across 89 reviews, with consistent praise for customer support and ease of onboarding.

Skip this if you need deep CRM integrations or flawless email deliverability out of the box. G2 cons consistently flag integration issues and email problems. You'll still need human oversight on anything going to high-value prospects. Best for mid-market teams that want to test automated outbound without signing a $25K annual contract.

Artisan (Ava) - Enterprise Pricing, Enterprise Expectations

Artisan charges by leads contacted per year: the Accelerate tier covers 12,000 leads/year for $9,000-$26,000, while Blitzscale handles 65,000 leads/year for $26,000-$45,000. Custom plans for 20+ seats push past $57,000/year. There's no free trial, no monthly option, and contracts are annual upfront.

For that money, you could run AiSDR plus a verified data provider plus sending infrastructure and still have budget left over.
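Here is that comparison as a quick Python calculation. It uses the list prices quoted in this article and assumes you verify 3,000 new contacts every month at $0.01 each; those volumes are illustrative, and current vendor pricing should be checked before budgeting.

```python
# Monthly list prices quoted in this article (USD); check current pricing before budgeting.
AISDR_MONTHLY = 900
INSTANTLY_MONTHLY = 30
ARTISAN_MEDIAN_ANNUAL = 26_250

def annual_stack_cost(emails_per_month: int, cents_per_verification: int = 1) -> int:
    """Yearly cost in whole dollars of AiSDR + Instantly + verifying every email sent."""
    verification_dollars = emails_per_month * cents_per_verification * 12 // 100
    return (AISDR_MONTHLY + INSTANTLY_MONTHLY) * 12 + verification_dollars

stack = annual_stack_cost(3_000)
print(f"Stack: ${stack:,}/yr, left over vs. Artisan median: ${ARTISAN_MEDIAN_ANNUAL - stack:,}")
```

The comparison holds against Artisan's median contract, not its floor: the lowest Accelerate quote ($9,000/yr) is cheaper than this stack, so run the numbers against the quote you actually receive.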

Agent Frank (Salesforge) - Budget Full AI BDR Option

Agent Frank sits in the sweet spot between basic sequencers and enterprise platforms at $416/mo. It handles prospecting, personalization, and multi-step follow-ups without the $900+/mo price tag of dedicated solutions. Early signals are promising, and it's the most interesting budget option in the category right now.

11x (Alice) - Enterprise-Only, Pricing Hidden

11x runs ~$5,000+/mo with custom pricing only. No published tiers, no self-serve option. If a vendor won't tell you what it costs on their website, negotiate hard and demand a pilot period.

Reply.io (Jason AI) - AI on a Proven Sequencer

Reply.io's Jason AI adds an AI agent layer on top of their established sales engagement platform, starting from $259/mo. If you're already using Reply.io for sequences, adding Jason AI is the lowest-friction path to testing AI-driven outbound. It handles email generation, response classification, and meeting booking - augmentation for an existing workflow, not a standalone agent.

Clay - Build Your Own Stack

Clay takes the composable approach at $149/mo for the Starter tier. Claygent handles prospect research, waterfall enrichment pulls data from multiple providers, and you pipe everything into your sequencer of choice. Best for technical RevOps teams who want full control. The tradeoff is real - you'll spend time building and maintaining workflows that turnkey tools handle automatically.

The Budget Stack: Apollo + Instantly

When AI BDRs Actually Work

Not every pilot fails. The SaaStr team published detailed numbers from their AI-powered inbound qualification: 668K sessions narrowed to 45K AI-engaged, then 1,025 conversations, 91 meetings, and $1.01M closed-won with $2.5M in total pipeline. By October, 71% of their closed-won sponsorship deals came from AI-qualified meetings - up from a historic 29-34% inbound contribution. On the outbound side, their AI SDR sent 3,221 emails per month versus 75-285 per human SDR.

Setup took 1-2 weeks with ongoing maintenance of 2-4 hours per week. This wasn't set-and-forget.
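Breaking the SaaStr numbers into stage-to-stage conversion rates shows where the volume actually goes. This short Python sketch uses only the figures quoted above; the per-stage rates and volume multiples are derived here, not reported by SaaStr.

```python
# Stage counts from the SaaStr inbound funnel quoted above.
funnel = [
    ("sessions", 668_000),
    ("AI-engaged", 45_000),
    ("conversations", 1_025),
    ("meetings", 91),
]

def stage_conversion(stages):
    """Return (from_stage, to_stage, rate) for each consecutive pair of funnel stages."""
    return [(a, b, n_b / n_a) for (a, n_a), (b, n_b) in zip(stages, stages[1:])]

for src, dst, rate in stage_conversion(funnel):
    print(f"{src} -> {dst}: {rate:.1%}")

# Outbound volume: one AI SDR vs. the quoted per-human range.
ai_emails, human_low, human_high = 3_221, 75, 285
print(f"AI volume multiple: {ai_emails / human_high:.0f}x to {ai_emails / human_low:.0f}x")
```

The resulting 11x-43x volume multiple is consistent with the 10-40x range cited in the FAQ below.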

The pattern in successful deployments comes down to five conditions:

- Clean, verified contact data. Not "probably good" data - verified emails with sub-2% bounce rates.

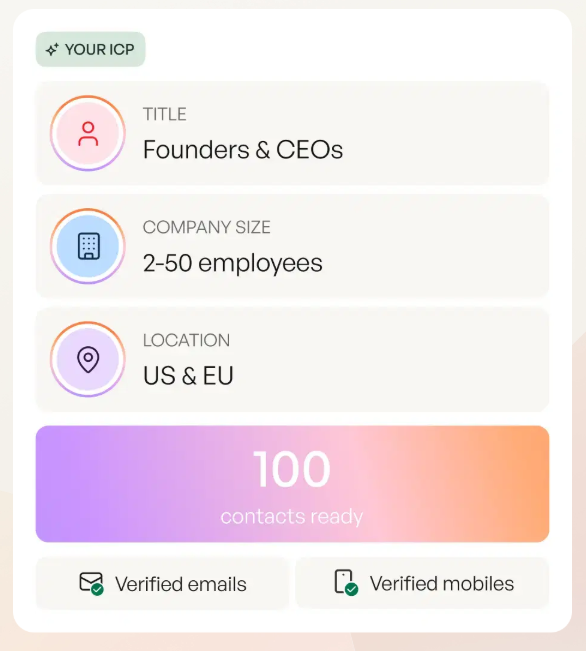

- A clearly defined ICP. The AI needs guardrails. Without them, it prospects indiscriminately. (If you need a framework, start by documenting your ICP.)

- Human oversight on qualification. AI handles volume; humans handle judgment calls on high-value prospects. Use a consistent deal qualification framework so humans and AI score the same way.

- Proper email infrastructure. SPF/DKIM/DMARC configured, domains warmed, sending limits respected. If you’re troubleshooting, start with sender authentication and then check domain reputation.

- Realistic expectations. These tools augment your team - they don't replace the need for humans who understand context and relationships.
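In practice, ICP guardrails can start as an explicit filter that runs before any prospect reaches the AI's queue. The Python sketch below is hypothetical: the `ICP` fields (industry, headcount band, titles) and the sample values are placeholders for whatever your own ICP defines, not a feature of any tool reviewed here.

```python
from dataclasses import dataclass, field

@dataclass
class ICP:
    """Hypothetical ICP definition; fields and values are illustrative only."""
    industries: set[str]
    min_employees: int
    max_employees: int
    titles: set[str] = field(default_factory=set)  # empty set = any title

def in_icp(prospect: dict, icp: ICP) -> bool:
    """True only if the prospect matches every ICP guardrail."""
    return (
        prospect["industry"] in icp.industries
        and icp.min_employees <= prospect["employees"] <= icp.max_employees
        and (not icp.titles or prospect["title"] in icp.titles)
    )

icp = ICP(industries={"SaaS", "Fintech"}, min_employees=50, max_employees=1_000,
          titles={"VP Sales", "Head of RevOps"})
prospects = [
    {"name": "A", "industry": "SaaS", "employees": 200, "title": "VP Sales"},
    {"name": "B", "industry": "Retail", "employees": 200, "title": "VP Sales"},
    {"name": "C", "industry": "Fintech", "employees": 5_000, "title": "Head of RevOps"},
]
queue = [p["name"] for p in prospects if in_icp(p, icp)]
print(queue)
```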

Teams that nail all five consistently build real pipeline. Teams that skip even one - especially data quality - end up in the 50-70% churn bucket.

When NOT to Use One

Automated outbound isn't universally appropriate. Deploying it in the wrong context can actively damage your business.

Enterprise and high-ACV deals are off-limits. When you're selling six- and seven-figure contracts, the prospect expects a human who understands their business. As the Bain Capital VC team put it: "Machines don't understand context; they don't know the difference between a lead and a relationship. BDRs do." (If you’re selling upmarket, align this with your enterprise sales strategy.)

Existing customer outreach is another no-go. The Bain example of AI accidentally messaging current customers as cold prospects is the nightmare scenario. One context failure can undo years of relationship building.

If your TAM is under 500 companies, you can't afford a single embarrassing AI hallucination - every prospect matters too much. And complex discovery requiring multi-threaded conversations across a procurement committee is still firmly human territory. AI can open doors, but it can't navigate the politics behind them.
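One way to enforce these exclusions is a routing rule that runs before the AI ever sees a contact. This Python sketch is hypothetical: the field names (`is_customer`, `expected_acv`) are assumptions about what your CRM exposes, and the $100,000 cutoff simply mirrors the "six-figure" line above.

```python
from enum import Enum

class Route(Enum):
    EXCLUDE = "exclude from outbound"
    HUMAN = "human rep"
    AI = "AI BDR"

# Hypothetical thresholds; set these from your own deal data.
HIGH_ACV_THRESHOLD = 100_000  # USD, i.e. six-figure contracts
SMALL_TAM = 500               # companies

def route(prospect: dict, tam_size: int) -> Route:
    """Decide who, if anyone, should own first-touch outreach to a prospect."""
    if prospect.get("is_customer", False):
        return Route.EXCLUDE  # existing customers never enter cold sequences
    if prospect.get("expected_acv", 0) >= HIGH_ACV_THRESHOLD or tam_size < SMALL_TAM:
        return Route.HUMAN
    return Route.AI

print(route({"expected_acv": 20_000}, tam_size=10_000).value)  # AI BDR
```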

Making AI + Human Workflows Work

Before you sign anything, run through four questions adapted from the Heinz Marketing framework:

Where does AI fit in your current process? Map your existing outbound workflow. Identify the specific bottleneck - is it prospecting volume, personalization quality, follow-up consistency, or qualification speed? Buy a tool that solves that bottleneck, not one that replaces your entire process. (If you need options, compare outbound email automation stacks first.)

Is your data clean enough? This is the question most teams skip, and it's the one that kills pilots. If your CRM has stale contacts, duplicate records, or unverified emails, no AI tool will save you. Run a verification test on a sample of your list before committing to any platform. If you’re sourcing lists, start with B2B list providers.

What will the prospect experience be? Send test emails to yourself and your team. Read them as a prospect would. If they feel generic or contain obvious AI artifacts, you're not ready.

Who owns ongoing optimization? These tools need 2-4 hours per week of human attention - reviewing outputs, adjusting targeting, updating messaging. If nobody owns this, the tool will degrade within weeks.
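The verification test in the second question only tells you something if the sample is random and you account for sampling error. This Python sketch draws a random sample, then checks whether a ~95% Wilson score upper bound on the failure rate stays under the 2% bounce ceiling. The Wilson interval and the 300-contact sample size are this sketch's own assumptions, not part of the Heinz framework, and the verification itself is left to whatever tool you use.

```python
import math
import random

def draw_sample(contacts: list[str], n: int = 300, seed: int = 0) -> list[str]:
    """Pick n contacts uniformly at random, without replacement, for a verification test."""
    return random.Random(seed).sample(contacts, n)

def wilson_upper(bad: int, n: int, z: float = 1.96) -> float:
    """Upper bound of the Wilson score interval (about 95% for z=1.96) on the bad-address rate."""
    p = bad / n
    denom = 1 + z**2 / n
    center = p + z**2 / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return (center + margin) / denom

def list_passes(failed: list[bool], max_rate: float = 0.02) -> bool:
    """failed[i] is True if sampled address i did not verify; pass only if the bound clears max_rate."""
    return wilson_upper(sum(failed), len(failed)) < max_rate

# Hypothetical results for a 300-contact sample: 0 failures vs. 2 failures.
print(list_passes([False] * 300))               # True
print(list_passes([True] * 2 + [False] * 298))  # False
```

This check is deliberately strict: with 300 samples, two failures (a 0.7% observed rate) still leave the upper bound near 2.4%. A small sample can rule a list out more easily than it can clear it, so a borderline result is a cue to verify the full list.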

For teams that decide to move forward, our recommended timeline looks like this:

- Month 1: setup and pilot. Configure the tool, verify your data, and send to a small test segment of 200-500 contacts.

- Months 2-3: optimization. Review outputs weekly, tighten ICP targeting, and A/B test messaging.

- Month 4+: decision point. Scale if conversion rates justify the spend, or kill the pilot and reallocate budget.

Always ask for a free trial or pilot period. If the vendor says no, walk.
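The Month 4 call is easier if you write down the "justify the spend" test before the pilot starts. This Python sketch maps pilot months to the phases of the timeline and uses cost per booked meeting as the decision metric; that metric and the `max_cost_per_meeting` target are assumptions you would replace with your own economics, such as pipeline value per meeting.

```python
def pilot_phase(month: int) -> str:
    """Map a 1-based pilot month to its phase in the recommended timeline."""
    if month < 1:
        raise ValueError("month must be >= 1")
    if month == 1:
        return "setup and pilot"
    if month <= 3:
        return "optimization"
    return "decision point"

def month4_decision(total_spend: float, meetings: int, max_cost_per_meeting: float) -> str:
    """Scale only if cost per booked meeting is within a target set before the pilot."""
    if meetings == 0:
        return "kill"
    return "scale" if total_spend / meetings <= max_cost_per_meeting else "kill"

# Hypothetical example: three months of a $900/mo tool, $300 target per meeting.
print(month4_decision(total_spend=3 * 900, meetings=12, max_cost_per_meeting=300))
```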

FAQ

What's the difference between an AI BDR and an AI SDR?

Functionally, almost nothing in 2026. "BDR" typically implies outbound prospecting, while "SDR" includes inbound qualification. Most AI tools handle both - the labels are interchangeable across vendors.

Can an AI BDR fully replace a human rep?

No. AI handles research, sequencing, and follow-ups at 10-40x human volume, but humans still outperform on complex discovery, relationship-building, and multi-threaded enterprise deals. The best results come from hybrid workflows where AI handles volume and a human rep closes.

What's the biggest reason AI BDR pilots fail?

Bad contact data. Stale emails cause bounces above 2%, bounces damage your domain reputation, and a damaged domain means even your good emails land in spam. Verify contacts at 98%+ accuracy before scaling any automated outbound.

How long does it take to see results?

Setup takes 1-2 weeks. Budget 60-90 days of optimization before evaluating ROI. Teams that expect results in week one almost always churn by month three.

How do I keep AI outbound from landing in spam?

Verify emails before sending (98%+ accuracy minimum), warm up dedicated sending domains for 2-3 weeks, authenticate with SPF/DKIM/DMARC, keep bounce rates under 2%, and cap daily volume at 50-75 emails per mailbox.
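To size sending infrastructure against that cap, spread your monthly volume across sending days and mailboxes. This Python sketch assumes about 22 weekday sending days per month (an assumption, not a provider rule) and uses the 50-75 emails/day per-mailbox cap above. At 3,000 emails/month it works out to 2-3 warmed mailboxes, each of which needs its own warm-up period before the pilot starts.

```python
import math

def mailboxes_needed(emails_per_month: int, per_mailbox_daily_cap: int,
                     sending_days_per_month: int = 22) -> int:
    """Minimum warmed mailboxes to hit a monthly volume without exceeding the daily cap."""
    daily_volume = emails_per_month / sending_days_per_month
    return math.ceil(daily_volume / per_mailbox_daily_cap)

for cap in (50, 75):
    print(f"{cap}/day cap -> {mailboxes_needed(3_000, cap)} mailboxes")
```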