AI Sales Forecasting Accuracy: What the Data Actually Shows in 2026

It's the last Thursday of the quarter. Your CRO pulls up the forecast call, and the number is off by 22% - again. Reps blame "slipped deals." Finance blames "optimistic pipeline." Everyone blames the spreadsheet.

The real problem? Nobody knows what good AI sales forecasting accuracy actually looks like, or whether the six-figure platform they just bought is making things better or worse.

The Short Version

Median B2B forecast accuracy sits at 70-79%. AI/ML methods reduce variance to ±8-15%, a 15-25% improvement over manual roll-ups. Meaningful, but not magic.

The biggest accuracy lever isn't the model - it's your data. B2B contact data decays at 2.1% per month. Within a year, up to 70% of your database becomes unreliable.

Before investing $200-400/user/month in a forecasting platform, verify and enrich your CRM. Clean data with basic forecasting beats dirty data with expensive AI every time.

The Ugly Truth About Forecast Accuracy

Only 7% of sales organizations achieve forecast accuracy of 90% or higher, according to Gartner research. The median sits at 70-79%. And 69% of sales ops leaders say forecasting is getting harder, not easier.

The operational data is worse. XANT Labs studied 270,912 closed-won opportunities representing $18.1B in revenue. Only 28.1% of opportunities closed within 5% of the 90-day forecasted amount. Nearly half - 47% - missed the forecasted amount by more than 50%. The average 90-day prediction missed by over 31%.

Here's the thing: this isn't a technology gap. McKinsey's Global Survey shows 88% of organizations report regular AI use in at least one function, but nearly two-thirds haven't begun scaling AI across the enterprise, and only 39% report any measurable EBIT impact at the enterprise level. Companies are buying AI tools. They're just not getting results from them - and forecasting is one of the hardest places to see ROI because the inputs are so messy.

What AI Forecasting Actually Delivers

Variance by Method

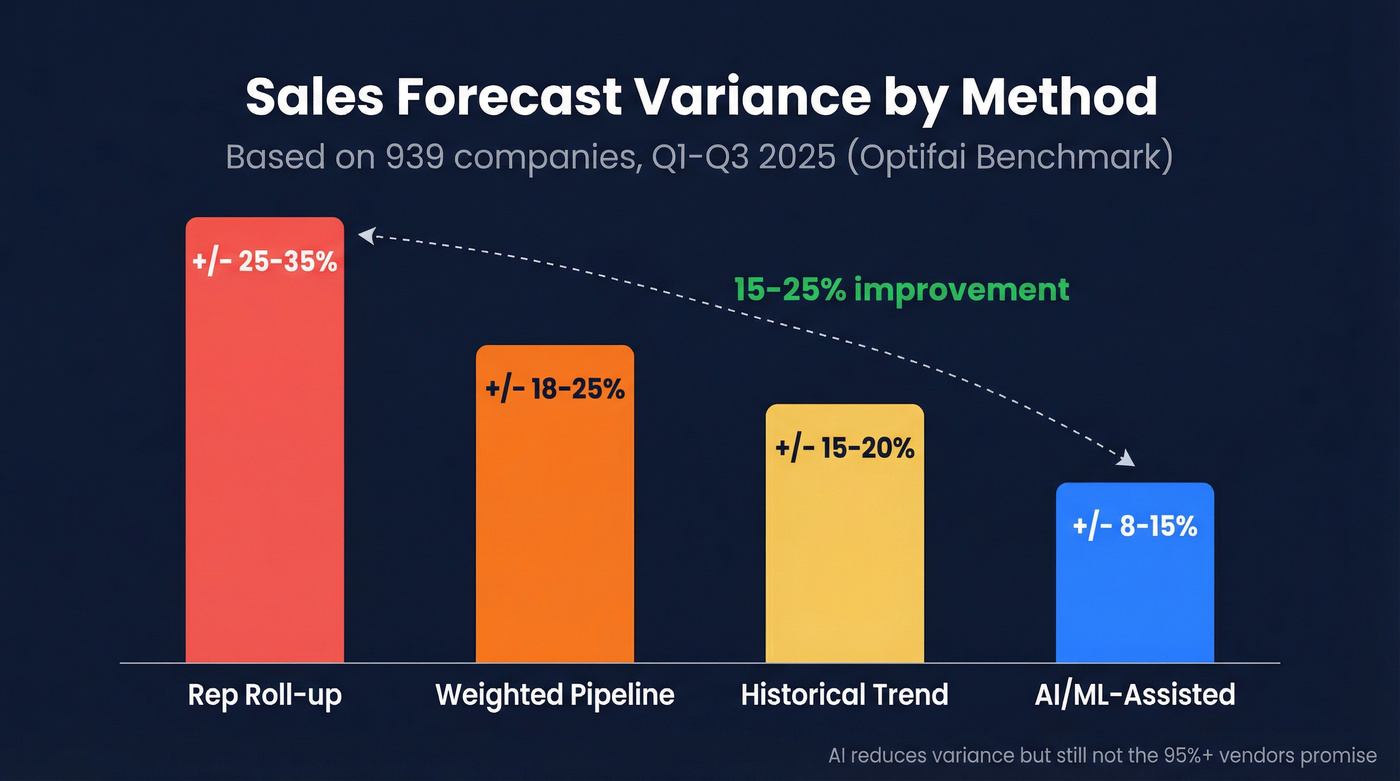

The Optifai benchmark (N=939 companies, Q1-Q3 2025) breaks down forecast variance by methodology:

| Forecasting Method | Typical Variance |
|---|---|
| Rep roll-up | ±25-35% |
| Weighted pipeline | ±18-25% |
| Historical trend | ±15-20% |
| AI/ML-assisted | ±8-15% |

AI/ML-assisted forecasting delivers a 15-25% improvement over manual methods. That's real. But it's still ±8-15% variance, not the "95%+ accuracy" that vendors splash on their landing pages.

The improvement comes from consistency - AI doesn't sandbag, doesn't get optimistic before board meetings, and doesn't forget to update stage probabilities. The highest-performing teams treat forecasts as living numbers that update in real time from CRM activity, email engagement, and deal signals, not weekly snapshots frozen in a slide deck. These benchmarks hold across most B2B verticals, though companies with longer sales cycles tend to see wider variance at 90-day horizons.
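
For reference, the weighted-pipeline method from the table above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the stage names, the probabilities, and the sample deals are all hypothetical, and real probabilities should come from your own historical stage-to-close conversion rates.

```python
from dataclasses import dataclass

# Hypothetical stage probabilities (assumption, not from the benchmark).
STAGE_PROBABILITY = {
    "discovery": 0.10,
    "proposal": 0.40,
    "negotiation": 0.70,
    "verbal_commit": 0.90,
}


@dataclass
class Deal:
    name: str
    amount: float
    stage: str


def weighted_pipeline(deals):
    """Sum each open deal's amount multiplied by its stage probability."""
    return sum(d.amount * STAGE_PROBABILITY[d.stage] for d in deals)


# Hypothetical open pipeline.
pipeline = [
    Deal("Acme", 100_000, "negotiation"),
    Deal("Globex", 50_000, "proposal"),
    Deal("Initech", 200_000, "discovery"),
]

forecast = weighted_pipeline(pipeline)
print(f"Weighted pipeline forecast: ${forecast:,.0f}")
```

The sketch makes the method's weakness visible: the forecast is only as good as the stage field on each deal, which is exactly what reps forget to update.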

Accuracy by Forecast Horizon

Accuracy decays the further out you forecast:

| Forecast Horizon | Accuracy Range | Decay Rate |

|---|---|---|

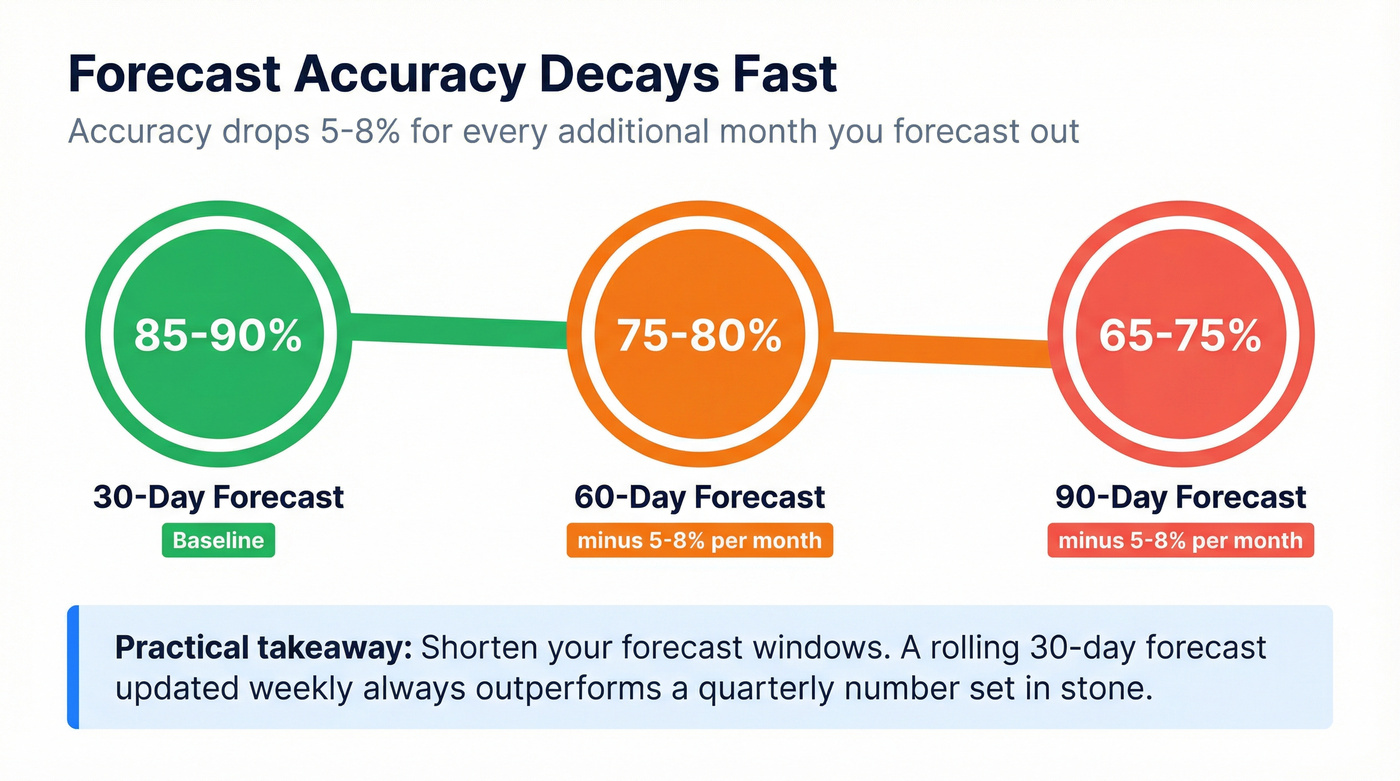

| 30-day | 85-90% | Baseline |

| 60-day | 75-80% | ~5-8%/month |

| 90-day | 65-75% | ~5-8%/month |

A 30-day forecast at 87% accuracy drops to roughly 70% at 90 days. That 5-8% monthly decay is consistent across company sizes and industries. The practical takeaway: shorten your forecast windows or re-forecast monthly. A rolling 30-day forecast updated weekly will always outperform a quarterly number set in stone.
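
The decay arithmetic can be sketched as follows. This assumes the 5-8% monthly decay means percentage points lost per additional 30 days and that the decay is linear; both are simplifying assumptions, and `project_accuracy` is a hypothetical helper name.

```python
def project_accuracy(accuracy_30d, decay_points_per_month, horizon_days):
    """Project accuracy at a longer horizon.

    Assumes linear decay, in percentage points, for every 30 days
    beyond the 30-day baseline.
    """
    extra_months = (horizon_days - 30) / 30
    return accuracy_30d - decay_points_per_month * extra_months


for horizon in (30, 60, 90):
    low = project_accuracy(87, 8, horizon)   # faster decay, 8 points/month
    high = project_accuracy(87, 5, horizon)  # slower decay, 5 points/month
    print(f"{horizon}-day: {low:.0f}-{high:.0f}%")
```

Under these assumptions an 87% baseline lands between 71% and 77% at 90 days, so the "roughly 70%" figure corresponds to the faster end of the decay range.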

Performance Tiers

Not all teams are equally bad at this. The benchmark segments companies into tiers: elite performers at ±5% variance, top quartile at ±5-10%, median at ±15-25%, and bottom quartile at ±30% or worse. Forecastio's framework uses slightly different bands - world-class at 80-95%, average B2B at 50-70%, lagging below 50%.

If you're consistently above 80% accuracy, you're in the top quartile. Below 50%? You're guessing, and your board knows it.

How to Measure Forecast Accuracy

MAPE vs WAPE vs WMAPE

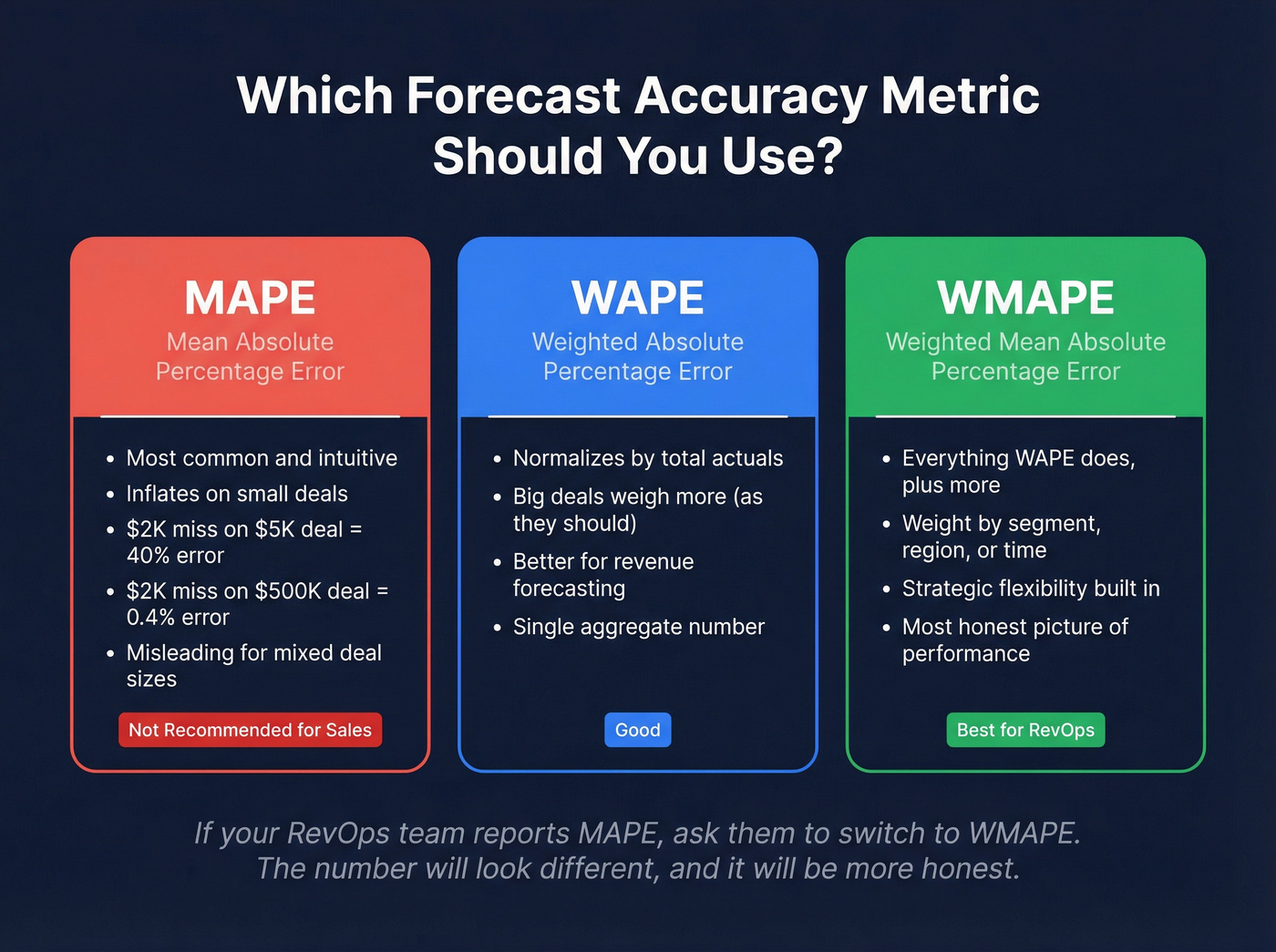

Most teams default to MAPE (Mean Absolute Percentage Error) because it's intuitive. It's also misleading.

MAPE inflates dramatically when actual values are small. A $2,000 miss on a $5,000 deal registers as 40% MAPE. That same $2,000 miss on a $500,000 deal? 0.4% MAPE. The absolute error is identical, but MAPE treats the small deal as 100x worse.

For sales forecasting, revenue-weighted metrics are the right choice. WAPE normalizes by total actuals, so a 10% miss on a $500K deal appropriately weighs more than a 10% miss on a $5K deal. WMAPE goes further, letting you weight specific segments, regions, or time periods by strategic importance.

If your RevOps team is reporting MAPE, ask them to switch to WMAPE. The number will look different - and it'll be more honest.
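
To make the difference concrete, here is a minimal sketch of the three metrics applied to the two deals from the example above. Definitions of WMAPE vary between teams; the version here (weighted absolute error over weighted actuals) is one common formulation, and the weights are hypothetical.

```python
def mape(actuals, forecasts):
    """Mean of per-deal absolute percentage errors; every deal counts equally."""
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)]
    return sum(errors) / len(errors)


def wape(actuals, forecasts):
    """Total absolute error divided by total actuals (revenue-weighted)."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_error / sum(abs(a) for a in actuals)


def wmape(actuals, forecasts, weights):
    """Weighted absolute error over weighted actuals, using custom weights."""
    num = sum(w * abs(a - f) for a, f, w in zip(actuals, forecasts, weights))
    den = sum(w * abs(a) for a, w in zip(actuals, weights))
    return num / den


actuals = [5_000, 500_000]
forecasts = [7_000, 502_000]   # each forecast misses by $2,000
weights = [1, 3]               # hypothetical: the second segment matters 3x

print(f"MAPE:  {mape(actuals, forecasts):.1%}")
print(f"WAPE:  {wape(actuals, forecasts):.1%}")
print(f"WMAPE: {wmape(actuals, forecasts, weights):.1%}")
```

MAPE averages 40% and 0.4% into roughly 20%, while WAPE reports under 1% because $4,000 of total error is measured against $505,000 of actual revenue. With equal weights, this WMAPE reduces to WAPE.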

Forecast Bias

Accuracy metrics tell you how far off you are. They don't tell you which direction. Over-forecasting means you're consistently promising revenue that doesn't materialize. Under-forecasting means you're leaving upside on the table and under-investing in capacity.

A team that's 85% accurate but consistently 10% high is making worse decisions than a team that's 80% accurate with no directional bias. Track bias separately. If your forecast is always high, you don't need a better model - you need to recalibrate your stage definitions or coach reps on deal qualification.
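
A minimal sketch of tracking bias alongside accuracy, assuming both are measured against total actual revenue. The quarterly figures are hypothetical, and `accuracy_and_bias` is an illustrative helper, not a standard function.

```python
def accuracy_and_bias(actuals, forecasts):
    """Return (accuracy, bias) as fractions of total actual revenue.

    accuracy = 1 - total absolute error / total actuals
    bias     = total signed error / total actuals (positive = over-forecast)
    """
    total_actual = sum(actuals)
    abs_error = sum(abs(f - a) for a, f in zip(actuals, forecasts))
    signed_error = sum(f - a for a, f in zip(actuals, forecasts))
    return 1 - abs_error / total_actual, signed_error / total_actual


# Hypothetical quarterly revenue in $K.
actuals = [1000, 1200, 900, 1100]
always_high = [1100, 1320, 990, 1210]  # every quarter forecast 10% over
mixed = [1100, 1080, 990, 990]         # misses in both directions

for label, forecasts in (("always high", always_high), ("mixed", mixed)):
    accuracy, bias = accuracy_and_bias(actuals, forecasts)
    print(f"{label}: accuracy {accuracy:.1%}, bias {bias:+.1%}")
```

Both hypothetical teams score 90% accuracy, but only the first carries a +10% bias. An accuracy number alone would not distinguish them.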

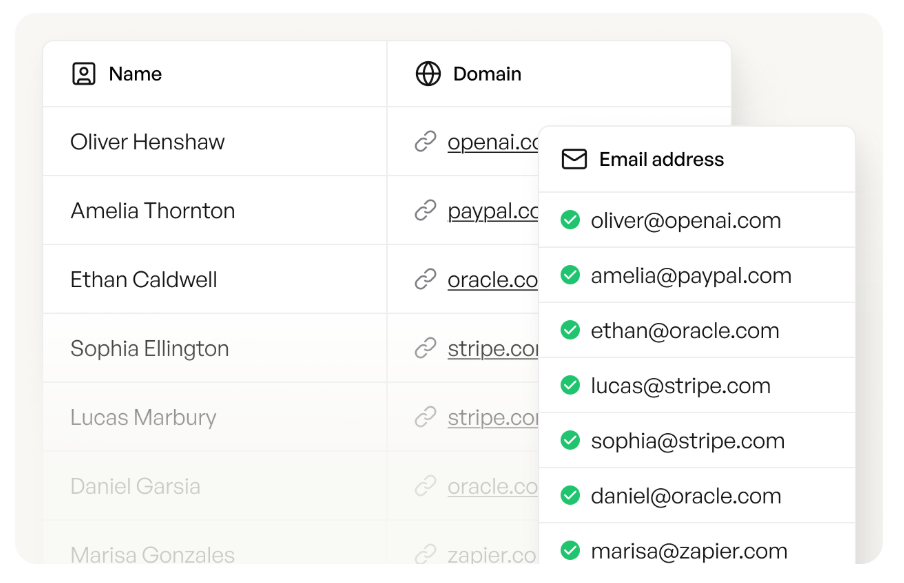

Why AI Forecasting Fails

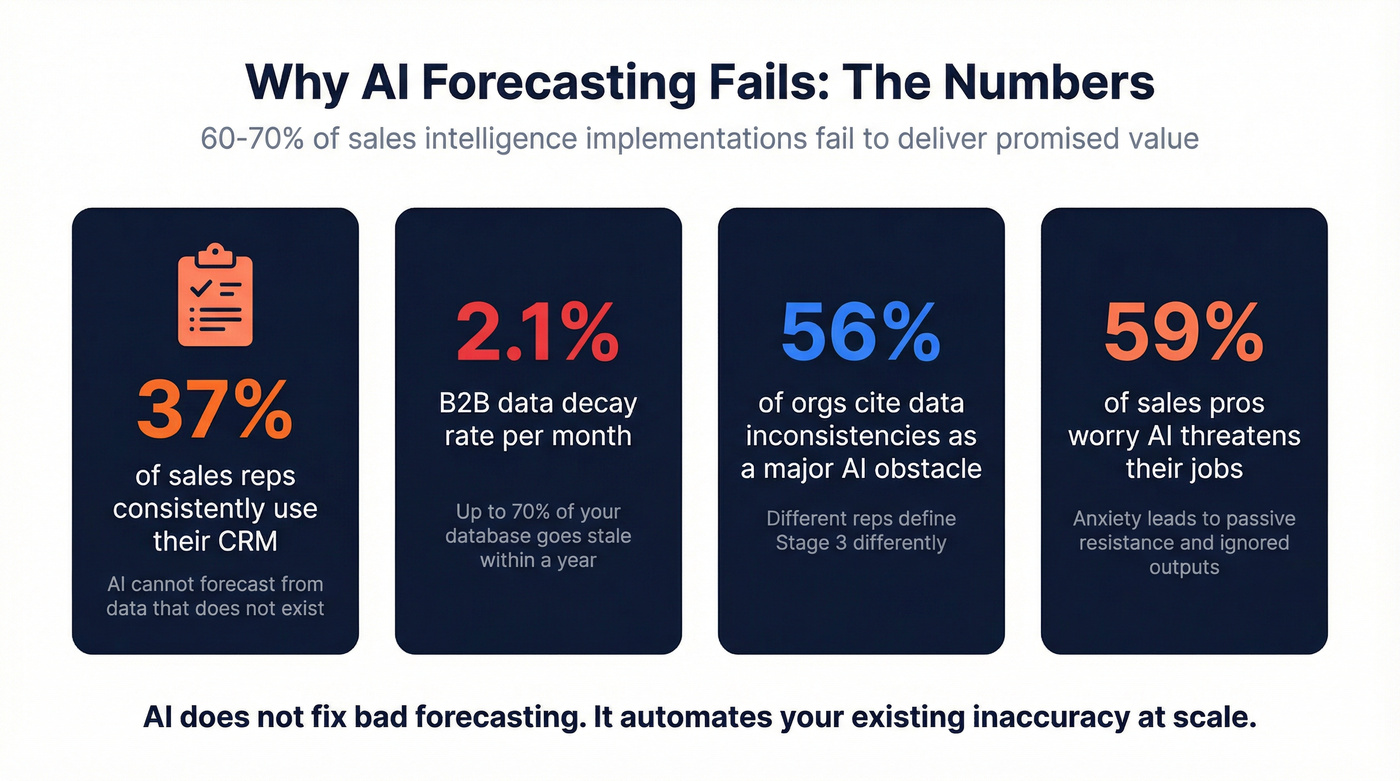

60-70% of sales intelligence implementations fail to deliver their promised value. That's a people-and-data problem, not a technology problem. The failure modes are predictable:

Nobody uses the CRM. Fewer than 37% of sales reps consistently use their CRM. AI can't forecast from data that doesn't exist. This mirrors what surfaces constantly in sales ops communities on Reddit - the most common complaint isn't "our AI is bad," it's "our reps don't log anything."

Data decays faster than you think. B2B contact data decays at 2.1% per month. Within a year, up to 70% of your database becomes unreliable. US companies lose roughly 27% of revenue annually due to incomplete or inaccurate customer data, and forecast accuracy is one of the first casualties.

Data inconsistency is endemic. 56% of organizations cite data inconsistencies as a major obstacle to AI adoption. Different reps define "Stage 3" differently. Close dates are aspirational. Deal amounts change without explanation.

Reps fear the tool. 59% of sales professionals worry AI tools threaten their positions. That anxiety translates into passive resistance - not updating the system, overriding AI recommendations, or simply ignoring the output. Managers need to review model outputs, validate assumptions, and build rep trust in the system rather than letting it run unchecked.

Look, AI doesn't fix bad forecasting. It automates your existing inaccuracy at scale. If your CRM data is 70% stale and your reps update deal stages once a month, an AI model trained on that data will produce confidently wrong numbers faster than a spreadsheet ever could.

The Data Quality Foundation

Here's the contrarian take nobody in the forecasting software space wants to hear: your forecast accuracy problem is a data problem, not a software problem. Forecastio's benchmarking found that improving CRM data hygiene can increase forecast accuracy by up to 30%. That's more improvement than most standalone AI platforms deliver.

B2B data decays at 2.1% per month. People change jobs, companies get acquired, phone numbers go stale, emails bounce. Every bad record in your CRM is a signal the forecasting model treats as truth. Multiply that across thousands of contacts and opportunities, and you've got a model confidently predicting outcomes based on people who don't work at those companies anymore.
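
As a rough sketch of how a 2.1% monthly rate accumulates, the snippet below compounds it over time. It assumes a constant rate applied independently to the records still valid each month, which is a simplification; `fraction_stale` is a hypothetical helper name.

```python
MONTHLY_DECAY = 0.021  # 2.1% per month, the rate cited in this article


def fraction_stale(monthly_decay, months):
    """Fraction of records gone stale after `months`, compounding monthly.

    Assumes a constant decay rate applied to the still-valid records.
    """
    return 1 - (1 - monthly_decay) ** months


for months in (3, 6, 12):
    print(f"After {months:>2} months: {fraction_stale(MONTHLY_DECAY, months):.1%} stale")
```

Under these assumptions, contact-level decay alone reaches roughly 22% after 12 months. The "up to 70%" figure quoted in this article cannot be derived from the 2.1% rate alone and presumably rests on a broader definition of "unreliable."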

Before you spend $200/user/month on a forecasting platform, spend $0.01/email verifying the contacts feeding it. We've seen this play out firsthand - Snyk's 50-person sales team saw bounce rates drop from 35-40% to under 5% after switching to Prospeo for verification, and their AE-sourced pipeline jumped 180%. Cleaner contact data means cleaner pipeline data, and that's what forecasting models actually consume.
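
The cost gap is easy to sanity-check. The sketch below uses the two prices quoted above; the team size and contact count are hypothetical, and it assumes a single verification pass rather than ongoing refreshes.

```python
PLATFORM_PRICE_PER_USER_MONTH = 200  # low end of the platform range cited
VERIFICATION_PRICE_PER_EMAIL = 0.01  # per-email price cited above


def annual_platform_cost(reps, price=PLATFORM_PRICE_PER_USER_MONTH):
    """Yearly licence cost for a per-user, per-month platform."""
    return reps * price * 12


def verification_cost(contacts, price=VERIFICATION_PRICE_PER_EMAIL):
    """Cost of one verification pass over a contact list."""
    return contacts * price


reps = 20          # hypothetical team size
contacts = 50_000  # hypothetical CRM size

print(f"Platform, per year: ${annual_platform_cost(reps):,.0f}")
print(f"One verification pass: ${verification_cost(contacts):,.0f}")
```

For this hypothetical team the platform runs $48,000 a year against $500 for a single pass over the database.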

AI Forecasting Models Explained

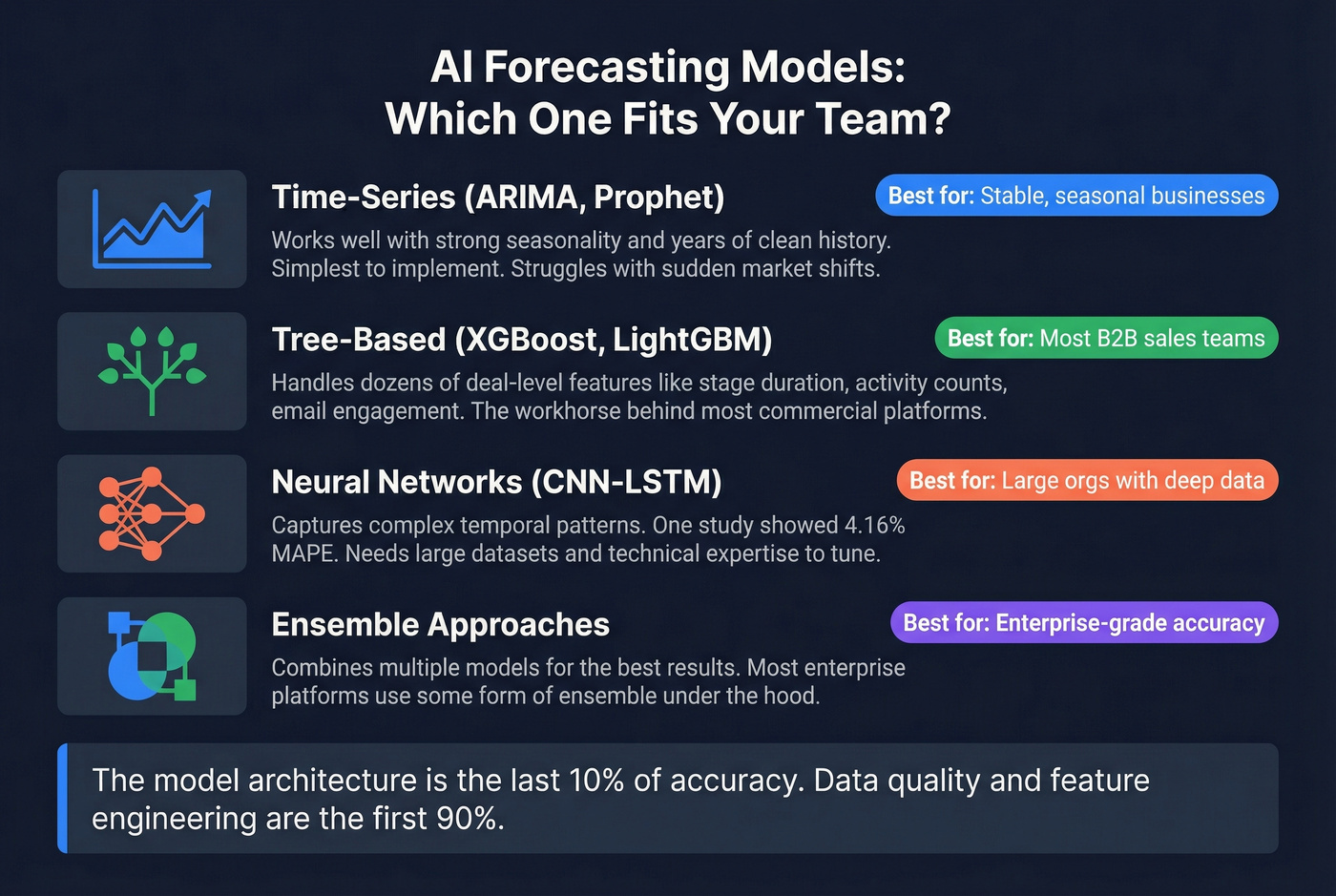

Time-series models like ARIMA and Prophet work well when your sales patterns have strong seasonality and years of clean history. They're the simplest to implement and interpret, but they struggle with sudden market shifts.

Tree-based models like XGBoost and LightGBM excel at incorporating dozens of deal-level features - stage duration, activity counts, email engagement, competitor mentions. They're the workhorse behind most commercial forecasting platforms because they handle messy, mixed-type data well.

Neural networks capture complex temporal patterns that simpler models miss. A 2025 academic study showed a hybrid CNN-LSTM model incorporating external variables achieved a MAPE of just 4.16%, and prior research suggests AI models can deliver up to 35% better accuracy than traditional methods - though real-world results depend heavily on data quality.

Ensemble approaches combine multiple models and typically deliver the best results. Most enterprise platforms use some form of ensemble under the hood.

What matters more than model choice is feature engineering and data quality. We've seen teams obsess over LSTM vs XGBoost when their CRM has 40% stale contacts and reps update close dates once a quarter. The model architecture is the last 10% of accuracy. The first 90% is clean, complete, timely data.

AI Forecasting Tools Compared

| Tool | Best For | AI Approach | Pricing | Key Limitation |
|---|---|---|---|---|
| Clari | Enterprise, 50+ reps | Ensemble + deal signals | ~$200-400/user/mo | Overkill below $50M pipeline |
| Gong | Conversation-led teams | Conversation intelligence | ~$250/user/mo | Forecasting is one module of many |
| Outreach | Rep-facing workflows | Explainable AI + signals | ~$100-150/user/mo | Forecasting less mature than engagement |
| Salesforce Einstein | Salesforce-native shops | Native ML on CRM data | $25-500/user/mo | Einstein 1 Sales at $500/user/mo is steep |
| HubSpot | Mid-market, HubSpot-first | Native CRM forecasting | $100-250/user/mo | Less customizable than standalone |
| Forecastio | HubSpot-first mid-market | Dual-probability framework | ~$79/user/mo | HubSpot-only |

Our pick for most teams: Start with your CRM's native forecasting and invest the savings in data quality. Graduate to Clari or Gong only when you're running $50M+ in pipeline and need cross-functional revenue visibility.

Clari at $200-400/user/month makes sense for enterprise teams. The Salesloft merger gives them a combined ~$450M ARR platform spanning forecasting, engagement, and revenue orchestration. Outreach takes a different angle, emphasizing explainable AI - showing reps why a deal is flagged - which addresses the trust gap that kills adoption.

Let's be honest about vendor claims: Clari and Forecastio both advertise 95%+ accuracy. Neither defines the metric, the forecast horizon, or the conditions under which that number was achieved. A 30-day forecast for a mature enterprise with stable deal cycles will always look better than a 90-day forecast for a startup with variable deal sizes. Don't compare vendor accuracy claims - compare your own before-and-after numbers.

Skip the dedicated platform if your average deal size is under $25K and your team is under 20 reps. A well-maintained CRM with native forecasting and verified data will get you to 80% accuracy, which puts you in the top quartile. The remaining 5-10% improvement from a $200/user/month tool rarely justifies the cost.

Implementation Roadmap

Getting from "our forecast is a guess" to "our forecast is useful" doesn't require a six-figure platform purchase.

Audit and enrich your CRM data. Fix bouncing emails, update job titles, fill in missing phone numbers. You can't forecast accurately from stale records. (If you want a deeper workflow, see CRM verify and B2B contact data decay.)

Establish your baseline. Measure current forecast accuracy using WMAPE over the last 4 quarters. You need to know where you are before you can measure improvement.

Ensure 12+ months of clean historical data. AI models need training data. If you just migrated CRMs or changed your sales process, simpler approaches will outperform ML methods until you've accumulated enough history.

Start with CRM-native AI forecasting. Salesforce Einstein, HubSpot's forecasting tools, or Forecastio for HubSpot shops. Don't buy a $200/user/month platform until you've maxed out what your existing stack can do.

Measure with WMAPE, not MAPE. Track both accuracy and bias. Expect 3-6 months to see measurable improvement.

FAQ

How accurate is AI sales forecasting?

AI/ML methods achieve ±8-15% variance, a 15-25% improvement over manual roll-ups. Top-quartile teams hit ±5-10%. No model eliminates irreducible uncertainty - 85-90% accuracy at 30-day horizons is genuinely world-class.

What's a good forecast accuracy for B2B?

Above 80% puts you in the top quartile across 939 benchmarked companies. Average B2B sits at 50-70%. Below 50% means your forecast isn't meaningfully better than a coin flip.

How long until AI forecasting pays off?

Expect 3-6 months assuming clean data and 12+ months of history. The first ROI usually comes from flagging at-risk deals earlier, not from the headline forecast number.

Does accuracy degrade over longer horizons?

Yes - at 5-8% per month. A 30-day forecast at 87% drops to roughly 70% at 90 days. Shorten your forecast windows and re-forecast monthly for better results.

What's the fastest way to improve forecast accuracy?

Fix your data. B2B contact data decays at 2.1% per month, and stale records poison every model built on them. Prospeo's 7-day refresh cycle and 98% email accuracy keep CRM records current - often delivering more improvement than switching forecasting platforms entirely.