Cold Email Open Rate: Why Every Benchmark Is Wrong (and What to Do About It)

One practitioner sent 147,000 cold emails last year and got a 1.2% positive reply rate. Another blog tells you to expect 40-60% open rates. Someone on r/coldemail is celebrating 10% opens on 256 sends and wondering what went wrong. The benchmarks for cold email open rate don't just vary - they contradict each other so fundamentally that the metric itself has become almost meaningless.

Here's what's actually happening, what the numbers really look like in 2026, and what to fix first when your campaigns underperform.

The Short Version

- The "average" cold email open rate lands somewhere between 15% and 45% depending on who you ask, and Apple Mail Privacy Protection makes every one of those figures unreliable.

- Track reply rate instead. 3.43% is average, 5.5%+ is strong, 10.7%+ is elite.

- If your open rate is below 15%, the problem is almost certainly deliverability or data quality - not your subject line.

- Fix your list first, authenticate your domain, warm up properly, then optimize copy. That order matters.

What Does Open Rate Actually Measure?

Cold email open rate is the percentage of delivered emails that get opened: unique opens divided by delivered emails, multiplied by 100. Your email tool embeds a tiny invisible pixel, and when the recipient's client loads that pixel, it registers an "open."
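Here is a minimal sketch of that formula in Python. How "delivered" is counted is an assumption (sent minus bounced), because email tools define it slightly differently. The campaign numbers are made up for illustration.

```python
def delivered_count(sent: int, bounced: int) -> int:
    """Assumption: delivered = sent - bounced. Your sending tool may define it differently."""
    if not 0 <= bounced <= sent:
        raise ValueError("bounced must be between 0 and sent")
    return sent - bounced


def open_rate(unique_opens: int, delivered: int) -> float:
    """Unique opens divided by delivered emails, multiplied by 100."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    if not 0 <= unique_opens <= delivered:
        raise ValueError("unique_opens must be between 0 and delivered")
    return unique_opens / delivered * 100


# Hypothetical campaign: 1,000 sent, 30 bounced, 250 unique opens.
delivered = delivered_count(1000, 30)
print(f"{open_rate(250, delivered):.2f}%")
```

Bounces come out of the denominator before the division, so the same opens look slightly better on a dirty list. That is one more reason to read bounce rate alongside open rate.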

The problem: that pixel can fire without a human ever reading your email. And it can fail to fire even when someone does.

Reply rate measures intent. Open rate measures... something.

2026 Benchmarks: Real Data

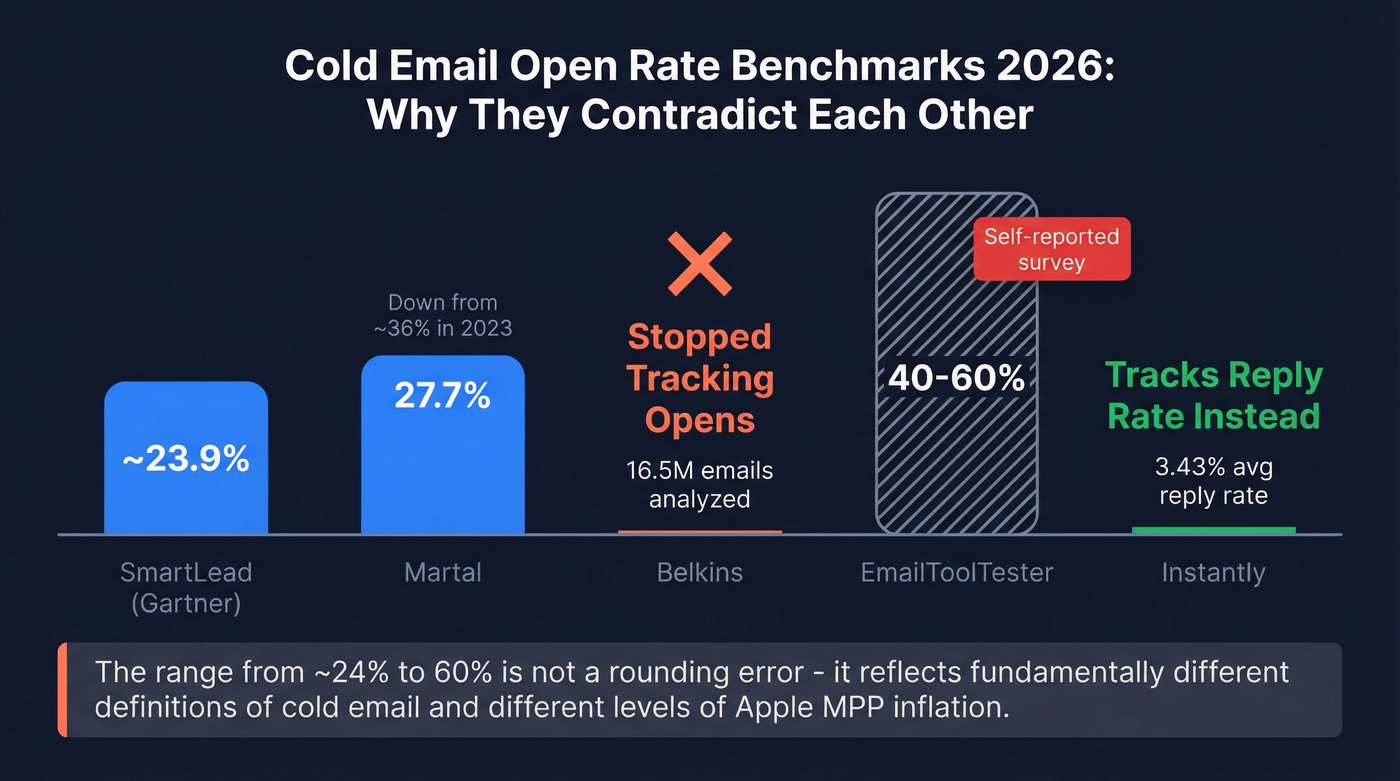

The spread across sources tells a story on its own.

| Source | Claimed Average | Dataset | Notes | Reliability |
|---|---|---|---|---|
| SmartLead (citing Gartner) | ~23.9% | Not disclosed | Aggregated stat | Low-Medium |
| Martal | 27.7% | Platform data | Down from ~36% in 2023 | Medium |
| Belkins | N/A (stopped tracking) | 16.5M emails, 93 domains | Stopped tracking opens mid-year | High |
| EmailToolTester | 40-60% (claimed range) | 1,800-person survey | Self-reported | Low |
| Instantly | N/A (reply focus) | Billions of interactions | 3.43% avg reply rate | High |

That range - ~23.9% to 60% - isn't a rounding error. It reflects fundamentally different definitions of "cold email," different audiences, different methodologies, and different levels of Apple MPP inflation.

EmailToolTester's 40-60% comes from a consumer survey of 1,800 people - self-reported data that conflates cold outreach with broader email behavior. Their own survey found 50.9% of recipients don't engage with cold emails at all, 13.7% delete immediately, and 10.3% mark them as junk. Yet the same source claims 40-60% open rates. That contradiction tells you everything.

Belkins made the most honest call of all: it stopped tracking open rates mid-year across its 16.5-million-email dataset. That's not a data gap - it's a signal that the metric isn't worth measuring.

The trajectory matters too. Martal's dataset shows open rates around 36% in 2023, stabilizing at 27.7% by 2024-2026. Meanwhile, reply rates keep trending down: Instantly's platform-wide average sits at 3.43% in 2026, and Martal reports that average reply rates were 5.1% in 2024 and ~7% the year before. The most reliable benchmark for 2026 cold email performance is reply-rate data across billions of interactions, because it measures something real.

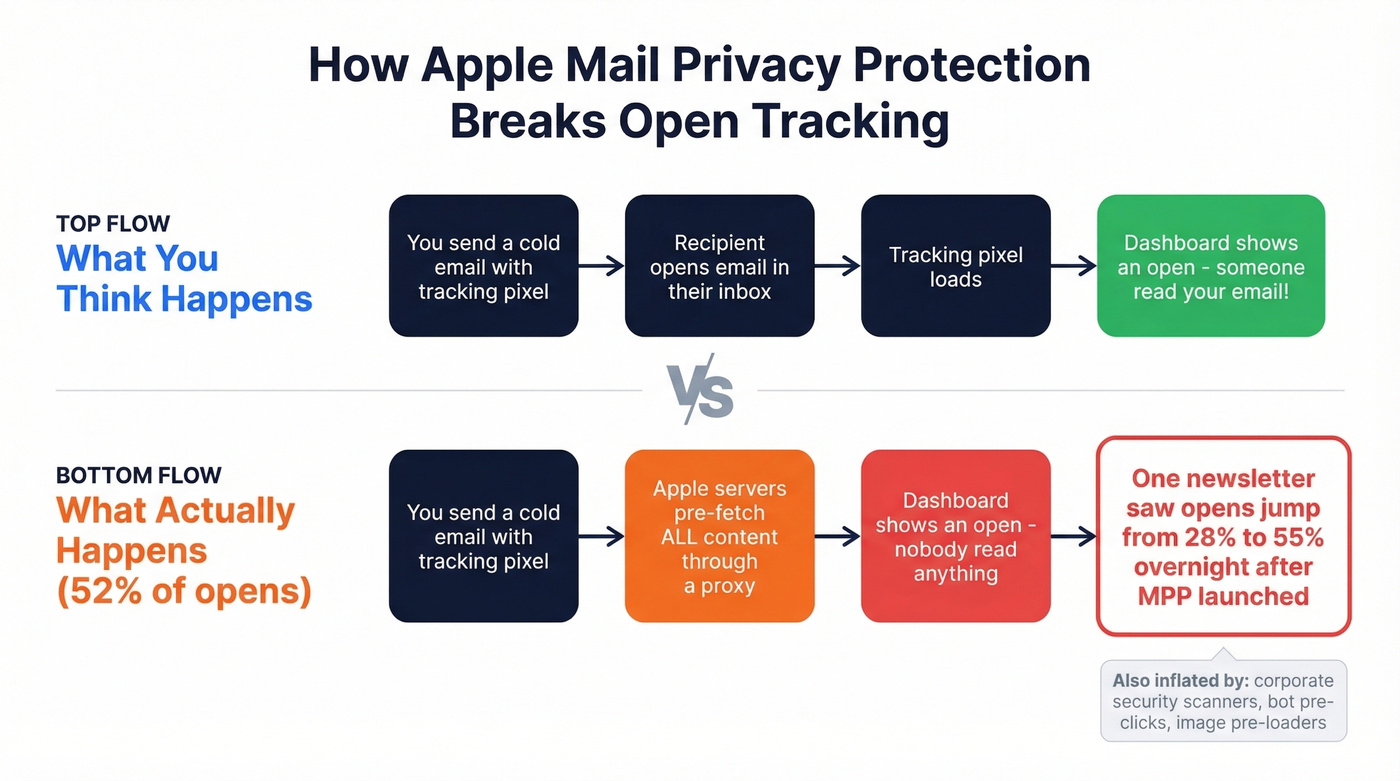

Why Your Reported Opens Are Inflated

Apple Mail Privacy Protection, launched in 2021, fundamentally broke open tracking. When someone uses Apple Mail - even if their actual mailbox is Gmail or Outlook - Apple's servers preload all email content, including your tracking pixel, through a proxy before the user ever sees the message. The pixel fires. Your dashboard counts an "open." Nobody read anything.

Apple devices accounted for roughly 52% of all email opens in 2021. According to Omeda's data, unique open rates nearly doubled within six months of MPP going live. One beehiiv newsletter saw open rates jump from 28% to 55% overnight - not because more people were reading, but because Apple was pre-fetching every pixel.

Add corporate security scanners that pre-click links and pre-load images, and your "open rate" becomes a composite of human attention, Apple proxies, and bot activity. Any open rate your outbound team reports should be treated as directional at best.

Apple MPP broke open tracking. Bounces destroy your domain. The only metric you control is data quality. Prospeo's 5-step email verification delivers 98% accuracy - teams using it report under 3% bounce rates and zero domain flags.

Stop debugging subject lines. Start with a list that actually lands in inboxes.

Why Benchmarks Contradict Each Other

Three forces drive the contradiction.

MPP inflation varies by audience. Selling to tech companies where most people use Apple Mail? Your reported open rate will be dramatically higher than your real one. Sell to manufacturing companies running Outlook on Windows, and MPP barely touches your numbers. Same email, same subject line, wildly different reported opens.
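To see why the same email reports such different numbers, consider this deliberately crude model. It is not how Apple or any tracking tool documents MPP. It assumes that every recipient on an MPP-enabled Apple Mail client registers an "open" whether or not they read, that all other recipients register an open only when a human actually opens, and that human behavior doesn't depend on the mail client. The audience shares and the 20% human open rate are hypothetical.

```python
def _check_fraction(name: str, value: float) -> None:
    if not 0.0 <= value <= 1.0:
        raise ValueError(f"{name} must be between 0 and 1")


def reported_open_rate(human_open_rate: float, mpp_share: float) -> float:
    """Toy model: MPP recipients always fire the pixel; everyone else only when a human opens."""
    _check_fraction("human_open_rate", human_open_rate)
    _check_fraction("mpp_share", mpp_share)
    return mpp_share + (1 - mpp_share) * human_open_rate


def estimated_human_open_rate(reported: float, mpp_share: float) -> float:
    """Invert the toy model to back out a human open rate from a reported one."""
    _check_fraction("reported", reported)
    _check_fraction("mpp_share", mpp_share)
    if mpp_share >= 1:
        raise ValueError("with mpp_share = 1 every open is machine-generated")
    return max(0.0, (reported - mpp_share) / (1 - mpp_share))


# Same email, same hypothetical 20% human open rate, two hypothetical audiences.
for audience, share in [("Apple-heavy tech audience", 0.6),
                        ("Outlook-on-Windows manufacturing", 0.05)]:
    print(f"{audience}: {reported_open_rate(0.20, share):.0%}")
```

Under these assumptions the tech audience reports 68% and the manufacturing audience 24%, even though identical shares of humans read the email. Backing out a human rate only works if you know your audience's MPP share, which most teams can only guess at.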

"Cold email" means different things. EmailToolTester's 40-60% range includes warm outreach, opted-in lists, and marketing emails that happen to be unsolicited. Truly cold B2B outreach to people who've never heard of you performs very differently from a "cold" email to someone who downloaded your whitepaper last month.

Survey data and platform telemetry produce different numbers. When you ask 1,800 people "do you open cold emails?", you get aspirational answers. When you measure billions of actual sends, you get reality.

What Actually Drives Opens

Deliverability and Authentication

Your email can't get opened if it lands in spam. We've seen teams spend weeks A/B testing subject lines while their SPF records were misconfigured - a complete waste of effort.

The authentication baseline in 2026 is non-negotiable: SPF, DKIM, and DMARC must all pass. Microsoft enforced this for high-volume senders - as of May 5, 2025, Outlook routes non-compliant high-volume mail to Junk, with outright rejection coming next.
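A quick sanity check on record syntax can catch the most basic mistakes. This sketch only inspects SPF and DMARC TXT records you have already fetched (for example with `dig TXT example.com` and `dig TXT _dmarc.example.com`). It does not query DNS, evaluate SPF mechanisms, or check alignment, and it cannot check DKIM at all, because that needs a selector and a real signed message. A clean result here does not mean your mail will pass. The function names are hypothetical.

```python
from typing import Dict, List, Optional

VALID_POLICIES = {"none", "quarantine", "reject"}


def parse_dmarc(record: str) -> Dict[str, str]:
    """Parse a DMARC TXT record such as "v=DMARC1; p=reject" into a tag -> value dict."""
    tags: Dict[str, str] = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate trailing or doubled semicolons
        key, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed tag: {part!r}")
        tags[key.strip().lower()] = value.strip()
    # RFC 7489 requires "v=DMARC1" to be the first tag in the record.
    if not tags or next(iter(tags)) != "v" or tags["v"] != "DMARC1":
        raise ValueError("record must begin with v=DMARC1")
    return tags


def auth_problems(spf_record: Optional[str], dmarc_record: Optional[str]) -> List[str]:
    """List syntax problems in SPF and DMARC TXT records you have already looked up."""
    problems = []
    spf_terms = (spf_record or "").split()
    if not spf_terms or spf_terms[0].lower() != "v=spf1":
        problems.append("SPF: missing or not a v=spf1 record")
    if dmarc_record is None:
        problems.append("DMARC: no record published")
    else:
        try:
            policy = parse_dmarc(dmarc_record).get("p", "").lower()
        except ValueError as exc:
            problems.append(f"DMARC: {exc}")
        else:
            if policy not in VALID_POLICIES:
                problems.append("DMARC: p= must be none, quarantine, or reject")
    return problems


print(auth_problems("v=spf1 include:_spf.google.com ~all",
                    "v=DMARC1; p=quarantine; rua=mailto:dmarc@example.com"))
```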

Warm-up timelines have stretched too. Domains that used to be ready in three weeks now take 6-8 weeks before you can trust volume, per practitioners on r/coldemail.
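One way to plan that stretch is a simple linear weekly ramp, sketched below. The starting volume (5 per inbox per day), the target (40), and the choice of a linear ramp are all hypothetical; warm-up tools often ramp differently. The 8 weeks come from the upper end of the 6-8 week range above.

```python
def warmup_schedule(start_per_day: int, target_per_day: int, weeks: int) -> list:
    """Hypothetical linear ramp: daily send cap for each week, from start to target."""
    if weeks < 1:
        raise ValueError("weeks must be at least 1")
    if start_per_day < 0 or target_per_day < 0:
        raise ValueError("volumes must be non-negative")
    if weeks == 1:
        return [target_per_day]
    step = (target_per_day - start_per_day) / (weeks - 1)
    return [round(start_per_day + step * week) for week in range(weeks)]


# Week-by-week daily cap for one inbox over 8 weeks.
print(warmup_schedule(5, 40, 8))
```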

Bad Data Kills Everything

Here's a case from r/coldemail that illustrates the problem perfectly. Someone ran a campaign - 256 emails sent, 10.55% open rate, 7% bounce rate, zero conversions. They asked whether to fix their subject line or their personalization.

The answer was neither. A 7% bounce rate is a flashing red alarm. Bad data leads to bounces, bounces lead to inbox providers flagging your domain, reputation damage pushes you to the spam folder, and then you're looking at 0% opens. If your bounce rate is above 3%, stop tweaking subject lines. Your list is the problem.
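The 3% rule is easy to automate before every send. In this minimal sketch, bounce rate is computed as bounced divided by sent, which is an assumption, since some tools divide by a different base. The bounce count for the r/coldemail case is an approximation: 7% of 256 sends works out to about 18 addresses.

```python
BOUNCE_ALARM = 0.03  # above 3%: stop tweaking copy and fix the list


def bounce_rate(bounced: int, sent: int) -> float:
    """Assumption: bounce rate = bounced / sent."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    return bounced / sent


def list_needs_fixing(bounced: int, sent: int) -> bool:
    return bounce_rate(bounced, sent) > BOUNCE_ALARM


# The r/coldemail campaign: about 18 bounces out of 256 sends.
print(f"{bounce_rate(18, 256):.1%}", list_needs_fixing(18, 256))
```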

Stack Optimize grew to $1M ARR using Prospeo's verified data - 94%+ deliverability, under 3% bounce, zero domain flags across all clients. Meritt switched and went from a 35% bounce rate to under 4%, tripling their pipeline. The common thread? Verified data before anything else. With 300M+ professional profiles refreshed every 7 days versus the 6-week industry average, you're not emailing people who changed jobs two months ago. (If you want a deeper breakdown, start with email bounce rate benchmarks and fixes.)


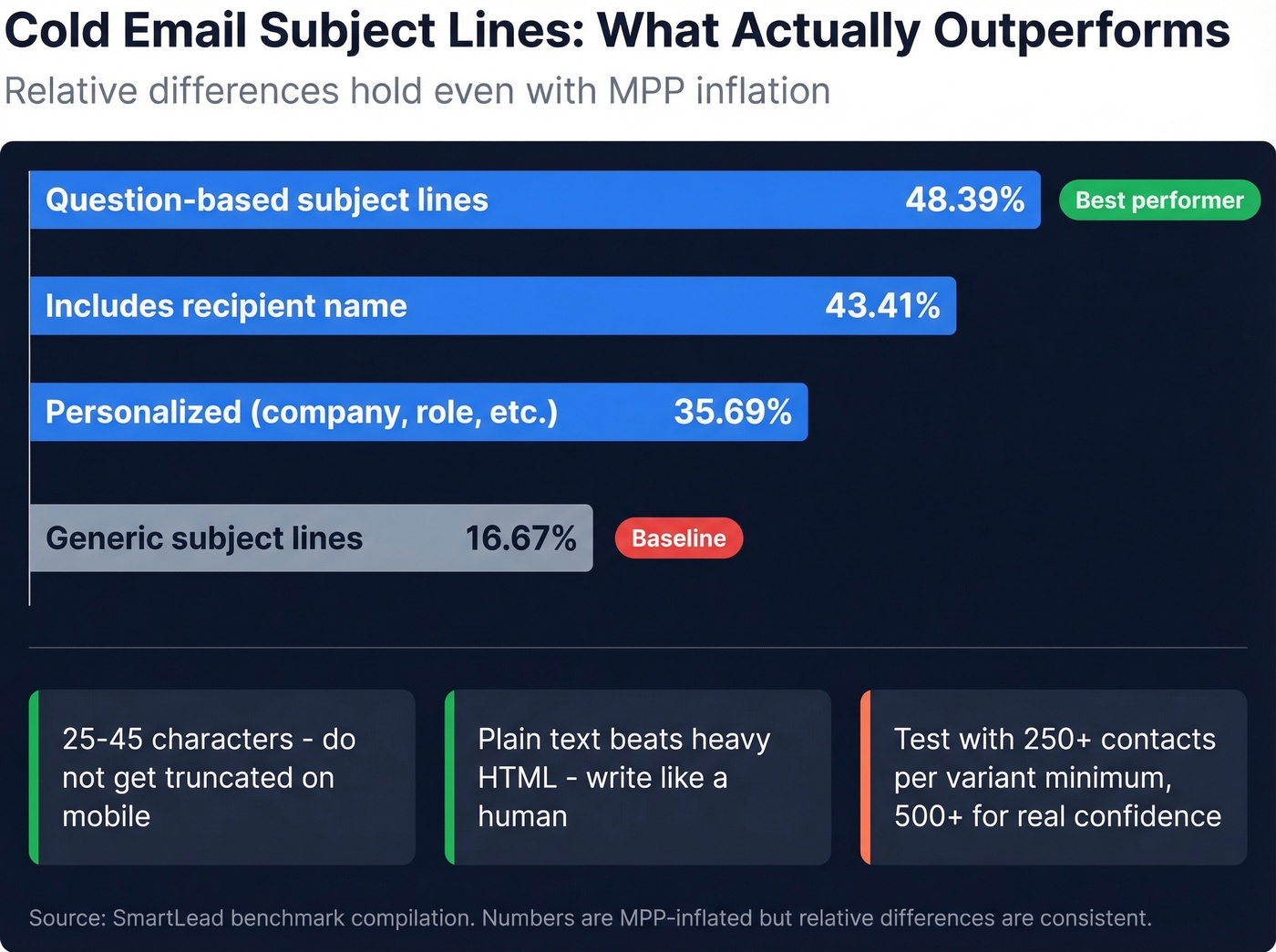

Subject Lines: What Works

Forget everything you've read about emoji subject lines and clickbait. SmartLead's compilation of benchmark data shows personalized subject lines pulling 35.69% opens versus 16.67% for generic ones. Question-based subject lines hit 48.39%. Including the recipient's name lands at 43.41%.

Those numbers are MPP-inflated, but the relative differences hold. Personalization outperforms generic. Questions outperform statements. Keep subject lines to 25-45 characters so they don't get truncated on mobile, where many clients cut off after 33-43 characters.

If you need a starting point, pull from proven cold email subject line examples and then test.
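To check drafts against those length guidelines, a small helper like the one below is enough. It uses the conservative end (33 characters) of the mobile cutoff range. It counts Unicode code points, not rendered width, so emoji and wide characters may display differently. "Acme" is a placeholder company.

```python
MIN_LEN, MAX_LEN = 25, 45  # recommended subject length range
MOBILE_CUTOFF = 33         # conservative end of the 33-43 mobile truncation range


def subject_warnings(subject: str) -> list:
    """Return warnings for a draft subject line; an empty list means it fits the guideline."""
    n = len(subject)  # Unicode code points, not on-screen width
    warnings = []
    if n < MIN_LEN:
        warnings.append(f"{n} chars: shorter than {MIN_LEN}")
    elif n > MAX_LEN:
        warnings.append(f"{n} chars: longer than {MAX_LEN}")
    if n > MOBILE_CUTOFF:
        warnings.append(f"may show as {subject[:MOBILE_CUTOFF]!r} on narrow screens")
    return warnings


for draft in ["Quick question about Acme's Q3 hiring plan", "Question about your Q3 plan"]:
    print(draft, "->", subject_warnings(draft) or "ok")
```

The first draft fits the 25-45 range but still gets flagged for truncation. The two guidelines overlap, so previewing the cut-off version is worth the extra second.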

One thing that holds up in cold outreach: plain text typically beats heavy HTML. Heavily branded emails with logos and formatting trigger spam filters and signal "marketing blast." Write like a human, not a design team.

When you A/B test, use 250+ contacts per variant minimum - 500+ for real statistical confidence. Test one variable at a time. And measure reply rate, not opens.
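The article doesn't name a significance test. One common choice for comparing two reply rates is a two-proportion z-test, sketched here with only the standard library. It relies on the normal approximation, which gets shaky when either variant has very few replies. The reply counts in the example are hypothetical.

```python
import math


def two_proportion_z_test(replies_a: int, sent_a: int,
                          replies_b: int, sent_b: int) -> tuple:
    """Two-sided z-test for a difference between two reply rates (normal approximation)."""
    if sent_a <= 0 or sent_b <= 0:
        raise ValueError("each variant needs at least one send")
    rate_a = replies_a / sent_a
    rate_b = replies_b / sent_b
    pooled = (replies_a + replies_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        raise ValueError("both variants have a 0% (or 100%) reply rate; nothing to compare")
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value: P(|Z| >= |z|) for a standard normal Z equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical test: variant A gets 20 replies from 500, variant B gets 10 from 500.
z, p = two_proportion_z_test(20, 500, 10, 500)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Even though variant A doubles the reply rate here (4% vs 2%), z comes out around 1.85 and the two-sided p around 0.06, just short of the conventional 0.05 cutoff. Reply rates are small numbers, so you need volume before a "winner" means anything.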

Timing and Length

Best days: Tuesday and Wednesday pull the highest reply rates. Friday generates an auto-reply surge - not engagement. (More detail: best time to send cold emails.)

Email length: Sub-80-word emails drive the best reply rates. Get in, make your point, get out.

Follow-ups matter. Instantly's benchmark shows 58% of replies come from step 1 and 42% from follow-ups. The 3-7-7 cadence - first follow-up 3 days after the initial email, the second 7 days after that, and the third 7 days later - captures 93% of replies by day 10. But returns diminish sharply after step 4-5, and spam complaints escalate on later touches. If you're already getting spam complaints on step 2 or 3, don't add more follow-ups - they won't fix a targeting problem. If you want plug-and-play copy, use these cold email follow-up templates.
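Turning the cadence into actual send dates is simple arithmetic. This sketch reads 3-7-7 as gaps between consecutive emails, which is the reading consistent with "93% of replies by day 10" (day 10 being the second follow-up). It counts calendar days and ignores weekends. The start date is hypothetical.

```python
from datetime import date, timedelta


def send_days(gaps: list) -> list:
    """Convert gaps between steps (e.g. 3-7-7) into day offsets from the first email."""
    days = [0]
    for gap in gaps:
        if gap <= 0:
            raise ValueError("gaps must be positive")
        days.append(days[-1] + gap)
    return days


def send_dates(start: date, gaps: list) -> list:
    """Calendar dates for each step; weekends are not skipped in this sketch."""
    return [start + timedelta(days=offset) for offset in send_days(gaps)]


print(send_days([3, 7, 7]))
print(send_dates(date(2026, 3, 2), [3, 7, 7]))
```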

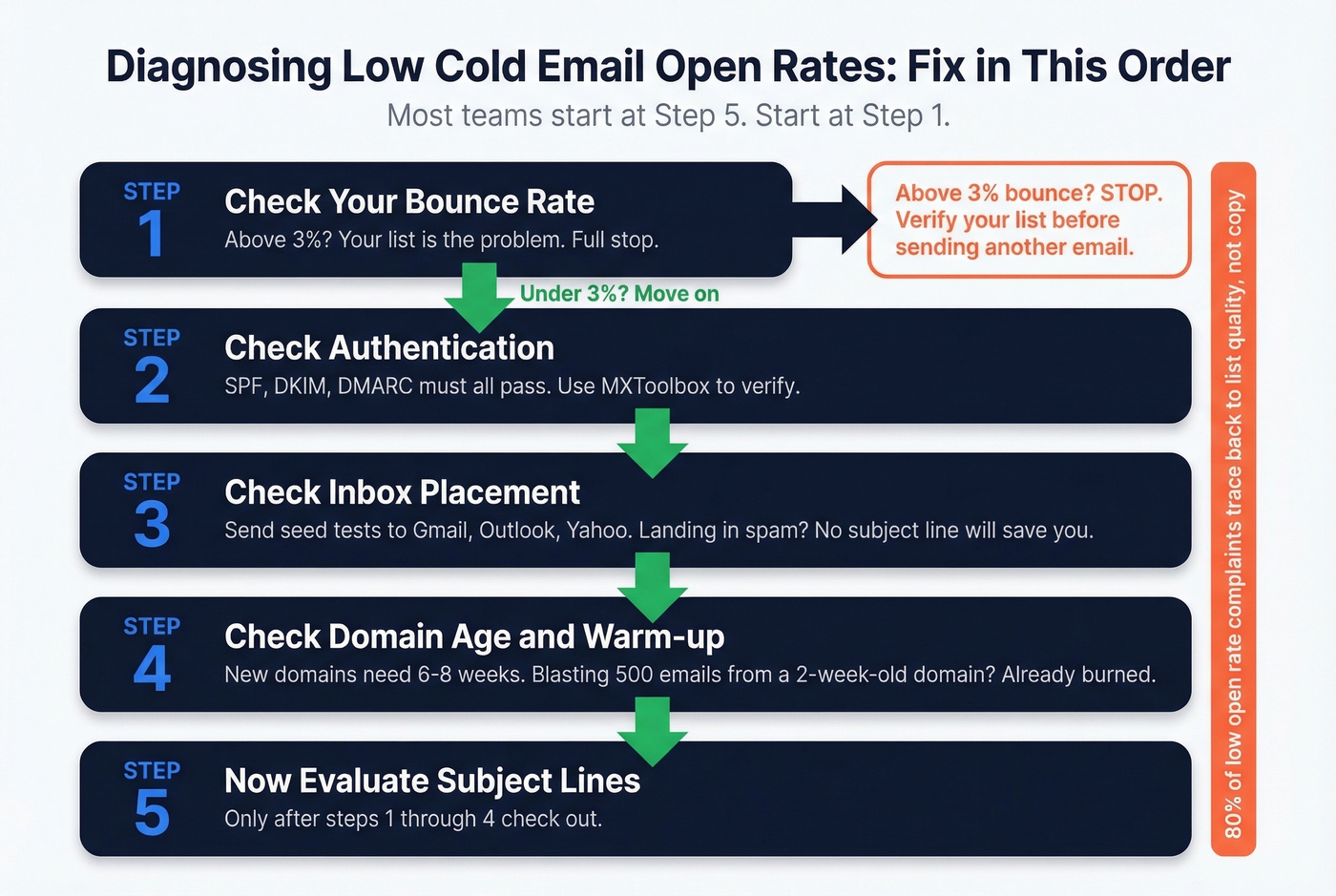

Diagnosing Low Opens: A 5-Step Fix

When open rates tank, most people jump to subject line testing. That's step five, not step one.

Step 1: Check your bounce rate. Above 3%? Your list is the problem. Full stop. Run your contacts through a verification tool before sending another email.

Step 2: Check authentication. SPF, DKIM, and DMARC must all pass. Use MXToolbox to verify. One misconfigured record can tank deliverability across your entire domain. (If you’re troubleshooting, this guide on how to verify DKIM is working helps.)

Step 3: Check inbox placement. Send seed tests to accounts across Gmail, Outlook, and Yahoo. Some practitioners prefer tracking inbox placement rate - the percentage of emails that land in the primary inbox - as a more honest proxy than open rate. If you're landing in spam on any major provider, no subject line will save you.

Step 4: Check domain age and warm-up. New domains need 6-8 weeks of gradual warm-up. Blasting 500 emails a day from a two-week-old domain? You've already burned it. (Related: email velocity limits and safe ramp schedules.)

Step 5: Now evaluate subject lines. Only after steps 1-4 check out.
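The five-step order can be encoded as a small triage function that stops at the first failure. This is a hypothetical sketch: the `CampaignHealth` fields are made-up names for numbers you would pull from your own sending tool and seed tests, and the thresholds (3% bounce, any spam placement, 6 weeks of warm-up) are the ones stated in the steps.

```python
from dataclasses import dataclass


@dataclass
class CampaignHealth:
    bounce_rate: float       # bounced / sent, e.g. 0.07 for 7%
    spf_pass: bool
    dkim_pass: bool
    dmarc_pass: bool
    providers_in_spam: int   # major providers where seed tests landed in spam
    warmup_weeks: float      # weeks of gradual warm-up on the sending domain


def first_problem(h: CampaignHealth) -> str:
    """Walk steps 1-5 in order and report the first one that fails."""
    if h.bounce_rate > 0.03:
        return "Step 1: bounce rate above 3% - verify the list before sending again"
    if not (h.spf_pass and h.dkim_pass and h.dmarc_pass):
        return "Step 2: fix SPF/DKIM/DMARC authentication"
    if h.providers_in_spam > 0:
        return "Step 3: seed tests are landing in spam on at least one provider"
    if h.warmup_weeks < 6:
        return "Step 4: under 6 weeks of warm-up - slow down"
    return "Step 5: steps 1-4 check out - now test subject lines"


# The Reddit case: 7% bounce. The other fields are hypothetical.
reddit = CampaignHealth(bounce_rate=0.07, spf_pass=True, dkim_pass=True,
                        dmarc_pass=True, providers_in_spam=0, warmup_weeks=8)
print(first_problem(reddit))
```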

That Reddit case - 10.55% opens, 7% bounce - is a textbook Step 1 failure. In our experience, 80% of "low open rate" complaints trace back to list quality, not copy. Let's be honest: most teams want the problem to be their subject line because that's a quick fix. The real fix is usually less fun.

Should You Even Track Opens?

Open rate in 2026 is a health check, not a performance metric. It's like foot traffic in a store - useful for knowing the lights are on, useless for measuring whether your pitch is working.

Reply rate is the metric that matters. The largest 2026 cold email dataset gives you the anchors: 3.43% average, 5.5%+ for top quartile, 10.7%+ for elite campaigns. Timeline-based hooks - referencing a trigger event or deadline - average 10.01% reply rates versus 4.39% for generic problem hooks. That's a 2.3x gap worth optimizing for. (If you’re building sequences end-to-end, see B2B cold email sequence structure and benchmarks.)
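Here is a minimal way to place a campaign against those anchors. It assumes reply rate is replies divided by delivered emails (the article doesn't pin down the denominator), and the campaign numbers are hypothetical. The last line reproduces the 2.3x hook gap from the quoted rates.

```python
# Reply-rate anchors quoted above, checked from highest to lowest.
TIERS = [
    (0.107, "elite"),
    (0.055, "top quartile"),
    (0.0343, "at or above average"),
]


def reply_rate(replies: int, delivered: int) -> float:
    """Assumption: reply rate = replies / delivered."""
    if delivered <= 0:
        raise ValueError("delivered must be positive")
    return replies / delivered


def reply_tier(replies: int, delivered: int) -> str:
    rate = reply_rate(replies, delivered)
    for threshold, label in TIERS:
        if rate >= threshold:
            return label
    return "below average"


# Hypothetical campaign: 30 replies from 500 delivered emails (6%).
print(reply_tier(30, 500))            # top quartile
# Timeline hooks vs generic problem hooks: 10.01% vs 4.39%.
print(f"{0.1001 / 0.0439:.1f}x")      # 2.3x
```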

Disabling open tracking can actually improve deliverability, because tracking pixels can be a negative signal for some filters. If you want one number to obsess over, make it positive reply rate. For a secondary signal, watch bounce rate - it tells you more about campaign health than your cold email open rate dashboard ever will.

FAQ

What's a good cold email open rate in 2026?

On a warmed domain with verified contacts, 27-60% is commonly reported - but Apple MPP inflates this significantly, so your real human open rate is lower. Focus on reply rate instead: 3.43% is average and 5.5%+ is strong across billions of sends analyzed in 2026.

Why is my open rate below 15%?

Almost always deliverability or data quality, not your subject line. Check your bounce rate first - above 3% signals bad list data damaging your sender reputation. Then verify SPF, DKIM, and DMARC authentication. Subject lines are the last thing to optimize, not the first.

Are open rates even accurate anymore?

No. Apple Mail Privacy Protection preloads tracking pixels via proxy servers, registering "opens" before any human reads the email. With Apple devices representing roughly half of all email opens, your reported numbers are significantly inflated. Corporate security scanners add further noise.

What tools help fix bad deliverability?

Start with email verification - Prospeo's 5-step verification process (98% accuracy, catch-all handling, spam-trap and honeypot removal) keeps bounce rates under 3%. Pair verification with GlockApps or MailReach for inbox placement testing and warm-up monitoring.

Should I track open rate or reply rate?

Reply rate. Opens are inflated by Apple MPP and bot activity, making them unreliable for measuring campaign performance. Reply rate measures actual human engagement and correlates directly with pipeline. Use open rate only as a directional health check - if it drops below 15%, investigate deliverability.