How to Build a Lead Qualification Matrix That Sales Won't Ignore

It's Monday morning. Your SDR opens the CRM, sees 200 "qualified" leads from last week's campaign, and starts dialing. By noon, half the phone numbers are dead, a third of the contacts have left their companies, and the ones who pick up have zero budget authority. Only 27% of leads sent to sales are actually qualified. The other 73% waste your team's best hours.

Over on r/LeadGeneration, someone asked whether lead scoring can even be "accurate and reliable" - and that question keeps coming up because the underlying system is usually broken.

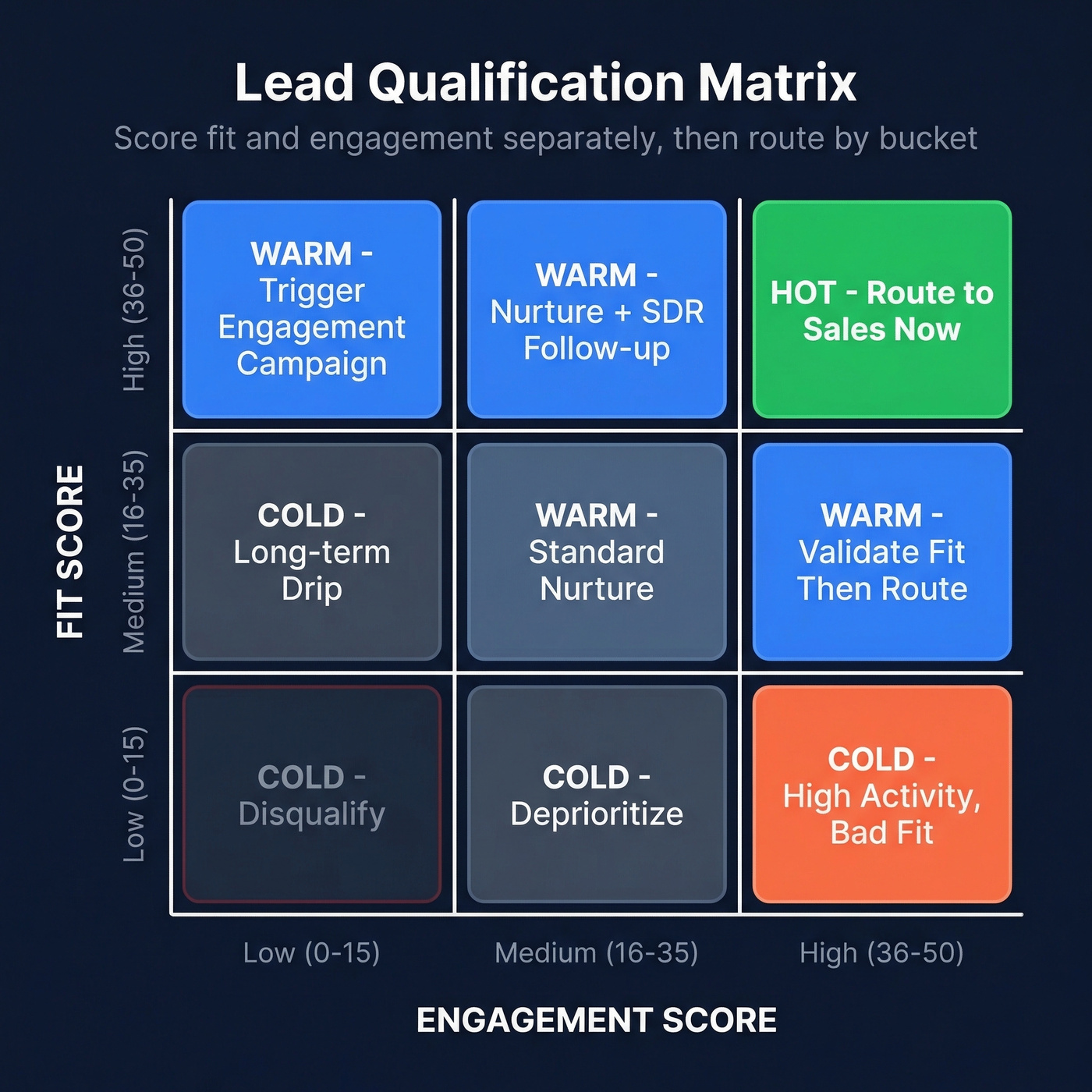

A lead qualification matrix fixes this by scoring every lead on two separate axes - fit and engagement - then routing them into hot, warm, or cold buckets. You need one framework to define your criteria, a 0-100 scoring model with clear thresholds, clean underlying data, and a quarterly recalibration habit. Let's break it down.

What Is a Lead Qualification Matrix?

Most teams run a single lead score. One number that mashes firmographic fit and behavioral signals into an opaque composite. A director at a perfect-fit company who's never visited your site gets the same score as an individual contributor who downloaded every whitepaper you've published. One deserves a call. The other deserves a nurture sequence. A single number can't tell you which is which.

A qualification matrix separates those dimensions. One axis measures fit - company size, industry, seniority, tech stack. The other measures engagement - demo requests, pricing page visits, content downloads, email activity. The intersection creates a grid with distinct action buckets: hot (sales-ready), warm (nurture), and cold (disqualify or deprioritize).

Why Scoring Fit and Engagement Separately Matters

The ROI gap is stark. Organizations with lead scoring see 138% ROI versus 78% without. Teams with mature qualification processes hit 9.3% higher sales quota attainment and 26% higher conversion rates. Yet only about 44% of organizations score leads at all.

That's a massive competitive edge sitting on the table.

When reps trust the scoring system, they stop cherry-picking leads based on gut feel and start working the list in priority order. Pipeline velocity goes up. Marketing stops hearing "these leads are garbage" in every standup. The matrix aligns your entire revenue team around a shared definition of "qualified" - and in our experience, that shared definition is worth more than any individual scoring tweak.

Which Framework Feeds Your Matrix?

Your matrix needs criteria, and those criteria should come from a proven qualification framework.

| Framework | Criteria | Best For | Weakness |
|---|---|---|---|
| BANT | Budget, Authority, Need, Timeline | High-velocity SMB/inbound | Fails in multi-stakeholder deals |
| MEDDIC | Metrics, Economic Buyer, Decision Criteria/Process, Pain, Champion | Enterprise / six-figure ACV | Slows deals if applied rigidly |
| CHAMP | Challenges, Authority, Money, Prioritization | Pain-first mid-market | Reps skip budget questions |
| GPCT | Goals, Plans, Challenges, Timeline | Strategic / longer-cycle B2B | Too slow for transactional sales |

Here's the thing: consistency matters more than which framework you pick. One team switching from BANT to MEDDIC improved forecast accuracy from 62% to 89% - not because MEDDIC is magic, but because they finally had a shared language that matched their deal complexity. A practitioner on r/sales boiled it down even further: requirements, budget, competition. If a lead fails on any of those three, disqualify fast.

Pick the framework that matches your sales motion. Then translate its criteria into scoreable fields.

Your qualification matrix scores fit criteria like company size, industry, and tech stack - but those scores are worthless if the underlying contact data is stale. Prospeo refreshes 300M+ profiles every 7 days and returns 50+ data points per contact, so every field in your matrix is current and scoreable.

Stop scoring leads against data that expired six weeks ago.

Building Your Matrix - A B2B Example

Let's build a real one. We'll use a 0-100 scale split evenly: fit criteria worth 0-50 points and engagement criteria worth 0-50 points. Keep these scores separate until the final routing step.

Fit Criteria (0-50 Points)

| Criterion | 10 pts | 5 pts | 0 pts |
|---|---|---|---|
| Company size | 50-500 employees | 500-5,000 | <50 or >5,000 |
| Industry | SaaS / fintech | Adjacent tech | Non-tech |
| Seniority | VP+ / Director | Manager | Individual contributor |
| Tech stack | Uses your integration | Uses competitor | Unknown |
| Region | Target market | Adjacent market | Outside market |

A perfect-fit lead maxes out at 50 points across all five criteria.
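If it helps to see the fit table as logic, here's a minimal Python sketch. The `Lead` class, its field names, and the category labels (`"saas"`, `"integration"`, and so on) are hypothetical placeholders, not fields from any particular CRM. The table lists 500 employees in both the 10-point and 5-point bands, so this sketch assumes exactly 500 scores 10.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    # Hypothetical field names; map these to whatever your CRM exposes.
    employees: int
    industry: str    # "saas", "fintech", "adjacent_tech", or anything else
    seniority: str   # "vp", "director", "manager", or anything else
    tech_stack: str  # "integration", "competitor", or "unknown"
    region: str      # "target", "adjacent", or "outside"


# Point values copied from the fit table; anything unlisted scores 0.
INDUSTRY_POINTS = {"saas": 10, "fintech": 10, "adjacent_tech": 5}
SENIORITY_POINTS = {"vp": 10, "director": 10, "manager": 5}
TECH_STACK_POINTS = {"integration": 10, "competitor": 5}
REGION_POINTS = {"target": 10, "adjacent": 5}


def size_points(employees: int) -> int:
    """Company-size band. Assumption: exactly 500 falls in the 10-point band."""
    if 50 <= employees <= 500:
        return 10
    if 500 < employees <= 5000:
        return 5
    return 0


def fit_score(lead: Lead) -> int:
    """Sum the five fit criteria for a 0-50 result."""
    return (
        size_points(lead.employees)
        + INDUSTRY_POINTS.get(lead.industry, 0)
        + SENIORITY_POINTS.get(lead.seniority, 0)
        + TECH_STACK_POINTS.get(lead.tech_stack, 0)
        + REGION_POINTS.get(lead.region, 0)
    )
```

Using dictionary lookups with a default of 0 means an unknown or missing value quietly scores nothing, which mirrors the "Unknown" column in the table.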

Engagement Criteria (0-50 Points)

| Signal | Points |
|---|---|
| Demo request | 20 |
| Pricing page visit (2+) | 15 |
| Case study download | 10 |
| Email opened in last 30 days | 5 |

Not all lead sources are equal. A webinar attendee converts at roughly 11.2% versus 1.8% for organic traffic, so weight source channel accordingly in your engagement score.
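The engagement table translates into a similarly small function. This is a sketch under assumptions: the parameter names are hypothetical, and source-channel weighting is left out because the right multipliers depend on your own conversion data.

```python
from datetime import date, timedelta
from typing import Optional


def engagement_score(
    demo_requested: bool,
    pricing_page_visits: int,
    case_study_downloaded: bool,
    last_email_open: Optional[date],
    today: date,
) -> int:
    """Sum the four engagement signals from the table for a 0-50 result."""
    score = 0
    if demo_requested:
        score += 20
    if pricing_page_visits >= 2:  # the table only credits two or more visits
        score += 15
    if case_study_downloaded:
        score += 10
    # Credit an email open only if it happened within the last 30 days.
    if last_email_open is not None:
        age = today - last_email_open
        if timedelta(0) <= age <= timedelta(days=30):
            score += 5
    # Cap at 50 so extra signals added later can't overflow the axis.
    return min(score, 50)
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the score reproducible when you re-run it against historical leads.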

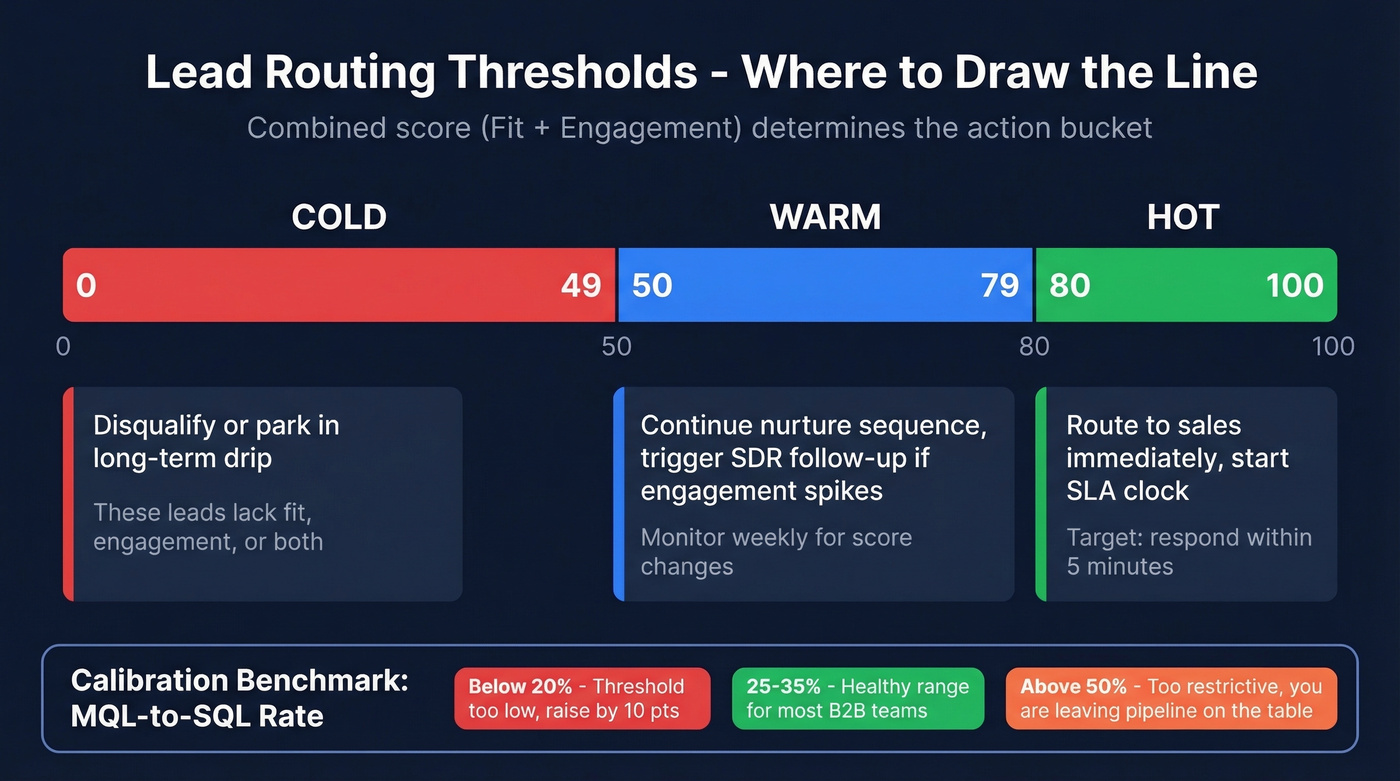

Routing Thresholds

- 80+ combined - Hot. Route to sales immediately.

- 50-79 combined - Warm. Continue nurture, trigger SDR follow-up if engagement spikes.

- Below 50 - Cold. Disqualify or park in a long-term drip.
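The three thresholds above can be sketched as a routing function. The function name and the tier strings are illustrative; the defaults of 80 and 50 come straight from the list, and they're parameters so a quarterly recalibration only changes two numbers.

```python
def route(fit: int, engagement: int, hot: int = 80, warm: int = 50) -> str:
    """Combine the two axis scores only at routing time and return a tier."""
    total = fit + engagement
    if total >= hot:
        return "hot"   # route to sales immediately
    if total >= warm:
        return "warm"  # keep nurturing, watch for engagement spikes
    return "cold"      # disqualify or park in a long-term drip
```

For example, `route(45, 40)` lands in the hot tier, while a perfect-fit lead with no engagement, `route(50, 0)`, stays warm.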

Calibrate against your MQL-to-SQL conversion rate. Typical B2B teams land at 25-35%. Below 20% means your threshold is too low. Above 50% means you're leaving pipeline on the table. Adjust quarterly.
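A quick way to run that calibration check each quarter is sketched below. The function name and return strings are hypothetical; the 20% and 50% bounds are the ones stated above.

```python
def threshold_advice(mqls: int, sqls: int) -> str:
    """Compare the MQL-to-SQL rate with the 20% and 50% guardrails."""
    if mqls <= 0:
        raise ValueError("need at least one MQL to compute a conversion rate")
    rate = sqls / mqls
    if rate < 0.20:
        return "raise threshold"  # too many unqualified leads reach sales
    if rate > 0.50:
        return "lower threshold"  # qualifying too strictly, pipeline left behind
    return "hold"
```

With 200 MQLs and 30 SQLs the rate is 15%, so the function suggests raising the threshold; 60 SQLs from the same 200 (30%) returns `"hold"`.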

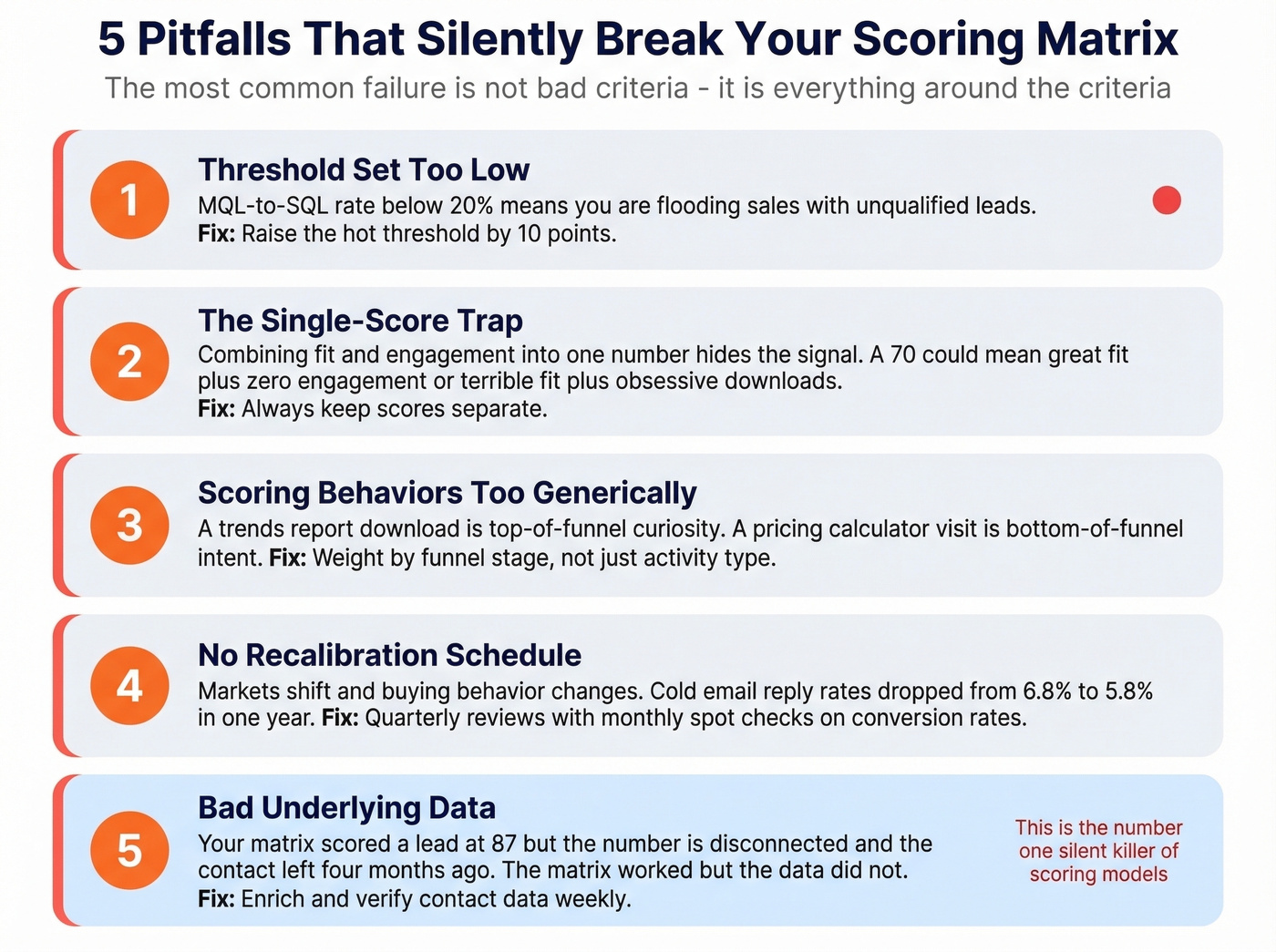

Common Pitfalls That Break Your Matrix

Look, the most common failure isn't bad criteria - it's everything around the criteria. We've watched teams build elegant scoring models and still get terrible results because of these five mistakes:

Threshold set too low. If your MQL-to-SQL rate is below 20%, you're flooding sales with unqualified leads. Raise the hot threshold by 10 points and measure again.

The single-score trap. Combining fit and engagement into one number hides the signal. On the 0-100 scale above, a score of 50 could mean perfect fit plus zero engagement, or terrible fit plus obsessive content consumption. Teams that keep scores separate see faster sales adoption because reps can instantly see why a lead is hot.
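To make the trap concrete on this article's 0-50 plus 0-50 scale, here's a tiny illustrative sketch. The `Scores` type and `explain` helper are hypothetical names, not part of any CRM.

```python
from typing import NamedTuple


class Scores(NamedTuple):
    fit: int         # 0-50
    engagement: int  # 0-50

    @property
    def total(self) -> int:
        return self.fit + self.engagement


def explain(s: Scores) -> str:
    """Show reps both axes instead of only the composite number."""
    return f"fit {s.fit}/50, engagement {s.engagement}/50"


perfect_fit_silent = Scores(fit=50, engagement=0)
poor_fit_active = Scores(fit=0, engagement=50)

# Identical composite score, opposite profiles.
same_total = perfect_fit_silent.total == poor_fit_active.total
```

Both leads total 50, but `explain` returns different strings for each, which is the information a rep needs to decide between a call and a nurture sequence.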

Scoring behaviors too generically. A "2026 trends report" download is top-of-funnel curiosity. A buyer's guide or pricing calculator is bottom-of-funnel intent. Weight them differently - or your matrix treats tire-kickers like buyers.

No recalibration. Markets shift and buying behavior changes. Cold email reply rates dropped from 6.8% in 2023 to 5.8% in 2024, and the trend hasn't reversed. Run quarterly reviews with monthly spot checks on conversion rates.

Bad underlying data. This one's frustrating because it's invisible until a rep picks up the phone. Your matrix scored a lead at 87. Your SDR calls - the number is disconnected, the email bounces, the contact left four months ago. The matrix worked; the data didn't. We've seen matrices fail more often from stale data than bad criteria, which is why Prospeo refreshes its 300M+ professional profiles every 7 days and verifies emails at 98% accuracy - so your scores reflect actual humans, not ghosts.

Implementing in Your CRM

Set up these custom properties: Fit Score, Engagement Score, Total Score, Qualification Tier (hot/warm/cold), Lifecycle Stage, Owner, and SLA Clock. In HubSpot, manual scoring starts at Professional tier; predictive scoring is Enterprise-only. In Salesforce, build a formula field and use assignment rules to route by tier.

High-alignment teams hit MQL-to-SQL rates of 40-50%. If you're nowhere near that, the matrix isn't your problem - your ICP definition is. And for teams already hitting those numbers, early adopters of AI-powered predictive scoring are reporting roughly 20% revenue lifts, so bolt predictive on top of your lead qualification matrix once the manual version is dialed in.

Before you score anything, enrich your database. Missing fields mean missing points, which means misrouted leads. If your CRM doesn't have seniority, tech stack, or company size filled in for most contacts, skip the matrix build and fix the data first - tools like Prospeo's CRM enrichment return 50+ data points per contact at a 92% match rate, which fills exactly the gaps your fit score depends on.
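One way to check whether your data is ready is to measure fill rates on the fit fields before building anything. In this sketch the field names are hypothetical, each contact is assumed to be a plain dictionary exported from your CRM, and "most contacts" is interpreted as more than half.

```python
REQUIRED_FIT_FIELDS = ("company_size", "industry", "seniority", "tech_stack", "region")


def fill_rates(contacts: list[dict]) -> dict[str, float]:
    """Share of contacts with a non-empty value for each fit field."""
    total = len(contacts)
    if total == 0:
        return {field: 0.0 for field in REQUIRED_FIT_FIELDS}
    return {
        field: sum(1 for c in contacts if c.get(field) not in (None, "")) / total
        for field in REQUIRED_FIT_FIELDS
    }


def under_filled(contacts: list[dict], minimum: float = 0.5) -> list[str]:
    """Fields populated for `minimum` of contacts or fewer (assumed cutoff: half)."""
    rates = fill_rates(contacts)
    return [field for field in REQUIRED_FIT_FIELDS if rates[field] <= minimum]
```

If `under_filled` returns anything, those are the fields to enrich first, since every empty one silently scores 0 on the fit axis.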

If you're evaluating vendors, start with a shortlist of data enrichment services and a clean set of firmographic and technographic data requirements.

Bad underlying data is the silent matrix killer. Reps dial dead numbers, email bounced addresses, and chase contacts who left their companies months ago. Prospeo delivers 98% email accuracy, 125M+ verified mobiles with a 30% pickup rate, and an 83% enrichment match rate - so your hot-bucket leads actually convert.

Feed your scoring model data that sales will actually trust.

FAQ

What's the difference between lead scoring and a lead qualification matrix?

Lead scoring assigns a single number. A lead qualification matrix uses two axes - fit and engagement - to route leads into distinct action buckets (hot, warm, cold). This prevents bad-fit leads with high engagement from getting misrouted to sales, which a single composite score can't do.

How often should I recalibrate my matrix?

Quarterly at minimum, with monthly spot checks on your MQL-to-SQL conversion rate. Below 20% means your hot threshold is too low and you're flooding reps with junk. Above 50% means you're qualifying too restrictively and leaving pipeline on the table.

Can I build a lead qualification matrix without a CRM?

Yes. A spreadsheet with fit columns, engagement columns, a scoring formula, and conditional formatting for hot/warm/cold tiers works for teams under 500 leads per month. Beyond that volume, CRM automation with HubSpot or Salesforce saves hours of manual scoring each week - especially when paired with enrichment tools that keep your contact data current.