Lead Quality Scoring: How to Build a Model Sales Won't Ignore

A founder on r/hubspot built what they thought was a bulletproof scoring model - whitepaper downloads worth +15, pricing page visits +25, case studies +10. Their highest-scoring lead, an 89, turned out to be a grad student doing research. Their biggest deal that quarter? A lead scoring 12 who booked a demo from a cold outbound message and never opened a single email.

The model measured curiosity, not buying intent. That's the problem with most lead quality scoring setups, and it's fixable.

What Lead Quality Scoring Actually Is

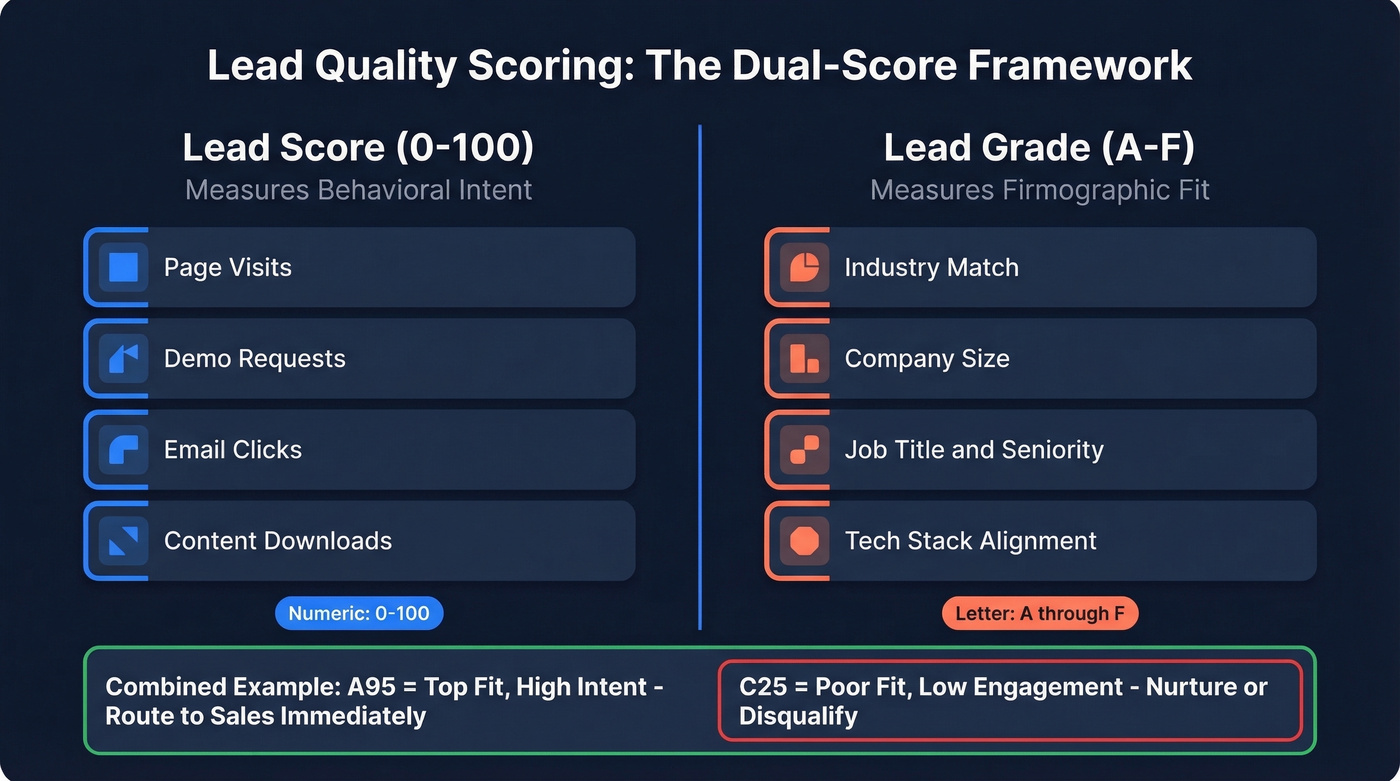

Lead quality scoring assigns a numeric or letter-grade value to every lead based on how likely they are to buy. The distinction most teams miss: a lead score (0-100) measures behavioral intent - page visits, demo requests, email engagement. A lead grade (A-F) measures firmographic fit - industry, company size, job title, tech stack.

You need both. An "A95" means top-fit, high-intent, and that lead goes to sales immediately. A "C25" is a poor-fit, low-engagement contact that belongs in nurture or gets disqualified entirely. When teams only score on engagement, they end up routing researchers and competitors to their SDRs. That's how trust in scoring dies, and once reps stop believing in the numbers, you've lost months of buy-in you won't easily get back.
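To make the grade-plus-score idea concrete, here is a minimal sketch of combining the two into a label and a routing decision. The cutoffs (grades A and B count as good fit, a score of 70+ counts as high intent) and the route names are assumptions for illustration, not values from any particular CRM.

```python
from dataclasses import dataclass

# Assumed cutoffs for illustration only; tune them against your own data.
GOOD_FIT_GRADES = {"A", "B"}
HIGH_INTENT_SCORE = 70


@dataclass(frozen=True)
class Lead:
    grade: str  # firmographic fit, "A" (best) through "F" (worst)
    score: int  # behavioral intent, 0-100


def label(lead: Lead) -> str:
    """Combine grade and score into a label such as 'A95'."""
    return f"{lead.grade}{lead.score}"


def route(lead: Lead) -> str:
    """Decide where a lead goes: fit is checked first, intent second."""
    good_fit = lead.grade in GOOD_FIT_GRADES
    high_intent = lead.score >= HIGH_INTENT_SCORE
    if good_fit and high_intent:
        return "sales"
    if good_fit:
        return "sales_call"  # high fit, low engagement: still worth a call
    return "nurture_or_disqualify"  # poor fit, whatever the engagement


print(label(Lead("A", 95)), route(Lead("A", 95)))
print(label(Lead("C", 25)), route(Lead("C", 25)))
```

Because fit is checked before intent, a poor-fit lead with a very high engagement score (the grad student scenario) never reaches an SDR on score alone.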

How to Build a Scoring Model From Real Data

Start With Your CRM, Not a Brainstorming Session

Pull the last 50 closed-won deals and 50 closed-lost or no-show deals. Look for patterns in both groups.

For fit criteria, identify which industries, company sizes, job titles, and tech stacks show up disproportionately in your wins. These become your grade inputs (A-F). For behavior signals, track which actions your closed-won leads took before converting - demo requests, pricing page visits, multiple stakeholders from the same account engaging within a short window. Typical MQL-to-SQL conversion runs 25-35% for most teams, and high-alignment orgs hit 40-50%.
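One rough way to look for those patterns is to compute the win rate per attribute value across your deal sample. The sketch below assumes each deal is a dict with a `won` flag and a fit field such as `industry`; the field names and the sample data are hypothetical.

```python
from collections import Counter


def win_rate_by(deals, field):
    """Return {value: (wins, total, win_rate)} for one fit attribute."""
    wins, totals = Counter(), Counter()
    for deal in deals:
        # Blank fields are grouped as "unknown" so data gaps stay visible.
        value = deal.get(field) or "unknown"
        totals[value] += 1
        if deal["won"]:
            wins[value] += 1
    return {v: (wins[v], totals[v], wins[v] / totals[v]) for v in totals}


# Tiny made-up sample; in practice, load your 50 won and 50 lost deals.
deals = [
    {"industry": "saas", "won": True},
    {"industry": "saas", "won": True},
    {"industry": "saas", "won": False},
    {"industry": "retail", "won": False},
    {"industry": "retail", "won": False},
    {"industry": None, "won": True},
]

rates = win_rate_by(deals, "industry")
for value, (won, total, rate) in sorted(rates.items()):
    print(f"{value}: {won}/{total} won ({rate:.0%})")
```

With only 100 deals, treat small groups with caution: one win out of one deal is not a pattern.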

Set binary disqualification rules for non-negotiables. Wrong industry, no budget authority, company size below your minimum - these leads don't get routed to sales regardless of their behavior score.
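Binary rules like these can sit in front of the score as a simple gate. The following is a sketch under assumed field names (`industry`, `has_budget_authority`, `employees`) and made-up limits; swap in your own non-negotiables.

```python
# Assumed values for illustration; replace with your own non-negotiables.
BLOCKED_INDUSTRIES = {"education", "government"}
MIN_EMPLOYEES = 20


def disqualification_reasons(lead: dict) -> list:
    """Return every hard rule the lead fails (empty list = passes the gate)."""
    reasons = []
    if lead.get("industry") in BLOCKED_INDUSTRIES:
        reasons.append("wrong industry")
    if not lead.get("has_budget_authority", False):
        reasons.append("no budget authority")
    if lead.get("employees", 0) < MIN_EMPLOYEES:
        reasons.append("company below minimum size")
    return reasons


def should_route_to_sales(lead: dict, behavior_score: int, threshold: int = 70) -> bool:
    """Hard rules win: a disqualified lead is never routed, whatever its score."""
    if disqualification_reasons(lead):
        return False
    return behavior_score >= threshold


student = {"industry": "education", "has_budget_authority": False, "employees": 1}
buyer = {"industry": "saas", "has_budget_authority": True, "employees": 150}
print(should_route_to_sales(student, 89), disqualification_reasons(student))
print(should_route_to_sales(buyer, 75))
```

Returning the list of reasons, not just a yes/no, makes it easier to show sales why a lead was held back.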

Assigning Point Values With Math, Not Gut Feel

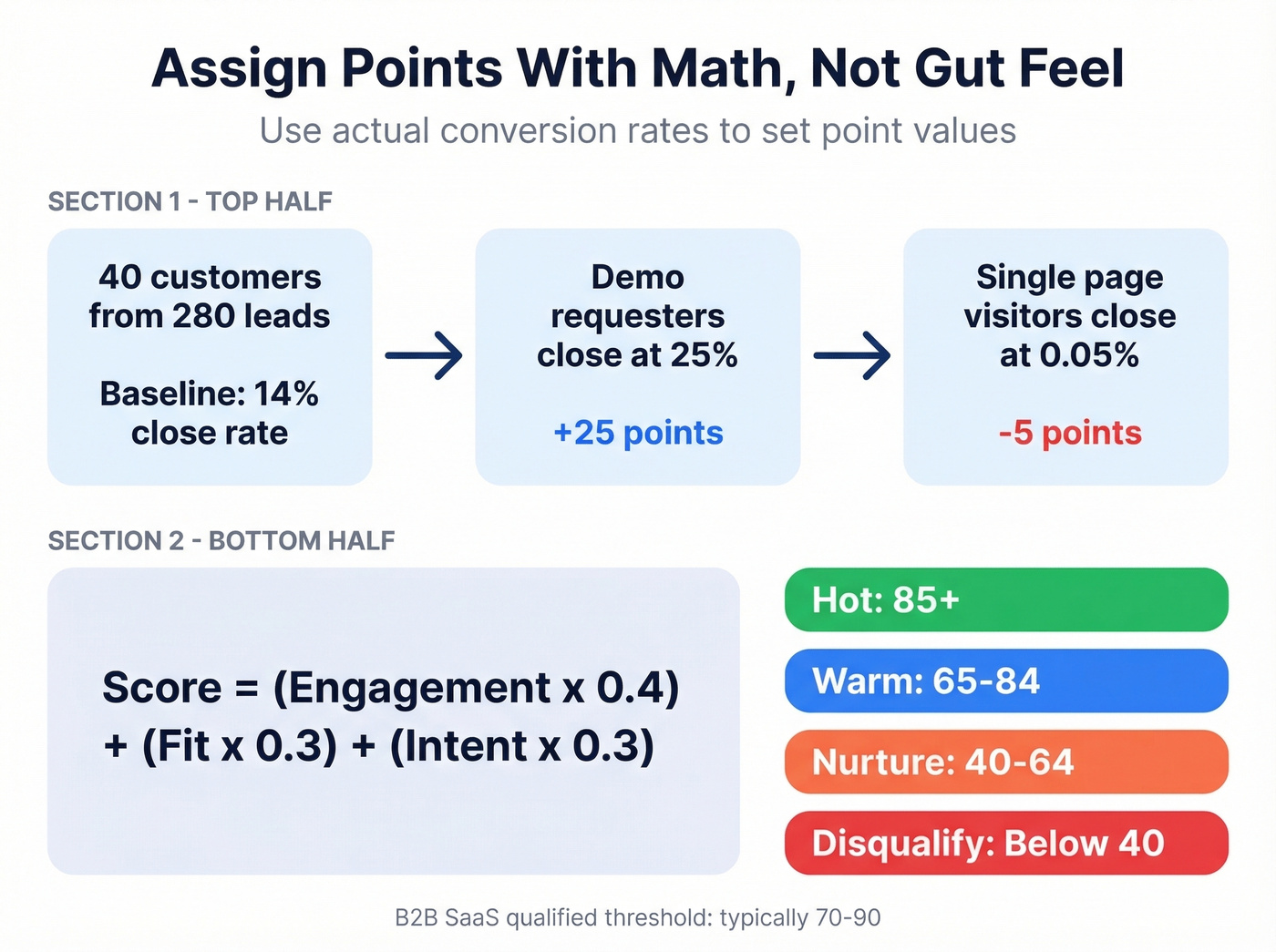

Most teams assign points based on vibes: "A webinar attendance feels like 10 points." That's how you end up with a broken model.

Use conversion rates instead. Calculate your baseline: if 40 customers came from 280 leads, your lead-to-customer rate is about 14%. Now look at individual actions. Leads who request a demo close at 25%? That's well above baseline, so the action gets 25 points. Leads who only visit your website once close at 0.05%? That's far below baseline, so the action gets negative points. The math does the work - you don't have to guess.
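The arithmetic above can be sketched in a few lines. Points for an above-baseline action equal its close rate in percent, matching the 25% to 25 points example. The size of the penalty for below-baseline actions (the shortfall from baseline in percentage points) is an assumption of this sketch, since the exact negative value is a judgment call.

```python
def action_points(action_close_rate: float, baseline_rate: float) -> int:
    """Convert an action's close rate into points.

    At or above baseline: points equal the close rate in percent (25% -> 25).
    Below baseline: negative points equal to the shortfall from baseline in
    percentage points. The penalty size is an assumption of this sketch.
    """
    if action_close_rate >= baseline_rate:
        return round(action_close_rate * 100)
    return -round((baseline_rate - action_close_rate) * 100)


baseline = 40 / 280  # 40 customers from 280 leads, about 14%
demo_points = action_points(0.25, baseline)             # demo request closes at 25%
single_visit_points = action_points(0.0005, baseline)   # single visit closes at 0.05%

print(f"baseline: {baseline:.1%}")
print(f"demo request: {demo_points:+d}")
print(f"single website visit: {single_visit_points:+d}")
```

With these inputs the demo request earns +25 and the single visit earns -14.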

A solid weighted formula: Score = (Engagement x 0.4) + (Fit x 0.3) + (Intent x 0.3). The weights sum to 1, so if each component is scored 0-100, the result stays on a 0-100 scale. Score buckets that work in practice:

- Hot: 85+

- Warm: 65-84

- Nurture: 40-64

- Disqualify: Below 40

Industry benchmarks vary. B2B SaaS typically runs 70-90 for qualified thresholds, e-commerce 60-80, healthcare 50-70.
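Here is a minimal sketch of the weighted formula and the four buckets. It assumes each component (engagement, fit, intent) has already been normalized to 0-100, which the formula itself does not specify.

```python
# Weights from the formula above; they sum to 1.0.
WEIGHTS = {"engagement": 0.4, "fit": 0.3, "intent": 0.3}


def weighted_score(engagement: float, fit: float, intent: float) -> float:
    """Score = (Engagement x 0.4) + (Fit x 0.3) + (Intent x 0.3).

    Assumes each input is already on a 0-100 scale.
    """
    return (
        engagement * WEIGHTS["engagement"]
        + fit * WEIGHTS["fit"]
        + intent * WEIGHTS["intent"]
    )


def bucket(score: float) -> str:
    """Map a 0-100 score onto the four buckets listed above."""
    if score >= 85:
        return "hot"
    if score >= 65:
        return "warm"
    if score >= 40:
        return "nurture"
    return "disqualify"


example = weighted_score(engagement=90, fit=80, intent=90)
print(round(example, 1), bucket(example))
```

If your industry's qualified threshold sits lower or higher, adjust the cutoffs in `bucket` and leave the weights alone until your conversion data says otherwise.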


Data Quality Makes or Breaks Everything

We've seen teams spend weeks building scoring models only to realize 40% of their industry fields are blank and half their contact records haven't been updated in six months. Scoring on dirty data just automates bad decisions faster.

75% of marketing teams report that inaccurate or outdated lead data actively hurts their pipeline. Over 60% say poor data disrupts lead handoffs and slows sales productivity. Before you score a single lead, make sure the underlying data is accurate and current - stale records mean you're prioritizing ghosts.
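Before assigning weights, a quick audit of fill rates and record age shows whether the data can support a model at all. This sketch assumes each record is a dict with an `updated` date and the fields you plan to score on; the field names, the 180-day staleness window, and the sample records are illustrative.

```python
from datetime import date, timedelta


def fill_rate(records, field):
    """Share of records where the field is present and non-empty."""
    if not records:
        return 0.0
    filled = sum(1 for r in records if r.get(field) not in (None, ""))
    return filled / len(records)


def stale_share(records, today, max_age_days=180):
    """Share of records not updated within the last max_age_days."""
    if not records:
        return 0.0
    cutoff = today - timedelta(days=max_age_days)
    stale = sum(1 for r in records if r["updated"] < cutoff)
    return stale / len(records)


# Made-up sample records for illustration.
records = [
    {"industry": "saas", "updated": date(2024, 6, 1)},
    {"industry": "", "updated": date(2023, 6, 1)},
    {"industry": "retail", "updated": date(2024, 5, 15)},
    {"industry": None, "updated": date(2023, 1, 10)},
    {"industry": "fintech", "updated": date(2024, 6, 20)},
]

today = date(2024, 7, 1)  # fixed date so the example is reproducible
industry_fill = fill_rate(records, "industry")
stale = stale_share(records, today)
print(f"industry filled: {industry_fill:.0%}, stale records: {stale:.0%}")
```

A field with a low fill rate is a candidate for enrichment first and scoring second.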

Enrichment platforms like Prospeo feed 50+ data points per contact into your CRM via API or native integrations with Salesforce and HubSpot, running on a 7-day refresh cycle with 98% email accuracy and an 83% enrichment match rate. That gives your model accurate firmographic and technographic fields to score against instead of blank or outdated records.

If you're cleaning up fields before scoring, start with CRM database enrichment and a repeatable data hygiene process.

Seven Scoring Mistakes That Kill Pipeline

1. Scoring on email opens. Bots and spam filters trigger opens constantly. An "open" isn't a signal - it's noise. Build scoring around clicks, page visits, and form submissions.

2. Blocking hand-raisers with formulas. If someone requests a demo, they go to sales. Full stop. Don't let a low score override explicit buying intent.

3. Not involving sales in model design. If reps don't trust the score, they'll ignore it. Get their input on what "qualified" actually means before you assign a single point value. Most scoring models fail because marketing built them in isolation.

4. Scoring on fields you don't capture consistently. If your industry field is blank on 40% of records, weighting it heavily produces garbage outputs.

5. Set-it-and-forget-it. If your model hasn't changed in six months, you're filtering out real opportunity by accident. Recalibrate quarterly using actual conversion data.

6. No negative scoring. Competitors, students, and job seekers inflate your pipeline if you don't subtract points for disqualifying signals like personal email domains or irrelevant industries. (If you want a ruleset, use a dedicated negative scoring layer.)

7. Over-engineering. A 50-variable model sounds impressive. For teams processing fewer than 500 leads per month, three questions outperform it: do they have the problem, the budget, and the authority? The consensus on Reddit threads about early-stage scoring backs this up - simple qualification beats complex models until you have the volume to justify them.
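Two of the fixes above, negative scoring and never blocking hand-raisers, are easy to express as rules. The sketch below is illustrative: the personal-domain list, the 20-point penalty, and the 70-point routing threshold are all assumptions.

```python
# Illustrative list; extend it with the domains you actually see.
PERSONAL_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com", "hotmail.com"}


def apply_negative_scoring(score: int, email: str, penalty: int = 20) -> int:
    """Subtract points for a personal email domain, never dropping below 0."""
    domain = email.rsplit("@", 1)[-1].lower()
    if domain in PERSONAL_DOMAINS:
        score -= penalty
    return max(score, 0)


def goes_to_sales(score: int, requested_demo: bool, threshold: int = 70) -> bool:
    """A demo request always routes to sales; otherwise the score decides."""
    return requested_demo or score >= threshold


researcher = apply_negative_scoring(89, "student@gmail.com")
print(researcher, goes_to_sales(researcher, requested_demo=False))
print(goes_to_sales(12, requested_demo=True))
```

The lead scoring 12 who booked a demo still reaches sales, while the 89-point researcher on a personal address drops below the threshold.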

Scoring Tools and What They Cost

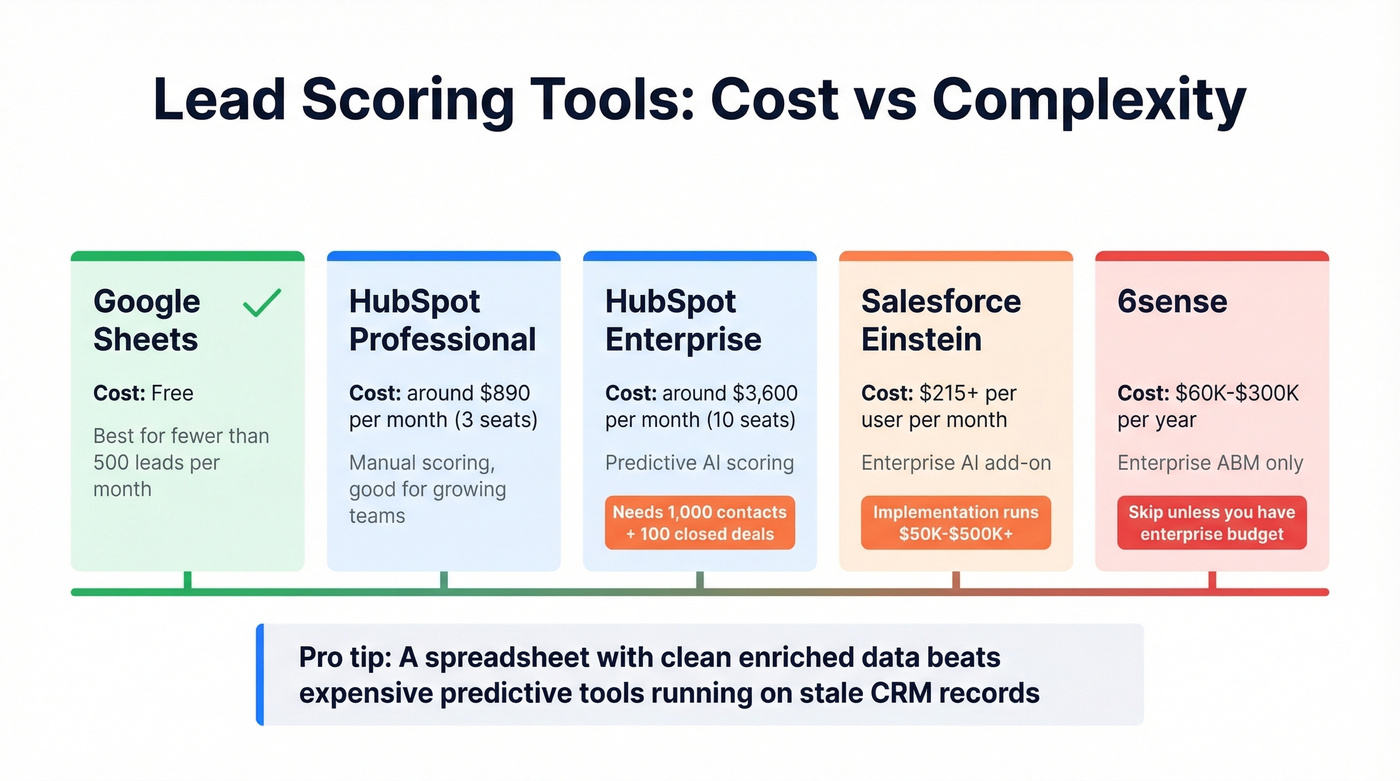

61% of B2B marketers send every lead to sales without scoring, and only 27% of those leads are actually qualified. So any scoring model - even a basic one - puts you ahead of most teams. The question is how much to spend.

| Tool | Plan Required | Cost | Data Requirement |
|---|---|---|---|
| HubSpot | Professional (manual) | ~$890/mo (3 seats) | - |
| HubSpot | Enterprise (predictive) | ~$3,600/mo (10-seat min) | 1,000 contacts + 100 closed deals |
| Salesforce Einstein | Enterprise + AI add-on | ~$215+/user/mo | ~1,000 converted leads |
| 6sense | Annual contract | $60K-$300K/yr | - |
| Spreadsheet | Google Sheets | Free | Your time |

For most teams, a spreadsheet with modular sub-scores - Behavioral (0-40) + Firmographic (0-35) + CRM Stage (0-25) - will outperform $3,600/month predictive scoring until you're processing thousands of leads per month. HubSpot's predictive model needs at least 1,000 contacts and 100 closed deals to generate reliable outputs. Salesforce Einstein implementations run $50K-$500K+ before you score a single lead.
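The modular sub-score approach translates directly into a small function, which can help when you later move the spreadsheet logic into a CRM workflow. This sketch clamps each sub-score to its cap so one inflated component cannot push the total past 100; the clamping is an assumption of this sketch.

```python
# Caps for each sub-score, as described above: 40 + 35 + 25 = 100.
CAPS = {"behavioral": 40, "firmographic": 35, "crm_stage": 25}


def composite_score(behavioral: float, firmographic: float, crm_stage: float) -> float:
    """Sum the three sub-scores after clamping each to its 0..cap range."""
    parts = {
        "behavioral": behavioral,
        "firmographic": firmographic,
        "crm_stage": crm_stage,
    }
    return sum(min(max(parts[name], 0), cap) for name, cap in CAPS.items())


print(composite_score(behavioral=30, firmographic=35, crm_stage=10))
print(composite_score(behavioral=99, firmographic=99, crm_stage=99))
```

Keeping the three sub-scores visible next to the total makes it easier to explain to a rep why a lead landed where it did.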

Start simple, prove the model works, then upgrade the tooling. Skip 6sense entirely unless you're running enterprise ABM with budget to match.

Whichever tool you choose, the scoring layer is only as reliable as the data underneath it. In our experience, teams that pair even a basic spreadsheet model with clean, enriched contact data outperform teams running expensive predictive tools on stale CRM records. If you're deciding between approaches, compare AI lead scoring vs traditional lead scoring and keep your lead scoring best practices tight.

You don't need a $60K scoring platform - you need accurate data underneath whatever model you run. Prospeo feeds verified firmographics, technographics, and intent signals into Salesforce and HubSpot via native integrations, starting at $0.01 per email. No contracts, no sales calls.

Fix the data layer and your scoring model fixes itself.

FAQ

What's the difference between lead scoring and lead grading?

Lead scoring measures behavioral intent - page visits, demo requests, email clicks - on a numeric scale (0-100). Lead grading measures firmographic fit - industry, company size, job title - on a letter scale (A-F). You need both working together. A high-fit, low-engagement lead is still worth a call; a high-engagement, poor-fit lead is probably a researcher or a competitor poking around.

How often should I recalibrate my scoring model?

Quarterly at minimum. Pull conversion data from the last 90 days, compare predicted scores against actual outcomes, and do it with both sales and marketing in the room. If your model hasn't changed in six months, it's filtering out real opportunity. Markets shift, buyer behavior evolves, and your ICP may have drifted without anyone noticing.

Do I need a scoring tool, or can I use a spreadsheet?

Teams with fewer than 500 leads per month can absolutely start with a spreadsheet. Behavioral (0-40) + Firmographic (0-35) + CRM Stage (0-25) gives you a 0-100 composite score that's easy to maintain and easy to explain to sales. Upgrade to CRM-native scoring when volume makes manual review impossible. Either way, feed your model with clean, enriched data - that's the part that actually determines whether your scores mean anything.

How do I improve lead quality scoring accuracy over time?

Track your score-to-close rate monthly and flag any lead that scored above 80 but didn't convert. Compare those false positives against your closed-won cohort to find which signals are misleading. Adjust point weights quarterly, add negative scoring for new disqualifiers, and make sure your enrichment data stays current. A scoring model that doesn't evolve with your pipeline is just a snapshot of assumptions that were true six months ago.
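A monthly review along these lines can be sketched as two small helpers: one for close rate per score bucket and one for false positives. The lead records are made up for illustration, and the bucket cutoffs reuse the Hot/Warm/Nurture/Disqualify ranges from earlier in the article.

```python
from collections import defaultdict


def bucket(score: float) -> str:
    """Same buckets as the article: Hot 85+, Warm 65-84, Nurture 40-64."""
    if score >= 85:
        return "hot"
    if score >= 65:
        return "warm"
    if score >= 40:
        return "nurture"
    return "disqualify"


def close_rate_by_bucket(leads):
    """Return {bucket: close_rate} for buckets that contain at least one lead."""
    totals, wins = defaultdict(int), defaultdict(int)
    for lead in leads:
        name = bucket(lead["score"])
        totals[name] += 1
        wins[name] += int(lead["converted"])
    return {name: wins[name] / totals[name] for name in totals}


def false_positives(leads, threshold=80):
    """Leads that scored above the threshold but did not convert."""
    return [l for l in leads if l["score"] > threshold and not l["converted"]]


# Made-up sample; in practice, export the month's scored leads from your CRM.
leads = [
    {"id": 1, "score": 92, "converted": True},
    {"id": 2, "score": 89, "converted": False},
    {"id": 3, "score": 70, "converted": False},
    {"id": 4, "score": 12, "converted": True},
    {"id": 5, "score": 86, "converted": True},
]

rates = close_rate_by_bucket(leads)
flagged = false_positives(leads)
print(rates)
print([lead["id"] for lead in flagged])
```

If a low bucket closes at a higher rate than a high one, as the tiny sample above does, that is a signal the weights need another look.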