Marketing Qualified Leads Measurement: The Practitioner's Playbook

Marketing says leads are fine. Sales says they're garbage. The dashboard shows 400 MQLs last month, but pipeline didn't move. Sound familiar?

Without proper marketing qualified leads measurement, you're arguing about a number nobody agreed on. Only 38.9% of companies have a formal definition of a qualified lead - the other 61% are fighting over a metric with no shared meaning.

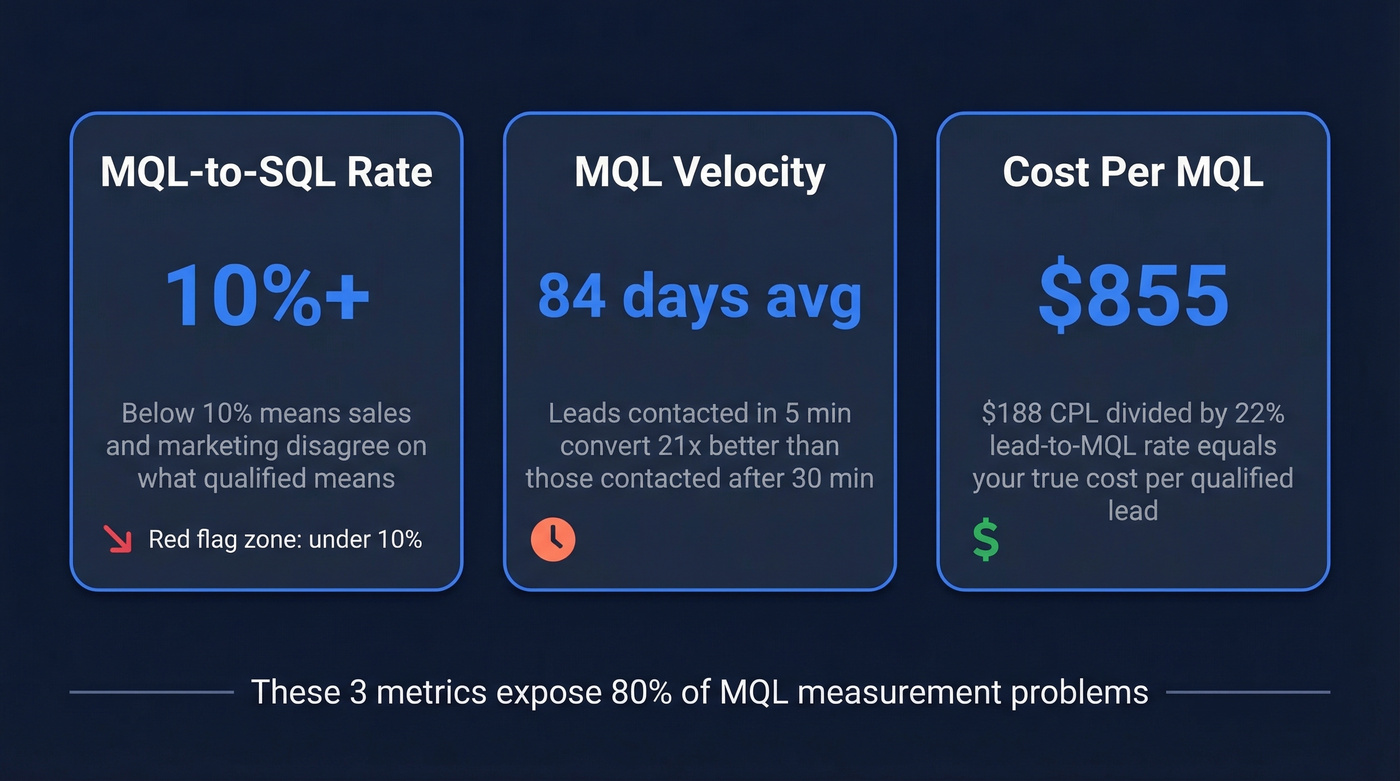

Start With These Three Metrics

If you're short on time, measure three things today.

MQL-to-SQL conversion rate tells you whether marketing and sales agree on what "qualified" means. Below 10%? That's a red flag.

MQL velocity tracks how fast leads move from capture to MQL status. A commonly cited benchmark is roughly 84 days, and if yours is longer, you're handing competitors more time to close the deal before you even pick up the phone.

Cost per MQL - not cost per lead, cost per qualified lead. Most teams track CPL and call it a day, which is like measuring ingredients instead of the finished dish.

These three metrics expose 80% of MQL measurement problems. The full framework below covers the rest.

What Counts as an MQL

An MQL is a lead that passes two tests: "Are they interested in us?" (engagement) and "Are we interested in them?" (fit). If only one is true, it's not an MQL - it's either a curious browser or a great-fit company that doesn't know you exist yet.

92% of world-class organizations have a standardized process to qualify leads. Only 42% of everyone else does. The gap isn't talent - it's definitions.

| Stage | Definition | Owned By | Moves to Next Stage When... |
|---|---|---|---|
| MQL | Engaged + fits ICP | Marketing | Sales accepts within SLA window |
| SAL | Sales accepted | Sales (SDR) | SDR confirms fit via initial outreach |
| SQL | Sales verified | Sales (AE) | Discovery call scheduled |

The handoff between these stages is where most pipelines leak. If your MQL definition doesn't map cleanly to what sales considers worth a call, you're manufacturing friction.

7 Metrics for MQL Measurement

MQL Volume

Formula: Count of leads reaching MQL status in a period.

Simple, but it's the baseline. Early-stage SaaS companies typically see 50-150 MQLs/month; growth-stage teams land at 200-500. Track weekly rather than monthly so you spot trends before they become problems.

Lead-to-MQL Ratio

Your competitor's marketing team generated 1,000 leads last quarter and celebrated. Then someone asked how many became MQLs. Eighty. An 8% lead-to-MQL ratio, meaning their top-of-funnel was a firehose pointed at the wrong audience.

Formula: (MQLs / Total Leads) x 100

The MarketJoy benchmark for lead-to-MQL sits at 22%. HubSpot benchmark data puts B2B SaaS MQL conversion rates between 5-15%, with top performers reaching 25-30%. Below 15% means your top-of-funnel is too broad. Above 35% means your MQL bar might be too low.
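As a rough illustration, the ratio and the rule-of-thumb cutoffs above can be sketched in a few lines of Python. The function names are hypothetical, and the 15% and 35% cutoffs are simply the ones quoted in this section, not universal constants.

```python
def lead_to_mql_ratio(mqls: int, total_leads: int) -> float:
    """Return the lead-to-MQL ratio as a percentage."""
    if total_leads <= 0:
        raise ValueError("total_leads must be positive")
    return mqls / total_leads * 100


def diagnose_ratio(ratio_pct: float, low: float = 15.0, high: float = 35.0) -> str:
    """Label a ratio using the article's rule-of-thumb cutoffs (assumed defaults)."""
    if ratio_pct < low:
        return "top-of-funnel too broad"
    if ratio_pct > high:
        return "MQL bar may be too low"
    return "within range"


# The competitor example from this section: 80 MQLs out of 1,000 leads.
ratio = lead_to_mql_ratio(mqls=80, total_leads=1000)
print(f"{ratio:.1f}% -> {diagnose_ratio(ratio)}")
```

Run it per channel as well as in aggregate, since a healthy blended ratio can hide one channel that is flooding the funnel.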

Not all channels convert equally. One benchmark set shows website leads converting at 31.3%, referrals at 24.7%, webinars at 17.8%, and email campaigns at just 0.9%. If you're pouring budget into email blasts and wondering why MQL volume is flat, there's your answer.

MQL-to-SQL Conversion Rate

Formula: (SQLs / MQLs) x 100

This is the metric that exposes sales-marketing misalignment. MarketJoy's cross-industry benchmark sits around 15%, with a range of 12-18%.

Industry benchmarks vary widely. First Page Sage's industry analysis shows business insurance at 26% while construction sits at 12%. If you're at 8%, either your scoring model is broken or sales isn't following up fast enough.

MQL-to-Customer Rate

The end-to-end number, and the only one your CFO actually cares about.

Formula: (Customers from MQLs / Total MQLs) x 100

Expect 1-5% for most B2B SaaS companies, with top performers pushing toward 7%.
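The two conversion formulas above share a denominator, so they are easy to compute together. This is a minimal sketch with made-up counts; `FunnelCounts` and `funnel_rates` are illustrative names, not fields from any particular CRM.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    mqls: int
    sqls: int
    customers: int


def pct(numerator: int, denominator: int) -> float:
    """Percentage helper that returns 0.0 when the denominator is zero."""
    return numerator / denominator * 100 if denominator else 0.0


def funnel_rates(counts: FunnelCounts) -> dict:
    """MQL-to-SQL and MQL-to-customer rates, both measured against total MQLs."""
    return {
        "mql_to_sql": pct(counts.sqls, counts.mqls),
        "mql_to_customer": pct(counts.customers, counts.mqls),
    }


# Hypothetical month: 400 MQLs, 52 SQLs, 12 closed-won customers.
rates = funnel_rates(FunnelCounts(mqls=400, sqls=52, customers=12))
for name, value in rates.items():
    print(f"{name}: {value:.1f}%")
```

Use the same cohort of MQLs for both rates. Mixing this month's SQLs with last quarter's MQLs is a common way to get numbers that look fine and mean nothing.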

MQL Velocity

Your competitor called the lead 5 minutes after the form fill. You called 3 days later. Guess who won?

The average time from lead capture to MQL status hovers around 84 days. Speed-to-lead, meaning how quickly sales responds once a lead raises its hand, is a separate but related lever: leads contacted within 5 minutes convert at 21x the rate of leads contacted after 30 minutes. Buyers also conduct 70% of their research independently before engaging sales - by the time they hit your scoring threshold, the window is already narrowing.
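This section gives no formula for velocity, so here is one plausible way to compute it: the number of days between the capture date and the date a lead reached MQL status, summarized as a mean and a median. The dates and function names are invented for illustration.

```python
from datetime import date
from statistics import mean, median


def days_to_mql(captured: date, became_mql: date) -> int:
    """Whole days between lead capture and MQL status."""
    return (became_mql - captured).days


def mql_velocity(pairs):
    """Summarize velocity for a list of (captured, became_mql) date pairs."""
    durations = [days_to_mql(captured, became_mql) for captured, became_mql in pairs]
    return {"mean_days": mean(durations), "median_days": median(durations)}


# Hypothetical leads: (capture date, date the lead hit MQL status)
leads = [
    (date(2024, 1, 1), date(2024, 1, 31)),
    (date(2024, 2, 1), date(2024, 2, 15)),
    (date(2024, 3, 1), date(2024, 5, 30)),
]
print(mql_velocity(leads))
```

The median is usually the safer headline number, because a handful of leads that sat dormant for a year can drag the mean far from what a typical lead experiences.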

Cost Per MQL

Most teams get this wrong because they stop at cost per lead. B2B SaaS CPL averages $188. But if only 22% of leads become MQLs, your true cost per MQL is $188 / 0.22 = ~$855. That single calculation reframes every budget conversation you'll have this quarter.

Formula: Total Marketing Spend / MQLs Generated.

Here's how to get channel-level clarity: take each channel's total spend and divide by the number of MQLs that channel produced - not total leads. This view reveals where your budget actually drives qualified pipeline.
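A channel-level view of that formula might look like the sketch below. The spend and MQL figures are placeholders, and returning `None` for a channel with zero MQLs is a design choice made here, not something the formula dictates.

```python
from typing import Optional


def cost_per_mql(spend: float, mqls: int) -> Optional[float]:
    """Total channel spend divided by MQLs produced (not total leads)."""
    if mqls <= 0:
        return None  # no MQLs yet, so the cost is undefined
    return spend / mqls


# Hypothetical monthly figures per channel: (spend in USD, MQLs produced)
channels = {
    "seo": (9000.0, 10),
    "ppc": (21000.0, 10),
    "events": (15000.0, 0),
}

for name, (spend, mqls) in channels.items():
    cost = cost_per_mql(spend, mqls)
    label = f"${cost:,.0f}" if cost is not None else "n/a"
    print(f"{name}: {label} per MQL")
```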

MQL ROI

Formula: ((Revenue from MQL-sourced deals - Marketing Cost) / Marketing Cost) x 100

If MQL-sourced deals generated $500K on $100K in spend, your MQL ROI is ($500K - $100K) / $100K x 100 = 400%. One line, one number, one conversation with finance.
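The same ROI calculation as a small Python function, using the $500K and $100K figures from this section. The function name is illustrative.

```python
def mql_roi(revenue: float, marketing_cost: float) -> float:
    """ROI as a percentage: (revenue - cost) / cost x 100."""
    if marketing_cost <= 0:
        raise ValueError("marketing_cost must be positive")
    return (revenue - marketing_cost) / marketing_cost * 100


print(f"{mql_roi(500_000, 100_000):.0f}%")
```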

Benchmarks That Actually Matter

Raw numbers without context are useless. Here's what the data says.

MQL-to-SQL by Industry

| Industry | MQL-to-SQL Rate |
|---|---|
| B2B SaaS | 13% |
| Cybersecurity | 15% |
| IT & Managed Services | 13% |
| Construction | 12% |
| Business Insurance | 26% |

MQL-to-SQL by Company Stage

| Stage | MQL-to-SQL Range | MQL-to-Close |
|---|---|---|
| Early | 15-25% | 1-2% |
| Growth | 20-30% | 2-4% |
| Scale | 25-35% | 3-5% |
| Enterprise | 20-30% | 4-7% |

Enterprise rates dip on MQL-to-SQL because deal complexity increases, but close rates climb because those deals are larger and better qualified.

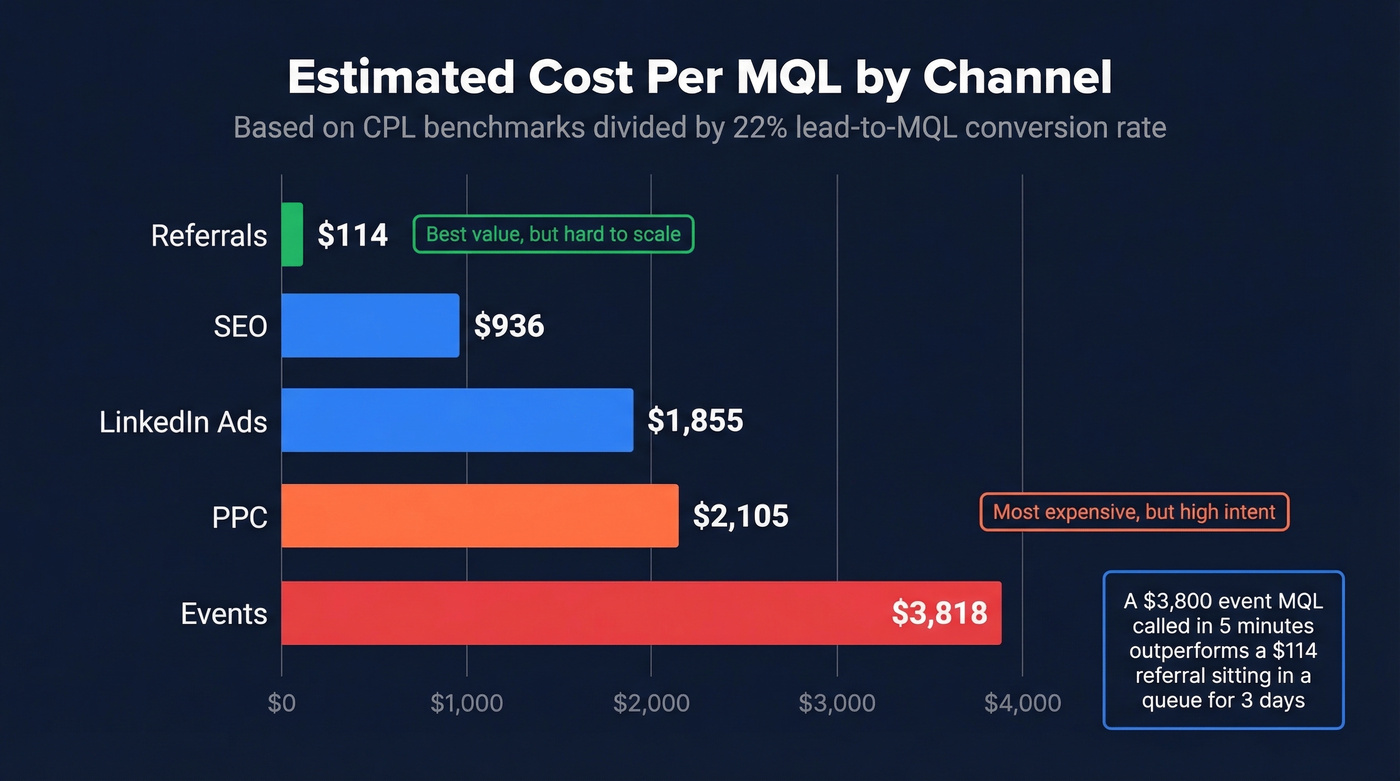

Estimated Cost Per MQL by Channel

Derived from Sopro's CPL benchmarks divided by a 22% lead-to-MQL rate:

| Channel | Est. CPL | Est. Cost/MQL |
|---|---|---|
| SEO | $206 | ~$936 |
| PPC | $463 | ~$2,105 |
| LinkedIn Ads | $408 | ~$1,855 |
| Events | $840 | ~$3,818 |
| Referrals | $25 | ~$114 |

Referrals look absurdly cheap - and they are. The catch is they don't scale. A $3,800 event MQL that gets called in 5 minutes outperforms a $114 referral sitting in a queue for three days. Speed to lead matters more than cost per lead.
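The estimates in the table above come from a single division, which you can rerun with your own lead-to-MQL rate. This sketch uses the CPL figures from the table and assumes the same 22% rate applies to every channel, which is a simplification.

```python
LEAD_TO_MQL = 0.22  # assumed uniform across channels, as in the table

# Estimated CPL per channel in USD, copied from the table above
CPL = {
    "SEO": 206,
    "PPC": 463,
    "LinkedIn Ads": 408,
    "Events": 840,
    "Referrals": 25,
}


def est_cost_per_mql(cpl: float, ratio: float = LEAD_TO_MQL) -> float:
    """Estimate cost per MQL by dividing CPL by the lead-to-MQL rate."""
    return cpl / ratio


for channel, cpl in CPL.items():
    print(f"{channel}: ~${est_cost_per_mql(cpl):,.0f}")
```

Swapping in each channel's actual lead-to-MQL rate will change the ranking, since channels rarely convert at the same rate.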

Build a Lead Scoring Model Sales Trusts

Most lead scoring models are set-and-forget, which means set-and-broken. The investment is worth it: formal lead qualification is linked to 10% higher revenue growth, and lead scoring can produce 50% more sales-ready leads at 33% lower cost per lead.

Here's a framework that actually holds up.

Point Values by Action/Attribute

| Signal | Points |
|---|---|
| Job title match | +10 |
| Company size >100 | +5 |
| Opened 3+ emails | +8 |
| Clicked email link | +10 |
| Viewed pricing page | +15 |
| Filled contact form | +20 |
| Requested demo | +30 to +40 |
| Unsubscribed | -15 |

These point values are based on LeadsBridge's scoring research. Set your MQL threshold at 50+ points as a starting point, then calibrate quarterly against closed-won data. In our experience, the 50-point threshold works reliably across most B2B funnels, but sales capacity should have the final say: if reps can handle 20 new leads per week, pick the score cutoff that produces roughly that volume.
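Here is a minimal sketch of how the point table and threshold could be wired together, plus one possible way to back a threshold out of sales capacity. The signal keys and function names are hypothetical, the demo request uses the low end of the +30 to +40 range, and the capacity logic is an assumption about how to turn "roughly that volume" into a rule.

```python
# Point values from the table above; keys are hypothetical signal names.
POINTS = {
    "job_title_match": 10,
    "company_size_over_100": 5,
    "opened_3plus_emails": 8,
    "clicked_email_link": 10,
    "viewed_pricing_page": 15,
    "filled_contact_form": 20,
    "requested_demo": 30,  # table gives +30 to +40; low end assumed here
    "unsubscribed": -15,
}
MQL_THRESHOLD = 50


def score_lead(signals) -> int:
    """Sum the points for each observed signal (unknown signals raise KeyError)."""
    return sum(POINTS[signal] for signal in signals)


def is_mql(signals, threshold: int = MQL_THRESHOLD) -> bool:
    return score_lead(signals) >= threshold


def threshold_for_capacity(weekly_scores, weekly_capacity: int) -> int:
    """Pick the cutoff that admits roughly `weekly_capacity` leads per week.

    Ties at the cutoff can push volume slightly above capacity.
    """
    if weekly_capacity <= 0:
        raise ValueError("weekly_capacity must be positive")
    ranked = sorted(weekly_scores, reverse=True)
    if not ranked:
        return MQL_THRESHOLD
    if len(ranked) <= weekly_capacity:
        return ranked[-1]
    return ranked[weekly_capacity - 1]


lead = ["job_title_match", "viewed_pricing_page", "filled_contact_form", "clicked_email_link"]
print(score_lead(lead), is_mql(lead))
print(threshold_for_capacity([80, 65, 55, 50, 40, 30], weekly_capacity=3))
```

Keeping the point table in one dictionary makes the quarterly recalibration a data change rather than a code change.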

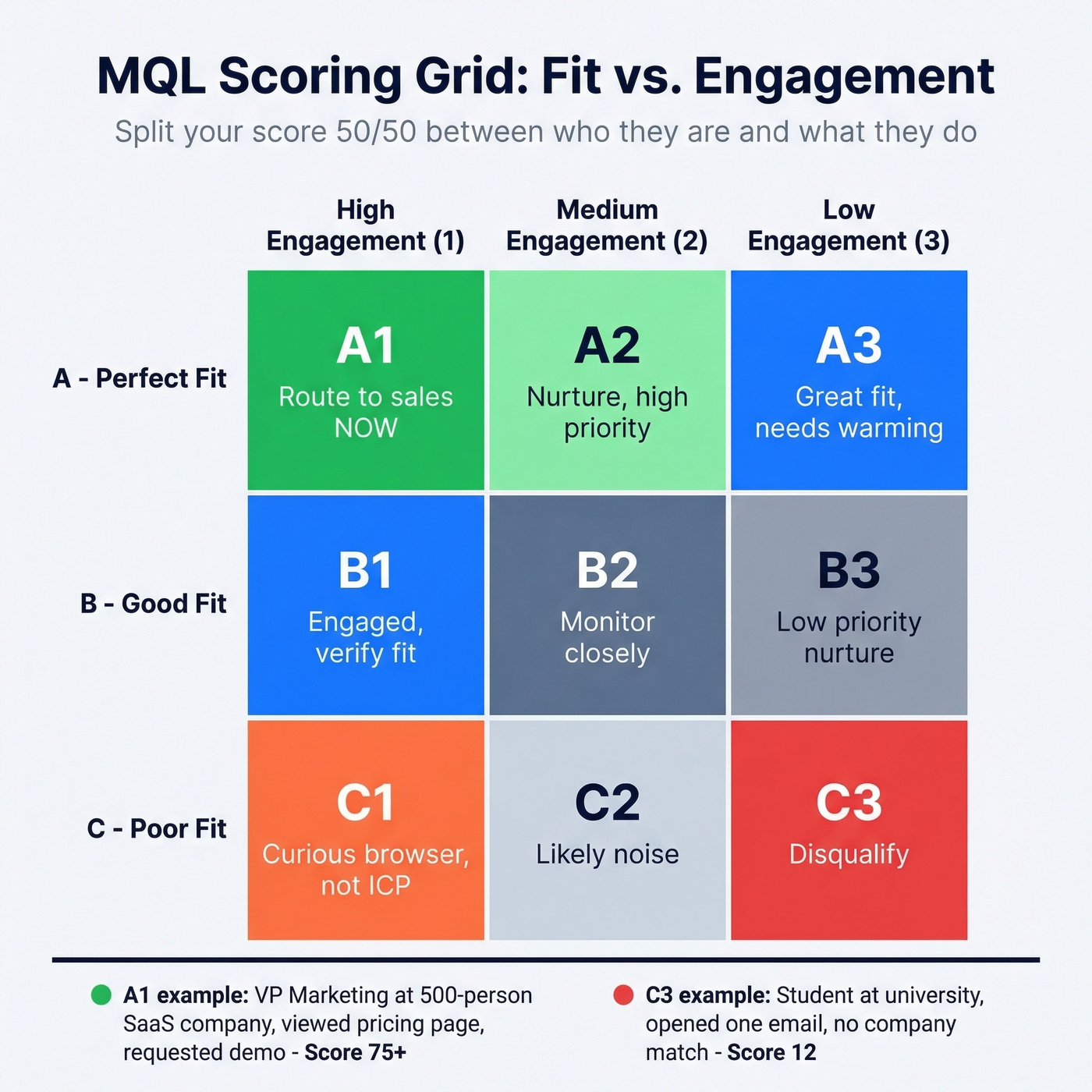

Fit vs. Engagement: The Dual Score

Split your total score 50/50 between fit (firmographic and demographic data) and engagement (behavioral signals). A common scoring grid uses A/B/C for fit and 1/2/3 for engagement - an A1 lead is your ideal buyer who's actively engaged. A C3 is noise.
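The grid can be expressed as two small lookups. The article does not specify where the A/B/C and 1/2/3 boundaries fall, so the cutoffs below (on a 0 to 50 scale for each half) are placeholders to be tuned against your own data.

```python
# Hypothetical cutoffs on a 0-50 scale for each half of the score.
FIT_CUTOFFS = [(35, "A"), (20, "B")]          # below 20 falls through to "C"
ENGAGEMENT_CUTOFFS = [(35, "1"), (20, "2")]   # below 20 falls through to "3"


def grade(score: float, cutoffs, fallback: str) -> str:
    """Return the first label whose minimum score is met, else the fallback."""
    for minimum, label in cutoffs:
        if score >= minimum:
            return label
    return fallback


def grid_cell(fit_score: float, engagement_score: float) -> str:
    """Combine fit (A/B/C) and engagement (1/2/3) into a grid cell like 'A1'."""
    fit = grade(fit_score, FIT_CUTOFFS, "C")
    engagement = grade(engagement_score, ENGAGEMENT_CUTOFFS, "3")
    return fit + engagement


print(grid_cell(40, 45))
print(grid_cell(5, 3))
```

Reporting the two dimensions separately keeps a great-fit but cold account (A3) from being confused with an eager but poor-fit one (C1), which a single blended score would do.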

Enrich lead records with verified contact data before scoring. Prospeo returns 50+ data points per contact at a 92% match rate, giving your scoring model accurate inputs from the start.

Scoring Hygiene Checklist

- Demo requests, trial signups, and "talk to sales" fills bypass scoring - route to sales immediately

- Negative scoring built in for unsubscribes, bounces, and competitor domains

- Score decay active - reduce by a percentage monthly rather than hard cutoffs

- Quarterly recalibration against closed-won data, not just gut feel
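Two items from the checklist, the sales bypass and percentage-based decay, can be sketched as follows. The 10% monthly decay rate and the action names are assumptions chosen for illustration; the checklist only says to decay by a percentage each month.

```python
# Hypothetical action names for the high-intent actions that skip scoring.
BYPASS_ACTIONS = {"demo_request", "trial_signup", "talk_to_sales"}


def decayed_score(score: float, months_inactive: int, monthly_decay: float = 0.10) -> float:
    """Reduce a score by a fixed percentage for each month of inactivity."""
    return score * (1 - monthly_decay) ** months_inactive


def route(action: str, score: float, threshold: float = 50) -> str:
    """Send high-intent actions straight to sales; score everything else."""
    if action in BYPASS_ACTIONS:
        return "sales"
    return "mql" if score >= threshold else "nurture"


# A lead that scored 60 two months ago and has been quiet since.
current = decayed_score(60, months_inactive=2)
print(round(current, 1), route("ebook_download", current))
print(route("demo_request", score=0))
```

Compounding decay shrinks a score gradually, so a briefly inactive lead drifts toward the threshold instead of dropping off a cliff the way a hard time-window cutoff would.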

CRM Setup for Tracking MQLs

HubSpot

Navigate to Reports, then Create Report, then Journey Reports, and select your lifecycle stages (Lead to MQL to SQL). This gives you a visual funnel with conversion rates between each stage.

HubSpot Professional caps lead scores at 100 total; Enterprise allows up to 500. Choose decay over time-frame mechanics when possible - decay reduces a group's score by a percentage over time, while time-frame drops points entirely after a window. You can't use both in the same scoring group.

Salesforce

Salesforce tracks top-of-funnel on the Lead object and post-conversion on the Contact object. The critical detail: once Leads convert, reporting often loses visibility into the original Lead-stage history unless you stamp lifecycle stages and track re-qualification explicitly.

If you're running HubSpot and Salesforce together, watch for record merging on lead conversion. It kills re-qualification visibility and makes your MQL-to-SQL reporting look cleaner than reality.

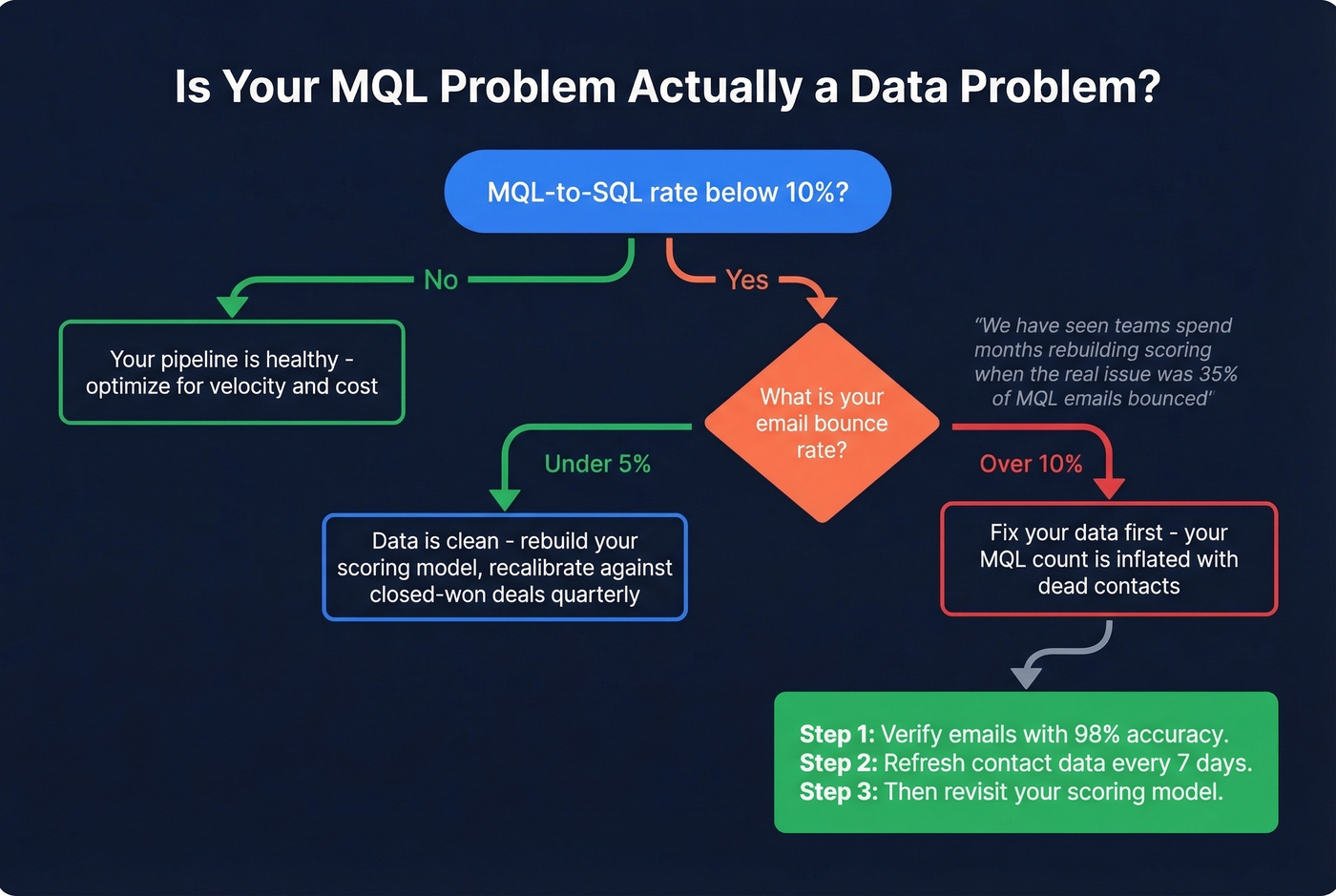

Why Your MQL Data Is Wrong

Here's the contrarian take at the center of this article: you probably don't have an MQL problem. You have a data quality problem.

If you're running a lower deal-size motion and your MQL-to-SQL rate is below 10%, don't redesign your scoring model first. Fix your data. We've seen teams spend months rebuilding lead scoring when the real issue was that a huge share of their MQL emails bounced. Your conversion metrics aren't measuring marketing effectiveness - they're measuring your data vendor's accuracy. Every bounced email inflates your MQL count with a lead that never had a chance.
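To see how much bounces can distort the headline rate, compare the raw MQL-to-SQL rate with the rate among MQLs that were actually reachable. The numbers are hypothetical, and the sketch assumes that no bounced lead ever became an SQL.

```python
def mql_to_sql_rate(sqls: int, mqls: int) -> float:
    """Raw MQL-to-SQL conversion rate as a percentage."""
    return sqls / mqls * 100


def reachable_mql_to_sql_rate(sqls: int, mqls: int, bounce_rate: float) -> float:
    """Conversion rate among MQLs whose email did not bounce.

    Assumes bounced leads never converted to SQL.
    """
    reachable = mqls * (1 - bounce_rate)
    if reachable <= 0:
        raise ValueError("no reachable MQLs")
    return sqls / reachable * 100


# Hypothetical month: 400 MQLs, 32 SQLs, 35% of MQL emails bounced.
raw = mql_to_sql_rate(sqls=32, mqls=400)
adjusted = reachable_mql_to_sql_rate(sqls=32, mqls=400, bounce_rate=0.35)
print(f"raw: {raw:.1f}%  reachable-only: {adjusted:.1f}%")
```

If the reachable-only rate looks healthy while the raw rate sits below 10%, the scoring model is probably not the first thing to fix.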

Before you blame your scoring model, check your data. Prospeo's real-time email verification delivers 98% accuracy on a 7-day refresh cycle. Snyk's team cut bounce rates from 35-40% to under 5% after switching, and AE-sourced pipeline jumped 180%.

Attribution model choice also warps your numbers. First-touch credits the original source with 100% of the conversion. Last-touch credits the final interaction. Neither is accurate for B2B, where buying cycles involve multiple stakeholders and dozens of touchpoints. Multi-touch or account-level attribution gives a more honest picture, but it requires unified data across your CRM, ad platforms, and website analytics.
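The difference between these models is easiest to see on a single journey. The sketch below implements first-touch, last-touch, and linear attribution, where linear is just one simple multi-touch variant that splits credit evenly. The channel names are invented.

```python
def first_touch(touches):
    """Give 100% of the credit to the first interaction."""
    return {touches[0]: 1.0}


def last_touch(touches):
    """Give 100% of the credit to the final interaction."""
    return {touches[-1]: 1.0}


def linear(touches):
    """Split credit evenly across every interaction (a simple multi-touch model)."""
    share = 1 / len(touches)
    credit = {}
    for channel in touches:
        credit[channel] = credit.get(channel, 0.0) + share
    return credit


# Hypothetical journey for one converted lead, in chronological order.
journey = ["webinar", "email", "paid_search", "email"]
print(first_touch(journey))
print(last_touch(journey))
print(linear(journey))
```

The same journey credits the webinar entirely, email entirely, or a blend of three channels depending on the model, which is why cost per MQL by channel should always be reported alongside the attribution model used.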

The recurring practitioner take on MQL definition mistakes is consistent: the bar is too low, there are no feedback loops, teams overweight shallow engagement like page views and email opens, and nobody updates criteria as the product and ICP evolve. If your MQL definition hasn't been updated in 12 months, it's wrong.

Is MQL Dead?

The MQL concept traces back to the SiriusDecisions Demand Waterfall, introduced in 2006. Forrester later acquired SiriusDecisions and popularized the framework globally. Roughly two decades later, the "MQL is dead" take is everywhere - Reddit threads on r/sales and r/marketing regularly debate whether the metric has outlived its usefulness.

It's not dead. It's been demoted.

Reporting MQL volume to the board is a mistake. MQL is an internal leading indicator - useful for marketing ops, useless as a board KPI. The evolution ladder goes: form fills, then meetings booked, then second-meeting rate, then revenue. Each rung gets closer to what the business actually cares about.

The real shift is from individual leads to buying groups. Forrester data shows 93% of B2B buyers participate in groups of 2+ people, and 71% in groups of 4+. Marketing qualified accounts (MQAs) capture this account-level reality, and intent data tracking thousands of topics helps identify in-market accounts rather than just individual leads.

The transition has pitfalls. We've seen teams fall into four traps repeatedly:

- Analysis paralysis: designing the perfect MQA framework instead of shipping a minimum viable version

- Removing MQLs too early and creating a measurement gap before MQA reporting is ready

- Defining MQA criteria so narrowly that barely any accounts qualify

- Over-automating the scoring with so many rules that nobody can debug why an account did or didn't qualify

The smart move is to run both in parallel, gradually shifting weight from MQL to MQA as your account-level data matures.

FAQ

What's a good MQL-to-SQL conversion rate?

B2B SaaS averages 13%, while business insurance can hit 26%. Early-stage companies typically see 15-25%, scaling to 25-35% at maturity. If you're consistently below 10%, check bounce rates before blaming the funnel - bad data drags down conversion more often than bad scoring.

How often should you update MQL criteria?

Quarterly at minimum, calibrated against closed-won data. Look at which MQL attributes and behaviors actually predicted revenue, then adjust scoring weights accordingly. Products change, pricing shifts, and your ICP evolves - a static model decays fast.

What's the difference between MQL and MQA?

An MQL is an individual lead meeting your qualification criteria. An MQA - marketing qualified account - qualifies at the account level, recognizing that B2B purchases involve buying groups of 2+ people 93% of the time. Intent data tracking thousands of topics helps identify in-market accounts, not just individual leads, making the MQL-to-MQA transition operationally feasible.

How do you calculate cost per MQL?

Divide total marketing spend by MQLs generated - not total leads. If your CPL is $188 and your lead-to-MQL ratio is 22%, your true cost per MQL is ~$855. Track this by channel: referrals average ~$114 per MQL while events can run $3,800+.