Revenue Predictability AI: The Measurement-First Playbook for 2026

You just walked out of another Monday forecast call. The CRO's number doesn't match the VP of Sales' number, which doesn't match what's in the CRM. Everyone nods, nobody trusts the output, and the board deck gets a confidence interval wide enough to drive a truck through. 79% of sales organizations miss their forecast by more than 10%. Among enterprises not using dedicated forecasting platforms, 87% missed their 2025 revenue targets. The problem isn't a lack of AI tools. It's a lack of measurement infrastructure to make those tools trustworthy.

Before You Do Anything Else

- Standardize stage definitions. If your SDRs and AEs disagree on what "Stage 3" means, no model can save you.

- Track forecast error weekly using WAPE or MAPE. You can't improve what you don't measure.

- Fix pipeline inputs. Verified contacts, enrichment, intent signals - dirty data just produces confident garbage faster.

What Revenue Predictability AI Actually Means

Most people hear the phrase and think sales forecasting. That's the narrow version. The full scope covers every revenue moment across the customer lifecycle: net-new pipeline creation, deal progression, renewal prediction, expansion revenue, and churn.

An r/CustomerSuccess thread recently asked what data churn prediction models are actually trained on, and whether anyone has seen real results. That's the right question.

Revenue predictability breaks into three pillars. First, predictability: is the pipeline real or inflated? Second, efficiency: are resources allocated to the right motions? Third, cross-functional alignment: do marketing, sales, and CS share definitions and handoffs? The AI layer sits at the intersection of all three. It's not a tool category. It's an operating model that uses machine learning to make revenue outcomes repeatable.

The Four-Layer Predictability Stack

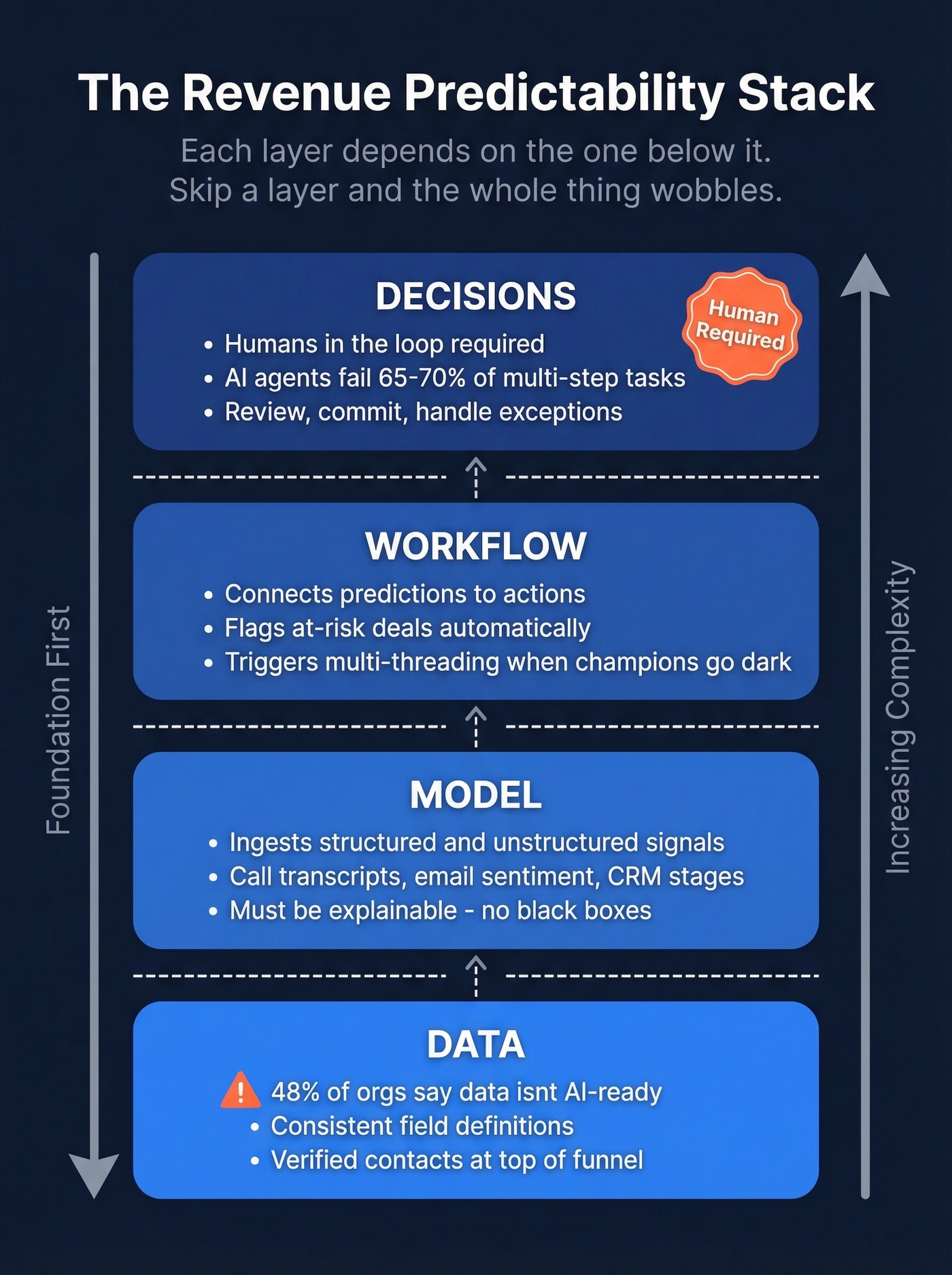

Think of revenue predictability as four layers, each depending on the one below it. Skip a layer and the whole thing wobbles.

Layer 1 - Data

This is where most teams fail before they start. 48% of organizations say their revenue data isn't AI-ready, and 55% report conflicting pipeline signals from disconnected sources. The fix is boring: consistent field definitions, enforced data entry, and verified contact data at the top of funnel.

Layer 2 - Model

The algorithms that ingest your data and produce forecasts, risk scores, and scenario ranges. Here's the thing: if you can't explain what the model is trained on, you're trusting a black box with your board commitment. The best predictive models ingest unstructured signals too - call transcripts, email sentiment, champion engagement patterns - not just CRM stage labels.

Layer 3 - Workflow

A forecast that lives in a dashboard nobody checks is useless. This layer connects predictions to actions: flagging at-risk deals, triggering multi-threading when a champion goes dark, adjusting territory plans when pipeline shifts.

Most tools don't close this gap well.
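To make the forecast-to-action idea concrete, here is a minimal Python sketch of a rule table that turns model signals into next steps. The field names (`risk_score`, `days_since_champion_contact`) and both thresholds are hypothetical placeholders, not values from any specific tool.

```python
def next_actions(deal, risk_threshold=0.7, silence_threshold_days=14):
    """Map model signals on one deal to concrete follow-up actions.

    Both thresholds are illustrative assumptions; tune them to your own cycle.
    """
    actions = []
    if deal["risk_score"] >= risk_threshold:
        actions.append("flag as at-risk for manager review")
    if deal["days_since_champion_contact"] > silence_threshold_days:
        actions.append("multi-thread: engage a second stakeholder")
    return actions


# Hypothetical deal where the champion has gone dark.
deal = {"name": "Acme renewal", "risk_score": 0.82, "days_since_champion_contact": 21}
for action in next_actions(deal):
    print(f"{deal['name']}: {action}")
```

The point of the sketch is that every flag carries an explicit action. A score with no rule attached stays in the dashboard.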

Layer 4 - Decisions

AI agents fail roughly 65-70% of multi-step tasks in current benchmarks. The decision layer requires humans in the loop - reviewing AI-surfaced risks, making commit calls, handling exceptions. 46% of organizations cite accountability and trust as their biggest challenge with AI in revenue operations. Anyone selling you "set it and forget it" revenue AI is selling a fantasy.

How to Measure Forecast Accuracy

Most teams buying forecasting tools never measure whether the forecasts actually improve. A thread on r/SalesOperations asked exactly this. The silence was telling.

When a vendor claims "98% accuracy," ask: measured with which metric, against what baseline, and over what time window? BCG's finance AI survey found a median ROI of 10%, with a third of organizations reporting limited or no gains. Measurement separates teams that get value from teams that get a dashboard.

Our strong take: If your average deal size is under $30k and your sales cycle is under 45 days, a well-maintained spreadsheet with WAPE tracking will outperform a $40k/year forecasting platform for the first 12 months. The tool isn't the bottleneck - your data hygiene is. (If you're evaluating platforms, start with a shortlist of sales forecasting solutions and compare implementation effort, not just features.)

One underused technique we've recommended to multiple teams: run your AI forecast alongside your human forecast each week. The gap between the two is your bias signal. When the model consistently predicts higher than your reps commit, you've likely caught sandbagging before it hits the board. When it consistently predicts lower, the commits are probably over-optimistic.
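A rough Python sketch of that weekly comparison follows. It only reports the direction of the gap and leaves the interpretation to the review meeting. The four-week window and the sample numbers are assumptions for illustration.

```python
def forecast_gaps(rep_commits, model_forecasts):
    """Weekly gap as a fraction of the model forecast.

    Positive means the reps' commit is above the model; negative means below.
    """
    return [(rep - model) / model for rep, model in zip(rep_commits, model_forecasts)]


def persistent_direction(gaps, min_weeks=4):
    """Report whether the last `min_weeks` gaps all point the same way."""
    recent = gaps[-min_weeks:]
    if len(recent) < min_weeks:
        return "insufficient_data"
    if all(g > 0 for g in recent):
        return "reps_above_model"
    if all(g < 0 for g in recent):
        return "reps_below_model"
    return "mixed"


# Hypothetical weekly numbers, in $M.
rep_commits = [1.20, 1.10, 1.30, 1.25]
model_forecasts = [1.00, 1.00, 1.10, 1.00]

gaps = forecast_gaps(rep_commits, model_forecasts)
print(persistent_direction(gaps))
```

A single week's gap is noise. The signal is a gap that keeps the same sign week after week.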

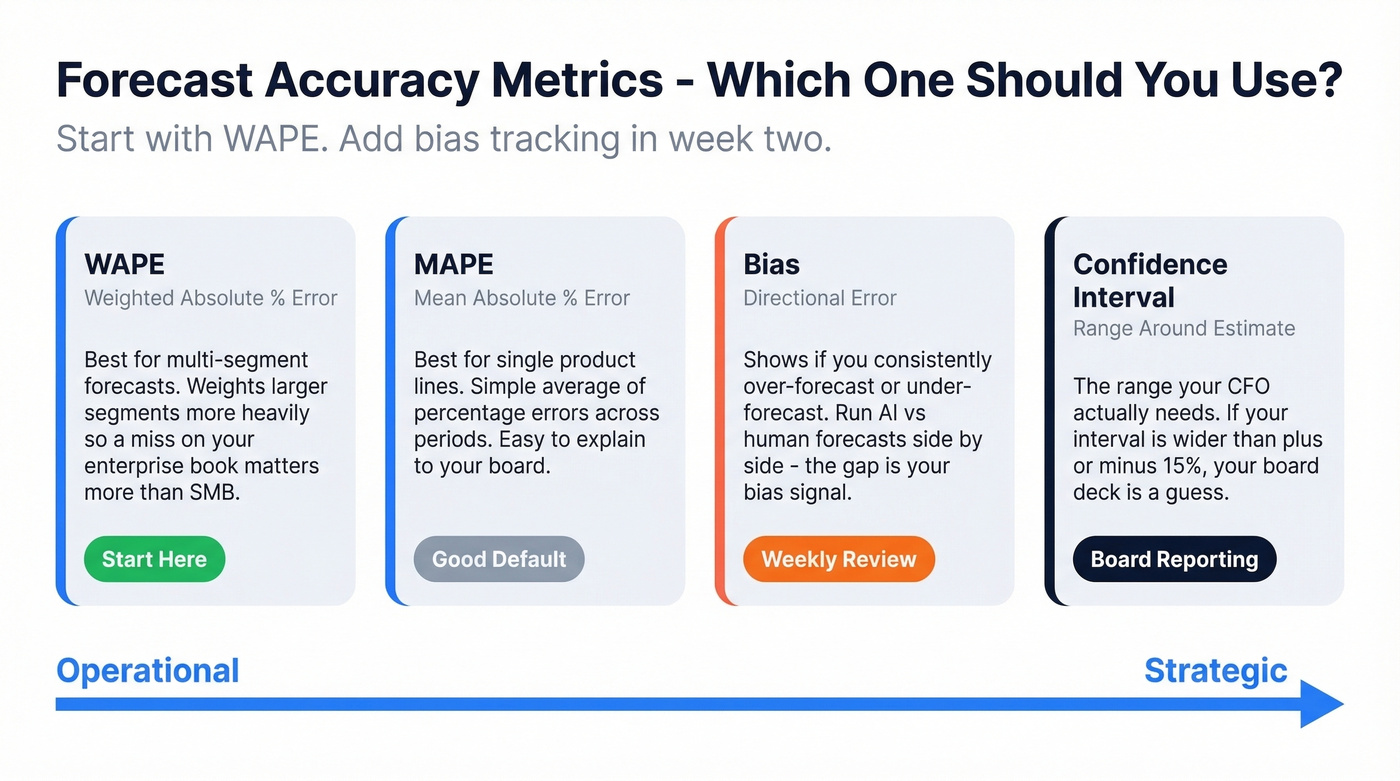

| Metric | What It Measures | When to Use |
|---|---|---|
| WAPE | Weighted error across segments | Multi-segment forecasts |
| MAPE | Avg % error per period | Single-product lines |
| Bias | Over/under-forecasting | Weekly review |
| Confidence interval | Range around estimate | Board/CFO reporting |

Start with WAPE if you forecast across multiple products or segments. Add bias tracking in week two.
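For teams tracking this in a script rather than a spreadsheet, the three error metrics can be sketched in a few lines of Python. These are the standard textbook definitions. The sign convention for bias (positive means over-forecast) and the segment numbers are assumptions made for this example.

```python
def wape(actuals, forecasts):
    """Weighted absolute percentage error: total absolute miss / total actual."""
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_error / sum(abs(a) for a in actuals)


def mape(actuals, forecasts):
    """Mean absolute percentage error: average of per-item percentage misses.

    Undefined when any actual is zero.
    """
    return sum(abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)) / len(actuals)


def bias(actuals, forecasts):
    """Signed error as a share of actuals; positive means over-forecasting."""
    return sum(f - a for a, f in zip(actuals, forecasts)) / sum(actuals)


# Hypothetical quarter, in $K, for three segments of very different size.
actuals = [800, 150, 50]
forecasts = [720, 180, 80]

print(f"WAPE: {wape(actuals, forecasts):.1%}")
print(f"MAPE: {mape(actuals, forecasts):.1%}")
print(f"Bias: {bias(actuals, forecasts):+.1%}")
```

On these numbers WAPE comes out near 14% while MAPE comes out near 30%, because MAPE lets the 60% miss on the smallest segment count as much as the 10% miss on the largest. That gap is the practical reason to start with WAPE on multi-segment forecasts.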

Fix Inputs Before Buying Another Platform

We've seen this pattern repeatedly: a team buys a $40k/year forecasting platform, spends three months implementing it, and gets outputs barely better than a spreadsheet. The reason is almost always garbage inputs.

Simple systems scale. Complex ones create more failure points.

The governance gap is real: 42% of organizations lack formal data governance frameworks. Another 64% say CIO teams lead tool selection for forecasting, and 96% report that CIO involvement improves accuracy. If your CIO isn't in the room when you're picking revenue tools, you're already behind. Software spending is rising 15.2% in 2026, and Gartner explicitly ties higher software costs to GenAI features being bundled into products companies already own. CFOs are rightly nervous about cost volatility.

The input checklist that matters:

- Verified contacts at the top of funnel. Predictable pipeline creation requires contacts that are real. Prospeo's 98% email accuracy and 7-day refresh cycle stabilize the inputs feeding forecasting models, with an 83% enrichment match rate and intent data across 15,000 topics filtering out junk pipeline before it hits a forecast. (If you're comparing vendors, see our breakdown of data enrichment services.)

- Standardized stages with exit criteria agreed on by every revenue team.

- CRM hygiene enforced at the field level - required fields, validation rules, automated updates. (This is also where good contact management software can reduce manual errors.)

- Intent signals layered on pipeline to separate real buying activity from noise. (If you need a practical scoring approach, start with identifying buying signals.)
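The field-level hygiene item in the checklist above can be sketched as a small audit function. This is a minimal Python illustration, not any CRM's API: the field names, the required-field list, and the 30-day staleness limit are all hypothetical.

```python
from datetime import date

# Hypothetical required fields; replace with your own CRM schema.
REQUIRED_FIELDS = ("amount", "close_date", "stage", "primary_contact_email")


def hygiene_issues(opp, today, max_stage_age_days=30):
    """Return a list of data-quality problems for one opportunity record."""
    issues = [f"missing {field}" for field in REQUIRED_FIELDS
              if opp.get(field) in (None, "")]

    close_date = opp.get("close_date")
    if close_date and close_date < today:
        issues.append("close date in the past")

    stage_entered = opp.get("stage_entered")
    if stage_entered and (today - stage_entered).days > max_stage_age_days:
        issues.append("stale stage")

    return issues


# Hypothetical open opportunity with three problems.
opp = {
    "amount": 15_000,
    "close_date": date(2026, 1, 15),
    "stage": "Stage 3",
    "primary_contact_email": "",
    "stage_entered": date(2025, 11, 1),
}
print(hygiene_issues(opp, today=date(2026, 3, 1)))
```

Running a check like this before each forecast call gives you a count of records the model should not be trusted on yet.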

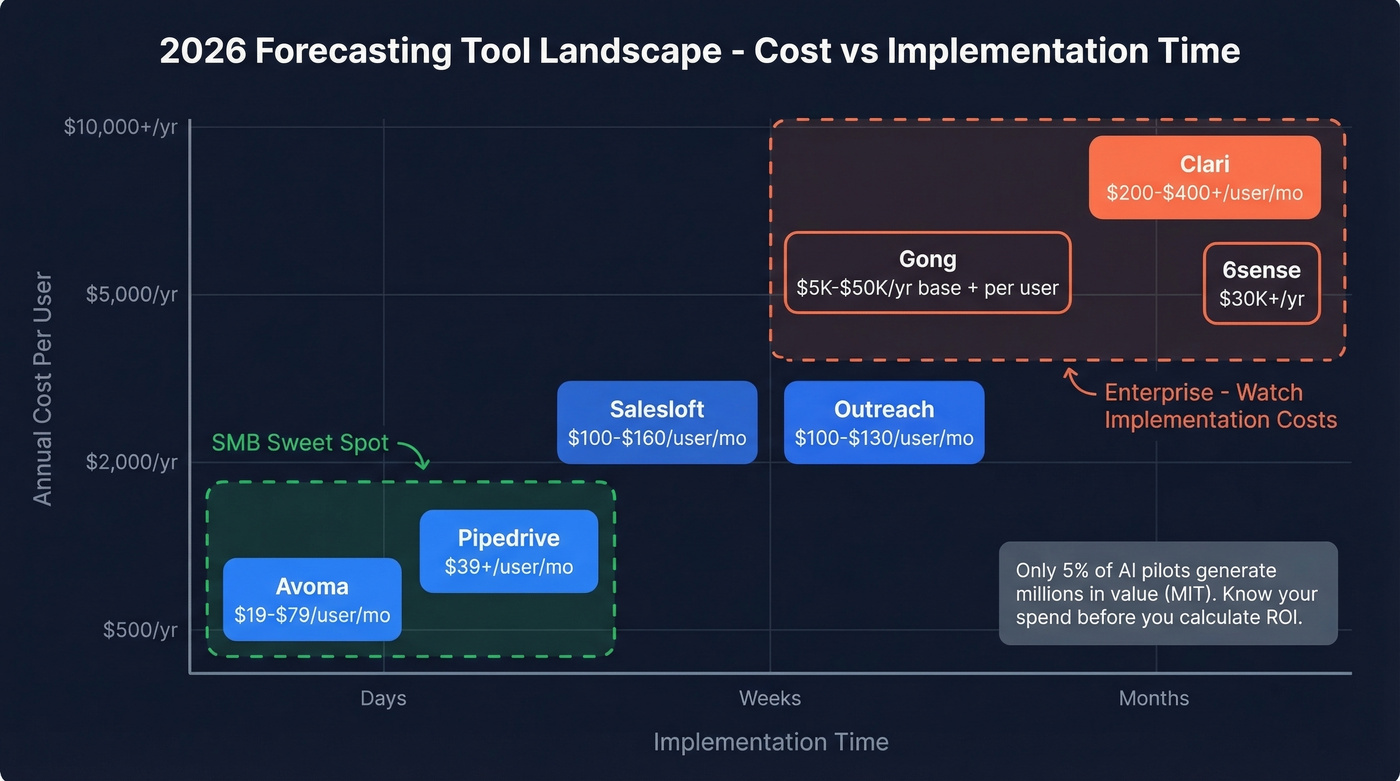

What Forecasting Tools Cost in 2026

Only 5% of AI pilots generate millions in value, per MIT research. Know what you're spending before you calculate whether it's worth it. (For a broader view of the category, compare best sales forecasting tools by pricing and rollout time.)

| Tool | Typical Cost | Notes |
|---|---|---|
| Avoma | $19-$79/user/mo | Forecasting features require the Business plan at $79/user/mo; 14-day trial |
| Pipedrive | From $39/user/mo | Forecasting requires Growth plan |
| Salesloft | $100-$160/user/mo | Engagement + forecasting bundle |
| Outreach | $100-$130/user/mo | Engagement + forecasting bundle |
| Gong | $5K-$50K/yr + $100-$250/user/mo | Conversation intel + forecasting |
| Clari | $200-$400+/user/mo; ~$30K-$50K/yr min | Dedicated forecasting platform |
| 6sense | $30K+/yr | Intent + ABM + forecasting signals |

SMB tools like Avoma or Pipedrive go live in days. Enterprise platforms like Clari or Gong take 6-24+ weeks depending on integrations and data migration. The tool cost is often the smaller number - implementation labor and opportunity cost are where the real spend hides.
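To turn the per-seat prices in the table into an annual number, a quick Python calculation helps. The 20-seat team size is a hypothetical assumption. The price ranges are the ones listed in the table, and implementation labor is deliberately left out because it varies too much to guess.

```python
def annual_cost(per_user_month, seats, platform_fee_year=0):
    """License cost per year: seats x monthly price x 12, plus any flat platform fee."""
    return per_user_month * seats * 12 + platform_fee_year


SEATS = 20  # hypothetical team size

# Low and high ends of the ranges listed in the table.
clari = (annual_cost(200, SEATS), annual_cost(400, SEATS))
gong = (annual_cost(100, SEATS, 5_000), annual_cost(250, SEATS, 50_000))
avoma = (annual_cost(79, SEATS),) * 2  # Business plan, the tier with forecasting

for name, (low, high) in {"Clari": clari, "Gong": gong, "Avoma": avoma}.items():
    print(f"{name}: ${low:,} to ${high:,} per year")
```

For 20 seats this puts Clari at $48,000 to $96,000, Gong at $29,000 to $110,000, and Avoma's Business plan at $18,960 per year, before any implementation work.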

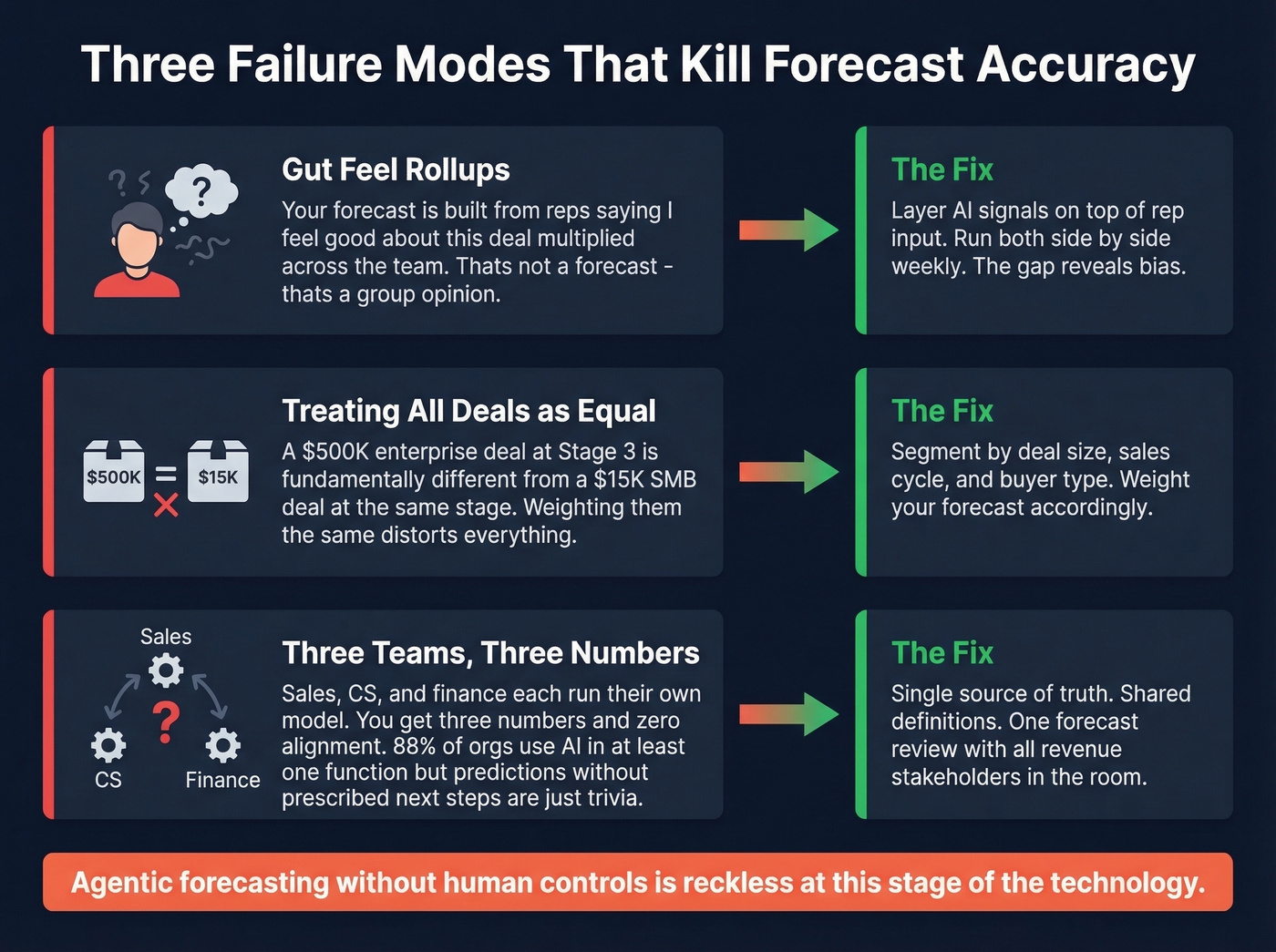

Common Failure Modes

The most common failure is treating the forecast as a rollup of rep gut feel. If your number is built from "I feel good about this deal" multiplied across the team, you don't have a forecast - you have a group opinion. This gets worse when CRM data is messy: missing close dates, stale stages, contacts that bounced six months ago still sitting in active opportunities. (If this sounds familiar, start by diagnosing sales pipeline challenges before you add more tooling.)

Equally damaging is treating all deals in a stage as equal. A $500K enterprise deal at Stage 3 is fundamentally different from a $15K SMB deal at the same stage. Weight accordingly.
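One simple way to weight accordingly is to key historical win rates by segment and stage instead of by stage alone. The Python sketch below assumes you have such rates. The two values shown are made up for illustration and should be replaced with your own closed-won history.

```python
# Hypothetical historical win rates by (segment, stage); not benchmarks.
WIN_RATES = {
    ("enterprise", "Stage 3"): 0.20,
    ("smb", "Stage 3"): 0.35,
}


def weighted_pipeline(deals, win_rates=WIN_RATES):
    """Expected revenue: each deal's amount times its segment-and-stage win rate."""
    return sum(d["amount"] * win_rates[(d["segment"], d["stage"])] for d in deals)


deals = [
    {"name": "Enterprise deal", "amount": 500_000, "segment": "enterprise", "stage": "Stage 3"},
    {"name": "SMB deal", "amount": 15_000, "segment": "smb", "stage": "Stage 3"},
]

print(f"Weighted Stage 3 pipeline: ${weighted_pipeline(deals):,.0f}")
```

With these assumed rates, the two Stage 3 deals contribute roughly $100,000 and $5,250 of expected revenue. A single blended Stage 3 rate would misstate both.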

And if sales, CS, and finance each run their own forecast model, you'll get three numbers and zero alignment. 88% of organizations now use AI in at least one function, and 23% are expanding agentic systems - but a prediction without a prescribed next step is just trivia. Let's be blunt: agentic forecasting without human controls is reckless at this stage of the technology. (If you're building the operating cadence, borrow the KPI set from sales operations metrics.)

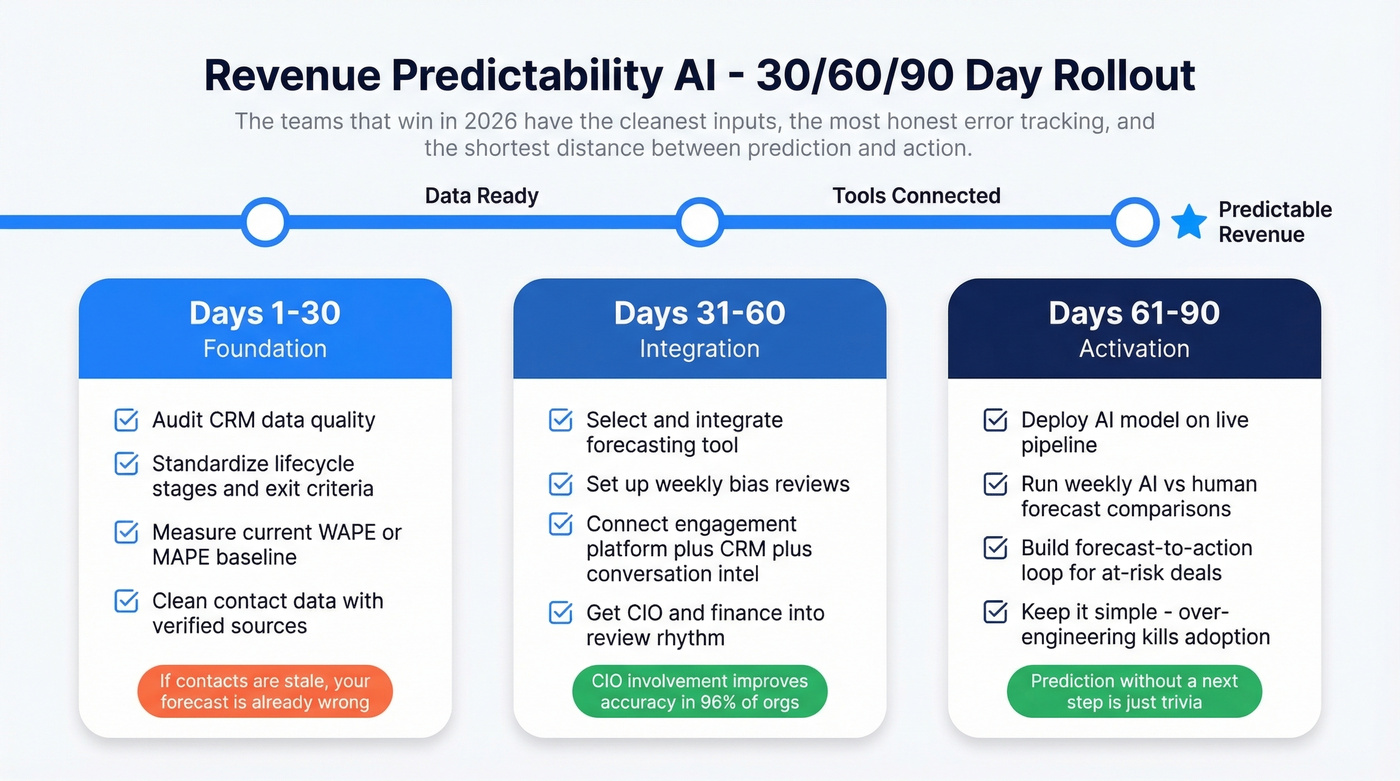

The 30/60/90-Day Rollout

Days 1-30: Audit CRM data quality. Standardize lifecycle stages and exit criteria. Measure your current WAPE or MAPE to establish a baseline. Clean contact data with verified sources - if you're feeding stale emails and dead phone numbers into your pipeline, your forecast is already wrong before the model touches it. (If you want a template you can adapt, use this 30-60-90 day plan structure.)

Days 31-60: Select and integrate your forecasting tool. Set up weekly bias reviews and monthly accuracy tracking. Connect engagement platform, CRM, and conversation intelligence into a single data flow. Get CIO and finance into the review rhythm.

Days 61-90: Deploy the AI model on live pipeline. Run weekly comparisons of AI output versus human judgment. Build the forecast-to-action loop: when the model flags a deal at risk, what happens next? Keep it simple - over-engineering at this stage kills adoption.

Revenue predictability AI isn't a single purchase. It's a discipline. The teams that win in 2026 won't be the ones with the fanciest model. They'll be the ones with the cleanest inputs, the most honest error tracking, and the shortest distance between a prediction and an action.

You just read that 87% of enterprises without dedicated forecasting infrastructure missed targets. But the fix isn't another AI dashboard - it's verified contacts, real intent signals, and enrichment that returns 50+ data points per lead at 92% match rates. At $0.01 per email, Prospeo costs less than one missed forecast costs your credibility.

Predictable revenue starts with data you can actually trust.

FAQ

What's the difference between revenue forecasting and revenue predictability?

Forecasting produces a number. Predictability is the system that makes it trustworthy: standardized definitions, clean data, error measurement, and governance. You can forecast without predictability, but you can't trust the result. BCG puts the median AI ROI at 10%, and teams that adopt AI forecasting tools without fixing the underlying system are unlikely to beat it.

Which forecast error metric should I start with?

Start with WAPE if you forecast across multiple segments - it weights errors by segment size so misses on your largest revenue line count most. Add bias tracking in week two to catch systematic over- or under-forecasting before it compounds.

How long until AI forecasting tools show ROI?

Expect 6-24 months for measurable returns. In BCG's finance AI survey, the median ROI is 10%, and roughly one in five organizations reports 20%+. The fastest wins come from fixing data inputs first - not from the model itself.

Can AI fully replace human judgment in forecasting?

Not yet. AI agents fail roughly 65-70% of multi-step tasks in current benchmarks. Use AI for risk signals, scenario ranges, and anomaly detection. Keep humans in the loop for commit decisions and exception handling. Full automation is years away.

How do I make pipeline data clean enough for AI?

Start with verified contact data - Prospeo delivers 98% email accuracy on a 7-day refresh, eliminating stale contacts polluting your pipeline. Then standardize stage definitions, enforce CRM field hygiene, and layer intent signals to separate real buying activity from noise.