SaaS Lead Scoring: Why Most Models Fail and How to Fix Yours

A founder we know built an elaborate scoring model in HubSpot. Perfect prospect = 100, unqualified = below 30. Their biggest deal that quarter? A lead scored 12 - booked a demo from cold outreach, never opened a single email. Meanwhile, a lead scoring 89 turned out to be a grad student researching a thesis. They scrapped the model, went back to three qualification questions, and their close rate tripled.

SaaS lead scoring isn't broken as a concept. It's broken in how most teams implement it.

What You Need (Quick Version)

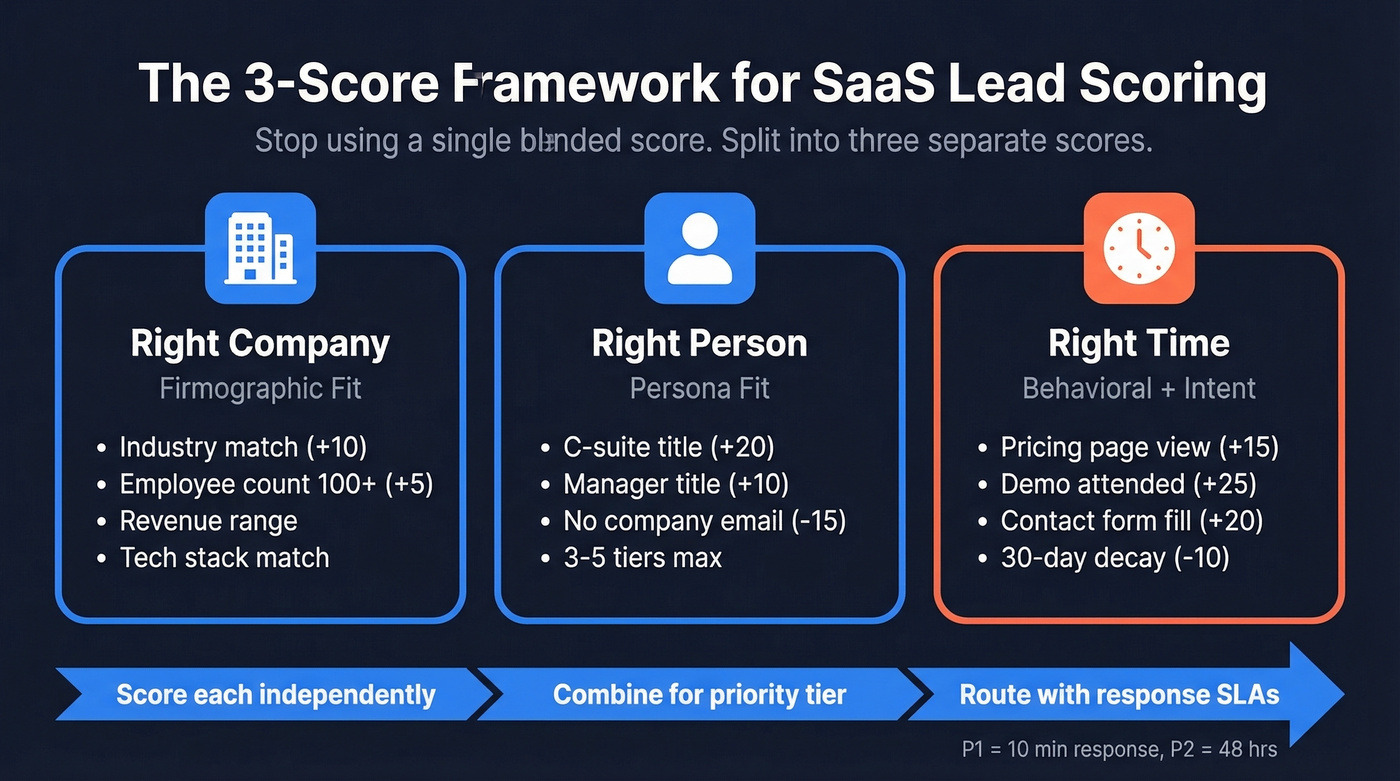

Stop using a single blended score. Split your model into three separate scores: Company Fit, Person Fit, and Timing. Start with manual rules in your existing CRM - you can build this with separate properties in HubSpot or Salesforce. You don't need a $40k/year predictive platform to start.

Before you assign a single point value, fix your data. Up to 21% of prospect data is inaccurate. That's as much as a fifth of your database poisoning every model you build.

What Lead Scoring Actually Means in SaaS

Lead scoring assigns numerical values to leads based on explicit data - title, company size, industry - and implicit data like page visits, email opens, and feature usage. The output is a priority ranking that tells reps where to spend their time.

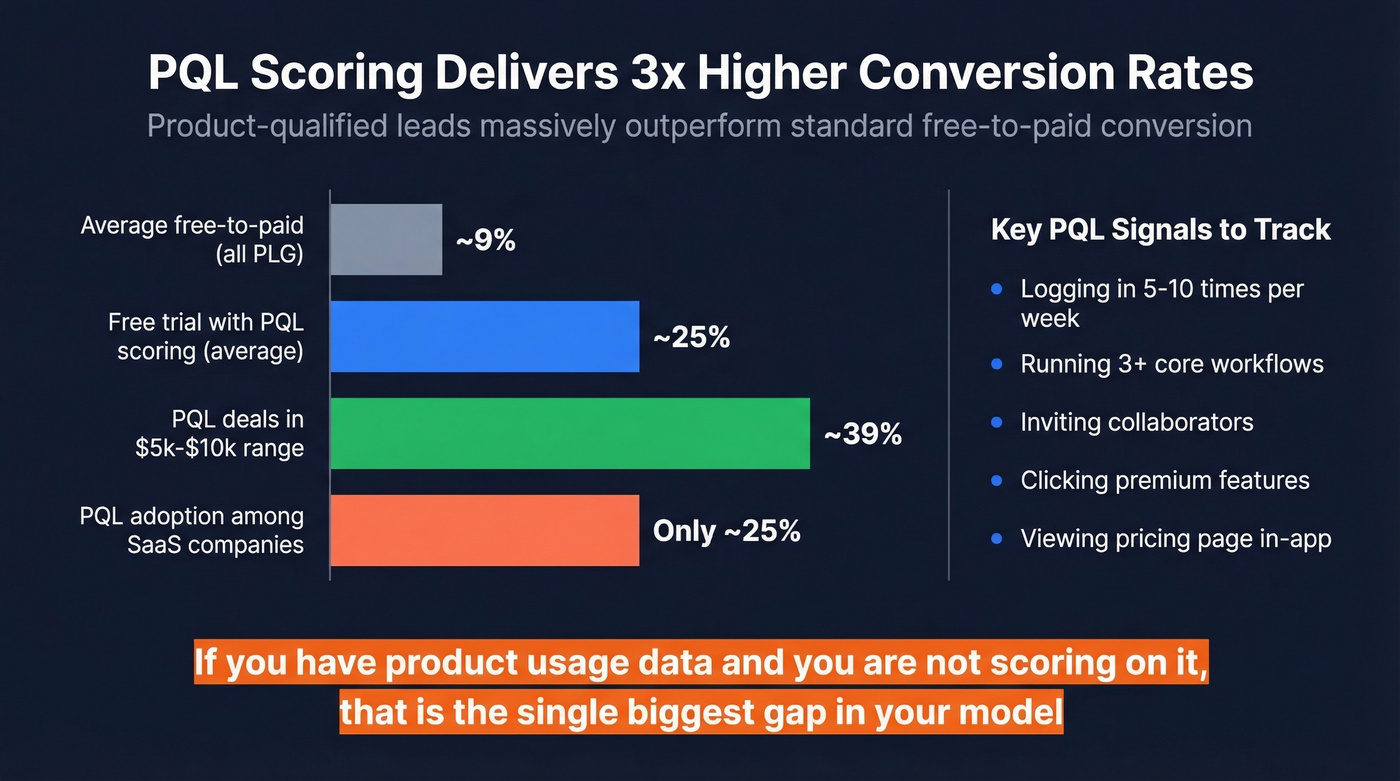

The SaaS-specific wrinkle: you're not just scoring MQLs and SQLs. Product-led companies need PQLs, product-qualified leads scored on in-app behavior. If you're running a freemium motion and you're still treating every trial signup the same, you're leaving money on the table.

Why Most Scoring Models Fail

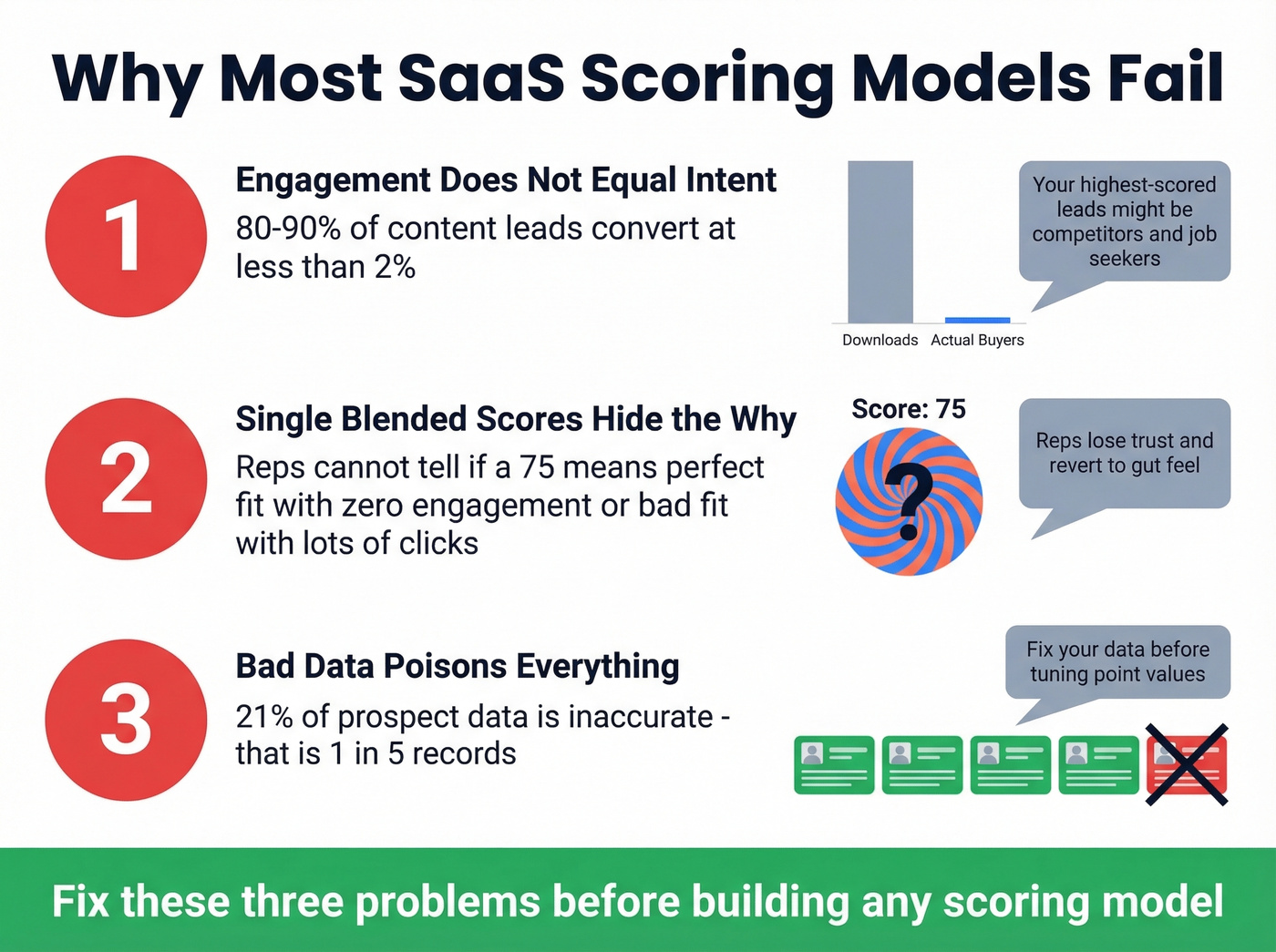

Three failure modes kill scoring models in the wild.

Engagement doesn't equal intent. A prospect who downloads five ebooks and attends two webinars looks great on paper. But 80-90% of leads are typically content leads that convert at less than 2%. One r/b2bmarketing poster summed it up: "Our highest-scored leads were competitors and job seekers - not buyers." Students, competitors, and tire-kickers all trigger the same behavioral signals as real prospects.

Single blended scores hide the "why." When firmographic fit, persona match, and behavioral timing get rolled into one number, reps can't tell why a lead scored 75. Is it a perfect-fit company with zero engagement, or a terrible-fit company whose intern visited the pricing page eight times? Reps lose trust, revert to gut feel, and your model becomes shelfware. According to SiriusDecisions research, teams that align sales and marketing around shared scoring definitions see 24% higher revenue growth and 27% higher profit growth over three years. Adobe saw a 30% improvement in sales acceptance rates just by implementing shared lead definitions.

Bad data poisons everything. If a fifth of your contact records carry stale emails, outdated titles, or incorrect company data, your scores are built on a cracked foundation. We've watched teams spend months tuning point values when the real problem was garbage inputs. Tools like Prospeo verify emails at 98% accuracy on a 7-day refresh cycle - the kind of data hygiene that keeps scores from rotting.


The 3-Score Framework

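The framework asks three questions: is this the right company, the right person, and the right time? Each question produces its own score.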

Right Company

This covers firmographic fit: industry, employee count, revenue range, tech stack. A B2B scoring model might give +10 for target industry match and +5 for 100+ employees. Heap's early scoring model was exactly this simple - firmographic points bucketed into Low/Medium/High, with routing tiers by company size.

Right Person

Persona fit: title, seniority, department. A C-suite contact gets +20; a marketing manager gets +10. Someone with no company email gets -15. Keep it tight. We've seen models with 40+ persona rules that nobody can explain six months later - three to five tiers is plenty.

Right Time

Behavioral and intent signals. In our experience, this is where most teams under-invest. Pricing page views (+15), contact form submissions (+20), live demo attendance (+25), three or more email opens (+8). Critically, this score should decay - no activity in 30 days means -10.

| Signal | Category | Points |
|---|---|---|
| C-suite title | Person Fit | +20 |
| Marketing Manager | Person Fit | +10 |
| 100+ employees | Company Fit | +5 |
| Target industry | Company Fit | +10 |
| Pricing page view | Timing | +15 |
| Contact form fill | Timing | +20 |
| Live demo attended | Timing | +25 |
| Opened 3+ emails | Timing | +8 |
| Unsubscribed | Negative | -15 |
| No company email | Negative | -15 |
| No activity 30 days | Decay | -10 |
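To make the split concrete, here's a minimal Python sketch of the three scores using the point values from the table. Treat it as an illustration, not a HubSpot or Salesforce implementation: the signal names, the `Lead` class, and the choice to count "Unsubscribed" against Timing are our assumptions (the table only labels it "Negative"). It assumes Python 3.9+.

```python
from dataclasses import dataclass, field

# Point values come from the table; the signal names are hypothetical.
COMPANY_POINTS = {"target_industry": 10, "employees_100_plus": 5}
PERSON_POINTS = {"c_suite": 20, "marketing_manager": 10, "no_company_email": -15}
TIMING_POINTS = {
    "pricing_page_view": 15,
    "contact_form_fill": 20,
    "live_demo_attended": 25,
    "opened_3_plus_emails": 8,
    "unsubscribed": -15,  # assumption: counted against Timing
}
DECAY_PENALTY = -10      # applied after 30 days without activity
DECAY_WINDOW_DAYS = 30


@dataclass
class Lead:
    signals: set[str] = field(default_factory=set)
    days_since_last_activity: int = 0


def score_lead(lead: Lead) -> dict[str, int]:
    """Return three separate scores instead of one blended number."""
    def total(points: dict[str, int]) -> int:
        return sum(value for name, value in points.items() if name in lead.signals)

    timing = total(TIMING_POINTS)
    if lead.days_since_last_activity >= DECAY_WINDOW_DAYS:
        timing += DECAY_PENALTY
    return {
        "company_fit": total(COMPANY_POINTS),
        "person_fit": total(PERSON_POINTS),
        "timing": timing,
    }


lead = Lead(
    signals={"target_industry", "employees_100_plus", "c_suite", "pricing_page_view"},
    days_since_last_activity=45,
)
print(score_lead(lead))  # {'company_fit': 15, 'person_fit': 20, 'timing': 5}
```

A rep looking at this output can see the "why" at a glance: strong company and person fit, but timing that has gone cold.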

The operational piece matters as much as point values. LeanData uses priority tiers tied to response SLAs: P1 leads get a 10-minute response, P2 leads get a 48-hour window. Without response SLAs, scoring is just an academic exercise. (If you need a broader refresher, see our lead scoring guide.)
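Here's one way the tier-to-SLA mapping might look in code. The 10-minute and 48-hour windows are the ones quoted above; everything else is a hypothetical sketch. In particular, the thresholds that decide who counts as P1 or P2 are placeholders we made up, not LeanData's rules.

```python
from datetime import datetime, timedelta
from typing import Optional

# Response windows quoted in the text.
RESPONSE_SLA = {"P1": timedelta(minutes=10), "P2": timedelta(hours=48)}

# Hypothetical thresholds; tune these against your own closed-won data.
MIN_COMPANY_FIT = 10
MIN_PERSON_FIT = 10
MIN_TIMING = 15


def priority_tier(company_fit: int, person_fit: int, timing: int) -> Optional[str]:
    """Good fit plus hot timing is P1, good fit alone is P2, everything else is None."""
    good_fit = company_fit >= MIN_COMPANY_FIT and person_fit >= MIN_PERSON_FIT
    if not good_fit:
        return None  # no SLA: leave in nurture
    return "P1" if timing >= MIN_TIMING else "P2"


def response_deadline(tier: Optional[str], created_at: datetime) -> Optional[datetime]:
    """Latest time a rep should respond, or None if the lead has no SLA."""
    if tier is None:
        return None
    return created_at + RESPONSE_SLA[tier]


tier = priority_tier(company_fit=15, person_fit=20, timing=25)
print(tier, response_deadline(tier, datetime(2024, 1, 1, 9, 0)))
# P1 2024-01-01 09:10:00
```

Because fit gates the tier, an intern hammering the pricing page at a bad-fit company never jumps the queue.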

PQLs - The SaaS-Specific Score

PQLs are criminally underused, and they matter most if you're running a free trial or freemium model.

A product-qualified lead fits your ICP and has experienced product value - not just signed up, but actually used the thing. Track these signals: logging in 5-10 times per week, running 3+ core workflows, inviting collaborators, clicking premium features like exports or advanced analytics, and viewing the pricing page from inside the product.
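A rough sketch of a PQL check built on those signals might look like this. The field names are hypothetical stand-ins for whatever your product analytics exposes, we use the low end of the 5-10 logins range as the cutoff, and the "at least three signals" bar is an assumption you'd want to calibrate.

```python
from dataclasses import dataclass


@dataclass
class TrialUsage:
    fits_icp: bool
    logins_per_week: int
    core_workflows_run: int
    invited_collaborators: bool
    clicked_premium_feature: bool
    viewed_pricing_in_app: bool


MIN_SIGNALS = 3  # hypothetical bar: how many value signals make a PQL


def pql_signals(usage: TrialUsage) -> list:
    """List which of the five product-value signals this account has hit."""
    checks = {
        "frequent_logins": usage.logins_per_week >= 5,
        "core_workflows": usage.core_workflows_run >= 3,
        "invited_collaborators": usage.invited_collaborators,
        "premium_feature_click": usage.clicked_premium_feature,
        "in_app_pricing_view": usage.viewed_pricing_in_app,
    }
    return [name for name, hit in checks.items() if hit]


def is_pql(usage: TrialUsage) -> bool:
    """ICP fit is required; usage alone does not qualify a lead."""
    return usage.fits_icp and len(pql_signals(usage)) >= MIN_SIGNALS


trial = TrialUsage(True, 6, 3, True, False, False)
print(is_pql(trial), pql_signals(trial))
# True ['frequent_logins', 'core_workflows', 'invited_collaborators']
```

Returning the list of signals alongside the verdict gives reps a talking point for the first call.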

The benchmarks are compelling. PQL adoption sits at only ~25% among SaaS companies, but those using PQLs see roughly 3x higher conversion rates. Free trials with PQL scoring convert at ~25% on average, jumping to 39% for deals in the $5k-$10k range. Compare that to the ~9% average free-to-paid conversion across all PLG models. If you have product usage data and you're not scoring on it, that's the single biggest gap in your model.

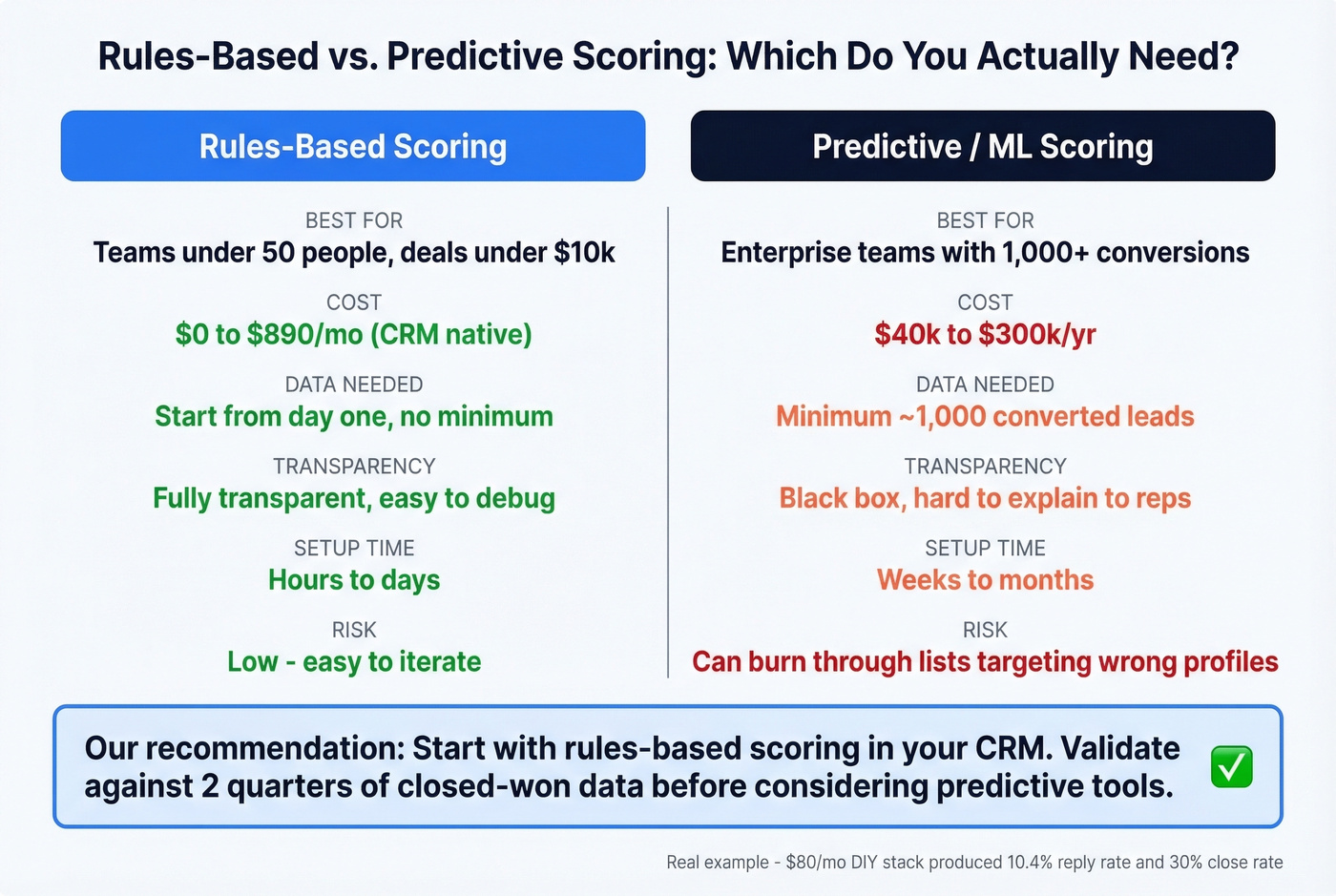

Rules-Based vs. Predictive

Here's the thing: if your average deal size is under five figures and your team is under 50 people, you almost certainly don't need predictive scoring. Manual rules-based scoring - the kind you build in HubSpot or Salesforce with if/then logic - is transparent, easy to debug, and works from day one. Predictive scoring needs ~1,000 converted leads to produce reliable models. Without that volume, the algorithm is guessing. (If you're considering ML anyway, start with B2B predictive analytics basics.)
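If it helps, that rule of thumb fits in a few lines of Python. This is just the heuristic from the paragraph above written down, with "under five figures" read as under $10,000. The function name and return labels are ours.

```python
MIN_CONVERTED_LEADS = 1_000   # volume predictive models need, per the text
FIVE_FIGURES_USD = 10_000
SMALL_TEAM_SIZE = 50


def recommend_scoring_approach(avg_deal_size_usd: float, team_size: int,
                               converted_leads: int) -> str:
    """Heuristic only: returns 'rules-based' or 'consider-predictive'."""
    if converted_leads < MIN_CONVERTED_LEADS:
        return "rules-based"  # not enough history for a reliable model
    if avg_deal_size_usd < FIVE_FIGURES_USD and team_size < SMALL_TEAM_SIZE:
        return "rules-based"  # small deals, small team: keep it simple
    return "consider-predictive"


print(recommend_scoring_approach(8_000, 20, 5_000))     # rules-based
print(recommend_scoring_approach(50_000, 200, 2_500))   # consider-predictive
```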

One r/SaaSSales poster put it plainly: they worried AI scoring tools would "burn through our list" by targeting wrong profiles. That fear is valid - B2B teams waste up to one-third of resources chasing accounts that never convert.

A practical middle ground exists. One founder built a signal-based scoring system for $80/month using Google Alerts for signals, Claude API for scoring and email drafting, and an email tool for sequencing. After two months: 10.4% reply rate, 3-4 meetings per week, 30% close rate. Real pipeline from a stack cheaper than a team lunch. (If you're building outbound alongside scoring, these sales prospecting techniques help.)

Skip predictive tools until you've validated manual rules against at least two quarters of closed-won data. If your rules-based model is already routing leads accurately, throwing ML at the problem won't magically double your conversion rate - it'll just triple your software bill.
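Validating against closed-won data can be as simple as checking whether higher tiers actually close more often. Below is a minimal sketch, assuming you can export each lead's tier and outcome from your CRM. The sample records are made up purely to show the shape of the check.

```python
from collections import defaultdict


def close_rate_by_tier(leads):
    """leads: iterable of (tier, closed_won) pairs exported from your CRM."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for tier, closed_won in leads:
        totals[tier] += 1
        if closed_won:
            wins[tier] += 1
    return {tier: wins[tier] / totals[tier] for tier in totals}


# Made-up sample data for illustration only.
history = [
    ("high", True), ("high", True), ("high", False), ("high", False),
    ("low", True), ("low", False), ("low", False), ("low", False),
]
print(close_rate_by_tier(history))  # {'high': 0.5, 'low': 0.25}
```

If your top tier doesn't close at a clearly higher rate than your bottom tier, the rules need work before any ML does.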

What Scoring Tools Cost

| Tool | What You Get | Cost |
|---|---|---|
| HubSpot Professional | Manual scoring | $890/mo (3 seats) |
| HubSpot Enterprise | Predictive scoring | $3,600/mo (10-seat min) + $3,500 onboard |
| Salesforce Einstein | ML-based scoring | ~$40k+/yr all-in (10 reps) |
| 6sense | Intent + account scoring | $60k-$300k/yr |
| DIY stack | Signal-based scoring | ~$80/mo |
| Prospeo | Verified contacts + enrichment | Free tier to ~$0.01/email |

A 10-rep Salesforce Einstein deployment runs $40k+ per year before you've scored a single lead. 6sense contracts start around $60k and climb fast. Most SaaS companies under 50 people don't need any of these. Start with manual rules in your CRM, validate against closed-won deals, and only upgrade when you've genuinely outgrown the model.
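To compare these on equal footing, it helps to annualize everything. The quick calculation below uses only the list prices from the table; the helper function is ours, and real quotes will vary with seats, discounts, and contract terms.

```python
def first_year_cost(monthly: float = 0, one_time: float = 0, annual: float = 0) -> float:
    """Twelve months of fees plus any one-time and annual charges."""
    return monthly * 12 + one_time + annual


costs = {
    "DIY stack": first_year_cost(monthly=80),
    "HubSpot Professional": first_year_cost(monthly=890),
    "Salesforce Einstein (low end)": first_year_cost(annual=40_000),
    "HubSpot Enterprise": first_year_cost(monthly=3_600, one_time=3_500),
    "6sense (low end)": first_year_cost(annual=60_000),
}
for tool, cost in costs.items():
    print(f"{tool}: ${cost:,.0f}")
# DIY stack: $960
# HubSpot Professional: $10,680
# Salesforce Einstein (low end): $40,000
# HubSpot Enterprise: $46,700
# 6sense (low end): $60,000
```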

For the data layer underneath your scoring model, Prospeo's enrichment API returns 50+ data points per contact at a 92% match rate - firmographics, technographics, and intent signals across 15,000 topics. That's the foundation your point values actually sit on. (If you're comparing vendors, see data enrichment services.)


FAQ

How many leads do I need before scoring models work?

Start with manual rules from day one - no minimum needed. Predictive and ML-based scoring requires ~1,000 converted leads to produce reliable models. Below that threshold, rules-based scoring in your CRM outperforms any algorithm.

Should I buy a scoring tool or build in my CRM?

Build in your CRM first. HubSpot Professional ($890/mo) and Salesforce both support native scoring rules. Only evaluate dedicated tools after you've validated criteria against actual closed-won deals. Most B2B teams can meet their scoring needs with native CRM functionality until they hit real scale.

What's the fastest way to improve lead score accuracy?

Fix your data before touching your model. With up to 21% of prospect data inaccurate, bad inputs are where most models silently break, so start by verifying emails and enriching contacts. A 7-day data refresh cycle prevents the decay that makes scores unreliable within weeks.

How does SaaS lead scoring differ from traditional B2B scoring?

SaaS scoring adds product-usage signals - trial logins, feature adoption, workspace invites - that traditional models ignore. Companies using PQL scoring see roughly 3x higher conversion rates than those relying on marketing-qualified leads alone. If you run a free trial or freemium motion, layering product signals into your model is the single highest-leverage change you can make.