How to Build an Inbound Lead Scoring Model That Sales Actually Uses

Marketing hit their MQL target last month. Sales hit 60% of quota. Nobody's talking about why those two numbers don't match - but the answer is almost always the same: the scoring model is broken, and it's been broken since launch.

Why Inbound Scoring Is Different

Only 44% of B2B organizations use lead scoring at all. The other 56% treat every inbound lead the same - and it shows in their conversion rates. Companies that score leads see 138% ROI versus 78% without scoring.

Inbound leads self-select. A VP of Engineering at a $50M SaaS company and a marketing intern researching a term paper can both download the same whitepaper. Unlike outbound, where you chose the titles and industries, inbound gives you richer behavioral data but less targeting control. That behavioral richness is the advantage and the trap. You get pricing page visits, content downloads, webinar attendance, chatbot conversations - signals outbound doesn't generate. But without a model that separates curiosity from buying intent, you're routing noise to your sales team.

Speed compounds the problem. Deals closed within 50 days carry a 47% win rate versus 20% or lower after that window. Every day a real buyer sits in a nurture sequence because your scoring model missed them is money left on the table.

What You Need (Quick Version)

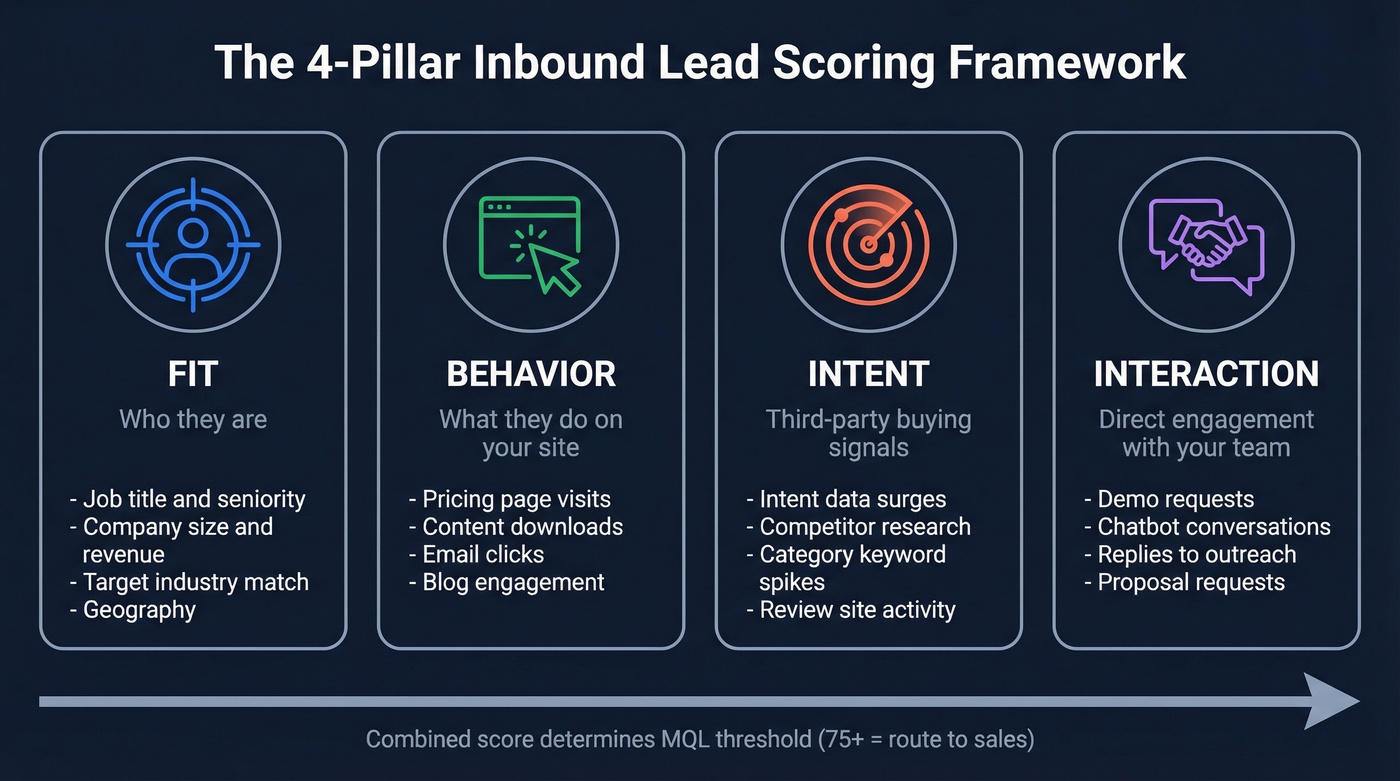

- Use a 4-pillar scoring model - fit, behavior, intent, and interaction. Copy the point-value rubric below. (If you want the broader context, see our lead scoring guide.)

- Set your MQL threshold at 75+ on a 0-100+ scale, then adjust based on sales capacity and quarterly feedback loops.

- Clean your data before you score it. Scoring stale contacts produces confidently wrong prioritization, and that's worse than not scoring at all.

Why Most Scoring Models Fail

Here's the thing: most scoring models don't fail because the math is wrong. They fail because the inputs are garbage and the thresholds are political.

Roughly 32% of MQLs turn out to be unusable - disconnected numbers, role changes, ICP mismatches. In our experience, the model breaks down at the data layer long before it breaks down at the logic layer. Marketing teams set the MQL bar too low to inflate growth metrics. A free checklist download shouldn't be an MQL, but it is at plenty of companies because it makes the dashboard look good. The consensus on r/sales echoes this: page views and email opens aren't buying signals. They're breathing signals.

The 4-Pillar Scoring Framework

The model that works scores across four dimensions: fit (who they are), behavior (what they do on your site), intent (third-party buying signals), and interaction (direct engagement with your team). Let's break each one down.

Fit + Behavior Scoring

| Signal | Points |
|---|---|
| VP+ at target company | +25 |
| Director at target company | +15 |
| Target industry | +15 |
| Revenue $10M-$500M | +15 |
| Company <10 employees | -20 |
| Intern / student title | -15 |
| Non-decision-maker title | -10 |
| Pricing page visit | +40 |
| Case study download | +15 |
| Email open | +3 |
| Email CTA click | +10 |
| Webinar attendance | +20 |
| Blog visit | +2 |

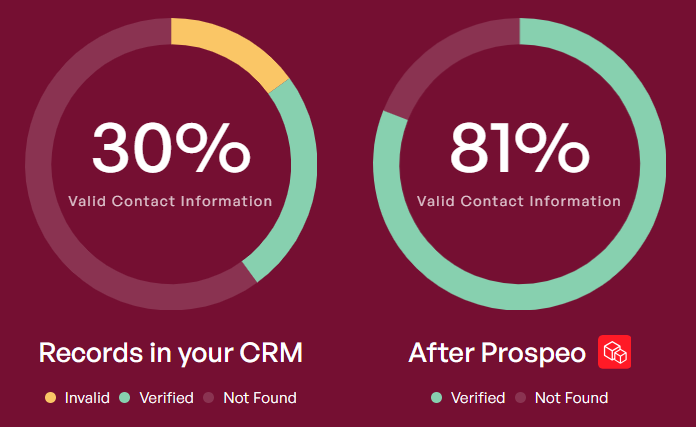

Fit scoring only works if the data behind it is accurate. Enrich inbound leads with verified contact data - Prospeo returns 50+ data points per record at 98% email accuracy - so your fit criteria score against real information, not whatever the lead typed into a form field. (If you're comparing vendors, start with data enrichment services.)

Negative scoring matters just as much as positive. One team we spoke with saw a 13% lift in MQL-to-meeting rate just by tightening title and seniority filters while lowering the activity threshold. Think about it: an intern at a 5-person agency who downloads three case studies shouldn't score higher than a VP who visited your pricing page once. The negative weights for tiny companies and non-buyer titles keep your model honest.

Intent + Interaction Scoring

| Signal | Points |
|---|---|
| Live demo attended | +50 |
| Proposal request | +60 |
| Reply to outreach | +20 |
| Chatbot conversation | +10 |
| Third-party intent surge | +20 |

Cap repeat behaviors - don't let someone score 300 points by opening 100 emails. And build in decay: halve any action older than 6 months, zero it after 12. (More on separating noise from real intent in identifying buying signals.)
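Here's a minimal Python sketch of capping and decay. The per-signal caps in `MAX_COUNTED` and the 182/365-day cutoffs are assumptions for illustration: the rule above only says to cap repeats, halve at 6 months, and zero at 12. Swap in whatever limits fit your funnel.

```python
from datetime import date

# Point values come from the rubric; the caps are hypothetical.
POINTS = {"email_open": 3, "blog_visit": 2, "case_study_download": 15}
MAX_COUNTED = {"email_open": 5, "blog_visit": 5, "case_study_download": 2}


def decay_factor(event_date: date, today: date) -> float:
    """Full value up to ~6 months, half value up to ~12, zero after."""
    age_days = (today - event_date).days
    if age_days > 365:
        return 0.0
    if age_days > 182:
        return 0.5
    return 1.0


def behavior_score(events: list[tuple[str, date]], today: date) -> float:
    """Sum points for (signal, date) events, newest first, honoring caps."""
    counted: dict[str, int] = {}
    total = 0.0
    for signal, when in sorted(events, key=lambda e: e[1], reverse=True):
        if counted.get(signal, 0) >= MAX_COUNTED.get(signal, 1):
            continue  # repeats beyond the cap earn nothing
        counted[signal] = counted.get(signal, 0) + 1
        total += POINTS.get(signal, 0) * decay_factor(when, today)
    return total
```

With these assumed caps, 100 recent email opens score 15 points, not 300. Counting the newest events first means the cap keeps the actions that have decayed least.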

Worked Example

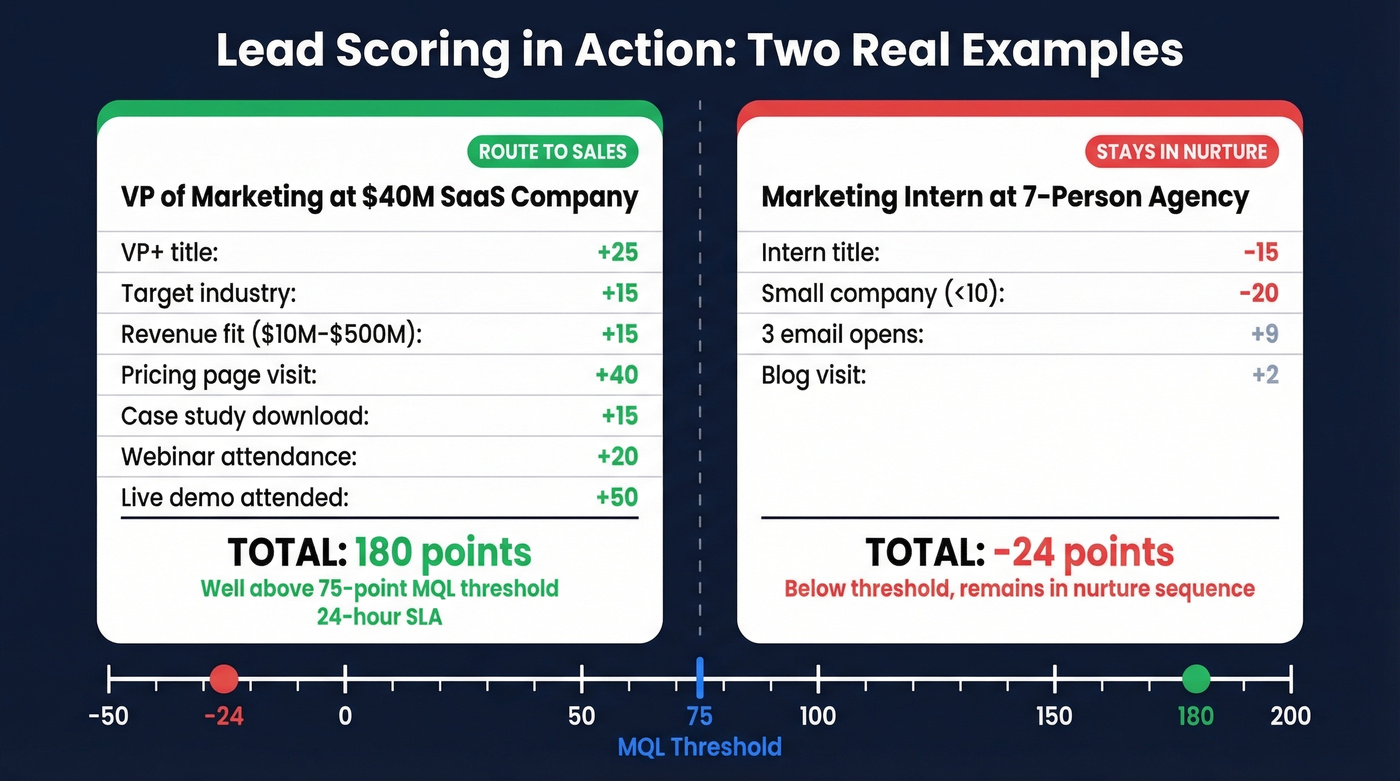

A VP of Marketing at a $40M SaaS company in your target industry visits your pricing page, downloads a case study, attends a webinar, and attends a live demo:

VP+ title (+25) + target industry (+15) + revenue fit (+15) + pricing page (+40) + case study (+15) + webinar (+20) + live demo (+50) = 180 points. Well above the 75-point MQL threshold. Route to sales immediately with a 24-hour SLA.

Now compare that to a marketing intern at a 7-person agency who opened three emails and visited a blog post: intern title (-15) + small company (-20) + 3 email opens (+9) + blog visit (+2) = -24 points. That lead stays in nurture. The model did its job.
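If you want to sanity-check the arithmetic, the two leads above can be run through the rubric in a few lines of Python. This is a sketch, not a CRM integration: the signal keys (`vp_plus_title`, `live_demo_attended`, and so on) are names made up for illustration, and caps and decay are left out to keep it short.

```python
# Point values copied from the two rubric tables; key names are hypothetical.
RUBRIC = {
    "vp_plus_title": 25,
    "director_title": 15,
    "target_industry": 15,
    "revenue_10m_500m": 15,
    "company_under_10_employees": -20,
    "intern_or_student_title": -15,
    "non_decision_maker_title": -10,
    "pricing_page_visit": 40,
    "case_study_download": 15,
    "email_open": 3,
    "email_cta_click": 10,
    "webinar_attendance": 20,
    "blog_visit": 2,
    "live_demo_attended": 50,
    "proposal_request": 60,
    "reply_to_outreach": 20,
    "chatbot_conversation": 10,
    "third_party_intent_surge": 20,
}


def score(signals: dict[str, int]) -> int:
    """Total points for a lead given {signal_name: occurrence_count}."""
    return sum(RUBRIC[name] * count for name, count in signals.items())


vp = {
    "vp_plus_title": 1,
    "target_industry": 1,
    "revenue_10m_500m": 1,
    "pricing_page_visit": 1,
    "case_study_download": 1,
    "webinar_attendance": 1,
    "live_demo_attended": 1,
}
intern = {
    "intern_or_student_title": 1,
    "company_under_10_employees": 1,
    "email_open": 3,
    "blog_visit": 1,
}

print(score(vp))      # 180
print(score(intern))  # -24
```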

32% of MQLs fail because the underlying data is wrong - stale titles, bad emails, companies that no longer fit. Prospeo enriches every inbound lead with 50+ verified data points at 98% email accuracy, so your fit scores reflect reality, not form-fill fiction. Data refreshes every 7 days, not every 6 weeks.

MQL Thresholds and Routing SLAs

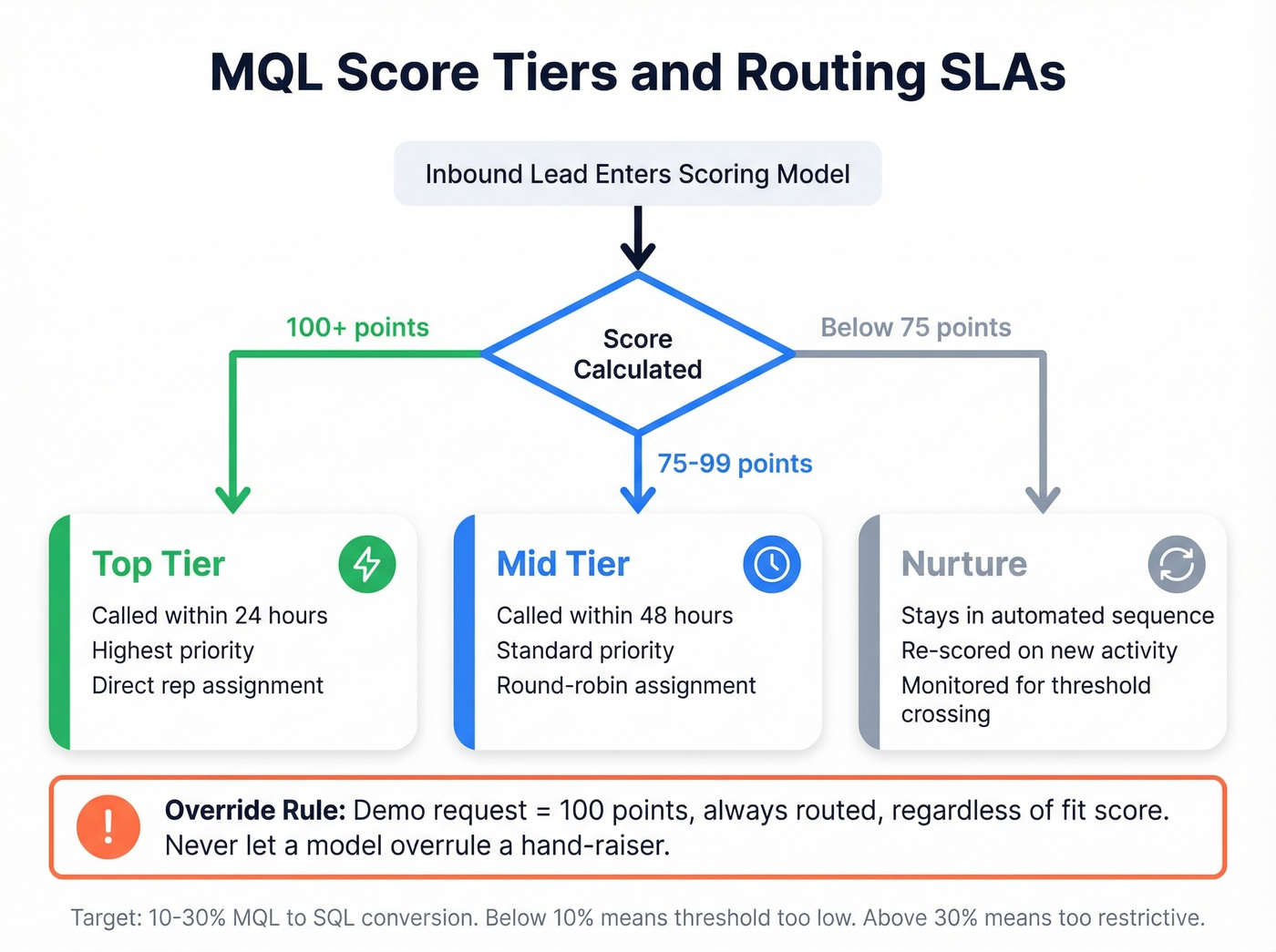

Set your initial MQL threshold at 75 on a 0-100+ scale. If reps are drowning, raise it. If they're starving, lower it.

If you don't have a shared definition of what happens after MQL, fix that first with a simple lead status workflow.

One rule we don't bend: a demo request equals 100 points, always routed, regardless of fit score. With average B2B demo CPLs running $600-$800, every misrouted lead is expensive. If someone raises their hand and says "show me the product," a scoring model shouldn't overrule that. Qualification is a human conversation, not a formula. (If your reps need consistency here, use a product demo checklist.)

Tie score tiers to SLA windows:

- Top-tier leads (100+): Called within 24 hours.

- Mid-tier (75-99): Called within 48 hours.

- Below 75: Stays in nurture until behavior pushes them over.
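The tiers above, plus the demo-request rule, fit in one small routing function. This is a sketch under assumptions: the `route` name, the `("sales", 24)` return shape, and treating a demo request as a floor of 100 points are one reading of the rule, not a prescribed implementation.

```python
from typing import Optional

MQL_THRESHOLD = 75  # starting point; adjust to sales capacity
TOP_TIER = 100


def route(score: int, demo_requested: bool = False) -> tuple[str, Optional[int]]:
    """Return (queue, sla_hours) for a lead."""
    if demo_requested:
        # Assumption: a demo request lifts the lead to at least 100 points,
        # so it is always routed regardless of fit score.
        score = max(score, TOP_TIER)
    if score >= TOP_TIER:
        return ("sales", 24)
    if score >= MQL_THRESHOLD:
        return ("sales", 48)
    return ("nurture", None)
```

A lead at 80 points lands in the 48-hour queue. A lead at -24 stays in nurture unless they request a demo, in which case they go straight to the 24-hour queue.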

Strong programs convert 10-30% of MQLs to SQLs. Below 10% means your threshold is too low and reps are getting unqualified leads. Above 30% usually means the threshold is too restrictive and you're leaving pipeline on the table. (Benchmarks: average B2B lead conversion rate.)
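A quick way to turn that benchmark into a quarterly check, sketched in Python. The function name and the wording of the returned messages are illustrative. Only the 10% and 30% cutoffs come from the benchmark above.

```python
def mql_to_sql_health(mqls: int, sqls: int) -> str:
    """Compare an MQL-to-SQL conversion rate against the 10-30% band."""
    if mqls <= 0:
        raise ValueError("need at least one MQL to compute a rate")
    rate = sqls / mqls
    if rate < 0.10:
        return "below 10%: threshold is probably too low"
    if rate > 0.30:
        return "above 30%: threshold is probably too restrictive"
    return "within the 10-30% band"
```

For example, 12 SQLs from 200 MQLs is a 6% rate, which points to a threshold that is too low.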

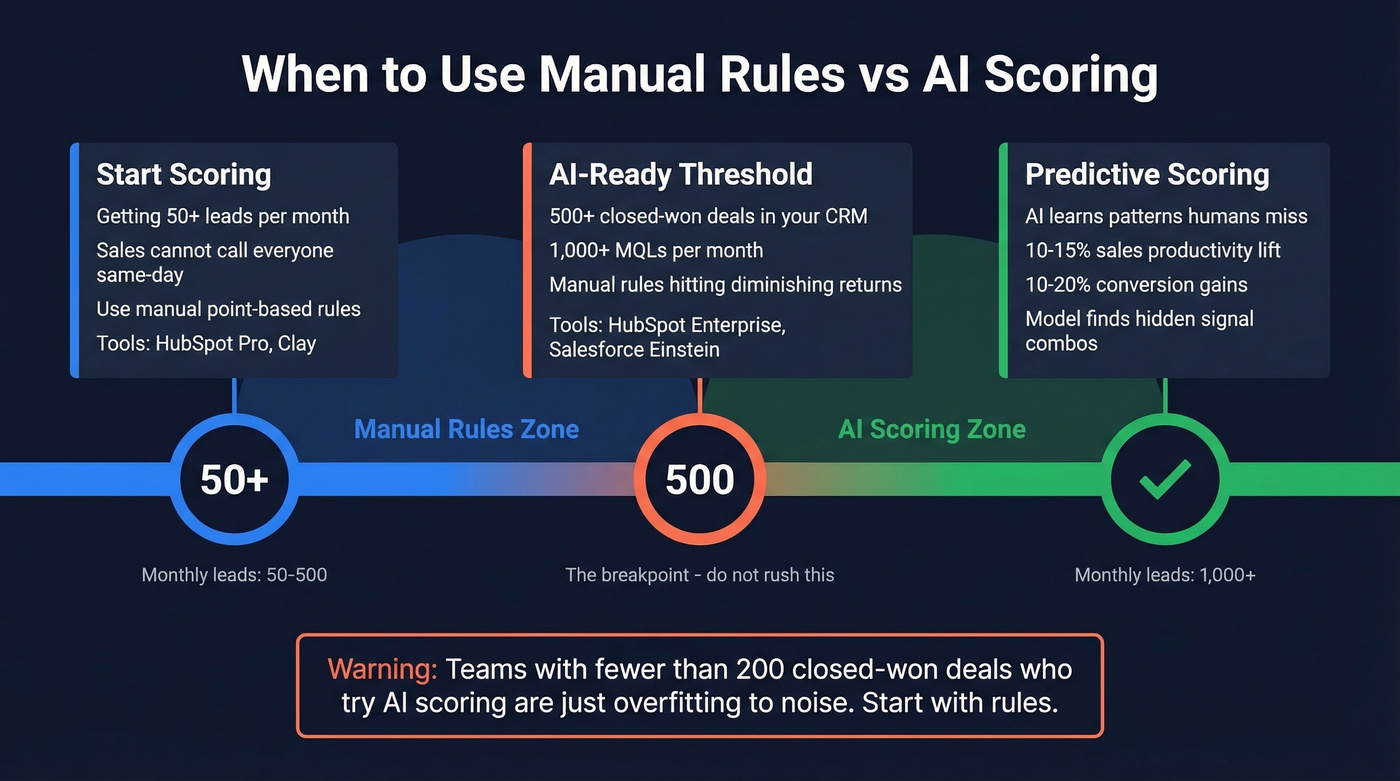

When to Move to AI Scoring

Rule-based scoring works until it doesn't. The breakpoint is data volume: you need at least 500 closed-won deals before a predictive model has enough signal to outperform manual rules. We've seen teams waste months tuning AI models built on fewer than 200 closed-won deals - the output was just overfitting to noise.

Once you cross that threshold, AI scoring simplifies routing: 95+ = highly likely to convert, 50-94 = likely, below 50 = unlikely. The model learns patterns humans miss - like the combination of company growth rate, tech stack, and content consumption sequence that predicts a deal. Forrester research shows a 10-15% sales productivity lift alongside 10-20% conversion gains from AI-driven models. But don't rush it. Most teams under 1,000 MQLs/month build effective models with manual rules in HubSpot or Clay (which also supports AI-driven classification for edge cases where deterministic rules fall short). (If you're evaluating the approach, see B2B predictive analytics.)
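The readiness check and the three AI-score buckets are simple enough to sketch in Python. The function names are hypothetical, and real predictive tools expose their own score fields, so treat this as a picture of the logic, not any vendor's API.

```python
MIN_CLOSED_WON = 500  # breakpoint suggested above for predictive scoring


def ready_for_predictive(closed_won_deals: int) -> bool:
    """True once there is enough closed-won history to train on."""
    return closed_won_deals >= MIN_CLOSED_WON


def ai_tier(score: int) -> str:
    """Bucket a predictive score into the three routing tiers."""
    if score >= 95:
        return "highly likely"
    if score >= 50:
        return "likely"
    return "unlikely"
```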

Skip the six-figure intent platforms if your average deal size is under $10K and you're running fewer than 500 inbound leads a month. A well-tuned HubSpot Pro scoring model with clean data will outperform a poorly fed 6sense instance every time. The bottleneck is never the tool - it's the data going into it.

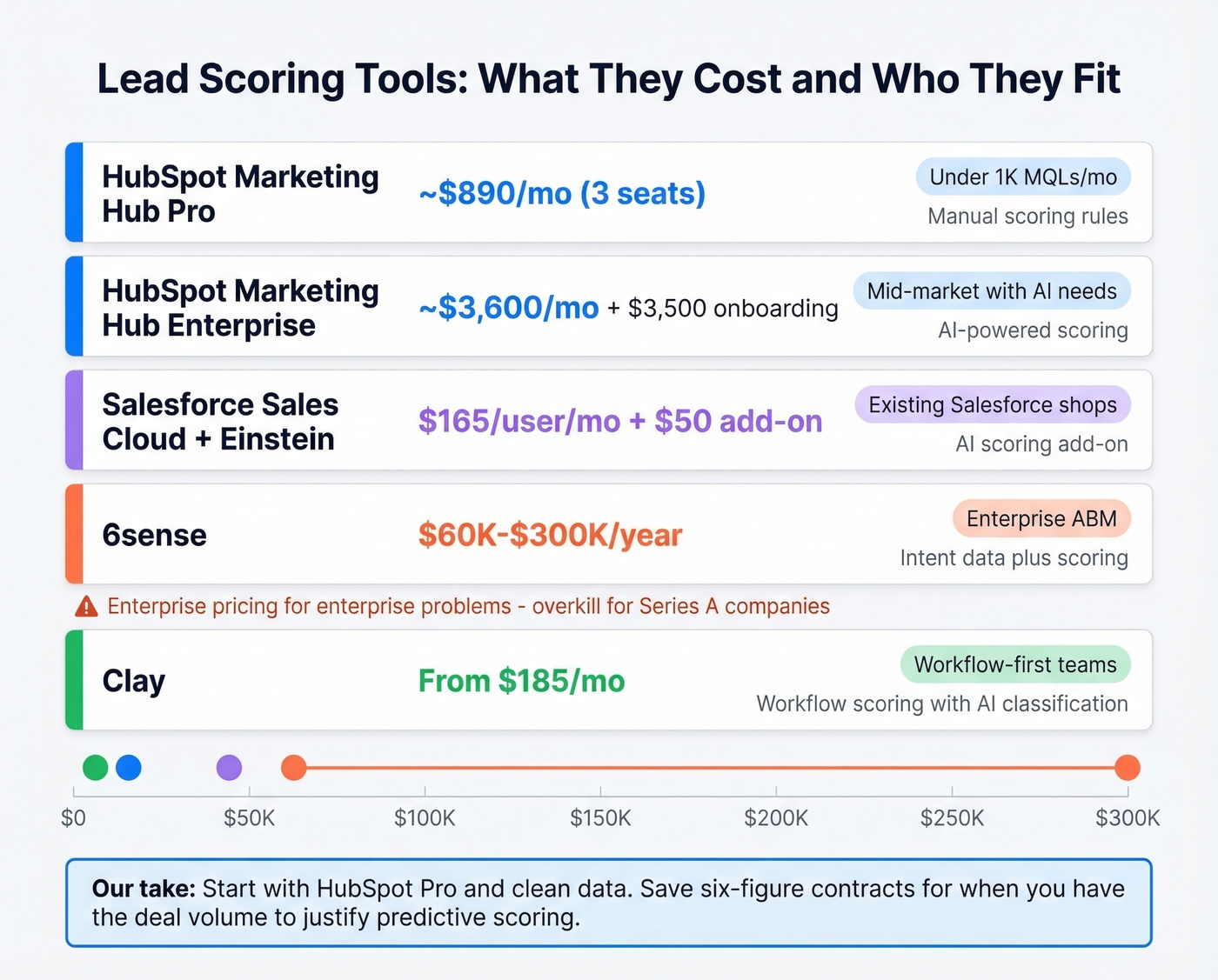

What Scoring Tools Cost

| Tool | What You Get | Typical Cost | Best For |
|---|---|---|---|
| HubSpot Marketing Hub Pro | Manual scoring | ~$890/mo (3 seats) | Teams under 1K MQLs/mo |
| HubSpot Marketing Hub Enterprise | AI scoring | ~$3,600/mo (10 seats) + $3,500 onboarding | Mid-market with AI needs |
| Salesforce Sales Cloud + Einstein | AI scoring | $165/user/mo + $50 add-on | Existing Salesforce shops |
| 6sense | Intent + scoring | $60K-$300K/yr | Enterprise ABM |
| Clay | Workflow scoring | From $185/mo | Workflow-first teams |

6sense is enterprise-grade pricing for enterprise-grade problems. For Series A companies running 200 inbound leads a month, HubSpot Pro handles scoring fine. Save the six-figure contracts for when you've outgrown manual rules and have the deal volume to justify predictive.

Fix Your Data Before You Score

This is the part most guides skip, and it's the part that matters most.

Scoring bad data is worse than not scoring at all. A lead with an outdated title gets fit-scored against a role they left eight months ago. A bounced email address means your "hot lead" is unreachable. The fix isn't a better model - it's better data going into the model. Prospeo refreshes its 300M+ profile database every 7 days and returns 50+ data points per enrichment, so the contacts entering your model are actually current and reachable. Enrich and verify records before they enter your scoring workflow, and the points actually mean something. (Related: lead enrichment.)

Fit scoring collapses when you can't verify title, seniority, company size, or revenue. Prospeo returns all of it - 83% enrichment match rate, 92% via API - for roughly $0.01 per lead. Plug it into HubSpot or Salesforce and let your scoring model work with clean inputs instead of guesswork.

FAQ

When should we start scoring inbound leads?

Once you're getting 50+ inbound leads per month and sales can't call them all same-day. Below that volume, just call everyone - the overhead of building and maintaining a scoring model isn't worth it until volume forces prioritization.

How often should we recalibrate?

Quarterly at minimum. Pull closed-won and closed-lost data, compare scores at MQL stage, and adjust weights that aren't predicting outcomes. Markets shift, your ICP evolves, and a model that worked in Q1 can be stale by Q3.
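One possible way to run that comparison, sketched in Python: compute the win rate for deals that carried each signal at MQL stage and flag signals that do no better than the overall win rate. The input shape (a list of `(signals, won)` pairs) and the "at or below baseline" cutoff are assumptions for illustration. With small samples, treat the output as a prompt for discussion with sales, not a verdict.

```python
from collections import Counter


def signal_win_rates(deals: list[tuple[set[str], bool]]) -> dict[str, float]:
    """Win rate among closed deals that carried each signal at MQL stage."""
    seen, won = Counter(), Counter()
    for signals, is_won in deals:
        for name in signals:
            seen[name] += 1
            if is_won:
                won[name] += 1
    return {name: won[name] / seen[name] for name in seen}


def weak_signals(deals: list[tuple[set[str], bool]]) -> list[str]:
    """Signals whose win rate is at or below the overall win rate."""
    if not deals:
        return []
    baseline = sum(1 for _, is_won in deals if is_won) / len(deals)
    rates = signal_win_rates(deals)
    return sorted(name for name, rate in rates.items() if rate <= baseline)


closed = [
    ({"pricing_page_visit", "email_open"}, True),
    ({"pricing_page_visit"}, True),
    ({"email_open"}, False),
    ({"email_open", "blog_visit"}, False),
]
print(weak_signals(closed))  # ['blog_visit', 'email_open']
```

Signals that land on the weak list are candidates for a lower weight next quarter.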

What if our lead data is outdated?

No model fixes wrong inputs. Enrich and verify records before they enter your scoring workflow - a 7-day refresh cycle keeps fit scores grounded in current roles and reachable contacts, not stale form fills from six months ago.

What's a good MQL-to-SQL conversion rate?

Strong inbound lead scoring programs convert 10-30% of MQLs to SQLs. Below 10% means your threshold is too low and you're flooding reps with unqualified leads. Above 30% usually signals you're being too restrictive and leaving winnable pipeline on the table.