How to Prioritize B2B Leads: The Practitioner's Scoring Playbook

Your SDR is calling "hot leads" right now. Half of them are interns who downloaded an ebook. Meanwhile, a VP of Engineering just visited your pricing page twice in 48 hours - and nobody noticed.

This is the lead prioritization problem in B2B, and it bleeds pipeline every single day. Salesforce's State of Sales report found that reps spend only about 30% of their time actually selling. The other 70% disappears into admin, qualifying noise, and chasing contacts who were never going to buy. A real scoring model wins much of that time back, and it's a big part of what separates top-performing teams from everyone else.

Let's build one.

What B2B Lead Prioritization Actually Requires

Lead prioritization comes down to three things: a scoring model that separates fit from intent, data clean enough to trust the scores, and a process where sales actually uses the output.

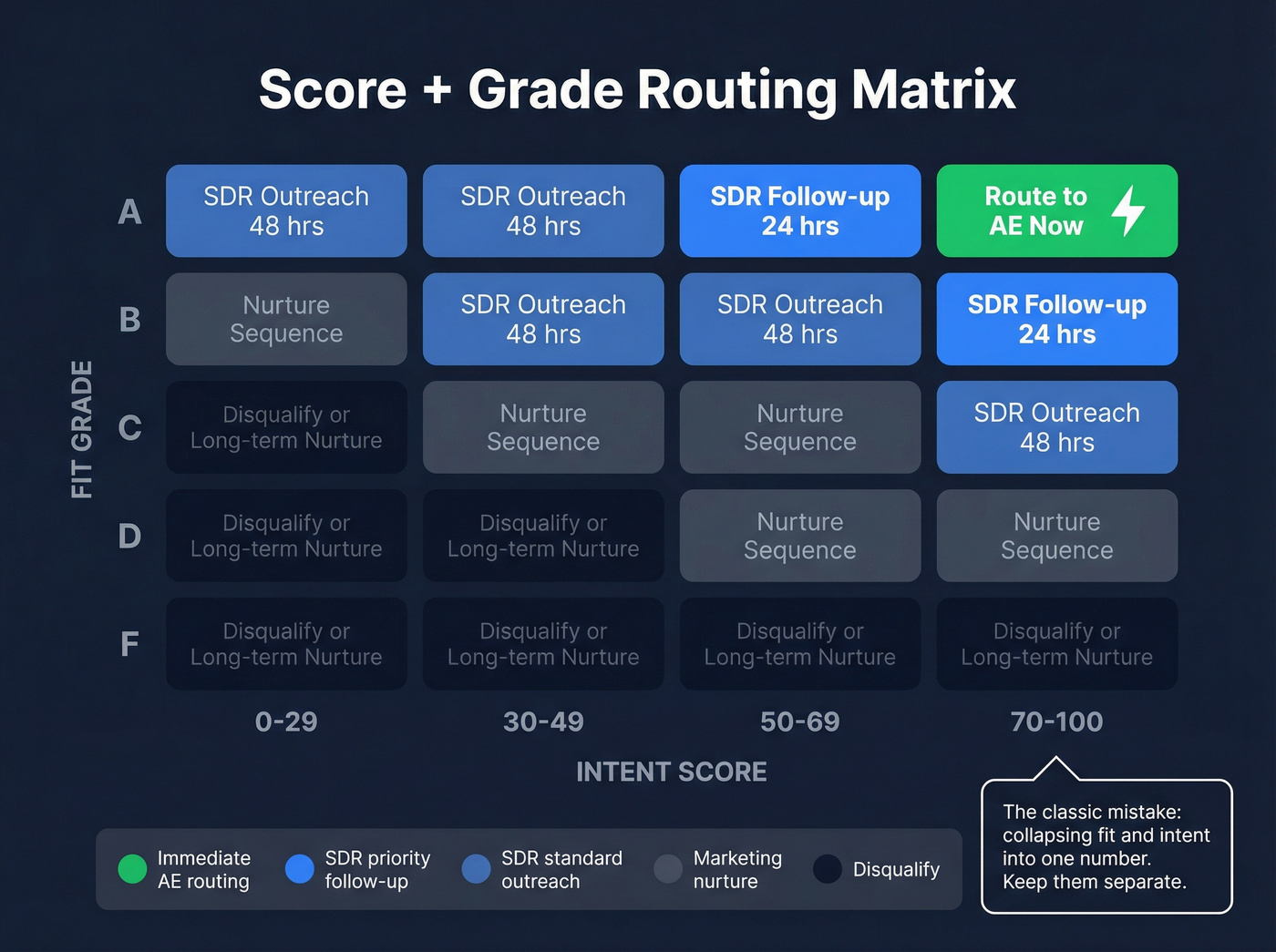

The concept that makes everything click is Score + Grade. A lead's grade (A through F) measures fit - will they ever buy? The score (0-100) measures intent - are they buying now? An A95 is your top prospect: perfect ICP, actively researching. A C25 is a nurture candidate: okay fit, low activity. This two-axis system prevents the classic mistake of routing a high-activity intern to your AE.

Start with just three signals: ICP fit grade, pricing page visit in the last 14 days, and multiple contacts engaged from the same account. Add complexity only after those three prove they drive pipeline.
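To make the three starter signals concrete, here is a minimal Python sketch that reports which of them fire for a lead and works the leads with the most signals first. The `StarterLead` fields are hypothetical, and two choices are assumptions rather than part of the playbook: A and B grades count as "ICP fit," and "multiple contacts" means two or more.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class StarterLead:
    # Hypothetical fields; map them to whatever your CRM actually stores.
    name: str
    fit_grade: str                        # "A" through "F"
    last_pricing_visit: Optional[date]    # None if they never visited
    engaged_contacts_at_account: int      # engaged contacts at the same account


def starter_signals(lead: StarterLead, today: date) -> List[str]:
    """Return which of the three starter signals fire for this lead."""
    fired = []
    # Assumption: A and B grades count as ICP fit.
    if lead.fit_grade in ("A", "B"):
        fired.append("icp_fit")
    # Pricing page visit in the last 14 days.
    if lead.last_pricing_visit is not None and (
        today - lead.last_pricing_visit <= timedelta(days=14)
    ):
        fired.append("recent_pricing_visit")
    # Assumption: "multiple contacts" means two or more.
    if lead.engaged_contacts_at_account >= 2:
        fired.append("multi_contact")
    return fired


today = date(2026, 3, 1)
leads = [
    StarterLead("vp_eng", "A", date(2026, 2, 27), 3),
    StarterLead("intern", "C", None, 1),
]
# Work the leads with the most signals first.
for lead in sorted(leads, key=lambda l: -len(starter_signals(l, today))):
    print(lead.name, starter_signals(lead, today))
```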

Why Sales Lead Prioritization Pays

The ROI isn't theoretical. Companies that invest in lead enrichment and scoring see measurable gains across every pipeline metric:

| Metric | Improvement |
|---|---|
| Conversion rates | +25% |
| Customer acquisition cost | -15% |
| Sales cycle length | -25% |
| Average deal size | +30% |

Enriched leads convert 20-30% better than raw ones - not because enrichment is magic, but because you're scoring against reality instead of guessing.

The productivity math is even more compelling. RevBlack's playbook documents a representative before/after: reps went from handling 40 leads per month (spending 25% of their time just qualifying) to 55 leads per month with only 10% on qualification. That's a 37% productivity gain without adding headcount. High-alignment teams hit MQL-to-SQL rates of 40-50%, compared to the typical 25-35% range. When scoring works, reps sell instead of sort.
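The before/after figures are easy to sanity-check. This short Python calculation uses only the numbers quoted above to show where the 37% comes from and how much rep time the lower qualification share frees up:

```python
def pct_change(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


leads_before, leads_after = 40, 55                 # leads handled per rep per month
qual_share_before, qual_share_after = 0.25, 0.10   # share of time spent qualifying

throughput_gain = pct_change(leads_before, leads_after)
freed_points = (qual_share_before - qual_share_after) * 100

print(f"Throughput gain: {throughput_gain:.1f}%")   # 37.5%, rounded to 37% in the text
print(f"Time freed from qualifying: {freed_points:.0f} percentage points")
```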

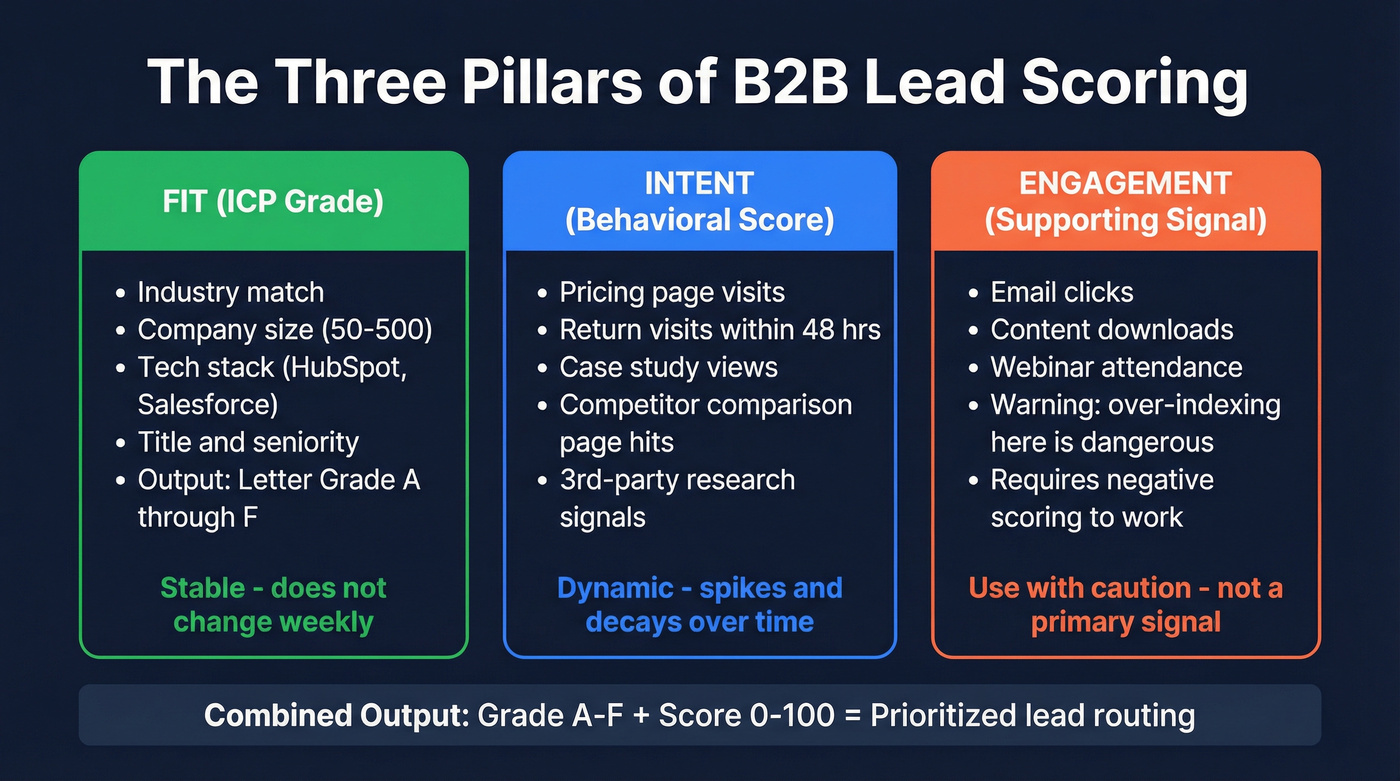

The Three Pillars: Fit, Intent, Engagement

Every effective B2B lead scoring model rests on three pillars. Miss one and the whole thing wobbles.

Fit (ICP Grade)

Picture two leads who both requested a demo this morning. One is a Director of Revenue Operations at a 200-person SaaS company running HubSpot and Salesforce. The other is a marketing coordinator at a 12-person nonprofit. Same action, wildly different value.

You assign a letter grade - A through F - based on firmographics like industry and company size, technographics like their current tool stack, and role-level data including title and seniority. An A-grade lead matches your ICP on every dimension. An F is a student, a competitor, or a company in a vertical you don't serve. Fit doesn't change week to week. It's the stable "will they ever buy?" filter.
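A minimal sketch of fit grading, assuming a grade is driven by how many ICP dimensions a lead matches. The ICP values, the "unserved verticals" set, and the match-count-to-grade mapping below are all hypothetical placeholders; the only rules taken from the text are that a full match is an A and that students, competitors, and unserved verticals are an F.

```python
from typing import Iterable

# Hypothetical ICP definition - replace with your own.
ICP_INDUSTRIES = {"SaaS"}
ICP_HEADCOUNT = range(50, 1001)            # 50-1,000 employees
ICP_TOOLS = {"HubSpot", "Salesforce"}      # any overlap counts as a match
ICP_SENIORITIES = {"Director", "VP", "C-level"}
UNSERVED_VERTICALS = {"Gambling"}          # placeholder for verticals you don't serve


def fit_grade(industry: str, headcount: int, tech_stack: Iterable[str],
              seniority: str, is_student: bool = False,
              is_competitor: bool = False) -> str:
    """Assign an A-F fit grade from firmographic, technographic, and role data."""
    # Hard disqualifiers go straight to F.
    if is_student or is_competitor or industry in UNSERVED_VERTICALS:
        return "F"
    matches = sum([
        industry in ICP_INDUSTRIES,
        headcount in ICP_HEADCOUNT,
        bool(ICP_TOOLS & set(tech_stack)),
        seniority in ICP_SENIORITIES,
    ])
    # Four of four dimensions is an A; each missing dimension steps the grade down.
    return {4: "A", 3: "B", 2: "C"}.get(matches, "D")


# The two demo requests from the example above.
print(fit_grade("SaaS", 200, ["HubSpot", "Salesforce"], "Director"))   # A
print(fit_grade("Nonprofit", 12, [], "Coordinator"))                   # D
```

Because the grade depends only on who the lead is, not what they did this week, it stays stable between enrichment refreshes.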

Intent (Behavioral Score)

Intent answers a different question: are they buying now? Pricing page visits, return visits within 48 hours, case study views, competitor comparison page hits - these are buying signals, not browsing signals. Third-party intent data showing what topics they're researching across the web adds another layer.

The intent score is dynamic. It spikes during active evaluation and decays when a prospect goes quiet. A lead with a B fit grade but a 90 intent score deserves immediate attention - they're in-market right now, and this is where scoring delivers the most value: surfacing accounts that are ready to buy before a competitor reaches them.

Engagement (The Dangerous One)

Stop treating engagement as a primary signal.

A marketing intern who downloads every whitepaper racks up engagement points fast - but they're not a buyer. The most common complaint we hear from sales teams is simple: "the scores are useless." It almost always traces back to models that over-index on content consumption.

Engagement matters as a supporting signal. And it requires negative scoring: personal email addresses (-20 points), email bounces (-25), and competitor domains (-50) should actively pull scores down. Without negative scoring, your "hottest" leads will always include noise.

Build Your Scoring Model

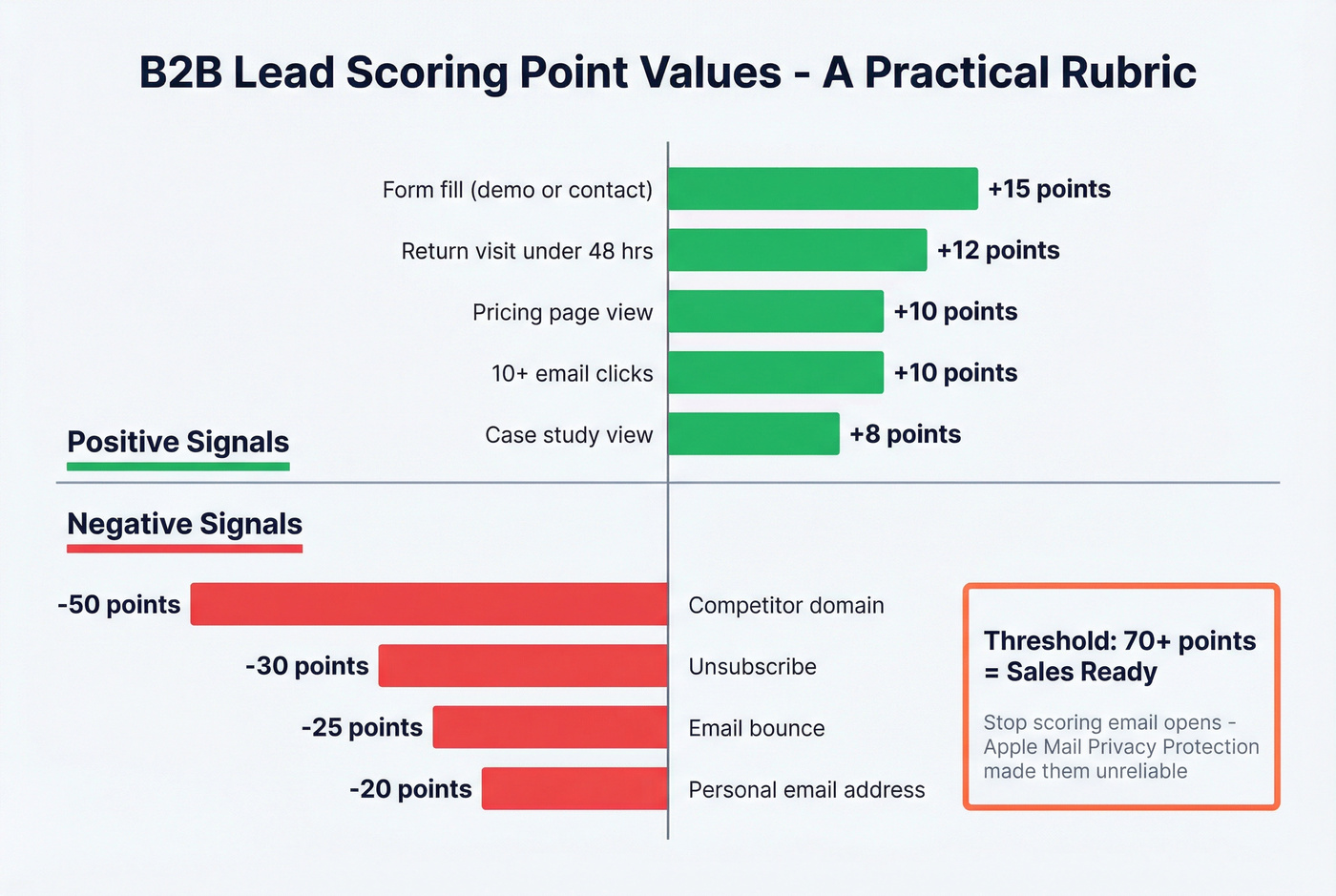

Point Values That Work

Here's a practical rubric that many mid-market B2B teams use as their starting point:

| Signal | Points | Why |
|---|---|---|
| Pricing page view | +10 | Direct buying intent |
| Form fill (demo/contact) | +15 | Hand-raise signal |
| Case study view | +8 | Evaluating outcomes |
| Return visit <48 hrs | +12 | Active research |
| 10+ email clicks | +10 | Sustained engagement |
| Email bounce | -25 | Bad data |
| Personal email | -20 | Likely not a buyer |
| Competitor domain | -50 | Disqualify immediately |

One critical note: stop scoring email opens. Apple's Mail Privacy Protection made open rates unreliable years ago. Shift your weight toward on-site behavior - pricing page views, form submissions, and return visits are signals you can actually trust.

Set your threshold at 70+ for sales-ready leads. Teams with well-calibrated models see win rates of 30-45% on top-scored leads, compared to 20-30% overall. Review monthly with sales feedback driving recalibration. If reps say the 70+ leads aren't converting, your point values are wrong - adjust them.
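Here is one way to apply the rubric and the 70-point threshold in Python. It is a sketch under stated assumptions: the event names are hypothetical, every occurrence of an event adds its points (so two pricing page views earn +20), and the total is clamped to the 0-100 scale used for intent scores. Email opens are deliberately absent from the table, so they contribute nothing.

```python
from typing import Iterable

# Point values from the rubric above; event names are hypothetical.
POINTS = {
    "pricing_page_view": 10,
    "form_fill": 15,            # demo/contact request
    "case_study_view": 8,
    "return_visit_48h": 12,
    "email_clicks_10plus": 10,
    "email_bounce": -25,
    "personal_email": -20,
    "competitor_domain": -50,
}
SALES_READY_THRESHOLD = 70


def intent_score(events: Iterable[str]) -> int:
    """Sum rubric points per event occurrence and clamp to the 0-100 scale."""
    raw = sum(POINTS.get(event, 0) for event in events)  # unknown events (e.g. opens) score 0
    return max(0, min(100, raw))


events = ["pricing_page_view", "pricing_page_view", "form_fill",
          "case_study_view", "case_study_view", "return_visit_48h",
          "email_clicks_10plus"]
score = intent_score(events)
print(score, score >= SALES_READY_THRESHOLD)        # 73 True
print(intent_score(events + ["personal_email"]))    # 53
```

Keeping the point values in one dictionary makes monthly recalibration a one-line change per signal.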

Score + Grade Combined

The real power comes from combining the numeric score with the letter grade:

- A + 70+ score - Route to AE immediately. High-fit, high-intent. Don't let it sit.

- B + 50+ score - SDR follow-up within 24 hours. Good fit, moderate intent, needs a conversation.

- C or below + under 30 - Nurture sequence. Let marketing warm them up.

Use this matrix if you want a system reps will actually trust. Don't collapse fit and intent into a single number - that's how you end up routing interns to your best AE.
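The matrix translates directly into a routing function. This Python sketch follows the three rules above and makes two assumptions the matrix leaves open: A-grade leads scoring 50-69 get the same SDR follow-up as B-grades, and any combination the matrix doesn't name falls back to nurture. The action labels are hypothetical.

```python
GRADE_RANK = {"A": 0, "B": 1, "C": 2, "D": 3, "F": 4}


def route(grade: str, score: int) -> str:
    """Map a (fit grade, intent score) pair to a next action."""
    rank = GRADE_RANK[grade]
    if rank == 0 and score >= 70:
        return "ae_immediately"        # high fit, high intent
    if rank <= 1 and score >= 50:
        return "sdr_within_24h"        # assumption: A-grades at 50-69 land here too
    # C-or-below under 30 goes to nurture per the matrix; as an assumption,
    # every combination the matrix doesn't name falls back to nurture as well.
    return "nurture"


for grade, score in [("A", 95), ("B", 60), ("C", 25), ("D", 90)]:
    print(f"{grade}{score}: {route(grade, score)}")
```

The D90 case is the high-activity intern: lots of intent points, poor fit, and it still lands in nurture rather than on an AE's desk.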

Negative Scoring and Decay

Any model that wants to avoid drowning in false positives needs these penalties: student or personal emails (-20), bounces (-25), competitor domains (-50), unsubscribes (-30), and the one most teams skip - time-based decay at -5 per week of inactivity.

Score decay causes the most damage when it's missing. Without it, a lead who was active six months ago still shows as "hot." Reps call, get ghosted, and lose trust in the entire scoring system. Decay forces your model to reflect reality.
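A minimal sketch of decay plus the unsubscribe penalty, assuming decay is computed from the last activity date each time the score is read, only full weeks count, and the score never drops below 0. The function and parameter names are hypothetical.

```python
from datetime import date

DECAY_PER_WEEK = 5
UNSUBSCRIBE_PENALTY = 30


def decayed_score(score: int, last_activity: date, today: date,
                  unsubscribed: bool = False) -> int:
    """Subtract 5 points per full week idle (and 30 for an unsubscribe), floored at 0."""
    weeks_inactive = max(0, (today - last_activity).days) // 7
    adjusted = score - DECAY_PER_WEEK * weeks_inactive
    if unsubscribed:
        adjusted -= UNSUBSCRIBE_PENALTY
    return max(0, adjusted)


today = date(2026, 3, 1)
print(decayed_score(80, date(2026, 2, 15), today))   # 2 weeks idle -> 70
print(decayed_score(80, date(2025, 9, 1), today))    # ~6 months idle -> 0
```

Computing decay at read time, rather than with a weekly batch job, means a lead's score is correct whenever a rep opens it.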

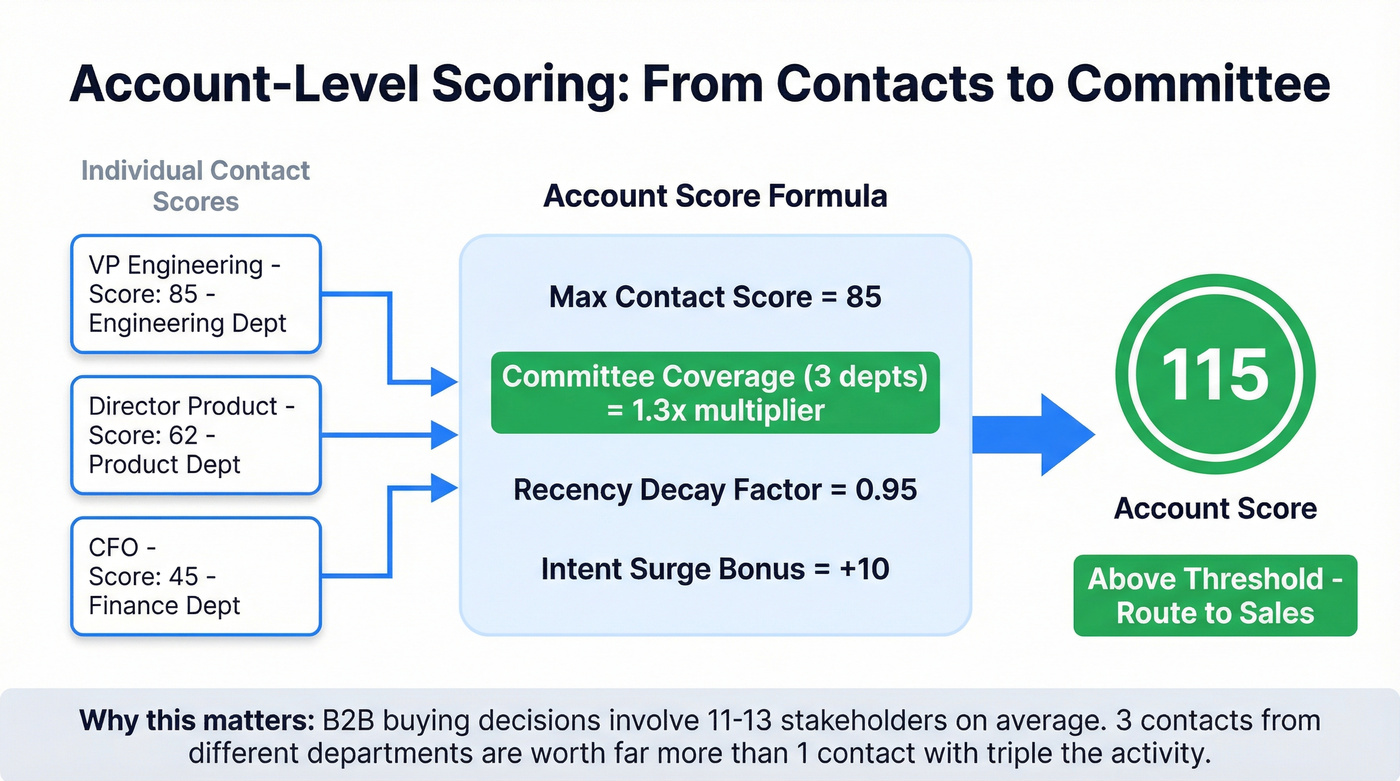

Account-Level Scoring

Individual lead scores tell half the story. B2B buying decisions involve 11-13 stakeholders on average, each consuming 4-5 pieces of information independently. If you're only scoring individual contacts, you're missing the committee.

This is the "consensus gap." You might have a champion with an A95 score, but if nobody else at the account is engaged, the deal stalls in committee. We've seen this kill deals that looked like sure things on paper.

A practical rollup formula:

Account Score = (Max Contact Score × Committee Coverage Multiplier × Recency Factor) + Intent Surge Bonus

A worked example: your top contact scores 85, three departments are engaged (1.3x multiplier), activity was recent (0.95 recency factor), and third-party intent shows a category surge (+10 bonus). The account score comes to roughly 115 - well above threshold. The committee coverage multiplier rewards breadth because three contacts from different departments are worth far more than one contact with triple the activity.
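The worked example can be reproduced in a few lines of Python, with the top contact's score multiplied by the coverage multiplier and the recency factor, then the surge bonus added. Only the 1.3x multiplier for three departments, the 0.95 recency factor, and the +10 bonus come from the example. The multipliers for one and two departments are hypothetical placeholders, and the function name is illustrative.

```python
from typing import Dict, List, Tuple

# Hypothetical multipliers: the example only gives 1.3x for three departments.
COVERAGE_MULTIPLIER: Dict[int, float] = {1: 1.0, 2: 1.15, 3: 1.3}


def account_score(contacts: List[Tuple[int, str]], recency_factor: float,
                  intent_surge: bool, surge_bonus: float = 10.0) -> float:
    """Roll (contact_score, department) pairs up to one account score."""
    max_contact = max(score for score, _ in contacts)
    departments = len({dept for _, dept in contacts})
    coverage = COVERAGE_MULTIPLIER[min(departments, 3)]   # 3+ departments share the top multiplier
    bonus = surge_bonus if intent_surge else 0.0
    return max_contact * coverage * recency_factor + bonus


committee = [(85, "Engineering"), (40, "Finance"), (35, "Security")]
lone_champion = [(85, "Engineering")]
print(round(account_score(committee, 0.95, True)))       # 115
print(round(account_score(lone_champion, 0.95, True)))   # 91
```

The same champion alone scores about 24 points lower, which is the consensus gap expressed as a number.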

Your fit grades are guesses without real firmographic and technographic data. Prospeo gives you 30+ filters - buyer intent, tech stack, headcount growth, funding - so every lead hits your scoring model with 50+ verified data points. 83% enrichment match rate means your A-grades are actually A-grades.

Stop scoring leads against incomplete data. Enrich them first.

Intent Data for Prioritization

Not all intent data is equal.

First-party intent comes from your own properties - website visits, email engagement, form fills. It's the highest quality signal, but it only captures people who've already found you. Third-party intent comes from external publisher networks. Bombora's Company Surge data, for instance, pulls from a cooperative of 5,000+ B2B publisher sites across 12,000+ topic categories, revealing which accounts are researching your category even if they've never visited your site.

Then there's the "dark funnel" - buying research happening in Slack communities, podcasts, peer conversations, and private forums that no tracking pixel captures. You can't score what you can't see, which is exactly why third-party intent data matters: it's the closest proxy for dark funnel activity.

When evaluating intent providers, focus on signal freshness (weekly vs. real-time), granularity (account-level vs. contact-level), and activation model (raw data feed vs. full orchestration). Pricing spans a massive range: basic visitor identification starts around $99/month, while enterprise ABM suites run $25K-$100K+/year.

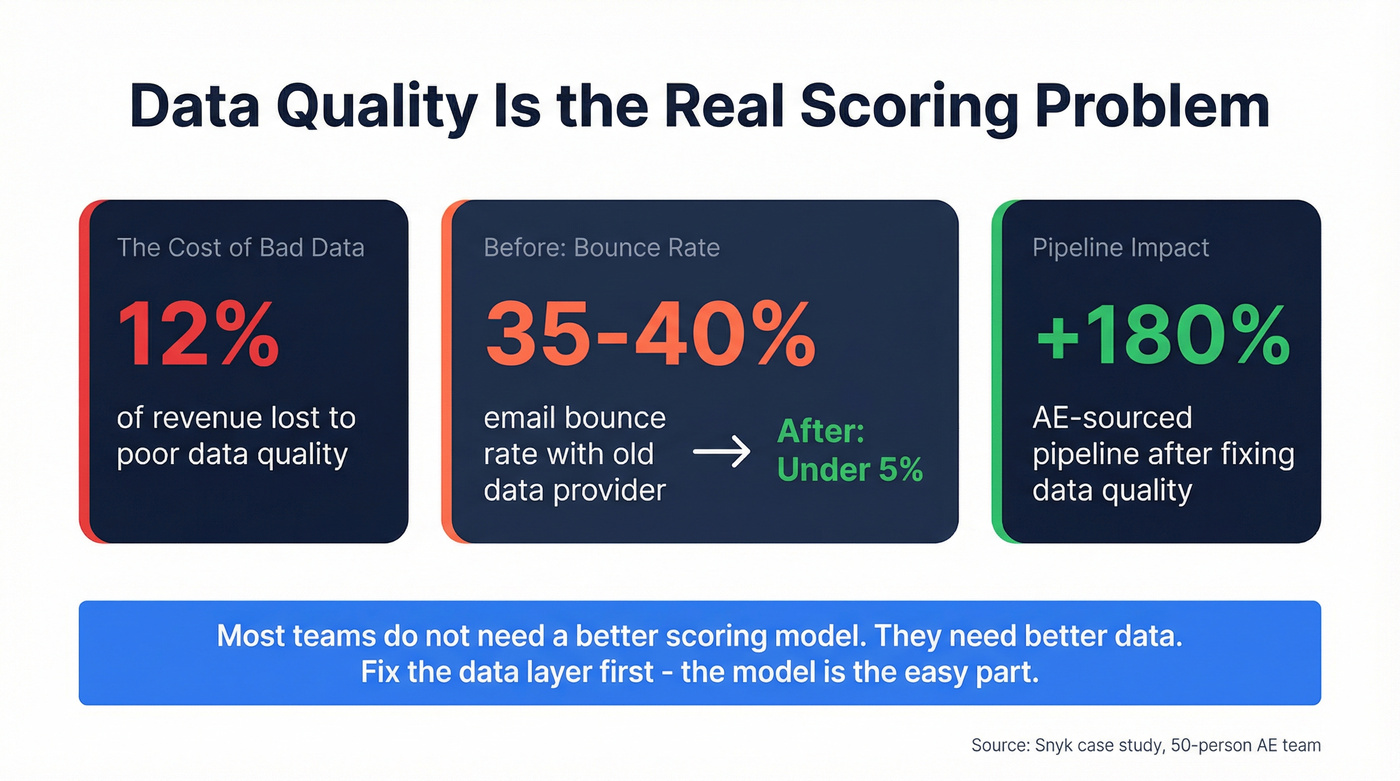

Data Quality: The Foundation Nobody Wants to Talk About

Here's the thing: your scoring model is only as good as the data feeding it. Poor data quality costs companies roughly 12% of revenue. If your CRM is full of stale records and bounced emails, even a perfect scoring rubric produces garbage outputs.

Most teams don't need a better scoring model. They need better data. I've watched teams spend months fine-tuning point values and thresholds while 20% of their email addresses bounce and 15% of their job titles are outdated. Fix the data layer first. The model is the easy part.
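Before tuning point values, it's worth measuring the two numbers this paragraph mentions: how many email addresses bounce and how many job titles are outdated. A minimal Python sketch, assuming hypothetical CRM fields and treating a title as stale if it hasn't been re-verified in 90 days (an arbitrary window, adjust to your refresh cycle):

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List


@dataclass
class ContactRecord:
    # Hypothetical CRM fields for illustration.
    email_bounced: bool
    title_verified_on: date


def data_health(records: List[ContactRecord], today: date,
                stale_after_days: int = 90) -> Dict[str, float]:
    """Share of records with bounced emails and with titles not re-verified recently."""
    total = len(records)
    bounced = sum(r.email_bounced for r in records)
    stale = sum((today - r.title_verified_on).days > stale_after_days for r in records)
    return {"bounce_rate": bounced / total, "stale_title_rate": stale / total}


today = date(2026, 3, 1)
records = [
    ContactRecord(email_bounced=i < 2,
                  title_verified_on=date(2025, 6, 1) if i < 3 else date(2026, 2, 1))
    for i in range(10)
]
print(data_health(records, today))   # {'bounce_rate': 0.2, 'stale_title_rate': 0.3}
```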

Snyk's 50-person AE team is the clearest proof point. After switching their data provider, bounce rates dropped from 35-40% to under 5%, and AE-sourced pipeline jumped 180%. That's not a scoring improvement - it's a data quality improvement that made their existing scoring model actually work. Tools like Prospeo that verify emails in real-time and refresh records on a 7-day cycle (vs. the six-week industry average) make this kind of difference immediately, because your scores are only as current as your underlying contact data.

Score decay punishes stale data, but most providers only refresh every 6 weeks. Prospeo refreshes every 7 days - so your grades and scores reflect reality, not last quarter's job titles. At $0.01 per email with 98% accuracy, bad-data penalties like bounces (-25 points) practically disappear.

Kill bounce penalties with data that refreshes weekly, not every six weeks.

Five Scoring Mistakes That Kill Pipeline

1. Scoring activity instead of buying behavior. An ebook download is content consumption, not purchase intent. A pricing page visit followed by a demo request - that's buying behavior. If your highest-scored leads are content consumers, your model measures the wrong thing.

2. Marketing-only ownership. When marketing builds scoring in isolation, sales doesn't trust the output. Scoring must be a revenue function with Sales, RevOps, and Marketing aligned on thresholds and feedback loops.

3. No negative scoring. Without penalties for bounced emails, personal addresses, and competitor domains, your "hot" list fills with noise. We've audited models where 30% of MQLs were students or competitors.

4. No score decay. A lead active six months ago isn't hot today. Without time-based decay, your CRM becomes a graveyard of zombie leads that reps waste time calling.

5. Ignoring data quality. The most sophisticated model in the world can't fix a CRM where 20% of emails bounce. Fix the data layer first, then build the model on top. The consensus on r/sales is the same - bad data is the number one reason scoring fails, not bad logic.

How SDRs and BDRs Should Prioritize Leads

The scoring model only matters if frontline reps actually use it.

SDR prioritization should follow a simple daily workflow: start with A-grade leads scoring 70+, then work B-grade leads with rising intent signals, and leave everything else to automated nurture sequences. For teams that run outbound, BDR prioritization works best when it combines third-party intent surges with ICP fit - a target account researching your category is worth ten cold accounts that match on firmographics alone.
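The daily SDR workflow can be sketched as a queue builder. In this Python sketch, "rising intent" is assumed to mean the score is higher than it was a week ago, so each lead carries a hypothetical `score_last_week` snapshot. Within each tier, A-grades are ordered by score and B-grades by how fast their score is climbing. Lead names are assumed to be unique.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class QueuedLead:
    name: str
    grade: str
    score: int
    score_last_week: int   # hypothetical snapshot used to detect rising intent


def daily_queue(leads: List[QueuedLead]) -> Tuple[List[str], List[str]]:
    """Return (call list in priority order, leads left to automated nurture)."""
    tier1 = sorted((lead for lead in leads if lead.grade == "A" and lead.score >= 70),
                   key=lambda lead: lead.score, reverse=True)
    tier2 = sorted((lead for lead in leads
                    if lead.grade == "B" and lead.score > lead.score_last_week),
                   key=lambda lead: lead.score - lead.score_last_week, reverse=True)
    call_list = [lead.name for lead in tier1 + tier2]
    called = set(call_list)
    nurture = [lead.name for lead in leads if lead.name not in called]
    return call_list, nurture


sample = [
    QueuedLead("acme_vp", "A", 92, 80),
    QueuedLead("globex_dir", "A", 74, 74),
    QueuedLead("initech_mgr", "B", 55, 30),
    QueuedLead("umbrella_ops", "B", 40, 45),
    QueuedLead("intern", "D", 88, 60),
]
calls, nurture = daily_queue(sample)
print(calls)     # ['acme_vp', 'globex_dir', 'initech_mgr']
print(nurture)   # ['umbrella_ops', 'intern']
```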

The key for both roles is the same: trust the model, work the top of the list, and feed conversion data back to RevOps so the scores keep improving. When budget is tight, even a simple spreadsheet-based triage beats no prioritization at all.

Tools and Costs at a Glance

The predictive lead scoring market hit $5.6B in 2025 and continues growing through 2026. Here's what the options look like:

| Tool | Category | Pricing | Best For | Setup |
|---|---|---|---|---|
| Prospeo | Data + intent | ~$0.01/email, free tier | Data + intent layer | Same day |
| HubSpot | CRM + scoring | Free-$1,000+/mo | SMBs starting out | 1-2 weeks |
| ActiveCampaign | Marketing automation | From ~$49/mo | Small teams | 2-3 weeks |
| Bombora | 3rd-party intent | $10K+/yr (custom) | Enterprise intent | 4-8 weeks |
| 6sense (4.3/5 on G2) | ABM + predictive | $25K-$100K+/yr | Enterprise ABM | 3-6 months |
| Demandbase | ABM + ads | $30K-$100K+/yr | Enterprise ABM + ads | 3-6 months |
| Salesforce Pardot (now Marketing Cloud Account Engagement) | Marketing automation | $1,250-$4,000/mo | Salesforce teams | 3-6 months |
| MadKudu | Predictive scoring | $1,500-$5,000/mo | Product-led growth | 4-6 weeks |

A typical 15-person team spends $1,200-$2,500/month. AI-powered scoring delivers 40-60% more accurate prioritization compared to rules-based models - but only if you have the data to feed it.

Most teams need two layers: a CRM with scoring capabilities and a data layer feeding it clean, enriched records. If your average deal size is under $10K, you almost certainly don't need a six-figure ABM platform. Start with HubSpot's free CRM and a solid data provider, then scale tooling as your model proves itself.

Predictive and AI Scoring

A 2026 study published in Frontiers in Artificial Intelligence tested 15 classification algorithms against 23,000+ real CRM lead records spanning four years. The Gradient Boosting Classifier outperformed everything else - including logistic regression and random forests - on both accuracy and ROC AUC.

The most interesting finding wasn't which algorithm won. It was which features mattered most. Lead source and lead status were the strongest predictors of conversion, not engagement signals like email clicks or content downloads. That aligns with what practitioners have been saying for years: fit and intent beat activity every time.

Real talk: predictive scoring isn't for everyone. You need at least 12 months of clean CRM data before any ML model has enough signal to outperform a well-tuned rules-based system. HubSpot, Salesforce Einstein, and 6sense all offer predictive scoring out of the box, but garbage-in-garbage-out applies doubly here. If your CRM data is messy, fix that first - no algorithm compensates for a 30% bounce rate.

FAQ

What's the difference between lead scoring and lead prioritization?

Lead scoring assigns numeric values to individual actions and attributes - a pricing page visit gets +10, a form fill gets +15. Lead prioritization is the broader strategy that uses scores, grades, intent data, and account-level signals to decide which leads get attention first. Scoring is one input into prioritization, not the whole system.

How many scoring signals should I start with?

Three: ICP fit grade, pricing page visit in the last 14 days, and multiple contacts engaged from the same account. Only 44% of organizations use lead scoring at all, and overbuilding from day one is one of the most common reasons scoring projects stall. Prove those three drive pipeline before adding complexity.

Can I do lead prioritization without expensive tools?

Yes. HubSpot's free CRM includes basic scoring. Pair it with a data provider that offers verified contacts and intent signals - Prospeo's free tier gives you 75 email credits/month plus access to intent data across 15,000 topics. You don't need a $50K platform. You need clean data and three good signals.

How does B2B lead prioritization differ from B2C?

B2B involves longer sales cycles (3-9 months), buying committees of 11-13 stakeholders, and account-level scoring - none of which apply in most B2C contexts. You need to track multiple contacts across a single account and weight firmographic fit heavily. B2C scoring relies on individual behavioral signals and moves much faster from lead to purchase.