The MQL Funnel: How It Actually Works and How to Fix Yours

Your VP of Sales just told the CEO that marketing's leads are garbage. Marketing fires back with a dashboard showing 400 MQLs last month. Sales says they called 50 and none were real. Sound familiar?

Most MQL funnel guides hand you a definition and a BANT acronym and call it a day. This one gives you the scoring model, the benchmarks, and the handoff mechanics that separate functional funnels from the ones that breed resentment between your two revenue teams.

The problem isn't the leads or the reps - it's the funnel between them. Three things fix it:

- A shared MQL definition between marketing and sales - not just "downloaded a PDF," but a scoring model both teams built together and agreed on.

- A lead scoring model with negative scoring and a 60-80 point MQL threshold - so you're grading fit and intent, not just activity.

- A 5-minute response SLA for every MQL handoff - because 42 hours is the average response time, and by then your prospect has talked to two competitors.

What Is an MQL Funnel?

An MQL (Marketing Qualified Lead) funnel is the staged process that moves a raw lead from first touch to closed deal, with explicit gates where marketing and sales evaluate readiness.

The concept traces back to SiriusDecisions' Demand Waterfall, launched in 2002 and revised significantly in 2012. That revision broke the monolithic "MQL" into sub-stages - AQL (Automation Qualified Lead), TAL (Teleprospecting Accepted Lead), TQL (Teleprospecting Qualified Lead) - because even more than a decade ago, practitioners knew a single bucket wasn't enough. Forrester later acquired SiriusDecisions and pushed the framework broadly.

Here's the thing: MQL was never meant to be one stage. It was designed as a series of gates. Most teams collapsed it into a single checkbox, and that's where the dysfunction started.

Funnel Stages and 2026 Benchmarks

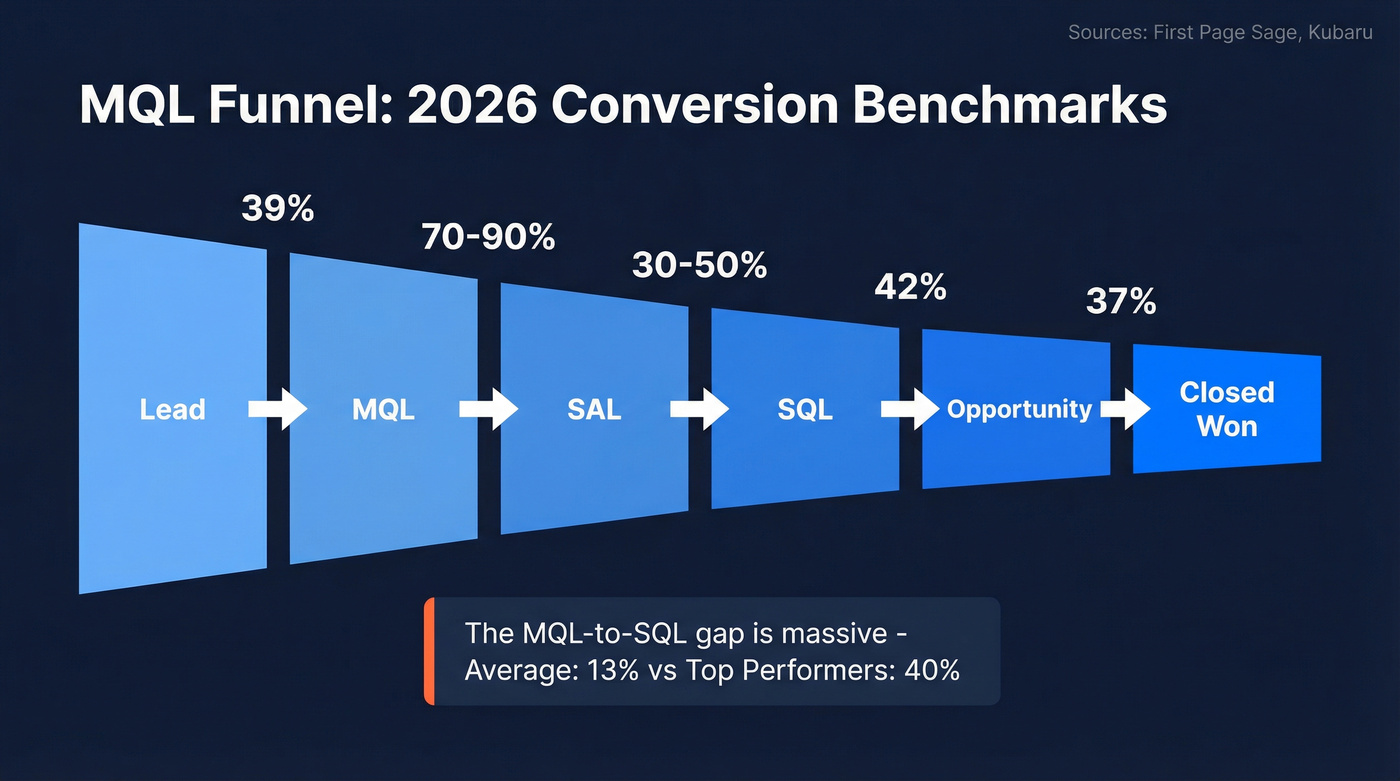

Each stage below has practical benchmarks drawn from two sources: First Page Sage for B2B SaaS and Kubaru for general B2B ranges. The MQL-to-SQL performance spread comes from a separate 2026 handoff and automation benchmark analysis.

| Stage | Definition | B2B SaaS | General B2B |
|---|---|---|---|
| Lead → MQL | Meets scoring threshold | 39% | 20-40% |
| MQL → SAL | Sales accepts | - | 70-90% |
| SAL → SQL | Sales validates | - | 30-50% |
| MQL → SQL | Combined (no SAL stage) | 38% | - |
| SQL → Opp | Creates pipeline | 42% | - |
| SQL → Closed | Becomes customer | 37% | 20-30% |

For MQL-to-SQL specifically, the widely-cited performance spread is ~13% average and ~40% for top performers.

Dashes indicate the source doesn't publish a benchmark for that transition.

Two things jump out. First, the MQL-to-SQL gap is enormous: top performers convert roughly three times the average. If you're sitting near the 13% average rather than approaching 40%, your scoring model or MQL definition needs tightening. Second, industry variance is wide enough that "average benchmarks" are misleading without segmentation. A cybersecurity company and an HR tech company don't measure against the same numbers.
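To see what that spread means downstream, here is a rough back-of-the-envelope sketch. It multiplies 1,000 leads through the B2B SaaS stage rates from the table, swapping in the 13% and 40% MQL-to-SQL figures. Mixing rates from different sources like this is an assumption made purely for illustration, and the function name is hypothetical.

```python
# Stage rates taken from the B2B SaaS column of the table above.
LEAD_TO_MQL = 0.39
SQL_TO_CLOSED = 0.37


def customers_from_leads(leads: int, mql_to_sql: float) -> float:
    """Multiply a lead count through Lead -> MQL -> SQL -> Closed."""
    mqls = leads * LEAD_TO_MQL
    sqls = mqls * mql_to_sql
    return sqls * SQL_TO_CLOSED


# Same lead volume, two different MQL-to-SQL rates.
average = customers_from_leads(1000, 0.13)
top = customers_from_leads(1000, 0.40)

print(f"average MQL-to-SQL: {average:.1f} customers per 1,000 leads")
print(f"top-performer MQL-to-SQL: {top:.1f} customers per 1,000 leads")
```

Because every other stage is held constant, the gap in closed customers comes entirely from the handoff stage, which is why that one rate deserves the most scrutiny.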

If your MQL-to-SQL rate is above 80%, your bar is probably too high and you're leaving pipeline on the table. We've seen this pattern repeatedly: marketing tightens criteria to avoid sales complaints, and suddenly real buyers get filtered out before a rep ever sees them. Most B2B SaaS teams operate best in a healthy middle ground where sales trusts the leads but marketing isn't over-filtering.

How to Build a Lead Scoring Model

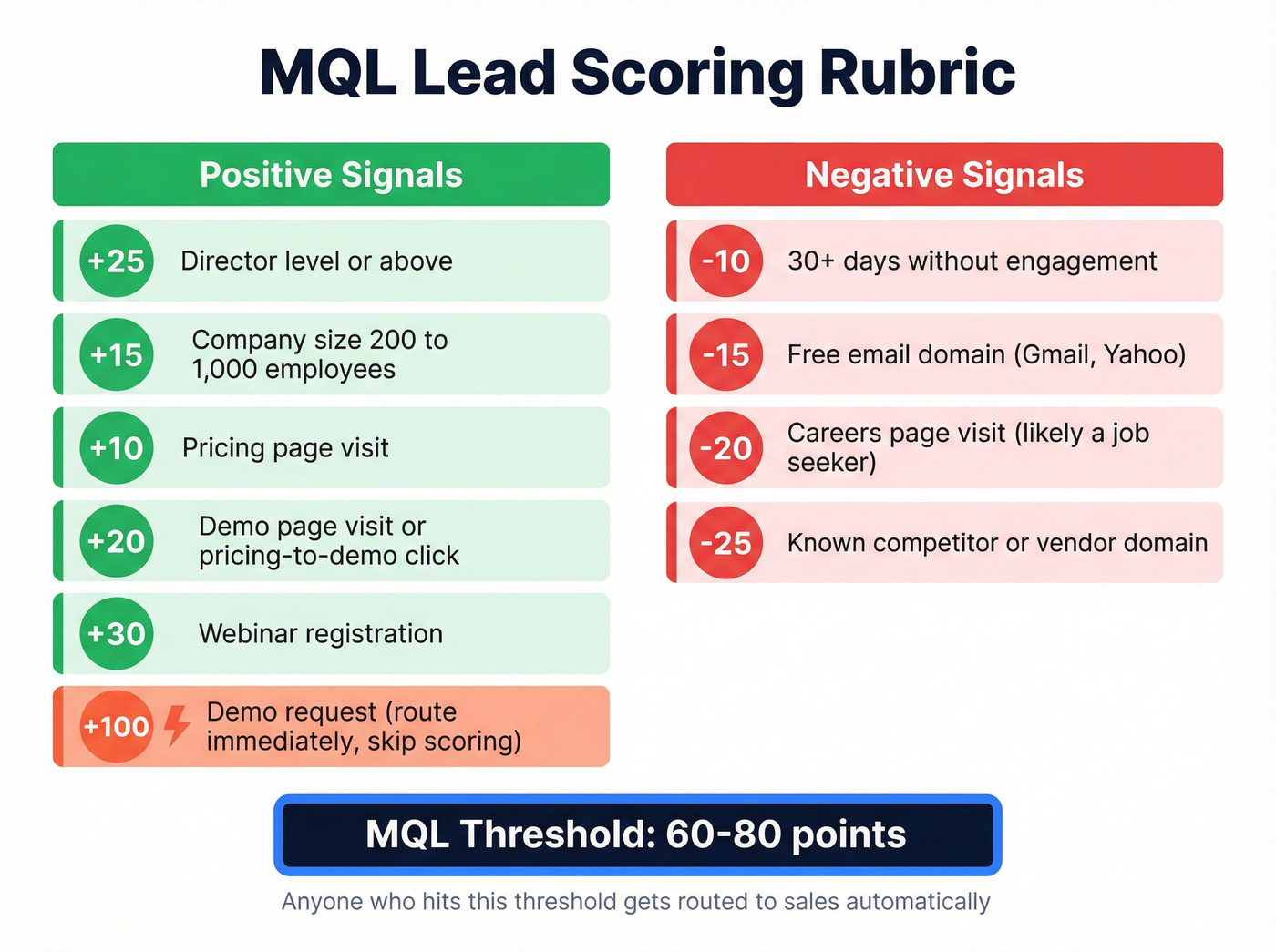

Your scoring model is the engine of the entire qualification process. Here's a rubric that works for most B2B SaaS teams.

Positive signals:

- +25 - Director-level or above

- +15 - Company size 200-1,000 employees (adjust to your ICP)

- +10 - Pricing page visit

- +20 - Demo page visit or pricing-to-demo click

- +30 - Webinar registration

- +100 - Demo request form submit (route immediately, skip scoring)

Negative signals:

- -10 - 30+ days without engagement

- -15 - Free email domain (Gmail, Yahoo) if you sell to enterprise

- -20 - Careers page visit (likely a job seeker, not a buyer)

- -25 - Known competitor or vendor domain

Set your MQL threshold between 60 and 80 points. Anyone who hits it gets routed to sales. The +100 demo request is the critical exception - a hand-raiser should never wait for a scoring formula to catch up. Route immediately and let humans qualify.
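The rubric above can be sketched in a few lines of code. This is a minimal illustration, not a production scoring engine: the point values come from the lists above, while the signal names, the `route` function, and the choice of 60 as the threshold are assumptions made for the example.

```python
MQL_THRESHOLD = 60  # anywhere in the 60-80 range; 60 is an arbitrary pick

# Point values from the rubric above; the signal names are hypothetical.
POINTS = {
    "director_or_above": 25,
    "company_size_in_icp": 15,
    "pricing_page_visit": 10,
    "demo_page_visit": 20,
    "webinar_registration": 30,
    "inactive_30_days": -10,
    "free_email_domain": -15,
    "careers_page_visit": -20,
    "competitor_domain": -25,
}


def score_lead(signals: set[str]) -> int:
    """Sum positive and negative points for every signal on the lead."""
    return sum(POINTS[s] for s in signals)


def route(signals: set[str], demo_requested: bool = False) -> str:
    """Demo requests bypass scoring; everyone else must clear the threshold."""
    if demo_requested:
        return "route_to_sales"
    if score_lead(signals) >= MQL_THRESHOLD:
        return "route_to_sales"
    return "keep_nurturing"


fit = {"director_or_above", "company_size_in_icp", "demo_page_visit"}
print(score_lead(fit), route(fit))
print(route(fit | {"careers_page_visit"}))
print(route(set(), demo_requested=True))
```

Note how a single negative signal (the careers page visit) pulls an otherwise qualifying lead back under the threshold, while the demo request routes even with no score at all.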

That negative scoring block is where most teams fall short. Without decay and exclusion filters, you end up with "MQLs" who downloaded a whitepaper 90 days ago and haven't been back since. You also end up routing students, job seekers, consultants, and competitors to your SDR team, which is the fastest way to make sales stop trusting marketing leads entirely. The consensus on r/b2bmarketing is blunt: if your SDRs are calling college students and consultants, they'll stop calling anyone marketing sends them.

Teams that tighten their scoring filters - raising the seniority threshold, adding negative scoring for inactivity and non-buyers - see a 13% jump in MQL-to-meeting rate almost immediately. Structured scoring with automated routing has driven 40% conversion rate increases in documented cases.

But your scoring model is only as accurate as your contact data. If your bounce rate is high, you're scoring dead addresses and sales loses trust in the entire system. We ran into this ourselves while testing enrichment workflows - one client's MQL volume looked healthy until we realized 30% of those contacts had invalid emails. Prospeo's 98% email accuracy and 7-day data refresh cycle fixed that gap, returning 50+ data points per enrichment so the scoring model graded real people with complete profiles, not outdated records.

The MQL-to-SQL Handoff: Where Funnels Die

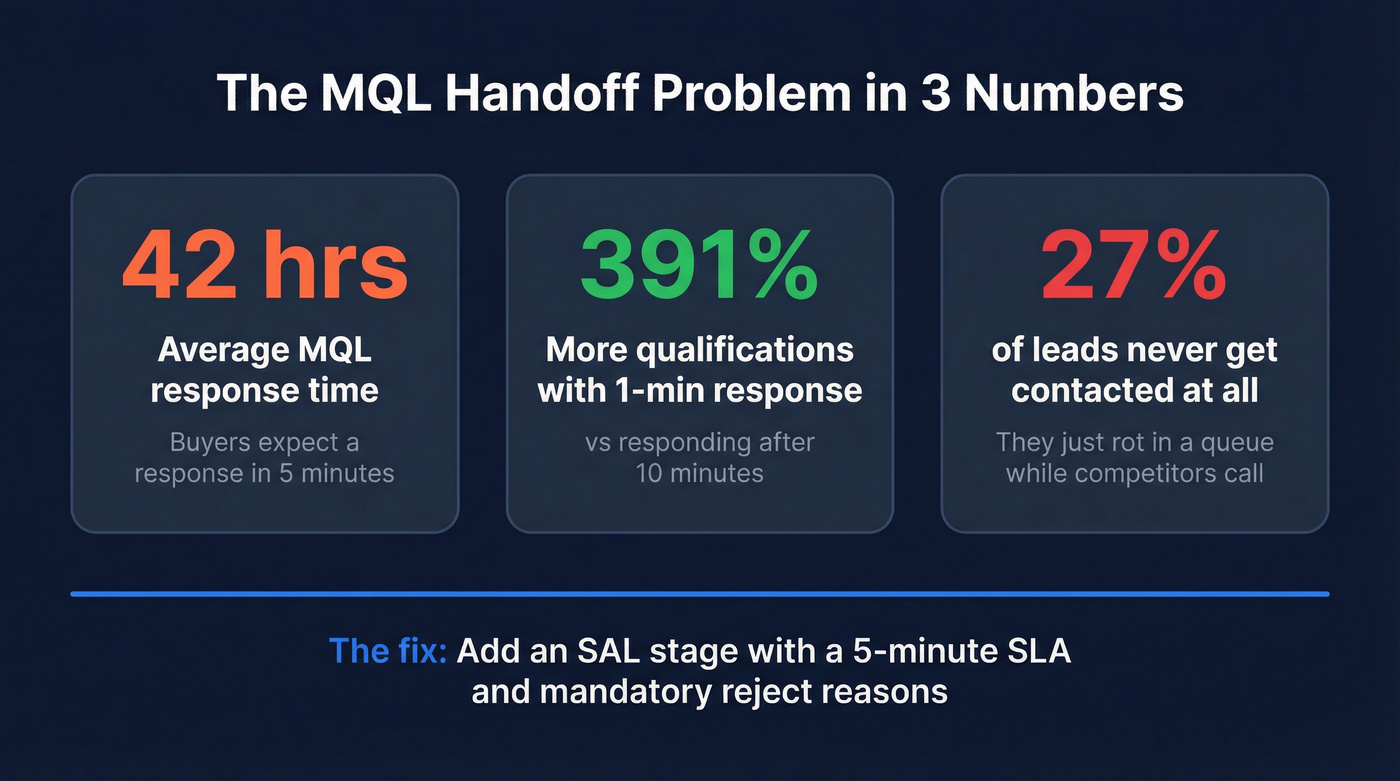

42 hours. That's how long the average MQL waits for a call.

Buyers expect a response within 5 minutes. Responding within 1 minute versus 10 minutes increases qualification rate by 391%. And 27% of leads never get contacted at all - they just rot in a queue while your competitors pick up the phone.

The SAL (Sales Accepted Lead) stage is the most underused stage in B2B. Add it. When a lead hits MQL threshold, it moves to SAL - meaning a rep has formally reviewed and accepted it, committing to follow up within your SLA. If they reject it, they have to say why. That rejection data is the feedback loop most teams are missing, and it's how marketing actually improves lead quality over time.
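One way to picture the SAL gate is as a small record that tracks acceptance, forces a reason on rejection, and checks the SLA. The sketch below is an assumption-heavy illustration: the `Handoff` class and its field names are hypothetical, and it treats the rep's review timestamp as the event measured against the 5-minute SLA.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

RESPONSE_SLA = timedelta(minutes=5)  # the 5-minute SLA discussed above


@dataclass
class Handoff:
    lead_id: str
    became_mql_at: datetime
    status: str = "pending"  # pending | accepted | rejected
    reviewed_at: Optional[datetime] = None
    reject_reason: Optional[str] = None

    def accept(self, at: datetime) -> None:
        """Rep formally accepts the lead (MQL -> SAL)."""
        self.status, self.reviewed_at = "accepted", at

    def reject(self, at: datetime, reason: str) -> None:
        """Rep rejects the lead; a reason is mandatory for the feedback loop."""
        if not reason.strip():
            raise ValueError("a rejection must include a reason")
        self.status, self.reviewed_at, self.reject_reason = "rejected", at, reason

    def breached_sla(self, now: datetime) -> bool:
        """True if the review (or lack of one so far) exceeds the SLA."""
        end = self.reviewed_at or now
        return end - self.became_mql_at > RESPONSE_SLA


t0 = datetime(2026, 1, 5, 9, 0)
fast = Handoff("lead-1", t0)
fast.accept(t0 + timedelta(minutes=3))
stale = Handoff("lead-2", t0)
print(fast.breached_sla(t0 + timedelta(hours=1)))
print(stale.breached_sla(t0 + timedelta(minutes=10)))
```

Making the rejection reason a hard requirement is the design choice that matters here: it turns every rejected lead into data marketing can act on.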

CRM Implementation Tip

In most CRMs, use Lifecycle Stage for the cross-functional journey (Lead → MQL → SQL → Opportunity → Customer) and Lead Status as the sales-facing property (New, Attempted to Contact, Connected, Open Deal, Unqualified). In HubSpot specifically, lifecycle stages only move forward by default. To move a lead backward, you have to clear the existing value first - which matters when you're building automation around disqualification.
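The forward-only behavior is easier to reason about with a toy model. The sketch below is not HubSpot's API; it is a plain-Python stand-in, with hypothetical function names, that mimics the "clear first, then set" pattern described above so you can see why disqualification automation needs two steps.

```python
# Ordered lifecycle stages from the paragraph above.
STAGES = ["Lead", "MQL", "SQL", "Opportunity", "Customer"]


def set_stage(record: dict, new_stage: str) -> bool:
    """Apply new_stage only if it moves forward; return whether it applied."""
    current = record.get("lifecycle_stage")
    if current is not None and STAGES.index(new_stage) <= STAGES.index(current):
        return False  # same-stage and backward moves are ignored
    record["lifecycle_stage"] = new_stage
    return True


def move_backward(record: dict, new_stage: str) -> None:
    """Clear the existing value first, then set the earlier stage."""
    record["lifecycle_stage"] = None
    set_stage(record, new_stage)


contact = {"lifecycle_stage": "SQL"}
print(set_stage(contact, "MQL"), contact["lifecycle_stage"])
move_backward(contact, "MQL")
print(contact["lifecycle_stage"])
```

A disqualification workflow that only does the second step (set the earlier stage) silently fails in a forward-only system, which is the trap the tip warns about.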

Five Mistakes That Kill Conversion

The recurring questions on r/b2bmarketing and similar communities line up with what we see in practice. Five mistakes kill most funnels:

1. Setting the MQL bar too low to inflate numbers. Marketing hits their MQL target, sales ignores the leads, everyone's unhappy. This is the most common failure mode and the easiest to diagnose - just look at your MQL-to-SAL acceptance rate. Below 70%? Your bar is too low.

2. Sales ignoring MQLs because they don't trust quality. This is a symptom, not a cause. Fix the definition and the trust follows. But you have to earn it back with a quarter of clean leads, not a slide deck promising things will be different.

3. No feedback loop between sales and marketing. Without SAL accept/reject data, marketing can't improve. This is why the SAL stage matters - it forces the conversation.

4. Over-weighting surface engagement. Page views and email opens aren't intent. Pricing page visits and demo requests are. A prospect who opened six nurture emails but never visited your site isn't more qualified than someone who hit your pricing page once.

5. Never updating criteria as your ICP evolves. Definition decay is real. The scoring model you built 18 months ago reflects an ICP that no longer matches your best customers. Revisit scoring quarterly, and use closed-won analysis to validate which signals actually predicted revenue. Skip this step and you'll watch conversion rates silently degrade for months before anyone notices.
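As a rough sketch of the closed-won analysis in mistake 5, you can compare the win rate of deals that carried a signal against those that didn't. The deal records below are invented for illustration, and `win_rate_lift` is a hypothetical helper, not a standard metric from any CRM.

```python
from typing import Optional


def win_rate_lift(deals: list[dict], signal: str) -> Optional[float]:
    """Win rate of deals with the signal minus win rate of deals without it."""
    with_sig = [d["won"] for d in deals if signal in d["signals"]]
    without = [d["won"] for d in deals if signal not in d["signals"]]
    if not with_sig or not without:
        return None  # not enough data on one side to compare
    return sum(with_sig) / len(with_sig) - sum(without) / len(without)


# Made-up closed deals: which signals each had, and whether it was won.
deals = [
    {"signals": {"pricing_page_visit"}, "won": True},
    {"signals": {"pricing_page_visit", "email_open"}, "won": True},
    {"signals": {"email_open"}, "won": False},
    {"signals": {"email_open"}, "won": False},
    {"signals": {"pricing_page_visit"}, "won": False},
    {"signals": set(), "won": False},
]

for signal in ("pricing_page_visit", "email_open"):
    print(signal, win_rate_lift(deals, signal))
```

A signal with lift near zero is a candidate for a lower weight at the next quarterly review; a signal with strong lift may deserve more points than it currently gets. With real data you would also want enough deals per signal for the difference to be meaningful.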

Is the MQL Dead?

The "MQL is dead" crowd is half right. The B2B Playbook calls MQL "fantasy intent" - a form fill isn't buying intent, and building your revenue engine on it creates the exact sales/marketing trust issues everyone complains about. They advocate replacing MQL with account intelligence: contract renewal dates, current provider, buying committee structure.

On the other side, AI SDR vendors argue the problem isn't MQL itself - it's the human bottleneck. Their data shows 130% more meetings booked after implementing an AI SDR, largely because 60% of leads previously got no follow-up at all. A DemandGen Report analysis from early 2026 framed it starkly: 98% of website traffic never converts, and static forms capture too little to qualify well.

Let's be honest about what this means in practice. MQL as a board-level KPI is dead. Stop putting MQL volume on the slide deck. But MQL as an internal operational signal - a leading indicator that tells marketing and sales "this account needs attention now" - is alive and necessary.

Board metrics should be pipeline and revenue. Team metrics should include MQL-to-SQL conversion, speed-to-lead, and stage velocity. The median B2B sales cycle runs about 84 days, and you need intermediate signals to know if things are breaking before quarter-end. When these metrics are tracked at the team level rather than the board level, they give reps and marketers the operational clarity they need without inflating vanity numbers.

If you want a cleaner way to operationalize this, start by standardizing your funnel metrics and then pressure-test your sales conversion rate by segment (channel, persona, company size) so you can see exactly where the handoff breaks.
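A segment breakdown like that can be sketched as a simple group-by. The records and field names below (`channel`, `became_sql`) are hypothetical; in practice they would come from a CRM export.

```python
from collections import defaultdict


def mql_to_sql_by_segment(mqls: list[dict], key: str) -> dict[str, float]:
    """Return the MQL-to-SQL conversion rate for each value of `key`."""
    totals: dict[str, int] = defaultdict(int)
    converted: dict[str, int] = defaultdict(int)
    for mql in mqls:
        totals[mql[key]] += 1
        converted[mql[key]] += int(mql["became_sql"])
    return {segment: converted[segment] / totals[segment] for segment in totals}


# Made-up MQL records for illustration.
mqls = [
    {"channel": "paid_social", "became_sql": False},
    {"channel": "paid_social", "became_sql": False},
    {"channel": "paid_social", "became_sql": True},
    {"channel": "paid_social", "became_sql": False},
    {"channel": "webinar", "became_sql": True},
    {"channel": "webinar", "became_sql": False},
]

rates = mql_to_sql_by_segment(mqls, "channel")
for channel, rate in rates.items():
    print(f"{channel}: {rate:.0%}")
```

Passing a different `key` (persona, company size band) reuses the same function, so one export can be cut several ways before deciding where the handoff is actually breaking.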

MQL Funnel FAQ

What's a good MQL-to-SQL conversion rate?

The average is about 13%; top performers hit about 40%. In B2B SaaS specifically, First Page Sage reports 38%. If you're below 20%, start with negative scoring and seniority filters - those two changes alone close the gap fastest.

What's the difference between MQL, SAL, and SQL?

An MQL meets marketing's scoring criteria. A SAL is an MQL that sales has formally accepted, committing to follow up within a defined SLA. An SQL is a SAL that sales has validated as a real opportunity via BANT or MEDDIC qualification. The SAL stage creates the accountability most teams are missing.

How does data quality affect funnel performance?

Bad contact data means your scoring model grades unreachable people and sales wastes time on dead-end outreach - the fastest way to destroy trust in marketing leads. Keeping your database accurate with verified emails and a short refresh cycle ensures your funnel operates on real, reachable prospects instead of outdated records that inflate MQL counts without producing pipeline.

How often should you revisit your MQL definition?

Revisit scoring criteria quarterly using closed-won analysis. Compare the firmographic and behavioral traits of deals that actually closed against your current scoring weights. Most teams find their ICP shifts meaningfully every 6-12 months, and stale criteria silently degrade conversion rates until someone finally asks why pipeline dried up.