Revenue Attribution: What It Is, Why It Breaks, and How to Fix It

Your marketing team says they drove 45% of revenue. Content claims 40%. Events says 30%. That's 115% of revenue accounted for - and the CFO is staring at a spreadsheet showing actual closed-won is $320K, not the $500K your dashboards celebrate. Almost every B2B org with more than two channels is living this problem right now.

Quick Version

Attribution models are directional signals, not truth. The teams getting it right use three layers: software attribution, self-reported data ("How did you hear about us?"), and incrementality testing. Start with clean CRM data and a self-reported field - both cost $0.

What Is Revenue Attribution?

Revenue attribution assigns closed revenue back to the marketing, sales, and customer interactions that contributed to the deal. It's broader than marketing attribution, which only tracks marketing touchpoints before conversion, and deeper than conversion tracking, which tells you someone filled out a form but not whether they eventually signed a $50K contract.

The distinction matters because it connects the full customer journey to actual dollars. Marketing attribution might tell you a webinar generated 200 leads. Mapping revenue back to those leads tells you they produced $180K in pipeline and $42K in closed deals. Finance cares about the second number.

Here's the uncomfortable baseline: only 32% of organizations can measure media spending holistically across digital and traditional channels, per Nielsen's Annual Marketing Report. The other 68% are flying partially blind.

Who Needs It (And Why)

This isn't a marketing vanity project. It's the connective tissue between spend and outcomes:

- Marketing leaders - Justify budget by showing which programs produce pipeline and revenue, not just MQLs.

- Finance / CFO - Validate that marketing's reported contribution matches actual closed-won numbers. Catch the 115% problem before board meetings.

- RevOps - Align marketing and sales data in the CRM so handoff points don't create attribution black holes.

- Product teams - Understand which acquisition channels bring high-LTV customers vs. high-churn ones (see churn basics if you need a refresher).

If your organization spends more than $100K/year on demand generation and has a sales cycle longer than 30 days, you need this. Skip it and you're guessing where to spend next quarter's budget.

Attribution Models Explained

Every model you'll encounter, from simplest to most complex.

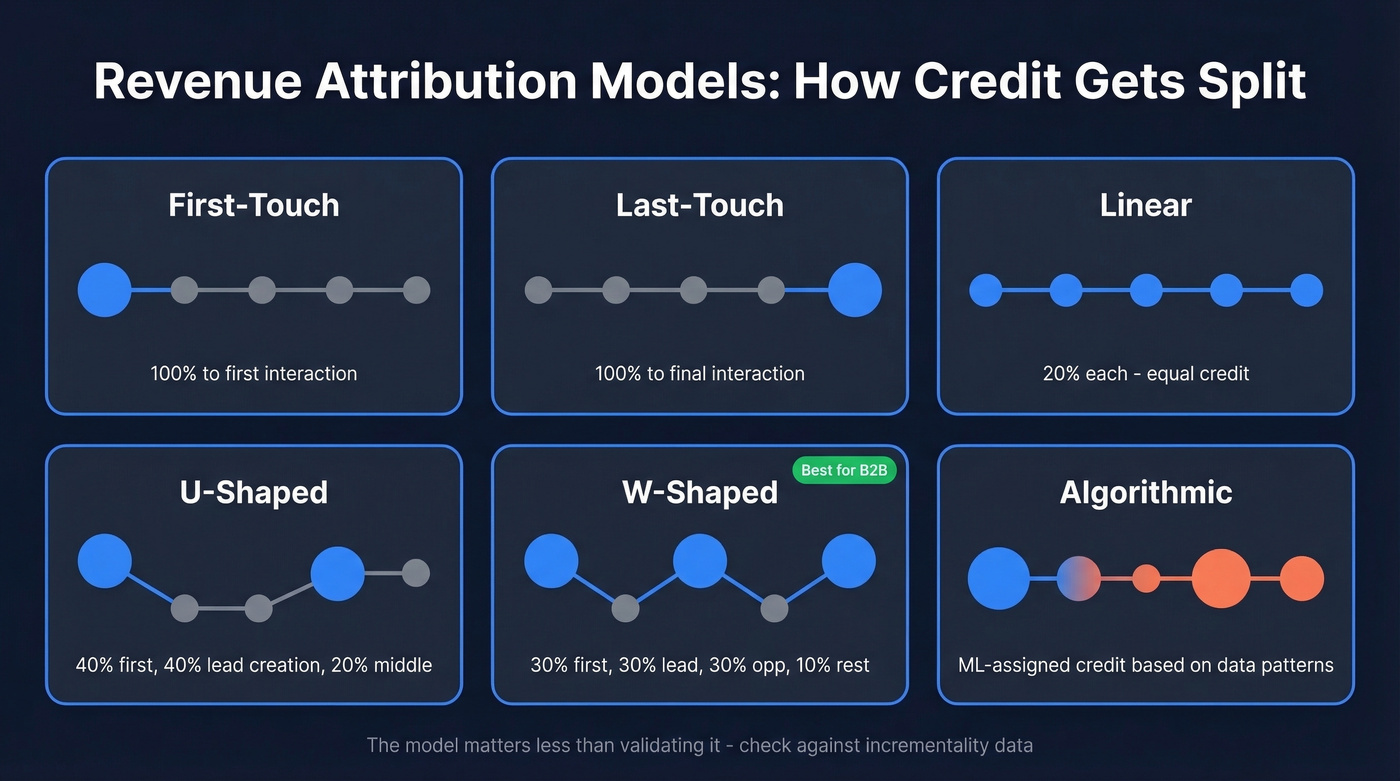

Single-Touch Models

| Model | Credit Split | Best For |
|---|---|---|
| First-touch | 100% to first interaction | Measuring awareness channels |
| Last-touch | 100% to final interaction | Short purchase cycles |

First-touch gives all credit to whatever brought someone in the door. Last-touch gives all credit to the last thing they touched before converting. Both are wrong for any journey with more than one touchpoint - which is every B2B deal. Yet 41% of marketers still rely on last-touch as their primary model.

Multi-Touch Rule-Based Models

| Model | Credit Split | Best For |
|---|---|---|
| Linear | Equal across all touches | Simple multi-touch baseline |
| Time-decay | More credit to recent touches | Short-to-mid sales cycles |
| U-shaped (40/40/20) | 40% first, 40% lead creation, 20% middle | Lead gen focus |
| W-shaped (30/30/30/10) | 30% first, 30% lead, 30% opp, 10% rest | B2B pipeline |
| J-shaped (20/60/20) | 20% first, 60% lead creation, 20% middle | Lead-creation-heavy orgs |
| Full-path | 22.5% each to 4 key stages, 10% to middle touches | Full-funnel B2B |

About 75% of marketers now use some form of multi-touch, which is progress. The W-shaped model is the sweet spot for most B2B teams because it credits the three moments that actually matter: first touch, lead creation, and opportunity creation. The 10% distributed across middle touches acknowledges nurture without over-crediting it.
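To make the W-shaped split concrete, here is a minimal Python sketch. The function name `w_shaped_credit`, the channel labels, and the rule for journeys with no middle touches (the leftover 10% is split evenly across the three key moments) are assumptions for illustration, not a rule any vendor publishes. The $42K deal reuses the closed-revenue figure from the webinar example earlier.

```python
from collections import defaultdict


def w_shaped_credit(touches, first_idx, lead_idx, opp_idx, revenue):
    """Split one deal's revenue across an ordered list of touch channels.

    30% each goes to the first touch, the lead-creation touch, and the
    opportunity-creation touch; the remaining 10% is shared equally by
    every other touch in the journey.
    """
    key_positions = [first_idx, lead_idx, opp_idx]
    middle = [i for i in range(len(touches)) if i not in key_positions]

    key_share, middle_pool = 0.30, 0.10
    if not middle:
        # Assumption: with no middle touches, the key moments absorb the 10%.
        key_share, middle_pool = 1 / 3, 0.0

    credit = defaultdict(float)
    for i in key_positions:
        # If one touch fills two key roles, it collects both shares.
        credit[touches[i]] += revenue * key_share
    for i in middle:
        credit[touches[i]] += revenue * middle_pool / len(middle)
    return dict(credit)


# Hypothetical five-touch journey for a $42K closed-won deal.
journey = ["paid_social", "webinar", "email", "organic_search", "demo_request"]
credit = w_shaped_credit(journey, first_idx=0, lead_idx=1, opp_idx=4, revenue=42_000)
for channel, amount in credit.items():
    print(f"{channel}: ${amount:,.0f}")
```

Because the shares always sum to 100% of the deal, a model like this cannot produce the 115% problem on its own. Over-counting appears when several tools each run their own model over the same deal.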

Algorithmic / Data-Driven Models

Algorithmic models - Markov chains, Shapley value analysis, and platform-native data-driven attribution like GA4's - use statistical methods to assign credit based on observed conversion patterns rather than predetermined rules. They're theoretically superior. In practice, very few organizations use them because they require large datasets, clean tracking, and analytical resources most teams don't have.

Here's the thing: the model you pick matters less than whether you're validating it. A W-shaped model checked against incrementality data will outperform an algorithmic model running on dirty CRM data every time.
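For readers curious what Shapley value analysis does under the hood, the sketch below computes exact Shapley credit for three channels. The conversion counts per channel combination are made-up numbers, and `shapley_values` is a hypothetical helper, not the method GA4 or any attribution platform documents. It averages each channel's marginal contribution over every possible ordering, which is only practical for a handful of channels.

```python
from itertools import permutations


def shapley_values(channels, value):
    """Exact Shapley credit per channel.

    `value` maps a frozenset of channels to the conversions observed for
    journeys containing exactly that set; missing sets count as 0.
    """
    totals = {c: 0.0 for c in channels}
    orderings = list(permutations(channels))
    for order in orderings:
        seen = frozenset()
        for channel in order:
            with_channel = seen | {channel}
            # Marginal contribution of adding this channel to the set so far.
            totals[channel] += value.get(with_channel, 0.0) - value.get(seen, 0.0)
            seen = with_channel
    return {c: total / len(orderings) for c, total in totals.items()}


# Hypothetical conversions by channel combination (illustrative only).
conversions = {
    frozenset(["search"]): 10,
    frozenset(["social"]): 4,
    frozenset(["email"]): 6,
    frozenset(["search", "social"]): 18,
    frozenset(["search", "email"]): 20,
    frozenset(["social", "email"]): 12,
    frozenset(["search", "social", "email"]): 30,
}

credit = shapley_values(["search", "social", "email"], conversions)
print(credit)  # search 14.0, social 7.0, email 9.0 (sums to the 30 observed)
```

The data requirement is visible in the input: every channel combination needs enough observed journeys to produce a stable count, which is why these models struggle on small or dirty datasets.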

Your attribution model is only as good as the data feeding it. If 10% of your contact emails are invalid, you're attributing revenue to touchpoints that never reached anyone. Prospeo's 5-step email verification catches bad data before it corrupts your CRM - 98% accuracy, 7-day refresh cycle, $0.01 per email.


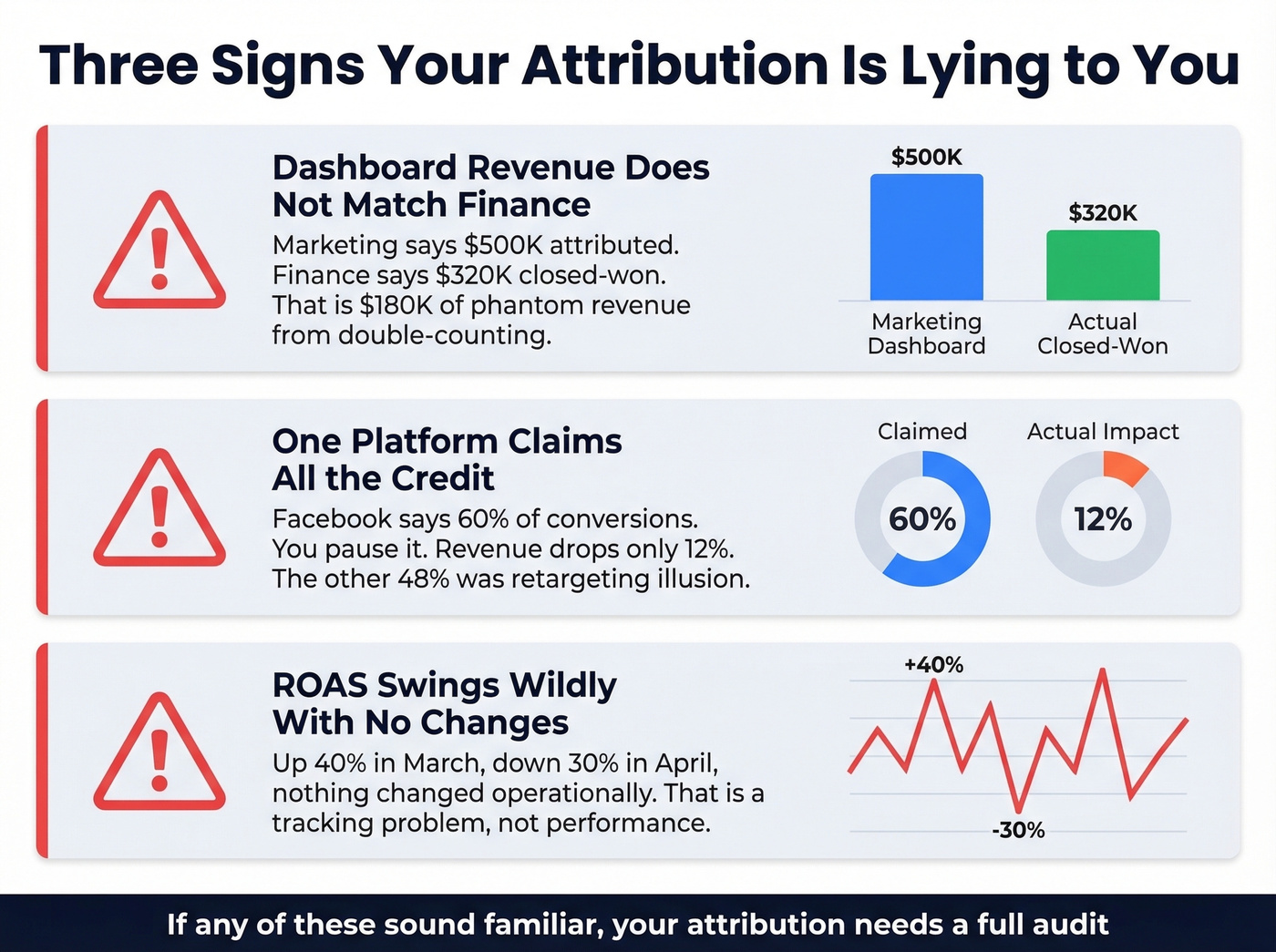

Signs Your Attribution Is Lying

Three red flags that your reporting is misleading you.

Your dashboard revenue doesn't match finance's number. If marketing's attribution dashboard shows $500K in attributed revenue but finance reports $320K in actual closed-won, you've got double-counting. Multiple channels claim credit for the same deals, and nobody's deduplicating. This pattern shows up constantly in practitioner discussions on r/DigitalMarketing - teams discover their dashboards are celebrating phantom revenue. It destroys credibility with the CFO faster than anything else.

One platform takes credit for everything. We've seen this pattern repeatedly: Facebook shows it's responsible for 60% of conversions. The team pauses Facebook for two weeks. Revenue drops 12%, not 60%. That gap between attributed credit and actual impact is the retargeting illusion - platforms over-credit themselves because their attribution windows are generous and they count view-throughs liberally.

Your ROAS swings wildly without operational changes. If you didn't change spend, targeting, or creative, but ROAS jumped 40% in March and dropped 30% in April, your measurement is unstable. That's a tracking problem, not a performance problem. Cross-device journeys compound the issue - someone researches on mobile, converts on desktop, and your analytics records it as "direct."

One often-overlooked contributor to bad attribution is bad CRM data quality. If your email bounce rates exceed 5%, those "touchpoints" never actually reached anyone - but your model still counts them. Cleaning your CRM with a tool like Prospeo removes invalid emails and duplicates before they corrupt your data, verifying emails at 98% accuracy for $0.01 each. (If you want benchmarks and fixes, start with email bounce rates.)
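The first red flag is the easiest to check in code. The sketch below assumes you can export per-deal revenue claims from each channel report and the closed-won amount from finance; the tuple layout, the `reconcile` helper, and the proportional rescaling rule are all assumptions for illustration. Other deduplication rules, such as re-running a single model over all touches, are equally valid.

```python
def reconcile(claims, closed_won):
    """Compare channel claims with finance's closed-won revenue.

    claims: list of (deal_id, channel, claimed_revenue) tuples.
    closed_won: dict mapping deal_id to the revenue finance recognizes.
    Returns (deduplicated revenue per channel, over-claim ratio).
    """
    claimed_by_deal = {}
    for deal_id, _channel, amount in claims:
        claimed_by_deal[deal_id] = claimed_by_deal.get(deal_id, 0.0) + amount

    normalized = {}
    for deal_id, channel, amount in claims:
        total_claimed = claimed_by_deal[deal_id]
        share = amount / total_claimed if total_claimed else 0.0
        # Rescale so the claims on each deal sum to its actual revenue.
        actual = closed_won.get(deal_id, 0.0)
        normalized[channel] = normalized.get(channel, 0.0) + actual * share

    total_actual = sum(closed_won.values())
    overclaim = sum(claimed_by_deal.values()) / total_actual if total_actual else 0.0
    return normalized, overclaim


# Hypothetical $100K deal claimed at 45%, 40%, and 30% by three teams.
claims = [
    ("D1", "marketing", 45_000),
    ("D1", "content", 40_000),
    ("D1", "events", 30_000),
]
closed_won = {"D1": 100_000}

normalized, overclaim = reconcile(claims, closed_won)
print(f"over-claim ratio: {overclaim:.2f}")
for channel, amount in normalized.items():
    print(f"{channel}: ${amount:,.0f}")
```

An over-claim ratio above 1.0 means channels are collectively claiming more revenue than finance booked, which is the signal to investigate before the numbers reach a board deck.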

Why Attribution Is Harder in 2026

The infrastructure that worked in 2020 is breaking down, and it's not just about cookies.

Meta's attribution accuracy has deteriorated 40-60% over the past 18 months due to iOS privacy changes, browser restrictions, and ad blockers, which now sit at 25-30% usage. iOS 17's Link Tracking Protection strips tracking parameters like fbclid in Safari Private Browsing. iOS 18 expanded that stripping to more contexts. When Meta deprecated its 7-day view and 28-day view attribution windows, reported conversions dropped 15-30% overnight - not because performance changed, but because measurement did.

Google kept third-party cookies in Chrome after all, but the privacy threat didn't disappear - it shifted to enforcement. Regulators now focus on automated decision-making, AI governance, inferred behavioral signals, and youth privacy. The cookie isn't the only thing that breaks attribution; it's the entire identity layer underneath.

And then there's the AI discovery problem. Marketers on r/digital_marketing report traffic patterns that "scream AI search" - direct spikes, odd referral paths - but no clean way to attribute that traffic to revenue. When someone discovers your product through ChatGPT or Perplexity, it shows up as "direct" in your analytics. That's a growing chunk of your funnel that's completely invisible to software attribution.

Let's be honest: most B2B teams obsessing over algorithmic attribution models would get more value from a $0 self-reported field and a two-week channel pause test. Perfect measurement is a mirage. Good-enough measurement that you actually act on beats a six-figure attribution platform that nobody trusts.

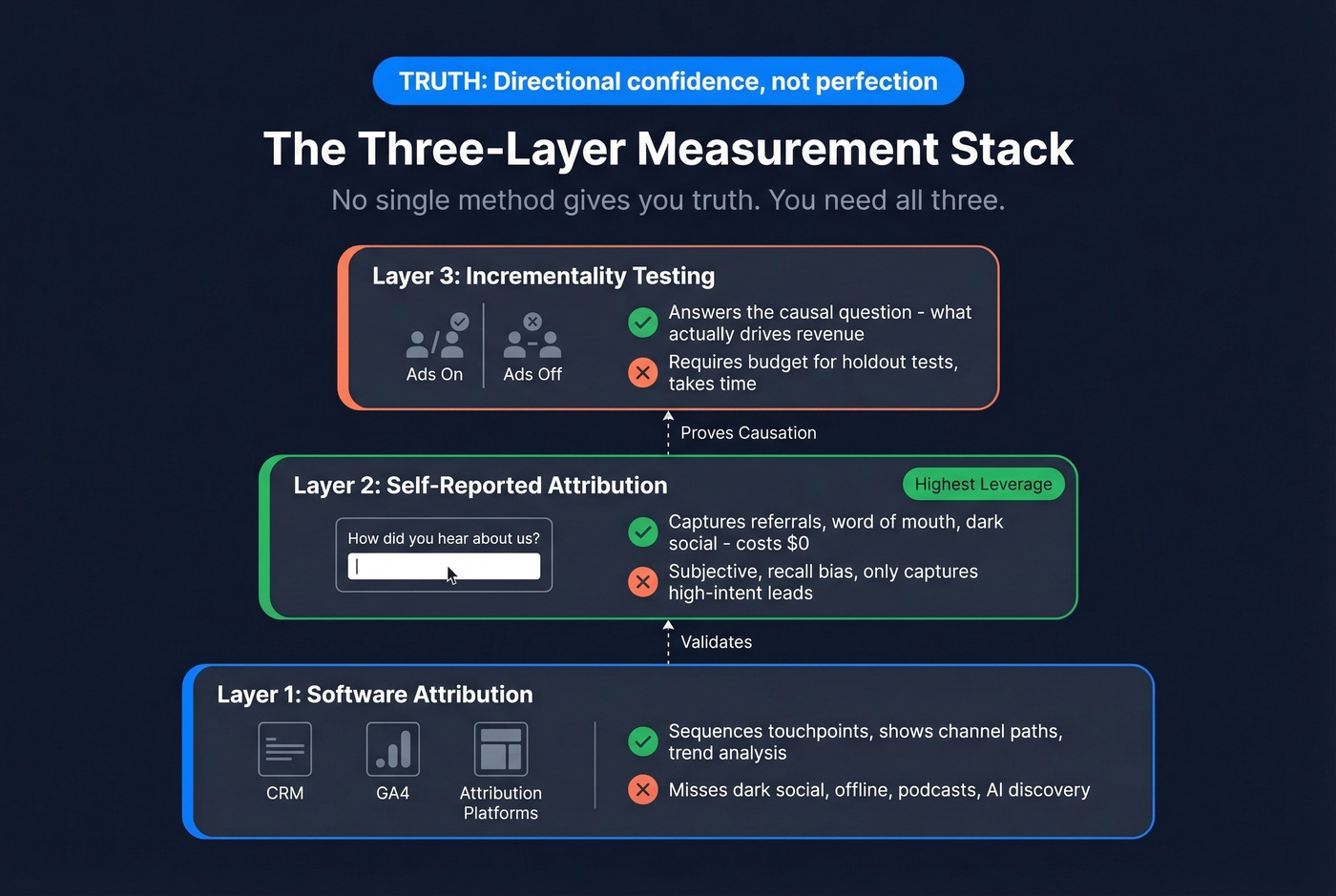

The Three-Layer Measurement Stack

No single method gives you truth. You need all three layers working together.

Layer 1: Software Attribution

Software attribution - your CRM, GA4, attribution platforms - does one thing well: it sequences touchpoints. It shows you the path from first visit to closed deal, which channels appeared in that path, and how many touches it took. Use it for channel-level directional signals and trend analysis. What it misses: dark social, offline conversations, podcast mentions, AI-driven discovery, and anything that doesn't generate a trackable click. (If you're standardizing infrastructure, a dedicated tracking domain can help reduce measurement drift.)

Layer 2: Self-Reported Attribution

This is the highest-leverage, lowest-cost addition most teams aren't making. Add a required open-text "How did you hear about us?" field to your high-intent forms - demo requests, not newsletter signups.

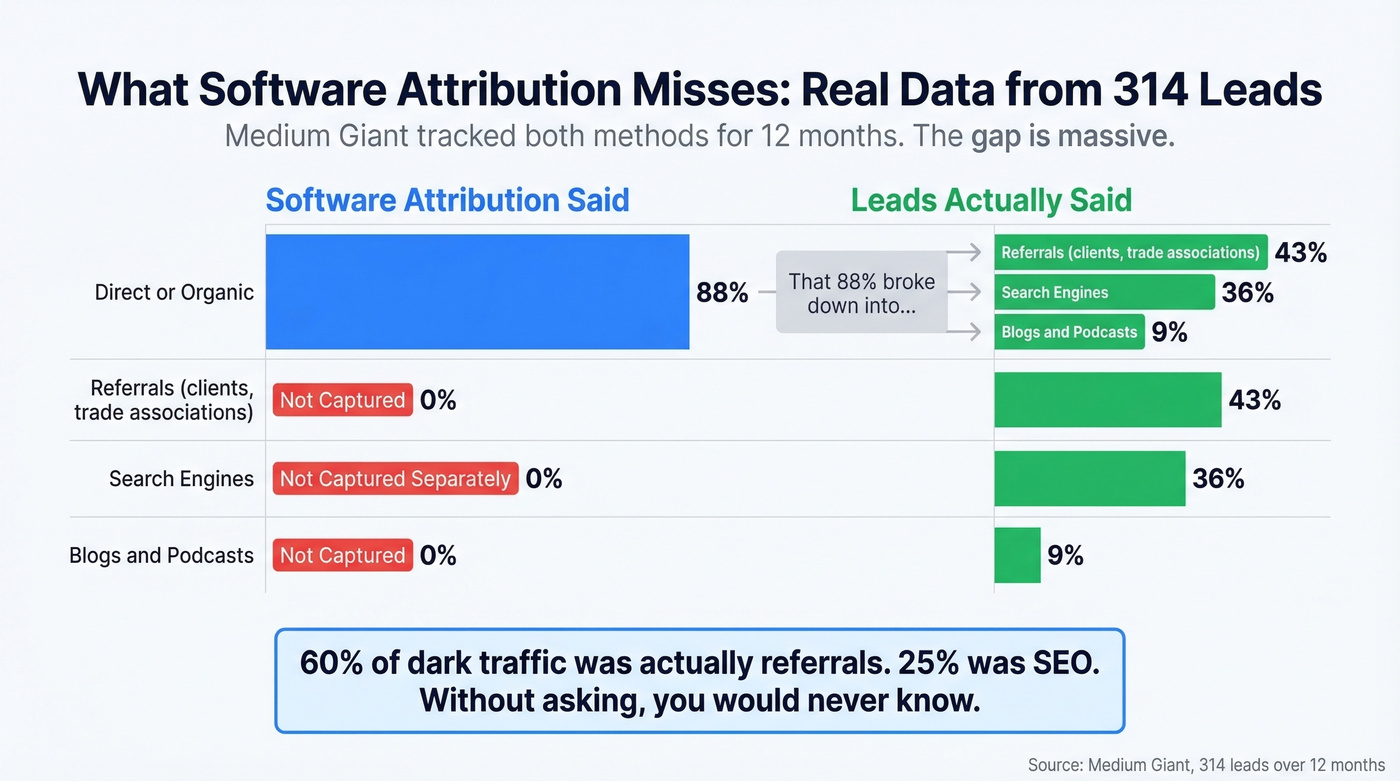

Medium Giant ran this for 12 months across 314 leads. The results were striking:

| Source | Software Attribution | Self-Reported |
|---|---|---|
| Direct / organic | 88% | - |
| Referrals (clients, trade assoc.) | Not captured | 43% |
| Search engines | Not captured separately | 36% |
| Blogs / podcasts | Not captured | 9% |

For the "direct/dark" traffic subset, 60% self-identified as referrals and 25% attributed their discovery to SEO. Without the self-reported field, that team would've under-invested in their most effective channels. We've run this implementation with multiple teams - the self-reported field pays for itself within the first 30 days.
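Open-text answers need to be bucketed before they can sit next to software attribution in a report. The sketch below is one simple way to do that with keyword matching. The category names and keyword lists are hypothetical starting points, and anything that lands in "other" still deserves a manual read.

```python
import re
from collections import Counter

# Hypothetical keyword lists; checked in order, first match wins.
CATEGORIES = {
    "referral": ["referral", "referred", "colleague", "friend", "client",
                 "association", "word of mouth"],
    "search": ["google", "search", "bing", "seo"],
    "content": ["blog", "podcast", "article", "newsletter"],
    "ai_assistant": ["chatgpt", "perplexity"],
}


def categorize(response):
    """Map one free-text answer to a category using whole-word matches."""
    text = response.lower()
    for category, keywords in CATEGORIES.items():
        if any(re.search(r"\b" + re.escape(k) + r"\b", text) for k in keywords):
            return category
    return "other"


def summarize(responses):
    """Return each category's share of all responses."""
    counts = Counter(categorize(r) for r in responses)
    return {category: n / len(responses) for category, n in counts.items()}


# Hypothetical form submissions.
answers = [
    "A colleague recommended you",
    "Found you on Google",
    "Heard the founder on a podcast",
    "ChatGPT suggested you",
    "not sure",
]
print(summarize(answers))
```

Whole-word matching keeps "researching vendors" from being counted as search, but it will still miss typos and abbreviations, so review the "other" bucket regularly and extend the keyword lists from what you find there.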

Layer 3: Incrementality Testing

Incrementality answers the causal question attribution can't: "If we turn this off, what actually happens to revenue?"

The gold standard is true experiments - geo tests where you pause spend in matched markets, or audience splits where a holdout group sees no ads. Remember the Facebook example: attribution said 60% of conversions, but pausing it dropped revenue only 12%. That 48-point gap is the incrementality truth. 36% of marketers cite difficulty proving incrementality as a top challenge, which suggests many teams have yet to run a proper test. Start now - run your first geo test within 90 days of implementing layers 1 and 2.
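The arithmetic behind a geo test is simple enough to sketch. The function names and revenue figures below are hypothetical; the 60% attributed share and 12% measured drop come from the Facebook example. The control-market adjustment is a basic ratio correction, not a full statistical test, so treat the output as directional.

```python
def geo_test_drop(test_before, test_after, control_before, control_after):
    """Relative revenue drop in paused markets, net of the control trend.

    Control markets keep spending, so their before/after ratio estimates
    what the paused markets would have earned without the pause.
    """
    expected_after = test_before * (control_after / control_before)
    return (expected_after - test_after) / expected_after


def incrementality_factor(attributed_share, measured_share):
    """Fraction of platform-attributed credit that the experiment supports."""
    return measured_share / attributed_share


# Hypothetical figures: paused markets fell from $100K to $88K
# while matched control markets stayed flat.
measured = geo_test_drop(100_000, 88_000, 100_000, 100_000)
factor = incrementality_factor(attributed_share=0.60, measured_share=measured)

print(f"measured drop: {measured:.0%}")
print(f"gap vs. attributed: {(0.60 - measured) * 100:.0f} points")
print(f"incrementality factor: {factor:.2f}")
```

A factor of roughly 0.2 would mean about one in five platform-attributed conversions was truly incremental. One way to use it is as a discount on that platform's reported numbers until the next test.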

For budget-level allocation decisions across channels, Marketing Mix Modeling complements incrementality testing - it's privacy-proof and doesn't require user-level tracking. (If you're tying this back to unit economics, keep an eye on CAC alongside attributed revenue.)

How to Implement It Step by Step

Five steps, in order. Don't skip ahead.

Step 1: Audit your CRM data quality. Before configuring any model, check your foundation. If email bounce rates exceed 5%, your attribution is mapping revenue to contacts who never received your emails - phantom touchpoints inflating your numbers. Prospeo verifies emails at 98% accuracy and enriches contacts with 50+ data points on a 7-day refresh cycle, at $0.01 per email. Clean data isn't glamorous, but it's the difference between attribution that's directionally useful and attribution that's fiction. (If you're evaluating vendors, compare options in data enrichment services.)

Step 2: Centralize your tracking. Standardize UTM conventions across every campaign. Implement server-side tracking, such as Meta's Conversions API, on every ad platform that supports it - pixel-only tracking misses 25-30% of conversions due to ad blockers. Make sure your attribution setup ingests channel costs, not just touchpoints. Without spend data, you can calculate attributed revenue but not ROI.

Step 3: Add a self-reported field. Required, open-text, on demo request and pricing page forms. This takes 10 minutes to implement and costs nothing. You'll start seeing useful data within 30 days.

Step 4: Choose a model. Short sales cycles under 30 days can start with time-decay or U-shaped. Longer B2B cycles should use W-shaped or full-path. For organizations with a dedicated data team and 10,000+ conversions per quarter, algorithmic models are worth exploring. For everyone else, rule-based models with self-reported validation will outperform an under-fed algorithm.

Step 5: Validate with incrementality within 90 days. Pick your highest-spend channel. Run a geo test or holdout. Compare the attributed lift to the actual lift. The gap tells you how much to trust your model - and where to recalibrate. (To keep the pipeline view honest, track pipeline health in parallel.)
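Step 2 is the most mechanical of the five, so here is a minimal sketch of what "standardize UTM conventions" and "ingest channel costs" can look like in practice. The required-parameter list, the lowercase-with-underscores convention, and the example URL and dollar figures are assumptions; substitute your own rules.

```python
from urllib.parse import parse_qs, urlsplit

# Assumed convention: these three parameters are mandatory on every campaign link.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def parse_utm(url):
    """Extract and normalize required UTM parameters from a landing URL.

    Values are lowercased and spaces become underscores, so "Paid Social"
    and "paid_social" land in the same reporting bucket.
    """
    query = parse_qs(urlsplit(url).query)
    utm = {}
    for key in REQUIRED_UTMS:
        values = query.get(key)
        if not values:
            raise ValueError(f"missing {key} in {url}")
        utm[key] = values[0].strip().lower().replace(" ", "_")
    return utm


def roi(attributed_revenue, spend):
    """Return on investment; only computable once channel cost is ingested."""
    return (attributed_revenue - spend) / spend


link = ("https://example.com/demo"
        "?utm_source=LinkedIn&utm_medium=Paid+Social&utm_campaign=Q3%20Webinar")
print(parse_utm(link))
print(f"ROI: {roi(attributed_revenue=42_000, spend=12_000):.1f}x")
```

Rejecting links with missing parameters at campaign-creation time is cheaper than cleaning up "direct" traffic later, and the `roi` helper is a reminder that attributed revenue without spend only tells half the story.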

Attribution breaks when your CRM is full of duplicates, bounced emails, and stale contacts. Prospeo's enrichment engine returns 50+ data points per contact at a 92% match rate - so every touchpoint in your model maps to a real person who actually received your message.


Revenue Attribution Tools Worth Knowing

Every attribution tool uses different rules for touchpoint definitions, identity resolution, and credit assignment. Two tools analyzing the same data will produce different numbers. Ask any vendor how their model assigns credit to view-throughs - if they can't show you the raw data, you're trusting a black box.

B2B-focused:

| Tool | Best For | Approx. Pricing |
|---|---|---|
| HubSpot Enterprise | Teams already on HubSpot CRM | ~$3,600/mo |
| Dreamdata | B2B pipeline attribution | Free tier; paid ~$800-$1,500/mo |
| HockeyStack | Revenue intelligence | ~$10K-$50K/yr |
| CaliberMind | ABM-heavy orgs | ~$3K-$6K/mo |
| Ruler Analytics | Agencies, SMBs | ~$200-$400/mo |

DTC / Ecommerce:

| Tool | Best For | Approx. Pricing |
|---|---|---|
| Triple Whale | Shopify brands | ~$100/mo |
| Northbeam | Media buying optimization | ~$1,000/mo |
| GA4 | Everyone (baseline) | Free; GA360 ~$50K/yr |

Look - if you're a B2B team under 50 employees, GA4 plus a self-reported field plus clean CRM data will get you 80% of the insight at 0% of the cost of a dedicated platform. The dedicated tools earn their keep when you're spending $500K+ on demand gen and need to optimize allocation across 10+ channels. Skip the expensive platform until you've outgrown the free stack. (If you're still choosing your system of record, see examples of a CRM to sanity-check fit.)

FAQ

What's the difference between revenue attribution and marketing attribution?

Revenue attribution maps all customer interactions - marketing, sales, support - to actual closed revenue. Marketing attribution focuses only on marketing touchpoints before a conversion event. The revenue-focused approach is broader and more useful for finance and RevOps teams because it connects spend to dollars, not just leads.

Which attribution model works best for B2B?

For B2B with sales cycles longer than 30 days, start with a W-shaped model (30/30/30/10) that credits first touch, lead creation, and opportunity creation. Supplement with self-reported attribution to capture dark traffic that no software model can see.

How do you measure attribution without cookies?

Use server-side tracking (for example, Meta's Conversions API), self-reported fields on high-intent forms, incrementality testing via geo tests or holdouts, and Marketing Mix Modeling for budget-level decisions. Cookie-based tracking is one input, not the system.

Does CRM data quality affect attribution accuracy?

Directly. If email bounce rates exceed 5%, those "touchpoints" never happened, but your model still counts them. Cleaning your CRM removes ghost records before they corrupt your analysis - it's the single highest-ROI step most teams skip.

Is last-touch attribution still useful?

Only for short, single-session purchase cycles like impulse ecommerce buys. For B2B or any multi-touch journey, last-touch over-credits branded search and retargeting while starving the demand-generation channels that actually created awareness.