Technographic Insights from Job Postings

Your competitor just posted 12 Snowflake engineer roles. That's not just a hiring signal - it's one of the most actionable technographic insights from job postings you'll find anywhere, and it strongly suggests they're migrating data infrastructure right now. The alternative data market hit $1.64B in 2020 and is projected to reach $17.35B by 2027. Job postings are one of the richest, most underused veins in that market.

Here's how to mine them.

What You Need (Quick Version)

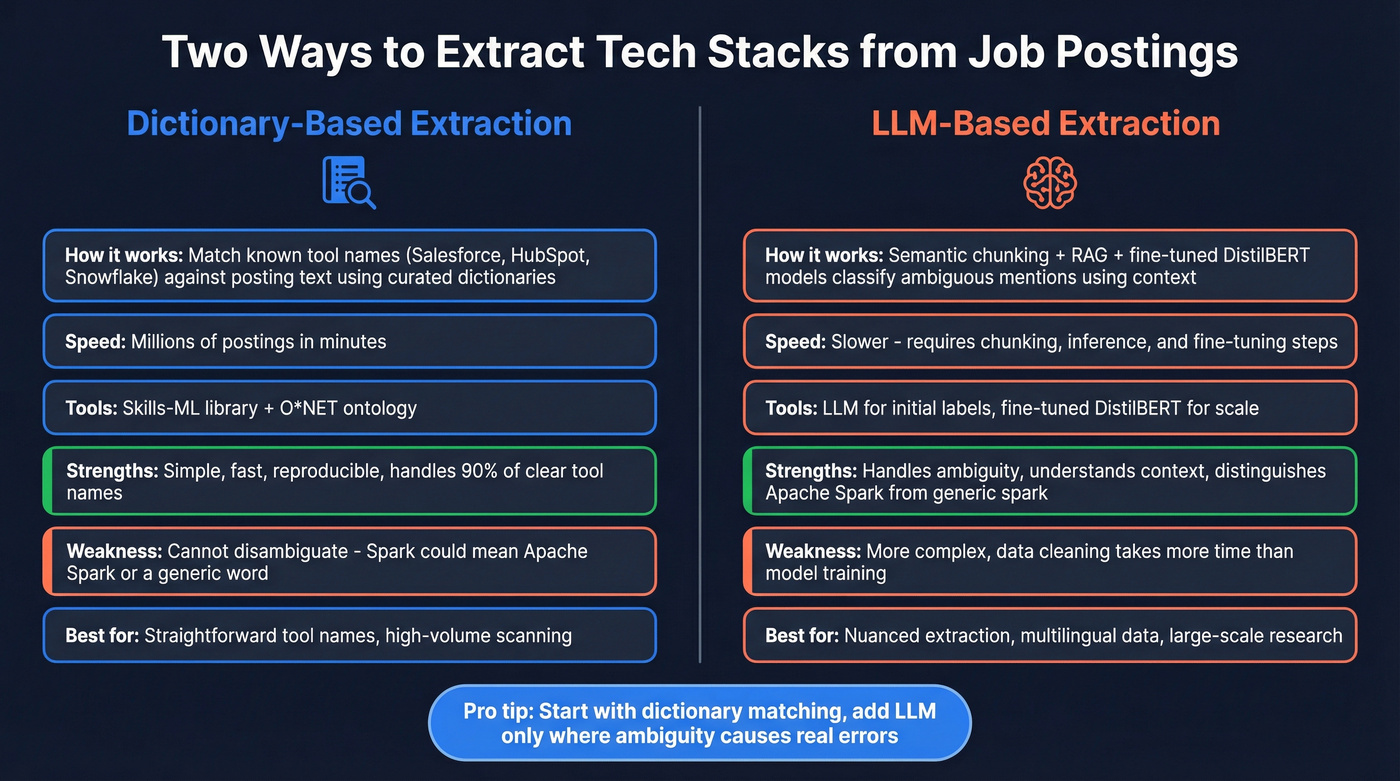

- DIY extraction: A dictionary-based pipeline using Skills-ML + O*NET for obvious tool names, or an LLM pipeline for ambiguous mentions.

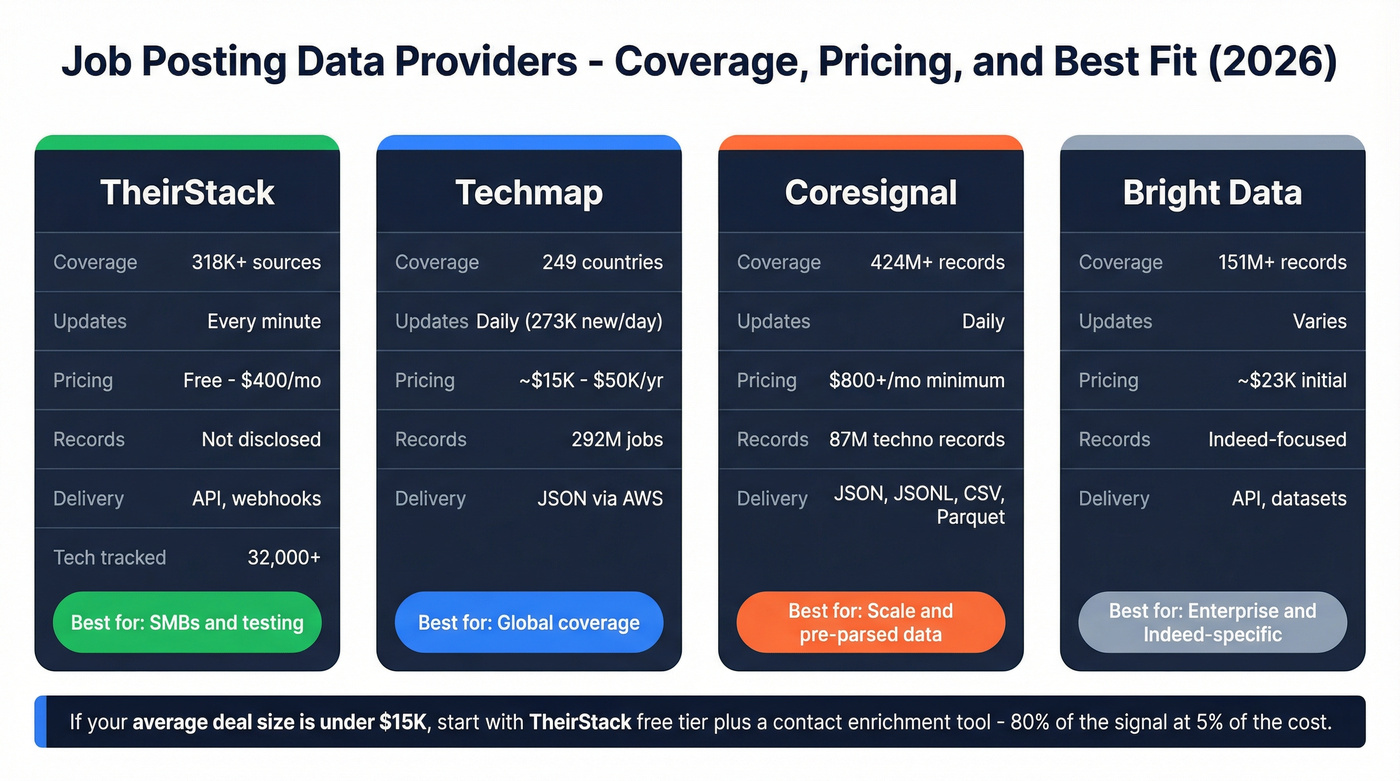

- Buy job posting data: TheirStack (paid plans from $59/mo) for SMBs, Techmap for global coverage, Coresignal ($800/mo minimum, with separate search credits) for scale.

- Turn signals into outreach: Prospeo filters by technographics - including live job posting signals - and gives you verified contacts in one step.

What Job Postings Actually Reveal

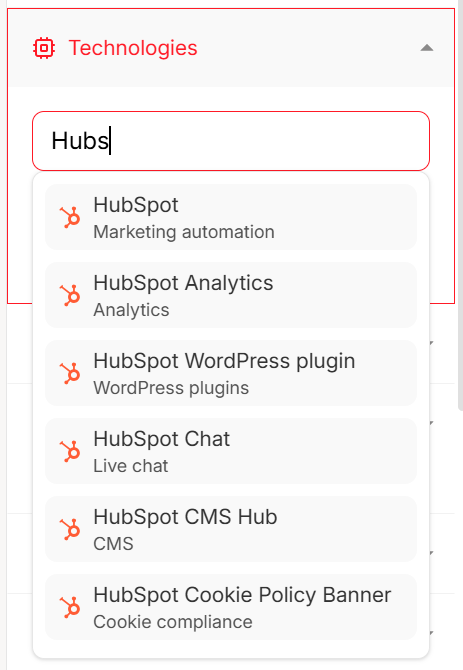

Every job posting is a company broadcasting its tech stack to the world. The mapping is direct. Data engineer roles mention Azure, Scala, Spark, and SQL. ML engineer postings reference TensorFlow and PyTorch. A "Salesforce Admin with HubSpot migration experience" posting tells you that company is actively switching CRMs - a buying signal for anyone selling migration services, integrations, or competing platforms.

The categories you can extract are broad: CRM systems, marketing automation platforms, cloud infrastructure across AWS, Azure, and GCP, developer tools and languages, security products, and analytics stacks. Extracting security technology data is especially valuable because postings for InfoSec and DevSecOps roles reveal firewalls, SIEM platforms, and endpoint protection tools that never appear in frontend code. A single posting for a "Senior DevOps Engineer" reveals Kubernetes, Terraform, Datadog, and Jenkins in one paragraph. Multiply that across hundreds of postings from the same company and you've got a detailed technographic profile that website scrapers miss entirely - backend infrastructure, internal tools, and planned migrations don't show up in client-side code.
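As a rough illustration of "multiply that across hundreds of postings", the sketch below rolls per-posting tool mentions up into a company-level profile grouped by category. The `CATEGORY_BY_TOOL` map, the field names, and the sample companies are all hypothetical; it assumes the tool names have already been extracted from each posting.

```python
from collections import Counter, defaultdict

# Hypothetical category map; a real one would cover thousands of tools.
CATEGORY_BY_TOOL = {
    "Kubernetes": "infrastructure",
    "Terraform": "infrastructure",
    "Datadog": "observability",
    "Jenkins": "ci_cd",
    "Snowflake": "data",
}

def build_profile(postings):
    """Aggregate tool mentions into {company: {category: Counter(tool -> postings)}}."""
    profile = defaultdict(lambda: defaultdict(Counter))
    for posting in postings:
        # Count each tool once per posting so one repetitive ad cannot dominate.
        for tool in set(posting["tools"]):
            category = CATEGORY_BY_TOOL.get(tool, "uncategorized")
            profile[posting["company"]][category][tool] += 1
    return profile

# Made-up sample data for illustration only.
postings = [
    {"company": "Acme", "tools": ["Kubernetes", "Terraform", "Datadog", "Jenkins"]},
    {"company": "Acme", "tools": ["Kubernetes", "Snowflake", "Kubernetes"]},
    {"company": "Globex", "tools": ["Snowflake"]},
]
profile = build_profile(postings)
print(profile["Acme"]["infrastructure"]["Kubernetes"])  # number of Acme postings mentioning Kubernetes
```

Counting postings rather than raw mentions keeps the profile aligned with the idea that repeated postings, not repeated words, are what make a signal credible.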

This makes job postings a powerful complement to website scraping, which only captures frontend tools. Together, the two methods give you full-stack visibility.

Two Extraction Methods

Dictionary-Based Extraction

The simplest approach uses a curated dictionary of technology names matched against posting text. The open-source library Skills-ML does exactly this - it scans job descriptions and maps matches to the O*NET skill ontology, a taxonomy maintained by labor market experts.

One well-documented implementation scraped 6,590 job descriptions across 7 European countries and ran dictionary matching to extract tool mentions by role. Data engineers clustered around Azure, Scala, and ETL tools while ML engineers clustered around TensorFlow and PyTorch. The original dataset was multilingual - English, French, Dutch, German - and extraction accuracy varies by language, so budget for language-specific dictionaries if you're working outside English. Teams sourcing technographic data for EMEA markets should pay special attention here, since job boards across the region mix local languages with English technical terms.

Dictionary matching is simple, fast, and reproducible. You can run it on millions of postings in minutes. The limitation is ambiguity: "Spark" could mean Apache Spark or just the everyday word. For unambiguous tool names - Salesforce, HubSpot, Snowflake - dictionary matching handles 90% of what you need.
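A minimal sketch of dictionary matching, using only Python's standard library rather than the Skills-ML API. The `TECH_DICTIONARY` contents and function names are illustrative assumptions, and no O*NET mapping is attempted here.

```python
import re

# Hypothetical mini-dictionary: canonical name -> surface forms seen in postings.
TECH_DICTIONARY = {
    "Salesforce": ["salesforce", "sfdc"],
    "HubSpot": ["hubspot"],
    "Snowflake": ["snowflake"],
    "Apache Spark": ["apache spark", "pyspark", "spark"],
}

def compile_patterns(dictionary):
    """Build one case-insensitive regex per canonical technology name."""
    patterns = {}
    for canonical, aliases in dictionary.items():
        # Longest alias first so "apache spark" is tried before "spark".
        ordered = sorted(aliases, key=len, reverse=True)
        alternation = "|".join(re.escape(alias) for alias in ordered)
        # Lookarounds act as word boundaries, so "snowflakes" does not match.
        patterns[canonical] = re.compile(
            rf"(?<!\w)(?:{alternation})(?!\w)", re.IGNORECASE
        )
    return patterns

PATTERNS = compile_patterns(TECH_DICTIONARY)

def extract_tools(text):
    """Return the sorted canonical names whose aliases appear in the text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))

print(extract_tools("We run Snowflake and PySpark; Salesforce admins welcome."))
# The ambiguity problem: this sentence is not about Apache Spark, but it still matches.
print(extract_tools("Bring a spark of creativity to the team."))
```

The second call shows the limitation in practice: a plain dictionary cannot tell the tool from the everyday word.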

LLM-Based Extraction

When you need to handle ambiguity, an LLM pipeline is worth the extra complexity. One research team applied a combination of semantic chunking, retrieval-augmented generation, and fine-tuned DistilBERT models to ~1.2 million job postings. They generated initial labels with an LLM on a 10,000-record sample, then fine-tuned four separate DistilBERT models for specific extraction targets. The pipeline handles disambiguation that dictionaries can't - distinguishing "Spark" as Apache Spark from a generic mention based on surrounding context.
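To make the disambiguation idea concrete, here is a deliberately simple keyword-window heuristic. It is not the research team's pipeline and not a substitute for a fine-tuned model; it only illustrates what "using surrounding context" means. The cue list and window size are assumptions.

```python
import re

# Hypothetical context cues suggesting the Apache Spark sense of "spark".
SPARK_CONTEXT_CUES = {
    "apache", "hadoop", "scala", "etl", "databricks",
    "cluster", "pipeline", "pipelines", "sql",
}

def is_apache_spark(text, window=6):
    """Return True if any 'spark' token has a technical cue within `window` tokens."""
    tokens = re.findall(r"[a-z0-9+#]+", text.lower())
    for i, token in enumerate(tokens):
        if token != "spark":
            continue
        nearby = tokens[max(0, i - window): i] + tokens[i + 1: i + 1 + window]
        if SPARK_CONTEXT_CUES.intersection(nearby):
            return True
    return False

print(is_apache_spark("Build ETL pipelines in Spark and Scala"))
print(is_apache_spark("We want someone with a spark of curiosity and great energy"))
```

A trained classifier learns these cues from labeled examples instead of relying on a hand-written list, which is why it generalizes better to phrasing the list never anticipated.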

Here's the thing: data quality matters more than model architecture. In that 1.2M dataset, salary fields were ~50% NULL, roughly 2,000 postings contained raw HTML noise, and posting length averaged 4,178 characters with a standard deviation of 2,746. If you're building this in-house, budget more time for data cleaning than for model training.
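A quick audit along these lines can be sketched with the standard library. The field names (`description`, `salary`) and the sample rows are assumptions, and the regex-based tag stripper is crude: it will also eat text that merely looks like a tag.

```python
import html
import re
import statistics

TAG_RE = re.compile(r"<[^>]+>")

def strip_html(text):
    """Remove tags, decode entities, and collapse whitespace."""
    no_tags = TAG_RE.sub(" ", text)
    return re.sub(r"\s+", " ", html.unescape(no_tags)).strip()

def audit(postings):
    """Report salary null rate, HTML-contaminated rows, and cleaned length stats."""
    cleaned = [strip_html(p["description"]) for p in postings]
    lengths = [len(c) for c in cleaned]
    return {
        "salary_null_rate": sum(p.get("salary") is None for p in postings) / len(postings),
        "html_rows": sum(bool(TAG_RE.search(p["description"])) for p in postings),
        "mean_length": statistics.mean(lengths),
        "stdev_length": statistics.stdev(lengths) if len(lengths) > 1 else 0.0,
    }

# Made-up rows for illustration only.
sample = [
    {"description": "<p>Spark &amp; SQL</p>", "salary": None},
    {"description": "Snowflake engineer", "salary": 120000},
]
report = audit(sample)
print(report)
```

Running an audit like this before any modeling work gives an early read on how much cleaning the dataset will need.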

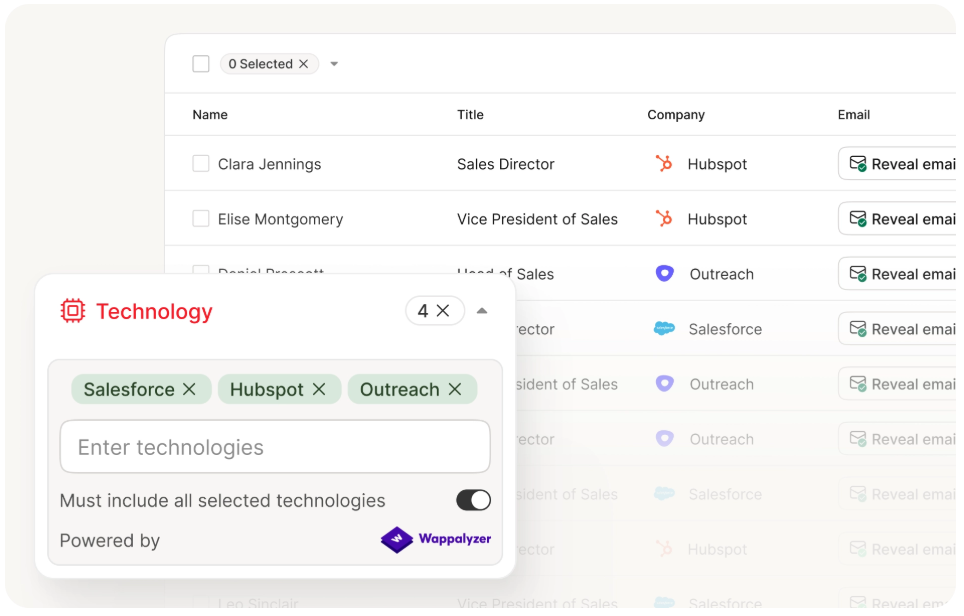

Building extraction pipelines is one option. Prospeo already tracks technographic signals from live job postings and Wappalyzer data across 300M+ profiles - with 30+ filters including tech stack, buyer intent, and headcount growth. You get verified contacts attached to every signal, not just raw data to clean.

Skip the pipeline. Filter by tech stack and get verified emails at $0.01 each.

Separating Signal from Noise

Not every tech mention in a job posting is a reliable signal.

Trust these signals:

- Multiple postings from the same company mentioning the same tool - three Snowflake roles beats one Snowflake mention every time

- "Must-have" requirements listed as hard prerequisites

- Tools mentioned in the context of current projects or existing infrastructure

Discount these signals:

- "Nice-to-have" skills listed as bonus qualifications - companies throw these in aspirationally

- Single mentions in a single posting, especially for common tools

- Stale postings - companies post after adoption decisions are made, and postings often stay live weeks after roles fill
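The trust and discount rules above can be turned into a simple score. This is a sketch under stated assumptions: the weights (1.0 for must-have, 0.25 for nice-to-have), the 60-day staleness cutoff, and the halving of single-posting mentions are arbitrary illustrative choices, and each input row is assumed to represent one tool mention in one posting.

```python
from collections import defaultdict
from datetime import date

def score_signals(mentions, today, max_age_days=60):
    """Score (company, tool) pairs from rows with company, tool, required, posted."""
    scores = defaultdict(float)
    posting_counts = defaultdict(int)
    for m in mentions:
        if (today - m["posted"]).days > max_age_days:
            continue  # stale posting: ignore it entirely
        key = (m["company"], m["tool"])
        posting_counts[key] += 1
        # Must-have requirements count in full; nice-to-haves count for less.
        scores[key] += 1.0 if m["required"] else 0.25
    # A tool seen in only one fresh posting is halved as a weak signal.
    return {k: (s if posting_counts[k] > 1 else s * 0.5) for k, s in scores.items()}

today = date(2024, 6, 1)
# Made-up sample data for illustration only.
mentions = [
    {"company": "Acme", "tool": "Snowflake", "required": True, "posted": date(2024, 5, 20)},
    {"company": "Acme", "tool": "Snowflake", "required": True, "posted": date(2024, 5, 25)},
    {"company": "Acme", "tool": "Snowflake", "required": True, "posted": date(2024, 5, 28)},
    {"company": "Globex", "tool": "Snowflake", "required": False, "posted": date(2024, 5, 30)},
    {"company": "Initech", "tool": "HubSpot", "required": True, "posted": date(2024, 1, 1)},
]
scores = score_signals(mentions, today)
print(scores)
```

In this sample, three required Snowflake mentions from one company far outscore a single nice-to-have mention, and the stale posting drops out altogether.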

The biggest operational issue with job-posting technographics is signal lag: by the time a posting goes live, the buying decision may have been made weeks or months earlier. That's why cross-referencing matters. Check job posting signals against web-detection tools like BuiltWith or Wappalyzer. If a company's website runs HubSpot tracking code and their job postings mention HubSpot, that's a high-confidence signal. If only one source shows it, treat it as probabilistic.
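The cross-referencing rule reduces to a set comparison. In this sketch the two input sets are assumed to come from your own job-posting extraction and from a web-detection export; no BuiltWith or Wappalyzer API call is shown or implied.

```python
def cross_reference(job_posting_tools, web_detected_tools):
    """Label each tool 'high' if both sources agree, else 'probabilistic'."""
    confidence = {}
    for tool in job_posting_tools | web_detected_tools:
        in_both = tool in job_posting_tools and tool in web_detected_tools
        confidence[tool] = "high" if in_both else "probabilistic"
    return confidence

# Made-up inputs for illustration only.
result = cross_reference(
    job_posting_tools={"HubSpot", "Snowflake"},
    web_detected_tools={"HubSpot", "Google Analytics"},
)
print(result)
```

Backend tools such as Snowflake will often stay "probabilistic" here simply because web detection cannot see them, so a single-source label is a prompt to look for more postings, not a reason to discard the signal.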

Job postings are publicly available data, so scraping them doesn't raise the same compliance concerns as behind-the-firewall methods. That said, if you're buying data from a provider, confirm they handle deduplication and data retention in a GDPR-compliant way, and that their approach aligns with your internal GDPR process for sales and marketing.

Job Posting Data Providers

For context, typical alternative data subscriptions run $25,000-$500,000 annually. Job posting APIs are a fraction of that. Building your own scraping pipeline sounds appealing until you're debugging rate limits at 2 AM - we've tested several providers, and buying is almost always cheaper than building.

| Provider | Coverage | Updates | Pricing | Delivery | Best For |
|---|---|---|---|---|---|
| TheirStack | 318K+ sources | Every minute | Free-$400/mo | API, webhooks | SMBs / testing |
| Techmap | 292M jobs, 249 countries | Daily (273K new/day) | ~$15K-$50K/yr | JSON via AWS | Global coverage |
| Coresignal | 424M+ records | Daily | $800+/mo minimum | JSON, JSONL, CSV, Parquet | Scale / pre-parsed data |
| Bright Data | 151M+ records | Varies | ~$23K initial | API, datasets | Enterprise / Indeed-specific |

TheirStack is the obvious starting point for SMBs. The free tier gives you 200 credits/month to test, and tiers scale from $59/mo for 1,500 credits to $400/mo for 50,000 credits. They track 32,000+ technologies and support company-level filtering, so you can query "show me companies posting roles that mention Snowflake" directly.

Techmap positions itself as a technographics-from-job-postings provider and has the broadest geographic coverage at 249 countries. If you're doing global analysis, 292M available jobs with 273K new ones added daily is hard to beat. Pricing isn't public - based on market positioning and typical alternative data costs, expect to negotiate in the $15K-$50K/year range depending on scope.

Coresignal is the scale play. Their 424M+ job posting records and 87M dedicated technographic records make it the deepest dataset available. They've already done the NLP extraction - you get parsed, structured tech stack data rather than raw postings. One client reported +25% net new pipeline in 2 months, growing to +40% after 6 months using technographic enrichment. The $800/mo minimum and separate search credits put the platform firmly in mid-market-and-up territory.

Bright Data is enterprise territory. A single Indeed jobs dataset snapshot runs ~$23K initial plus ~$2,934/mo for refreshes. Skip this unless you're operating at serious scale.

Let's be honest: if your average deal size is under $15K, you don't need Coresignal or Bright Data. TheirStack's free tier plus a contact enrichment tool gets you 80% of the signal at 5% of the cost. Save the enterprise data stack for when you've proven the motion works.
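To put the list prices quoted above on a common footing, the arithmetic below annualizes them. It assumes twelve months of continuous subscription, one Bright Data refresh per month, and no discounts or overage fees, none of which the providers' quotes guarantee.

```python
def annual_cost(monthly=0.0, upfront=0.0, months=12):
    """First-year cost: one-time fee plus monthly fee times months."""
    return upfront + monthly * months

def cost_per_credit(monthly_price, credits):
    """Effective price of one credit on a given monthly tier."""
    return monthly_price / credits

theirstack_entry = annual_cost(monthly=59)     # $59/mo tier
theirstack_top = annual_cost(monthly=400)      # $400/mo tier
coresignal_floor = annual_cost(monthly=800)    # $800/mo minimum, search credits excluded
bright_data_first_year = annual_cost(monthly=2934, upfront=23000)

print(f"TheirStack: ${theirstack_entry:,.0f} to ${theirstack_top:,.0f} per year")
print(f"Coresignal floor: ${coresignal_floor:,.0f} per year")
print(f"Bright Data, first year: ${bright_data_first_year:,.0f}")
print(f"TheirStack per credit: ${cost_per_credit(59, 1500):.4f} vs ${cost_per_credit(400, 50000):.4f}")
```

On these assumptions the per-credit price falls by roughly a factor of five between TheirStack's entry and top tiers, and Bright Data's first year lands above the top of the Techmap estimate.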

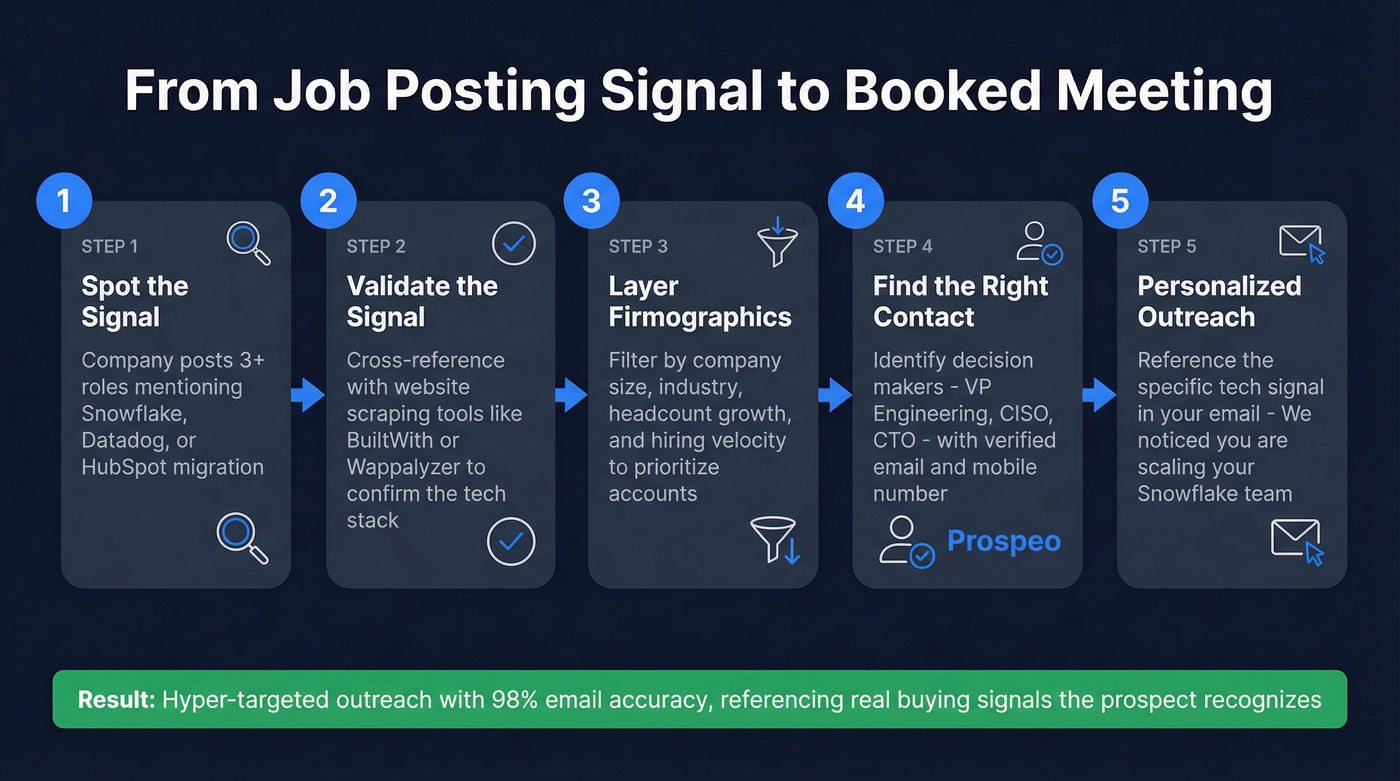

Turning Tech Stack Signals into Outreach

Identifying that a company uses Snowflake is step one. The harder part is finding the right person to email about it and making sure that email actually lands.

Tech stack signals are most powerful when layered with firmographics like company size and industry, plus intent data showing active research behavior. Job postings give you the tech stack signal, and the right enrichment tool layers in the rest (especially if you're building firmographic and technographic data into your targeting). For teams doing competitive analysis, this is where things get interesting: if you sell a Datadog alternative, filtering job postings for companies hiring Datadog engineers tells you exactly who your addressable market is and which accounts might be open to switching based on hiring volume and role seniority. SaaS vendors are especially easy to profile because they hire heavily for platform-specific roles, making their stacks straightforward to map from postings alone.
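The Datadog example can be sketched as a filter plus a ranking by hiring volume and role seniority. The `SENIORITY_WEIGHT` values, field names, and sample companies are assumptions made for illustration; how you weigh seniority against volume is a judgment call.

```python
from collections import defaultdict

# Hypothetical weights; unknown seniority labels fall back to 1.
SENIORITY_WEIGHT = {"junior": 1, "mid": 2, "senior": 3, "lead": 4}

def rank_accounts(postings, tool):
    """Rank companies mentioning `tool` by posting volume, then summed seniority."""
    volume = defaultdict(int)
    seniority = defaultdict(int)
    for p in postings:
        if tool in p["tools"]:
            volume[p["company"]] += 1
            seniority[p["company"]] += SENIORITY_WEIGHT.get(p["seniority"], 1)
    return sorted(volume, key=lambda c: (volume[c], seniority[c]), reverse=True)

# Made-up sample data for illustration only.
postings = [
    {"company": "Acme", "tools": ["Datadog", "Kubernetes"], "seniority": "senior"},
    {"company": "Acme", "tools": ["Datadog"], "seniority": "lead"},
    {"company": "Globex", "tools": ["Datadog"], "seniority": "junior"},
    {"company": "Initech", "tools": ["Datadog"], "seniority": "junior"},
    {"company": "Initech", "tools": ["Datadog", "Terraform"], "seniority": "mid"},
    {"company": "Umbrella", "tools": ["Jenkins"], "seniority": "senior"},
]
ranked = rank_accounts(postings, "Datadog")
print(ranked)
```

Companies with no matching postings never enter the ranking, so the output doubles as the addressable account list for that tool.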

Security tech stack intelligence is another area where this workflow shines - CISOs and security leads are notoriously hard to reach, but filtering by security tool mentions in job postings narrows your list to accounts with confirmed budget and active projects (a core idea in signal-based outbound).

You just identified companies migrating to Snowflake from job postings. Now what? Prospeo turns technographic signals into outreach-ready lists with 98% email accuracy and 125M+ verified mobile numbers - no separate enrichment step, no bounced emails burning your domain.

Go from tech stack signal to booked meeting without switching tools.

FAQ

How accurate are technographic insights from job postings?

It depends on the source. In one 1.2M-posting dataset, ~50% of salary fields were NULL and roughly 2,000 postings contained raw HTML noise, so raw data quality varies significantly. Cross-reference with BuiltWith or Wappalyzer for validation. Three or more postings from one company mentioning the same tool is a reliable pattern; single mentions are probabilistic.

What's the cheapest way to get job posting data?

TheirStack's free tier gives you 200 credits/month at zero cost. Paid plans start at $59/month - enough for most SMB technographic profiling without building a custom scraper or negotiating enterprise contracts.

Can I get technographic data and verified contacts in one tool?

Yes. Prospeo's B2B database includes technographic filters alongside 143M+ verified emails and 125M+ verified mobiles. Search by tech stack, get decision-maker contacts, and launch outreach from a single platform - the free tier includes 75 emails/month.

How do job postings compare to website scraping for technographic data?

Website scrapers like BuiltWith and Wappalyzer only detect client-side tools - analytics tags, marketing pixels, and frontend frameworks. Job postings reveal backend infrastructure, internal tools, and planned migrations that never touch public-facing code. Use both together for full-stack visibility.