The Best Email A/B Testing Tools in 2026

A lot of your opens are fake. Apple Mail Privacy Protection pre-fetches tracking pixels, which means a chunk of your "opens" are phantom signals from Apple's servers - not humans reading your email. If you're split-testing subject lines based on open rate alone, you're optimizing against noise.

The email A/B testing tools and methodology you use matter more than ever. Litmus reports that 12% of marketers credit A/B testing with helping drive email marketing's 36:1 ROI. That payoff only holds up if your tests are actually valid.

Our Picks (TL;DR)

- Best overall: ActiveCampaign - 5 variants, automation split testing, Starter at $15/mo for ~1,000 contacts (billed yearly)

- Best for ecommerce: Klaviyo - flow A/B testing, auto-winner selection, 4+ variants per test

- Before you test anything: Verify your list so bounced emails don't shrink your sample size and poison results

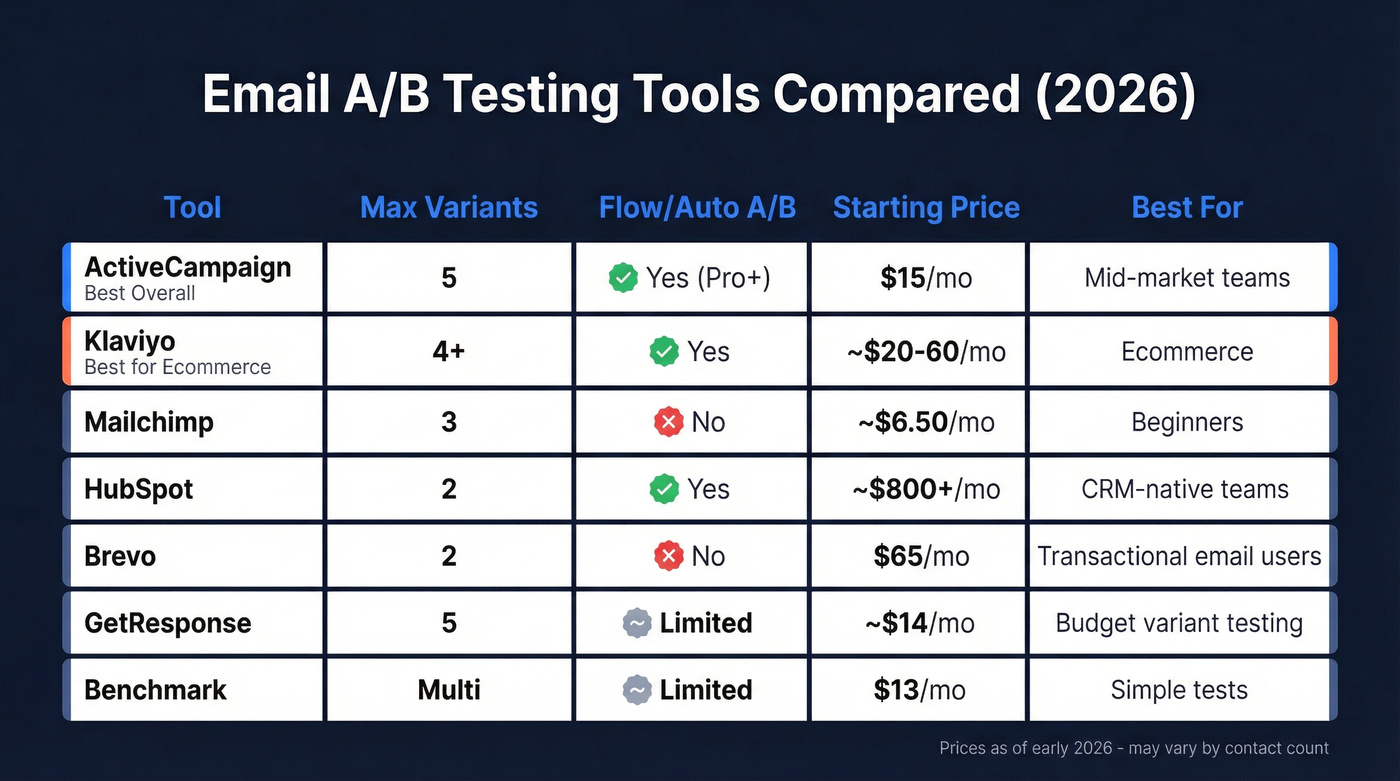

Side-by-Side Comparison

Variant limits, testable elements, and automation testing are what separate serious split-testing platforms from the basics. Here's how they stack up.

| Tool | Max Variants | Testable Elements | Flow/Auto A/B | Starting Price |
|---|---|---|---|---|
| ActiveCampaign | 5 | Subject, content, images, CTAs | Yes (Pro+) | $15/mo (Starter, yearly) |
| Klaviyo | 4+ | Subject, content, images, CTAs, send time | Yes | ~$20-$60/mo (small lists) |
| Mailchimp | 3 | Subject, send time, content | No | ~$6.50/mo |
| HubSpot | 2 | Subject, content | Yes | ~$800-$1,000+/mo (Pro) |
| Brevo | 2 | Subject lines | No | $65/mo (Business plan) |
| GetResponse | 5 | Subject, content | Limited | ~$14-$16/mo |
| Benchmark | Multi | Subject, content | Limited | $13/mo |

Every bounced email shrinks your A/B test sample size and delays statistical significance. Prospeo's 98% email accuracy and 7-day data refresh cycle mean your test segments actually reach real inboxes - not dead addresses that poison your results.

Clean your list before you test. 75 free verifications to start.

Best Tools for Email Split Testing

ActiveCampaign

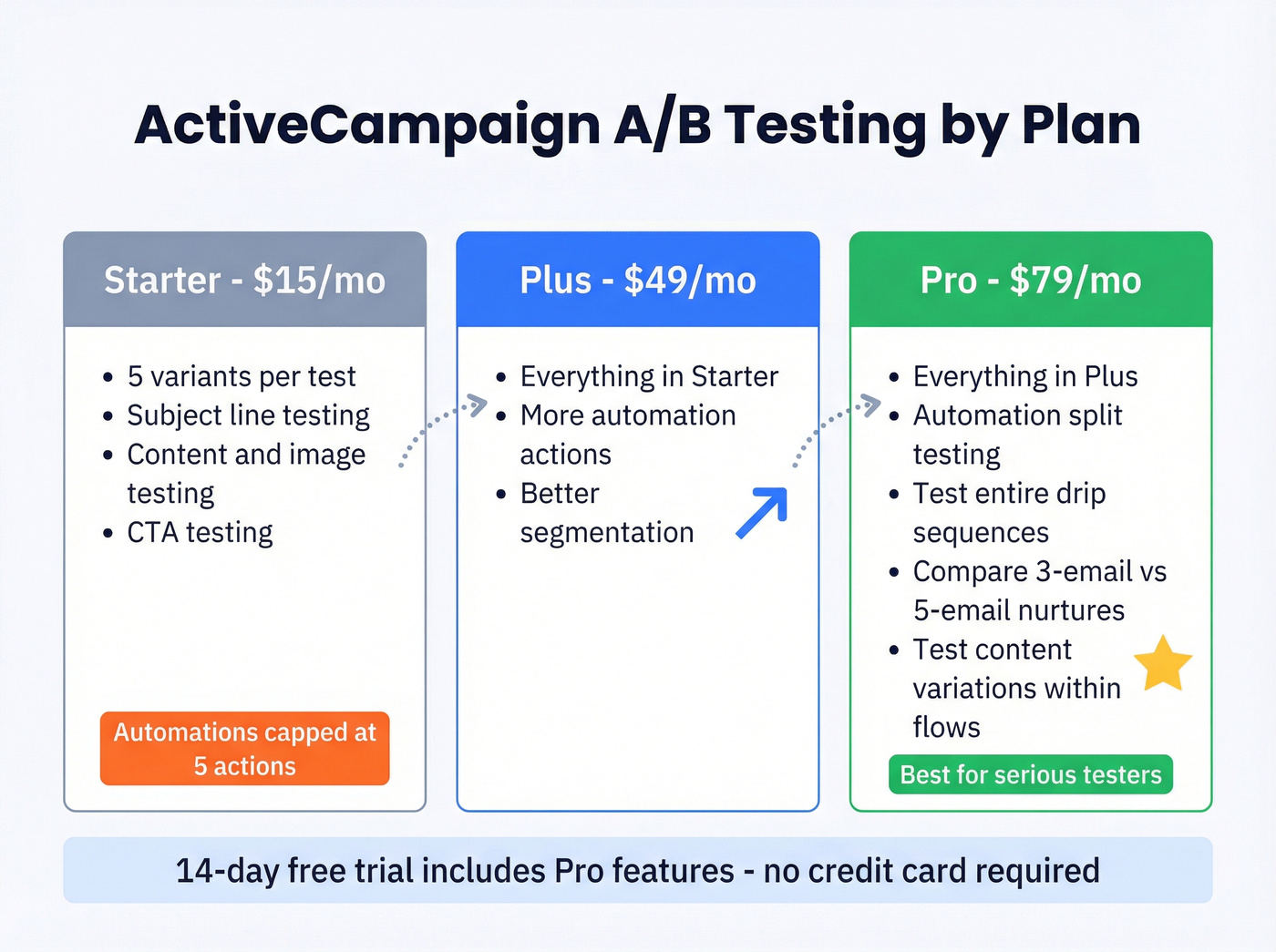

Use this if you want the deepest A/B testing in a mid-market ESP. You get five variants per test and can test subject lines, content, images, and CTAs. On the Pro plan, you can also split-test entire automation workflows, not just one-off campaigns.

If you're comparing platforms, see our breakdown of ActiveCampaign vs Brevo.

That automation testing is the real differentiator. Most ESPs limit you to campaign-level A/B testing. ActiveCampaign lets you test different paths inside drip sequences, which means you can figure out whether a 3-email nurture beats a 5-email one, or whether a case study on day two outperforms a product demo invite. The Starter plan at $15/mo billed yearly includes email A/B but caps automations at 5 actions, so complex split tests need Plus ($49/mo) or Pro ($79/mo). There's a 14-day free trial with Pro features.

We've used ActiveCampaign for internal testing and found the automation split-testing workflow intuitive enough that non-technical team members could set up tests without hand-holding. That's rare.

Klaviyo

Klaviyo is the obvious pick for ecommerce brands. It lets you test 4+ subject line variants in a single campaign, A/B test within automated flows like abandoned cart and post-purchase sequences, and auto-select the winning variant to send to remaining subscribers. The statistical significance reporting is genuinely useful - it flags when results hit significance instead of just looking directionally positive.

The catch: pricing scales by contact count and gets expensive fast past 5,000-10,000 profiles. For B2B teams or content publishers, ActiveCampaign gives you more automation flexibility at a fraction of the cost. Skip Klaviyo unless ecommerce is your core business.

If deliverability is a concern, start with the Klaviyo bounce rate basics before you test.

Mailchimp

Three variants, subject line and content testing, and a UI that doesn't require a tutorial. Paid plans start around $6.50/mo, with Standard typically running $13-$20+/mo depending on contacts. It's the right starting point for teams running their first A/B tests.

The ceiling comes fast, though. No automation A/B testing and a hard 3-variant limit mean you'll outgrow it within a few months of serious testing.

Brevo

Here's the thing about Brevo: it handles subject line testing competently, and if you're already on it for transactional email, there's convenience in staying put. But A/B testing is gated behind the Business plan at $65/mo. That's 4-5x what competitors charge for the same feature, and you're limited to subject line tests only - no full content variants. Hard to justify unless you're locked into the ecosystem.

GetResponse

GetResponse supports 5 variants and starts at ~$14-$16/mo. A solid middle ground if you need more variants than Mailchimp without jumping to ActiveCampaign's price tier. AI-powered subject line suggestions are a nice bonus, though you should still validate any AI-generated variants with proper statistical testing. Don't let the AI pick your winner.

HubSpot

If your team already lives in HubSpot's CRM, the built-in A/B testing avoids another tool in the stack. The limitation: A/B testing requires Marketing Hub Professional, which typically runs $800-$1,000+/mo, and you're capped at 2 variants. For dedicated email testing, that's expensive and restrictive. But for HubSpot-native teams running full-funnel attribution, the CRM integration and reporting make it worth considering despite the price.

Benchmark Email

Benchmark offers multivariate testing on paid plans starting at $13/mo. Budget-friendly, but the testing depth doesn't match ActiveCampaign or Klaviyo. Worth a look for small teams running simple subject line tests who don't need automation-level split testing.

One customer cut their bounce rate from 35% to under 4% with Prospeo - turning stalled A/B tests into statistically significant winners. At $0.01 per verified email, cleaning a 10,000-contact test segment costs about $100.

Stop optimizing against noise. Start with data that's actually valid.

How to Run a Valid A/B Test

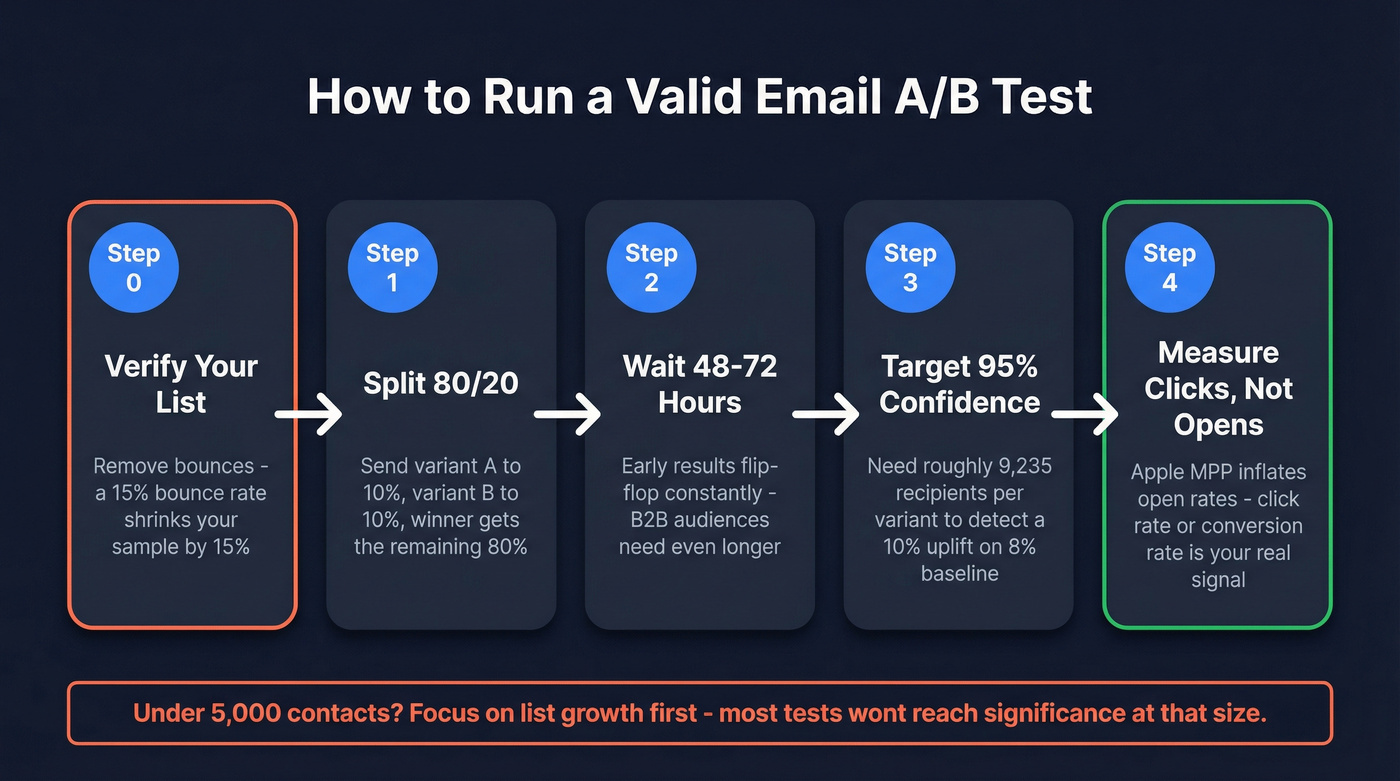

Most email A/B tests fail not because the tool is wrong, but because the methodology is sloppy. We've seen this pattern dozens of times: a team picks a great ESP, runs three "tests," declares a winner based on vibes, and wonders why performance doesn't improve. Here's the checklist that actually matters.

Step zero: verify your list. A 15% bounce rate shrinks your effective sample size by 15%. We've audited A/B test setups where teams burned 2-3 test cycles before realizing their data - not their creative - was the problem. Run your segment through an email verification tool before testing. One Prospeo customer dropped their bounce rate from 35% to under 4% after switching, and that's the difference between a test that reaches significance and one that stalls indefinitely.
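The arithmetic behind step zero is easy to sketch. The snippet below is a minimal illustration, not part of any verification tool: the function names `delivered` and `sends_needed` are hypothetical, and it assumes bounces are spread evenly across variants.

```python
import math


def delivered(sent: int, bounce_rate: float) -> int:
    """Recipients who actually receive the email once bounces are removed."""
    return round(sent * (1 - bounce_rate))


def sends_needed(target_delivered: int, bounce_rate: float) -> int:
    """Sends required so that target_delivered emails still land despite bounces."""
    return math.ceil(target_delivered / (1 - bounce_rate))


# A 10,000-contact segment at the two bounce rates quoted above.
print(delivered(10_000, 0.35))  # 6500
print(delivered(10_000, 0.04))  # 9600
```

In this example, the same segment loses 3,500 recipients at a 35% bounce rate and 400 at 4%.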

Use the 80/20 split. For lists of 1,000+, send Version A to 10% and Version B to 10%. The winner goes to the remaining 80%. Simple, effective, and supported by every tool on this list.
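Most ESPs handle this split for you, but if you ever need to build the segments yourself, the logic is short. This is a rough sketch under stated assumptions: `split_for_test` is a hypothetical helper, the addresses are made up, and the split is a plain random shuffle with no stratification by engagement or domain.

```python
import random


def split_for_test(recipients, test_fraction=0.10, seed=None):
    """Randomly split recipients into variant A, variant B, and a holdout.

    Each variant gets test_fraction of the list; the rest waits for the winner.
    """
    shuffled = list(recipients)
    random.Random(seed).shuffle(shuffled)  # shuffle a copy, leave the input untouched
    n = int(len(shuffled) * test_fraction)
    return shuffled[:n], shuffled[n:2 * n], shuffled[2 * n:]


emails = [f"user{i}@example.com" for i in range(1_000)]
group_a, group_b, holdout = split_for_test(emails, seed=42)
print(len(group_a), len(group_b), len(holdout))  # 100 100 800
```

Shuffling before slicing matters: splitting a list that is sorted by signup date or engagement would bias one variant.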

Target 95% confidence. Don't call a winner until you hit statistical significance. If your baseline click rate is 8% and you want to detect a 10% relative uplift (8% to 8.8%), you need roughly 9,235 recipients per variant. That's not a small number. Meaningful tests require real volume.
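For anyone who wants to check the math, here is a minimal sketch of the standard two-proportion sample-size approximation. It reads the 10% uplift as relative (8% to 8.8%), which is an assumption. The 9,235 figure matches this formula at 95% confidence with no extra margin for statistical power (50% power); the function name and the `power` parameter are illustrative choices, not taken from any ESP or calculator.

```python
import math
from statistics import NormalDist


def recipients_per_variant(baseline, relative_uplift, confidence=0.95, power=0.5):
    """Approximate recipients per variant for a two-sided two-proportion test."""
    p1 = baseline
    p2 = baseline * (1 + relative_uplift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)  # 0 when power is 0.5
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


print(recipients_per_variant(0.08, 0.10))             # 9235
print(recipients_per_variant(0.08, 0.10, power=0.8))  # roughly 18,900
```

If you want an 80% chance of actually detecting that uplift, a common planning default, the requirement roughly doubles. Dedicated calculators may use slightly different formulas and return somewhat different numbers.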

Wait at least 48 hours. Early results flip-flop constantly. Litmus recommends a minimum 48-hour window before declaring a winner, and in our experience, 72 hours is even better for B2B audiences where people check email less frequently.

Measure clicks, not opens. With Apple MPP inflating open rates across the board, click rate or conversion rate is a far more reliable success metric for A/B tests in 2026. If your testing tool only reports open-rate winners, you're flying blind. If you want the deeper breakdown, see open rate vs click rate.
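If your tool doesn't report significance on clicks, you can check it yourself from the raw counts. The sketch below uses a pooled two-proportion z-test, one common choice among several; the function name and the click counts are hypothetical, and it assumes each recipient is counted at most once.

```python
import math
from statistics import NormalDist


def click_rate_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Two-sided pooled z-test for a difference in click rates."""
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 800 of 10,000 clicked A, 900 of 10,000 clicked B.
z, p_value = click_rate_z_test(800, 10_000, 900, 10_000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
print("significant at 95%" if p_value < 0.05 else "keep testing")
```

A p-value below 0.05 corresponds to the 95% confidence target. Use delivered counts rather than sent counts if your bounce rate is high.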

Let's be honest: if your list is under 5,000 contacts, you probably don't need a sophisticated split-testing tool at all. At that size, most tests won't reach statistical significance anyway. Focus on list growth and email hygiene first, then invest in testing infrastructure once your volume supports it.
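To see why small lists struggle, you can invert the sample-size math and ask how large an uplift a given list could detect. This is a rough approximation, not a substitute for a proper calculator: it reuses the 8% baseline click rate from the example above, applies the baseline variance to both variants, and `min_detectable_uplift` is a hypothetical name.

```python
import math
from statistics import NormalDist


def min_detectable_uplift(baseline, per_variant, confidence=0.95):
    """Approximate smallest relative uplift that clears the significance bar.

    Uses the baseline rate for both variants' variance, so treat it as a rough guide.
    """
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    absolute = z * math.sqrt(2 * baseline * (1 - baseline) / per_variant)
    return absolute / baseline


# A 5,000-contact list on a 10/10/80 split puts 500 recipients in each variant.
print(f"{min_detectable_uplift(0.08, 500):.0%}")  # 42%
```

Under these assumptions, a variant would need to beat the control by roughly 42% before a 5,000-contact list could call it, which is far more than most subject line or copy changes deliver.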

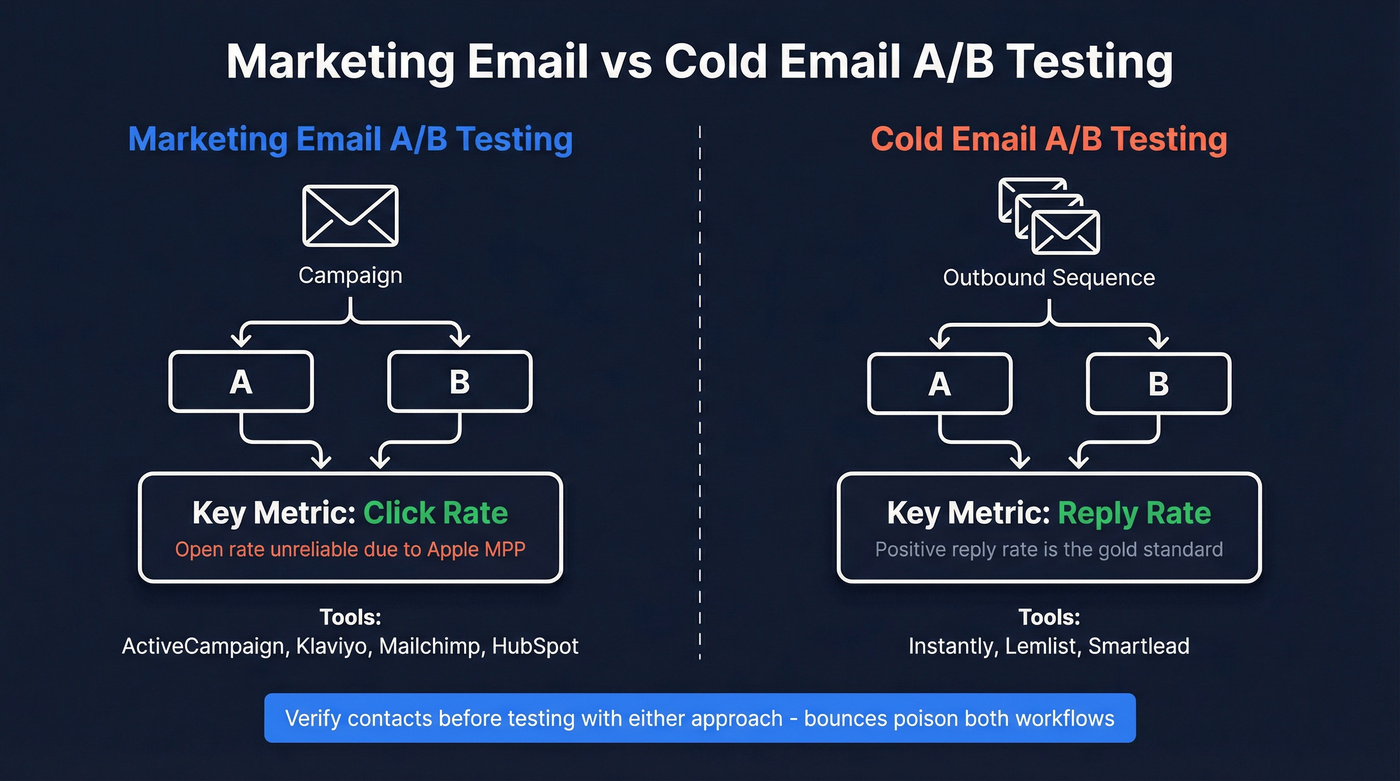

What About Cold Email A/B Testing?

The platforms above focus on marketing email. If you're running outbound sequences, you need cold email A/B testing tools built for that workflow.

Platforms like Instantly, Lemlist, and Smartlead let you rotate subject lines and body copy across cold sequences with per-variant reply-rate tracking - a metric that matters far more than opens in outbound. The consensus on r/coldemail is that reply rate and positive reply rate are the only metrics worth optimizing in cold outreach, and we agree. Prospeo integrates natively with all three platforms, so you can verify contacts before they enter a sequence and keep bounce rates under control across every variant. For teams where cold outreach is the primary use case, evaluate those alongside the ESPs listed here.

If you want a deeper outbound workflow, start with split testing cold emails and our list of cold email marketing tools.

FAQ

How many subscribers do I need for A/B testing?

At least 1,000 for the 80/20 split method - send each variant to 10%, then the winner to the remaining 80%. For statistically significant results at 95% confidence, aim for 5,000-10,000+ per variant. Use Evan Miller's calculator to plan your test.

Can I A/B test automated email flows?

ActiveCampaign (Pro plan, $79/mo) and Klaviyo both support flow-level A/B testing, letting you split-test entire automation paths. Mailchimp, Brevo, and most budget ESPs limit testing to one-off campaigns only.

Should I clean my list before running A/B tests?

Yes. Bounced emails reduce your sample size and skew results. Run your test segment through a verification tool before launch. Clean data is the single biggest factor in whether your test reaches significance or wastes your time.

What's the best free option for email split testing?

Mailchimp's free plan includes basic A/B testing for up to 500 contacts, but the 3-variant cap is limiting. For list verification before testing, Prospeo's free tier (75 credits/month) paired with any ESP's built-in A/B feature is a practical starting point for small teams.