How to Nurture MQLs to SQLs: The Complete Operational Playbook

400 MQLs generated last quarter. 38 accepted by sales. 12 converted to SQL. That's a 3% conversion rate, and the CMO wants to know what's broken.

Here's the thing: it's never one thing. It's a definition problem layered on top of a scoring problem layered on top of a data problem. If your MQL-to-SQL rate sits below 13-15%, you're likely dealing with at least two of those three. This playbook gives you the exact models, templates, and workflows to fix each one - and shows you how to increase sales qualified leads without inflating your top-of-funnel with junk.
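Before fixing anything, split the headline number into stage rates so you can see where the drop actually happens. A minimal sketch using the figures above; the function and key names are illustrative, not from any CRM.

```python
def stage_rates(mqls: int, accepted: int, sqls: int) -> dict[str, float]:
    """Return stage-by-stage conversion rates as percentages."""
    return {
        "mql_to_accepted": round(100 * accepted / mqls, 1),
        "accepted_to_sql": round(100 * sqls / accepted, 1),
        "mql_to_sql": round(100 * sqls / mqls, 1),
    }


# Numbers from the opening example: 400 MQLs, 38 accepted, 12 SQLs.
rates = stage_rates(mqls=400, accepted=38, sqls=12)
print(rates)
```

In this example, fewer than one in ten MQLs survives sales acceptance, while roughly a third of accepted leads become SQLs. That points at definitions and scoring rather than at the reps.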

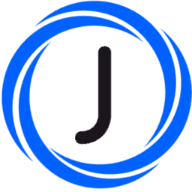

MQL vs SQL - Get Definitions Right First

Most teams treat MQLs like a volume metric. Someone fills out a form, they're an MQL. That's not a definition - that's a headcount.

A real MQL shows both intent signals and fit signals. They've done something that suggests buying interest (visiting pricing pages, attending a product webinar, downloading a comparison guide) and they match your ICP on firmographic criteria. An SQL goes further: it's a lead that's been vetted by a salesperson, not just scored by an algorithm. A meeting has been booked, or a budget conversation has started. The salesperson has confirmed this person is worth pursuing using a framework like [BANT](https://www.gartner.com/en/digital-markets/insights/bant-framework) or [MEDDIC](https://www.salesforce.com/blog/meddic-sales/).

Some frameworks add an IQL (information qualified lead) stage before MQL. For most B2B teams under 500 leads per month, this adds complexity without improving conversion. Skip it.

| Criteria | MQL Signal | SQL Signal |
|---|---|---|
| Content | Downloaded 3+ assets | Requested pricing |
| Engagement | Attended webinar | Booked a demo |
| Fit | Matches ICP firmographics | Budget authority confirmed |
| Behavior | Repeat site visits | Responded to SDR outreach |
| Validation | Scored by automation | Vetted by salesperson |

If your sales and marketing teams can't agree on these definitions in a 30-minute meeting, that's your first problem. Fix it before you touch anything else.
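One way to pressure-test the definitions in that meeting is to write them down as rules and see whether both teams would sign off. A rough sketch, assuming a simplified lead record; the field names are hypothetical and your CRM properties will differ.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    matches_icp: bool               # fit: firmographics match the ICP
    intent_actions: int             # count of intent signals (pricing visit, webinar, comparison guide)
    vetted_by_sales: bool = False   # a salesperson has qualified the lead (e.g. BANT or MEDDIC)
    meeting_booked: bool = False
    budget_discussed: bool = False


def stage(lead: Lead) -> str:
    """Classify a lead using the definitions above: MQL needs fit AND intent,
    SQL needs human vetting plus a booked meeting or a budget conversation."""
    if lead.vetted_by_sales and (lead.meeting_booked or lead.budget_discussed):
        return "SQL"
    if lead.matches_icp and lead.intent_actions > 0:
        return "MQL"
    return "Lead"
```

Note what the rules exclude: a form fill with no ICP match stays a plain lead, however many assets were downloaded.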

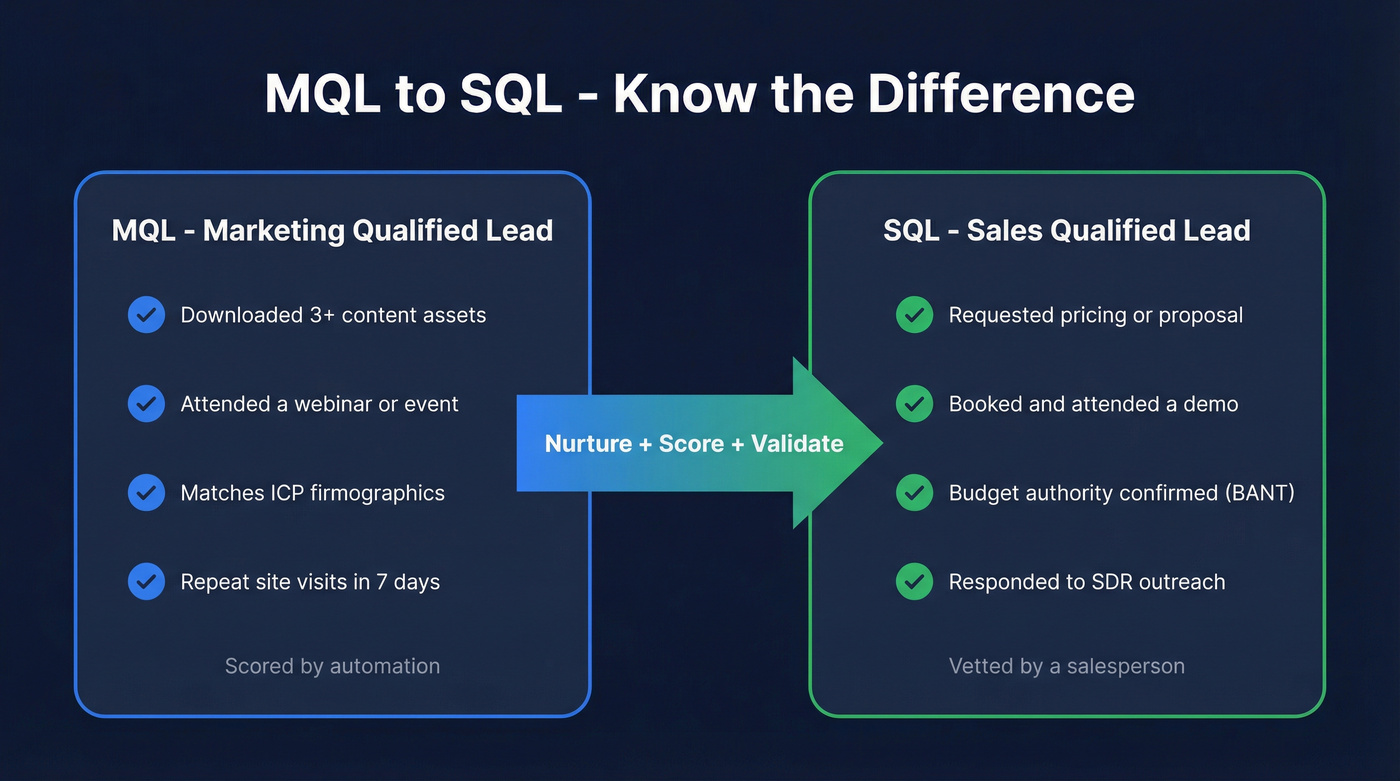

MQL-to-SQL Benchmarks by Industry

Knowing what "good" looks like prevents two mistakes: celebrating mediocre results and panicking over perfectly normal conversion rates. The B2B median sits around 13-15%, but industry variation is massive.

| Industry | MQL-to-SQL Rate |
|---|---|
| B2B SaaS | 13% |
| Cybersecurity | 15% |
| Financial Services | 13% |
| IT & Managed Services | 13% |
| eCommerce | 23% |
| HVAC | 26% |
| Business Insurance | 26% |
| Construction | 12% |
| Legal Services | 10% |

Lead-source conversion gaps are even more dramatic:

| Lead Source | MQL-to-SQL Rate |
|---|---|
| SEO | 51% |
| Email Marketing | 46% |
| Webinars | 30% |
| PPC | 26% |
| Events | 24% |

If you're running paid-heavy acquisition, your conversion rate will naturally be lower - that's expected, not a failure. An SEO lead who finds your pricing page organically has fundamentally different intent than someone who clicked a display ad. Don't hold both channels to the same target.
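To make per-channel targets concrete, compare each source against its own benchmark instead of one blended number. A small sketch using the lead-source table above; the function name and the sample volumes are made up for illustration.

```python
# Benchmarks (percent) copied from the lead-source table above.
SOURCE_BENCHMARKS = {
    "SEO": 51,
    "Email Marketing": 46,
    "Webinars": 30,
    "PPC": 26,
    "Events": 24,
}


def gap_to_benchmark(source: str, mqls: int, sqls: int) -> float:
    """Percentage-point gap between a channel's actual rate and its benchmark."""
    actual = 100 * sqls / mqls
    return round(actual - SOURCE_BENCHMARKS[source], 1)


# The same 25% conversion rate reads very differently by channel.
print(gap_to_benchmark("PPC", mqls=200, sqls=50))   # -1.0 (roughly on target)
print(gap_to_benchmark("SEO", mqls=100, sqls=25))   # -26.0 (well below benchmark)
```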

Build Your Lead Scoring Model

Most scoring models fail because they're built on vibes instead of point values. Here's a complete scoring table you can copy directly into HubSpot, Salesforce, or whatever CRM you're running.

Fit Signals

| Signal | Points |
|---|---|
| C-level title | +30 |
| Director-level or above | +25 |
| Target industry match | +25 |
| Company 200-1,000 employees | +15 |

Intent Signals

| Signal | Points |
|---|---|
| Demo request submitted | +40 |
| Demo booked/attended | +20 |
| Pricing page visit | +10 |

Negative Signals

| Signal | Points |
|---|---|
| Competitor employee | -50 |
| Unsubscribed from email | -25 |
| Personal email domain | -15 |
| Single page bounce | -10 |
| 30+ days inactive | -10 |

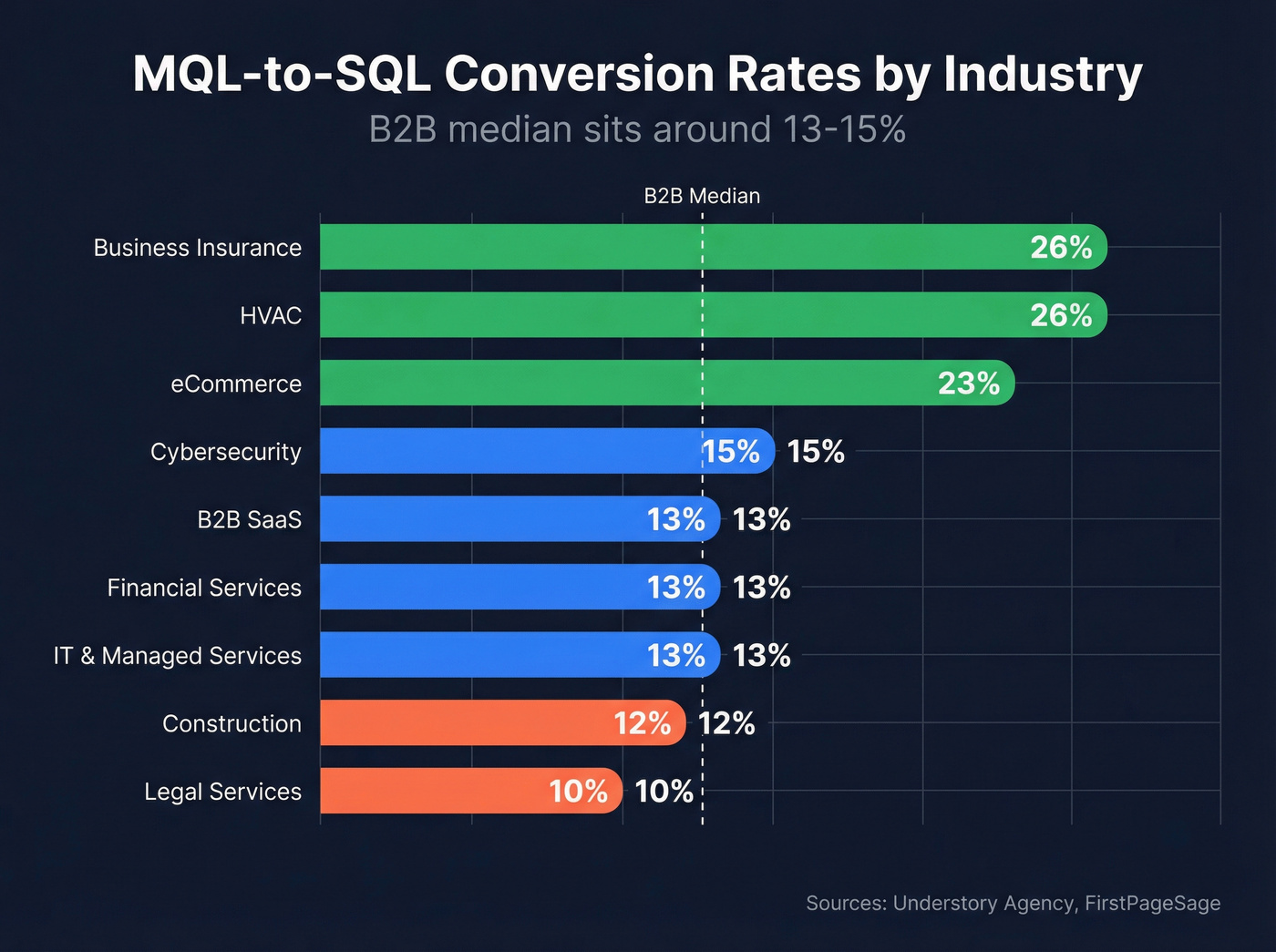

Set your MQL threshold at 60-80 points. This should capture roughly the top 20% of your leads by score. Leads crossing the threshold get routed to an SDR within minutes - not hours, not the next business day. Minutes.
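If you want to sanity-check the tables before building them in your CRM, the point values translate directly into a lookup. This is a sketch, not a HubSpot or Salesforce implementation. The signal keys are invented names, each signal is counted once per lead (an assumption, since the tables don't say whether repeat visits stack), and the threshold uses the low end of the 60-80 range.

```python
# Point values copied from the three scoring tables above.
FIT_POINTS = {
    "c_level_title": 30,
    "director_or_above": 25,
    "target_industry": 25,
    "company_200_1000": 15,
}
INTENT_POINTS = {
    "demo_request": 40,
    "demo_attended": 20,
    "pricing_page_visit": 10,
}
NEGATIVE_POINTS = {
    "competitor_employee": -50,
    "unsubscribed": -25,
    "personal_email": -15,
    "single_page_bounce": -10,
    "inactive_30_days": -10,
}
MQL_THRESHOLD = 60  # assumption: low end of the 60-80 range


def score_lead(signals: set[str]) -> dict[str, int]:
    """Sum fit, intent, and negative points for the signals present on a lead."""
    fit = sum(p for s, p in FIT_POINTS.items() if s in signals)
    intent = sum(p for s, p in INTENT_POINTS.items() if s in signals)
    negative = sum(p for s, p in NEGATIVE_POINTS.items() if s in signals)
    return {"fit": fit, "intent": intent, "negative": negative, "total": fit + intent + negative}


def is_mql(signals: set[str]) -> bool:
    return score_lead(signals)["total"] >= MQL_THRESHOLD


# A director at a target-industry company who viewed pricing: 25 + 25 + 10 = 60.
print(is_mql({"director_or_above", "target_industry", "pricing_page_visit"}))  # True
# A C-level demo request from a competitor: 30 + 40 - 50 = 20.
print(is_mql({"c_level_title", "demo_request", "competitor_employee"}))        # False
```

Keeping fit, intent, and negative totals separate in the output makes it easier to see why a lead crossed the line, not just that it did.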

Two rules most teams skip. First, build a two-dimensional model with separate fit and intent scores. A VP at a target account who hasn't engaged yet is different from a junior marketer who's downloaded everything on your site. Second, apply score decay - reduce points by 25% monthly without new activity. A lead who was hot in January and silent through March isn't still hot.
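Both rules are easy to prototype. The sketch below reads "25% monthly" as compounding (each idle month removes a quarter of what's left), which is one reasonable interpretation rather than the only one. The quadrant cutoffs and labels are hypothetical; tune them to your own score ranges.

```python
def decayed_score(score: float, idle_months: int, monthly_decay: float = 0.25) -> float:
    """Reduce a score by `monthly_decay` for each full month without new activity."""
    return round(score * (1 - monthly_decay) ** idle_months, 1)


def quadrant(fit: int, intent: int, fit_cut: int = 40, intent_cut: int = 30) -> str:
    """Place a lead on a two-dimensional fit/intent grid (cutoffs are assumptions)."""
    high_fit = fit >= fit_cut
    high_intent = intent >= intent_cut
    if high_fit and high_intent:
        return "route_to_sales"
    if high_fit:
        return "nurture_target_account"   # e.g. the VP who hasn't engaged yet
    if high_intent:
        return "verify_fit_first"         # e.g. the junior marketer downloading everything
    return "low_priority"


# Hot in January (80 points), silent through March: two idle months.
print(decayed_score(80, idle_months=2))  # 45.0
```

Under these assumptions an 80-point lead drops below a 60-point threshold after two silent months, which is the behavior the decay rule is meant to produce.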

Review your scoring model monthly against closed-won and closed-lost data. If 60%+ of closed-won deals scored below your MQL threshold, your model is miscalibrated.
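The monthly calibration check comes down to one ratio. A minimal sketch, assuming you can export the lead score each closed-won deal had when it entered the funnel; the function names and sample scores are illustrative.

```python
def share_won_below_threshold(won_scores: list[int], threshold: int = 60) -> float:
    """Fraction of closed-won deals whose lead score was under the MQL threshold."""
    below = sum(1 for s in won_scores if s < threshold)
    return below / len(won_scores)


def is_miscalibrated(won_scores: list[int], threshold: int = 60, limit: float = 0.60) -> bool:
    """Flag the model when 60%+ of closed-won deals scored below the threshold."""
    return share_won_below_threshold(won_scores, threshold) >= limit


print(is_miscalibrated([30, 45, 50, 70, 90]))  # True: 3 of 5 wins scored under 60
print(is_miscalibrated([70, 80, 55, 90]))      # False: 1 of 4
```

Running the same check on closed-lost deals shows the other failure mode: losses that scored high because the model rewards activity over buying behavior.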

Scoring models depend on complete firmographic data. If your CRM records are missing job titles, company size, or industry, your model is guessing. Prospeo's CRM enrichment returns 50+ data points per contact at an 83% match rate, giving your model actual inputs to work with.

Five Mistakes That Kill Conversion

1. Scoring activity instead of buying behavior. An ebook download isn't intent. Three ebook downloads aren't intent either. Pricing page visits, demo requests, and multi-stakeholder engagement from the same account - that's intent. Weight accordingly.

2. No negative scoring. If competitors, personal email addresses, and bounced contacts never lose points, your "hot leads" list is contaminated. We've seen scoring models where 15% of "MQLs" were competitors doing research. That's not a pipeline - it's noise. (If you need rules and examples, start with negative scoring.)

3. No score decay. Leads from years ago still show as "hot" because they downloaded a whitepaper and nobody ever reduced their score. Apply the 25% monthly decay rule and watch your MQL quality jump.

4. Marketing-only ownership. Lead scoring isn't a marketing project. It's a revenue function that needs input from sales, RevOps, and CS. If your AEs have never reviewed the scoring model, it's probably wrong. (This is where Revenue Operations alignment pays off fast.)

5. No data quality check. This is the frustration that surfaces most often in B2B marketing communities: SDRs stop working MQLs because too many bounce. Your reps have stopped trusting the leads marketing sends over, and honestly, can you blame them? This is the most fixable problem on the list, and it's the one most teams ignore the longest. Use a CRM hygiene checklist to keep it from creeping back in.

Bad data is the silent killer of MQL-to-SQL conversion. When 15% of your 'hot leads' are competitors and half your contacts bounce, your scoring model is working blind. Prospeo's CRM enrichment fills in missing job titles, company size, and industry at an 83% match rate - giving your scoring model real inputs instead of guesses.

Stop scoring incomplete records. Enrich your CRM and watch MQL quality jump.

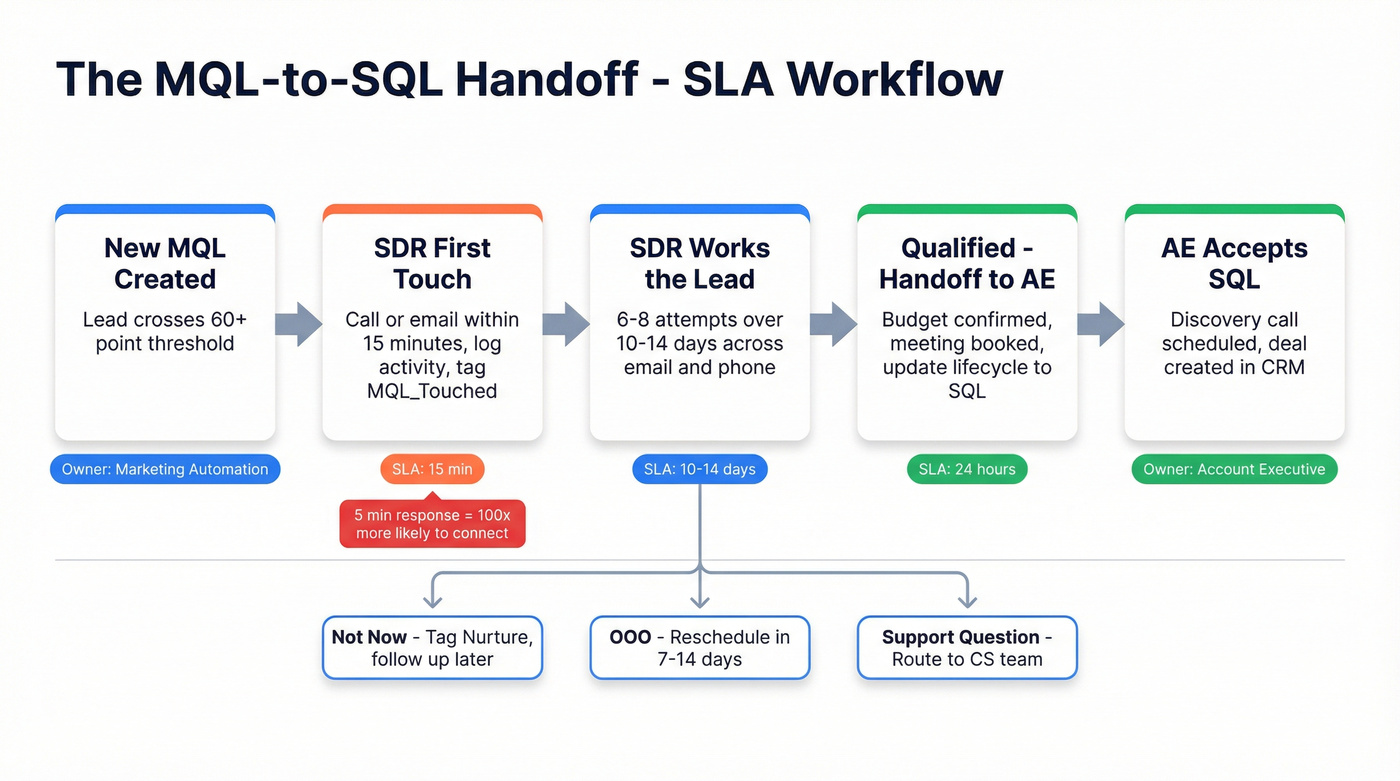

The Handoff - Your SLA Template

The gap between "marketing generated an MQL" and "sales actually works it" is where leads go to die. RevOps practitioners consistently flag this handoff gap as the number-one pipeline killer. A formal SLA eliminates ambiguity.

| Trigger | Owner | SLA | Action |
|---|---|---|---|
| New MQL | SDR | 15 minutes | Touch lead, log activity, tag MQL_Touched |
| Interested reply | SDR to AE | 24 hours | Loop AE, update lifecycle, post to Slack |
| Closed won | AE to CS | 48 hours | CS kickoff, include promise notes |

The speed-to-lead data is unambiguous. The widely cited Lead Response Management Study (InsideSales.com with MIT's Dr. James Oldroyd) found that responding within 5 minutes makes you 100x more likely to connect and 21x more likely to qualify versus waiting 30 minutes. Every minute past five is conversion rate you're leaving on the table.
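An SLA only works if someone can see when it's been missed. Here's a rough sketch of a breach check built from the SLA table above. The trigger keys and function name are invented, and in practice the timestamps would come from your CRM's activity log.

```python
from datetime import datetime, timedelta
from typing import Optional

# Response windows copied from the SLA table above.
SLA_WINDOWS = {
    "new_mql": timedelta(minutes=15),
    "interested_reply": timedelta(hours=24),
    "closed_won": timedelta(hours=48),
}


def sla_breached(trigger: str, triggered_at: datetime,
                 actioned_at: Optional[datetime], now: datetime) -> bool:
    """True if the owner acted after the deadline, or hasn't acted and the deadline has passed."""
    deadline = triggered_at + SLA_WINDOWS[trigger]
    if actioned_at is not None:
        return actioned_at > deadline
    return now > deadline


created = datetime(2024, 1, 8, 9, 0)
# Touched after 12 minutes: within the 15-minute window.
print(sla_breached("new_mql", created, created + timedelta(minutes=12), created + timedelta(hours=1)))  # False
# Still untouched after 20 minutes: breached.
print(sla_breached("new_mql", created, None, created + timedelta(minutes=20)))  # True
```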

For disposition codes, keep it simple:

| Reply Type | Action |
|---|---|
| Interested/pricing | Create CRM record, assign AE, pause outreach |
| Not now/later | Tag Nurture, schedule follow-up |
| OOO | Reschedule +7-14 days |
| Support question | Tag CS, create task |
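The disposition table maps cleanly to a lookup, which keeps reps from improvising. A sketch with made-up keys and action names; the fallback to manual review for unrecognized replies is an assumption, not something the table specifies.

```python
# Reply types and actions from the disposition table above (key names are illustrative).
DISPOSITIONS = {
    "interested": ["create_crm_record", "assign_ae", "pause_outreach"],
    "not_now": ["tag_nurture", "schedule_follow_up"],
    "ooo": ["reschedule_7_to_14_days"],
    "support": ["tag_cs", "create_task"],
}


def actions_for(reply_type: str) -> list[str]:
    """Return the follow-up actions for a reply type; unknown types go to manual review."""
    return DISPOSITIONS.get(reply_type, ["manual_review"])


print(actions_for("interested"))
```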

After the initial touch, SDRs should run 6-8 attempts over 10-14 days across email and phone. If your SDRs are giving up after two emails, you don't have a lead quality problem - you have a process problem. (Use a consistent SDR follow-up strategy to standardize attempts.)
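For a starting cadence, spread the attempts evenly and alternate channels. The sketch below picks 7 attempts over 12 days, a midpoint of the ranges above; the even spacing and the email-first alternation are assumptions you should adjust to your own reply data.

```python
def build_cadence(attempts: int = 7, span_days: int = 12) -> list[tuple[int, str]]:
    """Return (day_offset, channel) pairs spread evenly across the span.

    Requires attempts >= 2. Day 0 is the first follow-up after the initial touch.
    """
    channels = ("email", "phone")
    step = span_days / (attempts - 1)
    return [(round(i * step), channels[i % 2]) for i in range(attempts)]


for day, channel in build_cadence():
    print(f"Day {day}: {channel}")
```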

Workflow Recipes That Convert

Before launching any sequence, enrich new contacts on form submission. Prospeo's API can append verified email, phone, company size, and seniority in real time - so your scoring model fires on complete data, not just a name and email address.

New Lead Welcome Sequence

- Day 0 (within 5 minutes of signup): Value-first email. No pitch. Deliver whatever they signed up for plus one additional resource.

- Day 2: Educational content - a blog post, framework, or industry insight relevant to their ICP segment.

- Day 5: Social proof. Case study or customer story that mirrors their company profile.

- Day 9: Soft CTA. Offer a demo or consultation, but frame it as "see if this is a fit" rather than a hard sell.

Suppression rules are non-negotiable. Unenroll when lifecycle stage hits Opportunity or Customer, when the contact has an associated deal that isn't Closed/Lost, or when the contact opts out. Set a workflow goal - lifecycle becomes MQL or demo request - and unenroll when the goal is met.
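The schedule and suppression rules above can be sketched as plain logic before you build them in your automation tool. This is an illustration, not HubSpot's workflow API; the `Contact` fields and step names are hypothetical.

```python
from dataclasses import dataclass

# (day offset, email type) for the welcome sequence described above.
WELCOME_STEPS = [(0, "value_first"), (2, "educational"), (5, "social_proof"), (9, "soft_cta")]


@dataclass
class Contact:
    lifecycle: str                 # e.g. "lead", "mql", "opportunity", "customer"
    has_open_deal: bool = False    # an associated deal that isn't Closed/Lost
    opted_out: bool = False
    requested_demo: bool = False


def should_unenroll(contact: Contact) -> bool:
    """Apply the suppression rules, plus the workflow goal (MQL or demo request)."""
    suppressed = (
        contact.lifecycle in {"opportunity", "customer"}
        or contact.has_open_deal
        or contact.opted_out
    )
    goal_met = contact.lifecycle == "mql" or contact.requested_demo
    return suppressed or goal_met


def steps_due(days_since_signup: int) -> list[str]:
    """Emails that should have gone out by a given day, in order."""
    return [name for day, name in WELCOME_STEPS if day <= days_since_signup]


print(steps_due(5))                                        # ['value_first', 'educational', 'social_proof']
print(should_unenroll(Contact("lead", opted_out=True)))    # True
```

Check `should_unenroll` before every send, not just at enrollment, since a contact's deal or lifecycle status can change mid-sequence.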

Pricing-Page Interest Fast-Track

Trigger: 2+ pricing page views within 7 days. This is high-intent behavior - don't bury it in a generic nurture cadence.

Action: personalized rep email with a scheduling link, sent within 60 minutes. Layer retargeting ads simultaneously. Build an if/then branch: if they click the scheduling link, create a task for the assigned AE. If no response in 3 days, send an educational email focused on ROI and customer results rather than another "just checking in." The pricing-interest trigger is the single highest-converting workflow you can build. Prioritize it.

One important distinction: workflows are one-to-many automations with if/then branching. Sequences are one-to-one sales outreach that stops when a prospect replies. The recipes in this section are workflows.
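The fast-track trigger and its branch can be expressed in a few lines, which helps when you're checking the logic with RevOps. A sketch under stated assumptions: the function names and return labels are invented, and page-view timestamps would come from your tracking tool.

```python
from datetime import datetime, timedelta


def pricing_fast_track(view_times: list[datetime], now: datetime,
                       min_views: int = 2, window_days: int = 7) -> bool:
    """True when the contact viewed the pricing page 2+ times in the last 7 days."""
    cutoff = now - timedelta(days=window_days)
    recent = [t for t in view_times if cutoff <= t <= now]
    return len(recent) >= min_views


def next_action(clicked_scheduling_link: bool, days_since_rep_email: int) -> str:
    """If/then branch after the personalized rep email goes out."""
    if clicked_scheduling_link:
        return "create_ae_task"
    if days_since_rep_email >= 3:
        return "send_roi_email"
    return "wait"


now = datetime(2024, 3, 15, 12, 0)
views = [now - timedelta(days=1), now - timedelta(days=6), now - timedelta(days=30)]
print(pricing_fast_track(views, now))  # True: two views fall inside the 7-day window
```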

Re-Engagement for Stale Leads

Trigger: last activity more than 90 days ago. Send two emails - a casual check-in followed by a relevant resource three days later. If no response after both, set the contact to non-marketing status. (If you want subject lines ready to paste, use these re-engagement email subject lines.)

Let's talk about buying committees, because this is where single-lead thinking breaks down. When 2+ contacts from the same domain engage within a short window, that's not two MQLs - it's a signal. Notify the account owner, add the account to an account-level nurture, and send role-tailored content. A single MQL is a lead. Two MQLs from the same company is an opportunity forming.
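Spotting a forming buying committee is a grouping problem: bucket engagement by email domain and look for multiple distinct contacts close together in time. A minimal sketch; the 14-day window is an assumption (the rule above only says "a short window"), and the function name is illustrative.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def committee_domains(events: list[tuple[str, datetime]],
                      window_days: int = 14, min_contacts: int = 2) -> set[str]:
    """Return domains where 2+ distinct contacts engaged within the window.

    `events` is a list of (email, engagement timestamp) pairs.
    """
    by_domain = defaultdict(list)
    for email, ts in events:
        by_domain[email.split("@")[-1].lower()].append((ts, email.lower()))

    window = timedelta(days=window_days)
    flagged = set()
    for domain, items in by_domain.items():
        for start, _ in items:
            # Distinct contacts active in the window that opens at this event.
            contacts = {e for ts, e in items if start <= ts <= start + window}
            if len(contacts) >= min_contacts:
                flagged.add(domain)
                break
    return flagged


jan1 = datetime(2024, 1, 1)
events = [
    ("a@acme.com", jan1),
    ("b@acme.com", jan1 + timedelta(days=4)),    # second contact, same account, 4 days later
    ("c@beta.io", jan1),
    ("c@beta.io", jan1 + timedelta(days=1)),     # same person twice: not a committee
    ("x@gamma.co", jan1),
    ("y@gamma.co", jan1 + timedelta(days=40)),   # too far apart
]
print(committee_domains(events))  # {'acme.com'}
```

Filter out personal email domains before running this, or every Gmail user will look like one very large buying committee.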

Fix Your Data Before Anything Else

Most teams don't have a nurture problem. They have a data problem wearing a nurture costume.

We've watched teams spend weeks perfecting email copy and workflow logic, only to see 30%+ bounce rates on the first send. That's not a messaging problem, and it torches your sender reputation on every send. Bounce rates above 5% damage deliverability for every future campaign you'll ever run. (If you need a remediation checklist, start with email deliverability.)

The proof points are stark. Snyk's outbound team of 50 AEs was running a 35-40% bounce rate before switching to Prospeo - it dropped to under 5%, and AE-sourced pipeline jumped 180%. Meritt's pipeline tripled from $100K to $300K per week after fixing data quality with verified contacts. These aren't incremental improvements. They're step-change jumps that came from solving the data layer first.

Your SDRs have 15 minutes to touch a new MQL. They can't afford to waste that window on bounced emails and wrong numbers. Prospeo delivers 98% email accuracy and 125M+ verified mobile numbers on a 7-day refresh cycle - so when your SLA clock starts, reps connect with real buyers.

Every bounced email is a dead MQL. Start with data your reps actually trust.

Measure What Matters

Don't drown in dashboards. Track these six metrics monthly and you'll know exactly where your funnel is leaking.

- MQL-to-SQL rate: Target the 13-15% B2B median as a baseline, then adjust by industry and lead source using the benchmarks above.

- Time to SQL: Days from MQL creation to SQL status. Shorter is better, but don't sacrifice quality for speed.

- Meetings from nurture: The direct output metric. If workflows aren't generating meetings, the content or targeting is off.

- Opportunity rate: What percentage of nurtured MQLs become pipeline? Compare against non-nurtured leads to prove the program's value.

- Revenue: nurtured vs non-nurtured: The ultimate proof point. Track sales cycle length too - nurtured leads typically close faster.

- Email health: Open rate above 35-40%, CTR above 2.5%, unsubscribe below 0.5%, goal conversion 3-5% of enrolled leads becoming MQLs. If your open rates are healthy but goal conversion is flat, your content is engaging but not driving action. Low open rates point to a deliverability or subject line problem. Let the metrics tell you where to dig.
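The email-health thresholds above double as a simple diagnostic. A sketch that takes rates as percentages and returns the areas to dig into. The flag names are invented, and 35% is used as the open-rate floor (the low end of the 35-40% range), with 3% as the goal-conversion floor.

```python
def email_health_flags(open_rate: float, ctr: float,
                       unsub_rate: float, goal_conversion: float) -> list[str]:
    """Compare email metrics (all in percent) with the thresholds above."""
    flags = []
    if open_rate < 35:
        flags.append("deliverability_or_subject_line")
    if ctr < 2.5:
        flags.append("low_click_through")
    if unsub_rate >= 0.5:
        flags.append("high_unsubscribe")
    # Healthy opens with flat goal conversion: engaging content that isn't driving action.
    if open_rate >= 35 and goal_conversion < 3:
        flags.append("content_not_driving_action")
    return flags


print(email_health_flags(open_rate=42, ctr=3.0, unsub_rate=0.2, goal_conversion=1.5))
# ['content_not_driving_action']
```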

FAQ

What's a good MQL-to-SQL conversion rate?

The B2B median is 13-15%. SaaS averages 13%, eCommerce hits 23%, and HVAC and business insurance can reach 26%. Your target depends on industry and lead source - SEO leads convert at 51%, while PPC converts at 26%.

How long should a nurture sequence be?

A welcome sequence typically runs 3-7 emails over 1-2 weeks. Re-engagement sequences are shorter - 2 emails triggered after 90+ days of inactivity. Fast-track leads showing buying signals rather than forcing everyone through the same linear cadence.

What's the difference between lead scoring and lead grading?

Scoring measures behavioral intent - page visits, form fills, and email clicks. Grading measures demographic fit - job title, company size, and industry. The best MQL-to-SQL systems combine both into a two-dimensional model. Neither alone is sufficient.

How do I keep my nurture list clean?

Verify emails before launching any sequence - bounce rates above 5% damage sender reputation. Exclude current opportunities, customers, and opted-out contacts from every workflow. Clean data is an ongoing discipline, not a one-time project.

What tools help nurture MQLs to SQLs faster?

You need three layers: a CRM with workflow automation (HubSpot, Salesforce), a data enrichment platform for verified contacts and firmographics, and a sequencing tool for sales outreach. The data layer is where most teams underinvest - and it's the layer that determines whether everything downstream actually works.