Lead Qualification Strategies That Actually Improve MQL→SQL

A RevOps lead we know ran a scoring audit last quarter. Her team had thousands of MQLs, and SDRs were burning their day prepping for calls with leads who'd never buy. The scoring model hadn't been touched in over a year. Only 5% of sales reps say they receive high-quality leads from marketing. That's not a qualification problem. That's an alignment collapse.

These lead qualification strategies fix it: the right framework, a scoring model you actually maintain, speed-to-lead discipline, and clean data underneath all of it.

Why Most Qualification Breaks Down

37% of leads are missing key data - company size, role, or email validity - before SDR outreach even starts. Cold emails pull roughly a 2% reply rate. The margin for error is nonexistent.

A Reddit thread in r/sales put it bluntly: teams obsess over "faster qualification vs. better leads" when the real answer is both. Qualification breaks for three reasons:

- No shared definition of "qualified" between marketing and sales

- Scoring models that reward vanity actions (opened an email!) over buying signals (see Identifying Buying Signals)

- Dirty data that poisons every downstream step

Here's the thing: if your MQL→SQL rate is consistently low, the problem usually isn't your framework. It's your data. Fix that first, then worry about MEDDIC vs. BANT.

MQL vs. SQL: Quick Definitions

An MQL has engaged enough to suggest interest - downloaded content, visited pricing pages, matches your ICP. Marketing says "this one's warm."

An SQL has been vetted by a human. Budget exists, need is confirmed, timeline is real. Sales says "I'll work this." A SAL (sales-accepted lead) sits between them as the handoff step where sales accepts the lead for follow-up. PQLs (product-qualified leads) matter if you run freemium - their conversion rates hit 15-30%, which is why PLG companies obsess over in-product signals.

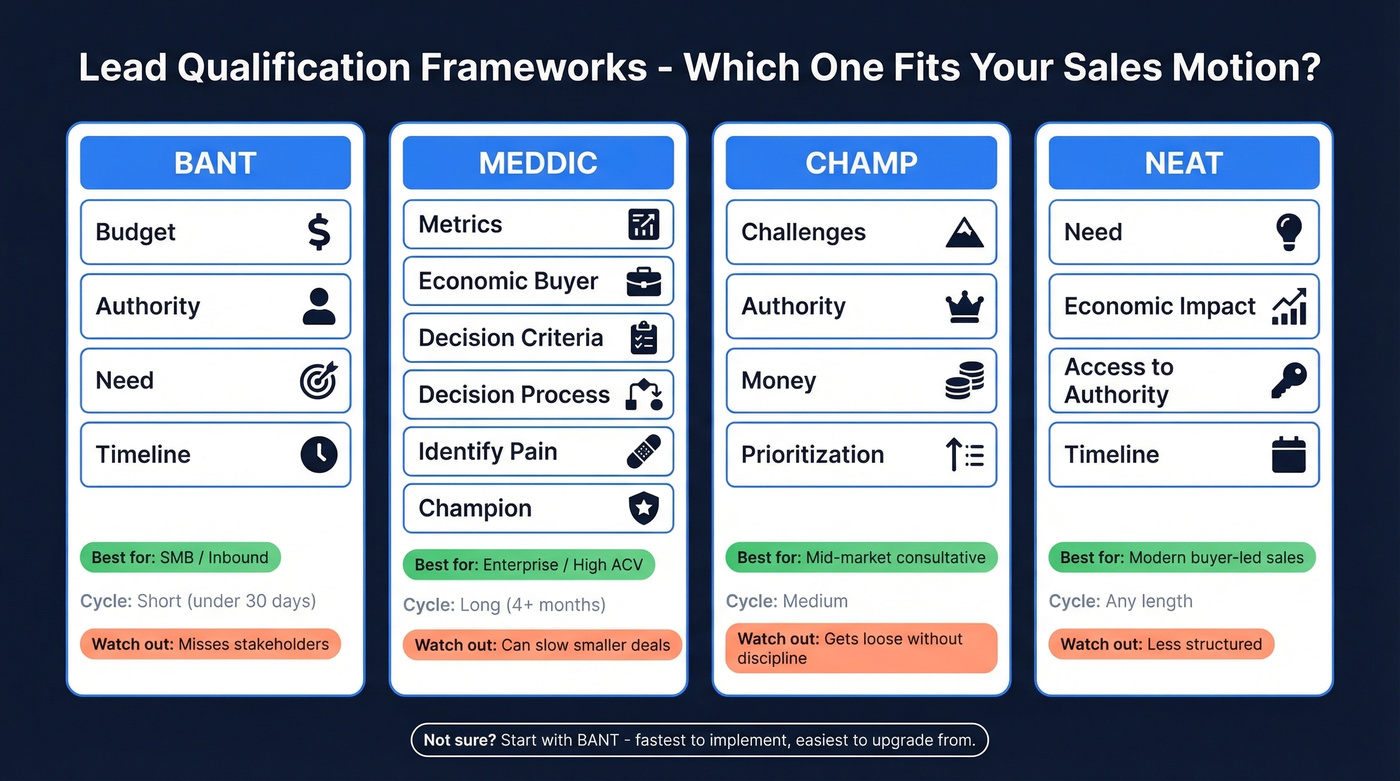

Pick a Framework and Enforce It

The framework matters less than consistency. One team switched from BANT to MEDDIC and watched forecast accuracy jump from 62% to 89%. The magic wasn't the framework - it was the discipline of actually using it on every single deal.

| Framework | Best for | Cycle length | Weakness |
|---|---|---|---|
| BANT | SMB / inbound | Short | Misses stakeholders |
| MEDDIC | Enterprise / high-ACV | Long | Can slow deals |
| CHAMP | Mid-market consultative | Medium | Gets loose fast |
| NEAT | Buyer-first / modern | Any | Less structured |

If you're unsure, start with BANT. It's the fastest to implement and easiest to upgrade from.

BANT - When Speed Beats Depth

Your inbound team is fielding 200 leads a week with a 14-day sales cycle. You need to qualify or disqualify in one call. That's BANT territory: Budget, Authority, Need, Timeline. We've seen SMB teams cut disqualification time dramatically just by enforcing BANT consistently across every first call - no freelancing, no skipping questions because the prospect "seemed ready."
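To make "no skipping questions" concrete, here is a minimal Python sketch of a first-call record. The `BantCall` class and `call_outcome` function are hypothetical names, and the rule that all four criteria must be confirmed to qualify is our assumption, not something your CRM enforces out of the box.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class BantCall:
    """One first call. None means the rep never asked the question."""
    budget: Optional[bool] = None
    authority: Optional[bool] = None
    need: Optional[bool] = None
    timeline: Optional[bool] = None


def call_outcome(call: BantCall) -> str:
    """Return 'qualified', 'disqualified', or which questions were skipped."""
    answers = {f.name: getattr(call, f.name) for f in fields(call)}
    skipped = [name for name, value in answers.items() if value is None]
    if skipped:
        # The call cannot be closed out until every BANT question is asked.
        return "incomplete: ask about " + ", ".join(skipped)
    # Assumption: all four criteria must be confirmed to qualify.
    return "qualified" if all(answers.values()) else "disqualified"


print(call_outcome(BantCall(budget=True, authority=True, need=True)))
```

A call with no timeline answer comes back as incomplete rather than qualified, which is the point: the prospect who "seemed ready" still gets all four questions.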

MEDDIC - When the Deal Has a Buying Committee

If your average deal involves 6-10 decision-makers and a 4+ month cycle, MEDDIC forces reps to map the buying group. Unlike BANT, it accounts for Decision Criteria, the Decision Process, and Champion identification (the MEDDPICC variant adds the Paper Process and Competition). The tradeoff: applying it to a $5K deal is like using a sledgehammer on a thumbtack.

CHAMP - The Anti-BANT

Where BANT leads with budget, CHAMP (Challenges, Authority, Money, Prioritization) leads with pain. That's the right move for mid-market consultative sales where the prospect hasn't sized the problem yet, let alone set a budget.

NEAT - For the Modern Buyer

Need, Economic Impact, Access to Authority, Timeline. NEAT was built for buyers who arrive with 90% of their research done. It turns qualification into a business conversation instead of an interrogation.

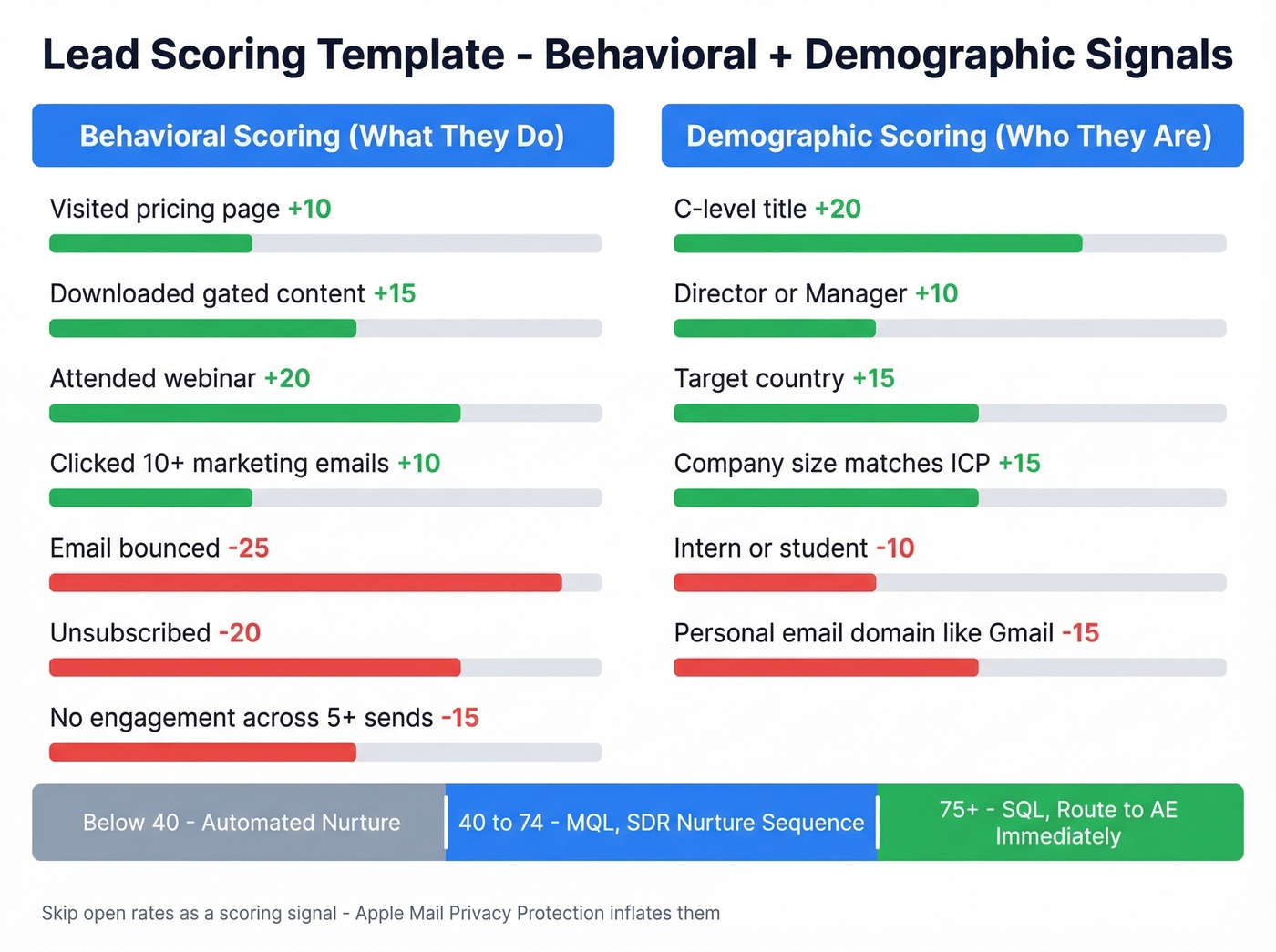

Build a Scoring Template

A framework tells reps what to ask. A scoring model tells the system who to prioritize. You need both (and a documented lead status workflow).

Behavioral scoring (what they do)

- Visited pricing page: +10

- Downloaded gated content: +15

- Attended webinar: +20

- Clicked 10+ marketing emails: +10

- Email bounced: -25

- Unsubscribed: -20

- No engagement across 5+ sends: -15

Demographic scoring (who they are)

- C-level title: +20

- Director/Manager: +10

- Intern or student: -10

- Target country: +15

- Company size matches ICP: +15

- Personal email domain (Gmail, Yahoo): -15

Thresholds

- 75+ → SQL, route to AE immediately

- 40-74 → MQL, SDR nurture sequence

- Below 40 → stay in automated nurture
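The template above translates almost directly into code. This is a sketch in Python, not a drop-in for any particular CRM: the point values and thresholds come from the lists above, while the signal key names (`visited_pricing_page` and so on) and the function names are made up for illustration.

```python
# Point values copied from the behavioral and demographic lists above.
# The key names are hypothetical; map them to your own CRM fields.
BEHAVIORAL_POINTS = {
    "visited_pricing_page": 10,
    "downloaded_gated_content": 15,
    "attended_webinar": 20,
    "clicked_10_plus_emails": 10,
    "email_bounced": -25,
    "unsubscribed": -20,
    "no_engagement_5_plus_sends": -15,
}

DEMOGRAPHIC_POINTS = {
    "c_level_title": 20,
    "director_or_manager": 10,
    "intern_or_student": -10,
    "target_country": 15,
    "company_size_matches_icp": 15,
    "personal_email_domain": -15,
}

ALL_POINTS = {**BEHAVIORAL_POINTS, **DEMOGRAPHIC_POINTS}


def score_lead(signals: set) -> int:
    """Sum the points for every signal present on the lead."""
    unknown = signals - set(ALL_POINTS)
    if unknown:
        raise ValueError(f"Unknown signals: {sorted(unknown)}")
    return sum(ALL_POINTS[signal] for signal in signals)


def route_lead(score: int) -> str:
    """Apply the three thresholds from the template."""
    if score >= 75:
        return "SQL: route to AE"
    if score >= 40:
        return "MQL: SDR nurture sequence"
    return "automated nurture"


lead = {"c_level_title", "company_size_matches_icp", "target_country",
        "visited_pricing_page", "attended_webinar"}
print(score_lead(lead), route_lead(score_lead(lead)))  # 80 SQL: route to AE
```

Keeping the weights in plain dictionaries makes the quarterly review a small diff instead of a rebuild.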

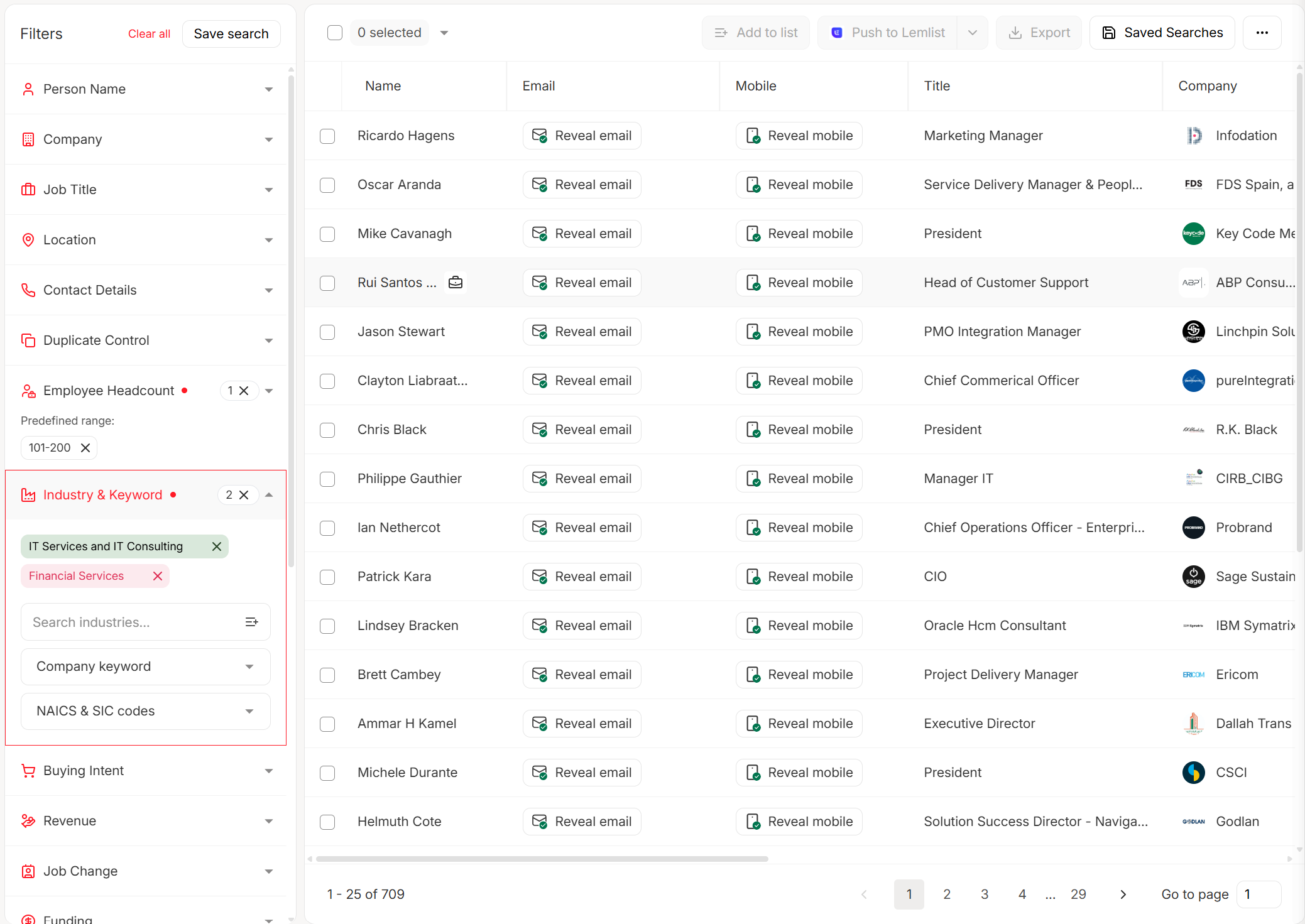

Skip open rates as a scoring signal. Apple Mail Privacy Protection inflates them across the board. Weight on-site behavior - pricing page visits, demo requests, feature comparisons - instead. Enrichment platforms that return firmographic and technographic data per contact make your demographic scoring dramatically more accurate, because you're scoring on verified company size and tech stack rather than whatever the prospect typed into a form field three months ago.

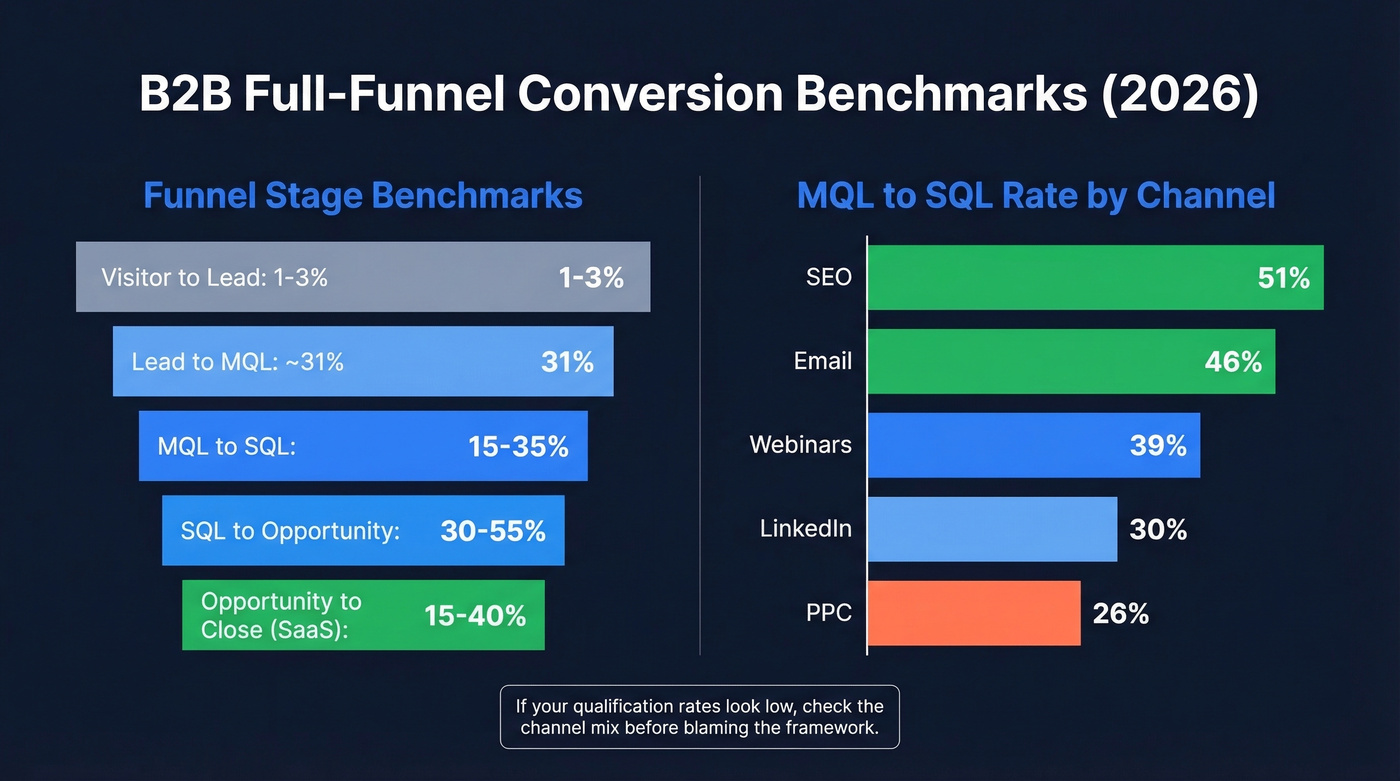

Benchmarks Worth Knowing

Here are full-funnel conversion benchmarks for B2B (more in our funnel metrics guide):

| Funnel stage | Benchmark range |
|---|---|
| Visitor → Lead | 1-3% |
| Lead → MQL | ~31% |
| MQL → SQL | 15-35% |
| SQL → Opportunity | 30-55% |
| Opp → Close (SaaS) | 15-40% |

MQL→SQL varies wildly by industry - B2B SaaS sits at 13%, cybersecurity at 15%, business insurance at 26%.
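To see what those stage rates mean in volume, it helps to multiply them through. The Python sketch below does that; the specific rates in `EXAMPLE_RATES` are illustrative values we picked from inside the benchmark ranges above, not targets, and the function name is hypothetical.

```python
def funnel_counts(visitors, stage_rates):
    """Multiply a starting visitor count through each stage's conversion rate."""
    counts = []
    remaining = visitors
    for stage, rate in stage_rates:
        remaining *= rate
        counts.append((stage, remaining))
    return counts


# Assumed rates, each chosen from inside the benchmark ranges above.
EXAMPLE_RATES = [
    ("Lead", 0.02),         # Visitor -> Lead, range 1-3%
    ("MQL", 0.31),          # Lead -> MQL, ~31%
    ("SQL", 0.20),          # MQL -> SQL, range 15-35%
    ("Opportunity", 0.40),  # SQL -> Opportunity, range 30-55%
    ("Closed-won", 0.25),   # Opp -> Close, range 15-40%
]

for stage, count in funnel_counts(10_000, EXAMPLE_RATES):
    print(f"{stage}: {count:.1f}")
```

With these assumed rates, 10,000 visitors shrink to about 62 MQLs, 12 SQLs, and roughly one closed deal, which is why a few points of MQL→SQL improvement matter so much downstream.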

| Channel | MQL→SQL rate |
|---|---|
| SEO | 51% |
| | 46% |
| Webinars | 39% |
| | 30% |
| PPC | 26% |

If your qualification rates look low, check the channel mix before blaming the framework.
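A quick way to check the channel mix is to compute the blended rate you'd expect from it. This Python sketch uses only the three channels named in the table above; the MQL volumes in the example and the function name are hypothetical.

```python
# MQL -> SQL rates for the channels named in the table above.
CHANNEL_MQL_TO_SQL = {"SEO": 0.51, "Webinars": 0.39, "PPC": 0.26}


def blended_rate(mql_counts):
    """Expected overall MQL -> SQL rate, weighted by each channel's MQL volume."""
    total = sum(mql_counts.values())
    if total == 0:
        raise ValueError("No MQLs to blend")
    expected_sqls = sum(
        count * CHANNEL_MQL_TO_SQL[channel]
        for channel, count in mql_counts.items()
    )
    return expected_sqls / total


# Two hypothetical teams with the same framework but different channel mixes.
ppc_heavy = {"SEO": 100, "Webinars": 100, "PPC": 800}
seo_heavy = {"SEO": 800, "Webinars": 100, "PPC": 100}
print(f"PPC-heavy: {blended_rate(ppc_heavy):.1%}")
print(f"SEO-heavy: {blended_rate(seo_heavy):.1%}")
```

The PPC-heavy mix lands near 30% and the SEO-heavy mix near 47%, with no change to the framework or the reps. If your actual rate sits close to the blended figure for your mix, the mix is the story.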

Speed-to-Lead Wins Deals

The best scoring model doesn't help if your team takes 6 hours to follow up. Leads contacted within 5 minutes are 21x more likely to enter the sales cycle than those contacted after 30 minutes. Let's be honest - most teams know this stat and still don't act on it.

If you're trying to operationalize this, treat it like sales process optimization, not a one-off initiative.

Build a three-step SLA:

- Route instantly - CRM assigns the lead within 60 seconds of form submission

- Alert in real time - Slack, mobile push, SMS - whatever gets the rep's attention

- First touch within 5 minutes - a phone call or personalized email, not a generic autoresponder (use these sales follow-up templates)
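An SLA only works if you measure it. Here's a small Python sketch that checks a single lead against the 60-second routing and 5-minute first-touch targets above. The function name and the three timestamp parameters are assumptions about what your CRM can export.

```python
from datetime import datetime, timedelta
from typing import List, Optional

ROUTING_SLA = timedelta(seconds=60)     # CRM assigns the lead
FIRST_TOUCH_SLA = timedelta(minutes=5)  # rep calls or emails


def sla_breaches(
    submitted_at: datetime,
    assigned_at: Optional[datetime],
    first_touch_at: Optional[datetime],
) -> List[str]:
    """Return which SLA steps were missed. None means the step never happened."""
    breaches = []
    if assigned_at is None or assigned_at - submitted_at > ROUTING_SLA:
        breaches.append("routing")
    if first_touch_at is None or first_touch_at - submitted_at > FIRST_TOUCH_SLA:
        breaches.append("first_touch")
    return breaches


submitted = datetime(2026, 1, 12, 9, 0, 0)
print(sla_breaches(
    submitted,
    assigned_at=submitted + timedelta(seconds=30),
    first_touch_at=submitted + timedelta(minutes=12),
))  # ['first_touch']
```

Both clocks start at form submission, so a slow routing step eats into the rep's five minutes. Run this over a week of leads and count breaches per rep before debating scoring weights.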

The drop-off from 53% conversion within 1 hour to 17% after 24 hours is brutal. This is the single highest-leverage fix most teams ignore.

Fix Data Before Scoring

Every framework and scoring model assumes accurate data. When it isn't accurate, SDRs waste prep time on dead contacts, bounce rates climb, and sender reputation tanks. We've watched teams spend weeks tuning scoring weights when the real problem was that 30%+ of their contact records had invalid emails.

Non-negotiable guardrails:

- Bounce rate below 2% (see email bounce rate)

- Spam complaint rate below 0.01%

- GDPR compliance - penalties run up to EUR 20M or 4% of global annual revenue, whichever is higher

- Enrich every lead with firmographics and title before it enters scoring (see lead enrichment)

- Deduplicate across sources weekly
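Two of these guardrails are easy to automate. The Python sketch below checks the 2% bounce ceiling and deduplicates leads by email. Matching on a lowercased, trimmed email is our simplifying assumption (real dedupe often also matches on company and name), and both function names are hypothetical.

```python
def passes_bounce_guardrail(sent: int, bounced: int) -> bool:
    """True when the bounce rate is strictly below 2%."""
    if sent <= 0:
        raise ValueError("sent must be positive")
    # Integer comparison avoids floating-point edge cases at exactly 2%.
    return bounced * 100 < sent * 2


def dedupe_by_email(leads):
    """Keep the first record for each email, ignoring case and whitespace."""
    seen = set()
    unique = []
    for lead in leads:
        key = lead["email"].strip().lower()
        if key in seen:
            continue
        seen.add(key)
        unique.append(lead)
    return unique


leads = [
    {"email": "dana@example.com", "source": "webinar"},
    {"email": " Dana@Example.com ", "source": "ppc"},
    {"email": "lee@example.com", "source": "seo"},
]
print(len(dedupe_by_email(leads)))          # 2
print(passes_bounce_guardrail(1000, 15))    # True
```

Keeping the first record is a design choice. If a later source carries fresher data, merge fields instead of dropping the duplicate.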

Run every inbound lead through verification before it touches your scoring model. Prospeo's 98% email accuracy and 7-day data refresh cycle mean you're scoring contacts that actually exist - not phantom records from a stale database. Its enrichment returns 50+ data points per contact at a 92% match rate, so your demographic scoring has real firmographic and technographic signals behind it. The free tier covers 75 verified emails per month, and paid plans run about $0.01 per verified email.

AI and Automation in 2026

Sellers spend roughly 25% of their time actually selling. Bain reports that AI-driven process redesign can improve win rates by 30%+ when applied across the funnel.

AI helps qualification when it pre-qualifies inbound leads 24/7 via chatbots, recalibrates scoring models automatically, and fills in missing firmographics without manual research. One underused tactic we've seen work: teams mining 100K+ B2B reviews from sites like Clutch and GoodFirms to extract ICP signals - company pain points, tech stack mentions, budget ranges - and feeding those into qualification models. Nobody talks about this, but it works.

AI hurts when you over-automate discovery calls, trust scores built on dirty data, or spend $3,200/mo on HubSpot Marketing Hub Enterprise when your lead volume doesn't justify it. Salesforce Einstein runs $50/user/month. Warmly's AI agents cost $10K-$20K/year. HubSpot Breeze Intelligence starts at $45/mo. These tools add value at scale, but they don't fix a broken process. Get the framework and data right first.

Questions That Map the Buying Group

B2B deals involve 6-10 decision-makers on average. Your questions need to map the buying group, not just qualify the person on the call.

Early-stage - confirm fit:

- What problem are you solving, and what's the cost of not solving it?

- What are you using today, and where does it fall short?

- Who else is evaluating solutions?

Mid-stage - confirm intent:

- What does your decision process look like?

- What would success look like 6 months post-implementation?

- Is there a deadline driving this evaluation?

- What's the budget range?

- Who signs off?

The best qualification conversations don't feel like interrogations. Lead with curiosity, not a checklist. If the prospect feels like they're being processed through a form, you've already lost the deal's momentum - even if they technically qualify.

FAQ

What's the difference between an MQL and an SQL?

An MQL has shown interest through engagement and matches your ICP. An SQL has been vetted by sales with confirmed budget, authority, need, and timeline. The gap between them is human validation - a rep confirming the lead is real, not just engaged.

Which framework fits SMB vs. enterprise?

BANT for SMB and high-velocity inbound. MEDDIC for enterprise with long cycles and multiple decision-makers. Choosing the right method for your sales motion matters more than picking the "best" framework on paper. Skip MEDDIC if your average deal closes in under 30 days - it'll just slow your reps down.

What score should trigger an SDR handoff?

In the template above, 75+ is the SQL threshold and routes straight to an AE; scores of 40-74 go to an SDR nurture sequence. Recalibrate quarterly based on actual conversion data - if 75-point leads aren't converting at 30%+, your scoring weights need adjustment.
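The quarterly check can be a few lines of Python. This is a sketch under assumptions: `outcomes` is a hypothetical export of `(score, converted)` pairs for the quarter, and what counts as "converted" is whatever your team has agreed on.

```python
def conversion_rate_above(outcomes, threshold=75):
    """Share of leads scoring at or above the threshold that converted."""
    hits = [converted for score, converted in outcomes if score >= threshold]
    if not hits:
        raise ValueError("No leads at or above the threshold in this period")
    return sum(hits) / len(hits)


def needs_recalibration(outcomes, threshold=75, target_rate=0.30):
    """True when high-scoring leads convert below the target rate."""
    return conversion_rate_above(outcomes, threshold) < target_rate


# Hypothetical quarter: ten leads scored 75+, two of them converted.
quarter = [(80, True)] * 2 + [(90, False)] * 8 + [(50, True)] * 5
print(needs_recalibration(quarter))  # True
```

Leads below the threshold are ignored here on purpose. If many of them are converting anyway, that is a separate sign the weights are off.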

How do I prevent bad data from ruining qualification?

Verify every email before it enters scoring. A bounced-email penalty of -25 points can poison an otherwise qualified lead. Pair verification with weekly deduplication across all lead sources, and enrich records with firmographic data so your demographic scoring reflects reality rather than self-reported form fills.