Lead Scoring Models: Build One That Works in 2026

Your SDR team has 2,000 leads in the queue and no way to tell which 50 are worth calling today. So they guess - and only 27% of the leads they call are actually qualified. Meanwhile, companies that score leads properly see 138% ROI versus 78% without scoring. Yet only 44% of organizations bother to use lead scoring models at all. The gap isn't awareness - it's implementation. Every guide tells you to "assign points based on attributes" and then stops. Which attributes? How many points? What threshold triggers a handoff? Below: the spreadsheet formulas, the point values, and the routing rules - everything you need to build a working scoring model this week.

What You Need (Quick Version)

If you have fewer than 500 closed-won deals: use the rules-based spreadsheet framework later in this article. Don't buy a tool yet.

If you have 1,000+ closed-won records and budget: Salesforce Einstein or HubSpot Enterprise predictive scoring will outperform manual rules - but only if your data is clean.

If your CRM data is stale or incomplete: fix that first. Your model scores ghosts otherwise.

Why Most Scoring Models Fail

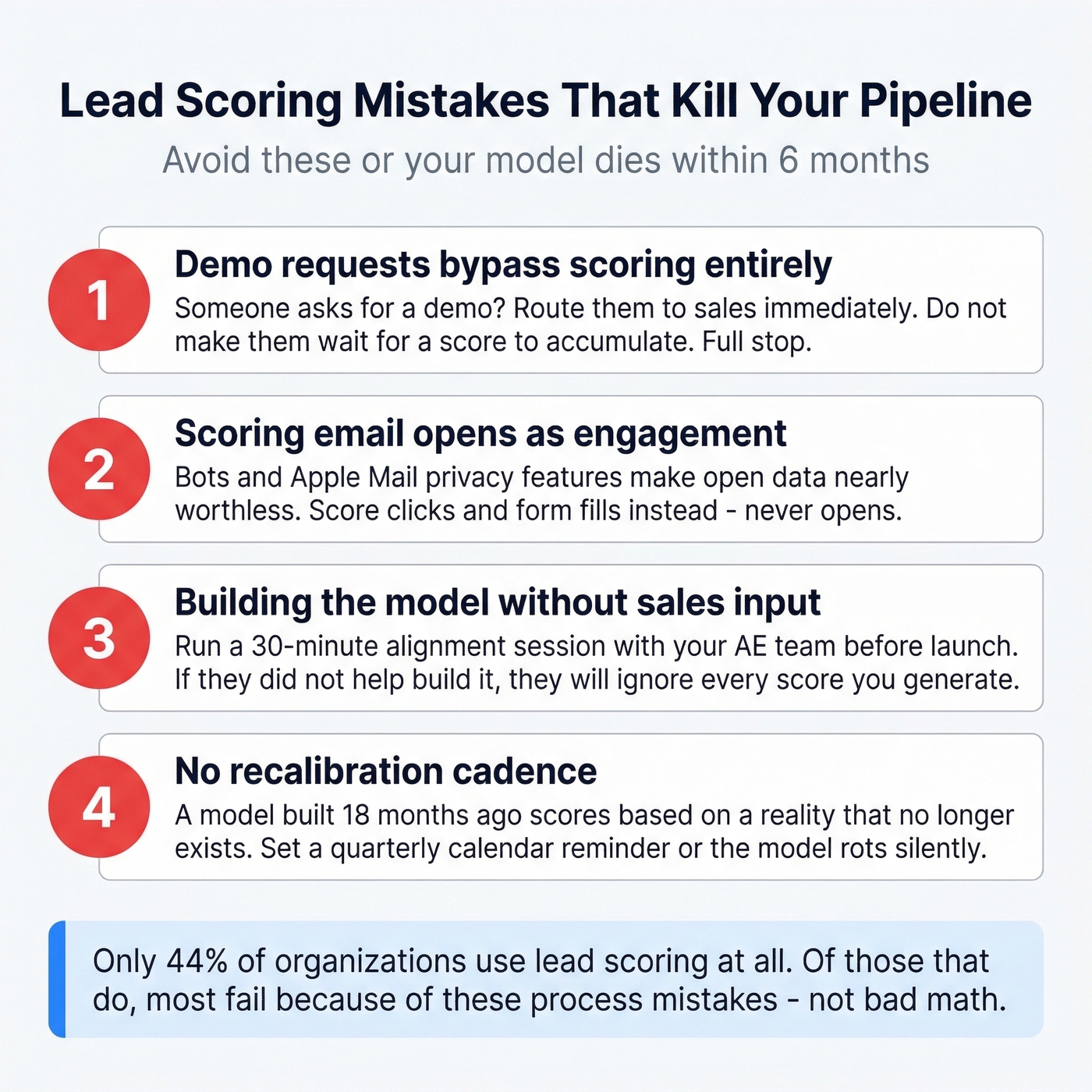

The number-one reason scoring models die isn't bad math. It's bad process. The failure modes cluster into four buckets:

- Fit vs. engagement guesswork. Teams can't decide whether a VP at a 500-person company who never opens emails should outscore a marketing coordinator who downloads every whitepaper. Without a lead scoring matrix that separates fit from activity, they wing it.

- Sales never bought in. If your AE team wasn't in the room when you assigned point values, they'll ignore the scores. 41% of B2B marketers report difficulty aligning marketing-generated leads with sales expectations.

- Tribal knowledge instead of documentation. One RevOps person knows why "webinar attendee" is worth 15 points. They leave. Nobody knows anymore. The model rots.

- No recalibration cadence. A model built 18 months ago scores based on a reality that no longer exists.

A Reddit thread on r/hubspot echoes this: practitioners describe scoring as "starting from zero" every time, with the biggest friction being the balance between fit and engagement signals.

Six Model Types (With Real Point Values)

Demographic & Firmographic Scoring

This is the foundation of any customer scoring model - scoring who the lead is, not what they've done. Build a lookup table: SaaS industry → 20 points. Company size 51-200 employees → 15 points. VP or C-suite title → 20 points. Wrong geography → subtract 10. Cap your firmographic score at 35 so it doesn't overwhelm behavioral signals. (If you need help defining what “fit” actually means, start with an Ideal Customer Profile rubric.)
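As a rough sketch, the lookup table and cap described above could be expressed like this in Python. The point values come from the paragraph; the dictionary layout, the function name, the zero floor, and applying the geography penalty before the cap are all assumptions made for illustration.

```python
# Point values are the ones quoted in the text; everything else is illustrative.
INDUSTRY_POINTS = {"SaaS": 20}
SIZE_POINTS = {"51-200": 15}
TITLE_POINTS = {"VP/C-suite": 20}
WRONG_GEO_PENALTY = -10
FIRMOGRAPHIC_CAP = 35


def firmographic_score(industry, size_band, title_level, in_target_geo=True):
    """Sum lookup points, apply the geography penalty, then clamp to 0-35."""
    raw = (
        INDUSTRY_POINTS.get(industry, 0)
        + SIZE_POINTS.get(size_band, 0)
        + TITLE_POINTS.get(title_level, 0)
    )
    if not in_target_geo:
        raw += WRONG_GEO_PENALTY
    # Flooring at 0 is an assumption; the text only specifies the upper cap.
    return max(0, min(FIRMOGRAPHIC_CAP, raw))


# A VP at a 51-200 person SaaS company earns 55 raw points, capped to 35.
print(firmographic_score("SaaS", "51-200", "VP/C-suite"))  # 35
```

Because the cap is applied last, a perfect-fit lead in the wrong geography still hits 35 here. If that feels too generous, apply the penalty after the cap instead.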

Behavioral & Engagement Scoring

Score actions, not vanity metrics. Page visits, content downloads, pricing page views, demo requests - these all get points. Don't score email opens. Bots and Apple Mail privacy features make open data nearly worthless.

Apply exponential decay so recent activity counts more: =MAX(0, 40 * EXP(-0.05 * (TODAY() - LastActivityDate))). Cap behavioral scores at 40 points.
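To get a feel for how fast that decay runs, here is a small Python sketch of the same formula. The constants are taken from the spreadsheet formula; the function and variable names are made up for the example.

```python
import math

DECAY_RATE = 0.05      # per day, from the spreadsheet formula
MAX_BEHAVIORAL = 40    # score on the day of the activity


def decayed_score(days_since_activity):
    """Python equivalent of =MAX(0, 40 * EXP(-0.05 * days))."""
    return max(0.0, MAX_BEHAVIORAL * math.exp(-DECAY_RATE * days_since_activity))


# With a rate of 0.05 per day the score halves roughly every two weeks.
half_life_days = math.log(2) / DECAY_RATE

for days in (0, 7, 14, 30, 60):
    print(days, round(decayed_score(days), 1))
```

The half-life works out to about 13.9 days, so a lead that was fully engaged a month ago keeps less than a quarter of its behavioral points. If your sales cycle is long, a smaller decay rate may be a better fit.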

Intent-Based Scoring

Intent data tells you when a company is actively researching your category. Standalone intent platforms like 6sense typically run $60k-$300k/year. (If you’re building this into your segmentation, use an intent-based segmentation approach so scoring and targeting match.)

Lead Source Scoring

Not all channels are equal. SEO-driven leads close at 14.6% versus 1.7% for outbound. Assign source-based points: organic inbound → 20, referral → 18, paid search → 12, cold outbound → 5. If you're only going to score one dimension, start here. Here's a quick lead scoring example: a referral lead from a SaaS company with 200 employees would start at 18 (source) + 20 (industry) + 15 (size) = 53 before any behavioral data.
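The worked example above can be reproduced with a minimal Python sketch. The source point values are the ones listed in the text; the `starting_score` helper and the zero default for unlisted sources are assumptions.

```python
# Source points as listed in the text; unknown sources default to 0 (assumption).
SOURCE_POINTS = {
    "organic inbound": 20,
    "referral": 18,
    "paid search": 12,
    "cold outbound": 5,
}


def starting_score(source, fit_points):
    """Source points plus whatever firmographic points the lead already earned."""
    return SOURCE_POINTS.get(source.lower(), 0) + fit_points


# Referral (18) + SaaS industry (20) + company size (15).
print(starting_score("referral", 20 + 15))  # 53
```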

Negative Scoring

Subtract points for disqualifying signals. Competitor email domains (-30), student .edu addresses (-20), no activity in 365+ days (-25). Some teams use extreme negatives (-1,000) for competitor domains to guarantee suppression regardless of other signals. Negative scoring is the most overlooked model type and often the highest-ROI to implement.
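One possible way to apply those penalties, sketched in Python. The point values are from the text; the competitor domain list is a placeholder you would replace with your own, and stacking penalties additively is an assumption.

```python
# Placeholder list - substitute your real competitor domains.
COMPETITOR_DOMAINS = {"competitor.example"}


def negative_score(email, days_inactive):
    """Return the total penalty (zero or negative) for one lead."""
    domain = email.rsplit("@", 1)[-1].lower()
    penalty = 0
    if domain in COMPETITOR_DOMAINS:
        penalty -= 30   # competitor email domain
    if domain.endswith(".edu"):
        penalty -= 20   # student address
    if days_inactive >= 365:
        penalty -= 25   # no activity in 365+ days
    return penalty


print(negative_score("student@university.edu", 400))  # -45
```

To guarantee suppression regardless of other signals, swap the -30 for an extreme value such as -1,000 as described above.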

Outbound Buying-Readiness Scoring

For outbound teams, traditional scoring doesn't apply - your leads haven't engaged with your content. Score buying readiness instead using five signals:

- Recent funding or revenue milestone - 30 points

- Public pain signals (founder posting about hiring sales, scaling outbound) - 25 points

- Decision-maker accessibility - 20 points

- Existing stack investment (already paying for tools like Apollo, Clay, or Instantly) - 15 points

- Timing triggers (new VP Sales hire, planning cycle, product launch) - 10 points

Accounts scoring 70+ close at 3x the rate of sub-50 scorers. Manual research doesn't scale past roughly 50 accounts per week - that's where intent data and enrichment tools cut research time from hours to minutes per account. (To operationalize those triggers, build a simple system for tracking sales triggers.)
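The five signals sum to 100, so a readiness score can be sketched as a simple weighted checklist. The weights below are from the list above; the signal key names and the all-or-nothing treatment of each signal are assumptions for illustration.

```python
# Weights from the five-signal list; key names are illustrative.
READINESS_WEIGHTS = {
    "recent_funding": 30,
    "public_pain_signal": 25,
    "decision_maker_accessible": 20,
    "existing_stack_investment": 15,
    "timing_trigger": 10,
}


def readiness_score(signals):
    """Add the weight of every signal that is present for the account."""
    return sum(
        weight
        for name, weight in READINESS_WEIGHTS.items()
        if signals.get(name, False)
    )


account = {
    "recent_funding": True,
    "public_pain_signal": True,
    "existing_stack_investment": True,
}
print(readiness_score(account))  # 70
```

An account like this one lands exactly on the 70-point line mentioned above without any engagement data at all.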

Build Your Model: A Copy-Paste Framework

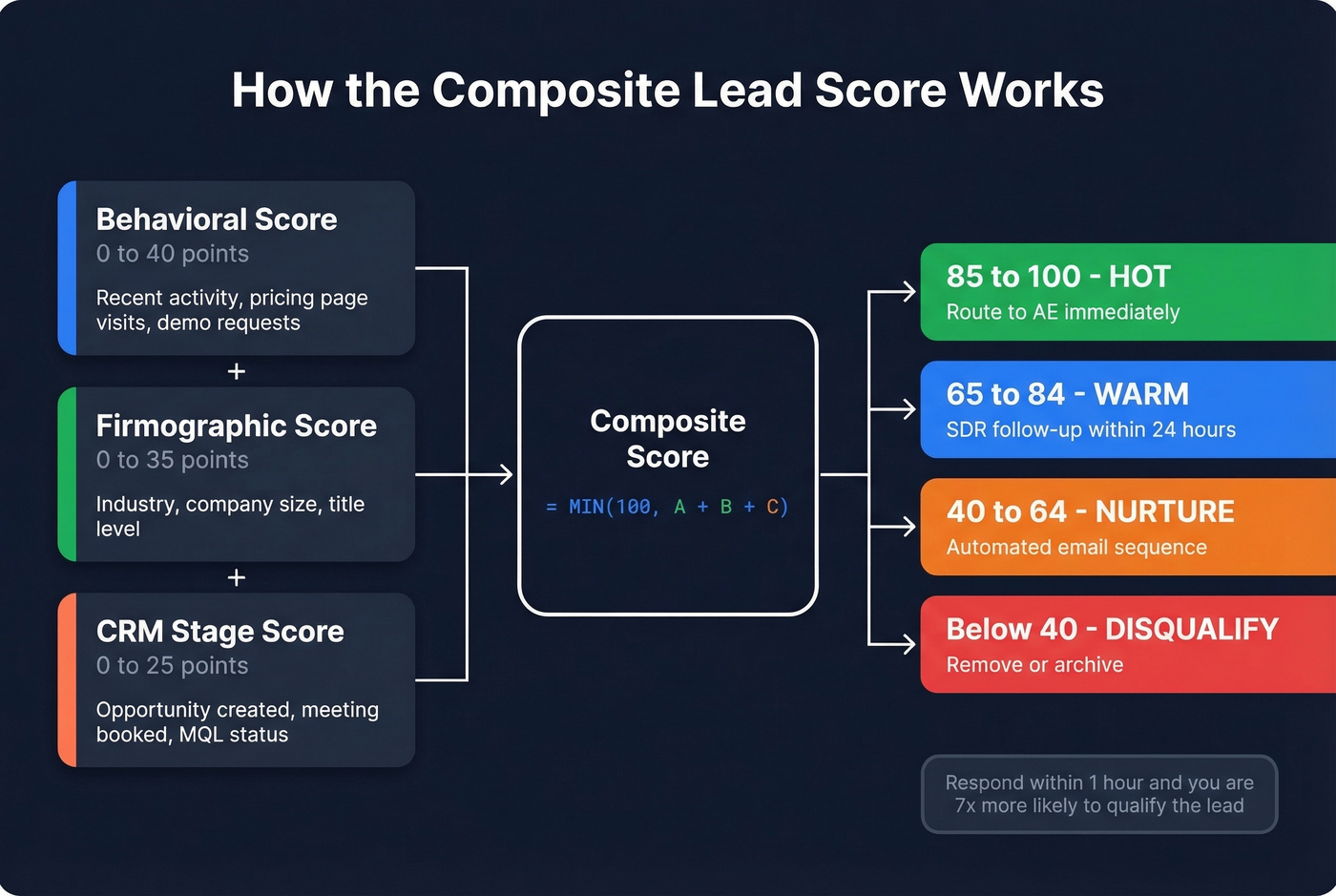

Your composite score combines three sub-scores into a single 0-100 number. Use the lead scoring table template below as your starting point - copy it into a spreadsheet and customize the values for your business.

Behavioral Score (0-40):

=MAX(0, 40 * EXP(-0.05 * (TODAY() - LastActivityDate)))

Recent activity dominates, old activity fades. Add bonus points for high-value actions (pricing page visit +10, demo request +15) with a cap at 40.
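Here is one hedged reading of the decay-plus-bonus logic in Python. The text does not say whether bonuses are added before or after decay; this sketch assumes they are added to the decayed base without decaying themselves, and the function and parameter names are invented for the example.

```python
import math
from datetime import date


def behavioral_score(last_activity, today, visited_pricing=False, requested_demo=False):
    """Decayed base score plus bonuses for high-value actions, clamped to 0-40."""
    days = (today - last_activity).days
    base = 40 * math.exp(-0.05 * days)
    bonus = (10 if visited_pricing else 0) + (15 if requested_demo else 0)
    return max(0.0, min(40.0, base + bonus))


# Last touch 30 days ago, but the lead viewed the pricing page.
score = behavioral_score(date(2026, 1, 1), date(2026, 1, 31), visited_pricing=True)
print(round(score, 1))
```

Passing `today` explicitly rather than calling `date.today()` inside the function keeps the score reproducible when you re-run last quarter's numbers.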

Firmographic Score (0-35):

| Attribute | Value | Points |
|---|---|---|
| Industry | SaaS | 20 |
| Industry | FinTech | 15 |
| Company size | 51-200 | 15 |
| Company size | 201-1,000 | 10 |
| Title level | VP/C-suite | 20 |
| Title level | Director | 15 |

If you have conversion data by attribute, there's a more precise method: divide each attribute's close rate by your baseline. A 20% close rate against a 1% baseline = 20 points. This grounds your point values in actual performance rather than gut feel.
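The ratio method is a one-line calculation; a minimal Python sketch follows. Rounding to whole points and the function name are assumptions, and the result still needs to respect whatever cap you set for the sub-score.

```python
def points_from_close_rate(attribute_close_rate, baseline_close_rate):
    """Points = attribute close rate divided by baseline close rate, rounded."""
    if baseline_close_rate <= 0:
        raise ValueError("baseline close rate must be positive")
    return round(attribute_close_rate / baseline_close_rate)


# 20% close rate for the attribute against a 1% baseline.
print(points_from_close_rate(0.20, 0.01))  # 20
```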

CRM Stage Score (0-25): Opportunity created → 25. Meeting booked → 20. MQL → 10. Raw lead → 5. (If your stages are messy, fix your lead status definitions first.)

Composite Score:

=MIN(100, BehavioralScore + FirmographicScore + CRMScore)

Route based on bands. Speed matters - responding within the first hour makes you 7x more likely to qualify the lead.

| Score Range | Label | Action |
|---|---|---|
| ≥85 | Hot | Route to AE immediately |
| 65-84 | Warm | SDR follow-up within 24h |
| 40-64 | Nurture | Automated sequence |
| <40 | Disqualify | Remove or archive |
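Put together, the composite formula and the routing bands could look like this in Python. The thresholds and actions are from the table; the function names are illustrative, and using `>=` comparisons (so fractional scores such as 84.6 never fall between bands) is a design choice of this sketch.

```python
def composite_score(behavioral, firmographic, crm_stage):
    """Python equivalent of =MIN(100, BehavioralScore + FirmographicScore + CRMScore)."""
    return min(100, behavioral + firmographic + crm_stage)


def route(score):
    """Map a composite score to its (label, action) band."""
    if score >= 85:
        return "Hot", "Route to AE immediately"
    if score >= 65:
        return "Warm", "SDR follow-up within 24h"
    if score >= 40:
        return "Nurture", "Automated sequence"
    return "Disqualify", "Remove or archive"


score = composite_score(behavioral=30, firmographic=35, crm_stage=20)
print(score, route(score))  # 85 ('Hot', 'Route to AE immediately')
```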

In my experience, a spreadsheet with these formulas, updated weekly, beats a $3,600/month predictive tool trained on dirty data.

Data Quality: The Prerequisite Nobody Talks About

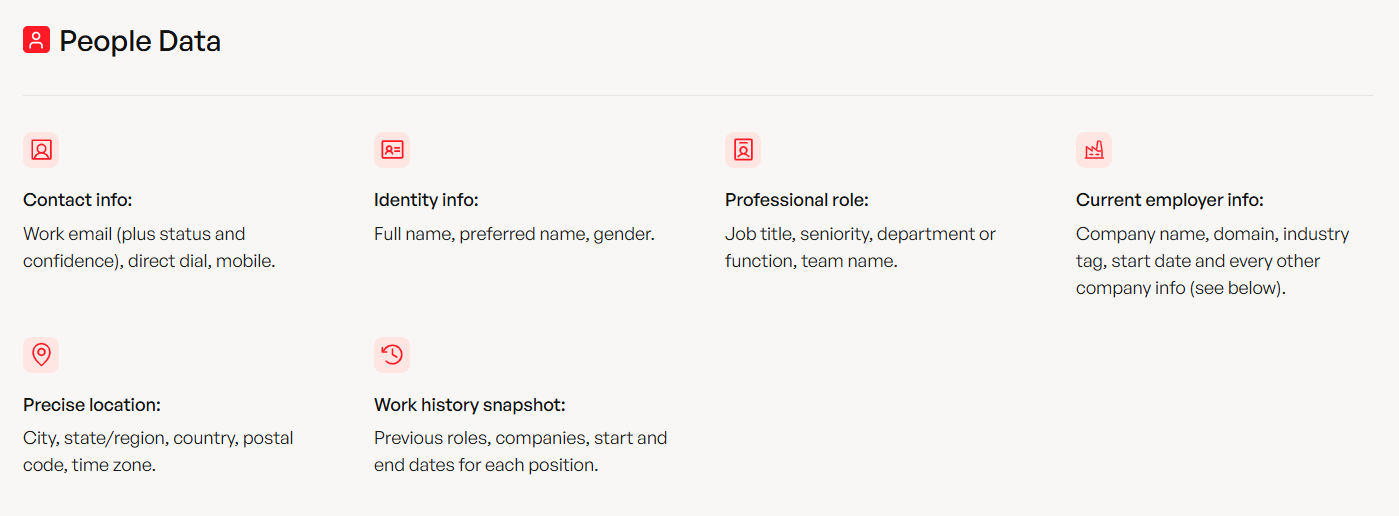

Your model scores ghosts if a big chunk of emails bounce and job titles are 18 months stale. Clean data before scoring. Prospeo enriches CRM records with 50+ data points per contact, verifies emails at 98% accuracy, and refreshes everything on a 7-day cycle versus the 6-week industry average. It plugs into Salesforce and HubSpot, so enrichment runs automatically rather than as a quarterly cleanup project. (If you’re comparing vendors, see the best data enrichment services.)

We've watched teams spend months building sophisticated scoring models on top of CRM data that hadn't been updated since the leads were imported. The model worked perfectly - it just scored people who'd changed jobs two years ago.

Rules-Based vs. Predictive Scoring

Most teams should start rules-based. Here's why.

| Criteria | Rules-Based | Predictive |
|---|---|---|
| Data needed | Any amount | 500-1,000+ closed-won |
| History needed | None | 12-24 months |
| Setup time | 1-2 days | 3-6 months |
| Cost | Free (spreadsheet) | $3,600+/mo (HubSpot) or ~$215/user/mo (Salesforce) |
| Transparency | Full | Black box |

Salesforce Einstein needs around 1,000 converted leads to perform well. HubSpot overhauled its scoring tool in late 2025, adding multi-model support and score explainability - a significant upgrade from the previous black-box approach, but it still needs Enterprise tier at $3,600/month.

Here's my hot take: predictive scoring is overhyped for most teams. Rules-based scoring with quarterly recalibration outperforms a poorly trained AI model every time. If you're closing deals under $15k and generating fewer than 1,000 leads per month, you probably don't need machine learning touching your pipeline. A spreadsheet with the formulas above will serve you better.

Align Your Qualification Framework

Your scoring model should mirror how your team actually qualifies deals. A lead qualification scorecard ensures every rep evaluates the same criteria in the same order. (If you’re standardizing MEDDIC, keep a set of MEDDIC discovery questions next to your scoring fields.)

| Framework | Best For | Scoring Emphasis | Risk |
|---|---|---|---|
| BANT | High-velocity SMB | Budget + authority | Misses complex deals |
| MEDDIC | Enterprise / committees | Champion + decision process | Slows smaller deals |
| CHAMP | Mid-market consultative | Pain-first discovery | Too loose without discipline |

Consistency matters more than framework choice. One team improved forecast accuracy from 62% to 89% after standardizing on MEDDIC and aligning scoring weights to match. The framework itself wasn't magic - the consistency was. If you're running BANT, weight budget and authority signals heavily in your firmographic score. Running MEDDIC? Add points for identified champions and documented decision processes in your CRM stage score.

Scoring Mistakes That Kill Your Pipeline

- Demo requests bypass scoring entirely. Someone who asks for a demo goes to sales immediately. Full stop.

- Don't score email opens. Bots and Apple Mail privacy make open data unreliable. Score clicks and form fills instead.

- Sales must co-build the model. Run a 30-minute alignment session before launch or your AEs will ignore every score you generate.

- Use title-level buckets, not string matching. "VP Sales," "Vice President of Sales," and "Head of Revenue" are the same person.

- Remove leads inactive for 365+ days. They're database weight dragging your averages down.

- Start with 8-12 signals. Scoring 47 behaviors doesn't make your model smarter - it makes it unmaintainable.
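For the title-bucket rule in the list above, a minimal Python sketch might normalize raw titles with a few patterns before any points are assigned. The patterns and the "Other" fallback are assumptions and will need tuning against the titles in your own CRM.

```python
import re

# Ordered from most to least senior; the first matching bucket wins.
TITLE_BUCKETS = [
    (
        "VP/C-suite",
        re.compile(
            r"\b(?:[se]?vp|vice president|head of|chief|ceo|cfo|coo|cmo|cro|cto)\b",
            re.IGNORECASE,
        ),
    ),
    ("Director", re.compile(r"\bdirector\b", re.IGNORECASE)),
]


def title_bucket(raw_title):
    """Collapse free-text job titles into a small set of scoring buckets."""
    for bucket, pattern in TITLE_BUCKETS:
        if pattern.search(raw_title):
            return bucket
    return "Other"


for title in ("VP Sales", "Vice President of Sales", "Head of Revenue"):
    print(title, "->", title_bucket(title))
```

All three example titles resolve to the same bucket, so they earn the same points no matter how the lead typed their title into a form.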

Tools and What They Cost

| Tool | Scoring Type | Price | Best For |
|---|---|---|---|
| HubSpot Pro | Rules-based | ~$890/mo (3 seats) | SMB manual scoring |
| HubSpot Enterprise | Predictive | ~$3,600/mo + $3,500 setup (10-seat min) | Mid-market with clean data |
| Salesforce Einstein | Predictive | ~$165/user/mo + $50/user/mo add-on | Enterprise CRM-native |
| 6sense | Intent + predictive | $60k-$300k/yr | Enterprise ABM |

The tool doesn't matter if your data is wrong. A $3,600/month predictive model trained on stale CRM records produces confidently wrong scores. The smarter play: start with a spreadsheet and a free data enrichment tier. Build your model manually, validate it against closed-won data for one quarter, then decide if you need a paid scoring tool. Most teams under 1,000 leads per month don't. (If you’re building a full stack, compare SDR tools before you commit.)

Account Scoring for ABM Teams

If you're running account-based marketing, you need an account scoring template alongside your lead-level model. Account scoring aggregates signals across all contacts at a company - total engagement, number of decision-makers identified, firmographic fit, and intent surges - into a single account-level number. The same 0-100 composite framework works; just roll up individual lead scores and weight accounts with multiple engaged contacts higher. Three engaged directors at one company are a stronger signal than a single engaged VP at each of three separate companies.
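The text does not prescribe a specific roll-up formula, so the following Python sketch is only one possible approach: take the best lead score at the account and add a bonus for each additional engaged contact. The 40-point "engaged" cutoff and the 10-point bonus are hypothetical values chosen for illustration.

```python
ENGAGED_THRESHOLD = 40    # hypothetical: a lead counts as engaged at Nurture or above
MULTI_CONTACT_BONUS = 10  # hypothetical: bonus per additional engaged contact


def account_score(lead_scores):
    """Best lead score plus a bonus for breadth of engagement, capped at 100."""
    if not lead_scores:
        return 0
    engaged = sum(1 for score in lead_scores if score >= ENGAGED_THRESHOLD)
    bonus = MULTI_CONTACT_BONUS * max(0, engaged - 1)
    return min(100, max(lead_scores) + bonus)


print(account_score([60, 60, 60]))  # 80 - three engaged directors
print(account_score([70]))          # 70 - one engaged VP
```

With these values, three directors at 60 points each outrank a lone VP at 70, which matches the multi-contact principle described above.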

FAQ

What's a good MQL threshold score?

Start with Hot at 85+, Warm at 65-84, and Nurture at 40-64. Recalibrate quarterly by comparing scored-Hot leads against actual close rates - if fewer than 30% of Hot leads convert, the Hot band is admitting too many weak leads, so raise the threshold by 5-10 points.

How often should I recalibrate my scoring model?

Quarterly at minimum. Pull scored-Hot leads from the prior quarter and check close rates against your baseline. Monthly recalibration is better during the first six months after launch.
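A quarterly check along these lines could be scripted from a CRM export. In the Python sketch below, the `score` and `closed_won` field names are assumptions about that export, and the 30% floor is borrowed from the threshold question above.

```python
def hot_conversion_rate(leads, hot_threshold=85):
    """Share of Hot-scored leads that ended up closed-won, or None if there were none."""
    hot = [lead for lead in leads if lead["score"] >= hot_threshold]
    if not hot:
        return None
    return sum(1 for lead in hot if lead["closed_won"]) / len(hot)


def needs_recalibration(leads, min_rate=0.30, hot_threshold=85):
    """Flag the model for review when Hot leads convert below the target rate."""
    rate = hot_conversion_rate(leads, hot_threshold)
    return rate is not None and rate < min_rate


last_quarter = [
    {"score": 90, "closed_won": True},
    {"score": 88, "closed_won": False},
    {"score": 86, "closed_won": False},
    {"score": 92, "closed_won": False},
    {"score": 50, "closed_won": True},
]
print(hot_conversion_rate(last_quarter))   # 0.25
print(needs_recalibration(last_quarter))   # True
```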

Do I need a tool to build a scoring model?

No. A spreadsheet with the composite formulas above outperforms expensive predictive tools trained on dirty data. Graduate to predictive scoring at 1,000+ closed-won records and 12+ months of clean history.

What's the minimum data needed for predictive scoring?

You need 500-1,000 closed-won outcomes and 12-24 months of lead history. Below that threshold, rules-based scoring consistently wins on accuracy and ROI.

How do I fix bad CRM data before scoring?

Use an enrichment tool with automated refresh cycles - stale job titles and bounced emails make even well-built models useless. Prospeo's CRM enrichment returns 50+ data points per contact at a 92% match rate, refreshed every 7 days, with a free tier of 75 credits to start.