Marketing Qualified Lead Criteria: Scoring Model, Benchmarks, and SLA for 2026

A 10-seat sales team ignoring most of the leads marketing sends them isn't a sales problem. It's a criteria problem. Most MQL definitions were written once, taped to a wiki page, and never touched again. Meanwhile the product changed, the ICP shifted, and the scoring thresholds stayed frozen in 2021.

Here's the scoring model, the benchmarks, and the handoff SLA that actually make the "Marketing Qualified Lead" label mean something in 2026.

What You Need (Quick Version)

- Use the two-axis model (Fit + Interest). Set your MQL threshold at ~70 points and review quarterly.

- Benchmark yourself. The average MQL-to-SQL rate is 13% for B2B SaaS. If yours is far below that, your criteria are too loose.

- Add an SLA. Assignment under 5 minutes, first touch under 24 hours. Without response-time commitments, scoring is academic.

What Makes a Lead "Marketing Qualified"

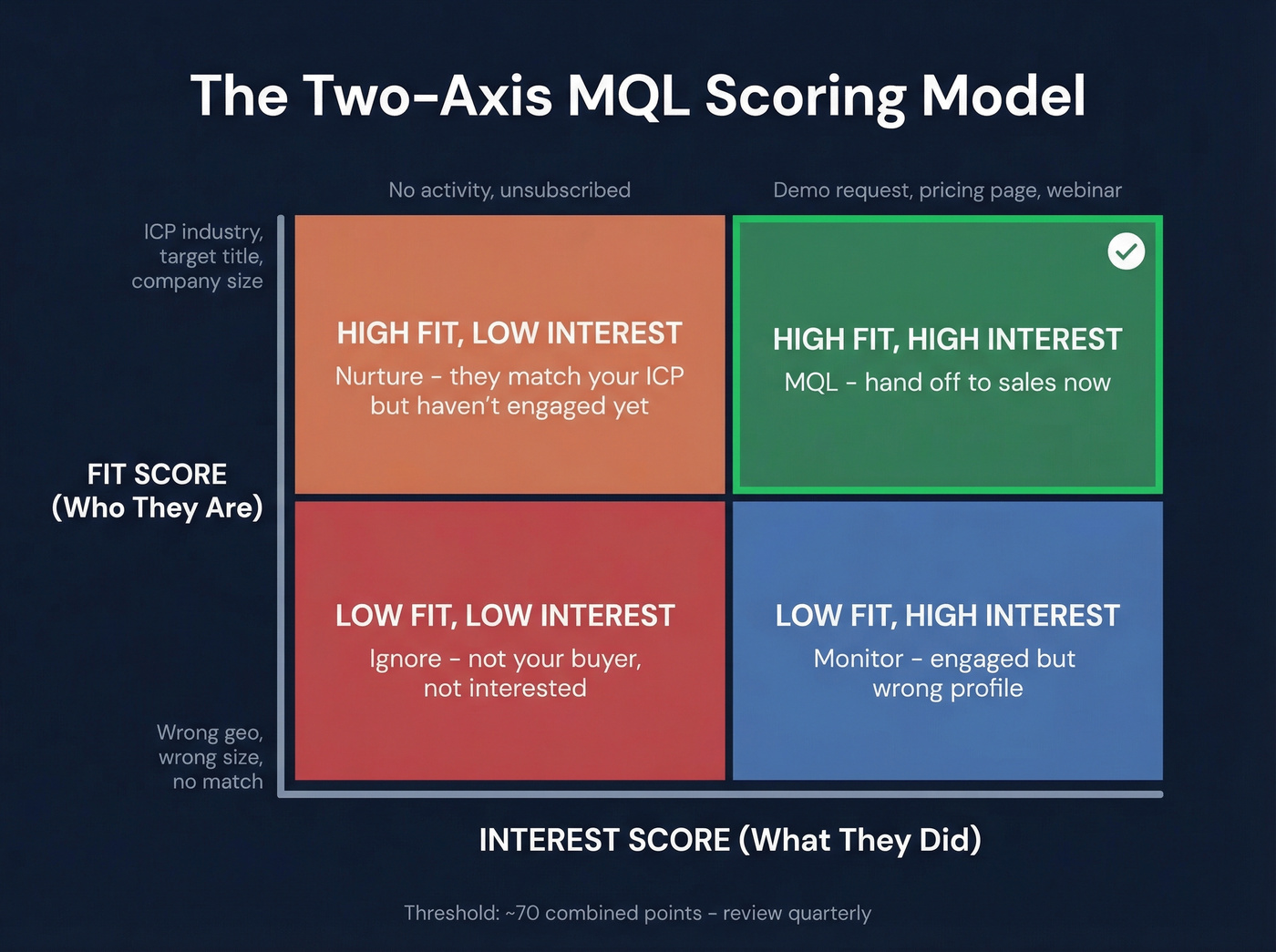

An MQL passes two tests at the same time: "Is the lead interested in us?" and "Are we interested in the lead?" Engagement scoring covers what they've done. Fit grading covers who they are.

You need both. One without the other produces garbage.

A VP of Engineering at a 500-person SaaS company who visited your pricing page twice this week? That's a real MQL. A student who downloaded your whitepaper for a class project? Not so much - even if they filled out every form field perfectly.

MQL Criteria Mistakes That Tank Pipeline

Three patterns kill pipeline quality before sales ever touches a lead.

The bar is set too low to inflate metrics. A checklist download isn't an MQL. Teams lower the bar to show fast growth, then wonder why sales ignores 80% of what marketing passes along. We've seen this play out repeatedly, and the consensus on r/MarketingGeek echoes it: treating "anyone who downloads a free asset" as qualified, even when there's zero buying intent, poisons the whole funnel.

Vanity signals get weighted equally. A pricing page visit is worth far more than a blog pageview. Email opens are nearly meaningless. Yet most scoring models treat all engagement as interchangeable.

No feedback loop exists. Criteria defined years ago and never updated will reflect a product, market, and ICP that no longer exist. Strong teams treat MQL definitions as living documents - refined quarterly with direct sales input.

MQL Scoring Model That Works

Most lead scoring advice explains the concept without giving you usable numbers. Let's fix that. This model is a practical template synthesized from common B2B scoring patterns we've tested across multiple campaigns. Calibrate the point values to your funnel - a demo request might be +100 for a high-ACV product or +25 for a self-serve tool.

Behavior Score (Actions That Signal Intent)

| Signal | Points |
|---|---|
| Demo request | +100 |
| Pricing page visit | +50 |
| Webinar registration | +30 |
| Asset download | +10 |
| Unsubscribe | -15 |
| 30-day inactivity | -10 |

Not all engagement carries equal weight. A demo request signals active buying intent, while an asset download might just mean curiosity. Score accordingly.

Demographic Score

| Signal | Points |
|---|---|
| ICP industry match | +15 |
| Target title/seniority | +15 |
| Target company size | +10 |
| Outside target geo | -15 |

Combine both scores for routing. Most B2B teams land on a threshold band of 60-100 points, refined against actual conversion data. Start at 70 and adjust quarterly.
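To make the arithmetic concrete, here is a minimal Python sketch of the two-axis model, using the point values from the two tables above and the suggested starting threshold of 70. The signal keys (`demo_request`, `icp_industry_match`, and so on) and the functions `score_lead` and `is_mql` are hypothetical names for illustration. The sketch assumes each occurrence of a signal scores separately (two pricing-page visits earn +50 twice), and it requires both axes to be positive, reflecting the rule that fit or interest alone isn't enough; that gate is an assumption, not a fixed standard.

```python
# Point values copied from the Behavior and Demographic tables; calibrate to your funnel.
BEHAVIOR_POINTS = {
    "demo_request": 100,
    "pricing_page_visit": 50,
    "webinar_registration": 30,
    "asset_download": 10,
    "unsubscribe": -15,
    "inactive_30_days": -10,
}

FIT_POINTS = {
    "icp_industry_match": 15,
    "target_title_seniority": 15,
    "target_company_size": 10,
    "outside_target_geo": -15,
}

MQL_THRESHOLD = 70  # starting point; adjust quarterly against conversion data


def score_lead(behaviors, fit_signals):
    """Return (behavior_score, fit_score). Each listed occurrence is scored separately."""
    behavior = sum(BEHAVIOR_POINTS[b] for b in behaviors)
    fit = sum(FIT_POINTS[f] for f in fit_signals)
    return behavior, fit


def is_mql(behaviors, fit_signals, threshold=MQL_THRESHOLD):
    """Qualify only if both axes are positive and the combined score meets the threshold."""
    behavior, fit = score_lead(behaviors, fit_signals)
    return behavior > 0 and fit > 0 and behavior + fit >= threshold


# The VP of Engineering example: two pricing-page visits, full ICP fit.
vp = is_mql(
    ["pricing_page_visit", "pricing_page_visit"],
    ["icp_industry_match", "target_title_seniority", "target_company_size"],
)
# The student who downloaded a whitepaper: some engagement, no fit.
student = is_mql(["asset_download"], [])
print(vp, student)  # True False
```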

Two things matter as much as the model itself: score decay and data quality. Intent fades - a webinar attendee from three months ago isn't the same lead they were on day one, so build in automatic decay that drops points over time. And a scoring model built on stale contact data produces stale MQLs. Platforms with short refresh cycles, like Prospeo's 7-day refresh versus the 6-week industry average, keep the firmographic signals feeding your scores current rather than months out of date.
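One way to build in automatic decay is exponential decay, where each behavior's points halve after a fixed half-life. This is a swapped-in technique rather than the step penalty in the table above (-10 after 30 days of inactivity), and the 30-day half-life and the `decayed_behavior_score` function are assumptions for illustration.

```python
from datetime import date


def decayed_behavior_score(events, today, half_life_days=30.0):
    """Sum behavior points, halving each event's weight every `half_life_days`.

    `events` is an iterable of (points, event_date) pairs.
    """
    total = 0.0
    for points, event_date in events:
        age_days = (today - event_date).days
        if age_days < 0:
            raise ValueError("event is dated after `today`")
        total += points * 0.5 ** (age_days / half_life_days)
    return total


# A webinar registration (+30) from 90 days ago vs. a pricing-page visit (+50) today.
score = decayed_behavior_score(
    [(30, date(2026, 1, 1)), (50, date(2026, 4, 1))],
    today=date(2026, 4, 1),
)
print(score)  # 53.75
```

In this sketch negative signals such as an unsubscribe decay too; if you want those to persist, keep them out of the decayed sum.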

Here's the thing: the model matters less than the discipline of iterating on it. One team we worked with tightened title and seniority filters while lowering the activity threshold, and their MQL-to-meeting rate jumped 13%. Obsess over the feedback loop, not the spreadsheet.

Your scoring model is worthless if the firmographic data behind it is stale. Prospeo refreshes 300M+ profiles every 7 days - not every 6 weeks like most providers. That means your ICP filters, title matches, and company-size signals stay accurate, so leads that hit your MQL threshold actually deserve to be there.

Stop scoring leads against data that expired last month.

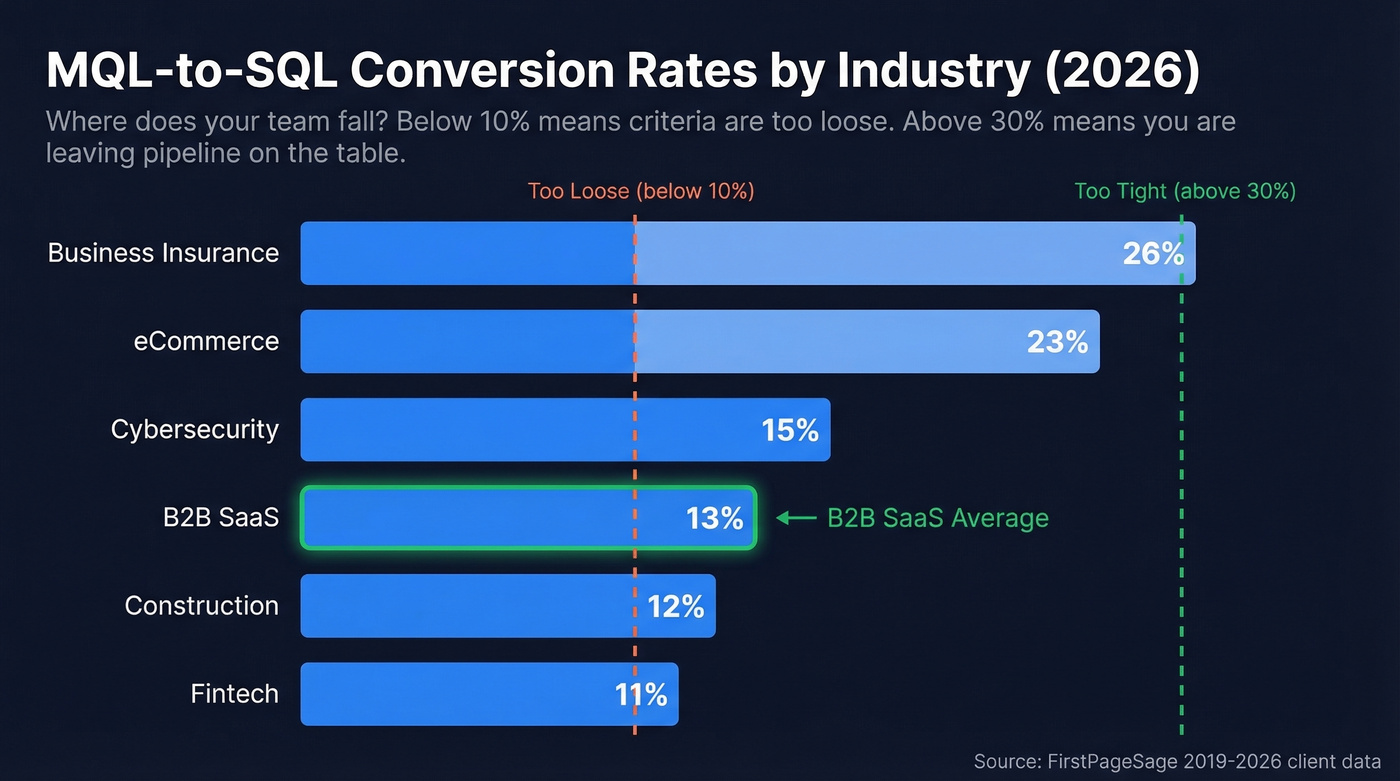

MQL-to-SQL Benchmarks by Industry

Without benchmarks, you're scoring in the dark. Here's where B2B industries actually land based on 2019-2026 client data:

| Industry | MQL-to-SQL Rate |
|---|---|
| Business Insurance | 26% |
| eCommerce | 23% |
| Cybersecurity | 15% |
| B2B SaaS | 13% |
| Construction | 12% |
| Fintech | 11% |

And here's how channels perform at the lead-to-MQL stage, with an overall average of 31%:

| Channel | Lead-to-MQL Rate |
|---|---|
| Client Referrals | 56% |
| SEO | 41% |
|  | 38% |
| PPC | 29% |
| Webinars | 19% |

If your MQL-to-SQL rate sits below ~10%, your qualification criteria are almost certainly too loose. Above ~30%, you're probably being too restrictive and leaving pipeline on the table. The sweet spot depends on your sales capacity and deal size, but these ranges give you a sanity check.
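The sanity check above reduces to a few comparisons. A minimal sketch, assuming you can pull MQL and SQL counts for the same period; the ~10% and ~30% cutoffs and the industry figures come from this section, while `diagnose_mql_criteria` is a hypothetical helper.

```python
# MQL-to-SQL rates from the industry table above.
INDUSTRY_BENCHMARKS = {
    "Business Insurance": 0.26,
    "eCommerce": 0.23,
    "Cybersecurity": 0.15,
    "B2B SaaS": 0.13,
    "Construction": 0.12,
    "Fintech": 0.11,
}


def diagnose_mql_criteria(mqls, sqls, industry=None, low=0.10, high=0.30):
    """Return (rate, verdict, industry_benchmark) for one period's MQL and SQL counts."""
    if mqls <= 0:
        raise ValueError("need at least one MQL to compute a rate")
    rate = sqls / mqls
    if rate < low:
        verdict = "too loose"
    elif rate > high:
        verdict = "too restrictive"
    else:
        verdict = "within range"
    # None when the industry isn't in the table.
    return rate, verdict, INDUSTRY_BENCHMARKS.get(industry)


rate, verdict, benchmark = diagnose_mql_criteria(400, 28, industry="B2B SaaS")
print(f"{rate:.0%} vs. {benchmark:.0%} benchmark: {verdict}")  # 7% vs. 13% benchmark: too loose
```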

Marketing-to-Sales Handoff SLA

Scoring without an SLA is like building a highway that dead-ends at a dirt road.

- Assignment - under 5 minutes

- Acceptance - under 4 hours (if sales doesn't accept within 4 hours, the lead auto-recycles to the next available rep)

- First touch - under 24 hours

- Disposition - under 5 business days

- Feedback returned - qualification status, notes, and reason
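To monitor these commitments automatically, you can compare each milestone's timestamp with its deadline. A minimal sketch, assuming every window is measured from the moment the lead becomes an MQL and that business days are Monday to Friday with no holidays; the milestone names and functions are hypothetical. The feedback step has no time limit in the list above, so it isn't checked here.

```python
from datetime import datetime, timedelta


def add_business_days(start, days):
    """Advance `start` by `days` weekdays (Mon-Fri), ignoring holidays."""
    current, added = start, 0
    while added < days:
        current += timedelta(days=1)
        if current.weekday() < 5:
            added += 1
    return current


def sla_deadlines(mql_at):
    """Deadline per milestone, all measured from the MQL timestamp."""
    return {
        "assigned": mql_at + timedelta(minutes=5),
        "accepted": mql_at + timedelta(hours=4),
        "first_touch": mql_at + timedelta(hours=24),
        "dispositioned": add_business_days(mql_at, 5),
    }


def sla_breaches(mql_at, milestones, now):
    """List milestones completed late, or still open after their deadline."""
    breaches = []
    for name, deadline in sla_deadlines(mql_at).items():
        done_at = milestones.get(name)
        late = done_at > deadline if done_at is not None else now > deadline
        if late:
            breaches.append(name)
    return breaches


def should_recycle(mql_at, milestones, now):
    """True when no rep accepted the lead inside the 4-hour window."""
    return "accepted" not in milestones and now > sla_deadlines(mql_at)["accepted"]


mql_at = datetime(2026, 3, 2, 9, 0)  # a Monday morning
milestones = {
    "assigned": mql_at + timedelta(minutes=3),
    "accepted": mql_at + timedelta(hours=6),
}
now = mql_at + timedelta(hours=30)
print(sla_breaches(mql_at, milestones, now))  # ['accepted', 'first_touch']
```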

What marketing passes at handoff: lead source, engagement history, content consumed, score breakdown, and account context. What sales returns: qualification status, quality feedback, and next steps. This two-way data flow keeps criteria sharp. Aligned teams see 24% faster revenue growth - the SLA is what creates that alignment.
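If you want the two-way handoff to be enforceable rather than aspirational, give each direction a fixed record and flag incomplete ones before they change hands. A minimal sketch using Python dataclasses; the class names, field names, and `missing_fields` helper are hypothetical and simply mirror the items listed in the paragraph above.

```python
from dataclasses import dataclass, fields


@dataclass
class HandoffPacket:
    """What marketing passes to sales with each MQL."""
    lead_source: str
    engagement_history: list
    content_consumed: list
    score_breakdown: dict
    account_context: str


@dataclass
class SalesFeedback:
    """What sales returns to marketing after working the lead."""
    qualification_status: str
    quality_feedback: str
    next_steps: str


def missing_fields(record):
    """Names of fields left empty (blank string, empty list or dict, or None)."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


packet = HandoffPacket(
    lead_source="webinar",
    engagement_history=["webinar_registration", "pricing_page_visit"],
    content_consumed=[],
    score_breakdown={"behavior": 80, "fit": 40},
    account_context="",
)
print(missing_fields(packet))  # ['content_consumed', 'account_context']
```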

If you want to operationalize this beyond a doc, build lead assignment rules and a repeatable lead handoff process so nothing slips through the cracks.

Intent Data and Buying Groups in 2026

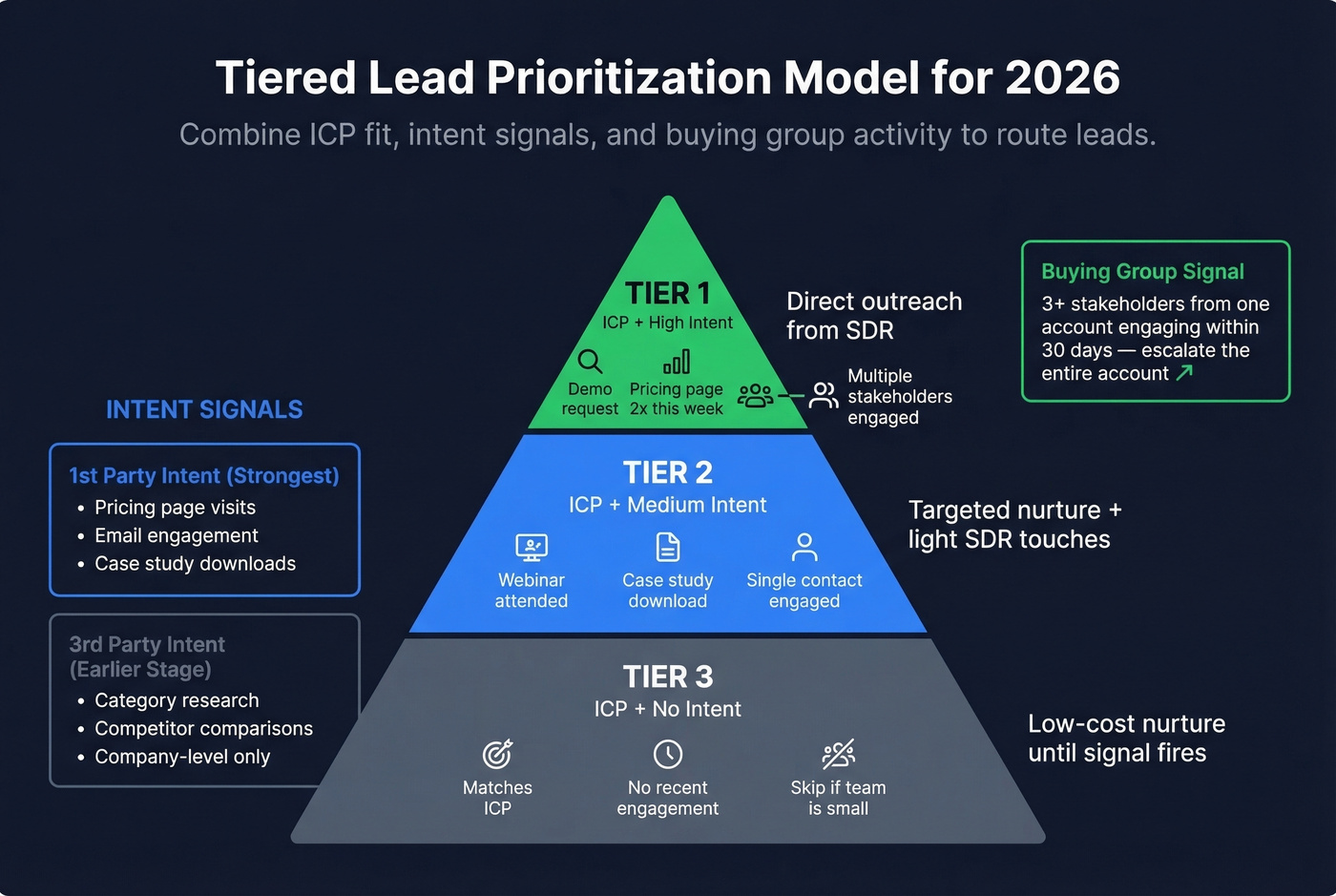

Classic scoring treats leads as individuals acting alone. Two upgrades matter now.

Intent data. First-party intent - pricing page visits, case study downloads, email engagement - is the strongest signal you have. Third-party intent covers category research and competitor comparisons, which is earlier-stage but valuable for prioritization. The catch: third-party intent is company-level, not person-level. You know someone at Acme is researching your category, but not who. Layering intent signals from providers like Bombora into your scoring model helps you prioritize accounts that are actively in-market rather than treating every lead equally.

Buying groups. A single champion filling out a demo form is great. Three stakeholders from the same account engaging within 30 days? That's a deal in motion. Build a group engagement threshold into your model - when multiple contacts from one account cross individual scoring thresholds within a tight window, escalate the entire account.
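A group engagement threshold can be checked with a sliding window over the dates on which contacts crossed their individual thresholds. A minimal sketch, assuming the trigger is three distinct contacts within 30 days (the example in the text); `accounts_to_escalate` and its input shape are hypothetical.

```python
from collections import Counter, defaultdict
from datetime import date, timedelta


def accounts_to_escalate(crossings, min_contacts=3, window_days=30):
    """Return accounts where `min_contacts` distinct contacts crossed their
    individual scoring threshold within `window_days` of each other.

    `crossings` is an iterable of (account, contact, date_crossed) tuples.
    """
    by_account = defaultdict(list)
    for account, contact, crossed_on in crossings:
        by_account[account].append((crossed_on, contact))

    window = timedelta(days=window_days)
    escalate = set()
    for account, events in by_account.items():
        events.sort()
        in_window = Counter()  # contact -> crossings inside the current window
        left = 0
        for crossed_on, contact in events:
            in_window[contact] += 1
            # Drop crossings that fall outside the window ending at `crossed_on`.
            while crossed_on - events[left][0] > window:
                old_contact = events[left][1]
                in_window[old_contact] -= 1
                if in_window[old_contact] == 0:
                    del in_window[old_contact]
                left += 1
            if len(in_window) >= min_contacts:
                escalate.add(account)
                break
    return escalate


crossings = [
    ("acme", "cto", date(2026, 3, 1)),
    ("acme", "vp_eng", date(2026, 3, 10)),
    ("acme", "security_lead", date(2026, 3, 25)),
    ("globex", "cfo", date(2026, 1, 1)),
    ("globex", "controller", date(2026, 2, 15)),
    ("globex", "it_director", date(2026, 3, 30)),
]
print(accounts_to_escalate(crossings))  # {'acme'}
```

Counting distinct contacts, not crossings, keeps one very active person from triggering an account-level escalation on their own.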

The tiered model we've seen work best: Tier 1 (ICP with high intent) gets direct outreach. Tier 2 (ICP with medium intent) gets targeted nurture with light SDR touches. Tier 3 (ICP with no intent) sits in low-cost nurture until a signal fires. Skip Tier 3 if your team is small - better to focus reps on accounts showing real buying behavior than to spread them thin across cold lists.
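The tier rules translate directly into a small routing function. A minimal sketch, assuming intent arrives as a label of `"high"`, `"medium"`, or anything else (treated as no intent), and that non-ICP accounts fall outside the tiers entirely; `assign_tier` and `PLAYS` are hypothetical names.

```python
from typing import Optional

# Play for each tier, as described above.
PLAYS = {
    1: "direct outreach",
    2: "targeted nurture with light SDR touches",
    3: "low-cost nurture until a signal fires",
}


def assign_tier(icp_match: bool, intent: str, small_team: bool = False) -> Optional[int]:
    """Return 1, 2, or 3, or None when the account isn't tiered at all."""
    if not icp_match:
        return None  # the tiers only cover ICP accounts
    if intent == "high":
        return 1
    if intent == "medium":
        return 2
    # ICP with no intent: Tier 3, unless a small team skips it.
    return None if small_team else 3


tier = assign_tier(icp_match=True, intent="medium")
print(tier, PLAYS[tier])  # 2 targeted nurture with light SDR touches
```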

This is also where account scoring and ABM lead scoring outperform contact-only models.

Is the MQL Dead?

No. But static criteria defined once and never updated are dead.

The "MQL is dead" argument has real teeth in one area: speed. 78% of buyers choose the first vendor to respond. Leads contacted within five minutes are 20x more likely to convert. The industry average response time hovers around five hours. That gap - not the scoring model itself - is where deals die.

If you want to tighten this, track speed-to-lead and compare your team to average lead response time benchmarks.
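Speed-to-lead is easy to compute from two timestamps per lead. A minimal sketch, assuming you can export each lead's creation time and first-touch time (None if untouched); `speed_to_lead` is a hypothetical helper. Untouched leads count against the five-minute share but are excluded from the median, since they have no response time yet.

```python
from datetime import datetime, timedelta
from statistics import median


def speed_to_lead(leads):
    """Return (median response minutes, share of all leads touched within 5 minutes).

    `leads` is a list of (created_at, first_touch_at) pairs; first_touch_at may be None.
    """
    if not leads:
        raise ValueError("no leads to measure")
    delays = [
        (touched - created).total_seconds() / 60
        for created, touched in leads
        if touched is not None
    ]
    within_five = sum(1 for d in delays if d <= 5) / len(leads)
    median_minutes = median(delays) if delays else None
    return median_minutes, within_five


start = datetime(2026, 3, 2, 9, 0)
leads = [
    (start, start + timedelta(minutes=2)),
    (start, start + timedelta(minutes=4)),
    (start, start + timedelta(hours=5)),
    (start, None),  # never contacted
]
print(speed_to_lead(leads))  # (4.0, 0.5)
```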

Update your marketing qualified lead criteria quarterly, enforce your SLA, and the MQL stays the most useful handoff mechanism in B2B. The teams that struggle aren't the ones using MQLs. They're the ones who set their criteria in 2022 and forgot about them.

The MQL criteria above only work when you can trust the contact data at handoff. Prospeo delivers 98% email accuracy and 125M+ verified mobile numbers, so when sales gets that scored lead, they reach a real person - not a bounced inbox. Teams using Prospeo book 26% more meetings than ZoomInfo users.

Give your sales team MQLs with contact data that actually connects.

FAQ

How do you identify marketing qualified leads?

Combine fit scoring (industry, title, company size) with engagement scoring (demo requests, pricing page visits, content downloads). A lead that matches your ICP and crosses your engagement threshold - typically ~70 points - earns the MQL label. Neither dimension alone is sufficient; a perfect-fit contact with zero engagement isn't ready for sales, and a highly engaged contact outside your ICP isn't worth a rep's time.

What's the difference between an MQL and an SQL?

An MQL is marketing-qualified via scoring - it passes fit and engagement thresholds that say "we should call them." An SQL is sales-verified with confirmed budget, authority, need, and timeline. MQL answers "should we reach out?" SQL answers "is this a real opportunity?" The gap between them is where sales qualification happens.

How often should you revisit MQL criteria?

Quarterly at minimum. Review your MQL-to-SQL conversion rate each quarter against industry benchmarks. If the rate drops below your benchmark, tighten criteria. Product launches, pricing changes, and new ICP segments all trigger immediate review - don't wait for the next quarter if something fundamental shifts.

What tools improve MQL scoring accuracy?

CRM and marketing automation platforms like HubSpot, Salesforce, and Marketo handle scoring logic. For the contact data feeding those scores, freshness matters most - stale records produce stale MQLs. Prospeo's 7-day refresh cycle and 98% email accuracy keep demographic scores reliable, while its Bombora-powered intent data adds in-market signals that separate active buyers from passive browsers.