Prospect Qualification: Frameworks, Scoring, and Scripts That Actually Work

It's Thursday afternoon. You're staring at a pipeline review with 47 "qualified" opportunities, and you already know half of them are dead. The VP asks why forecast accuracy is stuck at 60%, and the honest answer is that reps are confusing interest with intent.

Prospect qualification remains the #1 seller challenge, and the environment isn't getting easier - 80% of B2B interactions happen in digital channels, buying committees average 7 people, and 75% of buyers take longer to make decisions than they did two years ago.

The fix isn't another framework deck or a 90-minute enablement session. It's operational discipline: a clear definition of "qualified," a question bank that surfaces real blockers, and a scoring model built on data that's actually current.

Quick version: Pick one framework and enforce it. Use the phase-based question bank below. Build a simple scoring model. Verify your data before you score it. The rest is execution discipline.

What Is Prospect Qualification?

Prospect qualification is the process of evaluating whether a potential buyer is worth your team's time and resources. The terminology gets muddy fast, so let's nail down definitions.

A lead is anyone who enters your funnel - downloaded a whitepaper, filled out a form, got scraped from a list. A prospect is a potential buyer that matches your ICP and is in your sights for outreach or follow-up. A qualified prospect has been evaluated against your team's criteria and meets your threshold for active sales engagement. There's no single universal definition of "qualified" - what matters is that your team uses one definition consistently.

An MQL (marketing-qualified lead) has hit behavioral triggers marketing deems significant: pricing page visits, content downloads, webinar attendance. An SQL (sales-qualified lead) has been vetted by a rep as a fit and ready for a direct sales conversation.

Think of qualification as three layers. First, organization fit: does this company match your ICP on firmographics, technographics, and market segment? Second, opportunity fit: is there a real problem, a budget, and a timeline? Third, stakeholder fit: are you talking to someone who can actually make or influence the decision? Skip a layer and you'll waste cycles on deals that were never real.

The 5 Criteria That Matter

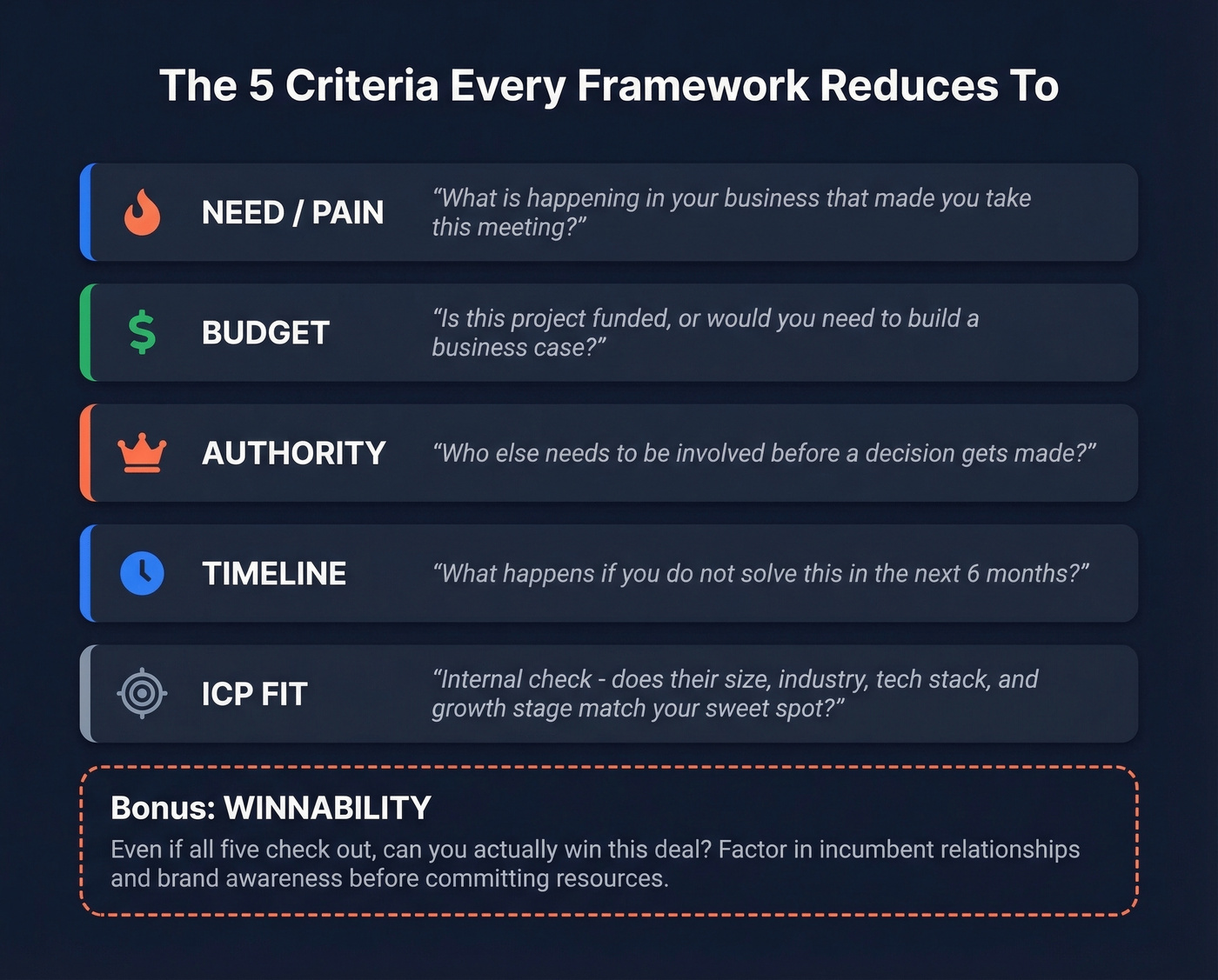

Every qualification framework - BANT, CHAMP, MEDDIC, GPCTBA/C&I, FAINT - reduces to the same five things. The acronyms change, the order shifts, but the core criteria don't.

- Need / Pain - Does the prospect have a problem your product solves? "What's happening in your business that made you take this meeting?"

- Budget - Can they pay for a solution, or is there a path to funding? "Is this project funded, or would you need to build a business case?"

- Authority - Are you talking to someone who can sign, or at least champion internally? "Who else needs to be involved before a decision gets made?" (If you're running enterprise deals, align this with your MEDDIC definition of economic buyer vs champion.)

- Timeline - Is there urgency, or is this a "someday" initiative? "What happens if you don't solve this in the next 6 months?"

- ICP Fit - Does the company match your ideal customer profile? This one's an internal check, not a question you ask the prospect: does their size, industry, tech stack, and growth stage land in your sweet spot? (If you need a rubric, use an ideal customer profile template.)

One criterion that doesn't get enough attention: winnability. Even if all five boxes check out, ask yourself whether you can actually win this deal. If the prospect's incumbent has a 10-year relationship and your brand is unknown in their market, qualification criteria alone won't tell you that. Factor it in before committing resources.

Which Framework Should You Use?

BANT, CHAMP, MEDDIC, GPCTBA/C&I, and FAINT Compared

| Framework | Best For | Strengths | Weaknesses |
|---|---|---|---|
| BANT | High-velocity SMB / inbound | Simple, fast, easy to train | Misses stakeholder complexity |
| CHAMP | Mid-market consultative | Pain-first, flexible | Can get too loose without enforcement |
| MEDDIC | Enterprise / long cycles | Forecast confidence, rigor | Slows deals if applied rigidly |
| GPCTBA/C&I | Complex consultative B2B | Buyer-centric, deep discovery | Time-intensive, hard to train |
| FAINT | Prospects without allocated budget | Works when budget isn't set | Less useful post-budget |

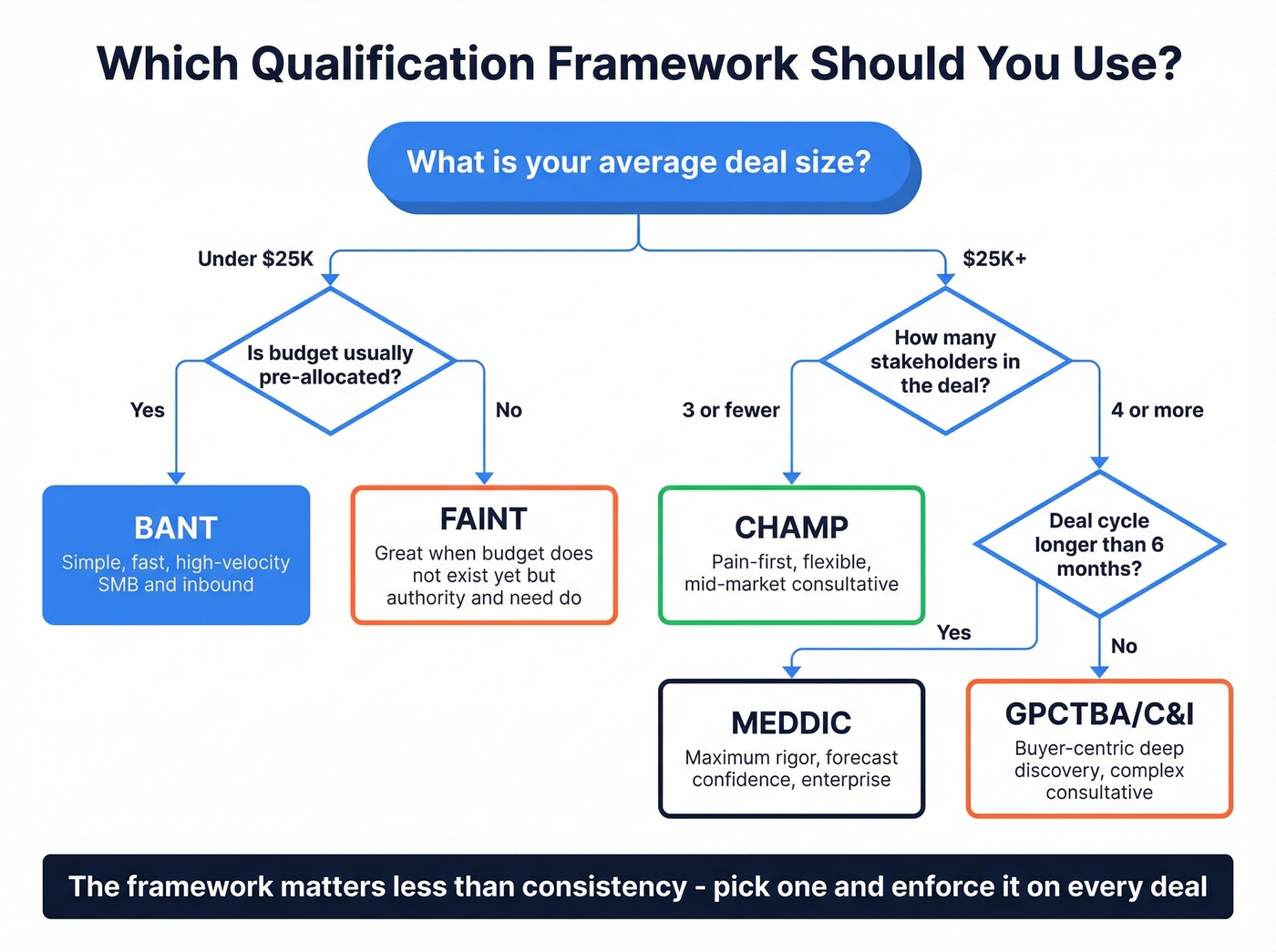

BANT originated at IBM in the 1950s. It's still one of the most widely used frameworks because it's dead simple. But for enterprise deals with 7-person buying committees, it's a starting point, not a solution. MEDDIC adds the rigor enterprise deals demand - metrics, economic buyer, decision criteria, decision process, identify pain, champion. (If you want a ready-to-use prompt list, pull from these MEDDIC discovery questions.)

GPCTBA/C&I, widely attributed to HubSpot, flips the script to start with the buyer's goals and plans before getting to budget and authority, making it a natural fit for consultative SaaS sales. FAINT deserves a mention for one specific scenario: selling into organizations that don't have an allocated budget but do have the authority and need to create one. If your product creates a new category or displaces a manual process, FAINT is more useful than BANT's rigid "is there budget?" gate.

Discipline Beats Framework Choice

Here's the thing: the consensus on r/sales is that most frameworks are interchangeable. Sandler, BANT, Challenger, MEDDIC - they all reduce to fundamentals.

If your average deal size is under $25K, you probably don't need MEDDIC-level rigor. BANT or CHAMP will do. The teams that win aren't the ones with the fanciest framework - they're the ones where every rep uses the same framework on every deal.

Industry case studies bear this out. One mid-market team standardized on CHAMP and watched forecast accuracy climb from 62% to 89%. An enterprise org that enforced MEDDIC consistently saw a similar jump - 58% to 84%. The lift didn't come from the framework itself. It came from consistency. AI-assisted scoring is gaining traction - 45% of teams now use a hybrid AI-SDR model - but the fundamentals haven't changed. The model is only as good as the criteria and data feeding it. (If you're building a system around this, start with lead scoring.)

Pick one framework, enforce it, iterate quarterly. Complexity is the enemy of adoption.

Your scoring model is worthless if the data feeding it is stale. Prospeo refreshes 300M+ profiles every 7 days - not every 6 weeks like competitors. 98% email accuracy means your reps spend time on real conversations, not bounced emails and dead leads.

Stop qualifying prospects with data that expired last month.

The Qualification Question Bank

Prospecting and qualifying go hand in hand - you can't separate outreach from evaluation. The questions below are organized by phase so reps know exactly what to ask and when. (For more outbound tactics that support better qualification, see these sales prospecting techniques.)

Discovery - Why Are We Talking?

Start every call by understanding why the prospect showed up. These questions force the buyer to articulate their own motivation, which is far more valuable than your pitch.

- "What's going on in your world that made you decide to talk to me?"

- "Why did you agree to meet with me today?"

- "What would need to be true for this conversation to be worth your time?"

These come straight from practitioner playbooks on r/sales, and they work because they're disarmingly direct. If the prospect can't articulate why they're on the call, that's a red flag.

Pain and Consequences

Once you understand the "why," dig into the cost of inaction.

- "What happens if nothing changes?"

- "If you didn't solve this in the next 6-12 months, what would the impact be?"

- "How is this problem affecting your team day to day?"

- "What have you already tried?"

The "what happens if nothing changes" question is the single best way to test urgency. If the answer is "honestly, we'd be fine," you're looking at a nice-to-have, not a must-have. Move on.

Opportunity Assessment

- "Who else should be involved in this decision before we move forward?"

- "Is this project funded, or would you need to build a business case?"

- "What does your evaluation process look like?"

- "Are you looking at other solutions right now?"

The stakeholder question is non-negotiable. With buying committees averaging 7 people, the rep who only talks to one champion is building on sand.

The Post-Demo Disqualifier

Ask this immediately after your demo, before you discuss pricing:

"Based on what you've seen, is there anything that would prevent you from moving forward if the price was right?"

This forces the prospect to surface real blockers - competitive evaluations, internal politics, timing issues, or the classic "I'm just researching." One practitioner on Reddit reported that this question moved their close rate on qualified demos from 13% to 30%. You're not closing more deals by being more persuasive. You're closing more by spending time only on deals that are actually closeable.

Building a Lead Scoring Model

Implicit vs. Explicit Scoring

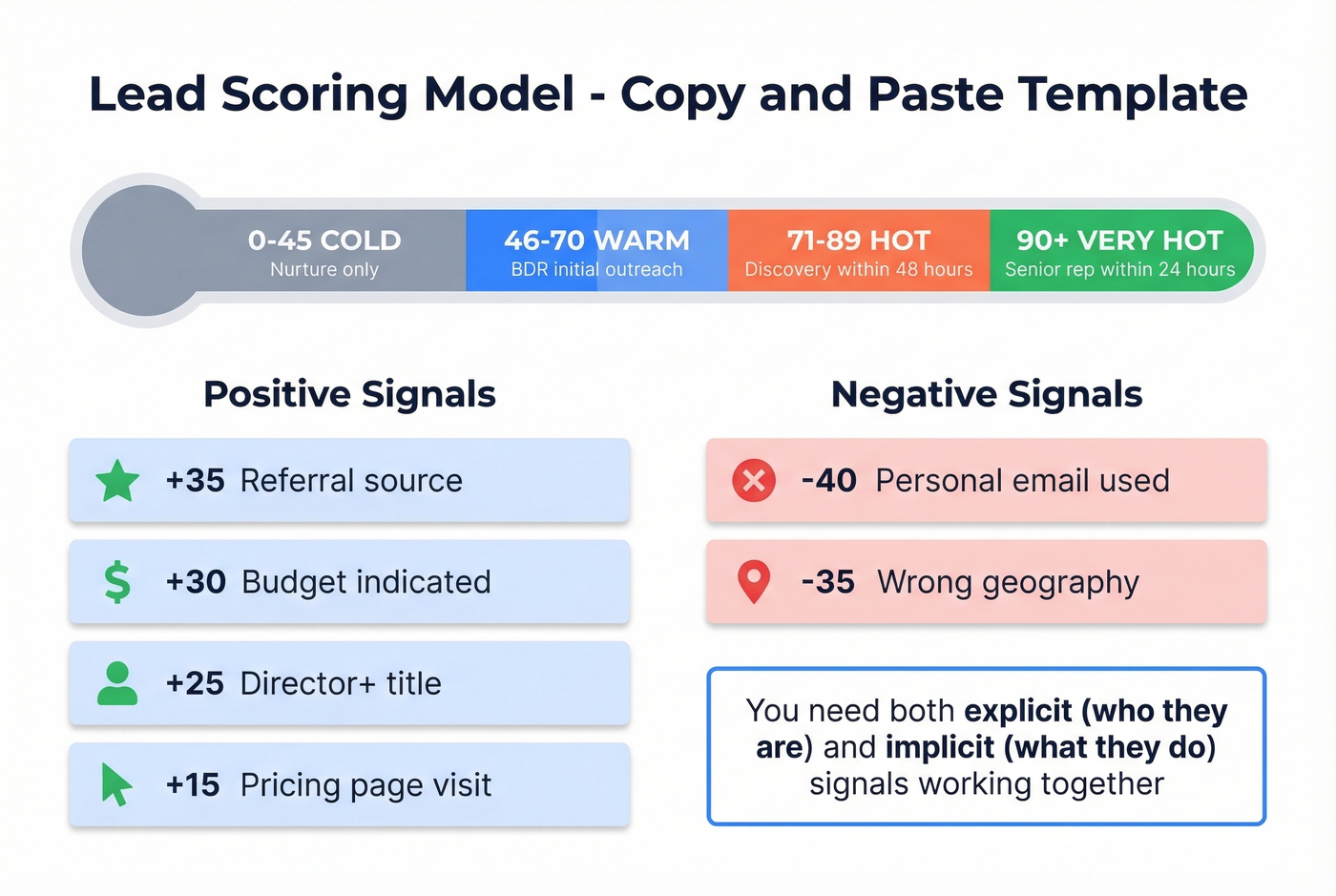

Lead scoring splits into two categories. Explicit scoring uses firmographic and demographic data - job title, company size, industry, declared budget. Implicit scoring uses behavioral signals - pages visited, content downloaded, emails opened, pricing page hits.

You need both. A Director of Engineering at a 200-person SaaS company scores high on explicit criteria but hasn't visited your site in three months - that implicit score says they're not ready for a call. Flip it: a marketing intern who's visited your pricing page six times has strong implicit signals but zero explicit fit. Neither deserves a rep's time on their own. Companies with effective lead scoring see up to a 70% increase in lead generation ROI, but only when both dimensions work together. (If your inputs are messy, start with lead enrichment before you tune scoring.)
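Here's a minimal sketch of the "both dimensions" rule, assuming you keep explicit and implicit scores as two separate numbers rather than one total. The class name, the routing labels, and both cutoffs are hypothetical; tune them against your own closed-won data.

```python
from dataclasses import dataclass


@dataclass
class LeadScores:
    explicit: int  # fit: job title, company size, industry, declared budget
    implicit: int  # behavior: pages visited, downloads, email opens


# Hypothetical cutoffs for illustration only.
EXPLICIT_MIN = 40
IMPLICIT_MIN = 15


def route(scores: LeadScores) -> str:
    """Send a lead to sales only when fit AND behavior both clear their bar."""
    fits = scores.explicit >= EXPLICIT_MIN
    engaged = scores.implicit >= IMPLICIT_MIN
    if fits and engaged:
        return "sales"
    if fits:
        return "nurture"  # right profile, not active yet
    if engaged:
        return "low-priority"  # active, but outside the ICP
    return "ignore"


director = LeadScores(explicit=45, implicit=0)  # strong fit, silent for months
intern = LeadScores(explicit=0, implicit=30)    # lives on the pricing page, no fit
print(route(director), route(intern))  # nurture low-priority
```

Keeping the two scores apart is the design choice that matters here: a single summed score would let heavy browsing mask a poor fit, or the reverse.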

Copy-Paste Scoring Template

Drop this into HubSpot, Salesforce, or any CRM with scoring capabilities:

| Signal | Points | Type |
|---|---|---|
| Job title (Director+) | +25 | Explicit |
| Target company size | +20 | Explicit |
| Budget indicated | +30 | Explicit |
| Referral source | +35 | Explicit |
| Pricing page visit | +15 | Implicit |
| RFP / proposal request | +40 | Implicit |
| Personal email used | -40 | Explicit |
| Wrong geography | -35 | Explicit |

Thresholds:

- 45 or below (including negative totals): Cold - nurture only

- 46-70: Warm - BDR initial outreach

- 71-89: Hot - schedule discovery within 48 hours

- 90+: Very hot - senior rep reaches out within 24 hours
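If your CRM doesn't support scoring natively, or you want to sanity-check the rules before configuring them, the template can be sketched in a few lines of Python. The point values and thresholds are the ones above; the signal key names and function names are hypothetical, and treating a negative total as cold is an assumption.

```python
# Point values from the template; the keys are made-up names for illustration.
POINTS = {
    "director_plus_title": 25,
    "target_company_size": 20,
    "budget_indicated": 30,
    "referral_source": 35,
    "pricing_page_visit": 15,
    "rfp_or_proposal_request": 40,
    "personal_email": -40,
    "wrong_geography": -35,
}


def score_lead(signals: set[str]) -> int:
    """Sum the points for every signal present on the lead."""
    unknown = signals.difference(POINTS)
    if unknown:
        raise ValueError(f"unknown signals: {sorted(unknown)}")
    return sum(POINTS[s] for s in signals)


def tier(score: int) -> str:
    """Map a score to a tier. Assumption: anything below 46, including
    negative totals, counts as cold."""
    if score >= 90:
        return "very hot"
    if score >= 71:
        return "hot"
    if score >= 46:
        return "warm"
    return "cold"


lead = {"director_plus_title", "target_company_size", "pricing_page_visit"}
print(score_lead(lead), tier(score_lead(lead)))  # 60 warm
```

Note that the penalties can push a total below zero (a pricing page visit from a personal email lands at -25), so the lowest tier needs to catch negative scores too.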

Qualification Benchmarks for 2026

Let's put numbers on what "good" looks like. These B2B SaaS funnel benchmarks give you a baseline:

| Stage | Conversion Rate |
|---|---|
| Lead to MQL | 39% |
| MQL to SQL | 38% |
| SQL to Opportunity | 42% |
| SQL to Closed Won | 37% |

If your SQL-to-Closed rate is significantly below 37%, unqualified deals are likely slipping into the pipeline and burning rep capacity. If it's well above 37%, you're probably filtering too aggressively and missing winnable deals. (To diagnose the upstream leak, track funnel metrics.)
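To compare your own funnel against these benchmarks, a rough sketch like the one below works. The benchmark rates come from the table; the function names, the sample counts, and the 5-point tolerance are assumptions for illustration, not recommended values.

```python
# Benchmark rates from the table above.
BENCHMARKS = {
    "lead_to_mql": 0.39,
    "mql_to_sql": 0.38,
    "sql_to_opportunity": 0.42,
    "sql_to_closed_won": 0.37,
}


def stage_rates(leads: int, mqls: int, sqls: int,
                opportunities: int, closed_won: int) -> dict[str, float]:
    """Compute each conversion rate. Assumes every denominator is non-zero."""
    return {
        "lead_to_mql": mqls / leads,
        "mql_to_sql": sqls / mqls,
        "sql_to_opportunity": opportunities / sqls,
        "sql_to_closed_won": closed_won / sqls,
    }


def off_benchmark(rates: dict[str, float], tolerance: float = 0.05) -> dict[str, float]:
    """Return stages whose rate differs from the benchmark by more than
    `tolerance` (a hypothetical cutoff), with the signed gap."""
    flagged = {}
    for stage, benchmark in BENCHMARKS.items():
        gap = rates[stage] - benchmark
        if abs(gap) > tolerance:
            flagged[stage] = round(gap, 3)
    return flagged


# Made-up counts for one quarter.
rates = stage_rates(leads=1000, mqls=390, sqls=150, opportunities=60, closed_won=30)
print(off_benchmark(rates))  # {'sql_to_closed_won': -0.17}
```

A negative gap on SQL to Closed Won points at loose qualification; a large positive one suggests the filter is too tight.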

Cycle length matters too. Outreach's data shows that deals closed within 50 days carry a 47% win rate. After 50 days, win rates drop to 20% or lower. That's not a suggestion to rush deals - it's a signal that deals dragging past the 50-day mark have unresolved qualification gaps. Either a blocker wasn't surfaced, or the prospect was never truly qualified.
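One way to act on that signal is a recurring check that flags open deals older than 50 days for a second qualification pass. This is a sketch only: the deal fields (`name`, `opened`) and the function name are hypothetical, and your CRM export will look different.

```python
from datetime import date

CYCLE_THRESHOLD_DAYS = 50  # the cutoff cited above


def deals_to_requalify(deals: list[dict], today: date) -> list[str]:
    """Return the names of open deals older than the cycle threshold."""
    return [
        deal["name"]
        for deal in deals
        if (today - deal["opened"]).days > CYCLE_THRESHOLD_DAYS
    ]


# Made-up pipeline for illustration.
pipeline = [
    {"name": "Acme renewal", "opened": date(2026, 1, 5)},
    {"name": "Globex pilot", "opened": date(2026, 2, 10)},
]
print(deals_to_requalify(pipeline, today=date(2026, 3, 1)))  # ['Acme renewal']
```

Flagged deals aren't automatically dead; they're the ones where a blocker question needs to be asked again.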

5 Qualification Mistakes That Kill Deals

Confusing Politeness With Intent

"Looks great, we'll discuss internally" isn't a buying signal. It's a polite exit. Buyers say 58% of sales meetings aren't valuable to them - many prospects sit through demos out of courtesy, not intent. That said, 43% of buyers say it's fine to be contacted 5+ times before connecting. The issue isn't persistence. It's persisting on the wrong prospects. Use the post-demo disqualifier.

Talking to the Wrong Stakeholder

With 7-person buying committees, single-threading is a death sentence. If your champion gets reassigned or loses internal momentum, the deal dies. Always ask "who else should be involved?" and don't accept "just me" at face value for any deal above $20K.

Skipping Data Validation

Reps waste hours qualifying prospects whose emails bounce and phones are disconnected. It's the most preventable mistake on this list. Verify before you qualify - tools like Prospeo with 98% email accuracy and real-time verification catch dead contacts before they enter your pipeline. (If you're comparing vendors, start with these data enrichment services.)

Over-Qualifying (Analysis Paralysis)

Some teams layer MEDDIC on top of BANT on top of a custom scorecard and wonder why reps spend more time filling out fields than selling. The r/sales community calls this out regularly. Pick one framework, enforce it, iterate. A simple framework used consistently beats a complex one used sporadically.

Not Disqualifying Fast Enough

In our experience, the best qualification skill is the willingness to disqualify. If your quota is $1M and you work 2,200 hours a year, every hour spent on a dead deal costs roughly $450 in direct quota capacity - and double that in opportunity cost. If the post-demo disqualifier surfaces a blocker such as a locked-in competitive eval, no budget until next fiscal year, or "just researching," thank the prospect and move on. Your pipeline will be smaller. Your win rate will be dramatically higher. (This is also a core fix for sales pipeline challenges.)
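The arithmetic behind that hourly figure, as a minimal sketch. The function names are made up for illustration; the quota and hours are the ones from the paragraph above. Dividing $1M by 2,200 hours gives about $455, which is where the rounded figure of around $450 comes from.

```python
def quota_value_per_hour(annual_quota: float, hours_per_year: float) -> float:
    """Quota capacity represented by one working hour."""
    return annual_quota / hours_per_year


def dead_deal_cost(hours_spent: float, annual_quota: float,
                   hours_per_year: float) -> float:
    """Direct quota capacity burned on a deal that was never going to close."""
    return hours_spent * quota_value_per_hour(annual_quota, hours_per_year)


rate = quota_value_per_hour(1_000_000, 2_200)
print(round(rate))                                  # 455
print(round(dead_deal_cost(10, 1_000_000, 2_200)))  # 4545
```

Ten hours on one dead deal is about $4,500 of quota capacity before counting opportunity cost, which is why fast disqualification pays for itself.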

ICP fit is the qualification criterion you check before the first call - but only if your firmographic, technographic, and intent data is accurate. Prospeo gives you 30+ filters including buyer intent across 15,000 topics, tech stack, headcount growth, and funding data so you disqualify bad fits before they waste a single rep hour.

Filter out the dead deals before they ever hit your pipeline.

FAQ

How does prospect qualification differ from lead qualification?

Prospect qualification evaluates whether a specific person and organization merit active sales engagement, using criteria like need, budget, authority, timeline, and ICP fit. Lead qualification is the broader triage that sorts inbound contacts into MQL and SQL buckets based on behavioral and demographic signals. The distinction matters because qualifying prospects demands rep judgment, not just automation rules.

Which sales qualification framework is best?

BANT works for SMB velocity deals under $25K with short cycles. CHAMP fits mid-market consultative selling. MEDDIC is built for enterprise deals with multi-stakeholder buying processes. Consistency matters far more than which framework you pick - a simple framework enforced across every deal beats a sophisticated one used sporadically. Skip MEDDIC if your average deal closes in under 30 days; you'll just slow your team down.

How do you disqualify a prospect who seems interested but won't commit?

Use the post-demo disqualifier: ask directly whether anything would prevent them from moving forward if the price was right. If they hedge, dig into the specific blocker - often you haven't reached the economic buyer, or the timeline is further out than suggested. One practitioner reported moving their close rate on qualified demos from 13% to 30% by ruthlessly applying this single question.

What tools help keep qualification data accurate?

Scoring models fail when contact data goes stale - outdated titles, wrong company sizes, and bounced emails corrupt every score. A weekly-refresh enrichment tool paired with your CRM ensures qualification decisions are built on current facts, not last quarter's org chart. We run our own scoring on Prospeo's enrichment API, which returns 50+ data points per contact at a 92% match rate.