Best AI Lead Scoring Tools for 2026

Your marketing team delivered 200 MQLs last month. Sales worked them, and maybe 20 turned into real conversations. The other 180? Wrong title, wrong company size, or just someone who downloaded a whitepaper and never thought about you again. That's not a lead gen problem - it's a scoring problem, and the right AI lead scoring tools can fix it. The numbers back this up: 67% of lost sales stem from improper lead qualification, and companies using lead scoring achieve 138% ROI on lead generation versus 78% without.

The predictive lead scoring market hit $5.6B in 2025, up from $1.4B in 2020. That kind of growth means dozens of tools now claim to solve this. Most are fine. A few are great. And some will charge you $50K/year to tell you things a spreadsheet could've figured out.

Our Top Picks

| Pick | Best For | Starting Price |
|---|---|---|
| Prospeo | Data quality foundation - scoring is only as good as your inputs | Free; ~$0.01/email |
| HubSpot | Teams already on HubSpot CRM who can afford Enterprise | ~$3,600+/mo |
| MadKudu | PLG companies needing product-usage scoring | ~$999/mo |

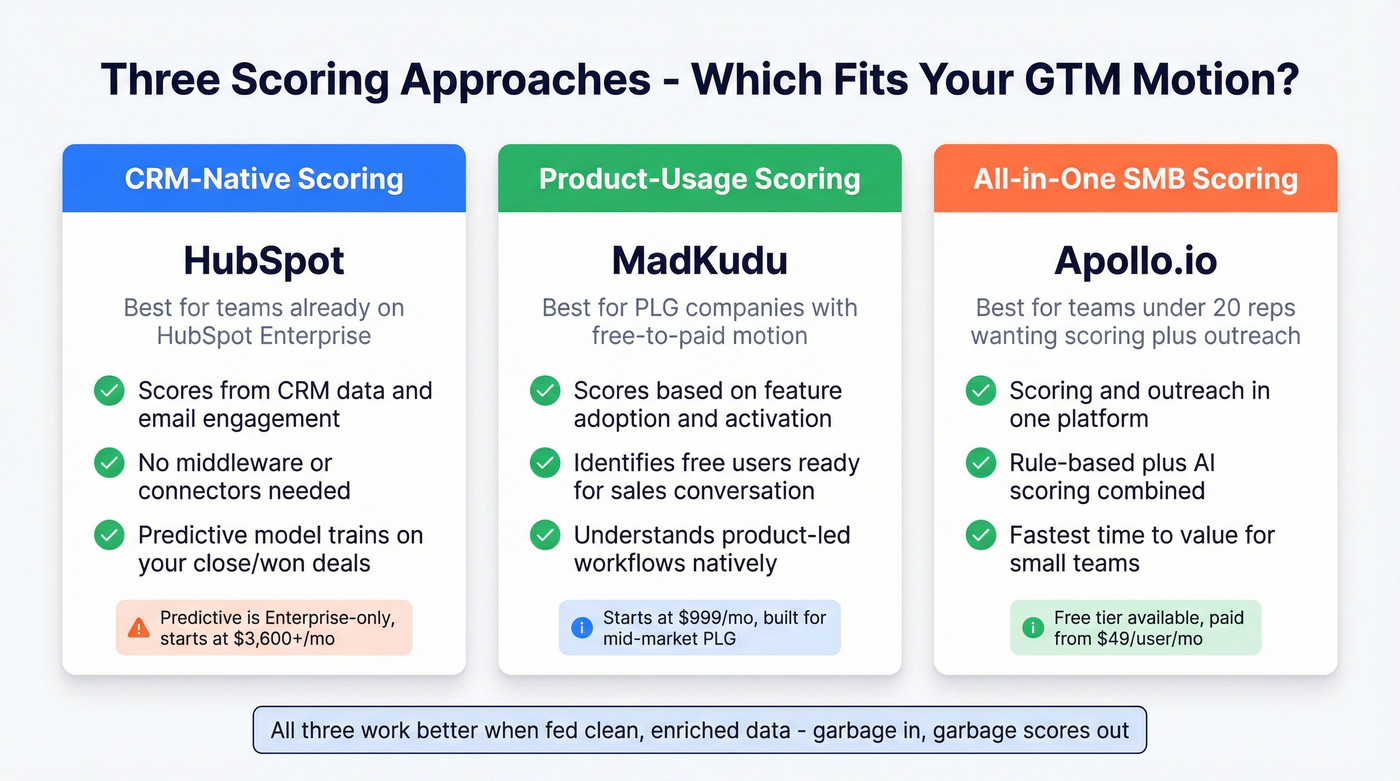

Prospeo isn't a scoring algorithm - it's what makes your scoring algorithm actually work. If your CRM data is stale, every score you generate is fiction. HubSpot is the easiest path if you're already in their ecosystem, but predictive scoring is locked behind Enterprise pricing. MadKudu is the specialist pick for product-led growth teams that need to score based on in-app behavior, not just firmographics.

How AI Lead Scoring Works

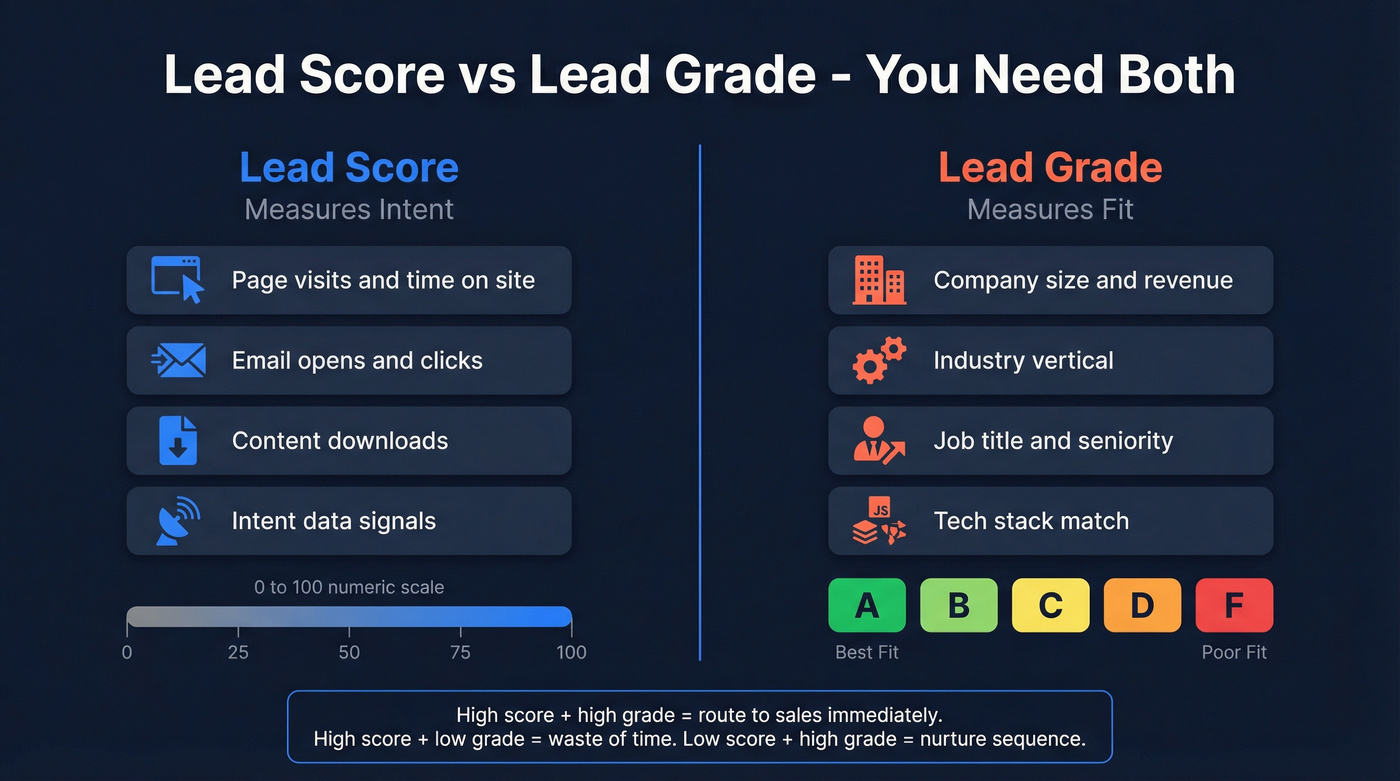

There are two distinct concepts most teams conflate. A lead score measures intent: a numeric value (typically 0-100) based on behavioral signals like page visits, email engagement, content downloads, and intent data. A lead grade measures fit: an A-F rating based on firmographic and demographic attributes like company size, industry, job title, and tech stack.

You need both. A perfect-fit company showing zero intent isn't ready to buy. A highly engaged visitor at a company that'll never be your customer is a waste of a rep's time.
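To make the score-plus-grade idea concrete, here is a minimal sketch of how the two could be combined into one priority label. The cutoffs (`MIN_SCORE`, `GOOD_GRADES`) and the label names are assumptions chosen for illustration, not values from any tool in this article.

```python
from dataclasses import dataclass

# Hypothetical cutoffs; tune these against your own conversion data.
MIN_SCORE = 50            # intent threshold on the 0-100 scale
GOOD_GRADES = {"A", "B"}  # fit grades treated as in-ICP


@dataclass
class Lead:
    name: str
    score: int  # intent, 0-100
    grade: str  # fit, "A" through "F"


def priority(lead: Lead) -> str:
    """Combine intent (score) and fit (grade) into a single label."""
    has_intent = lead.score >= MIN_SCORE
    has_fit = lead.grade in GOOD_GRADES
    if has_intent and has_fit:
        return "work now"
    if has_fit:
        return "nurture"        # perfect fit, zero intent: not ready to buy
    if has_intent:
        return "deprioritize"   # engaged, but unlikely to ever be a customer
    return "disqualify"
```

A grade-A lead with a score of 10 comes back as "nurture", while a grade-F lead with a score of 90 comes back as "deprioritize", which mirrors the two failure cases described above.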

The AI part comes in how these signals get weighted. Rule-based scoring - where you manually assign points ("VP title = +15, visited pricing page = +20") - works, but it doesn't learn. Predictive models analyze your historical conversion data, find patterns humans miss, and adjust weights automatically. They continuously retrain on new conversion data instead of relying on assumptions your team made six months ago.
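For reference, rule-based scoring can be as small as the sketch below. It uses the two example rules from the paragraph above; the field names (`title`, `pages_visited`) and the substring matching are assumptions for illustration. A predictive model would replace the hand-picked point values with weights learned from historical conversions.

```python
# Each rule: (label, predicate, points). Points come from the example above.
RULES = [
    ("VP title", lambda lead: "vp" in lead.get("title", "").lower(), 15),
    ("visited pricing page", lambda lead: "/pricing" in lead.get("pages_visited", []), 20),
]


def rule_based_score(lead: dict) -> int:
    """Sum the points of every matching rule, capped at 100."""
    total = sum(points for _, matches, points in RULES if matches(lead))
    return min(total, 100)


lead = {"title": "VP of Marketing", "pages_visited": ["/pricing", "/blog"]}
print(rule_based_score(lead))  # 35
```

The weakness is visible in the code itself: the weights only change when someone edits the `RULES` list.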

In one study of 88,000+ inbound leads, AI-powered qualification reduced lead servicing time by 31%. Traditional methods are right only 15-25% of the time, while AI-powered qualification hits 40-60% accuracy - that's a massive gap when you're routing hundreds of leads per week.

A common scoring band setup: 95+ is hot (route to a rep immediately), 50-94 is warm (drop into a nurture sequence), and below 50 is cold (park or disqualify). Simple. But most teams never define these thresholds clearly, and their reps end up ignoring scores entirely.
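Writing the bands down as code is one way to force the thresholds to be explicit. This is a minimal sketch using the cutoffs from the paragraph above; the action strings are illustrative placeholders, not calls into a real CRM.

```python
HOT_THRESHOLD = 95   # 95+ is hot
WARM_THRESHOLD = 50  # 50-94 is warm, below 50 is cold

ACTIONS = {
    "hot": "route to a rep immediately",
    "warm": "drop into a nurture sequence",
    "cold": "park or disqualify",
}


def band(score: int) -> str:
    """Map a 0-100 lead score to its band."""
    if score >= HOT_THRESHOLD:
        return "hot"
    if score >= WARM_THRESHOLD:
        return "warm"
    return "cold"


def next_action(score: int) -> str:
    """Return the follow-up action for a given score."""
    return ACTIONS[band(score)]
```

Keeping the thresholds as named constants means Sales and Marketing can argue about two numbers rather than about what "hot" means.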

Here's the thing: the model is only as good as the data feeding it. If your CRM has outdated job titles, wrong company sizes, and emails that bounce 30% of the time, even the best algorithm produces garbage scores. Data quality isn't a nice-to-have prerequisite - it's the foundation the entire system sits on. (If you want the benchmarks and fixes, start with B2B contact data decay and CRM hygiene.)

Your scoring model is only as smart as the data feeding it. Prospeo enriches your CRM with 50+ data points per contact at an 83% match rate, refreshes records every 7 days, and layers in intent signals across 15,000 topics - so every lead score reflects reality, not six-month-old job titles.


12 Best Tools for Lead Scoring

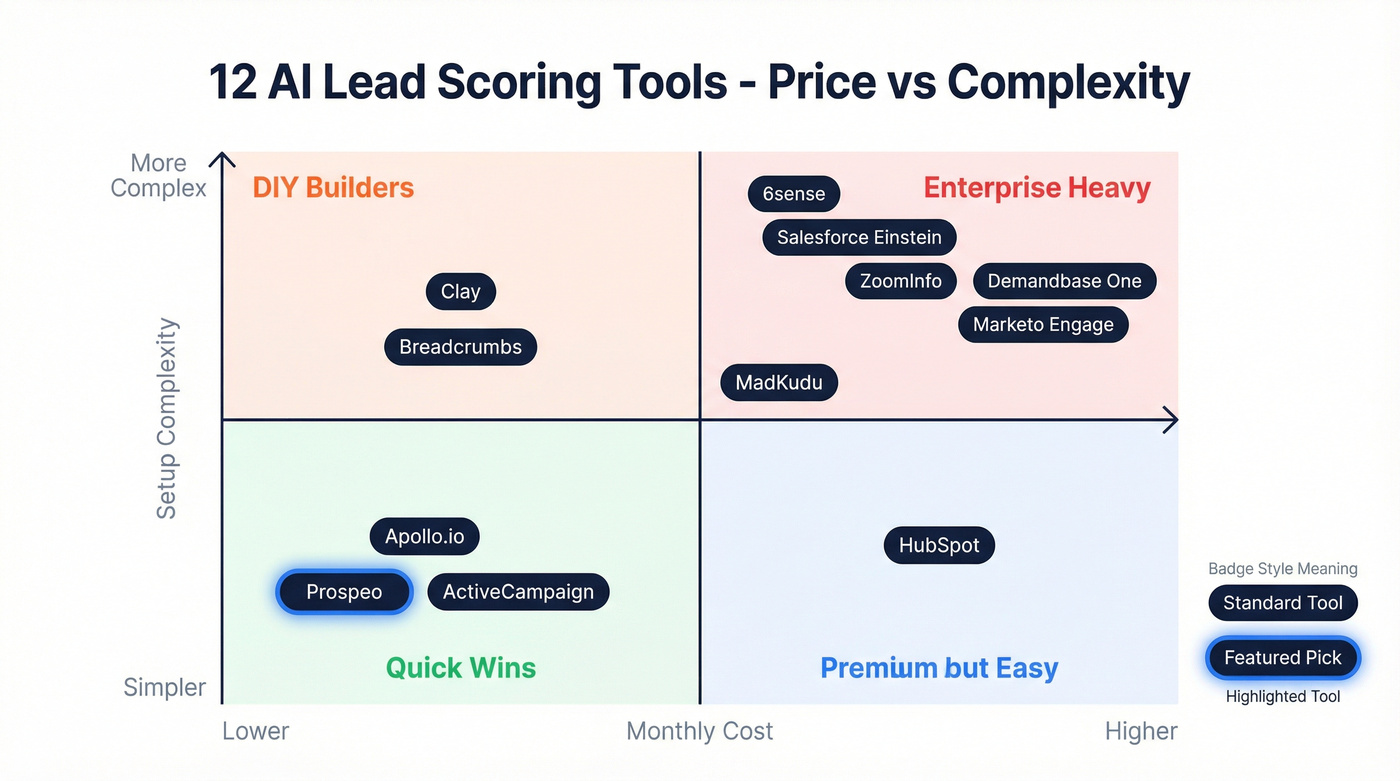

Here's every tool worth considering, organized by depth. The comparison table gives you the quick view; detailed reviews follow.

| Tool | Best For | AI Scoring Type | Starting Price |
|---|---|---|---|
| Prospeo | Data quality for scoring | Enrichment + intent | Free; ~$0.01/email |
| HubSpot | CRM-native scoring | Predictive (Enterprise) | ~$3,600+/mo |
| Salesforce Einstein | Enterprise Salesforce orgs | Predictive ML | ~$215+/user/mo |
| Apollo.io | SMB scoring + outreach | Rule-based + AI | Free; $49+/user/mo |
| 6sense | Enterprise intent + ABM | Predictive + intent | $25K-$100K+/yr |
| MadKudu | PLG product-usage scoring | Predictive ML | ~$999/mo |
| Clay | Custom scoring workflows | Enrichment-driven | Free; $149+/mo |
| ZoomInfo | Data-rich enterprise | Fit + intent + engagement | $15K-$40K+/yr |
| ActiveCampaign | Small marketing teams | Rule-based | ~$49/mo |
| Marketo Engage | Enterprise marketing auto | Behavior + predictive | ~$895+/mo |
| Breadcrumbs | Mid-market predictive | Predictive scoring | ~$1K-$3K/mo |
| Demandbase One | ABM account scoring | Account-level AI | $25K-$75K+/yr |

Prospeo

Use this if: Your CRM data is months old and you need verified, enriched contacts feeding your scoring model before you invest in a scoring algorithm.

Skip this if: You already have pristine data and only need a scoring engine layered on top.

Prospeo's 300M+ professional profiles, 98% email accuracy, and 7-day data refresh cycle solve the problem most scoring tools ignore: input quality. Your predictive model doesn't know that the "VP of Marketing" in your CRM left that company eight months ago. Prospeo does, because it refreshes every week while the industry average sits at six weeks.

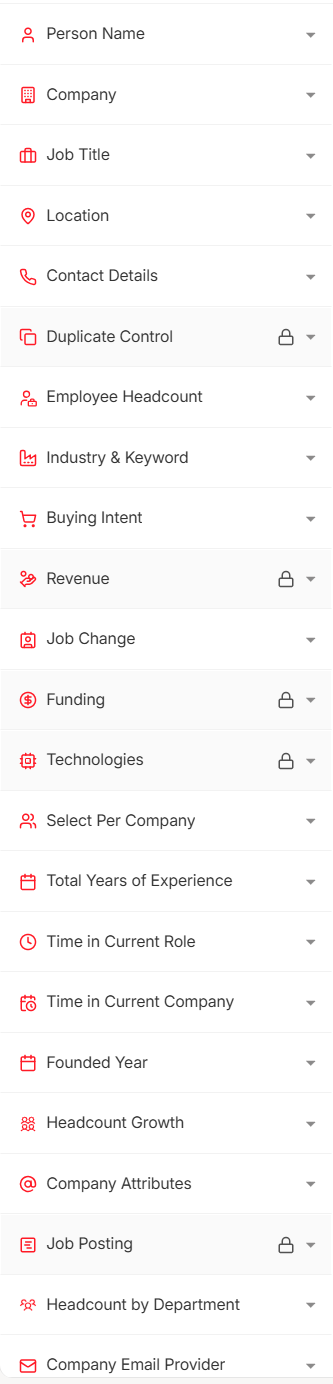

The platform tracks intent data across 15,000 Bombora topics, so you're not just cleaning data - you're layering in buying signals. Combine that with 30+ search filters covering buyer intent, technographics, job changes, headcount growth, and funding, plus CRM enrichment at an 83% match rate returning 50+ data points per contact, and you've got the raw material that makes every other scoring platform on this list perform better. (If you're building this from scratch, use a lead qualification framework and then document it as a lead scoring system.)

Real results: Snyk's 50-person AE team cut bounce rates from 35-40% to under 5% and saw AE-sourced pipeline jump 180% after switching to Prospeo for their data foundation. That's what happens when your scoring model finally has accurate inputs.

Pricing: Free tier (75 emails/month), credit-based at ~$0.01/email. No contracts, no sales calls required.

HubSpot

Use this if: You're already running HubSpot CRM and can justify Enterprise pricing for predictive scoring.

Skip this if: You're on Professional and assume you're getting AI scoring - you're not.

HubSpot's lead scoring comes in two flavors, and the distinction matters. Manual scoring (assign points to properties and behaviors) is available on Professional and Enterprise. Predictive scoring - where HubSpot's model analyzes your historical data and auto-scores leads - is Enterprise-only. That's the gotcha nobody mentions until you're mid-implementation.

If you're already paying for Enterprise, the predictive scoring is a clean, CRM-native path. It pulls from your existing CRM data, website activity, and email engagement to surface leads most likely to close. It works because it's native - no connectors, no middleware. But if you're on Professional and thought you were getting AI scoring, you're looking at a significant upgrade that starts around $3,600/month.

Salesforce Einstein

Use this if: You're a large Salesforce org with dedicated admins, clean data, and budget to burn.

Skip this if: You want something running in under a month, or you don't have a Salesforce admin on staff.

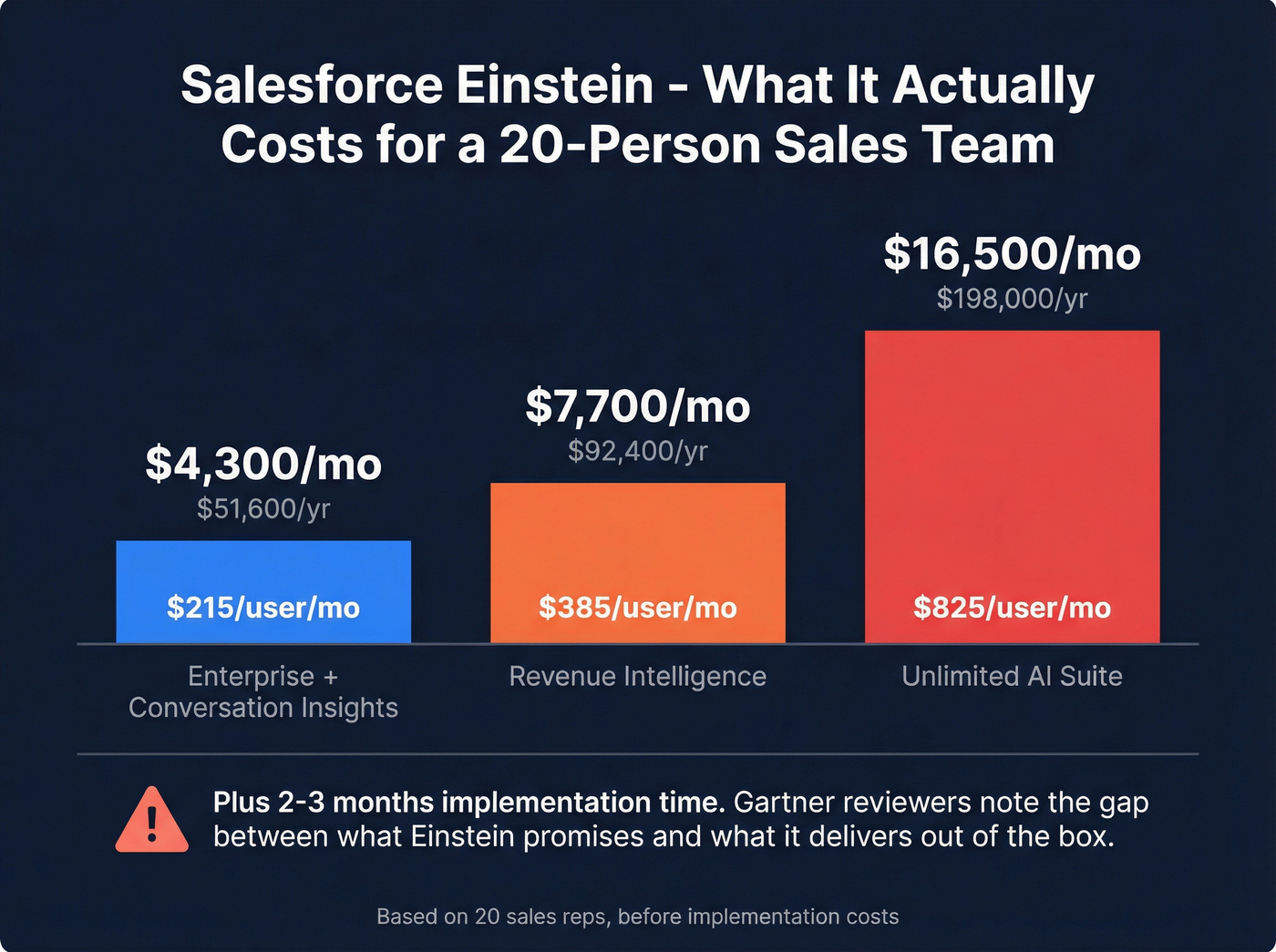

Einstein is powerful, but let's be honest about what "powerful" costs. A realistic mid-market deployment runs $215/user/month with Enterprise + Conversation Insights. Want Revenue Intelligence? That's $385/user/month. The comprehensive AI suite on Unlimited pushes to $825/user/month. For a 20-person sales team, you're looking at $4,300-$16,500/month before you've scored a single lead, and implementation typically takes 2-3 months.
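The seat math above is easy to rerun for your own team size. This sketch only multiplies the per-user prices quoted in this section by a seat count; the tier labels are shorthand, and implementation or admin costs are not modeled.

```python
SEATS = 20

# Per-user monthly prices as quoted above.
PRICE_PER_USER = {
    "Enterprise + Conversation Insights": 215,
    "Revenue Intelligence": 385,
    "Unlimited AI suite": 825,
}


def monthly_cost(tier: str, seats: int = SEATS) -> int:
    """License cost per month for a given tier and seat count."""
    return PRICE_PER_USER[tier] * seats


for tier in PRICE_PER_USER:
    per_month = monthly_cost(tier)
    print(f"{tier}: ${per_month:,}/mo, ${per_month * 12:,}/yr")
# 20 seats: $4,300, $7,700, and $16,500 per month respectively
```

Even the lowest tier works out to $51,600 per year for 20 seats.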

Gartner Verified Reviews highlight the learning curve, data migration headaches, and the gap between what Einstein promises and what it delivers out of the box. One reviewer noted the "AI doesn't bring back the particular insights we're looking for." That's a $50K+ annual investment producing underwhelming results - and it happens more often than most teams expect.

Apollo.io

Apollo is the obvious starting point for SMB teams that want scoring and outreach in one platform. Paid plans start around $49/user/month. Where Apollo wins over ZoomInfo: price and speed to value. Where ZoomInfo wins: database depth for enterprise accounts and intent data sophistication. Apollo's scoring isn't best-in-class, but for teams under 20 reps who need "good enough" scoring without a separate vendor, it's hard to beat on value.

6sense

Enterprise intent-data powerhouse that scores accounts - not just leads - based on buying stage predictions. Genuinely impressive technology and genuinely expensive at $25,000-$100,000+/year with 3-6 month implementations. If you're running ABM at scale and need account-level scoring tied to intent, 6sense is the enterprise standard. For a 15-person team, look elsewhere. (If you're comparing stacks, see ABM account prioritization.)

MadKudu

The specialist pick for product-led growth companies. MadKudu scores leads based on product usage signals like feature adoption, activation milestones, and usage frequency - not just firmographics and page visits. Starting at ~$999/month, it's priced for mid-market PLG companies that need to identify which free users are ready for a sales conversation. If your GTM motion is "let them try the product, then sell," MadKudu understands that workflow better than any general-purpose scoring tool.

Clay

Clay isn't a scoring tool in the traditional sense - it's a workflow builder that lets you create custom scoring models by chaining together enrichment sources, AI prompts, and conditional logic. Free tier gives you 100 credits/month to experiment; Starter runs $149/mo, Pro $800/mo. Think of it as the "build your own scoring engine" option. Compared to ZoomInfo's rigid scoring, Clay gives you flexibility. The tradeoff: you need someone technical enough to build and maintain the workflows. (If you're cost-checking, start with Clay pricing.)

ZoomInfo

ZoomInfo scores leads using fit, intent, and engagement signals across its 250M+ professional contacts and 100M+ company profiles. The Copilot AI feature surfaces prioritized leads based on your ICP. At $15,000-$40,000+/year, it's the data-rich enterprise option.

Where ZoomInfo still wins over most alternatives: US database depth and the sheer breadth of signals it can score against. Where it loses: price, contract flexibility, and the fact that most teams use maybe 30% of what they're paying for. We've talked to plenty of teams who felt locked into expensive annual contracts for features they barely touched.

ActiveCampaign

Affordable scoring for small marketing teams that need basic lead prioritization without enterprise complexity. Plans start around $49/month. Not AI-powered in any meaningful sense, but functional for teams that want simple prioritization tied to engagement and rules. Skip this if you need anything beyond basic point-based scoring.

Marketo Engage

Enterprise marketing automation with built-in behavior scoring and predictive capabilities. Plans start around $895/month, but meaningful implementations typically run $1,500-$3,000/month. Best for large marketing orgs already invested in the Adobe ecosystem. If you're not already an Adobe shop, the integration overhead alone makes this a tough sell.

Breadcrumbs

Mid-market predictive scoring specialist at ~$1,000-$3,000/month. We haven't tested Breadcrumbs deeply enough to recommend it confidently, but it's worth evaluating for teams that want predictive scoring without 6sense-level pricing.

Demandbase One

ABM-focused account-level scoring that combines intent data, engagement signals, and firmographic fit into account scores. At $25,000-$75,000+/year, it's squarely enterprise. If you're running account-based everything and need scoring at the account level rather than the lead level, Demandbase competes directly with 6sense.

How to Choose the Right Tool

Most teams don't need a dedicated scoring platform. That's a controversial opinion in an article about scoring tools, but it's true. If you're getting fewer than 500 inbound leads per month, a simple fit + intent framework built on clean data will outperform a $30K platform running on garbage inputs.

Look, ask any sales rep on r/sales about MQL quality and you'll get an earful - the disconnect between marketing-qualified and sales-ready is the number one complaint, and throwing a fancier algorithm at bad data doesn't fix it.

Our hot take: If your average deal size is under $15K, you almost certainly don't need a dedicated predictive scoring platform. A clean database, a handful of firmographic rules, and a rep who picks up the phone fast will outperform any $50K scoring tool. The odds of qualifying a lead drop tenfold once response time exceeds 5 minutes, so your scoring model needs to trigger instant routing, not sit in a dashboard waiting for someone to check it.

Here's the decision framework that actually works:

Fix Your Data First

If your CRM bounce rate is above 10% or your contact records are more than 90 days old, fix the input before upgrading the algorithm. Weekly-refresh enrichment solves this. Stale data makes every downstream score unreliable, full stop.
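A quick way to run this check is sketched below. It takes the two thresholds from the paragraph above and assumes you can export send counts, bounce counts, and a last-verified date per contact. Treating "more than 90 days old" as the median record age is an interpretation, not something the thresholds dictate.

```python
from datetime import date
from statistics import median

MAX_BOUNCE_RATE = 0.10     # "above 10%"
MAX_RECORD_AGE_DAYS = 90   # "more than 90 days old"


def data_needs_fixing(sent: int, bounced: int,
                      last_verified: list[date], today: date) -> bool:
    """True if bounce rate or median record age breaches its threshold."""
    bounce_rate = bounced / sent if sent else 0.0
    ages = [(today - d).days for d in last_verified]
    median_age = median(ages) if ages else 0  # assumption: judge by median age
    return bounce_rate > MAX_BOUNCE_RATE or median_age > MAX_RECORD_AGE_DAYS
```

If this returns `True`, the upgrade budget is better spent on enrichment than on a new scoring engine.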

Match Tool to Stack

Already on HubSpot Enterprise? Use their native scoring. Deep in Salesforce? Einstein makes sense if you have the admin resources. Running a PLG motion? MadKudu. No existing CRM commitment? Apollo gives you scoring and outreach in one.

Budget Realistically

For a 15-person team, scoring tools typically cost $1,200-$2,500/month. Enterprise platforms like 6sense, Demandbase, and ZoomInfo push well past that. Self-serve tools like Apollo and ActiveCampaign can get you started under $100/month.

Involve Sales from Day One

A scoring model that Marketing builds in isolation will be ignored by reps. Get Sales to define what "qualified" means before you configure a single rule. Scoring works best when reps trust the outputs - and trust comes from involvement in defining the signals that matter, not from a dashboard they had no hand in building. (For the ops side, see RevOps lead scoring.)

Scoring Mistakes That Kill Your Model

We've seen teams invest months building scoring models that actively hurt their pipeline. These seven mistakes show up most often:

Scoring email opens. Spam filters and privacy tools inflate open rates to meaninglessness. Score form fills, demo requests, and pricing page visits instead - actions that require actual human intent.

Running multiple scoring models. One model for marketing, another for sales, a third for product. Within six months, nobody trusts any of them. Run a single model and iterate.

Building without Sales input. If reps don't believe the scores, they'll ignore them. Get your top closers in the room before you assign a single point value.

Scoring demo requests. If someone fills out a "talk to sales" form, don't score them - route them directly. Scoring a hand-raiser adds latency to your highest-intent leads.

Ignoring negative scoring. A contact with 365 days of zero activity shouldn't sit in your database inflating your "qualified" count. Decay scores aggressively for inactivity.

Over-relying on job title fields. Job titles are freeform text. "VP Sales," "Vice President, Sales," "VP of Sales & Partnerships" - your model treats these as three different things. Enrich and normalize before you score.

Never retraining the model. Your ICP shifts, your product evolves, and your buyer personas change. Retrain your scoring model quarterly at minimum. A model built on last year's conversion data is scoring for last year's buyer.
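Two of the fixes above, title normalization and inactivity decay, are small enough to sketch. Both functions are illustrative assumptions: the regex only handles the VP and Sales variants from the example, and the 30-day half-life is an arbitrary starting point rather than a recommended value.

```python
import re


def normalize_title(raw: str) -> tuple[str, str]:
    """Reduce a freeform job title to (seniority, function)."""
    text = raw.lower()
    text = re.sub(r"\bvice president\b", "vp", text)
    seniority = "vp" if re.search(r"\bvp\b", text) else "other"
    function = "sales" if re.search(r"\bsales\b", text) else "other"
    return seniority, function


def decayed_score(score: float, days_inactive: int,
                  half_life_days: int = 30) -> float:
    """Halve the score for every `half_life_days` of inactivity."""
    return score * 0.5 ** (days_inactive / half_life_days)


titles = ["VP Sales", "Vice President, Sales", "VP of Sales & Partnerships"]
print({normalize_title(t) for t in titles})  # {('vp', 'sales')}
print(decayed_score(80, 30))                 # 40.0
```

With this decay curve, a contact that has been silent for 365 days drops to a score of well under 1, so it stops inflating the "qualified" count without anyone deleting it by hand.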

Snyk's 50 AEs saw pipeline jump 180% after switching to Prospeo - because their scoring models finally had accurate inputs. With 98% email accuracy and a 7-day refresh cycle, your AI scores stop being educated guesses and start predicting actual revenue.


FAQ

What's the difference between lead scoring and lead grading?

A lead score measures intent via behavioral signals (page visits, email engagement, content downloads) on a 0-100 scale. A lead grade measures fit via firmographic attributes (company size, industry, job title) on an A-F scale. Effective systems use both together to prioritize leads that match your ICP and are actively showing buying signals.

How long does it take to implement AI lead scoring?

Self-serve tools like Apollo or ActiveCampaign can be configured in 2-4 weeks. HubSpot's predictive scoring takes a few weeks with clean data. Salesforce Einstein deployments typically run 2-3 months due to CRM complexity and data migration. The biggest variable is always data readiness, not the tool itself.

Is AI lead scoring worth it for small teams?

For teams getting fewer than 500 inbound leads per month, a simple fit + intent framework with clean, enriched data often outperforms a $30K platform. Start with a free enrichment tier plus basic CRM rules, then layer in predictive tools as volume grows past that threshold.

What data do scoring models need?

Firmographics (company size, industry, revenue), behavioral signals (page visits, content downloads), engagement data (email clicks, meeting bookings), and intent data (third-party buying signals from providers like Bombora). Accuracy depends on data freshness - weekly-refresh enrichment keeps inputs reliable instead of letting records decay for months.

How accurate is AI scoring vs. manual scoring?

Traditional manual methods are right only 15-25% of the time, while AI-powered qualification hits 40-60% accuracy. The gap widens with volume - at 5,000+ leads per month, manual scoring becomes essentially random while predictive models keep improving with more training data.