How to Build a Lead Scoring System That Doesn't Waste Your Sales Team's Time

Your SDR just spent 45 minutes crafting a personalized sequence for a lead scored 92 out of 100. Perfect ICP fit. VP of Operations at a mid-market SaaS company. Opened three emails last quarter. One problem: the email bounced, the phone number's disconnected, and she left that company four months ago. Your lead scoring system worked flawlessly - it just scored garbage data with confidence.

That's the core tension most teams miss. The model gets all the attention. The data feeding it gets almost none. Let's fix both.

The Short Version

- Clean your data first. Scoring on stale job titles and bounced emails is worse than not scoring at all. (If you need a process, start with CRM hygiene.)

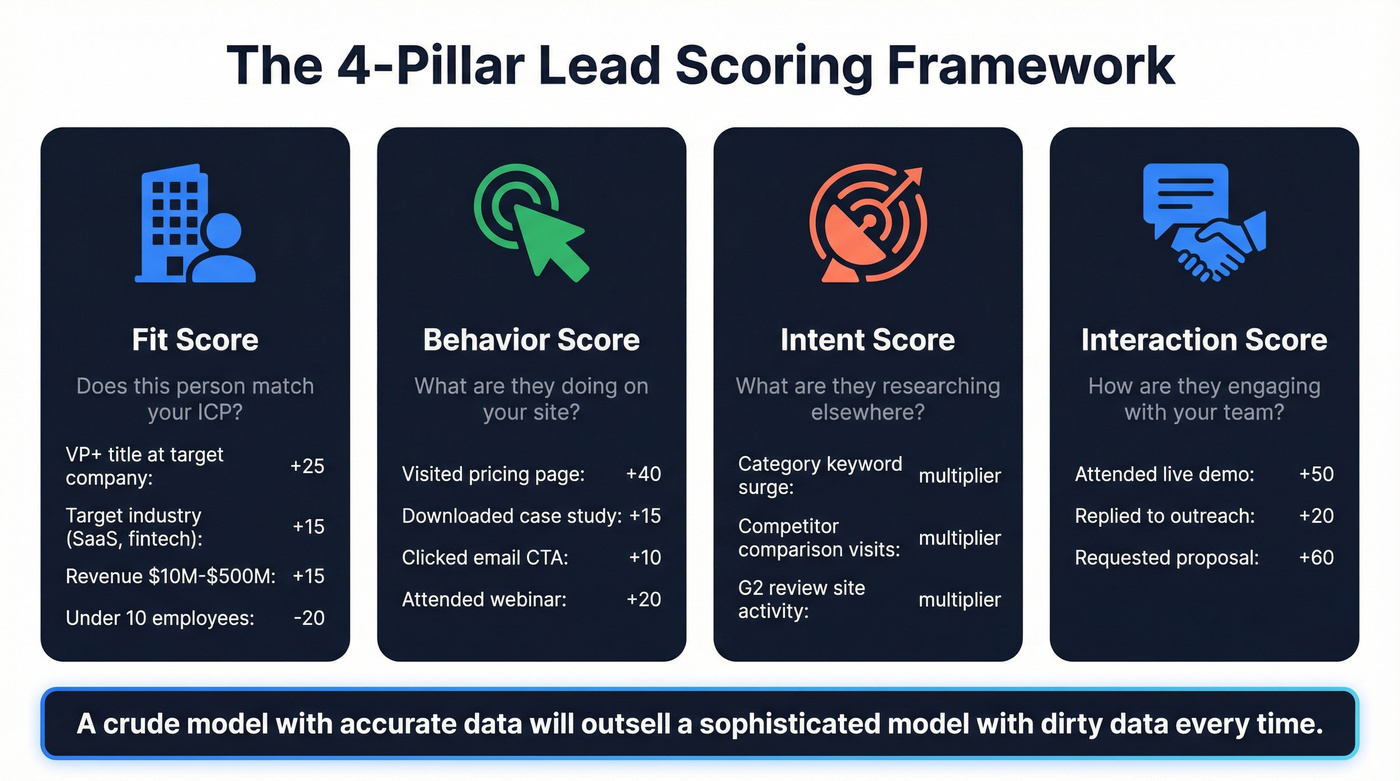

- Start with a manual, rule-based model using four pillars: fit, behavior, intent, and interaction.

- Set a handoff threshold with SLA windows - top-tier leads get a call within 24 hours, next-tier within 48. Get sales buy-in. Review every 90 days.

- Don't pay for AI/predictive scoring until you've got hundreds of closed deals to train on.

Most guides skip that first bullet entirely. It's the one that matters most.

What Lead Scoring Actually Does

Lead scoring assigns numerical values to prospects based on how closely they match your ideal customer profile and how engaged they are with your brand. Most teams use a 0-100 scale, where higher scores signal purchase readiness.

The business case is straightforward. Appcues reduced customer acquisition cost by 80% through lead scoring. LearnUpon lifted MQL-to-SQL conversion rates by 30%. ZoomInfo saw a 45% increase in sales conversions after implementation. When reps focus on the right leads, everything downstream improves.

Fix Your Data First

Here's a scenario we see constantly. Marketing hands sales 50 MQLs this week. Sales starts working them and discovers 8 have disconnected phone numbers, 5 changed roles since the data was last refreshed, and 3 work at companies well below the ICP floor. That's 16 leads - 32% - that should never have been scored highly in the first place.

A perfectly weighted scoring model fed stale data doesn't just fail quietly. It confidently prioritizes the wrong people. Your reps trust the scores, invest time in outreach, and get nothing back. After a few weeks of this, they stop trusting the scores entirely - and now you've got a dead system nobody uses.

The fix: verify and enrich your data before it enters the scoring model. Prospeo runs a 7-day refresh cycle on its 300M+ professional profiles, compared to the 6-week industry average, so the job titles, company data, and contact info feeding your model stay current. Each enrichment returns 50+ data points per contact at a 98% email accuracy rate. When your model scores a VP of Sales at a Series B company, you can trust she's actually still there. (If you want the benchmarks behind this, see B2B contact data decay.)
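A pre-scoring gate can be sketched in a few lines. This is a minimal illustration, not a description of any vendor's pipeline: the `LeadRecord` fields, the 42-day freshness window (six weeks), and the 10-employee floor are all assumptions you'd replace with your own rules.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class LeadRecord:
    email_verified: bool   # hypothetical flag set by your verification step
    phone_connected: bool  # hypothetical flag set by your verification step
    last_refreshed: date   # when title and company data were last confirmed
    employee_count: int


def is_scoreable(lead: LeadRecord, today: date,
                 max_age_days: int = 42, min_employees: int = 10) -> bool:
    """Return True only if the record is reachable, fresh, and above the ICP floor."""
    age_days = (today - lead.last_refreshed).days
    reachable = lead.email_verified or lead.phone_connected
    return (reachable
            and age_days <= max_age_days
            and lead.employee_count >= min_employees)
```

Records that fail the gate go to re-enrichment instead of the scoring model, so a high score always implies a contact your reps can actually reach.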

The 4-Pillar Scoring Framework

The modern approach goes beyond basic demographics and page views. Salespanel's research outlines four pillars that capture the full picture of buyer readiness: fit, behavior, intent, and interaction. Here's how to build each one with point values you can steal.

A crude model with accurate data will outsell a sophisticated model with dirty data every single time. We've watched it happen across dozens of implementations.

Fit Score (Firmographic + Demographic)

Fit scoring answers one question: does this person match your ICP on paper?

Example point values for a mid-market B2B SaaS company:

- VP+ title at a target company: +25

- Target industry like SaaS or fintech: +15

- Company revenue $10M-$500M: +15

- Company under 10 employees: -20

- Non-decision-maker title like intern or student: -15

These weights should reflect your actual closed-won data. If 70% of your deals come from Directors and above, weight seniority heavily. (If you need a tighter definition of ICP inputs, use firmographic and technographic data.)
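As a rough sketch, the example point values above translate into a small rule function. The seniority buckets and the function signature are assumptions for illustration, and the "at a target company" condition on the title rule is simplified away here.

```python
TARGET_INDUSTRIES = {"saas", "fintech"}
SENIOR_TITLES = {"vp", "svp", "c_level"}     # assumption: your own seniority buckets
NON_BUYER_TITLES = {"intern", "student"}


def fit_score(seniority: str, industry: str, revenue_usd: float, employees: int) -> int:
    """Sum the example fit rules; negative rules subtract from the total."""
    level = seniority.lower()
    score = 0
    if level in SENIOR_TITLES:
        score += 25   # VP+ title
    if level in NON_BUYER_TITLES:
        score -= 15   # non-decision-maker title
    if industry.lower() in TARGET_INDUSTRIES:
        score += 15   # target industry
    if 10_000_000 <= revenue_usd <= 500_000_000:
        score += 15   # revenue in the $10M-$500M band
    if employees < 10:
        score -= 20   # company too small
    return score
```

Keeping each rule on its own line makes the quarterly reweighting a one-number change rather than a rewrite.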

Behavior Score (Engagement)

Not all actions are equal. A pricing page visit signals far more intent than a blog read.

- Visited pricing page: +40

- Downloaded case study: +15

- Opened email: +3

- Clicked email CTA: +10

- Attended webinar: +20

Cap repeated behaviors to prevent score inflation. Someone who opens 30 nurture emails isn't 30x more likely to buy - cap email opens at +15 total and start decaying engagement points after 6 months. (For a deeper breakdown of what to score, see explicit vs implicit buying signals.)
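Here's one way the capping logic might look. The event names are made up for this sketch, and the +40 cap on pricing page visits is an assumption chosen so that two visits still score +40, as in the worked example later in this guide; only the +15 cap on email opens comes from the rule above.

```python
from collections import Counter

POINTS = {
    "pricing_page": 40,
    "case_study": 15,
    "email_open": 3,
    "email_click": 10,
    "webinar": 20,
}
# Per-event ceilings. Only the email_open cap comes from the article;
# the pricing_page cap is an assumption.
CAPS = {"email_open": 15, "pricing_page": 40}


def behavior_score(events: list[str]) -> int:
    """Total engagement points, with repeated events limited by CAPS."""
    total = 0
    for event, count in Counter(events).items():
        raw = POINTS.get(event, 0) * count   # unknown events score 0
        cap = CAPS.get(event)
        total += raw if cap is None else min(raw, cap)
    return total
```

Thirty email opens and five email opens both land on the same +15, which is the point: repetition stops adding signal after a while.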

Intent Score (External Signals)

Intent data captures what prospects research outside your ecosystem - category keyword surges, competitor comparison page visits, review-site activity. When three people at one company are all researching "CRM migration" on G2, that's a signal your scoring model should capture.

Bombora is a widely used intent data provider that tracks topic-level research activity across a co-op of B2B publishers. Weight intent signals as multipliers rather than standalone scores. A lead with strong fit plus active intent is gold. Intent alone without fit is just someone doing research. (If you want to operationalize this, start with intent signals.)
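To make "multiplier, not standalone score" concrete, here's a minimal sketch. The multiplier values, the intent labels, and the `min_fit` gate are all hypothetical; the only idea taken from the text is that intent amplifies a lead that already fits and does nothing for one that doesn't.

```python
# Hypothetical multipliers keyed by intent strength.
INTENT_MULTIPLIERS = {"none": 1.0, "moderate": 1.25, "surging": 1.5}


def score_with_intent(fit: int, engagement: int, intent: str, min_fit: int = 30) -> int:
    """Amplify the base score by intent, but only when fit clears min_fit."""
    base = fit + engagement
    if fit < min_fit:
        return base   # intent without fit is just research
    return round(base * INTENT_MULTIPLIERS[intent])
```

With these placeholder numbers, a lead with 50 fit and 30 engagement goes from 80 to 120 during an intent surge, while a poor-fit lead with the same surge stays where it was.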

Interaction Score (Omnichannel)

- Attended a live demo: +50

- Replied to outreach email: +20

- Had a chatbot conversation: +10

- Requested a proposal: +60

If reps aren't logging touchpoints in your CRM, this pillar breaks down immediately. Consider running multiple scoring profiles - one for fit, one for engagement - so you can diagnose where leads are strong and where they're weak. This also lets you build separate models for new business versus expansion. (If you're aligning this across teams, use a revenue operations alignment cadence.)
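One way to keep separate profiles is to store fit and engagement as distinct fields and only add them at read time. The class and event names below are illustrative assumptions; the point values are the interaction examples above.

```python
from dataclasses import dataclass

INTERACTION_POINTS = {
    "live_demo": 50,
    "email_reply": 20,
    "chatbot": 10,
    "proposal_request": 60,
}


@dataclass
class ScoreProfile:
    fit: int = 0          # firmographic and demographic points
    engagement: int = 0   # behavior and interaction points

    def add_interaction(self, kind: str) -> None:
        """Add points for a logged touchpoint; unknown kinds score 0."""
        self.engagement += INTERACTION_POINTS.get(kind, 0)

    @property
    def total(self) -> int:
        return self.fit + self.engagement
```

Because the two numbers never merge in storage, you can always tell whether a score of 120 means "great fit, barely engaged" or the reverse.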

Your scoring model just flagged a VP as a hot lead - but she left that company 3 months ago. Prospeo's 7-day data refresh cycle and 98% email accuracy mean the fit scores, job titles, and contact info feeding your model are actually current. 50+ data points per enrichment at $0.01/email.

Stop scoring stale data. Start scoring verified buyers.

Worked Example: Scoring a Real Lead

Lead: Sarah Chen, Director of Revenue Operations at a $45M fintech company.

| Pillar | Signal | Points |
|---|---|---|
| Fit | Director-level title | +20 |
| Fit | Target industry (fintech) | +15 |
| Fit | Revenue $10M-$500M | +15 |
| Behavior | Visited pricing page twice | +40 |
| Behavior | Downloaded ROI calculator | +15 |
| Intent | Company researching "sales automation" | +10 |
| Interaction | Replied to SDR email | +20 |
| Total | | 135 |

With a threshold of 75+ = MQL, Sarah's a clear handoff. (Raw points can exceed 100; if you report on a 0-100 scale, cap the total at 100.) Now apply the part most teams skip - negative scoring and decay:

- Lead uses a competitor domain email: -1,000 (instant disqualification)

- Lead visited your careers page: -30 (job seeker, not buyer)

- Consumer email address like @gmail.com: -15

For decay, halve points on any action older than 6 months and zero anything past 12. Separating fit criteria from engagement criteria into distinct worksheets or CRM views makes this maintenance far easier.
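The negative rules and the decay schedule can be sketched as two small functions. Treating 6 and 12 months as 182 and 365 days is an approximation, and the consumer-domain list and function names are assumptions for illustration.

```python
from datetime import date

CONSUMER_DOMAINS = {"gmail.com", "yahoo.com", "outlook.com"}  # illustrative, not exhaustive


def decayed_points(points: int, action_date: date, today: date) -> float:
    """Full points up to ~6 months, half up to ~12 months, zero after that."""
    age_days = (today - action_date).days
    if age_days > 365:
        return 0.0
    if age_days > 182:
        return points / 2
    return float(points)


def negative_adjustments(email: str, visited_careers: bool,
                         competitor_domains: set[str]) -> int:
    """Sum the penalties from the negative-scoring rules above."""
    domain = email.rsplit("@", 1)[-1].lower()
    penalty = 0
    if domain in competitor_domains:
        penalty -= 1000   # instant disqualification
    if domain in CONSUMER_DOMAINS:
        penalty -= 15
    if visited_careers:
        penalty -= 30     # likely a job seeker
    return penalty
```

Applying decay per action, rather than to the lead's total, means a fresh pricing page visit isn't dragged down by a stale webinar from last year.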

Tie scores to SLA windows. Top-tier leads get a call within 24 hours. Next-tier leads get outreach within 48 hours. The consensus across RevOps communities on Reddit is clear: the single biggest reason scoring systems die is that sales has no contractual obligation to act on the scores. An SLA fixes that. (If you need the routing logic, see AI lead qualification.)
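A sketch of the SLA mapping, assuming a top-tier cutoff of 90. That number is hypothetical; the article only fixes the 75 MQL threshold and the 24- and 48-hour windows.

```python
from datetime import datetime, timedelta
from typing import Optional


def sla_hours(score: int, top_tier: int = 90, mql_threshold: int = 75) -> Optional[int]:
    """Return the response window in hours, or None if the lead stays with marketing."""
    if score >= top_tier:
        return 24
    if score >= mql_threshold:
        return 48
    return None


def respond_by(score: int, handed_off_at: datetime) -> Optional[datetime]:
    """Deadline for first sales contact, measured from the handoff time."""
    hours = sla_hours(score)
    return None if hours is None else handed_off_at + timedelta(hours=hours)
```

Stamping a concrete deadline on each handed-off lead gives both teams something to report against in the quarterly review.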

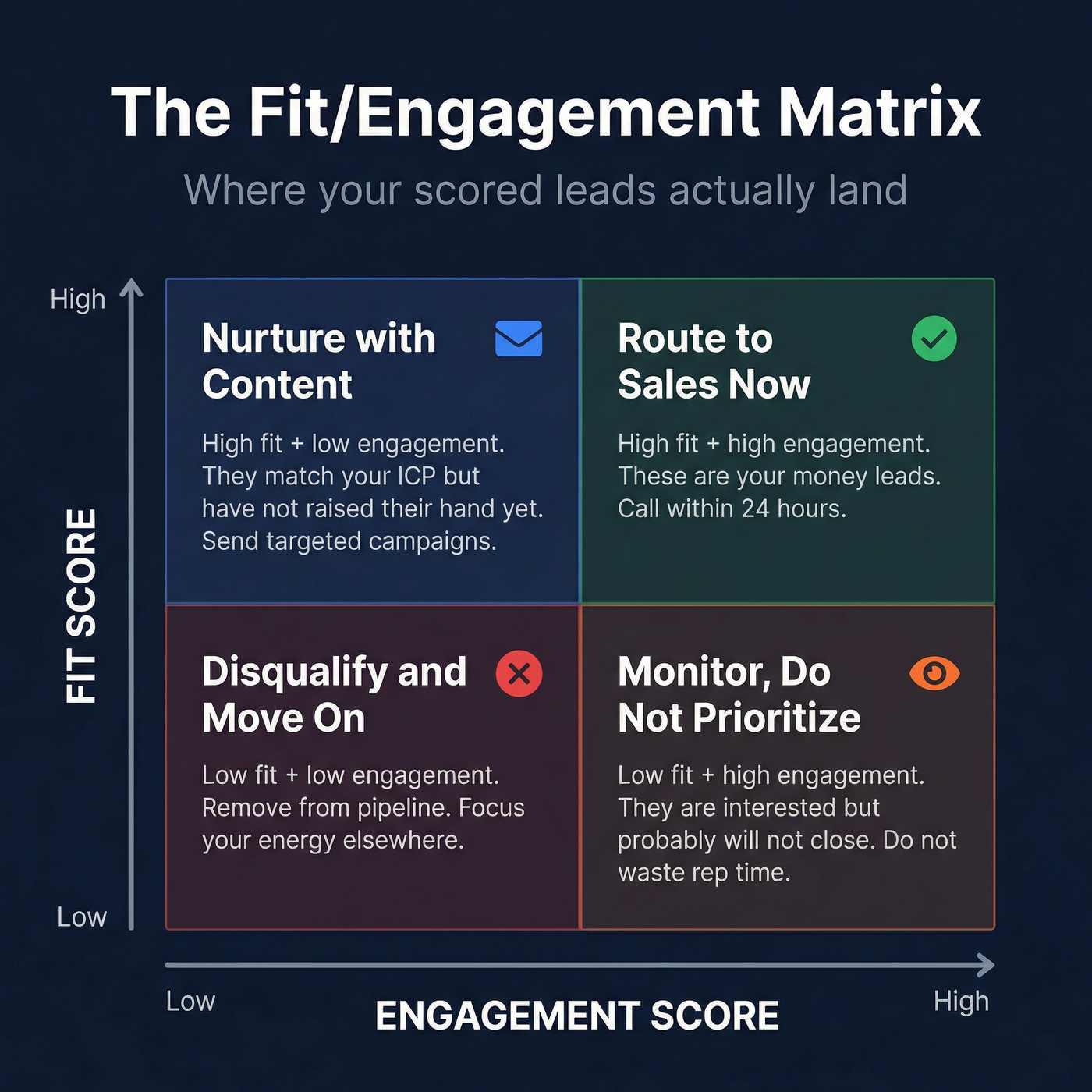

The Fit/Engagement Matrix

Think of your scored leads in four quadrants:

- High fit + high engagement: route to sales immediately.

- High fit + low engagement: nurture with targeted content; they match your ICP but haven't raised their hand yet.

- Low fit + high engagement: monitor but don't prioritize; they're interested but won't close.

- Low fit + low engagement: disqualify and move on.

This matrix prevents the most common scoring mistake: treating a highly engaged bad-fit lead as a priority. (For a template-driven approach, use lead prioritization.)
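The matrix reduces to two comparisons. The cutoffs of 40 below are placeholders, not recommendations; set them from your own fit and engagement distributions.

```python
def quadrant_action(fit: int, engagement: int,
                    fit_cutoff: int = 40, engagement_cutoff: int = 40) -> str:
    """Map separate fit and engagement scores to one of the four quadrant actions."""
    high_fit = fit >= fit_cutoff
    high_engagement = engagement >= engagement_cutoff
    if high_fit and high_engagement:
        return "route_to_sales"
    if high_fit:
        return "nurture"
    if high_engagement:
        return "monitor"
    return "disqualify"
```

Note that this only works if fit and engagement are stored separately; a single blended score can't tell a bad-fit enthusiast from a good-fit lurker.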

When to Skip Scoring

Not every team needs a formal scoring program. If you're getting fewer than 50 leads per month, sell a single product, and have a short sales cycle, your reps can eyeball priority without a model. Adding scoring infrastructure to a low-volume funnel creates overhead without meaningful lift.

You're also not ready for AI-powered predictive scoring until you've got hundreds to thousands of closed deals with clean CRM data. Demandbase's AI scoring outputs qualification bands - 95+ = Highly Likely, 50-94 = Likely, under 50 = Unlikely - but those bands are only useful if the model trained on accurate historical outcomes. Rule-based scoring with clean data outperforms AI scoring with dirty data. Start manual. Get the data right. Graduate to predictive when you've earned it.

Lead Scoring Tools and Costs

Your scoring stack has two cost components: the platform that runs the model and the data that feeds it. (If you're building the full stack, start with a lean B2B sales stack.)

| Tool | Scoring Type | Best For | Starting Price |
|---|---|---|---|
| Prospeo | Data layer | Accurate scoring inputs | Free tier; ~$0.01/email |
| HubSpot | Rule-based + predictive | Mid-market, one platform | $800/mo |
| ActiveCampaign | Rule-based | SMBs on a budget | ~$49/mo |
| Salesforce Einstein | Predictive AI | Salesforce orgs | $150-300/user/mo |
| Demandbase | Account-level AI | ABM teams | ~$30K-100K+/yr |
| Marketo (Adobe) | Rule-based + predictive | Multi-product orgs | ~$1K-3K+/mo |

HubSpot Marketing Hub

Professional plans start at $800/month billed annually, with Enterprise at $3,600/month. The Lead & Health Scoring apps separate fit from engagement, which is the right architectural choice. HubSpot's ecosystem is its biggest strength - scoring, nurture, CRM, and reporting all live in one place. The downside: $800/month is real money if scoring is your primary use case, and the most advanced predictive features require Enterprise tier.

ActiveCampaign

Use this if you're an SMB that needs scoring without enterprise pricing. Plans with built-in scoring start around $49/month, and the native CRM means you don't need a separate tool. Skip this if you're running complex multi-product scoring or need account-level models.

Salesforce Einstein

Already in Salesforce? Einstein is the path of least resistance. Expect $150-300/user/month for a typical Sales Cloud setup. Models train on your CRM data automatically and surface scores directly on lead records. The catch: Salesforce requires commitment, both financially and operationally.

Demandbase

Account-level AI scoring for ABM teams managing hundreds of target accounts with multi-threaded buying committees. Pricing runs $30K-$100K+/year - squarely enterprise territory.

Marketo (Adobe)

Supports multiple scoring profiles per product line, which is useful if you sell three products to three different ICPs. Quote-based pricing, typically $1K-$3K+/month.

Why Most Scoring Models Fail

Scoring implementations fail for three predictable reasons, and we've seen the same ones almost every time.

Bad data. If 20% of your job titles are wrong and 15% of your emails bounce, your model is scoring ghosts. This is the most common failure mode and the easiest to fix - yet most teams skip it because data hygiene isn't as exciting as building the model. A common complaint in r/RevOps threads: teams spend weeks calibrating point values and zero hours verifying the data those points are assigned to. (If you need a checklist, use CRM verify.)

Sales ignores the scores. This usually happens when marketing builds the model in isolation, sets arbitrary thresholds, and never validates against actual closed-won data. The fix is a formal SLA where marketing defines the scoring criteria, sales agrees to work leads above the threshold within defined time windows, and both teams review results quarterly.

If your sales team ignores your lead scores, the problem isn't the sales team.

Set-it-and-forget-it decay. Buyer behavior changes. Your ICP shifts. New competitors emerge. A scoring model perfectly calibrated in January is stale by July. Review every 90 days minimum. Compare your highest-scored leads against actual closed-won deals. If they don't correlate, recalibrate.
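A quarterly check can be as simple as comparing win rates above and below the threshold. This is a rough sketch: the input shape (score, won) is an assumption about your CRM export, and the 10% floor borrows the rule of thumb from the FAQ below.

```python
def conversion_rates(leads: list[tuple[int, bool]], threshold: int = 75) -> dict[str, float]:
    """Closed-won rate for leads at or above the threshold versus below it."""
    def rate(outcomes: list[bool]) -> float:
        return sum(outcomes) / len(outcomes) if outcomes else 0.0

    above = [won for score, won in leads if score >= threshold]
    below = [won for score, won in leads if score < threshold]
    return {"above": rate(above), "below": rate(below)}


def needs_recalibration(leads: list[tuple[int, bool]],
                        threshold: int = 75, min_rate: float = 0.10) -> bool:
    """Flag the model if high scorers convert poorly or no better than low scorers."""
    rates = conversion_rates(leads, threshold)
    return rates["above"] < min_rate or rates["above"] <= rates["below"]
```

If low-scored leads close at the same rate as high-scored ones, the weights aren't separating buyers from browsers, and that's the signal to recalibrate.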

Sarah Chen scored 135 in your model. Great - but does your data provider confirm she's still Director of RevOps at that fintech company? Prospeo returns 50+ verified data points per contact with a 92% API match rate, so every pillar of your scoring framework runs on reality, not last quarter's snapshot.

Feed your scoring model data that's 7 days fresh, not 6 weeks stale.

FAQ

What's a good MQL handoff threshold?

Most B2B teams start at 75 out of 100 and adjust quarterly. The exact number matters less than calibrating it against closed-won data every 90 days. If leads scoring 75+ convert below 10%, raise the threshold or reweight your criteria toward stronger buying signals.

Should I use AI or rule-based scoring?

Start rule-based. Switch to predictive only after you've accumulated 500+ closed deals with clean CRM data. AI scoring on dirty data consistently underperforms manual scoring on clean data - the model amplifies whatever you feed it, including errors.

How often should I recalibrate my scoring model?

Review every 90 days minimum. Pull a report comparing your highest-scored leads against actual closed-won deals from the same period. If the correlation is weak, recalibrate your weights - what worked last quarter won't necessarily reflect how buyers behave today.

How does data quality affect scoring accuracy?

A lead scored 95/100 with a bounced email and outdated job title wastes more rep time than an unscored lead. Regardless of your enrichment provider, verify emails, titles, and company data on a weekly cadence rather than waiting for quarterly cleanups. Stale data doesn't just reduce accuracy - it actively erodes your team's trust in the entire system.