How to Build a Lead Qualification Sales Funnel That Actually Converts

A sales rep with a 50% stage-to-stage conversion rate across four funnel stages needs 160 pitches to close 10 deals. Drop that conversion to 33% - closer to reality for most teams - and you're looking at 810 pitches for the same 10 wins. That's the compounding math of a leaky funnel, and it's why building a lead qualification sales funnel with real gates at every stage is the single highest-impact activity in your pipeline.

The gap between those two numbers is entirely about who you let in. B2B SaaS websites convert visitors to leads at just 1.1%. Legal services hit 7.4%. But regardless of your top-of-funnel rate, the real damage happens downstream when unqualified leads clog every stage and burn rep hours on deals that were never going to close.

Here's the short version:

- Pick ONE framework - BANT for SMB, CHAMP for mid-market, MEDDIC for enterprise - and enforce it consistently across every rep.

- Build a lead scoring model this week. A starter rubric is below. Don't overthink it. (If you need a deeper walkthrough, see our lead scoring guide.)

- Write an MQL-to-SQL handoff SLA with a 5-minute response target for high-intent actions like demo requests. Use handoff email templates to standardize the motion.

- Fix your data upstream. Qualification falls apart fast when bounce rates hit the 30-40% range. Scoring models and SLAs are built on sand without verified contact data (see email bounce rate benchmarks and fixes).

The Math That Changes Everything

Let's make the skinny funnel argument concrete. Assume four stages between first conversation and closed deal. If your team needs 10 closed deals this quarter and your stage-to-stage conversion averages 50%, you need 10 ÷ 0.5⁴ = 160 qualified conversations at the top. That's manageable. But if conversion drops to one in three (33%) - because reps are pitching unqualified prospects, chasing ghosts, or working stale data - you need 10 × 3⁴ = 810 conversations for the same result.

The difference isn't effort. It's filtration.
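The arithmetic behind 160 and 810 fits in a few lines. This is a sketch that assumes four stages with the same conversion rate at every gate, which is what those two numbers imply; `required_top_of_funnel` is a hypothetical helper name, not part of any tool. It uses exact fractions because float rounding error could nudge a whole-number answer like 810 just above 810 and make the round-up step return 811.

```python
import math
from fractions import Fraction


def required_top_of_funnel(target_deals: int, stage_rate: Fraction, stages: int) -> int:
    """Conversations needed at the top so that `target_deals` survive
    `stages` consecutive gates, each converting at `stage_rate`."""
    if not 0 < stage_rate <= 1:
        raise ValueError("stage_rate must be in (0, 1]")
    # Survivors after k stages = top * rate**k, so top = deals / rate**k.
    # Fractions keep the division exact before rounding up.
    return math.ceil(Fraction(target_deals) / stage_rate ** stages)


print(required_top_of_funnel(10, Fraction(1, 2), 4))  # 160
print(required_top_of_funnel(10, Fraction(1, 3), 4))  # 810
```

Plug in a literal 33% (`Fraction(33, 100)`) and the answer is 844, so read the 810 figure as "one in three" rather than as exactly 33%.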

The consensus on r/sales is clear: disqualify early and disqualify often. Every unqualified lead that survives to the demo stage costs you a slot that could've gone to a real buyer. The first and most important step in qualifying leads isn't generating more - it's removing the ones that were never going to buy.

What Qualification Actually Means Inside the Funnel

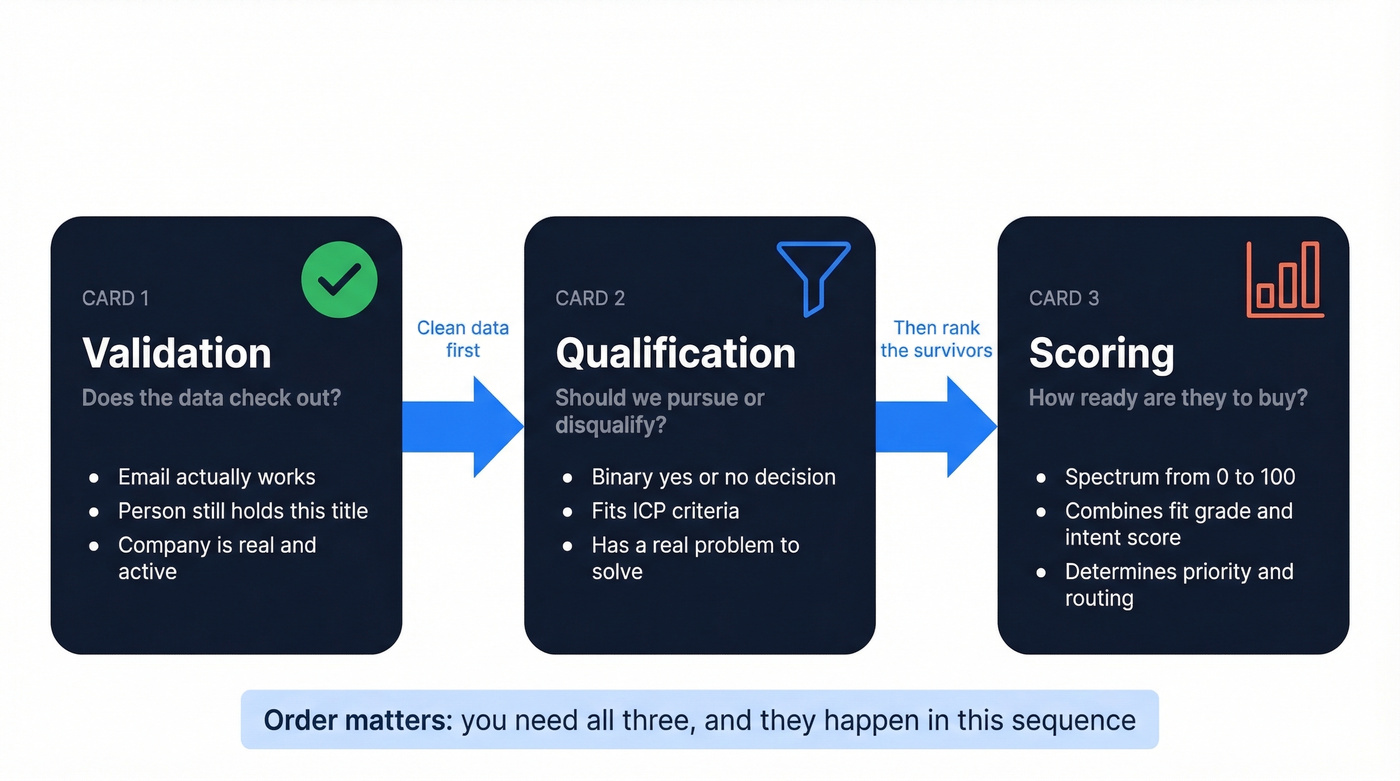

Qualification is a binary decision: pursue or disqualify. Scoring is a spectrum - a 0-to-100 ranking of how ready a qualified lead is to buy. Validation is data verification - confirming the email works, the person still holds that title, the company is real. You need all three, and validation has to come first: a pursue decision or a score attached to a contact you can't reach means nothing.

Buyers are further along than most teams realize. 92% start with at least one vendor already in mind, and the winning vendor is on the Day One shortlist 95% of the time. First contact with a vendor happens 61% of the way through the buying journey. The average B2B cycle runs 10.1 months. By the time a prospect fills out your form, they've already done the homework - your qualification process needs to match that reality, not assume you're educating from scratch.

Lead Types Beyond MQL and SQL

Most teams operate with two buckets: MQL and SQL. That's not enough. Here's the full lifecycle, with definitions that actually mean something operationally.

| Lead Type | Definition | Trigger |
|---|---|---|
| MQL | Fits ICP + shows engagement | Score threshold hit |
| SAL | Sales-reviewed, accepted | Rep confirms fit, commits to follow up |
| SQL | Strong buying intent confirmed | Discovery complete, next step booked |
| PQL | Product usage signals readiness | Feature adoption / usage threshold |
| QO | Qualified outbound via intent signals | Intent + ICP match, no inbound action |

The SAL stage is the one most teams skip - and it's the one that fixes the biggest handoff problem. SAL means a rep has looked at the lead, confirmed it's worth pursuing, and committed to a follow-up within a defined timeframe. Without it, MQLs get tossed over the wall and die in a queue. With a formal SAL gate, every lead in a rep's queue has a named owner and a deadline, so far fewer rep hours go to leads nobody has vetted.

Salesforce's State of Sales data shows reps spend just 28% of their time actually selling. The rest goes to admin, nurture, and figuring out who's ready. A proper lifecycle with SAL as a formal gate reclaims hours every week.
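One way to make SAL a real gate rather than a label is to encode the lifecycle as a small state machine in whatever system routes your leads. The sketch below is illustrative only: the `Lead` fields, the transition map, and the 24-hour default follow-up window are assumptions, not any CRM's object model, and the PQL and QO entry points are left out for brevity. It enforces one rule from the table above: an MQL can't jump to SQL until a named rep has accepted it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Stage(Enum):
    MQL = "MQL"
    SAL = "SAL"
    SQL = "SQL"
    DISQUALIFIED = "Disqualified"


# Allowed moves: SQL is reachable only through SAL, and any open stage
# can be disqualified.
ALLOWED = {
    Stage.MQL: {Stage.SAL, Stage.DISQUALIFIED},
    Stage.SAL: {Stage.SQL, Stage.DISQUALIFIED},
    Stage.SQL: {Stage.DISQUALIFIED},
    Stage.DISQUALIFIED: set(),
}


@dataclass
class Lead:
    email: str
    stage: Stage = Stage.MQL
    owner: Optional[str] = None
    follow_up_due: Optional[datetime] = None


def move(lead: Lead, target: Stage) -> None:
    """Change stage only if the lifecycle allows it."""
    if target not in ALLOWED[lead.stage]:
        raise ValueError(f"{lead.stage.value} -> {target.value} is not allowed")
    lead.stage = target


def accept(lead: Lead, rep: str, now: datetime, follow_up_hours: int = 24) -> None:
    """The SAL gate: a named rep accepts the lead and commits to a follow-up time."""
    move(lead, Stage.SAL)
    lead.owner = rep
    lead.follow_up_due = now + timedelta(hours=follow_up_hours)
```

Calling `move(lead, Stage.SQL)` on a fresh MQL raises `ValueError`; the lead has to pass through `accept()` first, which is what puts an owner and a follow-up deadline on every handoff.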

Funnel Benchmarks for 2026

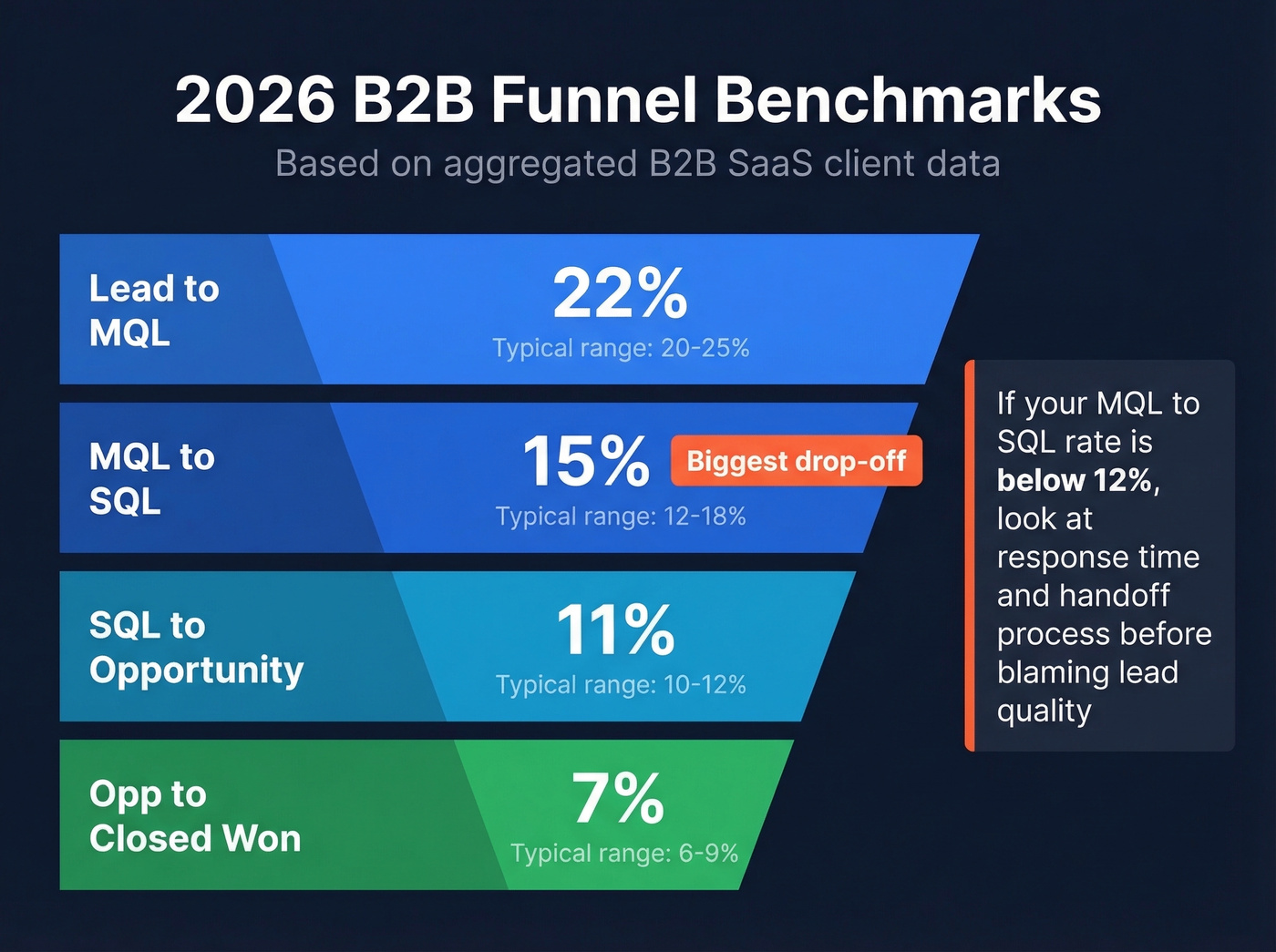

Here's where your funnel should land, based on MarketJoy's aggregated client data:

| Stage | Benchmark | Typical Range |
|---|---|---|
| Lead to MQL | 22% | 20-25% |
| MQL to SQL | 15% | 12-18% |
| SQL to Opportunity | 11% | 10-12% |
| Opp to Closed Won | 7% | 6-9% |

Every stage loses most of its volume, but MQL to SQL is the drop-off teams control most directly. That's not a marketing problem - it's a handoff problem. MQL-to-SQL conversion also varies significantly by motion: SaaS short-cycle teams see 15-25%, enterprise runs 10-20%, and high-velocity inside sales can hit 25-35%.

If your MQL-to-SQL rate is below 12%, don't blame lead quality first. Look at response time, handoff process, and whether your MQL definition actually means anything. We've seen teams double this number just by adding a 5-minute response SLA on demo requests. (To benchmark your full funnel, track funnel metrics consistently.)
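To see where your own funnel sits against the ranges above, divide each stage's count by the one before it and compare. A minimal sketch, assuming you can export how many leads from one cohort reached each stage (leads, MQLs, SQLs, opportunities, closed-won) and that the table's figures are stage-to-stage rates; `stage_rates` and `below_range` are hypothetical names, and a zero count at any stage would need guarding before division.

```python
# Typical ranges from the benchmark table above, as (low, high) fractions.
BENCHMARKS = {
    "Lead to MQL": (0.20, 0.25),
    "MQL to SQL": (0.12, 0.18),
    "SQL to Opportunity": (0.10, 0.12),
    "Opp to Closed Won": (0.06, 0.09),
}


def stage_rates(counts: list[int]) -> dict[str, float]:
    """counts = [leads, MQLs, SQLs, opportunities, closed-won] for one cohort."""
    if len(counts) != len(BENCHMARKS) + 1:
        raise ValueError("expected five counts: leads, MQLs, SQLs, opps, won")
    return {
        stage: reached / entered
        for stage, entered, reached in zip(BENCHMARKS, counts, counts[1:])
    }


def below_range(counts: list[int]) -> list[str]:
    """Stages converting below the low end of their typical range."""
    rates = stage_rates(counts)
    return [stage for stage, rate in rates.items() if rate < BENCHMARKS[stage][0]]


print(below_range([5000, 1100, 110, 12, 1]))  # ['MQL to SQL']
```

In that example only MQL to SQL (10%) falls below range, which is the case where response time and the handoff deserve a look before lead quality does.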

Which Framework to Use

BANT, CHAMP, and MEDDIC

| Framework | Best For | Strengths | Weaknesses |
|---|---|---|---|
| BANT | High-volume SMB (<$25K ACV) | Fast, simple, scalable | Misses complexity |
| CHAMP | Mid-market consultative | Challenge-first, consultative | Requires more skill, slower |
| MEDDIC | Enterprise (>$50K ACV) | Thorough, multi-stakeholder | Training overhead |

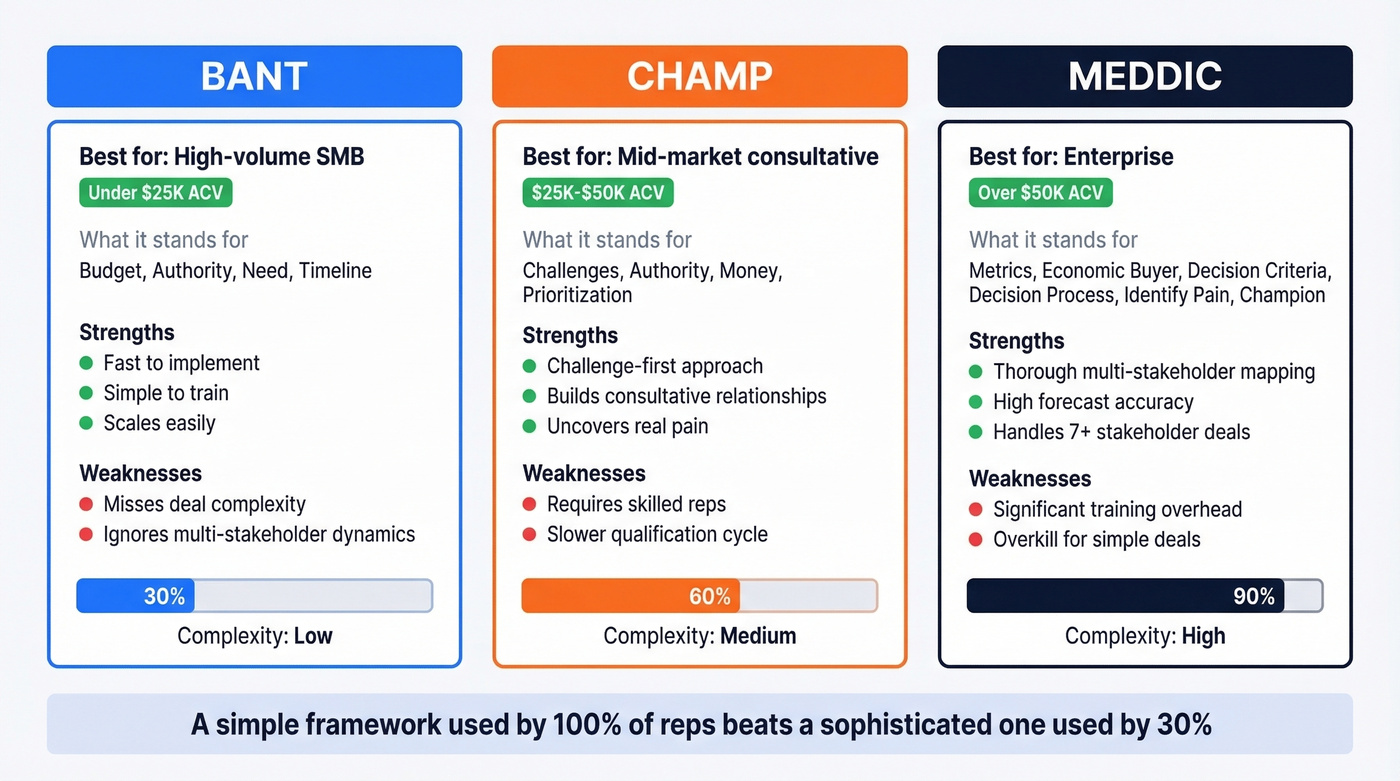

Modern B2B purchases involve around 7 stakeholders on average, which is exactly why MEDDIC's multi-stakeholder mapping exists. If your deals routinely involve 5+ decision-makers, BANT will leave you blind to half the buying committee.

The r/sales consensus is that most methodologies boil down to the same fundamentals: need, budget, stakeholders, timeline. The framework you pick matters less than whether your team actually uses it. One company saw forecast accuracy jump from 62% to 89% after standardizing on a single framework - not because the framework was magic, but because consistency created predictability.

A simple framework used by 100% of reps beats a sophisticated one used by 30%.

Here's the thing: most teams closing deals under $15K don't need a formal framework at all. They need three questions answered before any deal advances - requirements, budget, and competition - and the discipline to disqualify when the answers aren't there.

The Practitioner's Alternative

If BANT/CHAMP/MEDDIC feel like overkill, there's a stripped-down model that's been battle-tested for 20+ years: (1) Requirements - what do they need, why, and by when? (2) Budget - can they pay, and have they paid for something like this before? (3) Competition - who else are they evaluating, and where do you win or lose?

That's it. If you can't answer all three after discovery, you don't have a qualified opportunity. The relationship piece? Nice-to-have, not a qualification criterion.
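If you want the three-question rule enforced rather than remembered, it translates directly into a check a CRM validation rule or a pre-advance script can run. A minimal sketch: the `Discovery` record and its free-text fields are hypothetical, and "answered" here only means non-blank, so the check can't judge whether an answer is any good.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Discovery:
    requirements: Optional[str] = None  # what they need, why, and by when
    budget: Optional[str] = None        # can they pay; have they bought something similar
    competition: Optional[str] = None   # who else they're evaluating; where you win or lose


def missing_answers(d: Discovery) -> list[str]:
    """Names of the three questions still unanswered (None or blank)."""
    return [
        question for question in ("requirements", "budget", "competition")
        if not (getattr(d, question) or "").strip()
    ]


def is_qualified(d: Discovery) -> bool:
    """Qualified only when all three questions have an answer."""
    return not missing_answers(d)
```

Surfacing `missing_answers()` on the deal record, rather than just a yes/no flag, tells the rep exactly which question to bring to the next call.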

Verify Adoption with Call Reviews

Picking a framework is step one. Knowing whether reps actually use it is step two. Record discovery calls and review a random sample each week against your chosen framework's criteria. Conversation intelligence tools like Gong or Chorus can flag calls where budget or timeline questions never come up. In our experience, teams that audit 10% of calls weekly catch framework drift before it tanks conversion rates.
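Gong and Chorus handle topic detection themselves; if you're auditing recordings by hand, the weekly sample and a crude first-pass filter can be sketched like this. The keyword lists and function names are assumptions standing in for real conversation-intelligence tagging, not either vendor's API. Plain substring matching will miss paraphrases and over-match ("quarter" also matches "headquarters"), so treat a flag as a reason to listen, not a verdict.

```python
import math
import random

# Hypothetical keyword lists standing in for real topic detection.
REQUIRED_TOPICS = {
    "budget": ("budget", "price", "cost", "spend"),
    "timeline": ("timeline", "deadline", "by when", "quarter"),
}


def weekly_sample(call_ids: list[str], percent: int = 10, seed=None) -> list[str]:
    """Pick roughly `percent`% of this week's calls at random (at least one)."""
    if not call_ids:
        return []
    k = max(1, math.ceil(len(call_ids) * percent / 100))
    return random.Random(seed).sample(call_ids, min(k, len(call_ids)))


def missing_topics(transcript: str) -> list[str]:
    """Framework topics with no matching keyword anywhere in the transcript."""
    text = transcript.lower()
    return [
        topic for topic, keywords in REQUIRED_TOPICS.items()
        if not any(keyword in text for keyword in keywords)
    ]


print(missing_topics("Walked through the integration roadmap"))  # ['budget', 'timeline']
```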

If you want a tighter structure for discovery, use a discovery questions framework and standardize it across reps.

You just read it: qualification falls apart when bounce rates hit 30-40%. Prospeo delivers 98% email accuracy with a 7-day refresh cycle, so your scoring models actually work. Teams using Prospeo cut bounce rates from 35% to under 4%.

Stop qualifying leads you can't even reach.

Discovery Questions That Qualify

The first answer on a discovery call is almost never the real answer. Your job is to probe past the surface.

Problem:

- What triggered this evaluation? Is there a specific event, deadline, or mandate driving it?

- What happens if you don't solve this in the next 6 months?

Impact:

- How much time or money is the current approach costing you per quarter?

- If you could quantify the cost of doing nothing, what would that number look like?

Decision:

- Who else needs to sign off before this moves forward?

- What criteria will you use to make the final decision?

Timeline:

- Is there a hard deadline driving this, or is it exploratory?

- What could push this timeline back?

SPIN-style implication questions ("What happens if this problem gets worse?") and MEDDIC-style metrics and decision-criteria questions ("What metrics will define success?") are the highest-signal questions in any framework. Don't skip them because they feel uncomfortable. That discomfort is where qualification actually happens, separating conversion-ready prospects from people who are just browsing.

Build Your Lead Scoring Model

Starter Scoring Rubric

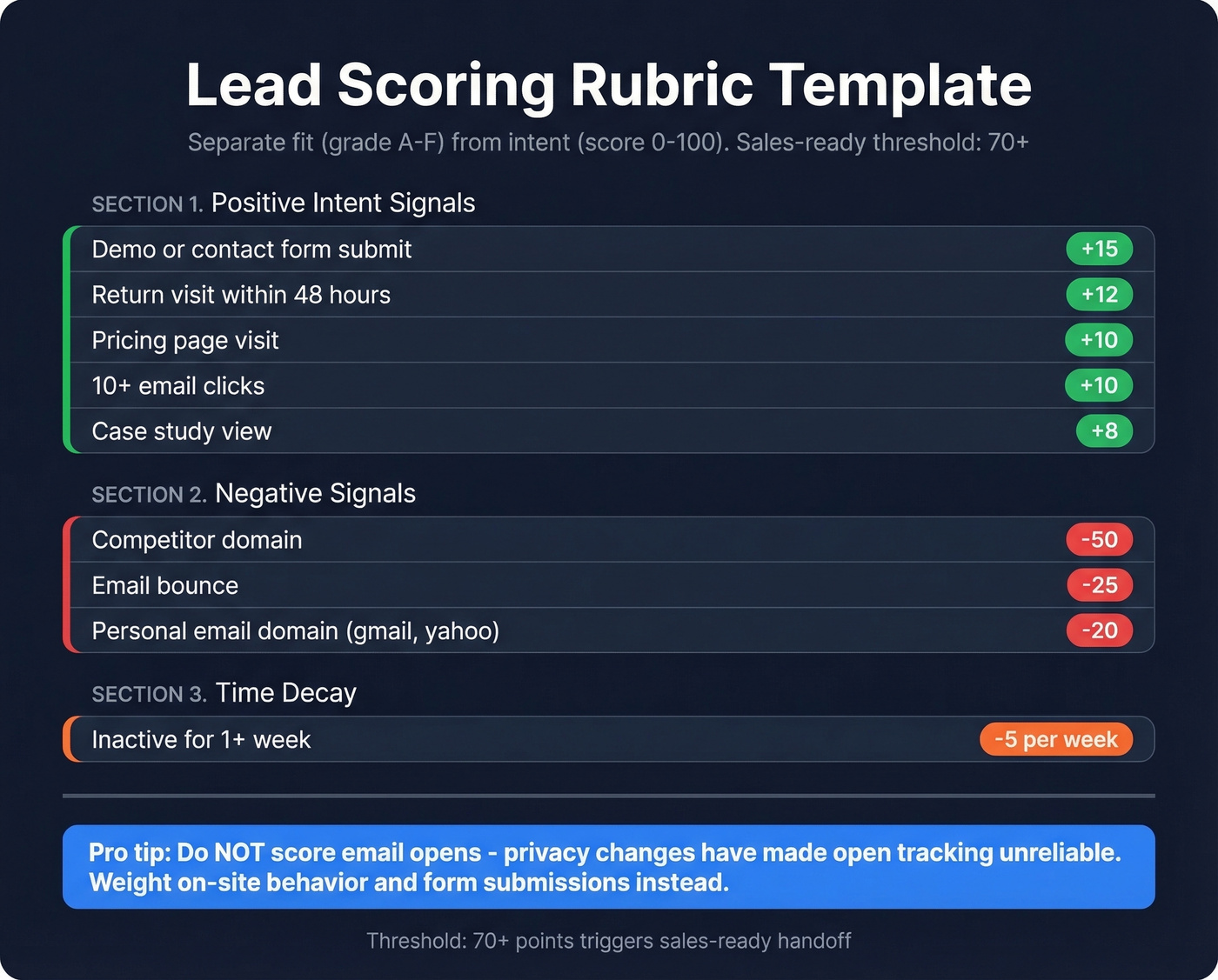

Separate fit (grade A-F) from intent (score 0-100). This prevents the "intern looks hot" problem - where a junior employee at a non-ICP company racks up points by downloading every whitepaper you publish. (If you need an ICP worksheet, use an ideal customer profile template.)

| Signal | Points | Category |
|---|---|---|
| Pricing page visit | +10 | Intent |
| Demo/contact form submit | +15 | Intent |
| Case study view | +8 | Intent |
| Return visit within 48 hours | +12 | Intent |
| 10+ email clicks | +10 | Intent |
| Email bounce | -25 | Negative |
| Personal email domain | -20 | Negative |
| Competitor domain | -50 | Negative |
| Each week of inactivity | -5 | Decay |

Set your sales-ready threshold at 70+. Don't score email opens - privacy changes have made open tracking unreliable. Weight on-site behavior and form submissions instead.
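Here's the rubric as code, with fit kept separate from intent so a high intent score alone can't make a lead sales-ready. A sketch under stated assumptions: the signal keys are hypothetical event names you'd map from your marketing automation platform, every occurrence of a signal counts, and treating fit grades A and B as acceptable is an illustrative cutoff rather than part of the rubric. Inactivity decay is left out because it depends on dates, not events.

```python
# Point values from the starter rubric. The keys are hypothetical event names.
RUBRIC = {
    "pricing_page_visit": 10,
    "demo_form_submit": 15,
    "case_study_view": 8,
    "return_visit_48h": 12,
    "email_clicks_10_plus": 10,
    "email_bounce": -25,
    "personal_email_domain": -20,
    "competitor_domain": -50,
}
SALES_READY = 70           # threshold from the rubric
ACCEPTED_FIT = {"A", "B"}  # assumption: illustrative fit cutoff


def intent_score(signals: list[str]) -> int:
    """Sum rubric points for every signal (unknown signals score 0), clamped to 0-100."""
    raw = sum(RUBRIC.get(signal, 0) for signal in signals)
    return max(0, min(100, raw))


def is_sales_ready(fit_grade: str, signals: list[str]) -> bool:
    """Both gates must pass: acceptable ICP fit AND intent at or above threshold."""
    return fit_grade in ACCEPTED_FIT and intent_score(signals) >= SALES_READY
```

Because unknown events score zero, an `"email_open"` event contributes nothing, which matches the advice not to score opens. A grade-D contact with 90 intent points still fails `is_sales_ready`, which is the "intern looks hot" case.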

Before you score anything, your data needs to be clean. Enrich CRM records before running them through a scoring model. Tools like Prospeo return 50+ data points per contact at a 92% API match rate, giving your scoring model the firmographic and technographic signals it needs to separate real buyers from noise. (If you're comparing vendors, start with data enrichment services.)

For enterprise deals with 11-13 stakeholders on the buying committee, individual lead scoring isn't enough. Build account-level scores that aggregate signals across all contacts at a target company. A single champion scoring 85 matters less than five stakeholders at the same account collectively hitting your threshold. This is also where account-based selling best practices tend to outperform lead-by-lead prioritization.
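A minimal sketch of account-level roll-up, assuming each contact already has an intent score and a company domain to group on. The two account gates (at least 3 engaged contacts and a combined 150 points) are made-up illustration values, not benchmarks; tune them against your own closed-won accounts.

```python
from collections import defaultdict


def account_scores(contacts):
    """Roll contact-level intent scores up to the account.

    contacts: iterable of (account_domain, contact_score) pairs.
    Returns {account: (engaged_contacts, combined_score)}, where a contact
    counts as engaged if its score is above zero.
    """
    combined = defaultdict(int)
    engaged = defaultdict(int)
    for account, score in contacts:
        combined[account] += score
        if score > 0:
            engaged[account] += 1
    return {account: (engaged[account], combined[account]) for account in combined}


def prioritized(contacts, min_engaged=3, min_combined=150):
    """Accounts passing both (illustrative) gates, highest combined score first."""
    scores = account_scores(contacts)
    passing = [a for a, (n, total) in scores.items() if n >= min_engaged and total >= min_combined]
    return sorted(passing, key=lambda a: scores[a][1], reverse=True)


contacts = [("acme.com", 85)] + [("globex.com", 40)] * 5
print(prioritized(contacts))  # ['globex.com']
```

The example mirrors the point above: one champion at 85 doesn't clear the account gate, while five moderately engaged stakeholders at the same company do.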

Negative Scoring and Decay

Teams that skip negative scoring end up with inflated pipelines and frustrated reps. We've watched it happen repeatedly. A competitor domain visiting your pricing page isn't a lead - it's competitive intel. A bounced email isn't a prospect - it's dead weight. And a lead that hasn't engaged in 3 weeks shouldn't sit at the same score as one who requested a demo yesterday. Time decay of -5 per week of inactivity keeps your pipeline honest and your forecasts accurate.
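One way to apply decay is at read time, from the date of the last engagement, so nothing has to run on a weekly schedule. A sketch assuming you store each lead's last engagement date; counting only full idle weeks and flooring the score at zero are choices made here, not part of the rubric, and `decayed_score` is a hypothetical name.

```python
from datetime import date

DECAY_PER_WEEK = 5  # points lost per full week without engagement


def decayed_score(score: int, last_engaged: date, today: date) -> int:
    """Apply inactivity decay: -5 per full idle week, never below zero."""
    idle_weeks = max(0, (today - last_engaged).days // 7)
    return max(0, score - DECAY_PER_WEEK * idle_weeks)


print(decayed_score(80, date(2026, 1, 1), date(2026, 1, 22)))  # 65
```

A lead at 80 that goes quiet for three weeks drops to 65, below the 70-point sales-ready threshold, so it leaves the rep queue without anyone touching it.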

Companies that master lead scoring and nurturing generate 50% more sales-ready leads at 33% lower cost, per Forrester research. The ROI is real, but only if the model reflects reality.

When to Add AI

Start rules-based. Layer AI once you have 6+ months of historical conversion data. Default's study of 88,000 inbound leads found AI-powered scoring reduced time to service leads by 31%, and ML-based models can improve scoring accuracy up to 60% versus manual approaches.

The implementation path:

- Define what "qualified" means for your business.

- Audit your data for completeness and accuracy.

- Choose a model type - predictive, propensity, intent-based, or hybrid.

- Train on historical conversion outcomes.

- Validate precision and recall before going live.

- Integrate with your CRM and retrain every 3-6 months.
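For the validation step, precision and recall answer two different questions: of the leads the model flagged as sales-ready, how many actually converted (precision), and of the leads that converted, how many the model flagged (recall). A plain-Python sketch, assuming you've held back historical leads the model wasn't trained on and recorded both the prediction and the real outcome for each; if you already use scikit-learn, its `precision_score` and `recall_score` compute the same quantities.

```python
def precision_recall(predicted: list[bool], converted: list[bool]) -> tuple[float, float]:
    """predicted[i]: model flagged lead i as sales-ready.
    converted[i]: lead i actually converted (per your own definition)."""
    if len(predicted) != len(converted):
        raise ValueError("predictions and outcomes must line up one-to-one")
    pairs = list(zip(predicted, converted))
    true_pos = sum(1 for p, c in pairs if p and c)
    false_pos = sum(1 for p, c in pairs if p and not c)
    false_neg = sum(1 for p, c in pairs if c and not p)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall


print(precision_recall(
    [True, True, True, True, False, False],
    [True, True, False, False, True, False],
))  # (0.5, 0.6666666666666666)
```

Low precision means reps burn time on false positives; low recall means real buyers sit unrouted in nurture. Which one you tolerate more of is a business choice, not a modeling one.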

The risk? AI scales bias. If your historical data is skewed toward a single industry or company size, the model will reinforce that skew. Always pair AI scoring with human review on edge cases.

The MQL-to-SQL Handoff SLA

Contacting a lead within 5 minutes makes you 100x more likely to reach them than waiting an hour. Contacting leads within 24 hours lifts conversion by 5x. Speed-to-lead isn't a vanity metric - it's the single biggest factor in your handoff process.

If your MQL-to-SQL handoff lives in a Slack channel, you don't have a handoff. You have a prayer chain. Here's what a real SLA includes:

- Shared lifecycle definitions - marketing and sales agree on what MQL, SAL, and SQL mean, in writing.

- Handoff triggers - specific actions like demo requests, pricing page visits 3x in a week, or high intent scores that create an immediate CRM alert.

- Maximum response time - 5 minutes for demo requests, 1 hour for high-score MQLs, 4 hours for standard MQLs.

- Auto-created CRM tasks with trigger context - not just "follow up," but "prospect viewed pricing page 3 times and downloaded the ROI calculator."

- Reassignment rules - if a lead goes unactioned past the SLA window, it routes to the next available rep automatically.

- Monthly joint reviews - marketing and sales review conversion rates, time-between-stages, and reassignment frequency together.
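The response-time and reassignment rules are mechanical enough to automate. A minimal sketch, assuming the three trigger tiers map to the windows above and a simple round-robin stands in for "next available rep" (real routing would also check who's actually online); the `Handoff` record and function names are hypothetical, not a CRM's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Maximum response times from the SLA above.
RESPONSE_WINDOW = {
    "demo_request": timedelta(minutes=5),
    "high_score_mql": timedelta(hours=1),
    "standard_mql": timedelta(hours=4),
}


@dataclass
class Handoff:
    lead_id: str
    tier: str
    owner: str
    assigned_at: datetime
    actioned: bool = False


def is_overdue(h: Handoff, now: datetime) -> bool:
    """Unactioned and past the window for its tier."""
    return not h.actioned and now > h.assigned_at + RESPONSE_WINDOW[h.tier]


def reassign_overdue(handoffs: list[Handoff], reps: list[str], now: datetime) -> list[str]:
    """Move overdue handoffs to the next rep in round-robin order and restart
    their SLA clock. Returns the IDs of the leads that were reassigned."""
    if not reps:
        raise ValueError("need at least one rep to route to")
    moved = []
    for h in handoffs:
        if is_overdue(h, now):
            current = reps.index(h.owner) if h.owner in reps else -1
            h.owner = reps[(current + 1) % len(reps)]
            h.assigned_at = now
            moved.append(h.lead_id)
    return moved
```

Restarting the clock on reassignment is a design choice made here; some teams would rather escalate to a manager after a second miss. The list returned by `reassign_overdue` is also the raw data for the "reassignment frequency" metric in the monthly review.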

Put this in a document. Review it monthly. 67% of lost sales result from inadequate lead qualification - and most of that loss happens in the gap between marketing saying "here's a lead" and sales deciding to call.

Five Mistakes That Kill Qualified Leads

1. Stale or missing data. Your scoring model assigns +15 for a demo request, but if the email bounces, that score is meaningless. Run every new lead through email verification before it enters your scoring model. Prospeo's 98% email accuracy and 7-day refresh cycle catch dead contacts before they waste rep time. (If you're evaluating tools, compare options in our Bouncer alternatives guide.)

2. Volume over quality. A pipeline with 500 "opportunities" and a 3% close rate isn't a pipeline - it's a spreadsheet of false hope. Run a monthly pipeline scrub where any opportunity without a confirmed next step gets downgraded or removed. Celebrate conversion rate, not pipeline size.

3. Inconsistent criteria across reps. If your team doesn't have a documented ICP and enforced qualification framework, every rep is running their own definition of "qualified." Publish a one-page qualification checklist and make it a required field in your CRM before any deal advances past discovery.

4. Ignoring intent signals. A prospect who visited your pricing page three times this week and downloaded a case study is not the same as one who opened a newsletter. Layer intent data into your scoring model and route high-intent leads to a fast-track SLA tier. (For a practical system, see identifying buying signals.)

5. Slow follow-up on high-value leads. If your best leads wait 24 hours for a response, you're handing deals to competitors who respond in 5 minutes. Set up real-time alerts for demo requests and high-score MQLs that push to a rep's phone, not just their inbox.
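The monthly pipeline scrub from mistake 2 is simple enough to script against a CRM export. A sketch, assuming "confirmed next step" means a named next step with a date that hasn't already passed; the `Opportunity` fields and function names are hypothetical stand-ins for your CRM's own.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Opportunity:
    name: str
    next_step: Optional[str] = None
    next_step_date: Optional[date] = None


def has_confirmed_next_step(opp: Opportunity, today: date) -> bool:
    """A named next step scheduled today or later counts as confirmed."""
    return bool(opp.next_step) and opp.next_step_date is not None and opp.next_step_date >= today


def scrub(pipeline: list[Opportunity], today: date) -> tuple[list[Opportunity], list[Opportunity]]:
    """Split the pipeline into (keep, review); review means downgrade or remove."""
    keep = [opp for opp in pipeline if has_confirmed_next_step(opp, today)]
    review = [opp for opp in pipeline if not has_confirmed_next_step(opp, today)]
    return keep, review
```

Treating a past-dated next step as unconfirmed is deliberate: "Send proposal" from three weeks ago is usually the clearest sign of a stalled deal.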

Data Quality: The Prerequisite Nobody Talks About

Every framework, scoring model, and SLA in this article assumes one thing: your contact data is accurate. When it isn't, the entire qualification process breaks. You can't qualify a lead whose email bounces. You can't score intent signals from a contact who left the company six months ago. You can't hit a 5-minute response SLA when 35% of your list is dead on arrival. (If you need the full checklist, start with our email deliverability guide.)

The proof point is concrete: Snyk's sales team of 50 AEs was running a 35-40% bounce rate before switching their data provider. After moving to Prospeo, bounces dropped under 5%, and AE-sourced pipeline jumped 180% - generating 200+ new opportunities per month. The qualification system worked because the data underneath it was finally clean.

Your lead qualification sales funnel is only as good as the data feeding it. Start there.

810 pitches instead of 160 - that's the cost of letting unqualified leads into your funnel. Prospeo's 30+ filters including buyer intent, headcount growth, and technographics let you pre-qualify before a rep ever picks up the phone. At $0.01 per email, bad data is no longer an excuse.

Filter before the funnel. Qualify with data that's 7 days fresh.

FAQ

What's the difference between lead qualification and lead scoring?

Qualification is a binary gate - pursue or disqualify based on ICP fit and buying authority. Scoring ranks qualified leads on a 0-100 scale by purchase readiness using behavioral and firmographic signals. You need both: qualification filters who enters the funnel, scoring prioritizes who gets rep attention first.

How often should I recalibrate my scoring model?

Review score thresholds quarterly against actual conversion data, and retrain AI models every 3-6 months. Recalibrate immediately if your MQL-to-SQL conversion rate shifts more than 10 percentage points in either direction - that signals your thresholds no longer match buyer behavior.
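The 10-point rule is measured in percentage points, not percent: a move from 15% to 27% MQL-to-SQL is a 12-point shift and should trigger a recalibration, while 15% to 19% (a relative jump of about 27%) shouldn't. A one-function sketch of that check, taking rates as percentages to keep the comparison exact; the function name is hypothetical.

```python
def needs_recalibration(baseline_pct: float, current_pct: float, max_shift_pts: float = 10.0) -> bool:
    """True if MQL-to-SQL conversion moved more than `max_shift_pts` percentage
    points from the baseline, in either direction. Rates are percentages (15.0 == 15%)."""
    return abs(current_pct - baseline_pct) > max_shift_pts
```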

What should I do with disqualified leads?

Route them to a nurture sequence with a 6-month drip cadence. Budget cycles change, org charts shift, and 15-20% of today's disqualified leads re-enter the funnel within a year. Skip this if you're running a high-velocity transactional motion where the deal size doesn't justify long-term nurture - in that case, let them go and focus your energy on new pipeline.

How do I get marketing and sales to agree on MQL definitions?

Write a shared SLA document with explicit scoring thresholds, behavioral triggers, maximum response times, and a monthly joint review cadence. Both teams sign it. Companies with documented marketing-sales SLAs see 34% higher revenue growth than those without one.

How does data quality affect lead qualification?

Bad data breaks every downstream process. If 35% of your emails bounce, your scoring model inflates pipeline with phantom leads and your handoff SLA is meaningless. Verify contacts before they enter the funnel - teams that maintain sub-5% bounce rates see 2-3x higher MQL-to-SQL conversion than those running on unverified lists.